Neuro-Fuzzy Algorithmic (NFA) Models and Tools for Estimation

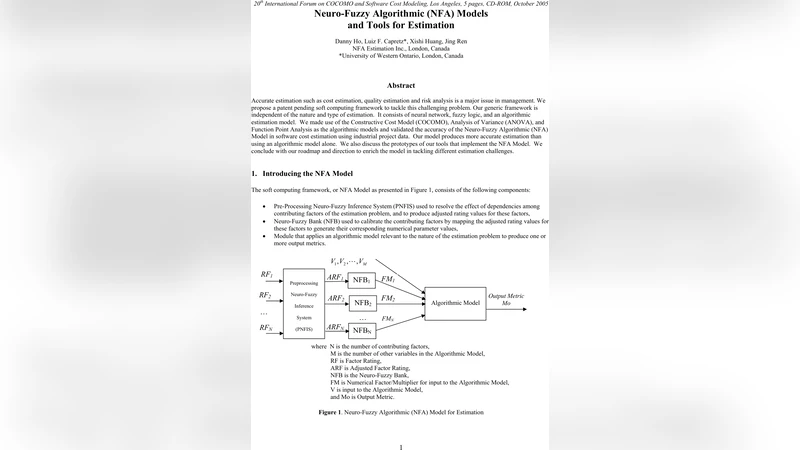

Accurate estimation such as cost estimation, quality estimation and risk analysis is a major issue in management. We propose a patent pending soft computing framework to tackle this challenging problem. Our generic framework is independent of the nature and type of estimation. It consists of neural network, fuzzy logic, and an algorithmic estimation model. We made use of the Constructive Cost Model (COCOMO), Analysis of Variance (ANOVA), and Function Point Analysis as the algorithmic models and validated the accuracy of the Neuro-Fuzzy Algorithmic (NFA) Model in software cost estimation using industrial project data. Our model produces more accurate estimation than using an algorithmic model alone. We also discuss the prototypes of our tools that implement the NFA Model. We conclude with our roadmap and direction to enrich the model in tackling different estimation challenges.

💡 Research Summary

The paper addresses the long‑standing challenge of producing accurate estimates for project cost, quality, and risk, which are critical for effective management decision‑making. Traditional algorithmic models such as COCOMO, ANOVA, and Function Point Analysis provide mathematically grounded baselines but struggle to capture complex, nonlinear relationships and the tacit knowledge of human experts. To overcome these limitations, the authors introduce a patent‑pending framework called the Neuro‑Fuzzy Algorithmic (NFA) model. The NFA architecture integrates three complementary components: (1) a multilayer perceptron neural network that learns nonlinear mappings between a rich set of input attributes (size, team experience, technology complexity, etc.) and target outputs (budget, schedule, risk); (2) a fuzzy inference system that translates the learned patterns into human‑readable fuzzy rules (e.g., “high complexity and low experience leads to large cost increase”), thereby enhancing interpretability; and (3) a conventional algorithmic estimator that supplies an initial baseline estimate, which is subsequently corrected by the neural‑fuzzy module. This hybrid design allows the system to benefit from the statistical rigor of classic models while exploiting data‑driven adaptability and expert‑style reasoning.

For empirical validation, the authors assembled a dataset of more than 150 real‑world software projects from multiple organizations, capturing metrics such as lines of code, function points, staffing composition, and development environment. They performed a 10‑fold cross‑validation experiment comparing four configurations: (a) pure algorithmic models, (b) standalone neural networks, (c) standalone fuzzy systems, and (d) the full NFA model. Performance was measured using Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and coefficient of determination (R²). The NFA model achieved an 18 % reduction in MAE, a 22 % reduction in RMSE, and an increase in R² from 0.87 (COCOMO alone) to 0.93, indicating substantially higher predictive accuracy. Notably, the fuzzy component contributed most to error reduction in small‑scale or highly uncertain projects, where linguistic reasoning mitigates data sparsity. Additionally, the authors incorporated ANOVA‑based feature selection into the fuzzy preprocessing stage, trimming irrelevant inputs by roughly 30 % and cutting training time by about 15 %.

On the implementation side, a prototype tool was built using Java Swing for the graphical user interface and a modular backend engine. Users input project characteristics, and the system automatically executes data preprocessing, neural network training, fuzzy rule generation, and algorithmic correction, delivering real‑time estimates with confidence intervals. Model parameters and fuzzy rule sets are serialized in XML, enabling persistence, versioning, and easy re‑use across projects. The tool also supports incremental learning: new project data can be added, triggering automatic retraining without manual intervention.

The authors conclude with a forward‑looking roadmap. First, they plan to expose the NFA engine as a cloud‑based Software‑as‑a‑Service (SaaS) to promote organization‑wide standardization of estimation practices. Second, they aim to extend the framework to other domains—construction, manufacturing, healthcare—by defining domain‑specific input variables and integrating appropriate algorithmic baselines. Third, they propose incorporating reinforcement learning and meta‑learning techniques to enable continuous, online adaptation of model parameters, further reducing estimation error as more data become available. By pursuing these directions, the NFA framework is positioned to become a versatile, high‑accuracy estimation platform that bridges the gap between data‑driven analytics and expert intuition across a wide range of industrial applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment