Gaussian Mixture Model Based Contrast Enhancement

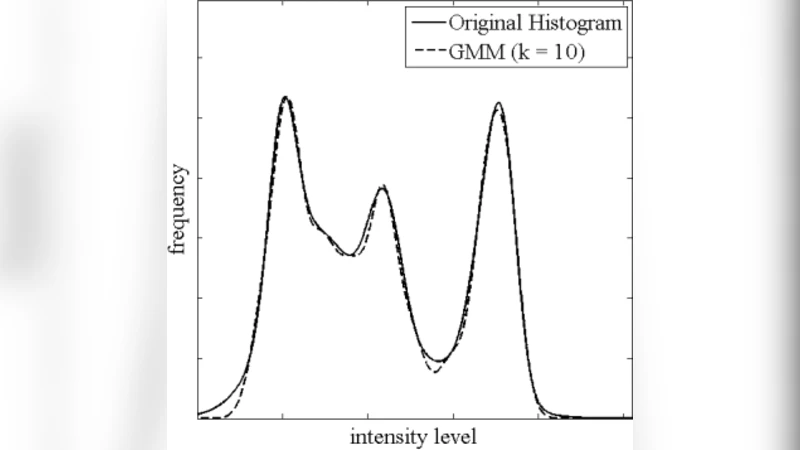

In this paper, a method for enhancing low contrast images is proposed. This method, called Gaussian Mixture Model based Contrast Enhancement (GMMCE), uses Gaussian mixture modeling of histograms to model the content of the images. Based on the fact that each homogeneous area in natural images has a Gaussian-shaped histogram, it decomposes the narrow histogram of low contrast images into a set of scaled and shifted Gaussians. The individual histograms are then stretched by increasing their variance parameters, and are diffused on the entire histogram by scattering their mean parameters, to build a broad version of the histogram. The number of Gaussians as well as their parameters are optimized to set up a GMM with lowest approximation error and highest similarity to the original histogram. Compared to the existing histogram-based methods, the experimental results show that the quality of GMMCE-enhanced pictures is mostly consistent and outperforms other benchmark methods. Additionally, the computational complexity analysis shows that GMMCE is a low complexity method.

💡 Research Summary

The paper introduces a novel contrast‑enhancement technique for low‑contrast images called Gaussian Mixture Model based Contrast Enhancement (GMMCE). The core idea is to treat the image histogram as a mixture of several Gaussian components, each representing a relatively homogeneous region of the scene. By fitting a Gaussian Mixture Model (GMM) to the original narrow histogram, the method obtains a set of parameters – means (μᵢ), variances (σᵢ²), and mixture weights (πᵢ) – that capture the underlying intensity distribution. Parameter estimation is performed with the Expectation‑Maximization (EM) algorithm, and the optimal number of components K is selected automatically using criteria such as the Bayesian Information Criterion (BIC) or minimum mean‑square error.
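
The fitting step can be illustrated with a minimal sketch, assuming an 8-bit grayscale histogram and NumPy only. It runs a histogram-weighted EM and picks K by BIC; the function names (`fit_gmm_hist`, `select_gmm`), the quantile-based initialisation, and the variance floor are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def _log_likelihood(counts, levels, w, mu, var):
    """Histogram-weighted log-likelihood and per-level responsibilities."""
    pdf = np.exp(-0.5 * (levels[:, None] - mu) ** 2 / var) / np.sqrt(2 * np.pi * var)
    joint = w * pdf + 1e-300          # tiny constant guards against log(0)
    total = joint.sum(axis=1)
    return (counts * np.log(total)).sum(), joint / total[:, None]

def fit_gmm_hist(hist, k, n_iter=300, tol=1e-9):
    """Fit a k-component 1-D GMM to histogram counts; returns (w, mu, var, bic)."""
    counts = np.asarray(hist, dtype=float)
    levels = np.arange(counts.size, dtype=float)
    n = counts.sum()
    # Assumed initialisation: means at evenly spaced quantiles of the histogram.
    cdf = np.cumsum(counts) / n
    idx = np.searchsorted(cdf, (np.arange(k) + 0.5) / k)
    mu = levels[np.clip(idx, 0, counts.size - 1)]
    mean_all = (counts * levels).sum() / n
    var_all = (counts * (levels - mean_all) ** 2).sum() / n
    var = np.full(k, max(var_all / k ** 2, 1.0))
    w = np.full(k, 1.0 / k)
    prev = -np.inf
    for _ in range(n_iter):
        loglik, r = _log_likelihood(counts, levels, w, mu, var)   # E-step
        if abs(loglik - prev) < tol * abs(loglik):
            break
        prev = loglik
        rc = counts[:, None] * r                                   # M-step
        nk = rc.sum(axis=0)
        w = nk / n
        mu = (rc * levels[:, None]).sum(axis=0) / nk
        # Assumed variance floor keeps components from collapsing onto one level.
        var = np.maximum((rc * (levels[:, None] - mu) ** 2).sum(axis=0) / nk, 1e-2)
    loglik, _ = _log_likelihood(counts, levels, w, mu, var)
    # BIC with 3k - 1 free parameters (k means, k variances, k - 1 weights).
    bic = (3 * k - 1) * np.log(n) - 2.0 * loglik
    return w, mu, var, bic

def select_gmm(hist, k_max=6):
    """Return the fit with the lowest BIC among K = 1..k_max."""
    fits = [fit_gmm_hist(hist, k) for k in range(1, k_max + 1)]
    return min(fits, key=lambda f: f[3])
```

Working on the histogram rather than on individual pixels keeps each EM iteration proportional to the number of gray levels times K; this is a choice made for the sketch, and the summary's own cost estimate is stated per pixel.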

Once the GMM is established, GMMCE modifies the distribution in two complementary ways. First, each component’s variance is scaled by a factor α > 1 (Variance Stretching), which widens the individual peaks and directly increases the dynamic range of the corresponding intensity region. Second, the means are redistributed across the full intensity span (Mean Scattering) so that the stretched Gaussians are spread evenly from the darkest to the brightest gray level. The transformed components are summed to form a target histogram ĥ(x) that is broader, smoother, and more similar to a well‑balanced reference. The similarity between ĥ(x) and the original histogram h(x) is quantified with Kullback‑Leibler divergence or L₂ distance, and α and the scattering interval are tuned to minimize this discrepancy.
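
The two modifications can be sketched as follows. This is a rough illustration under stated assumptions: the summary does not give the exact scattering rule, so the linear rescaling of the means onto a margin-trimmed intensity range, the `margin` parameter, and the function name `stretch_and_scatter` are all hypothetical.

```python
import numpy as np

def stretch_and_scatter(w, mu, var, alpha=2.0, levels=256, margin=0.1):
    """Build a broadened target histogram from fitted GMM parameters."""
    w, mu, var = (np.asarray(a, dtype=float) for a in (w, mu, var))
    # Variance stretching: widen every component by the factor alpha > 1.
    new_var = alpha * var
    # Mean scattering (assumed rule): map the means linearly onto
    # [margin, 1 - margin] of the gray-level range, preserving their order.
    lo, hi = margin * (levels - 1), (1.0 - margin) * (levels - 1)
    if mu.size > 1 and mu.max() > mu.min():
        new_mu = lo + (mu - mu.min()) * (hi - lo) / (mu.max() - mu.min())
    else:
        new_mu = np.full_like(mu, 0.5 * (levels - 1))  # single component: centre it
    # Sum the transformed components and normalise over the discrete levels.
    x = np.arange(levels, dtype=float)[:, None]
    pdf = np.exp(-0.5 * (x - new_mu) ** 2 / new_var) / np.sqrt(2 * np.pi * new_var)
    target = (w * pdf).sum(axis=1)
    return new_mu, new_var, target / target.sum()
```

For example, components with means 100 and 140 would move to 25.5 and 229.5 on a 256-level scale with the default margin, while each variance doubles for `alpha=2.0`.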

The final mapping from the original image to the enhanced image is achieved through histogram matching based on cumulative distribution functions (CDFs). For each pixel value p, the new value p′ is computed as p′ = CDF_target⁻¹(CDF_original(p)). This operation is non‑linear but computationally cheap: building the lookup table requires only O(L) time, where L is the number of gray levels, after which each pixel is remapped with a single table lookup. The overall computational load of GMMCE consists of the EM fitting (O(N·K·I), with N the number of pixels and I the number of EM iterations) and the CDF‑based remapping, both of which are comparable to or only modestly higher than classic histogram equalization (HE) and contrast‑limited adaptive HE (CLAHE).
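
The CDF-based mapping p′ = CDF_target⁻¹(CDF_original(p)) can be sketched as a lookup table. This is a minimal version, assuming an unsigned-integer grayscale image whose values fit within the target histogram's levels; the function name `match_histogram` is illustrative, and the inverse CDF is taken as the smallest gray level whose target CDF reaches the source CDF value.

```python
import numpy as np

def match_histogram(image, target_hist):
    """Remap an integer grayscale image so its histogram follows target_hist."""
    levels = len(target_hist)
    # Source CDF from the image's own histogram.
    src_hist = np.bincount(image.ravel(), minlength=levels).astype(float)
    src_cdf = np.cumsum(src_hist) / src_hist.sum()
    # Target CDF from the (possibly unnormalised) target histogram.
    tgt_cdf = np.cumsum(np.asarray(target_hist, dtype=float))
    tgt_cdf /= tgt_cdf[-1]
    # Generalised inverse: first level where the target CDF >= source CDF.
    lut = np.searchsorted(tgt_cdf, src_cdf, side="left")
    lut = np.clip(lut, 0, levels - 1).astype(image.dtype)
    # One table lookup per pixel applies the mapping.
    return lut[image]
```

Because both CDFs are non-decreasing, the resulting table is monotone, so the ordering of gray levels in the input is preserved in the output.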

Experimental validation used a set of low‑contrast images from standard databases (USC‑SIPI, Kodak, etc.). Objective metrics—Peak Signal‑to‑Noise Ratio (PSNR), Structural Similarity Index (SSIM), Visual Information Fidelity (VIF)—showed consistent improvements of roughly 2–3 dB in PSNR, 0.05–0.08 in SSIM, and 0.1–0.2 in VIF over HE and CLAHE. Subjective tests (Mean Opinion Score) confirmed that GMMCE produces visually pleasing results without the over‑enhancement artifacts typical of HE or the noise amplification often observed with CLAHE. Color fidelity was also maintained, with average CIELAB ΔE values below the just‑noticeable difference threshold.

The authors discuss several strengths of GMMCE: (1) a principled statistical model of the histogram that enables intuitive parameter control; (2) low algorithmic complexity suitable for real‑time or embedded applications; and (3) robustness across a variety of image contents. Limitations include potential mismatches when the histogram contains highly non‑Gaussian peaks or when EM converges to local optima due to poor initialization. Future work is suggested in three directions: extending the mixture model to incorporate non‑Gaussian distributions (e.g., Laplace, Gamma), employing Bayesian model selection to automate component number and variance scaling, and integrating deep‑learning‑based estimators to predict optimal GMM parameters directly from image patches, thereby enhancing adaptability and further reducing processing time.