A Neural Network Approach to Context-Sensitive Generation of Conversational Responses

We present a novel response generation system that can be trained end to end on large quantities of unstructured Twitter conversations. A neural network architecture is used to address sparsity issues that arise when integrating contextual information into classic statistical models, allowing the system to take into account previous dialog utterances. Our dynamic-context generative models show consistent gains over both context-sensitive and non-context-sensitive Machine Translation and Information Retrieval baselines.

💡 Research Summary

This paper presents a data‑driven neural network approach for generating conversational responses that are sensitive to linguistic context. Building on earlier work (Ritter et al., 2011) that treated Twitter status‑response pairs as a machine‑translation problem, the authors address that work's key limitation: it ignores the previous utterances in a conversation. They propose three models based on the Recurrent Neural Network Language Model (RLM): a simple concatenation baseline (RLMT) and two Dynamic‑Context Generative Models (DCGM‑I and DCGM‑II).

RLMT concatenates the context (c), the incoming message (m), and the target response (r) into a single long sequence and trains a standard RLM on it. This is straightforward, but the concatenated sequence is long, and modeling the resulting long‑range dependencies is difficult for an RLM. To overcome this, DCGM‑I first encodes c and m together as one bag‑of‑words vector and passes it through a multi‑layer feed‑forward network to obtain a fixed‑length context vector k_L. This vector is added as a bias to the decoder RLM's hidden state at every time step, so contextual information is injected throughout response generation. DCGM‑II refines the idea by keeping separate bag‑of‑words vectors for c and m and concatenating their projections in the first layer of the feed‑forward network, which lets the model distinguish the context from the message and preserves their order. In both DCGM variants the context encoder and the decoder are trained jointly, which mitigates the sparsity that plagues phrase‑based statistical models.
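To make the difference between the two encoders concrete, here is a minimal, untrained sketch in pure Python. It assumes sigmoid activations, a two‑layer feed‑forward encoder, a softmax output layer, and (for DCGM‑II) one first‑layer matrix shared by c and m; the function names, toy vocabulary, and dimensions are hypothetical, and this is not the authors' implementation. RLMT would correspond to running `decoder_step` over the concatenation c + m + r with an all‑zero k_L.

```python
import math
import random

def vec_mat(vec, mat):
    """Row vector times matrix: (n,) x (n, cols) -> list of length cols."""
    return [sum(vec[i] * mat[i][j] for i in range(len(vec))) for j in range(len(mat[0]))]

def add(*vecs):
    """Element-wise sum of equal-length vectors."""
    return [sum(vals) for vals in zip(*vecs)]

def sigmoid(xs):
    return [1.0 / (1.0 + math.exp(-x)) for x in xs]

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def bag_of_words(tokens, vocab):
    """Count vector over the vocabulary; out-of-vocabulary tokens are dropped."""
    counts = [0.0] * len(vocab)
    for tok in tokens:
        if tok in vocab:
            counts[vocab[tok]] += 1.0
    return counts

def encode_dcgm1(context, message, vocab, W1, W2):
    """DCGM-I: one bag of words over c and m together, then a 2-layer feed-forward net."""
    k1 = sigmoid(vec_mat(bag_of_words(context + message, vocab), W1))
    return sigmoid(vec_mat(k1, W2))  # k_L: one value per decoder hidden unit

def encode_dcgm2(context, message, vocab, W1, W2):
    """DCGM-II: separate bags for c and m, projected and concatenated in layer 1."""
    k1_c = vec_mat(bag_of_words(context, vocab), W1)
    k1_m = vec_mat(bag_of_words(message, vocab), W1)
    k1 = sigmoid(k1_c + k1_m)  # list + list concatenates: [c part, m part]
    return sigmoid(vec_mat(k1, W2))

def decoder_step(token_id, h_prev, k_L, W_in, W_hh):
    """One RLM hidden-state update with k_L added as an extra bias term."""
    one_hot = [0.0] * len(W_in)
    one_hot[token_id] = 1.0
    return sigmoid(add(vec_mat(one_hot, W_in), vec_mat(h_prev, W_hh), k_L))

# Toy setup: tiny vocabulary and random (untrained) weights.
rng = random.Random(0)
vocab = {w: i for i, w in enumerate(["i", "love", "coffee", "me", "too", "tea"])}
V, D1, H = len(vocab), 4, 5  # vocab size, first-layer width, hidden size
W_in, W_hh, W_out = rand_matrix(V, H, rng), rand_matrix(H, H, rng), rand_matrix(H, V, rng)
W1 = rand_matrix(V, D1, rng)
W2_one, W2_two = rand_matrix(D1, H, rng), rand_matrix(2 * D1, H, rng)  # DCGM-II layer 1 is 2*D1 wide

context, message = ["i", "love", "coffee"], ["me", "too"]
k_L = encode_dcgm1(context, message, vocab, W1, W2_one)

# Run the decoder over a response prefix, then get a next-word distribution.
h = [0.0] * H
for tok in ["i", "love"]:
    h = decoder_step(vocab[tok], h, k_L, W_in, W_hh)
next_word_probs = softmax(vec_mat(h, W_out))
print(len(k_L), round(sum(next_word_probs), 6))  # 5 1.0

# Swapping context and message: DCGM-I cannot tell the difference, DCGM-II can.
print(encode_dcgm1(message, context, vocab, W1, W2_one) == k_L)  # True
k_two = encode_dcgm2(context, message, vocab, W1, W2_two)
print(encode_dcgm2(message, context, vocab, W1, W2_two) == k_two)  # False
```

Because DCGM‑I sums c and m into a single bag of words, swapping them leaves k_L unchanged, whereas the concatenated first layer of DCGM‑II produces a different k_L when the roles are swapped.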

For training data, the authors mined 127 million context‑message‑response triples from the Twitter Firehose (June–August 2012) and filtered them down to 29 million higher‑quality triples in which the context and the response were authored by the same user and which contained frequent bigrams. Human raters then scored candidate triples, and the 4,232 triples with an average score of 4 or higher were retained and split into a tuning set (2,118) and a test set (2,114). Because a message admits many valid responses, a single reference makes automatic metrics unreliable, so the authors devised an automatic multi‑reference extraction pipeline: for each test case, BM25 retrieved 15 candidate triples, humans rated the appropriateness of each candidate's response, and responses scoring ≥4 were kept as additional references. This yielded 3.58 references per example on average, improving the reliability of BLEU and METEOR evaluations.
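The retrieval step of the multi‑reference pipeline can be sketched as follows. This assumes the standard Okapi BM25 formula with common default parameters (k1 = 1.2, b = 0.75), a query built from the test triple's context and message that is matched against each corpus triple's context and message, and a dictionary of averaged ratings standing in for the human judgments. The corpus, ratings, and function names are hypothetical.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Okapi BM25 score of each tokenized doc against a tokenized query."""
    n_docs = len(docs)
    avgdl = sum(len(d) for d in docs) / n_docs
    df = Counter(term for d in docs for term in set(d))  # document frequency
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in dict.fromkeys(query):  # each distinct query term, in order
            if tf[term] == 0:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = k1 * (1 - b + b * len(d) / avgdl)  # document-length normalization
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores.append(score)
    return scores

def top_k_candidates(query, corpus, k=15):
    """Indices of the k triples whose context + message best match the query."""
    scores = bm25_scores(query, [c + m for c, m, _ in corpus])
    return sorted(range(len(corpus)), key=lambda i: scores[i], reverse=True)[:k]

def select_references(candidates, corpus, ratings, threshold=4.0):
    """Keep candidate responses whose averaged human rating is >= threshold."""
    return [corpus[i][2] for i in candidates if ratings.get(i, 0.0) >= threshold]

# Hypothetical tokenized (context, message, response) triples.
corpus = [
    (["coffee", "time"], ["need", "coffee"], ["me", "too"]),
    (["rainy", "day"], ["stay", "inside"], ["good", "idea"]),
    (["morning"], ["coffee", "please"], ["make", "it", "two"]),
    (["game", "tonight"], ["who", "wins"], ["home", "team"]),
]
query = ["coffee", "morning", "need", "coffee"]  # test context + message
candidates = top_k_candidates(query, corpus, k=2)
ratings = {0: 4.5, 2: 3.0}  # hypothetical averaged human scores
references = select_references(candidates, corpus, ratings)
print(candidates, references)  # [0, 2] [['me', 'too']]
```

Retrieval only proposes candidates; the human rating threshold is what decides which retrieved responses become references, so a lexically similar but inappropriate reply (candidate 2 here) is filtered out.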

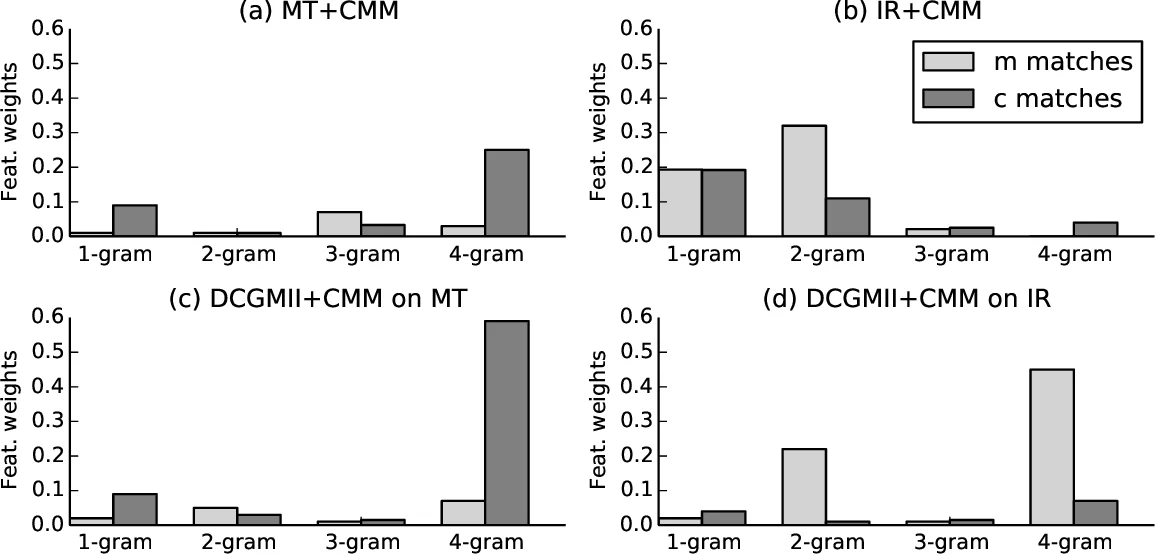

Experiments compare the DCGM variants against a strong phrase‑based MT baseline (derived from Ritter et al., 2011) and against RLMT. Results show that DCGM‑II achieves the highest BLEU‑4 (0.71) and METEOR (0.21) scores, outperforming the MT system (BLEU‑4 0.62, METEOR 0.18) and RLMT (BLEU‑4 0.66, METEOR 0.20). Human pairwise comparisons confirm that DCGM‑II's outputs are preferred more often than those of the other systems. The study also shows that tuning toward BLEU with the mined multi‑references agrees well with human judgments, suggesting that the multi‑reference strategy eases the evaluation bottleneck in open‑domain response generation.
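Multi‑reference BLEU clips each hypothesis n‑gram count at its maximum count in any single reference and computes the brevity penalty against the reference whose length is closest to the hypothesis. The sketch below is a plain corpus‑level BLEU‑4 in that standard form, without smoothing; the paper's exact BLEU configuration (tokenization, smoothing, scaling) is not given here, so treat it as an illustration rather than the scorer behind the reported numbers. The example sentences are hypothetical.

```python
import math
from collections import Counter

def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def corpus_bleu(hypotheses, references, max_n=4):
    """Corpus-level BLEU with multiple references per hypothesis (no smoothing)."""
    matches, totals = [0] * max_n, [0] * max_n
    hyp_len = ref_len = 0
    for hyp, refs in zip(hypotheses, references):
        hyp_len += len(hyp)
        # Effective reference length: closest to the hypothesis, shorter on ties.
        ref_len += min((abs(len(r) - len(hyp)), len(r)) for r in refs)[1]
        for n in range(1, max_n + 1):
            max_ref = Counter()
            for r in refs:
                max_ref |= ngram_counts(r, n)  # per-n-gram max over references
            hyp_counts = ngram_counts(hyp, n)
            matches[n - 1] += sum(min(c, max_ref[g]) for g, c in hyp_counts.items())
            totals[n - 1] += sum(hyp_counts.values())
    if min(matches) == 0:  # some n-gram order has no match, so BLEU is 0
        return 0.0
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    brevity = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return brevity * math.exp(log_precision)

hyp = "i love that coffee shop".split()
ref_single = "me too".split()
ref_mined = "i love that coffee shop too".split()
print(corpus_bleu([hyp], [[ref_single]]))             # 0.0
print(corpus_bleu([hyp], [[ref_single, ref_mined]]))  # ≈ 0.8187
```

With only the reference "me too", the plausible reply shares no words with it and scores 0; adding the mined reference credits every n‑gram, and the remaining penalty comes only from brevity, exp(1 − 6/5) ≈ 0.819.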

Key contributions are: (1) the first end‑to‑end neural architecture that explicitly incorporates prior utterances for open‑domain response generation, (2) the introduction of DCGM‑II, which distinguishes context from the immediate message while preserving order information, and (3) a scalable multi‑reference extraction method that enhances automatic metric validity.

Limitations include the restriction of context to a single preceding sentence and the reliance on bag‑of‑words representations, which may overlook richer syntactic cues. Future work could explore hierarchical recurrent or Transformer models to capture longer dialogue histories, integrate external signals such as time, location, or user profile, and apply reinforcement learning to directly optimize conversational quality based on human feedback.
