Pose Estimation Based on 3D Models

In this paper, we propose a pose estimation system trained on a set of rendered images, which predicts the pose of objects in real images given the object category and a tight bounding box. We develop a patch-based multi-class classification algorithm and an iterative approach to improve its accuracy. We achieve state-of-the-art performance on the pose estimation task.

💡 Research Summary

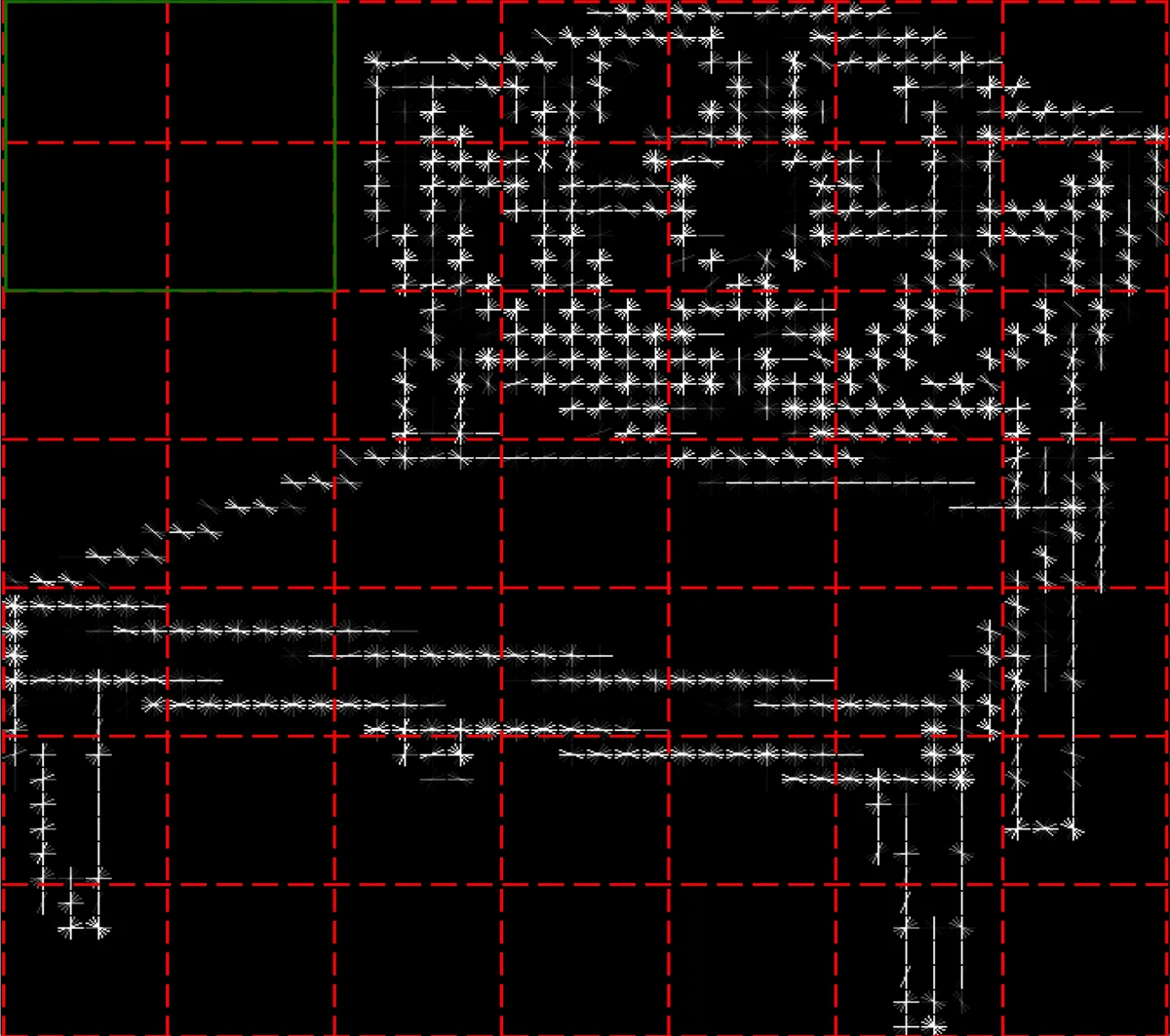

The paper presents a pose‑estimation framework that leverages large‑scale 3D model data to train a classifier capable of predicting the orientation of objects in real photographs. Using ShapeNet, the authors extracted 5,057 chair models and rendered each from 16 uniformly spaced azimuth angles, producing 64,000 synthetic training images. Each image is resized to 112 × 112 pixels and divided into a 6 × 6 overlapping patch grid (patch size 32 × 32, stride 16). From every patch a 576‑dimensional Histogram of Oriented Gradients (HoG) descriptor is extracted, yielding a 20,736‑dimensional feature vector for the whole image.
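The patch layout and feature dimensions quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch using only NumPy; the function names and the zero-filled placeholder image are illustrative assumptions, and the HoG computation itself is not reproduced here, only its reported per-patch length of 576.

```python
import numpy as np

IMAGE_SIZE = 112   # images are resized to 112 x 112
PATCH_SIZE = 32    # patch side length
STRIDE = 16        # step between neighbouring patches (50 % overlap)
HOG_DIM = 576      # per-patch descriptor length reported in the summary


def patch_origins(image_size=IMAGE_SIZE, patch_size=PATCH_SIZE, stride=STRIDE):
    """Top-left offsets of the patches along one axis."""
    return list(range(0, image_size - patch_size + 1, stride))


def extract_patches(image):
    """Return patches in row-major order, shape (n_patches, PATCH_SIZE, PATCH_SIZE).

    Assumes a square, single-channel image.
    """
    offsets = patch_origins(image.shape[0])
    return np.stack([
        image[r:r + PATCH_SIZE, c:c + PATCH_SIZE]
        for r in offsets
        for c in offsets
    ])


# Placeholder grayscale image standing in for a resized rendering.
image = np.zeros((IMAGE_SIZE, IMAGE_SIZE), dtype=np.float32)
patches = extract_patches(image)

print(patch_origins())               # [0, 16, 32, 48, 64, 80]
print(patches.shape)                 # (36, 32, 32)
print(patches.shape[0] * HOG_DIM)    # 20736
```

Six offsets per axis give the 6 × 6 grid, and 36 patches of 576 dimensions each give the 20,736-dimensional image descriptor.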

Instead of a global representation, the system trains a separate multi‑class Random Forest for each of the 36 patches (100 trees per forest, max depth 20). Each forest outputs a conditional probability distribution P(v | F_i) over a discretized pose space V. The image‑level pose distribution is approximated by the product of the patch‑level probabilities, and the pose with maximum probability is selected as the final estimate. This patch‑based approach reduces dimensionality per classifier, improves robustness to occlusion and background clutter, and avoids the severe over‑fitting observed with a global HoG classifier.
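The product rule for combining patch-level predictions can be sketched as follows. This is an illustrative example, not the authors' code: the function name, the toy probabilities, and the `eps` clipping are assumptions. The clipping is added because a forest can output an exact zero for a pose, which would otherwise veto that pose for the whole image; summing logarithms is used instead of multiplying directly to avoid underflow with many patches.

```python
import numpy as np


def combine_patch_posteriors(patch_probs, eps=1e-12):
    """Combine per-patch pose distributions into one image-level distribution.

    patch_probs: array of shape (n_patches, n_poses); row i holds P(v | F_i).
    Returns (image_distribution, index_of_most_likely_pose).
    """
    # Product of probabilities == sum of log-probabilities.
    log_joint = np.log(np.clip(patch_probs, eps, 1.0)).sum(axis=0)
    # Subtract the maximum before exponentiating for numerical stability.
    log_joint -= log_joint.max()
    joint = np.exp(log_joint)
    joint /= joint.sum()
    return joint, int(np.argmax(joint))


# Toy example: 3 patches, 4 pose bins (the values are made up).
toy_probs = np.array([
    [0.10, 0.60, 0.20, 0.10],   # patch strongly favours pose 1
    [0.25, 0.25, 0.25, 0.25],   # uninformative patch (e.g. background)
    [0.20, 0.50, 0.20, 0.10],   # patch mildly favours pose 1
])
distribution, best_pose = combine_patch_posteriors(toy_probs)
print(best_pose)  # 1
```

An uninformative patch contributes the same factor to every pose and so leaves the result unchanged, which is one way to see why the product is tolerant of background patches.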

A key challenge is the mismatch between the uniform pose distribution of the synthetic training set and the biased distribution of real‑world images (e.g., front views appear more often). The authors formulate this discrepancy in a Bayesian framework:

P(v | F_i) = ˜P(v | F_i) · P(v) / ˜P(v)

where ˜P denotes quantities estimated from the training data. They propose an iterative calibration algorithm that (1) computes the image‑level pose distribution ˜P(v | I) from current patch probabilities, (2) aggregates these across all test images to obtain an empirical prior ˜P(v), (3) smooths ˜P(v) with a factor α, (4) updates the patch‑level probabilities by multiplying with the smoothed prior, and (5) repeats until convergence. Experiments show that α = 0.8 yields the best trade‑off; an automatic selection scheme drives α toward a value close to 1, which empirically matches the optimal setting.
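The five steps above can be sketched as a short loop. This is a sketch under stated assumptions rather than the authors' implementation: the summary does not say how the prior is smoothed, so the example assumes a convex mix with the uniform distribution (α = 1 means no smoothing); it also assumes a uniform training prior ˜P(v), so dividing by it only changes a constant, and it reapplies the current prior to the original forest outputs in every iteration. All function and variable names are hypothetical.

```python
import numpy as np


def image_posteriors(patch_probs, eps=1e-12):
    """Multiply patch-level distributions per image and renormalize.

    patch_probs: array of shape (n_images, n_patches, n_poses).
    Returns an array of shape (n_images, n_poses).
    """
    log_joint = np.log(np.clip(patch_probs, eps, None)).sum(axis=1)
    log_joint -= log_joint.max(axis=1, keepdims=True)
    joint = np.exp(log_joint)
    return joint / joint.sum(axis=1, keepdims=True)


def calibrate_prior(forest_probs, alpha=0.8, max_iters=50, tol=1e-6):
    """Estimate the test-set pose prior and the calibrated image posteriors.

    forest_probs: uncalibrated patch outputs, shape (n_images, n_patches, n_poses).
    """
    n_poses = forest_probs.shape[-1]
    uniform = np.full(n_poses, 1.0 / n_poses)
    prior = uniform.copy()
    for _ in range(max_iters):
        # Steps 4 and 1: reweight patch outputs by the current prior, then
        # combine them per image. Per-patch normalizing constants do not
        # depend on the pose, so they cancel in the image-level normalization.
        posteriors = image_posteriors(forest_probs * prior)
        # Step 2: aggregate over all test images.
        empirical = posteriors.mean(axis=0)
        # Step 3: smooth (ASSUMED form: mix with the uniform distribution).
        new_prior = alpha * empirical + (1.0 - alpha) * uniform
        # Step 5: stop once the prior no longer changes.
        converged = np.abs(new_prior - prior).max() < tol
        prior = new_prior
        if converged:
            break
    return prior, image_posteriors(forest_probs * prior)


# Toy data: 8 images of pose 0 and 2 images of pose 2, 3 patches, 4 pose bins.
true_poses = np.array([0] * 8 + [2] * 2)
toy = np.full((10, 3, 4), 0.1)
for i, t in enumerate(true_poses):
    toy[i, :, t] = 0.7

prior, posteriors = calibrate_prior(toy, alpha=0.8)
predictions = posteriors.argmax(axis=1)
```

On this toy set the estimated prior puts most of its mass on pose 0, the over-represented view, while the two pose-2 images are still classified correctly because their patch evidence outweighs the prior.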

Three test sets are used for evaluation: (1) rendered images (uniform pose distribution), (2) real images with clean backgrounds (1,309 samples), and (3) real images with cluttered backgrounds (1,000 samples). The patch‑based Random Forest (RF) attains 96.16 % accuracy on rendered data, 80.67 % on clean real images, and 76.80 % on cluttered real images. After Bayesian calibration (RF opt), accuracy rises to 88.90 % on clean and 78.70 % on cluttered real images, gains of roughly 8 and 2 percentage points over the uncalibrated model. Using ground‑truth pose priors (RF GT) yields only a marginal further improvement of about 2 percentage points, which suggests that the automatic calibration recovers most of the benefit of knowing the true prior. In contrast, a global HoG‑based Random Forest reaches 97.03 % on rendered data but drops sharply to 52.64 % (clean) and 10.90 % (cluttered), highlighting its susceptibility to over‑fitting.

Confusion matrices reveal that errors increase with dataset difficulty; poses differing by 90° are frequently confused, and front/back views are often misclassified due to similar visual appearance. The authors discuss limitations, notably the strong assumption that conditional feature distributions ˜P(F_i | v) and marginal ˜P(F_i) are identical between synthetic and real data—a simplification that likely hampers performance on heavily cluttered scenes.
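To see how this assumption leads to the prior-correction formula, the derivation can be written out from Bayes' rule. The following is a reconstruction from the definitions in the summary, with \tilde{P} standing for the training-set quantities written ˜P in the text; it is not copied from the paper.

```latex
% Bayes' rule on real data and on the synthetic training data:
P(v \mid F_i) = \frac{P(F_i \mid v)\, P(v)}{P(F_i)},
\qquad
\tilde{P}(v \mid F_i) = \frac{\tilde{P}(F_i \mid v)\, \tilde{P}(v)}{\tilde{P}(F_i)}.

% Assumption: P(F_i \mid v) = \tilde{P}(F_i \mid v) and P(F_i) = \tilde{P}(F_i).
% Dividing the first identity by the second then gives
\frac{P(v \mid F_i)}{\tilde{P}(v \mid F_i)} = \frac{P(v)}{\tilde{P}(v)}
\quad\Longrightarrow\quad
P(v \mid F_i) = \tilde{P}(v \mid F_i)\, \frac{P(v)}{\tilde{P}(v)}.

% With a uniform training prior \tilde{P}(v) = 1/|V|, this reduces to
P(v \mid F_i) \propto \tilde{P}(v \mid F_i)\, P(v).
```

Because the marginal P(F_i) is itself a sum over poses weighted by P(v), it cannot in general stay unchanged when the prior changes, so the right-hand side is best read as a proportionality that is renormalized over v.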

The conclusion emphasizes three contributions: (1) constructing a large, precisely labeled synthetic training set from 3D models, (2) a patch‑wise classification architecture that outperforms global features, and (3) a Bayesian iterative calibration method that bridges the domain gap between synthetic and real images. Future work includes incorporating foreground/background segmentation, refining the statistical assumptions, learning patch discriminativeness via weighted voting, and extending the approach to occluded objects and other categories.
