Soft-Deep Boltzmann Machines

We present a layered Boltzmann machine (BM) that can better exploit the advantages of a distributed representation. It is widely believed that deep BMs (DBMs) have far greater representational power than its shallow counterpart, restricted Boltzmann machines (RBMs). However, this expectation on the supremacy of DBMs over RBMs has not ever been validated in a theoretical fashion. In this paper, we provide both theoretical and empirical evidences that the representational power of DBMs can be actually rather limited in taking advantages of distributed representations. We propose an approximate measure for the representational power of a BM regarding to the efficiency of a distributed representation. With this measure, we show a surprising fact that DBMs can make inefficient use of distributed representations. Based on these observations, we propose an alternative BM architecture, which we dub soft-deep BMs (sDBMs). We show that sDBMs can more efficiently exploit the distributed representations in terms of the measure. Experiments demonstrate that sDBMs outperform several state-of-the-art models, including DBMs, in generative tasks on binarized MNIST and Caltech-101 silhouettes.

💡 Research Summary

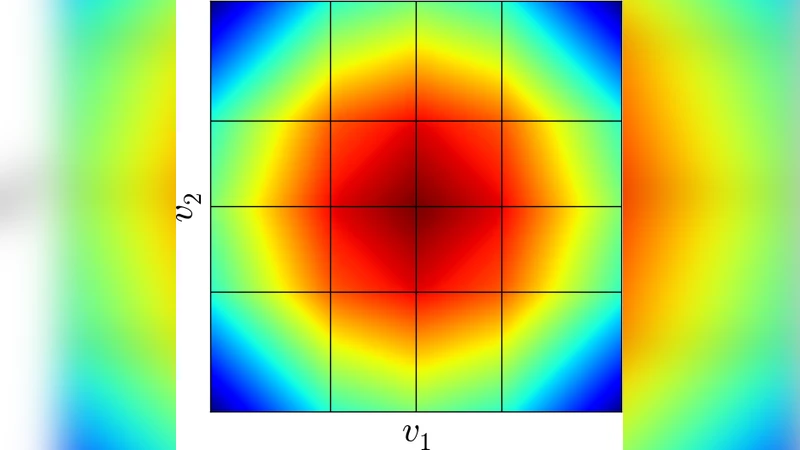

The paper investigates the representational power of deep Boltzmann machines (DBMs) and introduces a new architecture called soft‑deep Boltzmann machines (sDBMs) that overcomes identified limitations. The authors begin by proposing an approximate measure of a Boltzmann machine’s efficiency in using distributed representations. They define the “hard‑min free energy” ˆF(v)=min_H E(v,H) as a piecewise‑linear function whose number of linear regions serves as a proxy for the number of effective mixtures the model can realize. This measure captures how finely the visible space is partitioned, i.e., how many sub‑distributions a model can manage.

For restricted Boltzmann machines (RBMs), the authors leverage prior work showing that the free‑energy function can be approximated by a two‑layer ReLU network. Using known results on the number of linear regions of such networks, they derive that an RBM with N₀ visible units and N₁ hidden units can realize at most Θ(N₁^{N₀}) effective mixtures, far below the theoretical upper bound of 2^{N₁} (all possible hidden configurations).

The analysis of DBMs reveals a surprising limitation: despite having multiple hidden layers, the number of effective mixtures is bounded solely by the size of the first hidden layer, i.e., at most 2^{N₁}. The proof rests on the observation that, given a fixed configuration of the first hidden layer, the energies associated with deeper layers share identical gradients with respect to the visible units. Consequently, only the minimum‑energy term among configurations of deeper layers contributes to the hard‑min free energy, preventing the creation of additional linear regions. Thus, depth does not substantially increase the number of effective mixtures, contradicting the intuition that deeper generative models automatically exploit distributed representations more efficiently.

To break this bottleneck, the authors propose sDBMs, a superset of DBMs in which all pairs of layers are connected. The connections are “soft‑deep”: the weight magnitude decays exponentially with the layer distance (≈2^{‑d}). Algorithm 1 recursively constructs such a network by adding one hidden unit at a time and setting its outgoing weights so that each new unit introduces a distinct slope in the energy landscape. For a single‑visible‑unit model (gBM(L)) with L hidden units, the construction yields 2^{L} distinct linear regions of the hard‑min free energy, achieving the theoretical maximum 2^{N_h}. By bundling independent gBM(L) sub‑networks, an L‑layer sDBM with M hidden units per layer attains 2^{M·L}=2^{N_h} effective mixtures, i.e., exponential growth with depth.

Empirically, the authors train sDBMs without any layer‑wise pre‑training and evaluate them on binarized MNIST and Caltech‑101 silhouette datasets. Using log‑likelihood estimates, sample quality assessments, and visual inspection, sDBMs outperform standard DBMs, variational auto‑encoders, and several GAN variants. Moreover, because the model retains a bipartite structure within each layer pair, block Gibbs sampling remains applicable, preserving reasonable training and sampling efficiency despite the added inter‑layer dependencies.

Key contributions of the paper are:

- Introduction of a tractable metric (number of linear regions of the hard‑min free energy) to quantify the efficiency of distributed representations in Boltzmann machines.

- Theoretical proof that DBMs cannot leverage depth to increase this metric beyond 2^{N₁}, highlighting a fundamental representational limitation.

- Design of soft‑deep connections that restore exponential growth of effective mixtures with depth, matching the capacity of unrestricted Boltzmann machines.

- Demonstration that sDBMs achieve state‑of‑the‑art generative performance on benchmark datasets without pre‑training.

Overall, the work challenges the prevailing assumption that deeper generative models automatically gain expressive power, showing that the pattern of connections—not merely depth—determines how well a Boltzmann machine can exploit distributed representations. This insight opens avenues for designing more expressive energy‑based models by carefully engineering inter‑layer connectivity.

Comments & Academic Discussion

Loading comments...

Leave a Comment