Adaptive Image Denoising by Targeted Databases

We propose a data-dependent denoising procedure to restore noisy images. Different from existing denoising algorithms which search for patches from either the noisy image or a generic database, the new algorithm finds patches from a database that con…

Authors: Enming Luo, Stanley H. Chan, Truong Q. Nguyen

Enming Luo, Student Member, IEEE, Stanley H. Chan, Member, IEEE, and Truong Q. Nguyen, Fellow, IEEE

Abstract: We propose a data-dependent denoising procedure to restore noisy images. Different from existing denoising algorithms which search for patches from either the noisy image or a generic database, the new algorithm finds patches from a database that contains relevant patches. We formulate the denoising problem as an optimal filter design problem and make two contributions. First, we determine the basis function of the denoising filter by solving a group sparsity minimization problem. The optimization formulation generalizes existing denoising algorithms and offers systematic analysis of the performance. Improvement methods are proposed to enhance the patch search process. Second, we determine the spectral coefficients of the denoising filter by considering a localized Bayesian prior. The localized prior leverages the similarity of the targeted database, alleviates the intensive Bayesian computation, and links the new method to the classical linear minimum mean squared error estimation. We demonstrate applications of the proposed method in a variety of scenarios, including text images, multiview images and face images. Experimental results show the superiority of the new algorithm over existing methods.

Index Terms: Patch-based filtering, image denoising, external database, optimal filter, non-local means, BM3D, group sparsity, Bayesian estimation

I. INTRODUCTION

A. Patch-based Denoising

Image denoising is a classical signal recovery problem where the goal is to restore a clean image from its observations. Although image denoising has been studied for decades, the problem remains a fundamental one as it is the test bed for a variety of image processing tasks.
Among the numerous contributions in image denoising in the literature, the most highly regarded class of methods, to date, is the class of patch-based image denoising algorithms [1–9]. The idea of a patch-based denoising algorithm is simple: Given a $\sqrt{d} \times \sqrt{d}$ patch $q \in \mathbb{R}^d$ from the noisy image, the algorithm finds a set of reference patches $p_1, \ldots, p_k \in \mathbb{R}^d$ and applies some linear (or non-linear) function $\Phi$ to obtain an estimate $\hat{p}$ of the unknown clean patch $p$ as

$$\hat{p} = \Phi(q;\, p_1, \ldots, p_k). \tag{1}$$

For example, in non-local means (NLM) [1], $\Phi$ is a weighted average of the reference patches, whereas in BM3D [3], $\Phi$ is a transform-shrinkage operation.

E. Luo and T. Nguyen are with the Department of Electrical and Computer Engineering, University of California at San Diego, La Jolla, CA 92093, USA. Emails: eluo@ucsd.edu and nguyent@ece.ucsd.edu. S. Chan is with the School of Electrical and Computer Engineering, Purdue University, West Lafayette, IN 47907, USA. Email: stanleychan@purdue.edu. This work was supported in part by a Croucher Foundation Post-doctoral Research Fellowship, and in part by the National Science Foundation under grant CCF-1065305. Preliminary material in this paper was presented at the 39th IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Florence, May 2014.

B. Internal vs External Denoising

For any patch-based denoising algorithm, the denoising performance is intimately related to the reference patches $p_1, \ldots, p_k$. Typically, there are two sources of these patches: the noisy image itself and an external database of patches. The former is known as internal denoising [10], whereas the latter is known as external denoising [11, 12].

Internal denoising is practically more popular than external denoising because it is computationally less expensive.
Moreover, internal denoising does not require a training stage, hence making it free of training bias. Furthermore, Glasner et al. [13] showed that patches tend to recur within an image, e.g., at a different location, orientation, or scale. Thus searching for patches in the noisy image is often a plausible approach. However, on the downside, internal denoising often fails for rare patches, i.e., patches that seldom recur in an image. This phenomenon is known as the "rare patch effect", and is widely regarded as a bottleneck of internal denoising [14, 15]. There are some works [16, 17] attempting to alleviate the rare patch problem. However, the improvement these methods can achieve is still limited.

External denoising [6, 18–21] is an alternative solution to internal denoising. Levin et al. [15, 22] showed that in the limit, the theoretical minimum mean squared error of denoising is achievable using an infinitely large external database. Recently, Chan et al. [20, 21] developed a computationally efficient sampling scheme to reduce the complexity and demonstrated practical usage of large databases. However, in most of the recent works on external denoising, e.g., [6, 18, 19], the databases used are generic. These databases, although large in volume, do not necessarily contain useful information to denoise the noisy image of interest. For example, it is clear that a database of natural images is not helpful to denoise a noisy portrait image.

C. Adaptive Image Denoising

In this paper, we propose an adaptive image denoising algorithm using a targeted external database instead of a generic database. Here, a targeted database refers to a database that contains only images relevant to the noisy image. As will be illustrated in later parts of this paper, targeted external databases could be obtained in many practical scenarios, such as text images (e.g., newspapers and documents), human faces (under certain conditions), and images captured by multiview camera systems. Other possible scenarios include images of license plates, medical CT and MRI images, and images of landmarks.

The concept of using targeted external databases has been proposed on various occasions, e.g., [23–28]. However, none of these methods are tailored for image denoising problems. The objective of this paper is to bridge the gap by addressing the following question:

(Q): Suppose we are given a targeted external database, how should we design a denoising algorithm which can maximally utilize the database?

Here, we assume that the reference patches $p_1, \ldots, p_k$ are given. We emphasize that this assumption is application specific: for the examples we mentioned earlier (e.g., text, multiview, face, etc.), the assumption is typically true because these images have relatively less variety in content.

When the reference patches are given, question (Q) may look trivial at first glance because we can extend existing internal denoising algorithms in a brute-force way to handle external databases. For example, one can modify existing algorithms, e.g., [1, 3, 5, 29, 30], so that the patches are searched from a database instead of the noisy image. Likewise, one can also treat an external database as a "video" and feed the data to multi-image denoising algorithms, e.g., [31–34]. However, the problem with these approaches is that the brute-force modifications are heuristic. There is no theoretical guarantee of performance. This suggests that a straightforward modification of existing methods does not solve question (Q), as the database is not maximally utilized.

An alternative response to question (Q) is to train a statistical prior of the targeted database, e.g., [6, 18, 19, 35–38].
The merit of this approach is that the performance often has a theoretical guarantee because the denoising problem can now be formulated as a maximum a posteriori (MAP) estimation. However, the drawback is that many of these methods require a large number of training samples, which is not always available in practice.

D. Contributions and Organization

In view of the above seemingly easy yet challenging question, we introduced a new denoising algorithm using targeted external databases in [39]. Compared to existing methods, the method proposed in [39] achieves better performance and only requires a small number of external images. In this paper, we extend [39] by offering the following new contributions:

1) Generalization of Existing Methods. We propose a generalized framework which encapsulates a number of denoising algorithms. In particular, we show (in Section III-B) that the proposed group sparsity minimization generalizes both fixed basis and PCA methods. We also show (in Section IV-B) that the proposed local Bayesian MSE solution is a generalization of many spectral operations in existing methods.
2) Improvement Strategies. We propose two improvement strategies for the generalized denoising framework. In Section III-D, we present a patch selection optimization to improve the patch search process. In Section IV-D, we present a soft-thresholding and a hard-thresholding method to improve the spectral coefficients learned by the algorithm.
3) Detailed Proofs. Proofs of the results in this paper and [39] are presented in the Appendix.

The rest of the paper is organized as follows. After outlining the design framework in Section II, we present the above contributions in Sections III–IV. Experimental results are discussed in Section V, and concluding remarks are given in Section VI.

II. OPTIMAL LINEAR DENOISING FILTER

The foundation of our proposed method is the classical optimal linear denoising filter design problem [40]. In this section, we give a brief review of the design framework and highlight its limitations.

A. Optimal Filter

The design of an optimal denoising filter can be posed as follows: Given a noisy patch $q \in \mathbb{R}^d$, and assuming that the noise is i.i.d. Gaussian with zero mean and variance $\sigma^2$, we want to find a linear operator $A \in \mathbb{R}^{d \times d}$ such that the estimate $\hat{p} = Aq$ has the minimum mean squared error (MSE) compared to the ground truth $p \in \mathbb{R}^d$. That is, we want to solve the optimization

$$A = \arg\min_{A} \; \mathbb{E}\left[ \|Aq - p\|_2^2 \right]. \tag{2}$$

Here, we assume that $A$ is symmetric, or otherwise the Sinkhorn-Knopp iteration [41] can be used to symmetrize $A$, provided that entries of $A$ are non-negative. Given a symmetric $A$, one can apply the eigen-decomposition, $A = U \Lambda U^T$, where $U = [u_1, \ldots, u_d] \in \mathbb{R}^{d \times d}$ is the basis matrix and $\Lambda = \operatorname{diag}\{\lambda_1, \ldots, \lambda_d\} \in \mathbb{R}^{d \times d}$ is the diagonal matrix containing the spectral coefficients. With $U$ and $\Lambda$, the optimization problem in (2) becomes

$$(U, \Lambda) = \arg\min_{U, \Lambda} \; \mathbb{E}\left[ \left\| U \Lambda U^T q - p \right\|_2^2 \right], \tag{3}$$

subject to the constraint that $U$ is an orthonormal matrix.

The joint optimization (3) can be solved by noting the following lemma.

Lemma 1: Let $u_i$ be the $i$th column of the matrix $U$, and $\lambda_i$ be the $(i, i)$th entry of the diagonal matrix $\Lambda$. If $q = p + \eta$, where $\eta \overset{\text{iid}}{\sim} \mathcal{N}(0, \sigma^2 I)$, then

$$\mathbb{E}\left[ \left\| U \Lambda U^T q - p \right\|_2^2 \right] = \sum_{i=1}^{d} \left[ (1 - \lambda_i)^2 (u_i^T p)^2 + \sigma^2 \lambda_i^2 \right]. \tag{4}$$

The proof of Lemma 1 is given in [42]. With Lemma 1, the denoised patch as a consequence of (3) is as follows.

Lemma 2: The denoised patch $\hat{p}$ using the optimal $U$ and $\Lambda$ of (3) is

$$\hat{p} = U \operatorname{diag}\left\{ \frac{\|p\|^2}{\|p\|^2 + \sigma^2}, 0, \ldots, 0 \right\} U^T q,$$

where $U$ is any orthonormal matrix with the first column $u_1 = p / \|p\|_2$.

Proof: See Appendix A.
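Lemma 1 and Lemma 2 can be checked numerically with a few lines of NumPy. The sketch below is illustrative only and is not taken from the paper: the function names are hypothetical, and the "direct" MSE uses the standard identity $\mathbb{E}\|Aq - p\|^2 = \|(A - I)p\|^2 + \sigma^2 \operatorname{tr}(AA^T)$, which holds for zero-mean noise with covariance $\sigma^2 I$.

```python
import numpy as np

def filter_matrix(U, lam):
    """Assemble A = U diag(lam) U^T from a basis U and coefficients lam."""
    U = np.asarray(U, dtype=float)
    lam = np.asarray(lam, dtype=float)
    return (U * lam) @ U.T  # broadcasting scales column i of U by lam[i]

def expected_mse_direct(A, p, sigma):
    """E||A q - p||^2 for q = p + eta, eta ~ N(0, sigma^2 I).

    Since A q - p = (A - I) p + A eta and eta has zero mean, the
    expectation is ||(A - I) p||^2 + sigma^2 * trace(A A^T).
    """
    A = np.asarray(A, dtype=float)
    p = np.asarray(p, dtype=float)
    bias = A @ p - p
    return float(bias @ bias + sigma**2 * np.trace(A @ A.T))

def expected_mse_lemma1(U, lam, p, sigma):
    """Right-hand side of (4)."""
    U = np.asarray(U, dtype=float)
    lam = np.asarray(lam, dtype=float)
    proj = U.T @ np.asarray(p, dtype=float)  # entries u_i^T p
    return float(np.sum((1.0 - lam) ** 2 * proj**2 + sigma**2 * lam**2))

def oracle_filter(p, sigma):
    """Filter of Lemma 2; it collapses to p p^T / (||p||^2 + sigma^2)."""
    p = np.asarray(p, dtype=float)
    return np.outer(p, p) / (float(p @ p) + sigma**2)
```

For any orthonormal `U`, `expected_mse_direct(filter_matrix(U, lam), p, sigma)` and `expected_mse_lemma1(U, lam, p, sigma)` should agree up to rounding, and the oracle filter attains the MSE $\sigma^2 \|p\|^2 / (\|p\|^2 + \sigma^2)$.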
Lemma 2 states that if hypothetically we are given the ground truth $p$, the optimal denoising process is to first project the noisy observation $q$ onto the subspace spanned by $p$, then perform a Wiener shrinkage $\|p\|^2 / (\|p\|^2 + \sigma^2)$, and finally re-project the shrinkage coefficients to obtain the denoised estimate. However, since in reality we never have access to the ground truth $p$, this optimal result is not achievable.

B. Problem Statement

Since the oracle optimal filter is not achievable in practice, the question becomes whether it is possible to find a surrogate solution that does not require the ground truth $p$.

To answer this question, it is helpful to separate the joint optimization (3) by first fixing $U$ and minimizing the MSE with respect to $\Lambda$. In this case, one can show that (4) achieves the minimum when

$$\lambda_i = \frac{(u_i^T p)^2}{(u_i^T p)^2 + \sigma^2}, \tag{5}$$

in which case the minimum MSE estimator is given by

$$\hat{p} = U \operatorname{diag}\left\{ \frac{(u_1^T p)^2}{(u_1^T p)^2 + \sigma^2}, \ldots, \frac{(u_d^T p)^2}{(u_d^T p)^2 + \sigma^2} \right\} U^T q, \tag{6}$$

where $\{u_1, \ldots, u_d\}$ are the columns of $U$.

Inspecting (6), we identify two parts of the problem:

1) Determine $U$. The choice of $U$ plays a critical role in the denoising performance. In the literature, $U$ is typically chosen as the FFT or the DCT bases [3, 4]. In [5, 7, 8], the PCA bases of various data matrices are proposed. However, the optimality of these bases is not fully understood.
2) Determine $\Lambda$. Even if $U$ is fixed, the optimal $\Lambda$ in (5) still depends on the unknown ground truth $p$. In [3], $\Lambda$ is determined by hard-thresholding a stack of DCT coefficients or applying an empirical Wiener filter constructed from a first-pass estimate. In [7], $\Lambda$ is formed by the PCA coefficients of a set of relevant noisy patches. Again, it is unclear which of these is optimal.
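For completeness, the minimizer (5) follows from (4) in one step, because for a fixed $U$ the sum in (4) decouples over $i$. The short derivation below uses the shorthand $a_i = (u_i^T p)^2$, which is introduced here for convenience and does not appear in the paper.

```latex
% Each summand of (4) depends on a single \lambda_i:
f_i(\lambda_i) = (1-\lambda_i)^2 a_i + \sigma^2 \lambda_i^2,
\qquad a_i \overset{\text{def}}{=} (u_i^T p)^2 .

% Stationarity:
f_i'(\lambda_i) = -2(1-\lambda_i)\,a_i + 2\sigma^2\lambda_i = 0
\;\Longrightarrow\;
\lambda_i = \frac{a_i}{a_i + \sigma^2}.

% Convexity (so the stationary point is the minimum):
f_i''(\lambda_i) = 2\,(a_i + \sigma^2) > 0 .

% Resulting per-coefficient and total MSE:
f_i\!\left(\tfrac{a_i}{a_i+\sigma^2}\right) = \frac{a_i\,\sigma^2}{a_i + \sigma^2},
\qquad
\text{MSE}_{\min}(U) = \sum_{i=1}^{d} \frac{a_i\,\sigma^2}{a_i+\sigma^2}.
```

The last expression makes the role of $U$ explicit: the minimum MSE for a given basis depends only on how the energy of $p$ is distributed over the coefficients $u_i^T p$.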
Motivated by the problems about $U$ and $\Lambda$, in the following two sections we present our proposed method for each of these problems. We discuss its relationship to prior works, and present ways to further improve it.

III. DETERMINE U

In this section, we present our proposed method to determine the basis matrix $U$ and show that it is a generalization of a number of existing denoising algorithms. We also discuss ways to improve $U$.

A. Patch Selection via k Nearest Neighbors

Given a noisy patch $q$ and a targeted database $\{p_j\}_{j=1}^{n}$, our first task is to fetch the $k$ most "relevant" patches. The patch selection is performed by measuring the similarity between $q$ and each of $\{p_j\}_{j=1}^{n}$, defined as

$$d(q, p_j) = \|q - p_j\|^2, \quad \text{for } j = 1, \ldots, n. \tag{7}$$

We note that (7) is equivalent to the standard $k$ nearest neighbors ($k$NN) search.

$k$NN has a drawback that under the $\ell_2$ distance, some of the $k$ selected patches may not be truly relevant to the denoising task, because the query patch $q$ is noisy. We will come back to this issue in Section III-D by discussing methods to improve the robustness of the $k$NN.

B. Group Sparsity

Without loss of generality, we assume that the $k$NN returned by the above procedure are the first $k$ patches of the data, i.e., $\{p_j\}_{j=1}^{k}$. Our goal now is to construct $U$ from $\{p_j\}_{j=1}^{k}$.

We postulate that a good $U$ should have two properties. First, $U$ should make the projected vectors $\{U^T p_j\}_{j=1}^{k}$ similar in both magnitude and location. This hypothesis follows from the observation that since $\{p_j\}_{j=1}^{k}$ have small $\ell_2$ distances from $q$, it must hold that any $p_i$ and $p_j$ (hence $U^T p_i$ and $U^T p_j$) in the set should also be similar. Second, we require that each projected vector $U^T p_j$ contains as few non-zeros as possible, i.e., is sparse.
The reason is related to the shrinkage step to be discussed in Section IV, because a vector of few non-zero coefficients has higher energy concentration and hence is more effective for denoising.

In order to satisfy these two criteria, we propose to consider the idea of group sparsity¹, which is characterized by the matrix $\ell_{1,2}$ norm, defined as²

$$\|X\|_{1,2} \overset{\text{def}}{=} \sum_{i=1}^{d} \|x_i\|_2, \tag{8}$$

for any matrix $X \in \mathbb{R}^{d \times k}$, where $x_i \in \mathbb{R}^k$ is the $i$th row of the matrix $X$. In words, a small $\|X\|_{1,2}$ makes sure that $X$ has few non-zero entries, and the non-zero entries are located similarly in each column [6, 43]. A pictorial illustration is shown in Figure 1.

¹ Group sparsity was first proposed by Cotter et al. for group sparse reconstruction [43] and later used by Mairal et al. for denoising [6], but towards a different end from the method presented in this paper.
² In general one can define the $\ell_{p,q}$ norm as $\|X\|_{p,q} = \sum_{i=1}^{d} \|x_i\|_q^p$, cf. [6].

[Fig. 1, panels (a) sparse and (b) group sparse: Comparison between sparsity (where columns are sparse, but do not coordinate) and group sparsity (where all columns are sparse with similar locations).]

Going back to our problem, we propose to minimize the $\ell_{1,2}$-norm of the matrix $U^T P$:

$$\underset{U}{\text{minimize}} \;\; \|U^T P\|_{1,2} \quad \text{subject to} \;\; U^T U = I, \tag{9}$$

where $P \overset{\text{def}}{=} [p_1, \ldots, p_k]$. The equality constraint in (9) ensures that $U$ is orthonormal. Thus, the solution of (9) is an orthonormal matrix $U$ which maximizes the group sparsity of the data $P$.

Interestingly, and surprisingly, the solution of (9) is identical to the classical principal component analysis (PCA). The following lemma summarizes the observation.

Lemma 3: The solution to (9) is given by

$$[U, S] = \operatorname{eig}(P P^T), \tag{10}$$

where $S$ is the corresponding eigenvalue matrix.

Proof: See Appendix B.
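The $k$NN search in (7), the $\ell_{1,2}$ cost in (8)–(9), and the PCA basis of Lemma 3 can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, the database is assumed to be stored with one vectorized patch per row, and `numpy.linalg.eigh` stands in for the `eig` in (10).

```python
import numpy as np

def knn_patches(q, database, k):
    """Indices of the k rows of `database` closest to q under (7)."""
    q = np.asarray(q, dtype=float)
    database = np.asarray(database, dtype=float)  # shape (n, d), one patch per row
    dist = np.sum((database - q) ** 2, axis=1)     # squared l2 distances
    return np.argsort(dist, kind="stable")[:k]

def l12_norm(X):
    """Matrix l_{1,2} norm of (8): sum of the l2 norms of the rows of X."""
    return float(np.sum(np.linalg.norm(np.asarray(X, dtype=float), axis=1)))

def group_sparsity_cost(U, P):
    """Objective of (9), ||U^T P||_{1,2}, for a d x k data matrix P."""
    U = np.asarray(U, dtype=float)
    P = np.asarray(P, dtype=float)
    return l12_norm(U.T @ P)

def pca_basis(P):
    """Eigen-decomposition of P P^T as in (10), eigenvalues in descending order."""
    P = np.asarray(P, dtype=float)
    S, U = np.linalg.eigh(P @ P.T)   # eigh: symmetric input, ascending eigenvalues
    order = np.argsort(S)[::-1]
    return U[:, order], S[order]
```

As a sanity check of Lemma 3, `group_sparsity_cost(pca_basis(P)[0], P)` should not exceed the cost obtained with any other orthonormal basis, for example the identity (i.e., no transform at all).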
Remark 1: In practice, it is possible to improve the fidelity of the data matrix $P$ by introducing a diagonal weight matrix

$$W = \frac{1}{Z} \operatorname{diag}\left\{ e^{-\|q - p_1\|^2 / h^2}, \ldots, e^{-\|q - p_k\|^2 / h^2} \right\}, \tag{11}$$

for some user tunable parameter $h$ and a normalization constant $Z \overset{\text{def}}{=} \mathbf{1}^T W \mathbf{1}$. Consequently, we can define (reusing the symbol $P$ for the weighted data matrix)

$$P = P W^{1/2}. \tag{12}$$

Hence (10) becomes $[U, S] = \operatorname{eig}(P W P^T)$.

C. Relationship to Prior Works

The fact that (10) is the solution to a group sparsity minimization problem allows us to understand the performance of a number of existing denoising algorithms to some extent.

1) BM3D [3]: It is perhaps a misconception that the underlying principle of BM3D is to enforce sparsity of the 3-dimensional data volume (which we shall call a 3-way tensor). However, what BM3D enforces is the group sparsity of the slices of the tensor, not the sparsity of the tensor.

To see this, we note that the 3-dimensional transforms in BM3D are separable (e.g., DCT2 + Haar in its default setting). If the patches $p_1, \ldots, p_k$ are sufficiently similar, the DCT2 coefficients will be similar in both magnitude and location³. Therefore, by fixing the frequency location of a DCT2 coefficient and tracing the DCT2 coefficients along the third axis, the output signal will be almost flat. Hence, the final Haar transform will return a sparse vector. Clearly, such sparsity is based on the stationarity of the DCT2 coefficients along the third axis. In essence, this is group sparsity.

³ By DCT2 location we mean the frequency of the DCT2 components.

2) HOSVD [9]: The true tensor sparsity can only be utilized by the high order singular value decomposition (HOSVD), which is recently studied in [9]. Let $\mathcal{P} \in \mathbb{R}^{\sqrt{d} \times \sqrt{d} \times k}$ be the tensor obtained by stacking the patches $p_1, \ldots, p_k$ into a 3-dimensional array. HOSVD seeks three orthonormal matrices $U^{(1)} \in \mathbb{R}^{\sqrt{d} \times \sqrt{d}}$, $U^{(2)} \in \mathbb{R}^{\sqrt{d} \times \sqrt{d}}$, $U^{(3)} \in \mathbb{R}^{k \times k}$ and an array $\mathcal{S} \in \mathbb{R}^{\sqrt{d} \times \sqrt{d} \times k}$, such that

$$\mathcal{S} = \mathcal{P} \times_1 U^{(1)T} \times_2 U^{(2)T} \times_3 U^{(3)T},$$

where $\times_k$ denotes a tensor mode-$k$ multiplication [44].

As reported in [9], the performance of HOSVD is indeed worse than BM3D. This phenomenon can now be explained, because HOSVD ignores the fact that image patches tend to be group sparse instead of being tensor sparse.

3) Shape-adaptive BM3D [4]: As a variation of BM3D, SA-BM3D groups similar patches according to a shape-adaptive mask. Under our proposed framework, this shape-adaptive mask can be modeled as a spatial weight matrix $W_s \in \mathbb{R}^{d \times d}$ (where the subscript $s$ denotes spatial). Adding $W_s$ to (12), we define

$$P = W_s^{1/2} P W^{1/2}. \tag{13}$$

Consequently, the PCA of $P$ is equivalent to SA-BM3D. Here, the matrix $W_s$ is used to control the relative emphasis of each pixel in the spatial coordinate.

4) BM3D-PCA [5] and LPG-PCA [7]: The idea of both BM3D-PCA and LPG-PCA is that given $p_1, \ldots, p_k$, $U$ is determined as the principal components of $P = [p_1, \ldots, p_k]$. Incidentally, such approaches arrive at the same result as (10), i.e., the principal components are indeed the solution of a group sparse minimization. However, the key role of group sparsity is not noticed in [5] and [7]. This provides additional theoretical justification for both methods.

5) KSVD [18]: In KSVD, the dictionary plays the role of our basis matrix $U$. The dictionary can be trained either from the single noisy image, or from an external (generic or targeted) database. However, the training is performed once for all patches of the image. In other words, the noisy patches share a common dictionary. In our proposed method, each noisy patch has an individually trained basis matrix.
Clearly, the latter approach, while computationally more expensive, is significantly more data adaptive than KSVD.

D. Improvement: Patch Selection Refinement

The optimization problem (9) suggests that the $U$ computed from (10) is the optimal basis with respect to the reference patches $\{p_j\}_{j=1}^{k}$. However, one issue that remains is how to improve the selection of $k$ patches from the original $n$ patches. Our proposed approach is to formulate the patch selection as an optimization problem

$$\underset{x}{\text{minimize}} \;\; c^T x + \tau \varphi(x) \quad \text{subject to} \;\; x^T \mathbf{1} = k, \;\; 0 \le x \le 1, \tag{14}$$

where $c = [c_1, \cdots, c_n]^T$ with $c_j \overset{\text{def}}{=} \|q - p_j\|^2$, $\varphi(x)$ is a penalty function and $\tau > 0$ is a parameter. In (14), each $c_j$ is the distance $\|q - p_j\|^2$, and $x_j$ is a weight indicating the emphasis of $\|q - p_j\|^2$. Therefore, the minimizer of (14) is a sequence of weights that minimize the overall distance.

[Fig. 2, panels (a) $p$, (b) $\varphi(x) = 0$, (c) $\varphi(x) = \mathbf{1}^T B x$, (d) $\varphi(x) = e^T x$: Refined patch matching results: (a) ground truth, (b) 10 best reference patches using $q$ ($\sigma = 50$), (c) 10 best reference patches using $\varphi(x) = \mathbf{1}^T B x$ (where $\tau = 1/(2n)$), (d) 10 best reference patches using $\varphi(x) = e^T x$ (where $\tau = 1$).]

To gain more insight into (14), we first consider the special case when the penalty term $\varphi(x) = 0$. We claim that, under this special condition, the solution of (14) is equivalent to the original $k$NN solution in (7). This result is important, because $k$NN is a fundamental building block of all patch-based denoising algorithms. By linking $k$NN to the optimization formulation in (14) we provide a systematic strategy to improve the $k$NN.

The proof of the equivalence between $k$NN and (14) can be understood via the following case study where $n = 2$ and $k = 1$. In this case, the constraints $x^T \mathbf{1} = 1$ and $0 \le x \le 1$ form a closed line segment in the positive quadrant.
Since the objective function $c^T x$ is linear, the optimal point must be at one of the vertices of the line segment, which is either $x = [0, 1]^T$ or $x = [1, 0]^T$. Thus, by checking which of $c_1$ or $c_2$ is smaller, we can determine the optimal solution by setting $x_1 = 1$ if $c_1$ is smaller (and vice versa). Correspondingly, if $x_1 = 1$, then the first patch $p_1$ should be selected. Clearly, the solution returned by the optimization is exactly the $k$NN solution. A similar argument holds for higher dimensions, hence justifies our claim.

Knowing that $k$NN can be formulated as (14), our next task is to choose an appropriate penalty term. The following are two possible choices.

1) Regularization by Cross Similarity: The first choice of $\varphi(x)$ is to consider $\varphi(x) = x^T B x$, where $B \in \mathbb{R}^{n \times n}$ is a symmetric matrix with $B_{ij} \overset{\text{def}}{=} \|p_i - p_j\|^2$. Writing (14) explicitly, we see that the optimization problem (14) becomes

$$\underset{0 \le x \le 1,\; x^T \mathbf{1} = k}{\text{minimize}} \;\; \sum_{j} x_j \|q - p_j\|^2 + \tau \sum_{i,j} x_i x_j \|p_i - p_j\|^2. \tag{15}$$

The penalized problem (15) suggests that the optimal $k$ reference patches should not be determined merely from $\|q - p_j\|^2$ (which could be problematic due to the noise present in $q$). Instead, a good reference patch should also be similar to all other patches that are selected. The cross similarity term $x_i x_j \|p_i - p_j\|^2$ provides a way for such a measure. This shares some similarities with the patch ordering concept proposed by Cohen and Elad [29]. The difference is that the patch ordering proposed in [29] is a shortest path problem that tries to organize the noisy patches, whereas ours is to solve a regularized optimization.

[Fig. 3: Denoising results: a ground truth patch cropped from an image, and the denoised patches using different improvement schemes. Panels: $p$ (ground truth); $\varphi(x) = 0$, 28.29 dB; $\varphi(x) = \mathbf{1}^T B x$, 28.50 dB; $\varphi(x) = e^T x$, 29.30 dB. Noise standard deviation is $\sigma = 50$. $\tau = 1/(2n)$ for $\varphi(x) = \mathbf{1}^T B x$ and $\tau = 1$ for $\varphi(x) = e^T x$.]

Problem (15) is in general not convex because the matrix $B$ is not positive semidefinite. One way to relax the formulation is to consider $\varphi(x) = \mathbf{1}^T B x$. Geometrically, the solution of using $\varphi(x) = \mathbf{1}^T B x$ tends to identify patches that are close to the sum of all other patches in the set. In many cases, this is similar to $\varphi(x) = x^T B x$, which finds patches that are similar to every individual patch in the set. In practice, we find that the difference between $\varphi(x) = x^T B x$ and $\varphi(x) = \mathbf{1}^T B x$ in the final denoising result (PSNR of the entire image) is marginal. Thus, for computational efficiency we choose $\varphi(x) = \mathbf{1}^T B x$.

2) Regularization by First-pass Estimate: The second choice of $\varphi(x)$ is based on a first-pass estimate $\bar{p}$ using some denoising algorithm, for example, BM3D or the proposed method without this patch selection step. In this case, by defining $e_j \overset{\text{def}}{=} \|\bar{p} - p_j\|^2$ we consider the penalty function $\varphi(x) = e^T x$, where $e = [e_1, \cdots, e_n]^T$. This implies the following optimization problem

$$\underset{0 \le x \le 1,\; x^T \mathbf{1} = k}{\text{minimize}} \;\; \sum_{j} x_j \|q - p_j\|^2 + \tau \sum_{j} x_j \|\bar{p} - p_j\|^2. \tag{16}$$

By identifying the objective of (16) as $(c + \tau e)^T x$, we observe that (16) can be solved in closed form by locating the $k$ smallest entries of the vector $c + \tau e$.

The interpretation of (16) is straightforward: The linear combination of $\|q - p_j\|^2$ and $\|\bar{p} - p_j\|^2$ shows a competition between the noisy patch $q$ and the first-pass estimate $\bar{p}$. In most of the common scenarios, $\|q - p_j\|^2$ is preferred when the noise level is low, whereas $\bar{p}$ is preferred when the noise level is high. This in turn requires a good choice of $\tau$. Empirically, we find that $\tau = 0.01$ when $\sigma < 30$ and $\tau = 1$ when $\sigma > 30$ is a good balance between the performance and generality.
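The three selection rules discussed above all reduce to picking the $k$ smallest entries of a cost vector, since the objectives with $\varphi(x) = 0$, $\varphi(x) = \mathbf{1}^T B x = (B^T \mathbf{1})^T x$ and $\varphi(x) = e^T x$ are linear in $x$. The sketch below illustrates this under stated assumptions: the function names are hypothetical, patches are stored one per row, and $B$ is formed densely, which costs $O(n^2 d)$ memory and is only sensible for small $n$.

```python
import numpy as np

def k_smallest(cost, k):
    """Sorted indices of the k smallest entries of a cost vector."""
    return np.sort(np.argsort(cost, kind="stable")[:k])

def _distances(x, patches):
    """Vector with entries ||x - p_j||^2 for patches stored as rows."""
    return np.sum((np.asarray(patches, dtype=float) - np.asarray(x, dtype=float)) ** 2, axis=1)

def select_knn(q, patches, k):
    """Problem (14) with phi(x) = 0, i.e. plain kNN on the noisy patch q."""
    return k_smallest(_distances(q, patches), k)

def select_cross_similarity(q, patches, k, tau):
    """Problem (14) with phi(x) = 1^T B x, where B_ij = ||p_i - p_j||^2."""
    patches = np.asarray(patches, dtype=float)
    diff = patches[:, None, :] - patches[None, :, :]   # shape (n, n, d)
    B = np.sum(diff**2, axis=2)
    # 1^T B x is linear in x with coefficient vector given by the column sums of B.
    return k_smallest(_distances(q, patches) + tau * B.sum(axis=0), k)

def select_first_pass(q, p_bar, patches, k, tau):
    """Problem (16): k smallest entries of c + tau * e, e_j = ||p_bar - p_j||^2."""
    return k_smallest(_distances(q, patches) + tau * _distances(p_bar, patches), k)
```

With `tau = 0` both refined rules fall back to `select_knn`, which mirrors the equivalence between (14) and $k$NN argued above.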
[Fig. 4: Denoising results of three patch selection improvement schemes. Horizontal axis: noise standard deviation; vertical axis: PSNR; curves: $\varphi(x) = 0$, $\varphi(x) = \mathbf{1}^T B x$, $\varphi(x) = e^T x$. The PSNR value is computed from a 432 × 381 image.]

3) Comparisons: To demonstrate the effectiveness of the two proposed patch selection steps, we consider a ground truth (clean) patch shown in Figure 2 (a). From a pool of $n = 200$ reference patches, we apply an exhaustive search algorithm to choose $k = 40$ patches that best match the noisy observation $q$, where the first 10 patches are shown in Figure 2 (b). The results of the two selection refinement methods are shown in Figure 2 (c)-(d), where in both cases the parameter $\tau$ is adjusted for the best performance. For the case of $\varphi(x) = \mathbf{1}^T B x$, we set $\tau = 1/(200n)$ when $\sigma < 30$ and $\tau = 1/(2n)$ when $\sigma > 30$. For the case of $\varphi(x) = e^T x$, we use the denoised result of BM3D as the first-pass estimate $\bar{p}$, and set $\tau = 0.01$ when $\sigma < 30$ and $\tau = 1$ when $\sigma > 30$. The results in Figure 3 show that the PSNR increases from 28.29 dB to 28.50 dB if we use $\varphi(x) = \mathbf{1}^T B x$, and further increases to 29.30 dB if we use $\varphi(x) = e^T x$. The full performance comparison is shown in Figure 4, where we show the PSNR curve for a range of noise levels of an image. Since the performance of $\varphi(x) = e^T x$ is consistently better than $\varphi(x) = \mathbf{1}^T B x$, in the rest of the paper we focus on $\varphi(x) = e^T x$.

IV. DETERMINE Λ

In this section, we present our proposed method to determine $\Lambda$ for a fixed $U$. Our proposed method is based on the concept of a Bayesian MSE estimator.

A. Bayesian MSE Estimator

Recall that the noisy patch is related to the latent clean patch as $q = p + \eta$, where $\eta \overset{\text{iid}}{\sim} \mathcal{N}(0, \sigma^2 I)$ denotes the noise. Therefore, the conditional distribution of $q$ given $p$ is

$$f(q \mid p) = \mathcal{N}(p, \sigma^2 I). \tag{17}$$

Assuming that the prior distribution $f(p)$ is known, it is natural to consider the Bayesian mean squared error (BMSE) between the estimate $\hat{p} \overset{\text{def}}{=} U \Lambda U^T q$ and the ground truth $p$:

$$\text{BMSE} \overset{\text{def}}{=} \mathbb{E}_{p}\left[ \mathbb{E}_{q \mid p}\left[ \|\hat{p} - p\|_2^2 \,\middle|\, p \right] \right]. \tag{18}$$

Here, the subscripts indicate the distributions under which the expectations are taken.

The BMSE defined in (18) suggests that the optimal $\Lambda$ should be the minimizer of the optimization problem

$$\Lambda = \arg\min_{\Lambda} \; \mathbb{E}_{p}\left[ \mathbb{E}_{q \mid p}\left[ \left\| U \Lambda U^T q - p \right\|_2^2 \,\middle|\, p \right] \right]. \tag{19}$$

In the next subsection we discuss how to solve (19).

B. Localized Prior from the Targeted Database

Minimizing the BMSE over $\Lambda$ involves knowing the prior distribution $f(p)$. However, in general, the exact form of $f(p)$ is never known. This leads to many popular models in the literature, e.g., the Gaussian mixture model [37], the fields of experts model [36, 45], and the expected patch log-likelihood model (EPLL) [19, 46].

One common issue of all these models is that the prior $f(p)$ is built from a generic database of patches. In other words, $f(p)$ models all patches in the database. As a result, $f(p)$ is often a high dimensional distribution with complicated shapes.

In our targeted database setting, the difficult prior modeling becomes a much simpler task. The reason is that while the shape of the distribution $f(p)$ is still unknown, the subsampled reference patches (which are few but highly representative) could be well approximated as samples drawn from a single Gaussian centered around some mean $\mu$ and covariance $\Sigma$. Therefore, by appropriately estimating $\mu$ and $\Sigma$ of this localized prior, we can derive the optimal $\Lambda$ as given by the following lemma:

Lemma 4: Let $f(q \mid p) = \mathcal{N}(p, \sigma^2 I)$, and let $f(p) = \mathcal{N}(\mu, \Sigma)$ for any vector $\mu$ and matrix $\Sigma$. Then the optimal $\Lambda$ that minimizes (18) is

$$\Lambda = \operatorname{diag}(G + \sigma^2 I)^{-1} \operatorname{diag}(G), \tag{20}$$

where $G \overset{\text{def}}{=} U^T \mu \mu^T U + U^T \Sigma U$.

Proof: See Appendix C.
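Lemma 4 can be illustrated with a small sketch. Since the diagonal of $G$ collects the second moments $\mathbb{E}[(u_i^T p)^2]$, averaging (4) over the prior gives the closed-form BMSE $\sum_i [(1 - \lambda_i)^2 G_{ii} + \sigma^2 \lambda_i^2]$, and (20) is its entrywise minimizer. The code below assumes $\sigma > 0$ and an orthonormal `U`; the function names are hypothetical and not part of the paper.

```python
import numpy as np

def second_moment_diag(U, mu, Sigma):
    """Diagonal of G = U^T mu mu^T U + U^T Sigma U, i.e. E[(u_i^T p)^2]."""
    U = np.asarray(U, dtype=float)
    mu = np.asarray(mu, dtype=float)
    Sigma = np.asarray(Sigma, dtype=float)
    G = U.T @ (np.outer(mu, mu) + Sigma) @ U
    return np.diag(G).copy()

def optimal_lambda(U, mu, Sigma, sigma):
    """Diagonal of Lambda in (20): G_ii / (G_ii + sigma^2). Assumes sigma > 0."""
    g = second_moment_diag(U, mu, Sigma)
    return g / (g + sigma**2)

def bmse(lam, U, mu, Sigma, sigma):
    """BMSE (18) in closed form, obtained by averaging (4) over the prior on p."""
    lam = np.asarray(lam, dtype=float)
    g = second_moment_diag(U, mu, Sigma)
    return float(np.sum((1.0 - lam) ** 2 * g + sigma**2 * lam**2))
```

When $\Sigma = 0$ and $\mu = p$, `optimal_lambda` reduces to the oracle coefficients in (5), which is one way to see that the covariance term is what distinguishes the localized prior from a point estimate.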
To specify $\mu$ and $\Sigma$, we let

$$\mu = \sum_{j=1}^{k} w_j p_j, \qquad \Sigma = \sum_{j=1}^{k} w_j (p_j - \mu)(p_j - \mu)^T, \tag{21}$$

where $w_j$ is the $j$th diagonal entry of $W$ defined in (11). Intuitively, an interpretation of (21) is that $\mu$ is the non-local mean of the reference patches. However, the more important part of (21) is $\Sigma$, which measures the uncertainty of the reference patches with respect to $\mu$. This uncertainty measure makes some fundamental improvements to existing methods which will be discussed in Section IV-C.

We note that Lemma 4 holds even if $f(p)$ is not Gaussian. In fact, for any distribution $f(p)$ with the first cumulant $\mu$ and the second cumulant $\Sigma$, the optimal solution in (41) still holds. This result is equivalent to the classical linear minimum MSE (LMMSE) estimation [47].

From a computational perspective, $\mu$ and $\Sigma$ defined in (21) lead to a very efficient implementation as illustrated by the following lemma.

Lemma 5: Using $\mu$ and $\Sigma$ defined in (21), the optimal $\Lambda$ is given by

$$\Lambda = \operatorname{diag}(S + \sigma^2 I)^{-1} \operatorname{diag}(S), \tag{22}$$

where $S$ is the eigenvalue matrix of $P W P^T$.

Proof: See Appendix D.

Combining Lemma 5 with Lemma 3, we observe that for any set of reference patches $\{p_j\}_{j=1}^{k}$, $U$ and $\Lambda$ can be determined simultaneously through the eigen-decomposition of $P W P^T$. Therefore, we arrive at the overall algorithm shown in Algorithm 1.

Algorithm 1 Proposed Algorithm
Input: Noisy patch $q$, noise variance $\sigma^2$, and clean reference patches $p_1, \ldots, p_k$
Output: Estimate $\hat{p}$
Learn $U$ and $\Lambda$:
• Form data matrix $P$ and weight matrix $W$
• Compute eigen-decomposition $[U, S] = \operatorname{eig}(P W P^T)$
• Compute $\Lambda = \operatorname{diag}(S + \sigma^2 I)^{-1} \operatorname{diag}(S)$
Denoise: $\hat{p} = U \Lambda U^T q$.

C. Relationship to Prior Works

It is interesting to note that many existing patch-based denoising algorithms assume some notion of prior, either explicitly or implicitly. In this subsection, we mention a few of the important ones.
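Before turning to the comparisons, Algorithm 1 and the identity behind Lemma 5 can be sketched in NumPy. This is a minimal illustration under stated assumptions rather than the authors' code: the function names are hypothetical, the reference patches are stored as the columns of a $d \times k$ array, the weights follow (11) (shifted by the smallest distance before exponentiation, which leaves the normalized weights unchanged but avoids underflow), and `numpy.linalg.eigh` plays the role of `eig`.

```python
import numpy as np

def learn_filter(q, P, sigma, h):
    """Learn U and the diagonal of Lambda from reference patches (columns of P)."""
    q = np.asarray(q, dtype=float)
    P = np.asarray(P, dtype=float)                 # shape (d, k)
    d2 = np.sum((P - q[:, None]) ** 2, axis=0)     # ||q - p_j||^2 for each column
    w = np.exp(-(d2 - d2.min()) / h**2)            # weights of (11), shift-stabilized
    w /= w.sum()                                   # normalization by Z
    S, U = np.linalg.eigh((P * w) @ P.T)           # eigen-decomposition of P W P^T
    S = np.clip(S, 0.0, None)                      # remove tiny negative round-off
    lam = S / (S + sigma**2)                       # spectral coefficients (22)
    return U, S, lam, w

def denoise_patch(q, P, sigma, h):
    """Algorithm 1: p_hat = U Lambda U^T q."""
    U, _, lam, _ = learn_filter(q, P, sigma, h)
    return U @ (lam * (U.T @ np.asarray(q, dtype=float)))

def localized_prior(P, w):
    """Mean and covariance of (21) for weights w that sum to one."""
    P = np.asarray(P, dtype=float)
    mu = P @ w
    centered = P - mu[:, None]
    return mu, (centered * w) @ centered.T

def lemma4_lambda(U, mu, Sigma, sigma):
    """Diagonal of (20), used here only to cross-check Lemma 5."""
    g = np.diag(U.T @ (np.outer(mu, mu) + Sigma) @ U)
    return g / (g + sigma**2)
```

Because the weights sum to one, $\mu\mu^T + \Sigma = P W P^T$, so `lemma4_lambda` evaluated with the output of `localized_prior` should match the `lam` returned by `learn_filter` up to rounding. The sketch handles a single patch; aggregating overlapping patch estimates into an image is outside its scope.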
For notational simplicity, we will focus on the $i$th diagonal entry of $\boldsymbol{\Lambda} = \mathrm{diag}\{\lambda_1, \ldots, \lambda_d\}$.

1) BM3D [3], Shape-Adaptive BM3D [4] and BM3D-PCA [5]: BM3D and its variants have two denoising steps. In the first step, the algorithm applies a basis matrix $\mathbf{U}$ (either a pre-defined basis such as DCT, or a basis learned from PCA). Then, it applies hard-thresholding to the projected coefficients to obtain a filtered image $\bar{\mathbf{p}}$. In the second step, the filtered image $\bar{\mathbf{p}}$ is used as a pilot estimate to define the spectral component

$$\lambda_i = \frac{(\mathbf{u}_i^T\bar{\mathbf{p}})^2}{(\mathbf{u}_i^T\bar{\mathbf{p}})^2 + \sigma^2}. \tag{23}$$

Following our proposed Bayesian framework, we observe that using $\bar{\mathbf{p}}$ in (23) is equivalent to assuming a Dirac delta prior

$$f(\mathbf{p}) = \delta(\mathbf{p} - \bar{\mathbf{p}}). \tag{24}$$

In other words, the prior that BM3D assumes is concentrated at one point, $\bar{\mathbf{p}}$, and there is no measure of uncertainty. As a result, the algorithm becomes highly sensitive to the first-pass estimate. In contrast, (21) suggests that the first-pass estimate can be defined as a non-local mean solution. Additionally, we incorporate a covariance matrix $\boldsymbol{\Sigma}$ to measure the uncertainty of observing $\boldsymbol{\mu}$. Together, these give the denoising algorithm a more robust estimate, which is absent from BM3D and its variants.

Fig. 5: Generic prior vs targeted priors: The generic prior $f(\mathbf{p})$ has an arbitrary shape spanning the entire space; targeted priors are concentrated at their means. In this figure, $f_1(\mathbf{p})$ and $f_2(\mathbf{p})$ (with means $\boldsymbol{\mu}_1$ and $\boldsymbol{\mu}_2$) illustrate two targeted priors which correspond to two patches of an image.

2) LPG-PCA [7]: In LPG-PCA, the $i$th spectral component $\lambda_i$ is defined as

$$\lambda_i = \frac{(\mathbf{u}_i^T\mathbf{q})^2 - \sigma^2}{(\mathbf{u}_i^T\mathbf{q})^2}, \tag{25}$$

where $\mathbf{q}$ is the noisy patch. The (implicit) assumption in [7] is that $(\mathbf{u}_i^T\mathbf{q})^2 \approx (\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2$, so substituting $(\mathbf{u}_i^T\mathbf{p})^2 \approx (\mathbf{u}_i^T\mathbf{q})^2 - \sigma^2$ into (5) yields (25).
However, the assumption implies the existence of a perturbation $\Delta\mathbf{p}$ such that $(\mathbf{u}_i^T\mathbf{q})^2 = (\mathbf{u}_i^T(\mathbf{p} + \Delta\mathbf{p}))^2 + \sigma^2$. Letting $\bar{\mathbf{p}} = \mathbf{p} + \Delta\mathbf{p}$, we see that LPG-PCA implicitly assumes a Dirac prior as in (23) and (24). The denoising result depends on the magnitude of $\Delta\mathbf{p}$.

3) Generic Global Prior [22]: As a comparison to methods using generic databases such as [22], we note that the key difference lies in the usage of a global prior versus a local prior. Figure 5 illustrates the concept pictorially. The generic (global) prior $f(\mathbf{p})$ covers the entire space, whereas the targeted (local) prior is concentrated at its mean. The advantage of the local prior is that it allows one to denoise an image with few reference patches. It saves us from the intractable computation of learning the global prior, which is a high-dimensional non-parametric function.

4) Generic Local Prior – EPLL [19], K-SVD [18, 35]: Compared to learning-based methods that use local priors, such as EPLL [19] and K-SVD [18, 35], the most important merit of the proposed method is that it requires significantly fewer training samples. A thorough justification will be discussed in Section V.

5) PLOW [48]: PLOW has a design process similar to ours in that it considers the optimal filter. The major difference is that in PLOW, the denoising filter is derived from the full covariance matrices of the data and noise. As we will see in the next subsection, the linear denoising filter of our work is a truncated SVD matrix computed from a set of similar patches. The merit of the truncation is that it often reduces the MSE in the bias-variance trade-off [42].

D. Improving $\boldsymbol{\Lambda}$

The Bayesian framework proposed above can be generalized to further improve the denoising performance. Referring to (19), we observe that the BMSE optimization can be reformulated to incorporate a penalty term in $\boldsymbol{\Lambda}$.
Here, we consider the following $\ell_\alpha$-penalized BMSE:

$$\mathrm{BMSE}_\alpha \overset{\text{def}}{=} \mathbb{E}_{\mathbf{p}}\Big[\mathbb{E}_{\mathbf{q}|\mathbf{p}}\big[\|\mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^T\mathbf{q} - \mathbf{p}\|_2^2 \,\big|\, \mathbf{p}\big]\Big] + \gamma\|\boldsymbol{\Lambda}\mathbf{1}\|_\alpha, \tag{26}$$

where $\gamma > 0$ is the penalty parameter, and $\alpha \in \{0, 1\}$ controls which norm is used. The solution to the minimization of (26) is given by the following lemma.

Lemma 6: Let $s_i$ be the $i$th diagonal entry of $\mathbf{S}$, where $\mathbf{S}$ is the eigenvalue matrix of $\mathbf{P}\mathbf{W}\mathbf{P}^T$. Then the optimal $\boldsymbol{\Lambda}$ that minimizes $\mathrm{BMSE}_\alpha$ is $\mathrm{diag}\{\lambda_1, \ldots, \lambda_d\}$, where

$$\lambda_i = \max\left(\frac{s_i - \gamma/2}{s_i + \sigma^2},\, 0\right), \quad \text{for } \alpha = 1, \tag{27}$$

$$\lambda_i = \frac{s_i}{s_i + \sigma^2}\,\mathbb{1}\!\left(\frac{s_i^2}{s_i + \sigma^2} > \gamma\right), \quad \text{for } \alpha = 0. \tag{28}$$

Proof: See Appendix E.

The motivation for introducing an $\ell_\alpha$-norm penalty in (26) is related to the group sparsity used in defining $\mathbf{U}$. Recall from Section III that since $\mathbf{U}$ is the optimal solution to a group sparsity optimization, only a few of the entries in the ideal projection $\mathbf{U}^T\mathbf{p}$ should be non-zero. Consequently, it is desirable to require $\boldsymbol{\Lambda}$ to be sparse so that $\mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^T\mathbf{q}$ has spectral components similar to those of $\mathbf{p}$.

To demonstrate the effectiveness of the proposed $\ell_\alpha$ formulation, we consider the example patch shown in Figure 3. For a refined database of $k = 40$ patches, we consider the original minimum BMSE solution ($\gamma = 0$), the $\ell_0$ solution with $\gamma = 0.02$, and the $\ell_1$ solution with $\gamma = 0.02$. The results in Figure 6 show that with the proposed penalty term, the new $\mathrm{BMSE}_\alpha$ solution performs consistently better than the original BMSE solution.

V. EXPERIMENTAL RESULTS

In this section, we present a set of experimental results.

A. Comparison Methods

The methods we choose for comparison are BM3D [3], BM3D-PCA [5], LPG-PCA [7], NLM [1], EPLL [19] and KSVD [18]. Except for EPLL and KSVD, the other four methods are internal denoising methods. We re-implement and modify the internal methods so that patch search is performed over the targeted external databases.
These methods are run for two iterations, where the solution of the first step is used as a basic estimate for the second step.

Fig. 6: Comparisons of the $\ell_1$ and $\ell_0$ adaptive solutions over the original solution with $\gamma = 0$. The PSNR value for each noise level is averaged over 100 independent trials to reduce the bias due to a particular noise realization. [Plot: PSNR vs. noise standard deviation for the original ($\gamma = 0$), $\ell_1$ ($\gamma = 0.02$) and $\ell_0$ ($\gamma = 0.02$) solutions.]

The specific settings of each algorithm are as follows:

1) BM3D [3]: As a benchmark of internal denoising, we run the original BM3D code provided by the author (footnote 4). Default parameters are used in the experiments, e.g., the search window is 39 × 39. We have included a discussion in Section V-B about the influence of different search window sizes on the denoising performance. As for external denoising, we implement an external version of BM3D. To ensure a fair comparison, we set the search window identical to that of the other external denoising methods.

2) BM3D-PCA [5] and LPG-PCA [7]: $\mathbf{U}$ is learned from the best $k$ external patches, which is the same as in our proposed method. $\boldsymbol{\Lambda}$ is computed following (23) for BM3D-PCA and (25) for LPG-PCA. In BM3D-PCA's first step, the threshold is set to $2.7\sigma$.

3) NLM [1]: The weights in NLM are computed according to a Gaussian function of the $\ell_2$ distance between two patches [49, 50]. However, instead of using all reference patches in the database, we use the best $k$ patches following [2].

4) EPLL [19]: In EPLL, the default patch prior is learned from a generic database (200,000 8 × 8 patches). For a fair comparison, we train the prior distribution from our targeted databases using the same EM algorithm mentioned in [19].

5) KSVD [18]: In KSVD, two dictionaries are trained: a global dictionary and a targeted dictionary.
The global dictionary is trained from a generic database of 100,000 8 × 8 patches by the KSVD authors. The targeted dictionary is trained from a targeted database of 100,000 8 × 8 patches containing content similar to that of the noisy image. Both dictionaries are of size 64 × 256.

Footnote 4: http://www.cs.tut.fi/~foi/GCF-BM3D/

Fig. 7: Denoising text images: Visual comparison and objective comparison (PSNR, with SSIM in parentheses). The test image is of size 127 × 104. Prefix “i” stands for internal denoising (i.e., single-image denoising), and prefix “e” stands for external denoising (i.e., using external databases). Panels:
(a) clean
(b) noisy, σ = 100
(c) iBM3D: 16.68 dB (0.7100)
(d) EPLL(generic): 16.93 dB (0.7341)
(e) EPLL(target): 18.65 dB (0.8234)
(f) eNLM: 20.72 dB (0.8422)
(g) eBM3D: 20.33 dB (0.8228)
(h) eBM3D-PCA: 21.39 dB (0.8435)
(i) eLPG-PCA: 20.37 dB (0.7299)
(j) ours: 22.20 dB (0.9069)

To emphasize the difference between the original algorithms (which are single-image denoising algorithms) and the corresponding new implementations for external databases, we denote the original (single-image) denoising algorithms with “i” (internal), and the corresponding new implementations for external databases with “e” (external).

We add zero-mean Gaussian noise with standard deviations from σ = 20 to σ = 80 to the test images. The patch size is set to 8 × 8 (i.e., d = 64), and the sliding step size is 6 in the first step and 4 in the second step. Two quality metrics, namely Peak Signal to Noise Ratio (PSNR) and Structural Similarity (SSIM), are used to evaluate the objective quality of the denoised images.

B. Denoising Text and Documents

Our first experiment considers denoising a text image. The purpose is to simulate the case where we want to denoise a noisy document with the help of other similar but non-identical texts.
This idea can be easily generalized to other scenarios such as handwritten signatures, bar codes and license plates. To prepare this scenario, we randomly capture 8 regions of a document and add noise. We then build the targeted external database by cropping 9 arbitrary portions from a different document with the same font sizes.

1) Denoising Performance: Figure 7 shows the denoising results when we add excessive noise (σ = 100) to one query image. Among all the methods, the proposed method yields the highest PSNR and SSIM values. The PSNR is more than 5 dB better than that of the benchmark BM3D (internal) denoising algorithm. Some existing learning-based methods, such as EPLL, do not perform well due to the insufficient training samples from the targeted database. Compared to other external denoising methods, the proposed method shows a better utilization of the targeted database.

Fig. 8: Text image denoising: Average PSNR vs noise levels. In this plot, each PSNR value is averaged over 8 test images. The typical size of a test image is about 300 × 200. [Plot: PSNR vs. noise standard deviation for iBM3D, EPLL(generic), EPLL(target), KSVD(generic), KSVD(target), eNLM, eBM3D, eBM3D-PCA, eLPG-PCA and ours.]

Since the default search window size for internal BM3D is only 39 × 39, we further conduct experiments to explore the effect of different search window sizes for BM3D. The PSNR results are shown in Table 1. We see that a larger window size improves the BM3D denoising performance since more patch redundancy can be exploited. However, even if we extend the search to an external database (which is the case for eBM3D), the performance is still worse than that of the proposed method.

In Figure 8, we plot and compare the average PSNR values on 8 test images over a range of noise levels.
We observe that at low noise levels (σ < 30), our proposed method performs worse than eBM3D-PCA and eLPG-PCA. One reason is that the patch variety of the text image database makes our estimate of $\boldsymbol{\Lambda}$ in (22) worse than the other two estimates in (23) and (25). However, as the noise level increases, our proposed method outperforms the other methods, which suggests that the prior of our method is more informative. For example, for σ = 60, our average PSNR result is 1.26 dB better than the second best result, obtained by eBM3D-PCA.

Table 1: PSNR results using BM3D with different search window sizes and the proposed method. We test the performance for three different noise levels (σ = 30, 50, 70). The reported PSNR is computed on the entire image of size 301 × 218.

Method   Search window       σ = 30   σ = 50   σ = 70
BM3D     39 × 39             24.73    20.44    18.21
BM3D     119 × 119           26.91    21.24    19.01
BM3D     199 × 199           28.02    21.53    19.27
eBM3D    external database   28.48    25.49    23.09
ours     external database   30.79    28.43    25.97

Fig. 9: Denoising performance in terms of the database quality. The average patch-to-database distance $d(\mathcal{P})$ is a measure of the database quality. [Plot: PSNR vs. average patch-database distance, one curve per noise level σ = 20, 30, …, 80.]

For the two learning-based methods, i.e., EPLL and KSVD, using a targeted database yields better results than using a generic database, which validates the usefulness of a targeted database. However, they perform worse than the other, non-learning methods. One reason is that a large number of training samples are needed for these learning-based methods: for EPLL, the large number of samples is needed to build the Gaussian mixtures, whereas for KSVD, the large number of samples is needed to train the over-complete dictionary.
In contrast, the proposed method is fully functional even if the database is small.

2) Database Quality: We are interested in knowing how the quality of a database affects the denoising performance, as that could offer us important insights about the sensitivity of the algorithm. To this end, we compute the average distance from a given database to a clean image that we would like to obtain. Specifically, for each patch $\mathbf{p}_i \in \mathbb{R}^d$ in a clean image containing $m$ patches and a database $\mathcal{P}$ of $n$ patches, we compute its minimum distance

$$d(\mathbf{p}_i, \mathcal{P}) \overset{\text{def}}{=} \min_{\mathbf{p}_j \in \mathcal{P}} \|\mathbf{p}_i - \mathbf{p}_j\|_2 / \sqrt{d}.$$

The average patch-database distance is then defined as $d(\mathcal{P}) \overset{\text{def}}{=} (1/m)\sum_{i=1}^{m} d(\mathbf{p}_i, \mathcal{P})$. Therefore, a smaller $d(\mathcal{P})$ indicates that the database is more relevant to the ground truth (clean) image.

Figure 9 shows the results of six databases $\mathcal{P}$, where each is a random subset of the original targeted database. For all noise levels (σ = 20 to 80), PSNR decreases linearly as the patch-to-database distance increases. Moreover, the decay rate is slower for higher noise levels. The result suggests that the quality of the database has a more significant impact under low noise conditions, and less under high noise conditions.

C. Denoising Multiview Images

Our second experiment considers the scenario of capturing images using a multiview camera system. The multiview images are captured at different viewing positions. Suppose that one or more cameras are not functioning properly so that some images are corrupted with noise. Our goal is to demonstrate that with the help of the other clean views, the noisy view can be restored.

To simulate the experiment, we download 4 multiview datasets from the Middlebury Computer Vision Page (footnote 5). Each set of images consists of 5 views. We add i.i.d. Gaussian noise to one view and then use the remaining 4 views to assist in denoising.
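The noise simulation and the PSNR metric used throughout these experiments can be sketched as follows. This is a minimal NumPy sketch, assuming images on an 8-bit $[0, 255]$ intensity scale and no clipping after the noise is added; the function names `add_gaussian_noise` and `psnr` and the random stand-in image are illustrative and not part of the paper.

```python
import numpy as np

def add_gaussian_noise(image, sigma, rng):
    """Return image plus i.i.d. zero-mean Gaussian noise of standard deviation sigma."""
    return image + sigma * rng.standard_normal(image.shape)

def psnr(clean, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB for images on a [0, peak] scale (assumed)."""
    mse = np.mean((clean.astype(float) - estimate.astype(float)) ** 2)
    return 10.0 * np.log10(peak ** 2 / mse)

# Stand-in for one clean view; a real experiment would load an actual image.
rng = np.random.default_rng(0)
clean = rng.uniform(0.0, 255.0, size=(256, 256))
noisy = add_gaussian_noise(clean, sigma=20.0, rng=rng)
print(f"PSNR of the noisy view: {psnr(clean, noisy):.2f} dB")
```

Under these assumptions, the PSNR of the noisy view at σ = 20 should be close to $10\log_{10}(255^2/20^2) \approx 22.1$ dB, which gives a baseline against which any denoised result can be compared.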
In Figure 10, we visually show the denoising results of the “Barn” and “Cone” multiview datasets. In comparison to the competing methods, our proposed method has the highest PSNR values. The magnified areas indicate that our proposed method removes the noise significantly and better reconstructs some fine details. In Figure 11, we plot and compare the average PSNR values on 4 test images over a range of noise levels. The proposed method is consistently better than its competitors. For example, for σ = 50, our proposed method is 1.06 dB better than eBM3D-PCA and 2.73 dB better than iBM3D. The superior performance confirms our belief that even with a good database, not every denoising algorithm performs equally well. In fact, we still have to carefully design the denoising algorithm in order to maximize the performance by fully utilizing the database.

D. Denoising Human Faces

Our third experiment considers denoising human face images. In low light conditions, captured images are typically corrupted by noise. To facilitate other high-level vision tasks such as recognition and tracking, denoising is a necessary pre-processing step. This experiment demonstrates the ability of the proposed method to denoise face images.

Footnote 5: http://vision.middlebury.edu/stereo/

Fig. 10: Multiview image denoising: Visual comparison and objective comparison (PSNR). [Top] “Barn”; [Bottom] “Cone”. Panels:
“Barn”: (a) noisy (σ = 20); (b) iBM3D, 28.99 dB; (c) eNLM, 31.17 dB; (d) eBM3D-PCA, 32.18 dB; (e) eLPG-PCA, 32.92 dB; (f) ours, 33.65 dB.
“Cone”: (g) noisy (σ = 20); (h) iBM3D, 28.77 dB; (i) eNLM, 29.97 dB; (j) eBM3D-PCA, 31.03 dB; (k) eLPG-PCA, 31.80 dB; (l) ours, 32.18 dB.

Fig. 11: Multiview image denoising: Average PSNR vs noise levels. [Plot: PSNR vs. noise standard deviation for iBM3D, EPLL(generic), EPLL(target), KSVD(generic), KSVD(target), eNLM, eBM3D, eBM3D-PCA, eLPG-PCA and ours.] In this plot, each PSNR value is averaged over 4 test images.
The typical size of a test image is about 450 × 350.

In this experiment, we use the Gore face database from [51], of which some examples are shown in the top row of Figure 12 (each image is 60 × 80). We simulate the denoising task by adding noise to 8 randomly chosen images and then use the other images (29 other face images in our experiment) in the database to assist in denoising.

In the top row of Figure 12, we show some clean face images in the database, while in the bottom row, we show one of the noisy faces and its denoising results (magnified). We observe that while the facial expressions are different and there are misalignments between images, the proposed method still generates robust results. In Figure 13, we plot the average PSNR curves on the 8 test images, where we see a consistent gain compared to other methods.

Fig. 12: Face denoising of Gore dataset [51]. [Top] Database images; [Bottom] Denoising results: noisy (σ = 20); iBM3D, 32.04 dB; eNLM, 32.74 dB; eBM3D-PCA, 33.29 dB; ours, 33.86 dB.

E. Runtime Comparison

Our current implementation is in MATLAB (single thread). The runtime is about 144 s to denoise an image (301 × 218) with a targeted database consisting of 9 images of similar sizes. The code is run on an Intel Core i7-3770 CPU. In Table 2, we show a runtime comparison with other methods. We observe that the runtime of the proposed method is not significantly worse than that of other external methods. In particular, the runtime of the proposed method is of the same order of magnitude as eNLM, eBM3D, eBM3D-PCA and eLPG-PCA.

We note that most of the runtime of the proposed method is spent on searching for similar patches and computing the SVD. Speed improvement for the proposed method is possible. First, we can apply techniques that accelerate these two steps, e.g., fast patch search via PatchMatch [52, 53] or KD trees [54], or fast SVD [55].
Second, random sampling schemes can be applied to further reduce the computational complexity [20, 21]. Third, since the denoising is independently performed on each patch, a GPU can be used to parallelize the computation.

Table 2: Runtime comparison for different denoising methods. The test image is of size 301 × 218. For the EPLL and KSVD methods, the time to train a finite Gaussian mixture model and the time to learn a dictionary are not included in the runtime below. For the other external denoising methods, the targeted database consists of 9 images of sizes similar to that of the test image.

Method          Runtime (sec)
iBM3D           0.97
EPLL(generic)   35.17
EPLL(target)    10.21
KSVD(generic)   0.32
KSVD(target)    0.13
eNLM            95.68
eBM3D           99.17
eBM3D-PCA       102.21
eLPG-PCA        102.14
ours            144.33

Fig. 13: Face denoising: Average PSNR vs noise levels. In this plot, each PSNR value is averaged over 8 test images. Each test image is of size 60 × 80. [Plot: PSNR vs. noise standard deviation for iBM3D, EPLL(generic), EPLL(target), KSVD(generic), KSVD(target), eNLM, eBM3D, eBM3D-PCA, eLPG-PCA and ours.]

F. Discussion and Future Work

One important aspect of the algorithm that we did not discuss in depth is its sensitivity. In particular, two questions must be answered. First, assuming that there is a perturbation on the database, how much will the MSE change? Answering this question will provide us with information about the sensitivity of the algorithm when there are changes in font size (in the text example), view angle (in the multiview example), and facial expression (in the face example). Second, given a clean patch, how many patches do we need to put in the database in order to ensure that the clean patch is close to at least one of the patches in the database? The answer to this question will inform us about the required size of the targeted database. Both problems will be studied in our future work.

VI.
CONCLUSION

Classical image denoising methods based on a single noisy input or generic databases are approaching their performance limits. We proposed an adaptive image denoising algorithm using targeted databases. The proposed method applies a group sparsity minimization and a localized prior to learn the basis matrix and the spectral coefficients of the optimal denoising filter, respectively. We showed that the new method generalizes a number of existing patch-based denoising algorithms such as BM3D, BM3D-PCA, Shape-adaptive BM3D, LPG-PCA, and EPLL. Based on the new framework, we proposed improvement schemes, namely an improved patch selection procedure for determining the basis matrix and a penalized minimization for determining the spectral coefficients. For a variety of scenarios including text, multiview images and faces, we demonstrated empirically that the proposed method has superior performance over existing methods. With the increasing amount of image data available online, we anticipate that the proposed method is an important first step towards a data-dependent generation of denoising algorithms.

APPENDIX

A. Proof of Lemma 2

Proof: From (4), the optimization is

$$\begin{array}{ll} \underset{\mathbf{u}_1,\ldots,\mathbf{u}_d,\,\lambda_1,\ldots,\lambda_d}{\text{minimize}} & \sum_{i=1}^{d}\left[(1-\lambda_i)^2(\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2\lambda_i^2\right] \\ \text{subject to} & \mathbf{u}_i^T\mathbf{u}_i = 1, \quad \mathbf{u}_i^T\mathbf{u}_j = 0. \end{array}$$

Since each term in the sum of the objective function is non-negative, we can consider the minimization over each individual term separately. This gives

$$\begin{array}{ll} \underset{\mathbf{u}_i,\,\lambda_i}{\text{minimize}} & (1-\lambda_i)^2(\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2\lambda_i^2 \\ \text{subject to} & \mathbf{u}_i^T\mathbf{u}_i = 1. \end{array} \tag{29}$$

In (29), we temporarily dropped the orthogonality constraint $\mathbf{u}_i^T\mathbf{u}_j = 0$, which will be taken into account later. The Lagrangian function of (29) is

$$\mathcal{L}(\mathbf{u}_i, \lambda_i, \beta) = (1-\lambda_i)^2(\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2\lambda_i^2 + \beta(1 - \mathbf{u}_i^T\mathbf{u}_i),$$

where $\beta$ is the Lagrange multiplier.
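Differentiating $\mathcal{L}$ with respect to $\mathbf{u}_i$, $\lambda_i$ and $\beta$ yields

$$\frac{\partial\mathcal{L}}{\partial\lambda_i} = -2(1-\lambda_i)(\mathbf{u}_i^T\mathbf{p})^2 + 2\sigma^2\lambda_i, \tag{30}$$

$$\frac{\partial\mathcal{L}}{\partial\mathbf{u}_i} = 2(1-\lambda_i)^2(\mathbf{u}_i^T\mathbf{p})\,\mathbf{p} - 2\beta\mathbf{u}_i, \tag{31}$$

$$\frac{\partial\mathcal{L}}{\partial\beta} = 1 - \mathbf{u}_i^T\mathbf{u}_i. \tag{32}$$

Setting $\partial\mathcal{L}/\partial\lambda_i = 0$ yields

$$\lambda_i = \frac{(\mathbf{u}_i^T\mathbf{p})^2}{(\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2}. \tag{33}$$

Substituting this $\lambda_i$ into (31) and setting $\partial\mathcal{L}/\partial\mathbf{u}_i = 0$ yields

$$\frac{2\sigma^4(\mathbf{u}_i^T\mathbf{p})\,\mathbf{p}}{\left((\mathbf{u}_i^T\mathbf{p})^2 + \sigma^2\right)^2} - 2\beta\mathbf{u}_i = \mathbf{0}. \tag{34}$$

Therefore, the optimal pair $(\mathbf{u}_i, \beta)$ of (29) must be the solution of (34). The corresponding $\lambda_i$ can be calculated via (33).

Referring to (34), we observe two possible scenarios. First, if $\mathbf{u}_i$ is any unit vector orthogonal to $\mathbf{p}$, and $\beta = 0$, then (34) is satisfied. This is a trivial solution, because $\mathbf{u}_i \perp \mathbf{p}$ implies $\mathbf{u}_i^T\mathbf{p} = 0$, and hence $\lambda_i = 0$. The second case is that

$$\mathbf{u}_i = \mathbf{p}/\|\mathbf{p}\|_2, \quad \text{and} \quad \beta = \frac{\sigma^4\|\mathbf{p}\|^2}{(\|\mathbf{p}\|^2 + \sigma^2)^2}. \tag{35}$$

Substituting (35) shows that (34) is satisfied. This is the non-trivial solution. The corresponding $\lambda_i$ in this case is $\|\mathbf{p}\|^2/(\|\mathbf{p}\|^2 + \sigma^2)$.

Finally, taking into account the orthogonality constraint $\mathbf{u}_i^T\mathbf{u}_j = 0$ if $i \neq j$, we can choose $\mathbf{u}_1 = \mathbf{p}/\|\mathbf{p}\|_2$, and $\mathbf{u}_2 \perp \mathbf{u}_1$, $\mathbf{u}_3 \perp \{\mathbf{u}_1, \mathbf{u}_2\}$, …, $\mathbf{u}_d \perp \{\mathbf{u}_1, \mathbf{u}_2, \ldots, \mathbf{u}_{d-1}\}$. Therefore, the denoising result is

$$\hat{\mathbf{p}} = \mathbf{U}\,\mathrm{diag}\left\{\frac{\|\mathbf{p}\|^2}{\|\mathbf{p}\|^2 + \sigma^2},\, 0, \ldots, 0\right\}\mathbf{U}^T\mathbf{q},$$

where $\mathbf{U}$ is any orthonormal matrix with the first column $\mathbf{u}_1 = \mathbf{p}/\|\mathbf{p}\|_2$.

B. Proof of Lemma 3

Proof: Let $\mathbf{u}_i$ be the $i$th column of $\mathbf{U}$. Then, (9) becomes

$$\begin{array}{ll} \underset{\mathbf{u}_1,\ldots,\mathbf{u}_d}{\text{minimize}} & \sum_{i=1}^{d}\|\mathbf{u}_i^T\mathbf{P}\|_2 \\ \text{subject to} & \mathbf{u}_i^T\mathbf{u}_i = 1, \quad \mathbf{u}_i^T\mathbf{u}_j = 0. \end{array} \tag{36}$$

Since each term in the sum of (36) is non-negative, we can consider each individual term

$$\begin{array}{ll} \underset{\mathbf{u}_i}{\text{minimize}} & \|\mathbf{u}_i^T\mathbf{P}\|_2 \\ \text{subject to} & \mathbf{u}_i^T\mathbf{u}_i = 1, \end{array}$$

which is equivalent to

$$\begin{array}{ll} \underset{\mathbf{u}_i}{\text{minimize}} & \|\mathbf{u}_i^T\mathbf{P}\|_2^2 \\ \text{subject to} & \mathbf{u}_i^T\mathbf{u}_i = 1. \end{array} \tag{37}$$

The constrained problem (37) can be solved by considering the Lagrange function,

$$\mathcal{L}(\mathbf{u}_i, \beta) = \|\mathbf{u}_i^T\mathbf{P}\|_2^2 + \beta(1 - \mathbf{u}_i^T\mathbf{u}_i).$$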
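(38)

Setting the derivatives $\partial\mathcal{L}/\partial\mathbf{u}_i = \mathbf{0}$ and $\partial\mathcal{L}/\partial\beta = 0$ yields

$$\mathbf{P}\mathbf{P}^T\mathbf{u}_i = \beta\mathbf{u}_i, \quad \text{and} \quad \mathbf{u}_i^T\mathbf{u}_i = 1.$$

Therefore, $\mathbf{u}_i$ is an eigenvector of $\mathbf{P}\mathbf{P}^T$, and $\beta$ is the corresponding eigenvalue. Since the eigenvectors are orthonormal to each other, the solution automatically satisfies the orthogonality constraint that $\mathbf{u}_i^T\mathbf{u}_j = 0$ if $i \neq j$.

C. Proof of Lemma 4

Proof: First, by plugging $\mathbf{q} = \mathbf{p} + \boldsymbol{\eta}$ into the BMSE we get

$$\begin{aligned} \mathrm{BMSE} &= \mathbb{E}_{\mathbf{p}}\Big[\mathbb{E}_{\mathbf{q}|\mathbf{p}}\big[\|\mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^T(\mathbf{p} + \boldsymbol{\eta}) - \mathbf{p}\|_2^2 \,\big|\, \mathbf{p}\big]\Big] \\ &= \mathbb{E}_{\mathbf{p}}\big[\mathbf{p}^T\mathbf{U}(\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{U}^T\mathbf{p}\big] + \sigma^2\,\mathrm{Tr}(\boldsymbol{\Lambda}^2). \end{aligned}$$

Recall the fact that for any random variable $\mathbf{x} \sim \mathcal{N}(\boldsymbol{\mu}_x, \boldsymbol{\Sigma}_x)$ and any matrix $\mathbf{A}$, it holds that $\mathbb{E}[\mathbf{x}^T\mathbf{A}\mathbf{x}] = \mathbb{E}[\mathbf{x}]^T\mathbf{A}\,\mathbb{E}[\mathbf{x}] + \mathrm{Tr}(\mathbf{A}\boldsymbol{\Sigma}_x)$. Therefore, the above BMSE can be simplified as

$$\begin{aligned} & \boldsymbol{\mu}^T\mathbf{U}(\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{U}^T\boldsymbol{\mu} + \mathrm{Tr}\big(\mathbf{U}(\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{U}^T\boldsymbol{\Sigma}\big) + \sigma^2\,\mathrm{Tr}(\boldsymbol{\Lambda}^2) \\ &= \mathrm{Tr}\big((\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{U}^T\boldsymbol{\mu}\boldsymbol{\mu}^T\mathbf{U} + (\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{U}^T\boldsymbol{\Sigma}\mathbf{U}\big) + \sigma^2\,\mathrm{Tr}(\boldsymbol{\Lambda}^2) \\ &= \mathrm{Tr}\big((\mathbf{I} - \boldsymbol{\Lambda})^2\mathbf{G}\big) + \sigma^2\,\mathrm{Tr}(\boldsymbol{\Lambda}^2) \\ &= \sum_{i=1}^{d}\left[(1-\lambda_i)^2 g_i + \sigma^2\lambda_i^2\right], \end{aligned} \tag{39}$$

where $\mathbf{G} \overset{\text{def}}{=} \mathbf{U}^T\boldsymbol{\mu}\boldsymbol{\mu}^T\mathbf{U} + \mathbf{U}^T\boldsymbol{\Sigma}\mathbf{U}$ and $g_i$ is the $i$th diagonal entry of $\mathbf{G}$. Setting $\partial\,\mathrm{BMSE}/\partial\lambda_i = 0$ yields

$$-2(1-\lambda_i)g_i + 2\sigma^2\lambda_i = 0. \tag{40}$$

Therefore, the optimal $\lambda_i$ is $g_i/(g_i + \sigma^2)$ and the optimal $\boldsymbol{\Lambda}$ is

$$\boldsymbol{\Lambda} = \mathrm{diag}\left\{\frac{g_1}{g_1 + \sigma^2}, \ldots, \frac{g_d}{g_d + \sigma^2}\right\}, \tag{41}$$

which, by definition, is $\big(\mathrm{diag}(\mathbf{G} + \sigma^2\mathbf{I})\big)^{-1}\mathrm{diag}(\mathbf{G})$.

D. Proof of Lemma 5

Proof: First, we write $\boldsymbol{\Sigma}$ in (21) in matrix form:

$$\begin{aligned} \boldsymbol{\Sigma} &= (\mathbf{P} - \boldsymbol{\mu}\mathbf{1}^T)\,\mathbf{W}\,(\mathbf{P} - \boldsymbol{\mu}\mathbf{1}^T)^T \\ &= \mathbf{P}\mathbf{W}\mathbf{P}^T - \boldsymbol{\mu}\mathbf{1}^T\mathbf{W}\mathbf{P}^T - \mathbf{P}\mathbf{W}\mathbf{1}\boldsymbol{\mu}^T + \boldsymbol{\mu}\mathbf{1}^T\mathbf{W}\mathbf{1}\boldsymbol{\mu}^T. \end{aligned}$$

It is not difficult to see that $\mathbf{1}^T\mathbf{W}\mathbf{P}^T = \boldsymbol{\mu}^T$, $\mathbf{P}\mathbf{W}\mathbf{1} = \boldsymbol{\mu}$ and $\mathbf{1}^T\mathbf{W}\mathbf{1} = 1$. Therefore,

$$\boldsymbol{\Sigma} = \mathbf{P}\mathbf{W}\mathbf{P}^T - \boldsymbol{\mu}\boldsymbol{\mu}^T - \boldsymbol{\mu}\boldsymbol{\mu}^T + \boldsymbol{\mu}\boldsymbol{\mu}^T = \mathbf{P}\mathbf{W}\mathbf{P}^T - \boldsymbol{\mu}\boldsymbol{\mu}^T,$$

which gives

$$\boldsymbol{\mu}\boldsymbol{\mu}^T + \boldsymbol{\Sigma} = \mathbf{P}\mathbf{W}\mathbf{P}^T. \tag{42}$$

Note that $\mathbf{G} = \mathbf{U}^T\boldsymbol{\mu}\boldsymbol{\mu}^T\mathbf{U} + \mathbf{U}^T\boldsymbol{\Sigma}\mathbf{U} = \mathbf{U}^T(\boldsymbol{\mu}\boldsymbol{\mu}^T + \boldsymbol{\Sigma})\mathbf{U}$. Substituting (42) into $\mathbf{G}$ and using equation (10), we have

$$\mathbf{G} = \mathbf{U}^T\mathbf{P}\mathbf{W}\mathbf{P}^T\mathbf{U} = \mathbf{U}^T\mathbf{U}\mathbf{S}\mathbf{U}^T\mathbf{U} = \mathbf{S}.$$

Therefore, by Lemma 4,

$$\boldsymbol{\Lambda} = \big(\mathrm{diag}(\mathbf{S} + \sigma^2\mathbf{I})\big)^{-1}\mathrm{diag}(\mathbf{S}). \tag{43}$$

E.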
Proof of Lemma 6

By Lemma 5, it holds that

$$\begin{aligned} \mathbb{E}_{\mathbf{p}}\Big[\mathbb{E}_{\mathbf{q}|\mathbf{p}}\big[\|\mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^T\mathbf{q} - \mathbf{p}\|_2^2 \,\big|\, \mathbf{p}\big]\Big] &= \sum_{i=1}^{d}\left[(1-\lambda_i)^2 s_i + \sigma^2\lambda_i^2\right] \\ &= \sum_{i=1}^{d}\left[(s_i + \sigma^2)\left(\lambda_i - \frac{s_i}{s_i + \sigma^2}\right)^2 + \frac{s_i\sigma^2}{s_i + \sigma^2}\right]. \end{aligned}$$

Therefore, the minimization of (26) becomes

$$\underset{\lambda_i}{\text{minimize}} \;\; \sum_{i=1}^{d}\left[(s_i + \sigma^2)\left(\lambda_i - \frac{s_i}{s_i + \sigma^2}\right)^2\right] + \gamma\|\boldsymbol{\Lambda}\mathbf{1}\|_\alpha, \tag{44}$$

where $\gamma\|\boldsymbol{\Lambda}\mathbf{1}\|_\alpha = \gamma\sum_{i=1}^{d}|\lambda_i|$ for $\alpha = 1$, or $\gamma\sum_{i=1}^{d}\mathbb{1}(\lambda_i \neq 0)$ for $\alpha = 0$. We note that when $\alpha = 1$ or $0$, (44) is the standard shrinkage problem [56], for which a closed-form solution exists. Following [57], the solutions are given by

$$\lambda_i = \max\left(\frac{s_i - \gamma/2}{s_i + \sigma^2},\, 0\right), \quad \text{for } \alpha = 1,$$

and

$$\lambda_i = \frac{s_i}{s_i + \sigma^2}\,\mathbb{1}\!\left(\frac{s_i^2}{s_i + \sigma^2} > \gamma\right), \quad \text{for } \alpha = 0.$$

REFERENCES

[1] A. Buades, B. Coll, and J. Morel, “A review of image denoising algorithms, with a new one,” SIAM Multiscale Model and Simulation, vol. 4, no. 2, pp. 490–530, 2005.
[2] C. Kervrann and J. Boulanger, “Local adaptivity to variable smoothness for exemplar-based image regularization and representation,” International Journal of Computer Vision, vol. 79, no. 1, pp. 45–69, 2008.
[3] K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian, “Image denoising by sparse 3D transform-domain collaborative filtering,” IEEE Trans. Image Process., vol. 16, no. 8, pp. 2080–2095, Aug. 2007.
[4] K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian, “A nonlocal and shape-adaptive transform-domain collaborative filtering,” in Proc. Intl. Workshop on Local and Non-Local Approx. in Image Process. (LNLA’08), pp. 1–8, Aug. 2008.
[5] K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian, “BM3D image denoising with shape-adaptive principal component analysis,” in Signal Process. with Adaptive Sparse Structured Representations (SPARS’09), pp. 1–6, Apr. 2009.
[6] J. Mairal, F. Bach, J. Ponce, G. Sapiro, and A. Zisserman, “Non-local sparse models for image restoration,” in Proc. IEEE Conf.
Computer Vision and Pattern Recognition (CVPR’09), pp. 2272–2279, Sep. 2009.
[7] L. Zhang, W. Dong, D. Zhang, and G. Shi, “Two-stage image denoising by principal component analysis with local pixel grouping,” Pattern Recognition, vol. 43, pp. 1531–1549, Apr. 2010.
[8] W. Dong, L. Zhang, G. Shi, and X. Li, “Nonlocally centralized sparse representation for image restoration,” IEEE Trans. Image Process., vol. 22, no. 4, pp. 1620–1630, Apr. 2013.
[9] A. Rajwade, A. Rangarajan, and A. Banerjee, “Image denoising using the higher order singular value decomposition,” IEEE Trans. Pattern Anal. and Mach. Intell., vol. 35, no. 4, pp. 849–862, Apr. 2013.
[10] M. Zontak and M. Irani, “Internal statistics of a single natural image,” in Proc. IEEE Conf. Computer Vision and Pattern Recognition (CVPR’11), pp. 977–984, Jun. 2011.
[11] I. Mosseri, M. Zontak, and M. Irani, “Combining the power of internal and external denoising,” in Proc. Intl. Conf. Computational Photography (ICCP’13), pp. 1–9, Apr. 2013.
[12] H. C. Burger, C. J. Schuler, and S. Harmeling, “Learning how to combine internal and external denoising methods,” Pattern Recognition, pp. 121–130, 2013.
[13] D. Glasner, S. Bagon, and M. Irani, “Super-resolution from a single image,” in Proc. Intl. Conf. Computer Vision (ICCV’09), pp. 349–356, Sep. 2009.
[14] P. Chatterjee and P. Milanfar, “Is denoising dead?,” IEEE Trans. Image Process., vol. 19, no. 4, pp. 895–911, Apr. 2010.
[15] A. Levin and B. Nadler, “Natural image denoising: Optimality and inherent bounds,” in Proc. IEEE Conf. Computer Vision and Pattern Recognition (CVPR’11), pp. 2833–2840, Jun. 2011.
[16] R. Yan, L. Shao, S. D. Cvetkovic, and J. Klijn, “Improved nonlocal means based on pre-classification and invariant block matching,” Journal of Display Technology, vol. 8, no. 4, pp. 212–218, Apr. 2012.
[17] Y. Lou, P. Favaro, S. Soatto, and A.
Bertozzi, “Nonlocal similarity image filtering,” Lecture Notes in Computer Science, pp. 62–71. Springer Berlin Heidelberg, 2009.
[18] M. Elad and M. Aharon, “Image denoising via sparse and redundant representations over learned dictionaries,” IEEE Trans. Image Process., vol. 15, no. 12, pp. 3736–3745, Dec. 2006.
[19] D. Zoran and Y. Weiss, “From learning models of natural image patches to whole image restoration,” in Proc. IEEE Intl. Conf. Computer Vision (ICCV’11), pp. 479–486, Nov. 2011.
[20] S. H. Chan, T. Zickler, and Y. M. Lu, “Fast non-local filtering by random sampling: it works, especially for large images,” in Proc. IEEE Intl. Conf. Acoustics, Speech and Signal Process. (ICASSP’13), pp. 1603–1607, May 2013.
[21] S. H. Chan, T. Zickler, and Y. M. Lu, “Monte Carlo non-local means: Random sampling for large-scale image filtering,” IEEE Trans. Image Process., vol. 23, no. 8, pp. 3711–3725, Aug. 2014.
[22] A. Levin, B. Nadler, F. Durand, and W. T. Freeman, “Patch complexity, finite pixel correlations and optimal denoising,” in Proc. 12th Euro. Conf. Computer Vision (ECCV’12), vol. 7576, pp. 73–86, Oct. 2012.
[23] N. Joshi, W. Matusik, E. Adelson, and D. Kriegman, “Personal photo enhancement using example images,” ACM Trans. Graph, vol. 29, no. 2, pp. 1–15, Apr. 2010.
[24] L. Sun and J. Hays, “Super-resolution from internet-scale scene matching,” in Proc. IEEE Intl. Conf. Computational Photography (ICCP’12), pp. 1–12, Apr. 2012.
[25] J. Yang, J. Wright, T. Huang, and Y. Ma, “Image super-resolution as sparse representation of raw image patches,” in Proc. IEEE Conf. Computer Vision and Pattern Recognition (CVPR’08), pp. 1–8, Jun. 2008.
[26] M. K. Johnson, K. Dale, S. Avidan, H. Pfister, W. T. Freeman, and W. Matusik, “CG2Real: Improving the realism of computer generated images using a large collection of photographs,” IEEE Trans.
V isual- ization and Computer Graphics , vol. 17, no. 9, pp. 1273–1285, Sep. 2011. [27] M. Elad and D. Datsenko , “Example-based regul arizati on deployed to super-resolut ion reconstr uction of a single image, ” The Compute r J ournal , vol. 18, no. 2-3, pp. 103–121, Sep. 2007. [28] K. Dale, M. K. Johnson, K. Sunkav alli, W . Matusik, and H. Pfister , “Image restorat ion using online photo collecti ons, ” in Proc. Intl. Conf. Computer V ision (ICCV’09) , pp. 2217–2224, Sep. 2009. [29] I. Ram, M. Elad, and I. Cohen, “Image processin g using smooth ordering of it s patc hes, ” IEEE T rans. Image Proc ess. , vol. 22, no. 7, pp. 2764–2774, Jul. 2013. [30] L. Shao, H. Z hang, and G. de Haan, “ An ove rvie w and performance e va luatio n of classificat ion-based least s quare s traine d filters, ” IEEE T rans. Image P r ocess. , vol. 17, no. 10, pp. 1772–1782, Oct. 2008. [31] K. Dabov , A. Foi, and K. Egiazaria n, “V ideo denoising by sparse 3D transform-domai n collaborati ve filtering, ” in Proc . 15th Euro. Signal Pr ocess. Conf. , vol. 1, pp. 145–149, Sep. 2007. [32] L. Zhang, S. V addadi, H. Jin, and S. Nayar , “Multiple view image denoisin g, ” in Proc. IEEE Intl. Conf. Computer V ision and P attern Recogn ition (CVPR ’09) , pp. 1542–1549, J un. 2009. [33] T . Buades, Y . L ou, J. Morel, and Z. T ang, “ A note on multi-image denoisin g, ” in Proc. IEEE Intl. W orkshop on Local and Non-Local Appr ox. in Imag e Pro cess. (LNLA’09) , pp. 1–15, Aug. 2009. [34] E. Luo, S. H . Chan, S. Pan, and T . Q. Nguyen, “ Adapti ve non-local means for multi vie w image denoising: Searching for the right patches via a statistical approach, ” i n Pr oc. IEEE Intl. Conf. Imag e Pr ocess. (ICIP’13) , pp. 543–547, Sep. 2013. [35] M. Aharon, M. Elad, and A. Bruckstei n, “K-SVD: Design of diction ar- ies for sparse represent ation, ” Pr ocee dings of SP AR S , vol. 5, pp. 9–12, 2005. [36] S. Roth and M.J. Black, “Fields of experts, ” Intl. J. Computer V ision , vol. 82, no. 2, pp. 
205–229, 2009. 15 [37] G. Y u, G. Sapiro, and S. Mallat, “Solving in ver se problems with piece wise linear estimators: From gaussian mixture models to structured sparsity , ” IEEE T rans. Image Proc ess. , vol. 21, no. 5, pp. 2481–2499, May 2012. [38] R. Y an, L. Shao, and Y . Liu, “Nonlocal hierarchical dicti onary learni ng using wa ve lets for image denoising, ” IEEE Tr ans. Ima ge Pr ocess. , vol. 22, no. 12, pp. 4689–4698, Dec. 2013. [39] E. Luo, S. H. Chan, and T . Q. Nguyen, “Image denoising by targe ted ext ernal database s, ” in P r oc. IEE E Intl. Conf. Acoustics, Speec h and Signal Proce ss. (ICASSP ’14) , pp. 2469–2473, May 2014. [40] P . Milanf ar , “ A tour of modern image filtering, ” IEEE Signal Pro cess. Magazi ne , vol. 30, pp. 106–128, Jan. 2013. [41] P . Milanfar , “Symmetriz ing smoothing filters, ” SIAM J. Imaging Sci. , vol. 6, no. 1, pp. 263–284, 2013. [42] H. T alebi and P . Milanfar , “Global image denoising, ” IEEE T rans. Imag e Proc ess. , vol. 23, no. 2, pp. 755–768, Feb . 2014. [43] S. Cott er , B. Rao, K. E ngan, and K. Kreutz-Delga do, “Sparse solut ions to linear in verse problems with multipl e measurement vect ors, ” IEEE T rans. Signal P r ocess. , vol. 53, no. 7, pp. 2477–2488, Jul. 2005. [44] T . Kol da and B. B ader , “T ensor deco mpositions and appli cations, ” SIAM Revie w , vol. 51, no. 3, pp. 455–500, 2009. [45] S. Roth and M. Black, “Fields of experts: A frame work for learning image priors, ” in Pr oc. IEE E Computer Socie ty Conf. Comput er V ision and P attern Recogn ition (CVPR’05), 2005 , vol. 2, pp. 860–867 vol. 2, Jun. 2005. [46] D. Z oran and Y . W eiss, “Natural images, gaussian m ixtur es and dead lea ves, ” Advances in Neural Information Pr ocess. Systems (NIPS’12) , vol. 25, pp. 1745–1753, 2012. [47] S. M. Kay , “Funda mentals of statist ical signal processing: Detecti on theory , ” 1998. [48] P . Chatte rjee and P . Milan far , “Patch-b ased near-opti mal image denois- ing, ” IEEE T rans. Image Pro cess. 
, vol. 21, no. 4, pp. 1635–1649, Apr . 2012. [49] A. Buades, B. Coll, and J. M. Morel, “Non-loca l means denoisi ng, ” [on line] http://www . ipol.im/ pub/art/ 2011/bcm nlm/, 2011. [50] E. Luo, S. Pan, and T . Nguyen, “Genera lized non-l ocal m eans for iterati ve denoising, ” in Pr oc. 20th Eur o. Signal Proc ess. Conf. (EUSIPCO’12) , pp. 260–264, Aug. 2012. [51] Y . Peng, A. Ganesh, J. Wright, W . Xu, and Y . Ma, “RASL: Robust alignmen t by sparse and low-rank decomposition for line arly corre lated images, ” IEE E T rans. P attern Anal. and Mach. Intell. , vol. 34, no. 11, pp. 2233–2246, Nov . 2012. [52] M. Mahmoudi and G. Sapiro, “Fast image and video denoising via nonloca l means of similar neighborhoods, ” IEEE Signal P r ocess. Letter s , vol. 12, no. 12, pp. 839–842, Dec. 2005. [53] R. V ignesh, B. T . Oh, and C.-C. J. Kuo, “Fast non-lo cal means (NL M) computat ion with probabil istic early terminati on, ” IEEE Signal Proce ss. Letter s , vol. 17, no. 3, pp. 277–280, Mar . 2010. [54] M. Muja and D. G. Lowe, “Scalable nearest neighbor algori thms for high dimensional data, ” IEEE T rans. P attern Anal. and Mac h. Intell. , vol. 36, no. 11, pp. 2227–2240, Nov . 2014. [55] C. Boutsidis and M. Magdon-Ismail, “Fa ster SVD-truncated regulari zed least-squ ares, ” in IEEE Intl. Symp. Informati on Theory (ISIT’14) , pp. 1321–1325, Jun. 2014. [56] C. Li, An effic ient algorithm for total variat ion re gulariza- tion with appli cations to the single pixe l camera and compr es- sive sensing , Ph.D. thesis, Rice Uni v ., 2009, av ailable at http:/ /www .caam.rice.edu/ ∼ optimiza tion/L1/TV AL3/tva l3 thesis.pdf. [57] S. H. Chan, R. Khoshabeh, K. B. G ibson, P . E. Gill, and T . Q. Nguyen, “ An augmented Lagrangian m ethod for tota l vari ation video restorat ion, ” IEEE T rans. Imag e Proce ss. , vol. 20, no. 11, pp. 3097– 3111, Nov . 2011.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

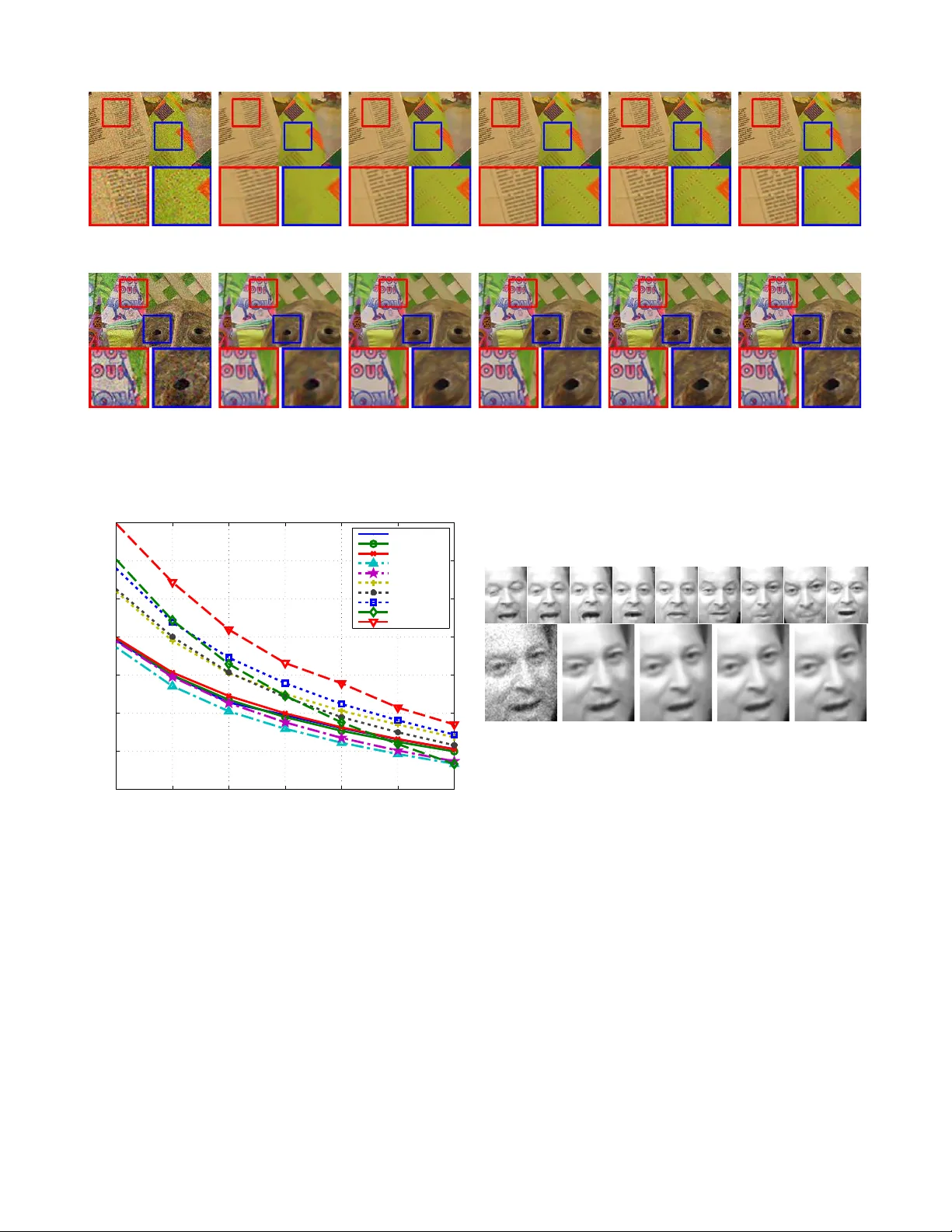

Leave a Comment