Kronecker PCA Based Spatio-Temporal Modeling of Video for Dismount Classification

We consider the application of KronPCA spatio-temporal modeling techniques [Greenewald et al 2013, Tsiligkaridis et al 2013] to the extraction of spatiotemporal features for video dismount classification. KronPCA performs a low-rank type of dimension…

Authors: Kristjan H. Greenewald, Alfred O. Hero III

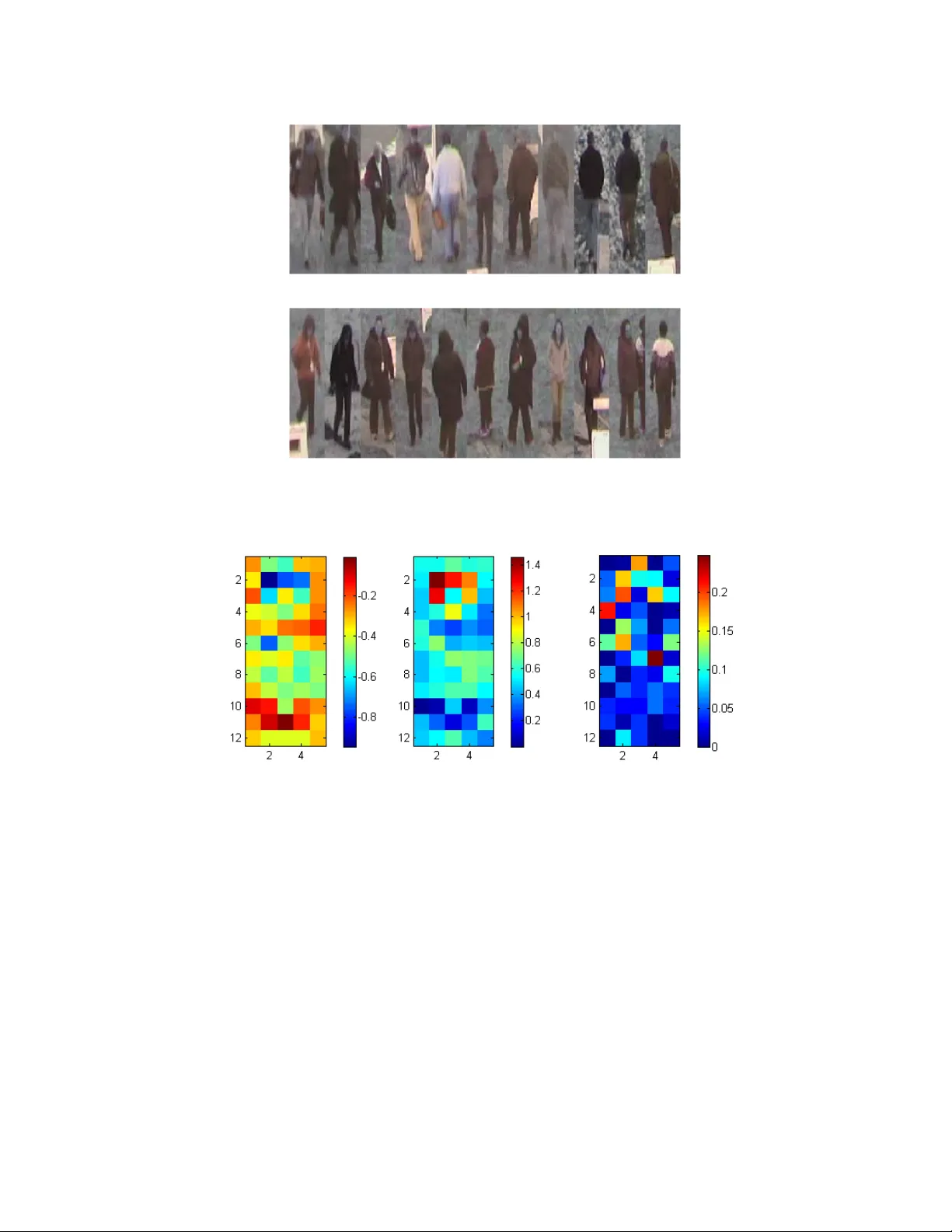

Kronec k er PCA Based Spatio-T emp oral Mo deling of Video for Dismoun t Classification Kristjan H. Greenew ald a and Alfred O. Hero I II a a Electrical Engineering and Computer Science, Univ ersity of Mic higan, Ann Arb or, MI, USA ABSTRA CT W e consider the application of KronPCA spatio-temp oral mo deling techniques 1, 2 to the extraction of spatio- temp oral features for video dismoun t classification. KronPCA p erforms a lo w-rank type of dimensionalit y re- duction that is adapted to spatio-temporal data and is characterized b y the T frame multiframe mean µ and co v ariance Σ of p spatial features. F or further regularization and improv ed inv erse estimation, w e also use the diagonally corrected KronPCA shrink age metho ds w e presen ted in. 1 W e apply this v ery general method to the mo deling of the multiv ariate temp oral behavior of HOG features extracted from p edestrian b ounding boxes in video, with gender classification in a c hallenging dataset c hosen as a sp ecific application. The learned cov ari- ances for eac h class are used to extract spatiotemp oral features whic h are then classified, achieving comp etitiv e classification p erformance. Keyw ords: Gender classification, spatio-temp oral mo deling, Kroneck er PCA, co v ariance estimation 1. INTR ODUCTION Accurate classification of v arious h uman c haracteristics such as gender, gait, age, etc. in video is a crucial part of surv eillance video scene understanding. 3 In this w ork, w e prop ose learning spatio-temp oral behavior mo dels and applying them to classifying h uman attributes that manifest themselves both in appearance and mo vemen t patterns. As a sp ecific application we will consider gender classification on a challenging lo w-resolution surv eillance video dataset. Our approac h is to first detect and trac k the humans in eac h video. A t eac h frame, w e extract spatial features from each human’s area in the image. W e then prop ose to learn models of the spatiotemporal b eha vior of the features. These mo dels are then used to create relev an t spatio-temp oral features with which to classify the test videos. The spatio-temporal behavior is modeled using the spatio-temp oral mean and cov ariance, 1, 2 since the num b er of training samples is small relativ e to the num b er of features and we wish to a void imp osing graphical structures a priori. Our goal is th us to learn correlations b et ween v ariables b oth within a time instant (frame) and across time using as few training samples as p ossible. The co v ariance for m ultiv ariate temporal processes manifests itself as m ultiframe cov ariance. 1, 2 Let X be a p × T matrix with entries ˜ x ( m, t ) denoting samples of a space- time random pro cess defined ov er a p -grid of space samples m ∈ { 1 , . . . , p } and a T -grid of time samples t ∈ { 1 , . . . , T } . Let x = vec( X ) denote the pT column v ector obtained by lexicographical reordering. Define the pT × pT spatiotemporal co v ariance matrix Σ = Cov[ x ] . (1) In this application, w e m ust perform large p and small n cov ariance estimation, that is, w e are in the high dimensional regime where the num b er of v ariables exceeds the n umber of training samples a v ailable to learn the co v ariance. F or p ≥ n , the standard sample cov ariance matrix (SCM) is the maximum likelihoo d cov ariance estimator. When n is on the order of p or smaller, ho wev er, it is w ell known that the SCM has a very undesirable p oorly conditioned eigenstructure which results in the po or conditioning of the in verse of the SCM, leading to p oor estimates of the in verse cov ariance ( Σ − 1 ), which is needed for classification. One approach for addressing this problem is shrink age estimation, whic h uses a weigh ted a v erage of the sample co v ariance and a deterministic cov ariance, often chosen as a diagonal matrix called the shrink age target. F urther author information: (Send correspondence to Kristjan H. Greenew ald at greenewk [at] umich [dot] edu) This can significantly improv e the accuracy of the in verse of the estimate due to the impro ved eigensp ectrum. In this work, w e use shrink age estimators of cov ariance matrices ha ving spatiotemp oral structure. 1 Estimation of spatio-temp oral co v ariance matrices based on reducing the n umber of parameters via a truncated sum of Kronec ker products representation, which we call KronPCA, is discussed in, 1, 2, 4 and asymptotic p erformance analysis 4 predicts significant gains in estimator MSE in the high dimensional regime as n, p → ∞ . W e then presen t a metho d for using the learned spatiotemp oral cov ariances for classification, and compare it to other standard classification metho ds such as the SVM. Gender classification in video is extensively co v ered in the literature. 3, 5 Of those based on whole-b o dy classification (e.g. as opp osed to face-based classification), some suc h as those referenced in 3 are based on v arious spatial features only , whereas others propose a v ariet y of features based off of silhouettes 5, 6 to capture spatio-temp oral b eha viors suc h as gait ( 5, 6 and citations). It has b een found that b oth gait and app earance are highly indicative of gender, 3 and thus aids classification significantly , with gait b eing esp ecially useful when the sub jects are heavily clothed and/or the video is low resolution. 3, 5 Our goal is thus to reduce the p oten tial loss of information that results from predefined feature extraction by automating the spatio-temp oral mo deling pro cess while maintaining lo w training sample requiremen ts, i.e. av oiding the curse of dimensionalit y . The rest of this pap er is organized as follows: in Section 2, we review blo ck T o eplitz DC-KronPCA. 1 Our metho ds 1 for standard shrink age of the KronPCA estimate are describ ed in Section 3, and our feature extraction and classification approac hes are describ ed in Section 5. The dataset w e use is describ ed in Section 4, and gender classification results and relev ant feature analysis are given in Section 6. Our conclusions are presen ted in Section 7. 2. BLOCK TOEPLITZ KR ONECKER PCA In this section, w e consider the regularization of the sample co v ariance via decomp osition into a blo c k T eoplitz sum of (space vs. time) Kroneck er pro ducts representation (KronPCA). 1, 2, 4 F ollo wing, 1, 2, 4, 7 the DC-KronPCA mo del is defined as Σ = X r i =1 T i ⊗ S i + I ⊗ U , (2) where T i are T × T T oeplitz matrices (the temporal Kronec ker factors), S i are p × p matrices (the spatial Kronec ker factors), U is a p × p diagonal matrix. F ollo wing the approach of, 4 w e prop ose to fit the mo del (2) to the sample cov ariance matrix Σ S C M = n − 1 P n i =1 ( x i − x )( x i − x ) T , where x is the sample mean, and n is the num b er of samples of the space time pro cess X . The estimation of the parameters T i , S i and U in (2) is p erformed by minimizing the follo wing ob jective function k R − ˆ R k 2 F + β k ˆ R − R ( I ⊗ U ) k ∗ , (3) where R = R ( Σ S C M ) and R denotes the p erm utation rearrangement operator defined in 4, 8 whic h maps pT × pT matrices to T 2 × p 2 matrices. The ob jective function is minimized ov er all T 2 × p 2 matrices ˆ R that satisfy the constrain t that R − 1 ( ˆ R ) = ˆ Σ is of the form (2). This estimation pro cedure is equiv alent 2 to setting ˆ Σ = I ⊗ U + R − 1 ( ˆ R ) where R − 1 is the dep erm utation op erator (inv erse of R ) and ˆ R is found by solving min ˆ R || M ◦ ( R − ˆ R ) || 2 F + β k ˆ R k ∗ , ˆ R = r X i =1 t i s T i , s.t. T i T o eplitz , ∀ i, (4) where the t i , s i are T i , S i p erm uted as in, 2, 8 M is a matrix masking out the elements corresponding to the co v ariance diagonal, and ◦ denotes the elemen twise pro duct. The minimizing U is trivial to compute once ˆ R is obtained. F ollo wing the metho d of 7 for incorporating the T oeplitz constraint, the optimization can b e shown to b e equiv alen t to min ˜ R || ˜ M ◦ ( B − ˜ R ) || 2 F + β k ˜ R k ∗ (5) where ˜ R = P ( ˆ R ), ˜ M = sign( P ( M )), and B = P ( R ). The op erator P (from T 2 × p 2 to (2 T − 1) × p 2 matrices) is defined as ˜ A = P ( A ) such that ˜ A j + T = 1 p T − | j | X k ∈K ( j ) A k , ∀ j ∈ [ − T + 1 , T − 1] , (6) where A j is the j th row of A . This w ell-studied optimization problem (nuclear norm penalized lo w rank matrix approximation with missing en tries) is considered in, 9 where it is sho wn to b e conv ex. The blo c k T o eplitz diagonally corrected cov ariance estimate is giv en b y ˆ Σ = I ⊗ U + R − 1 P ∗ ˜ R , (7) where ˜ R is the minimizer of (5) and P ∗ is defined b y A = P ∗ ( ˜ A ) where A k = 1 p T − | j | ˜ A j + T , ∀ k ∈ K ( j ) , ∀ j ∈ [ − T + 1 , T − 1] . (8) Additions to the diagonal matrix U are discussed in the next section. 3. KR ONECKER PCA SHRINKAGE ESTIMA TION Diagonal shrink age shrinks the sample cov ariance tow ards a scaled identit y matrix. This improv es the condition- ing of the estimate, which makes the inv erse of the estimate more stable. ˆ Σ = (1 − ˆ ρ ) ˆ Σ kr on + ˆ ρ F , (9) where F = trace( ˆ Σ kr on ) pT I and ˆ Σ kr on is the DC-KronPCA estimate of the cov ariance. It remains to determine the amoun t of shrink age, i.e. ˆ ρ . W e use the Ledoit-W olf (L W) 10 solution that asymptotically (large sample size n ) minimizes F rob enius estimation error when ˆ Σ kr on is the SCM (i.e. full separation rank estimate). Due to the lo wer v ariance of the DC-KronPCA co v ariance estimate relativ e to the SCM, the amoun t of shrink age is exp ected to b e o verestimated. W e call this metho d DC-KronPCA-L W. 4. D A T ASET W e test our methods on the SW A G-1 gender recognition dataset released b y AFRL. 11 This dataset consists of videos of p edestrians walking through a grassy field, imaged at a long distance using a lo w resolution (see Figure 2), very low frame rate staring surveillance camera. The unstaged truthed dataset was collected o ver a long p eriod, resulting in a wide v ariety of weather conditions such as rain, sno w, fog, and sun, and a wide v ariety of (often heavy) clothing. There are 89 videos eac h for both male and female front and back views (356 total). In this w ork random subsets (ev enly divided by gender) of the videos are used for training, and the remainder for testing, with the p erformance av eraged ov er m ultiple Monte Carlo trials. Some example frames demonstrating the dataset v ariability are shown in Figure 1. 5. FEA TURE EXTRA CTION W e choose to use Histogram of Oriented Gradien ts (HOG) based spatial features, due to their attractive inv ariance prop erties, spatial arrangemen t, and successful use in ob ject detection/recognition. F or eac h video frame, we use the F elzenswalb deformable part mo del HOG detector 12 to detect the h umans and draw b ounding b o xes. Due to the relatively uniform bac kground and relativ e lac k of clutter, the detector p erforms very well. A standard trac king approach is used to connect the detections into tracks, with interpolations for (rare) missed detections. W e resize the b ounding b o xes as needed to ac hiev e uniformit y , so that the num b er and p osition of the HOG Figure 1. Example video frames from SW AG-1 gender recognition dataset. Male sub jects are on the left and female on the right. Note the low resolution and the v ariations in weather, p ose, and clothing. Figure 2. Example zo omed in view of a face in the dataset. Note extremely low resolution. features is inv ariant. Finally , for each b ounding b o x we compute HOG features (total spatial dimension 1860). This gives a temp oral sequence of features for eac h human track. Due to the limited training sample size, estimation of the full co v ariance with sufficien t accuracy for classi- fication limits the usable num b er of HOG features. W e thus use a dy adic blo c kwise approac h, which in volv es successiv ely splitting the HOG feature array along differen t spatial dimensions to create levels of nested blocks (groups of features) for whic h the cov ariances are learned indep enden tly . This in addition allows for impro ved robustness b y generating multiple likelihoo d features that can b e used, th us reducing any negative impacts of using a Gaussian likelihoo d. F or classification, w e first use lab eled training data to learn m ultiframe feature means µ k,j and cov ariances Σ k,j for eac h gender k and eac h blo c k j , using the learning metho ds discussed ab o ve. W e then classify the testing data examples x . F or each test instance, the Gaussian log likelihoo d ratio (LLR) across gender is computed for eac h blo c k using the appropriate learned means and cov ariances. The blo c k LLRs are then combined in a linear com bination with p ositiv e weigh ts learned using iteratively thresholded logistic regression. W e call this approach “KronPCA logistic LLR”. Adv antages of this approach are the abilit y to adaptively “select” the appropriate blo c k size for best empirical p erformance, reduced need for training as opp osed to other approaches such as the SVM, and intuition relating to the fact that LLRs from independent observ ations add to form an ov erall LLR. As baselines we consider the SVM using the m ultiframe HOG features, and the standard quadratic classifier (“KronPCA ov erall LLR”) based off the learned global means and cov ariances (i.e. no partition). 6. RESUL TS Ov er sev eral Mon te Carlo trials, we randomly divided the dataset in to n frames of training trac ks and 3600 frames of testing tracks, equally divided b et ween male and female suc h that the sets of videos are disjoint. W e then extracted the multiframe HOG features and trained our DC-KronPCA-L W logistic LLR, the DC-KronPCA-L W o verall LLR method, and the SVM. Finally , the trac kwise classification p erformance w as ev aluated on the testing data. Note that the only tuning parameters are those related to the HOG features, the num b er of Kronec ker factors used ( β c hosen so that r = 2), the num b er of levels in the m ultilevel decomposition (for logistic LLR) (held constant at 4), the length of the m ultiframe windo w T , and the num b er of training examples n . Results for eac h classifier and differen t v alues for T as a function of n are shown in Figure 3 and T able 1. Note that the logistic (multilev el) LLR outp erforms the ov erall LLR b oth ov erall and in terms of robustness, and the SVM particularly when more training examples are a v ailable. T emp oral information (used when T > 1) impro ves p erformance as desired using our classifiers, but do es not when using the SVM, indicating that the temp oral regularization w e employ b etter o vercomes the curse of dimensionalit y stemming from the longer m ultiframe feature vector. F or comparison, note that when the RGB pixel v alues in the b ounding b o xes are used as the only features, neither classifier exceeds coin flip rates at 2400 training samples. Figure 3. Av erage correct classification rate using random training/testing data partition. Note the sup eriorit y of the Kro- nPCA logistic m ultilevel LLR (loglikelihoo d ratio) method to the SVM and the KronPCA partition free LLR (KronPCA Ov erall LLR), especially for more training examples. Also note the gains achiev ed using larger T (length of multiframe windo w) with the cov ariance metho ds, whereas the SVM loses p erformance. Figure 4 and Figure 5 shows example frames from trac ks that w ere correctly and incorrectly classified using the multilev el LLR classifier trained on a random subset of the data. Figure 6 shows results relating to the relev ance of the spatial HOG features, sp ecifically , the step-up and step-do wn performances for each feature. While no features app ear to b e crucial for classification, note the relativ e uselessness of the background areas (as exp ected) and the relative imp ortance of the upp er central areas corresp onding to the head and shoulders area, which fits with intuition regarding gender differences in ph ysical size, face shape, and hair. T = 1 T = 4 T = 6 KronPCA Logistic LLR 82.1 88.1 89.4 KronPCA Overall LLR 70.0 87.7 88.0 SVM 85.8 84.6 84.4 T able 1. Average performance (%) for 2400 training examples. Note the sup eriorit y of KronPCA Logistic LLR for T > 1 (i.e. when KronPCA is applicable). 7. CONCLUSION In this work we considered the application of high dimensional KronPCA spatiotemporal cov ariance learning tec hniques to the mo deling of the temporal b eha vior of spatial features in video. As an application we considered the classification of pedestrian gender in a challenging video dataset. This nonparametric modeling approac h allo wed the incorporation of b oth app earance and temporal characteristics such as gait into the features. It Figure 4. Bounding boxes selected from each misclassified track based on using a random training set. T op: Male classified as female. Bottom: F emale classified as male. Note v arious apparen t causes of misclassification such as heavy coats, baggage, neighbor interference, heavy w eather, and p ossibly tracks incorrectly truthed as female in the dataset. w as found that the addition of temp oral information aided classification significan tly , and that our metho ds consisten tly outp erform the baseline classifiers. A CKNOWLEDGMENTS This research w as partially supp orted b y ARO under gran t W911NF-11-1-0391 and b y AFRL under grant F A8650-07-D-1220-0006. The authors also wish to thank Mr. Edmund Zelnio for propos ing the application and dataset. REFERENCES [1 ] Greenew ald, K. and Hero, A., “Regularized blo c k to eplitz cov ariance matrix estimation via kroneck er pro d- uct expansions,” T o app e ar in pr o c e e dings of IEEE SSP, available as arXiv:1402.5568 (2014). [2 ] Greenew ald, K., Tsiligk aridis, T., and Hero, A., “Kronec ker sum decompositions of space-time data,” IEEE International Workshop on Computational A dvanc es in Multi-Sensor A daptive Pr o c essing (CAM- SAP) (2013). Figure 5. Bounding b o xes selected from example correctly classified tracks based on using a random training set. T op: Male correctly classified. Bottom: F emale correctly classified. Note successes in situations including hea vy w eather, neigh b or interference, heavy clothing/baggage, etc. Figure 6. Relev ance of individual HOG features to m ultiframe SVM classification p erformance (change in correct classi- fication %), av eraged ov er random data partitions). Images are of the HOG feature lo cations in the standard b ounding b o xes. Left: Performance c hange as a function of which HOG feature is remo ved. Middle: Performance c hange as a function of whic h HOG feature is added to half of the features. Right: F eature weigh t magnitudes learned b y sparse logistic regression on the HOG features. F or each of these plots, note the particular relev ance of the head and leg areas, and the edge areas in the logistic regression results. [3 ] Y u, S., T an, T., Huang, K., Jia, K., and W u, X., “A study on gait-based gender classification,” IEEE T r ansactions on Image Pr o c essing 18 (8), 1905–1910 (2009). [4 ] Tsiligk aridis, T. and Hero, A., “Co v ariance estimation in high dimensions via kronec k er product expansions,” IEEE T r ansactions on Signal Pr o c essing 61 (21), 5347–5360 (2013). [5 ] Hu, M., W ang, Y., Zhang, Z., and Zhang, D., “Gait-based gender classification using mixed conditional random field,” IEEE T r ansactions on Systems, Man, and Cyb ernetics, Part B: Cyb ernetics 41 (5), 1429– 1439 (2011). [6 ] Nixon, M. S. and Carter, J. N., “Automatic recognition b y gait,” Pr o c e e dings of the IEEE 94 (11), 2013–2024 (2006). [7 ] Kamm, J. and Nagy , J. G., “Optimal kronec ker pro duct approximation of block to eplitz matrices,” SIAM Journal on Matrix Analysis and Applic ations 22 (1), 155–172 (2000). [8 ] W erner, K., Jansson, M., and Stoica, P ., “On estimation of co v ariance matrices with kronec ker pro duct structure,” IEEE T r ansactions on Signal Pr o c essing 56 (2), 478–491 (2008). [9 ] Mazumder, R., Hastie, T., and Tibshirani, R., “Sp ectral regularization algorithms for learning large incom- plete matrices,” The Journal of Machine L e arning R ese ar ch 11 , 2287–2322 (2010). [10 ] Ledoit, O. and W olf, M., “A w ell-conditioned estimator for large-dimensional co v ariance matrices,” Journal of multivariate analysis 88 (2), 365–411 (2004). [11 ] McCoppin, R., Ko ester, N., Rude, H. N., Rizki, M., T amburino, L., F reeman, A., and Mendoza-Schrock, O., “Electro-optical seasonal w eather and gender data collection,” Pr o c e e dings of SPIE 8751 , 87510H–87510H–9 (2013). [12 ] F elzenszw alb, P ., McAllester, D., and Ramanan, D., “A discriminatively trained, m ultiscale, deformable part mo del,” IEEE Confer enc e on Computer Vision and Pattern R e c o gnition (CVPR) , 1–8, IEEE (2008).

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment