A higher-order MRF based variational model for multiplicative noise reduction

The Fields of Experts (FoE) image prior model, a filter-based higher-order Markov Random Field (MRF) model, has been shown to be effective for many image restoration problems. Motivated by the successes of FoE-based approaches, in this letter we propose a novel variational model for multiplicative noise reduction based on the FoE image prior. The resulting model corresponds to a non-convex minimization problem, which can be solved by a recently published non-convex optimization algorithm. Experimental results on synthetic speckle noise and real synthetic aperture radar (SAR) images suggest that the performance of the proposed method is on par with the best published despeckling algorithm. In addition, inference with the proposed model is extremely efficient: our GPU-based implementation takes less than 1 s to produce state-of-the-art despeckling performance.

💡 Research Summary

This paper introduces a novel variational framework for reducing multiplicative (speckle) noise in coherent imaging modalities such as synthetic aperture radar (SAR), ultrasound, and laser imaging. The core of the approach is the Fields of Experts (FoE) model, a higher‑order Markov Random Field prior that captures natural image statistics through a set of learned linear filters and a non‑linear Lorentzian potential. By reusing 48 pre‑trained 7×7 filters (originally learned for additive Gaussian denoising), the authors avoid any additional training phase while retaining a powerful, data‑driven regularizer.

The observation model assumes that the measured amplitude f follows a Nakagami distribution (equivalently, the intensity f² is Gamma-distributed) parameterized by the true reflectivity u and the number of looks L. The negative log-likelihood yields the data fidelity term D(u,f)=L·(2·log u+f²/u²), which is non-convex with respect to u>0. To handle this difficulty, the authors propose two alternative formulations, in which constant factors such as L are absorbed into the weights. First, they apply a logarithmic change of variables w=log u, turning the data term into a convex function of w: D₁(w)=½·(2w+f²·e^{-2w}). Second, they adopt the Csiszár I-divergence, a surrogate that is strictly convex for u>0: D₂(u)=½·(u²−2f²·log u). The two terms have complementary strengths: D₁ excels on textured regions, while D₂ performs better on homogeneous areas. The authors therefore combine them with separate weights λ₁ and λ₂, with D₂ also expressed in w via u=e^{w}, leading to the final energy (III.1):

E(w)=E_FoE(e^{w}) + λ₁·½·(2w+f²·e^{-2w}) + λ₂·½·(e^{2w}−2f²·w).
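The convexity claims for the two data terms can be checked with a short calculation. The derivation below is a sketch that uses only the expressions given above, with the substitution u=e^{w} and the factor L dropped from D; the names D, D₁ and D₂ follow this summary and may differ from the paper's own notation.

```latex
\begin{align*}
D(u) &= 2\log u + \frac{f^2}{u^2}, &
\frac{\partial^2 D}{\partial u^2} &= -\frac{2}{u^2} + \frac{6f^2}{u^4}
  \quad (<0 \text{ when } u^2 > 3f^2), \\
D_1(w) &= \tfrac12\left(2w + f^2 e^{-2w}\right), &
\frac{\partial^2 D_1}{\partial w^2} &= 2f^2 e^{-2w} \;\ge\; 0, \\
D_2(w) &= \tfrac12\left(e^{2w} - 2f^2 w\right), &
\frac{\partial^2 D_2}{\partial w^2} &= 2e^{2w} \;>\; 0 .
\end{align*}
```

Setting the first derivatives of D₁ and D₂ to zero gives e^{2w}=f² in both cases, so each term on its own is minimized at u=f. The two terms differ only in how strongly they penalize deviations from the observation.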

The resulting optimization problem is non-convex because the FoE prior is itself non-convex. The authors employ iPiano, a recent inertial forward-backward splitting algorithm designed for problems of the form min F(u)+G(u), where F is smooth (possibly non-convex) and G is convex (possibly non-smooth). In this setting, F corresponds to the FoE term (smooth but non-convex) and G to the combined convex data term. Each iteration takes a gradient step on F, adds an inertial term based on the previous iterate, and applies the proximal operator of G. The gradient of F is computed efficiently by convolving with the learned filters and applying the derivative of the Lorentzian potential ρ(x)=log(1+x²), namely ρ′(x)=2x/(1+x²). The proximal operator of G reduces to a set of independent scalar equations, one per pixel, that are solved with Newton’s method, typically converging in fewer than ten iterations.
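The solver described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation, and it rests on several assumptions:

- circular boundary handling
- a per-filter weight vector `theta`
- a fixed step size `alpha` and inertia `beta`
- small random zero-mean 3×3 filters standing in for the 48 learned 7×7 filters

All function names are hypothetical.

```python
import numpy as np

def conv(x, k):
    """Circular 2-D correlation of x with an odd-sized filter k."""
    r, c = k.shape[0] // 2, k.shape[1] // 2
    out = np.zeros_like(x)
    for i in range(k.shape[0]):
        for j in range(k.shape[1]):
            out += k[i, j] * np.roll(x, (r - i, c - j), axis=(0, 1))
    return out

def conv_adj(x, k):
    """Adjoint of conv: the same operation with the filter mirrored."""
    return conv(x, k[::-1, ::-1])

def foe_energy(w, filters, theta):
    """F(w) = sum_i theta_i * sum_p log(1 + (k_i * e^w)_p^2)."""
    u = np.exp(w)
    return sum(t * np.log1p(conv(u, k) ** 2).sum() for k, t in zip(filters, theta))

def foe_grad(w, filters, theta):
    """Gradient of F w.r.t. w; the factor e^w comes from the chain rule."""
    u = np.exp(w)
    g = np.zeros_like(u)
    for k, t in zip(filters, theta):
        r = conv(u, k)
        g += t * conv_adj(2 * r / (1 + r ** 2), k)  # rho'(x) = 2x / (1 + x^2)
    return u * g

def prox_data(v, f, alpha, lam1, lam2, iters=10):
    """Pixel-wise prox of alpha*G at v, solved with Newton's method."""
    w, f2 = v.copy(), f ** 2
    for _ in range(iters):
        e2, em2 = np.exp(2 * w), np.exp(-2 * w)
        g = (w - v) / alpha + lam1 * (1 - f2 * em2) + lam2 * (e2 - f2)
        h = 1 / alpha + 2 * lam1 * f2 * em2 + 2 * lam2 * e2
        w -= g / h
    return w

def ipiano(f, filters, theta, lam1, lam2, alpha=0.01, beta=0.5, n_iter=30):
    """Fixed-step iPiano on E(w) = F(w) + G(w); returns u = e^w."""
    w = np.log(f)  # start from the noisy observation (requires f > 0)
    w_prev = w.copy()
    for _ in range(n_iter):
        v = w - alpha * foe_grad(w, filters, theta) + beta * (w - w_prev)
        w_prev, w = w, prox_data(v, f, alpha, lam1, lam2)
    return np.exp(w)

# Toy usage with hypothetical stand-ins for the learned filters and weights.
rng = np.random.default_rng(0)
filters = [k - k.mean() for k in rng.standard_normal((4, 3, 3))]
theta = np.full(4, 0.1)
f = 1.0 + 0.2 * rng.random((16, 16))  # positive "noisy" amplitudes
u = ipiano(f, filters, theta, lam1=1.0, lam2=0.1)
```

A fixed step size is used here only for brevity. iPiano's convergence guarantees depend on choosing `alpha` relative to the Lipschitz constant of the gradient of F, which in practice is often estimated by backtracking.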

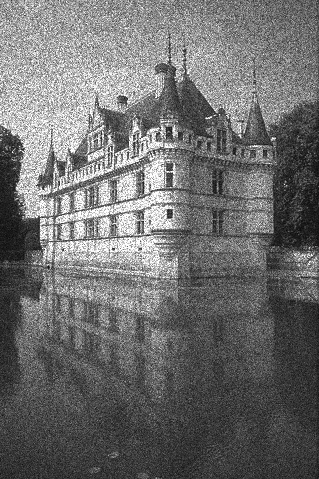

The experimental evaluation covers synthetic speckle (L=1, 3, 8) on standard test images (Lena, Peppers, Couple) and on a Berkeley benchmark set of 68 images, as well as a real SAR image with 5 looks. The parameters (λ₁, λ₂) are chosen empirically for each number of looks (e.g., λ₁=550, λ₂=0.02 for L=8). Quantitative results (PSNR/SSIM) show that the proposed method is on par with the state-of-the-art SAR-BM3D algorithm across the test conditions, with only marginal differences. Visual inspection reveals that the combined model preserves fine structures better than SAR-BM3D in some cases, while SAR-BM3D's non-local patch aggregation can be advantageous when the number of looks is very low (L=1), where the local FoE model may produce block-type artifacts.
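A synthetic test of this kind can be set up as in the sketch below. It assumes the usual fully developed speckle model, in which the amplitude image is multiplied by the square root of unit-mean Gamma noise with shape L. It also assumes PSNR is measured on amplitudes with a peak value of 255. The flat test image and the function names are hypothetical, and the summary does not state the exact protocol used in the paper.

```python
import numpy as np

def add_speckle(u, looks, rng):
    """Multiply amplitude image u by sqrt of unit-mean Gamma(L, 1/L) noise."""
    n = rng.gamma(shape=looks, scale=1.0 / looks, size=u.shape)
    return u * np.sqrt(n)

def psnr(ref, est, peak=255.0):
    """Peak signal-to-noise ratio in dB."""
    mse = np.mean((np.asarray(ref, float) - np.asarray(est, float)) ** 2)
    return 10.0 * np.log10(peak ** 2 / mse)

rng = np.random.default_rng(0)
clean = np.full((256, 256), 100.0)  # hypothetical flat test image
for looks in (1, 3, 8):
    noisy = add_speckle(clean, looks, rng)
    print(looks, round(psnr(clean, noisy), 2))
```

Because the noise has unit mean in intensity, the average of (f/u)² stays close to 1 for every L, while the PSNR of the noisy input rises as the number of looks grows.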

From a computational standpoint, a pure MATLAB implementation runs in about 27 seconds for a 481×321 image, whereas a CUDA implementation on an NVIDIA GTX 680 processes a 512×512 image in roughly 0.6 seconds, demonstrating the suitability of the FoE‑based model for GPU parallelization. This speed is comparable to, and in some cases faster than, optimized versions of SAR‑BM3D (e.g., FANS).

The paper’s contributions can be summarized as follows: (1) a principled integration of a higher-order, filter-based image prior into a multiplicative noise reduction framework; (2) a careful analysis of data fidelity terms, leading to a hybrid convex data term that leverages the strengths of both the log-transformed likelihood and the I-divergence surrogate; (3) the application of iPiano to solve the resulting non-convex problem efficiently; (4) extensive quantitative and qualitative validation showing parity with the best existing despeckling methods; and (5) a GPU-accelerated implementation achieving near-real-time performance.

Limitations include the reliance on a local prior, which can struggle with extremely noisy (low-look) data where non-local self-similarity is crucial, and the use of pre-trained FoE filters that are not specifically adapted to SAR statistics. Future work could involve learning FoE filters directly on SAR data, incorporating non-local mechanisms (e.g., patch-based aggregation) into the variational formulation, or exploring alternative non-convex solvers such as ADMM variants or stochastic proximal methods. Overall, the study demonstrates that higher-order MRF priors combined with modern non-convex optimization can deliver state-of-the-art speckle reduction while maintaining computational efficiency.
