Towards Provenance and Traceability in CRISTAL for HEP

This paper discusses the CRISTAL object lifecycle management system and its use in provenance data management and the traceability of system events. The software was initially used to capture the construction and calibration of the CMS ECAL detector at CERN for later use by physicists in their data analysis. Some further uses of CRISTAL in different projects (CMS, neuGRID and N4U) are presented as examples of its flexible data model. From these examples, applications are drawn for the High Energy Physics (HEP) domain, and some initial ideas for its use in data preservation in HEP are outlined in this paper. Currently, investigations are underway to gauge the feasibility of using the N4U Analysis Service, or a derivative of it, to address the requirements of data and analysis logging and provenance capture within the HEP long-term data analysis environment.

💡 Research Summary

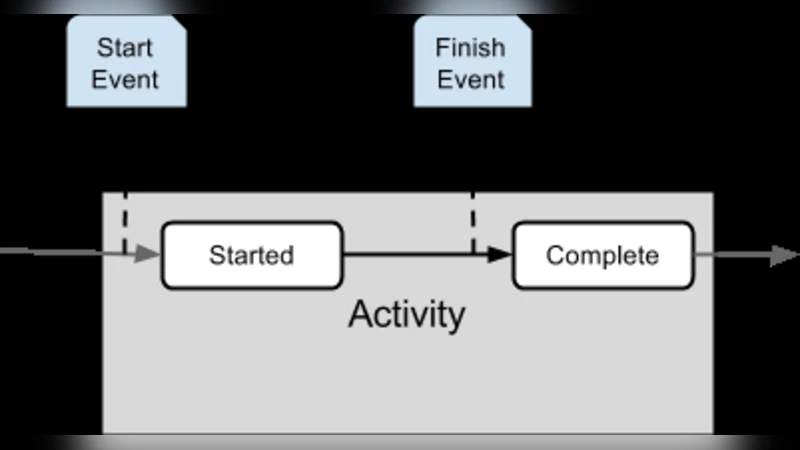

The paper presents the CRISTAL object‑lifecycle management system as a robust framework for provenance capture and traceability, and explores its applicability to long‑term data preservation in High Energy Physics (HEP). CRISTAL follows a “description‑driven” architecture: every data entity is defined by a meta‑description and instantiated as an object whose state changes are recorded as events with unique identifiers. This unified handling of data and metadata enables automatic generation of provenance records without the need for separate logging mechanisms.
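The description-driven idea can be illustrated with a minimal Python sketch. It is not CRISTAL's actual API: the class names (`ItemDescription`, `Item`, `Event`), the state names, and the activities are hypothetical, chosen only to show how a meta-description can drive an object whose every state change is recorded as an event with a unique identifier.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ItemDescription:
    """Hypothetical meta-description: a type name plus its allowed transitions."""
    name: str
    initial_state: str
    # Maps (current state, activity) to the next state.
    transitions: Dict[Tuple[str, str], str]


@dataclass(frozen=True)
class Event:
    """One recorded state change, identified by a unique event_id."""
    event_id: str
    item_id: str
    activity: str
    from_state: str
    to_state: str
    timestamp: str


@dataclass
class Item:
    """An object instantiated from a description; its history is its provenance."""
    description: ItemDescription
    item_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    state: str = ""
    history: List[Event] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not self.state:
            self.state = self.description.initial_state

    def execute(self, activity: str) -> Event:
        """Apply an activity if the description allows it, and log the event."""
        key = (self.state, activity)
        if key not in self.description.transitions:
            raise ValueError(f"{activity!r} is not allowed in state {self.state!r}")
        new_state = self.description.transitions[key]
        event = Event(
            event_id=str(uuid.uuid4()),
            item_id=self.item_id,
            activity=activity,
            from_state=self.state,
            to_state=new_state,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.history.append(event)
        self.state = new_state
        return event


# Illustrative description of a detector part (names are assumptions).
crystal_description = ItemDescription(
    name="Crystal",
    initial_state="registered",
    transitions={
        ("registered", "measure"): "measured",
        ("measured", "calibrate"): "calibrated",
    },
)
crystal = Item(crystal_description)
crystal.execute("measure")
crystal.execute("calibrate")
```

Because the event is written inside `execute`, the provenance record is a side effect of doing the work rather than a separate logging call, which is the property the paragraph above attributes to the description-driven design.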

The authors first describe the original deployment of CRISTAL during the construction and calibration of the CMS Electromagnetic Calorimeter (ECAL) at CERN. Each ECAL module’s manufacturing date, calibration parameters, responsible engineer, and test results were modelled as activities and transitions within CRISTAL. As a result, the full production history of every detector component could be queried for later physics analyses, supporting reproducibility and quality control.

Subsequent case studies involve the neuGRID and N4U (neuGRID for Users) projects, where CRISTAL was adapted to capture complex biomedical imaging pipelines. In N4U, the Analysis Service records every input dataset, algorithm version, parameter set, and output file as a graph‑structured provenance record. This fine‑grained capture allows researchers to reconstruct any analysis step, facilitating validation and reuse of results. The experience demonstrates CRISTAL’s flexibility: new workflow steps or data types can be added by extending the description schema, without altering the underlying storage engine.
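A graph-structured provenance record of this kind can be sketched as follows. This is an illustrative model only, not the N4U Analysis Service's data format: `AnalysisStep`, `ProvenanceGraph`, the file names, and the algorithm names are all hypothetical. The sketch assumes each output file is produced by exactly one step and that the graph is acyclic.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class AnalysisStep:
    """One node of the provenance graph: what produced an output, and from what."""
    output: str
    inputs: Tuple[str, ...]
    algorithm: str
    version: str
    parameters: Tuple[Tuple[str, str], ...] = ()


class ProvenanceGraph:
    def __init__(self) -> None:
        # Maps each output artifact to the step that produced it.
        self._producer: Dict[str, AnalysisStep] = {}

    def record(self, step: AnalysisStep) -> None:
        if step.output in self._producer:
            raise ValueError(f"{step.output!r} already has a recorded producer")
        self._producer[step.output] = step

    def lineage(self, artifact: str) -> List[AnalysisStep]:
        """Return the steps needed to rebuild `artifact`, upstream steps first."""
        ordered: List[AnalysisStep] = []
        visited: Set[str] = set()

        def visit(name: str) -> None:
            if name in visited:
                return
            visited.add(name)
            step = self._producer.get(name)
            if step is None:
                return  # a raw input dataset with no recorded producer
            for parent in step.inputs:
                visit(parent)
            ordered.append(step)  # post-order: dependencies come before dependants

        visit(artifact)
        return ordered


# Hypothetical two-step imaging pipeline.
graph = ProvenanceGraph()
graph.record(AnalysisStep("stripped.nii", ("scan.nii",), "skull_strip", "1.2"))
graph.record(
    AnalysisStep(
        "thickness.csv",
        ("stripped.nii",),
        "cortical_thickness",
        "0.9",
        (("smoothing_mm", "10"),),
    )
)
steps = graph.lineage("thickness.csv")
```

Here `steps` lists the skull-stripping step before the thickness step, so replaying the list in order with the recorded versions and parameters reconstructs the result.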

From these examples the paper extracts lessons for HEP. The primary requirements identified are (1) the ability to record billions of events and parameter changes in near‑real time while keeping storage overhead low, and (2) seamless integration with existing preservation infrastructures such as the CERN Open Data Portal and the Analysis Preservation services. CRISTAL’s layered design satisfies both: metadata descriptions can be expressed in XML/JSON, while the persistence layer can be swapped among relational databases, NoSQL stores, or file systems. Event logs can be streamed to a time‑series database for fast queries, and the system can expose RESTful or GraphQL APIs for external tools.
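The swappable persistence layer described above can be sketched as a small interface with interchangeable backends. This is a sketch under stated assumptions, not CRISTAL's storage API: `EventStore`, `InMemoryStore`, `JsonLinesStore`, and `record_calibration` are hypothetical names, and events are simplified to flat string dictionaries serialised as JSON.

```python
import json
from pathlib import Path
from typing import Dict, List, Protocol


class EventStore(Protocol):
    """Hypothetical interface every persistence backend must satisfy."""

    def append(self, event: Dict[str, str]) -> None: ...

    def events_for(self, item_id: str) -> List[Dict[str, str]]: ...


class InMemoryStore:
    """Backend that keeps events in a list; useful for tests."""

    def __init__(self) -> None:
        self._events: List[Dict[str, str]] = []

    def append(self, event: Dict[str, str]) -> None:
        self._events.append(dict(event))

    def events_for(self, item_id: str) -> List[Dict[str, str]]:
        return [e for e in self._events if e["item_id"] == item_id]


class JsonLinesStore:
    """Backend that appends one JSON document per line to a file."""

    def __init__(self, path: Path) -> None:
        self._path = Path(path)

    def append(self, event: Dict[str, str]) -> None:
        with self._path.open("a", encoding="utf-8") as handle:
            handle.write(json.dumps(event) + "\n")

    def events_for(self, item_id: str) -> List[Dict[str, str]]:
        if not self._path.exists():
            return []
        with self._path.open(encoding="utf-8") as handle:
            return [e for e in map(json.loads, handle) if e["item_id"] == item_id]


def record_calibration(store: EventStore, item_id: str, value: str) -> None:
    """Application code depends only on the interface, not on a backend."""
    store.append({"item_id": item_id, "activity": "calibrate", "value": value})
```

Since `record_calibration` only sees the `EventStore` interface, a relational or NoSQL backend could be substituted by adding another class with the same two methods, leaving the calling code unchanged.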

However, the authors acknowledge several challenges before CRISTAL can be adopted at the scale of modern HEP experiments. First, the current implementation relies on a centrally managed database and has limited support for high‑concurrency, distributed writes typical of grid or cloud‑based analysis farms. Implementing distributed transaction handling, conflict resolution, and horizontal scaling (e.g., via Apache Kafka for event streaming and Cassandra for scalable storage) is essential. Second, while CRISTAL excels with structured production data, HEP workflows also generate large volumes of unstructured simulation logs, user‑defined analysis scripts, and ad‑hoc metadata. Mapping these heterogeneous artifacts into the description‑driven model requires additional tooling and conventions.

To address these gaps, the paper proposes a roadmap: (i) migrate the backend to a hybrid storage solution that combines relational tables for core metadata with NoSQL or time‑series stores for high‑frequency event streams; (ii) define a set of standard interfaces and schemas that align CRISTAL’s provenance model with CERN’s Analysis Preservation (CAP) and Open Data initiatives; (iii) develop lightweight client libraries that can be embedded in existing HEP software stacks (e.g., CMSSW, Gaudi) to automatically emit CRISTAL events during job execution.
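Item (iii) of the roadmap, a lightweight client that emits events during job execution, might look like the following sketch. It does not use any CMSSW or Gaudi interface; `traced_step`, `EventSink`, and the event fields are hypothetical, and the sink is just a callable so that it could forward to whatever transport a real deployment chooses.

```python
import time
import uuid
from contextlib import contextmanager
from typing import Callable, Dict, Iterator, List

# A sink is any callable that accepts one event dictionary (assumption).
EventSink = Callable[[Dict[str, object]], None]


@contextmanager
def traced_step(
    sink: EventSink, job_id: str, step: str, **parameters: object
) -> Iterator[None]:
    """Emit a 'started' event, then 'finished' or 'failed' around the wrapped code."""
    base: Dict[str, object] = {"job_id": job_id, "step": step, "parameters": parameters}
    sink({**base, "event_id": str(uuid.uuid4()), "phase": "started"})
    start = time.perf_counter()
    try:
        yield
    except Exception as error:
        sink({
            **base,
            "event_id": str(uuid.uuid4()),
            "phase": "failed",
            "error": repr(error),
            "elapsed_s": time.perf_counter() - start,
        })
        raise  # the client records the failure but never swallows it
    else:
        sink({
            **base,
            "event_id": str(uuid.uuid4()),
            "phase": "finished",
            "elapsed_s": time.perf_counter() - start,
        })


# Usage: collect events in a list; a real sink might write to a queue or store.
collected: List[Dict[str, object]] = []
with traced_step(collected.append, "job-001", "skim", cut="pt>20"):
    selected = [pt for pt in (12.0, 25.5, 40.1) if pt > 20]
```

Wrapping a step in a context manager keeps the analysis code itself unchanged, which is one way to keep the instrumentation overhead and the intrusion into existing software small.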

The authors also report ongoing investigations into re‑using the N4U Analysis Service, or a derivative of it, as a prototype for HEP long‑term analysis logging. The aim is to instrument a CMS analysis workflow with CRISTAL event collectors so that provenance records are generated with low overhead and downstream tools can retrieve the full lineage of an analysis result, reducing the time needed for reproducibility checks. The feasibility of this approach is still being assessed.

In conclusion, CRISTAL offers a compelling solution for systematic provenance capture and traceability, with a flexible meta‑model that can accommodate the diverse data products of HEP experiments. Its successful deployment in CMS ECAL, neuGRID, and N4U validates its adaptability. Nevertheless, scaling the system to the distributed, high‑throughput environment of contemporary HEP, and harmonising its interfaces with existing preservation ecosystems, remain critical steps. The paper outlines concrete technical directions to overcome these hurdles, positioning CRISTAL as a promising candidate for future HEP data‑preservation frameworks.