Re-scale AdaBoost for Attack Detection in Collaborative Filtering Recommender Systems

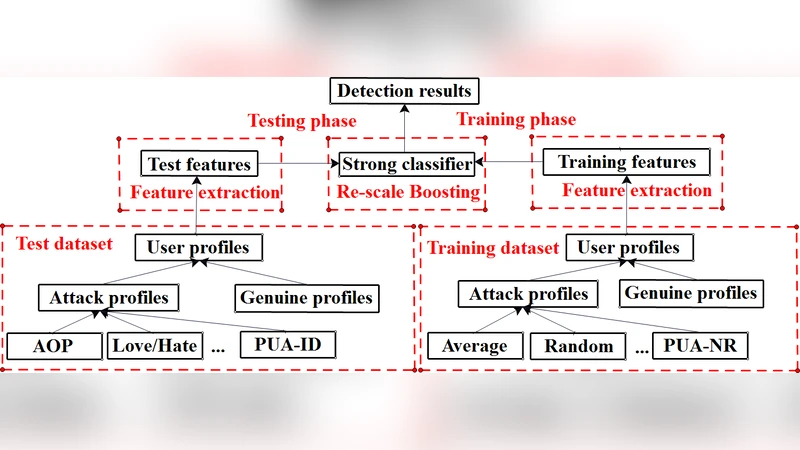

Collaborative filtering recommender systems (CFRSs) are key components of successful e-commerce systems. However, their openness makes CFRSs highly vulnerable to attacks. Because attack profiles are far fewer than genuine users, conventional supervised-learning detection methods can be too “dull” to handle such imbalanced classification. In this paper, we improve detection performance in two respects. First, we extract well-designed features from user profiles based on the statistical properties of diverse attack models, which makes the hard classification task easier. Second, drawing on the general idea of re-scale Boosting (RBoosting) and AdaBoost, we apply a variant of AdaBoost, called re-scale AdaBoost (RAdaBoost), as our detection method on the extracted features. RAdaBoost is comparable to the optimal Boosting-type algorithm and can effectively improve performance in some hard scenarios. Finally, a series of experiments on the MovieLens-100K data set demonstrates that RAdaBoost outperforms classical techniques such as SVM, kNN and AdaBoost.

💡 Research Summary

The paper addresses the vulnerability of collaborative‑filtering recommender systems (CFRSs) to “shilling” or profile‑injection attacks, where a small number of malicious users inject crafted rating profiles to bias recommendation results. Because the proportion of attackers is typically tiny compared with genuine users, conventional supervised classifiers such as SVM or k‑NN suffer from severe class‑imbalance, leading to low detection rates and high false‑alarm rates.
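To make the notion of a crafted profile concrete, here is a minimal sketch of a random push attack, one of the models named in this summary. It assumes the common formulation in which filler items receive ratings drawn around the global mean and the target item receives the maximum rating. The function name, its parameters, and the numeric values (mean, standard deviation, target id) are illustrative assumptions, not taken from the paper.

```python
import random

def random_attack_profile(n_items, target_item, filler_size,
                          global_mean, global_std,
                          r_min=1, r_max=5, rng=None):
    """Build one push-attack profile as a dict {item_id: rating}.

    Hypothetical helper: filler items are sampled uniformly and rated
    with values drawn around the global mean (random attack model).
    """
    rng = rng or random.Random()
    candidates = [i for i in range(n_items) if i != target_item]
    fillers = rng.sample(candidates, filler_size)
    profile = {}
    for item in fillers:
        rating = round(rng.gauss(global_mean, global_std))
        profile[item] = min(r_max, max(r_min, rating))  # clip to the rating scale
    profile[target_item] = r_max  # push attack: maximum rating on the target
    return profile

# Illustrative values only; 1682 is the item count of MovieLens-100K.
profile = random_attack_profile(n_items=1682, target_item=42, filler_size=30,
                                global_mean=3.5, global_std=1.1,
                                rng=random.Random(0))
```

Other attack models differ mainly in how filler and selected items are chosen and rated, so the same skeleton could be adapted by swapping the sampling and rating rules.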

To overcome these challenges the authors propose a two‑pronged solution. First, they design a set of 18 statistical features extracted from each user’s rating vector. These features combine previously used generic descriptors (e.g., Rating Deviation from Mean Agreement, RDMA; Weighted Deviation from Mean Agreement, WDMA; Weighted Degree of Agreement, WDA; and profile length variance) with type‑specific metrics that capture the presence of filler items, selected items, and target items as defined in fourteen well‑known attack models (random, average, bandwagon‑average, bandwagon‑random, segment, reverse‑bandwagon, love/hate, AOP, PIA‑AS/ID/NR, PUA‑AS/ID/NR). In addition to five filler‑size based features, three novel features (mean, max, and min rating among filler items) are introduced. The resulting 18‑dimensional representation makes attack and genuine profiles easier to separate, even when the filler size is small.
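As a rough illustration of two of these feature families, the sketch below computes RDMA in its usual form (the mean over a user's rated items of the absolute deviation from the item mean, divided by the item's rating count) and the mean, max, and min rating among filler items. The helper names are hypothetical, and the set of suspected target items is passed in by hand, which is an assumption for illustration; how the paper partitions a profile is not stated in this summary.

```python
def item_stats(ratings):
    """ratings: {user: {item: rating}} -> {item: (mean_rating, n_ratings)}."""
    sums, counts = {}, {}
    for profile in ratings.values():
        for item, r in profile.items():
            sums[item] = sums.get(item, 0.0) + r
            counts[item] = counts.get(item, 0) + 1
    return {i: (sums[i] / counts[i], counts[i]) for i in sums}

def rdma(profile, stats):
    """Rating Deviation from Mean Agreement for one user profile."""
    total = sum(abs(r - stats[i][0]) / stats[i][1] for i, r in profile.items())
    return total / len(profile)

def filler_summary(profile, suspected_targets):
    """Mean, max, and min rating over items not flagged as targets (assumed known here)."""
    fillers = [r for i, r in profile.items() if i not in suspected_targets]
    return (sum(fillers) / len(fillers), max(fillers), min(fillers))

# Toy data: two genuine users and one injected profile.
ratings = {
    "u1": {"a": 4, "b": 3},
    "u2": {"a": 2, "b": 3},
    "shill": {"a": 5, "b": 1, "c": 5},
}
stats = item_stats(ratings)
shill_rdma = rdma(ratings["shill"], stats)
u1_rdma = rdma(ratings["u1"], stats)
shill_fillers = filler_summary(ratings["shill"], suspected_targets={"c"})
```

In this toy data the injected profile has a higher RDMA than the genuine user `u1`, which is the kind of signal such features are meant to expose.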

Second, the authors adapt the re‑scale boosting (RBoosting) concept to AdaBoost, creating a Re‑scale AdaBoost (RAdaBoost) algorithm. Standard AdaBoost iteratively re‑weights mis‑classified samples, but in highly imbalanced settings the minority class may never receive sufficient weight. RBoosting adds a scaling factor α_t to the aggregated classifier after each boosting round, effectively shrinking or expanding the contribution of the current weak learner. The paper adopts a simple schedule α_t = 1/(t+1), which gives early weak learners a larger influence and gradually reduces the scaling as the ensemble grows. Theoretical results from the RBoosting literature guarantee faster numerical convergence and near‑optimal convergence rates compared with classical boosting, while the AdaBoost framework ensures that the algorithm focuses increasingly on the hard (i.e., attack) instances.
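The following is a minimal sketch of how a re-scaling step might be combined with an AdaBoost-style loop. It is not the paper's algorithm: it assumes that, in round t, the current ensemble score is shrunk by (1 − α_t) with α_t = 1/(t+1) before the new weak learner is added, and that sample weights come from the exponential loss of the re-scaled ensemble. Decision stumps stand in for the weak learners, and all function names are hypothetical.

```python
import math

def stump_predict(row, j, thr, pol):
    """Decision stump: predicts pol if feature j exceeds thr, else -pol."""
    return pol if row[j] > thr else -pol

def best_stump(X, y, w):
    """Exhaustive search for the stump with the lowest weighted error."""
    best = (float("inf"), 0, 0.0, 1)
    for j in range(len(X[0])):
        for thr in sorted({row[j] for row in X}):
            for pol in (1, -1):
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if stump_predict(row, j, thr, pol) != yi)
                if err < best[0]:
                    best = (err, j, thr, pol)
    return best

def rescaled_adaboost(X, y, rounds=10):
    """Labels must be +1 (attack) or -1 (genuine). Returns a list of stumps."""
    F = [0.0] * len(X)   # ensemble scores on the training set
    model = []           # entries: [coefficient, feature, threshold, polarity]
    for t in range(1, rounds + 1):
        # Sample weights from the exponential loss of the current ensemble.
        w = [math.exp(-yi * fi) for yi, fi in zip(y, F)]
        total = sum(w)
        w = [wi / total for wi in w]
        err, j, thr, pol = best_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)    # avoid log(0)
        beta = 0.5 * math.log((1 - err) / err)   # standard AdaBoost coefficient
        alpha = 1.0 / (t + 1)                    # schedule quoted in the summary
        # Assumed re-scaling step: shrink the existing ensemble, then add the stump.
        for m in model:
            m[0] *= (1 - alpha)
        model.append([beta, j, thr, pol])
        F = [(1 - alpha) * fi + beta * stump_predict(row, j, thr, pol)
             for row, fi in zip(X, F)]
    return model

def predict(model, row):
    score = sum(c * stump_predict(row, j, thr, pol) for c, j, thr, pol in model)
    return 1 if score >= 0 else -1

# Toy one-feature data: three genuine profiles (-1) and two attackers (+1).
X = [[0.1], [0.2], [0.3], [0.8], [0.9]]
y = [-1, -1, -1, 1, 1]
model = rescaled_adaboost(X, y, rounds=5)
train_pred = [predict(model, row) for row in X]
```

Under this reading, the shrinkage changes how much earlier weak learners count relative to the newest one and therefore which samples carry the most weight in the next round; the exact update used in the paper may differ.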

Experimental validation is performed on the MovieLens‑100K dataset. The authors generate synthetic attack profiles according to the fourteen models, varying filler sizes (5–30 items) and attack ratios (1%–10% of the user base). For training they inject 160 attackers per model to alleviate imbalance; for testing they use separate sets with diverse filler and attack sizes. The 18‑dimensional feature vectors are fed to RAdaBoost with 50–100 decision‑tree weak learners. Baselines include SVM, k‑NN, and the original (non‑re‑scaled) AdaBoost. Evaluation metrics are overall classification error, detection rate (recall), and false‑alarm rate. Results show that RAdaBoost consistently outperforms the baselines: error rates drop by 3–5 percentage points, recall improves by 7–12 points, and false‑alarm rates are reduced, especially in the hardest scenarios (small filler, low attack proportion). The method also remains stable without over‑fitting despite the modest number of weak learners.
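The three evaluation metrics can be computed from a confusion matrix as in the short sketch below, which treats the attack class as the positive class. The function name and the label encoding (1 for attack, 0 for genuine) are assumptions for illustration.

```python
def detection_metrics(y_true, y_pred, attack_label=1):
    """Return (detection_rate, false_alarm_rate, error_rate)."""
    tp = fp = tn = fn = 0
    for t, p in zip(y_true, y_pred):
        if t == attack_label:
            if p == attack_label:
                tp += 1   # attacker correctly flagged
            else:
                fn += 1   # attacker missed
        else:
            if p == attack_label:
                fp += 1   # genuine user wrongly flagged
            else:
                tn += 1   # genuine user correctly passed
    detection_rate = tp / (tp + fn) if (tp + fn) else 0.0
    false_alarm_rate = fp / (fp + tn) if (fp + tn) else 0.0
    error_rate = (fp + fn) / len(y_true)
    return detection_rate, false_alarm_rate, error_rate

# Toy example: 2 attackers among 10 users.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
dr, far, err = detection_metrics(y_true, y_pred)
```

With so few attackers, the overall error rate can look low even when half of them are missed, which is why detection rate and false-alarm rate are reported alongside it.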

The paper’s contributions are threefold: (1) a comprehensive, model‑aware feature engineering scheme that captures subtle statistical signatures of shilling attacks; (2) the integration of re‑scale boosting into AdaBoost to handle severe class imbalance effectively; (3) extensive empirical evidence that the combined approach yields superior detection performance on a standard benchmark.

Limitations are acknowledged. All experiments rely on a single, relatively small dataset; real‑world e‑commerce platforms with millions of users and items may present scalability and latency challenges. The scaling schedule α_t is chosen heuristically; adaptive or data‑driven tuning could further improve robustness. Because the feature set is crafted with specific attack models in mind, novel or hybrid attacks might evade detection unless the feature set is updated. Computational complexity grows linearly with the number of weak learners and the feature dimension (O(T·d·n), where T is the number of weak learners, d the number of features, and n presumably the number of training profiles), suggesting the need for parallel or incremental implementations for real‑time deployment.

Future work suggested includes (i) extending RAdaBoost to streaming or online learning settings; (ii) exploring automatic feature learning via deep neural networks or representation learning to reduce model‑specific engineering; (iii) testing on larger, diverse recommendation datasets (e.g., Netflix, Amazon) to assess generalization; and (iv) developing automated procedures for selecting the scaling factor and weak‑learner hyper‑parameters.

Overall, the study demonstrates that a carefully engineered feature space combined with a re‑scaled boosting ensemble can substantially improve shilling‑attack detection in collaborative‑filtering recommender systems, offering a promising direction for securing recommendation engines against malicious manipulation.
