Multi-View Video Packet Scheduling

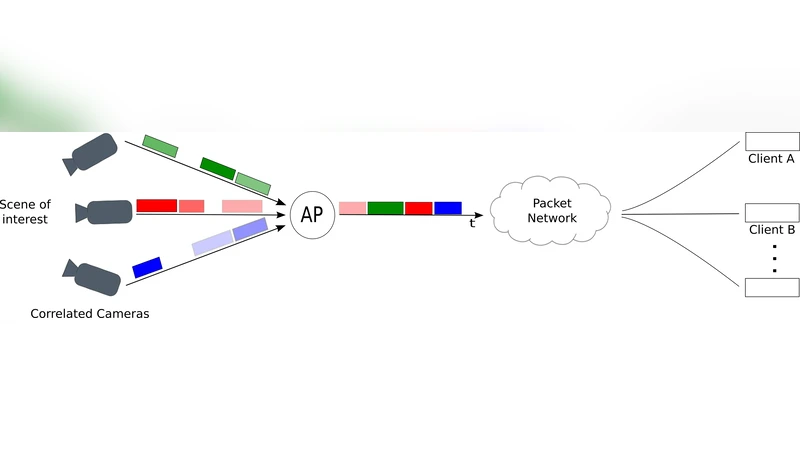

In multiview applications, multiple cameras acquire the same scene from different viewpoints and generally produce correlated video streams. This results in large amounts of highly redundant data. To save resources, it is critical to properly handle this correlation during encoding and transmission of the multiview data. In this work, we propose a correlation-aware packet scheduling algorithm for multi-camera networks, where information from all cameras is transmitted over a bottleneck channel to clients that reconstruct the multiview images. The scheduling algorithm relies on a new rate-distortion model that captures the importance of each view in the scene reconstruction. We propose a problem formulation for the optimization of the packet scheduling policies, which adapt to variations in the scene content. Then, we design a low-complexity scheduling algorithm based on a trellis search that selects the subset of candidate packets to be transmitted for effective multiview reconstruction at the clients. Extensive simulation results confirm the gain of our scheduling algorithm when inter-source correlation information is used in the scheduler, compared to scheduling policies with no information about the correlation or to non-adaptive scheduling policies. We finally show that increasing the optimization horizon in the packet scheduling algorithm improves the transmission performance, especially in scenarios where the level of correlation varies rapidly with time.

💡 Research Summary

The paper addresses the problem of efficiently transmitting highly redundant multi‑view video (MVV) streams generated by multiple cameras capturing the same scene from different viewpoints. Traditional approaches either treat each view independently or rely on fixed scheduling policies, which ignore the strong spatial and temporal correlations among the views and consequently waste bandwidth on a bottleneck channel. To overcome this limitation, the authors propose a correlation‑aware packet scheduling framework that consists of two main contributions: (1) a novel rate‑distortion (R‑D) model that quantifies the importance of each packet for the final multi‑view reconstruction, and (2) a low‑complexity trellis‑search based scheduler that selects the most beneficial subset of packets to transmit within a given optimization horizon.

The R‑D model captures both intra‑view temporal correlation and inter‑view spatial correlation. For each packet i, the expected distortion reduction D_i is expressed as D_i = D_base – α·C_time(i) – β·C_space(i), where C_time(i) measures the predictive power of previously transmitted frames of the same view, C_space(i) measures the compensatory power of frames from other views, and α, β are weighting factors derived from scene statistics. This formulation enables the scheduler to rank packets by their marginal contribution to the overall reconstruction quality under a strict bitrate budget.
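As a rough illustration of how such a model could drive packet ranking, the sketch below evaluates D_i = D_base – α·C_time(i) – β·C_space(i) for a few packets and greedily fills a bit budget by distortion reduction per bit. The `Packet` fields, the default values of `d_base`, `alpha`, and `beta`, the clamp at zero, and the greedy selection rule are all assumptions made for this example; they are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    pid: str
    size_bits: int
    c_time: float   # C_time(i): how well earlier frames of the same view predict this packet
    c_space: float  # C_space(i): how well frames from other views compensate for it


def distortion_reduction(p, d_base, alpha, beta):
    """D_i = D_base - alpha*C_time(i) - beta*C_space(i), clamped at zero (the clamp is an assumption)."""
    return max(0.0, d_base - alpha * p.c_time - beta * p.c_space)


def rank_and_select(packets, budget_bits, d_base=10.0, alpha=4.0, beta=5.0):
    """Greedy heuristic (hypothetical): take packets in order of distortion reduction per bit."""
    ranked = sorted(
        packets,
        key=lambda p: distortion_reduction(p, d_base, alpha, beta) / p.size_bits,
        reverse=True,
    )
    chosen, used = [], 0
    for p in ranked:
        if used + p.size_bits <= budget_bits:
            chosen.append(p.pid)
            used += p.size_bits
    return chosen


packets = [
    Packet("a", 1000, c_time=0.9, c_space=0.9),  # highly redundant packet
    Packet("b", 1000, c_time=0.1, c_space=0.2),  # mostly novel content
    Packet("c", 2000, c_time=0.0, c_space=0.0),  # fully novel but larger
]
print(rank_and_select(packets, budget_bits=3000))  # ['b', 'c']
```

With these illustrative numbers, the redundant packet `a` is left out because its content is largely recoverable from data that is assumed to be already available, which is the behaviour the model is meant to encourage.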

Optimizing the selection of packets is cast as a combinatorial problem. Solving it directly as an integer linear program would be computationally prohibitive for real‑time operation because the state space grows exponentially with the number of cameras and time slots. The authors therefore construct a trellis where each level corresponds to a transmission time slot and each node represents a candidate packet to be sent at that slot. Transition costs combine the cumulative distortion (as given by the R‑D model) and the bitrate consumed. A pruning strategy retains only the most promising nodes at each level (e.g., the X % with the lowest cumulative cost), reducing the search complexity to O(H·X·|Candidates|), where H is the optimization horizon and X is the fraction of nodes retained. Moreover, the scheduler incorporates a “prediction window” that anticipates future changes in correlation (e.g., rapid scene cuts or camera motion) and adjusts the packet importance scores accordingly. This forward‑looking capability allows the algorithm to remain robust when correlation levels vary quickly over time.
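The pruned trellis search can be sketched as a beam search over time slots. In the minimal example below, one packet is sent per slot, the transition cost is the negative distortion gain plus a Lagrangian bitrate penalty, and only the `keep` cheapest partial schedules survive each level. The multiplicative correlation discount in `gain`, the parameter `lam`, and a fixed beam width in place of a percentage are assumptions chosen for illustration, not the authors' exact formulation.

```python
def beam_schedule(base_gain, size_bits, corr, horizon, lam=0.0, keep=3):
    """Pruned trellis (beam) search: one packet per slot, `keep` surviving paths per level.

    base_gain[i]: distortion reduction of packet i when nothing else has been sent
    corr[i][j]:   assumed fraction of packet i's gain already provided by packet j
    lam:          Lagrangian weight on the bits consumed (hypothetical parameter)
    """
    n = len(base_gain)

    def gain(i, sent):
        # Discount the gain of packet i by its correlation with every packet already sent.
        g = base_gain[i]
        for j in sent:
            g *= 1.0 - corr[i][j]
        return g

    paths = [(0.0, ())]  # (cumulative cost, packets sent so far)
    for _ in range(horizon):
        expanded = []
        for cost, sent in paths:
            for i in range(n):
                if i in sent:
                    continue
                step = -gain(i, sent) + lam * size_bits[i]
                expanded.append((cost + step, sent + (i,)))
        if not expanded:
            break  # every candidate has already been scheduled
        expanded.sort(key=lambda t: t[0])
        paths = expanded[:keep]  # pruning: keep only the cheapest partial schedules
    best_cost, best_sent = min(paths, key=lambda t: t[0])
    return list(best_sent), best_cost


# Packets 0 and 1 come from strongly correlated views; packet 2 is independent.
base_gain = [10.0, 9.0, 5.0]
size_bits = [1000, 1000, 1000]
corr = [
    [0.0, 0.9, 0.0],
    [0.9, 0.0, 0.0],
    [0.0, 0.0, 0.0],
]
schedule, cost = beam_schedule(base_gain, size_bits, corr, horizon=2)
print(schedule, cost)  # [0, 2] -15.0
```

A scheduler that ignores correlation would send packets 0 and 1 because they have the largest stand-alone gains. The search above instead pairs packet 0 with the independent packet 2, since most of packet 1's gain is already covered once packet 0 has been sent. Each level expands at most `keep` paths over at most `n` candidates, so the number of evaluated transitions grows linearly with the horizon.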

The authors evaluate their method using extensive simulations that emulate both static scenes with high inter‑view correlation and highly dynamic scenes with frequent viewpoint changes. Experiments involve configurations of 4 × 4 and 8 × 8 camera networks under bandwidth constraints ranging from 2 Mbps to 5 Mbps. Three baselines are considered: (i) a random scheduler that ignores correlation, (ii) a fixed‑bitrate non‑adaptive scheduler, and (iii) a trellis scheduler with a horizon of one frame (i.e., no forward prediction). Performance metrics include average PSNR, SSIM, and packet loss‑induced latency.

Results show that the proposed correlation‑aware scheduler consistently outperforms the baselines. In static scenarios, it yields an average PSNR gain of 2.8 dB over the random scheduler and 2.1 dB over the fixed‑bitrate approach, with SSIM improvements of 0.04–0.06. In dynamic scenarios, extending the optimization horizon to five frames reduces PSNR degradation during abrupt scene transitions by 1.5 dB compared with a one‑frame horizon, and overall PSNR remains about 0.9 dB higher. Packet loss rates stay below 3 %, indicating suitability for real‑time applications.

The significance of the work lies in (1) providing a principled R‑D model that explicitly accounts for inter‑view redundancy, (2) delivering a practical trellis‑based scheduler that achieves near‑optimal performance with manageable computational load, and (3) demonstrating that a longer, adaptive optimization horizon can effectively handle rapid correlation fluctuations. The authors suggest future extensions such as integrating channel state information for adaptive bitrate control, and employing deep‑learning techniques to predict correlation patterns more accurately, thereby further enhancing scheduling efficiency in real‑world wireless multi‑camera networks.