Single-Pass GPU-Raycasting for Structured Adaptive Mesh Refinement Data

Structured Adaptive Mesh Refinement (SAMR) is a popular numerical technique for studying processes with a high spatial and temporal dynamic range. It reduces computational requirements by adapting the lattice on which the underlying differential equations are solved so that it represents the solution most efficiently. Particularly in astrophysics and cosmology, such simulations can now capture spatial scales ten or more orders of magnitude apart. The irregular locations and extents of the refined regions in the SAMR scheme, and the fact that different resolution levels partially overlap, pose a challenge for GPU-based direct volume rendering methods. kD-trees have proven advantageous for subdividing the data domain into non-overlapping blocks of equally sized cells, which are optimal for the texture units of current graphics hardware. However, previous GPU-supported raycasting approaches for SAMR data using this data structure required a separate rendering pass for each node, which prevents the application of many advanced lighting schemes that require simultaneous access to more than one block of cells. In this paper we present a single-pass GPU-raycasting algorithm for SAMR data that is based on a kD-tree. The tree is efficiently encoded by a set of 3D-textures, which allows complete rays to be sampled adaptively and entirely on the GPU without any CPU interaction. We discuss two different data storage strategies for accessing the grid data on the GPU and apply them to several datasets to demonstrate the benefits of the proposed method.

💡 Research Summary

The paper addresses the long‑standing challenge of visualising Structured Adaptive Mesh Refinement (SAMR) data on modern graphics hardware. SAMR provides high‑resolution sub‑grids only where needed, which leads to a hierarchy of overlapping, non‑uniform blocks. Traditional GPU‑based direct volume rendering techniques struggle with this irregular layout because texture units are optimised for regular, dense 3‑D textures. Prior work has used k‑d trees to decompose the domain into non‑overlapping blocks that map neatly onto textures, but each tree node required a separate rendering pass. This multi‑pass approach prevents the use of advanced lighting models that need simultaneous access to multiple blocks and incurs significant CPU‑GPU synchronisation overhead, limiting interactivity.

The authors propose a single‑pass GPU ray‑casting algorithm that retains the benefits of k‑d tree subdivision while eliminating the need for multiple passes. The key contributions are:

- Tree encoding as 3‑D textures – The k‑d tree’s node data (axis‑aligned bounding boxes, child pointers, level identifiers) are packed into a set of 3‑D textures using bit‑field representations. Each texel corresponds to a tree node, allowing the fragment shader to traverse the tree entirely on the GPU.

- Two data‑storage strategies –

  - Texture‑Mapping: Each refinement level or individual block is stored in its own 3‑D texture. Nodes store the texture ID and offset, enabling direct sampling from the appropriate texture during ray traversal. This method minimises texture‑binding costs and works well for modest‑size datasets.

  - Fragment‑List: All cell values are concatenated into a single large 1‑D texture. Nodes carry a start offset and length, so the shader can compute the correct 1‑D address on the fly. This approach bypasses the hardware limit on the number of bound textures and scales to very large simulations.

- GPU‑only ray traversal – For each pixel, a fragment shader generates a view‑space ray, then iteratively samples the tree texture to locate the current node intersected by the ray. Once a leaf node is found, the shader reads the corresponding density/temperature values from the chosen data texture, performs trilinear interpolation, computes colour and opacity (including any lighting terms), and accumulates the contribution. The process repeats until the ray exits the domain or opacity reaches unity. No CPU intervention occurs after the initial upload of textures.

- Support for advanced lighting – Because the entire ray is processed in a single shader invocation, the algorithm can query neighbouring voxels at any point, enabling Phong shading, gradient‑based edge enhancement, ambient occlusion, or multi‑scalar compositing without extra rendering passes.

-

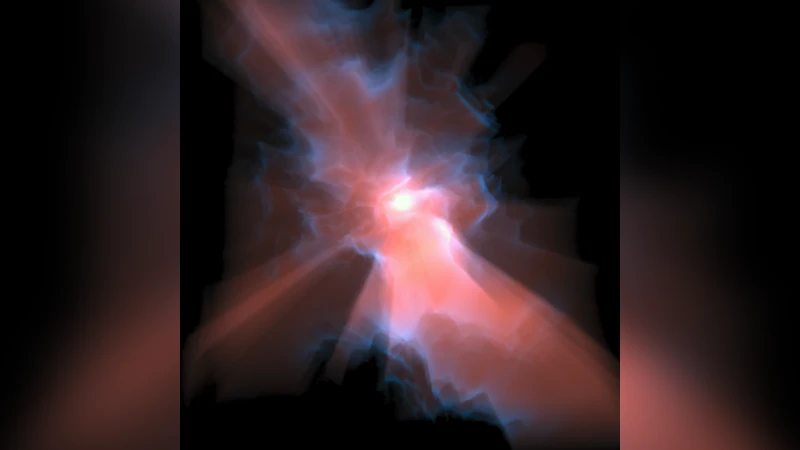

Performance evaluation – The authors test the method on several astrophysical datasets (galaxy formation, supernova remnants) ranging up to 10⁸ cells. Compared with the previous multi‑pass k‑d‑tree raycaster, the single‑pass version achieves 2–3× higher frame rates and reduces memory consumption by up to 30 % when using the texture‑mapping scheme. Even with sophisticated lighting, the drop in performance stays below 10 %, confirming suitability for interactive exploration.

- Limitations and future work – The current implementation assumes a single GPU with sufficient memory; very large simulations may still exceed device capacity. Deep trees can cause texture‑cache misses, suggesting a need for cache‑friendly traversal heuristics. Future directions include multi‑GPU distribution, dynamic tree updates for time‑varying SAMR, and extension to four‑dimensional (spatio‑temporal) adaptive meshes.
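The tree encoding, fragment‑list addressing, and single‑loop traversal listed above can be illustrated with a small CPU‑side sketch. It is not the authors' shader code. The 32‑bit node layout, the parallel `splits` array, the `Block` record, and all function names are assumptions made for illustration. It also uses a nearest‑cell lookup instead of trilinear interpolation and a simple emission‑absorption model, and it runs in Python rather than in a fragment shader.

```python
import math
from dataclasses import dataclass

LEAF = 3  # axis code that marks a leaf node (hypothetical layout)

def pack_node(axis, payload):
    """Pack a split axis (0, 1, 2 or LEAF) and a payload into one integer 'texel'."""
    return (payload << 2) | axis

def unpack_node(word):
    """Return (axis, payload) from a packed node."""
    return word & 3, word >> 2

@dataclass
class Block:
    lo: tuple    # lower corner of the block in domain coordinates
    cell: float  # edge length of one cell
    dims: tuple  # number of cells (nx, ny, nz)
    offset: int  # index of the block's first cell in the flat data array

def find_leaf(nodes, splits, p):
    """Descend from the root (index 0) to the leaf whose region contains p."""
    i = 0
    while True:
        axis, payload = unpack_node(nodes[i])
        if axis == LEAF:
            return payload  # index into the block table
        # Assumed convention: children are adjacent, left = payload, right = payload + 1.
        i = payload + (1 if p[axis] >= splits[i] else 0)

def fetch(block, data, p):
    """Nearest-cell lookup: map p to a clamped cell index, then to a 1-D address."""
    idx = [
        max(0, min(int((p[k] - block.lo[k]) / block.cell), block.dims[k] - 1))
        for k in range(3)
    ]
    nx, ny, _ = block.dims
    return data[block.offset + idx[0] + nx * (idx[1] + ny * idx[2])]

def cast_ray(nodes, splits, blocks, data, origin, direction, t_max, transfer):
    """Front-to-back compositing along one ray in a single loop.

    `transfer` maps a cell value to (emitted colour, extinction per unit length).
    """
    color, alpha, t = 0.0, 0.0, 0.0
    while t < t_max and alpha < 0.99:  # early ray termination
        p = tuple(origin[k] + t * direction[k] for k in range(3))
        block = blocks[find_leaf(nodes, splits, p)]
        step = 0.5 * block.cell  # finer blocks are sampled with shorter steps
        c, sigma = transfer(fetch(block, data, p))
        a = 1.0 - math.exp(-sigma * step)  # opacity of this ray segment
        color += (1.0 - alpha) * c * a
        alpha += (1.0 - alpha) * a
        t += step
    return color, alpha
```

Because every sample locates its leaf by walking the packed node array, the whole ray is handled in one loop with access to all blocks, which is the property the single‑pass approach relies on. In a shader, the lists would be replaced by texture fetches.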

In summary, the paper delivers a practical, high‑performance solution for rendering SAMR data on GPUs. By encoding the k‑d hierarchy into 3‑D textures and offering flexible storage schemes, it removes the multi‑pass bottleneck, enables complex lighting, and achieves interactive frame rates on large scientific datasets. This advancement opens the door to more immersive, real‑time visual analytics in fields such as cosmology, fluid dynamics, and any domain that relies on adaptive mesh refinement.
