Low-Cost Learning via Active Data Procurement

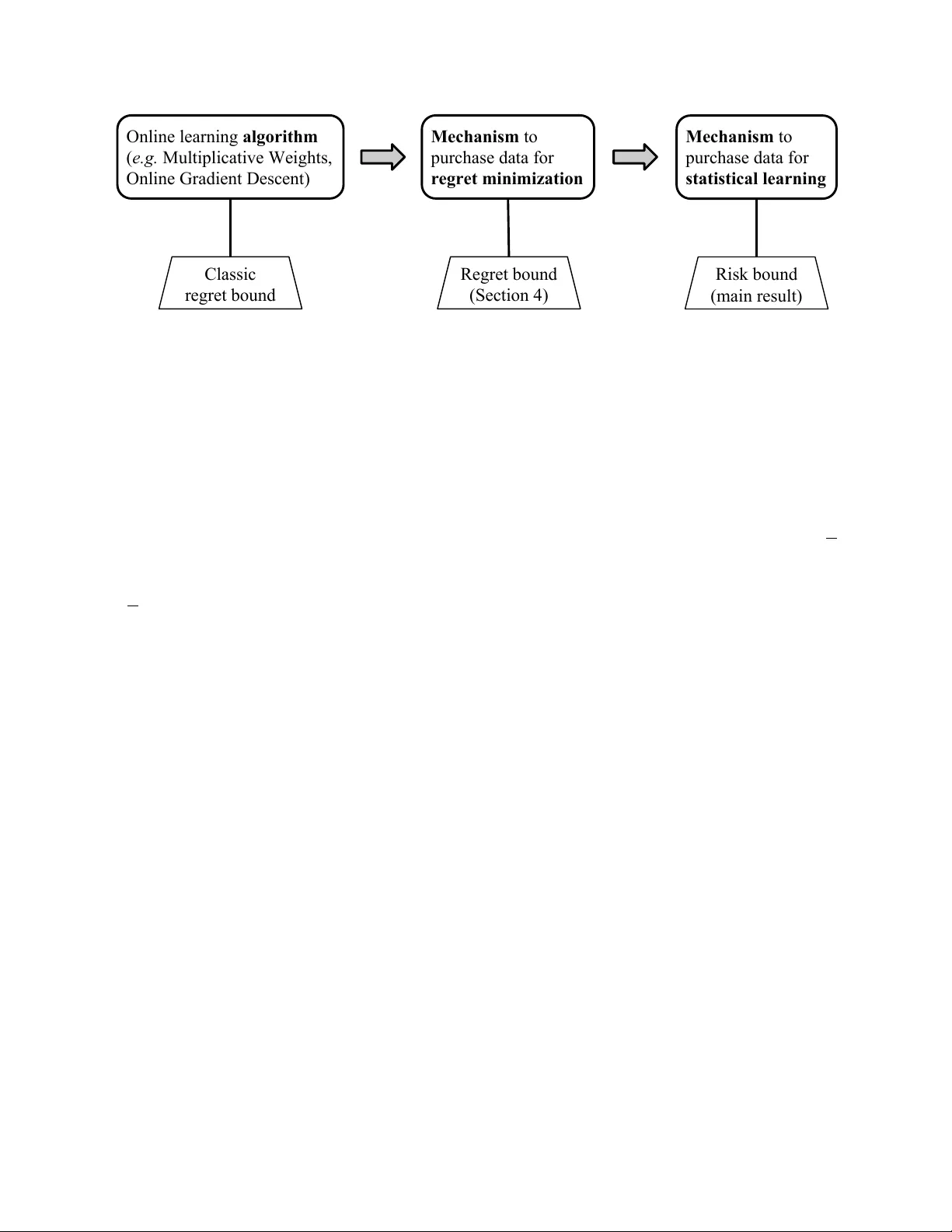

We design mechanisms for online procurement of data held by strategic agents for machine learning tasks. The challenge is to use past data to actively price future data and give learning guarantees even when an agent's cost for revealing her data may…

Authors: Jacob Abernethy, Yiling Chen, Chien-Ju Ho