Policy Gradient for Coherent Risk Measures

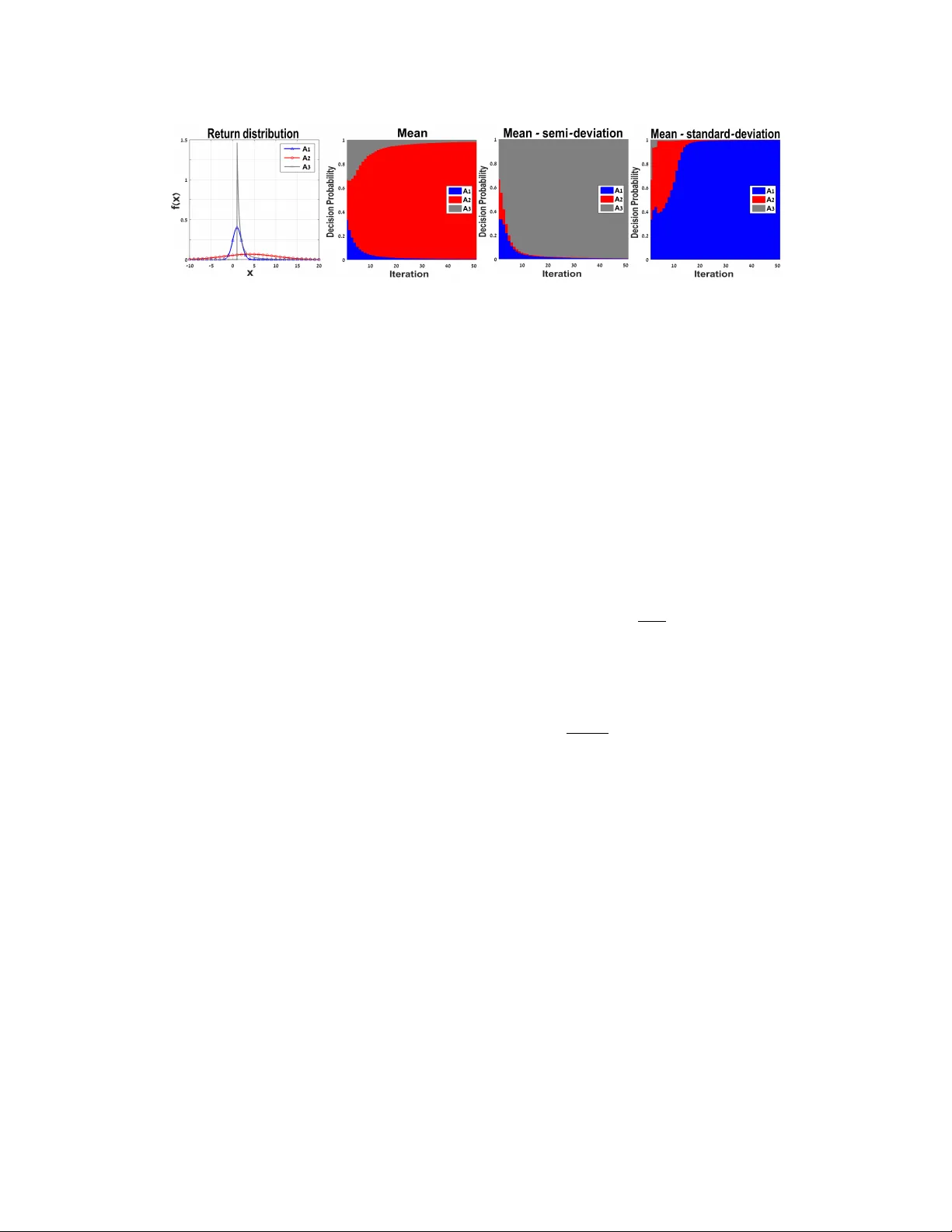

Several authors have recently developed risk-sensitive policy gradient methods that augment the standard expected-cost minimization problem with a measure of variability in cost. These studies have focused on specific risk measures, such as the varia…

Authors: Aviv Tamar, Yinlam Chow, Mohammad Ghavamzadeh
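The abstract's starting point is prior work that adds a variability penalty to the expected cost. The sketch below illustrates the simplest such case under stated assumptions. It uses a mean-variance objective $J(\theta) = \mathbb{E}[C] + \lambda\,\mathrm{Var}[C]$ and the likelihood-ratio identities $\nabla\mathbb{E}[C] = \mathbb{E}[C\,\nabla\log p_\theta]$ and $\nabla\mathrm{Var}[C] = \nabla\mathbb{E}[C^2] - 2\,\mathbb{E}[C]\,\nabla\mathbb{E}[C]$. It is not the coherent-risk method of this paper. The names `mean_variance_gradient` and `sample_bandit`, and the two-action toy problem, are hypothetical.

```python
import math
import random
from typing import List, Tuple


def mean_variance_gradient(samples: List[Tuple[float, float]], lam: float) -> float:
    """Likelihood-ratio estimate of d/dtheta (E[C] + lam * Var[C]).

    Each sample is (cost, score) with score = d/dtheta log p_theta(trajectory).
    Uses Var[C] = E[C^2] - E[C]^2, hence grad Var = grad E[C^2] - 2 E[C] grad E[C].
    """
    n = len(samples)
    mean_c = sum(c for c, _ in samples) / n
    grad_mean = sum(c * g for c, g in samples) / n        # estimates grad E[C]
    grad_second = sum(c * c * g for c, g in samples) / n  # estimates grad E[C^2]
    grad_var = grad_second - 2.0 * mean_c * grad_mean
    return grad_mean + lam * grad_var


def sample_bandit(theta: float, n: int, rng: random.Random) -> List[Tuple[float, float]]:
    """Hypothetical two-action problem, for illustration only.

    Action 1 is chosen with probability p = sigmoid(theta) and costs 0 or 2
    with equal probability; action 0 always costs 1. Both have mean cost 1,
    but only action 1 has nonzero variance.
    """
    p = 1.0 / (1.0 + math.exp(-theta))
    out = []
    for _ in range(n):
        if rng.random() < p:
            cost = rng.choice([0.0, 2.0])
            score = 1.0 - p  # d/dtheta log sigmoid(theta)
        else:
            cost = 1.0
            score = -p       # d/dtheta log (1 - sigmoid(theta))
        out.append((cost, score))
    return out


rng = random.Random(0)
data = sample_bandit(theta=0.0, n=200_000, rng=rng)
risk_neutral = mean_variance_gradient(data, lam=0.0)  # exact value: 0
risk_averse = mean_variance_gradient(data, lam=1.0)   # exact value: p(1 - p) = 0.25
print(risk_neutral, risk_averse)
```

In this toy setting $\mathbb{E}[C] = 1$ for every $\theta$, so the risk-neutral gradient is zero. The variance is $\mathrm{Var}[C] = p$, so the risk-averse gradient is $\lambda\,p(1-p) > 0$. A descent step therefore lowers $\theta$ and shifts probability toward the deterministic action. Estimating $\mathbb{E}[C]$ from the same batch makes the variance-gradient estimate slightly biased for small samples.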