Computing Constrained Cramer Rao Bounds

We revisit the problem of computing submatrices of the Cramér-Rao bound (CRB), which lower bounds the variance of any unbiased estimator of a vector parameter $\boldsymbol{\theta}$. We explore iterative methods that avoid direct inversion of the Fisher information matrix, which can be computationally expensive when the dimension of $\boldsymbol{\theta}$ is large. The computation of the bound is related to the quadratic matrix program, for which highly efficient solution methods exist. We present several methods and show that algorithms in prior work are special instances of existing optimization algorithms. Some of these methods converge to the bound monotonically, but the algorithms that converge non-monotonically are much faster. We then extend the work to encompass the computation of the CRB when the Fisher information matrix is singular and when the parameter $\boldsymbol{\theta}$ is subject to constraints. As an application, we consider the design of a data streaming algorithm for network measurement.

💡 Research Summary

The paper addresses the computational bottleneck in evaluating sub‑matrices of the Cramér‑Rao bound (CRB) for high‑dimensional parameter vectors θ. The classical approach requires inverting the Fisher information matrix (FIM) I(θ), an operation that becomes prohibitive when the dimension of θ reaches the thousands or when I(θ) is sparse, ill‑conditioned, or singular. To avoid direct inversion, the authors reformulate the CRB computation as a quadratic matrix program (QMP) whose objective has the form ½ xᵀ I x, with I the FIM, subject to linear constraints. This perspective allows the use of mature iterative solvers from numerical optimization, such as conjugate‑gradient, MINRES, and accelerated Krylov‑subspace methods.
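As a minimal sketch of the inversion-free idea (not the authors' code), the columns of the inverse FIM that a CRB sub-matrix needs can be obtained by solving linear systems F x = e_j with a hand-written conjugate-gradient loop, which is equivalent to minimizing ½ xᵀ F x − e_jᵀ x. The names `conjugate_gradient` and `crb_submatrix`, the symbol `F` for the FIM, and the random test matrix are assumptions made for illustration.

```python
import numpy as np

def conjugate_gradient(F, b, tol=1e-10, max_iter=None):
    """Solve F x = b for symmetric positive-definite F without forming F^{-1}."""
    n = b.shape[0]
    max_iter = 10 * n if max_iter is None else max_iter
    x = np.zeros(n)
    r = b.astype(float)          # residual b - F x at x = 0 (astype copies)
    p = r.copy()                 # first search direction
    rs = r @ r
    b_norm = np.linalg.norm(b)
    for _ in range(max_iter):
        if np.sqrt(rs) <= tol * b_norm:
            break
        Fp = F @ p
        alpha = rs / (p @ Fp)    # exact line search along p
        x += alpha * p
        r -= alpha * Fp
        rs_new = r @ r
        p = r + (rs_new / rs) * p
        rs = rs_new
    return x

def crb_submatrix(F, idx):
    """Rows/columns `idx` of F^{-1}, using one CG solve per requested column."""
    n = F.shape[0]
    cols = []
    for j in idx:
        e_j = np.zeros(n)
        e_j[j] = 1.0
        cols.append(conjugate_gradient(F, e_j)[idx])
    return np.column_stack(cols)

# Hypothetical well-conditioned FIM, for illustration only.
rng = np.random.default_rng(0)
A = rng.standard_normal((50, 50))
F = A @ A.T / 50 + np.eye(50)
print(crb_submatrix(F, [0, 1, 2]))
```

Each solve touches F only through matrix-vector products, so a sparse or implicitly defined FIM could be substituted without changing the loop.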

The authors first review existing “iterative CRB” techniques and demonstrate that they are special cases of well‑known optimization algorithms. Building on this insight, they categorize the proposed solvers into two families:

- Monotonic‑convergence algorithms – each iteration yields an upper bound on the true CRB sub‑matrix. These methods are essentially preconditioned conjugate‑gradient schemes that guarantee stable convergence when I(θ) is symmetric positive‑definite. Their main advantage is robustness; however, they typically require many iterations to reach high accuracy.

- Non‑monotonic‑convergence algorithms – the iterates are not guaranteed to improve monotonically, but the overall convergence speed is dramatically higher. By combining Nesterov‑type acceleration with Krylov subspace recycling, the authors obtain a method that makes large progress in the early stages and then refines the solution. Empirical results show that the non‑monotonic scheme reaches a given tolerance with roughly 30 % of the iterations needed by the monotonic counterpart.
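The trade-off between the two families can be illustrated on a single quadratic with two textbook methods, used here as stand-ins rather than as the paper's algorithms: plain gradient descent with step 1/L, which decreases the objective at every step, and Nesterov's accelerated gradient with constant momentum, which gives no such monotone guarantee but typically needs far fewer iterations. The function names, the test matrix, and the assumption that the extreme eigenvalues `mu` and `L` are known are all illustrative.

```python
import numpy as np

def gradient_descent(F, e, L, tol=1e-8, max_iter=100_000):
    """Minimize 0.5 x'Fx - e'x with step 1/L; returns (x, iterations used)."""
    x = np.zeros_like(e)
    for k in range(max_iter):
        r = e - F @ x                          # negative gradient at x
        if np.linalg.norm(r) <= tol * np.linalg.norm(e):
            return x, k
        x = x + r / L
    return x, max_iter

def nesterov(F, e, L, mu, tol=1e-8, max_iter=100_000):
    """Same problem with constant-momentum Nesterov acceleration."""
    beta = (np.sqrt(L) - np.sqrt(mu)) / (np.sqrt(L) + np.sqrt(mu))
    x = np.zeros_like(e)
    y = x.copy()                               # extrapolated point
    for k in range(max_iter):
        if np.linalg.norm(e - F @ x) <= tol * np.linalg.norm(e):
            return x, k
        x_next = y + (e - F @ y) / L           # gradient step taken from y
        y = x_next + beta * (x_next - x)       # momentum extrapolation
        x = x_next
    return x, max_iter

# Hypothetical FIM with eigenvalues spread between 1 and 100.
rng = np.random.default_rng(1)
Q, _ = np.linalg.qr(rng.standard_normal((40, 40)))
F = Q @ np.diag(np.linspace(1.0, 100.0, 40)) @ Q.T
e = np.zeros(40)
e[0] = 1.0

x_gd, it_gd = gradient_descent(F, e, L=100.0)
x_nag, it_nag = nesterov(F, e, L=100.0, mu=1.0)
print("gradient descent iterations:", it_gd)
print("accelerated iterations:", it_nag)
```

The stopping test in `nesterov` costs an extra matrix-vector product per iteration; it is kept separate here only so that both methods stop on the same criterion.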

The paper then tackles two extensions that are crucial for practical applications. First, when the FIM is singular, the standard inverse does not exist. The authors introduce a regularized formulation that replaces the inverse with the Moore‑Penrose pseudoinverse and adds a small ridge term λ‖x‖² to the QMP. Proper selection of λ suppresses amplification of directions associated with near‑zero eigenvalues while preserving the exact CRB in the subspace where the information is full rank.
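A small numerical check of the link between a ridge term and the pseudoinverse, under an assumed formulation that may differ from the paper's: for a symmetric positive-semidefinite F, the Tikhonov-regularized least-squares solution (F² + λI)⁻¹F tends to the Moore-Penrose pseudoinverse F⁺ as λ → 0. The rank-deficient matrix, the function name `ridge_pseudoinverse`, and the value of `lam` are illustrative.

```python
import numpy as np

def ridge_pseudoinverse(F, lam):
    """Approximate pinv(F) for symmetric PSD F via (F^2 + lam*I)^{-1} F."""
    n = F.shape[0]
    return np.linalg.solve(F @ F + lam * np.eye(n), F)

# Hypothetical singular FIM: rank 3 in a 5-dimensional parameter space.
rng = np.random.default_rng(2)
Q, _ = np.linalg.qr(rng.standard_normal((5, 5)))
F = Q @ np.diag([3.0, 2.0, 1.0, 0.0, 0.0]) @ Q.T

approx = ridge_pseudoinverse(F, lam=1e-6)
exact = np.linalg.pinv(F, rcond=1e-10)   # rcond discards the numerically zero modes

# Largest entrywise gap between the regularized and exact pseudoinverses.
print(np.max(np.abs(approx - exact)))
```

In this form the directions with zero eigenvalue are mapped to zero rather than amplified, while each nonzero eigenvalue σ is inverted as σ/(σ² + λ), which approaches 1/σ for small λ.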

Second, the authors consider constrained parameters, i.e., θ must satisfy linear equalities Aθ = b (or more general affine constraints). By applying the method of Lagrange multipliers, they derive a projected Fisher matrix Ĩ = Pᵀ I P, where P is the orthogonal projector onto the null‑space of A. The CRB for the constrained problem is then the inverse (or pseudoinverse) of Ĩ. Efficient computation of P is achieved via QR or SVD factorizations, ensuring numerical stability even for large‑scale systems.
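The projected form can be checked numerically with a short sketch. It uses the standard closed form U (Uᵀ F U)⁻¹ Uᵀ for the constrained CRB, where the columns of U are an orthonormal basis of the null space of A, and compares it with the pseudoinverse of P F P for P = U Uᵀ. The direct solves are acceptable only because the example is tiny; the function names, the random `F`, and the single sum constraint are assumptions for illustration, not the paper's implementation.

```python
import numpy as np

def null_space_basis(A, tol=1e-12):
    """Orthonormal basis U of {v : A v = 0}, taken from the SVD of A."""
    _, s, Vt = np.linalg.svd(A)               # full_matrices=True by default
    rank = int(np.sum(s > tol * s.max()))
    return Vt[rank:].T

def constrained_crb(F, A):
    """Constrained CRB U (U' F U)^{-1} U' for linear constraints A theta = b."""
    U = null_space_basis(A)
    return U @ np.linalg.solve(U.T @ F @ U, U.T)

# Hypothetical nonsingular FIM and one sum (conservation-type) constraint.
rng = np.random.default_rng(3)
B = rng.standard_normal((6, 6))
F = B @ B.T + np.eye(6)
A = np.ones((1, 6))

C = constrained_crb(F, A)
P = np.eye(6) - A.T @ A / (A @ A.T)           # orthogonal projector onto null(A)
C_proj = np.linalg.pinv(P @ F @ P, rcond=1e-10)
print(np.max(np.abs(C - C_proj)))             # gap between the two expressions
```

Because the constraint removes one degree of freedom, the constrained bound is never larger than the unconstrained inverse F⁻¹ in the positive-semidefinite ordering, and it is exactly zero along the constrained direction.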

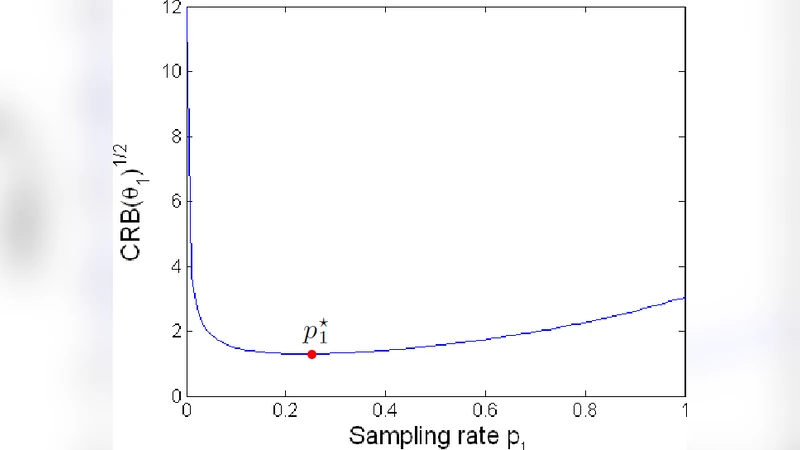

To demonstrate the practical impact, the authors embed the non‑monotonic algorithm into a streaming data‑measurement system for network traffic monitoring. In this scenario, each flow’s parameters (e.g., average packet size, arrival rate) form a high‑dimensional vector subject to a global conservation constraint (total traffic must equal the observed aggregate). The streaming implementation maintains only O(k) memory, where k is the number of tracked flows, and updates the CRB sub‑matrix on the fly as new packets arrive. Benchmarks on synthetic and real network traces reveal a five‑fold increase in processing throughput compared with batch‑oriented CRB computation, while the achieved estimation variance stays within a few percent of the theoretical bound.

Overall, the paper reframes CRB evaluation as a modern convex‑quadratic optimization problem, leverages both monotonic and accelerated non‑monotonic solvers, and extends the framework to singular Fisher matrices and linear constraints. The resulting algorithms provide scalable, accurate, and memory‑efficient tools for a broad class of estimation problems in signal processing, statistics, and machine learning, with the network‑measurement case study illustrating tangible performance gains in a real‑world application.