Finding Density Functionals with Machine Learning

Machine learning is used to approximate density functionals. For the model problem of the kinetic energy of non-interacting fermions in 1d, mean absolute errors below 1 kcal/mol on test densities similar to the training set are reached with fewer tha…

Authors: John C. Snyder, Matthias Rupp, Katja Hansen

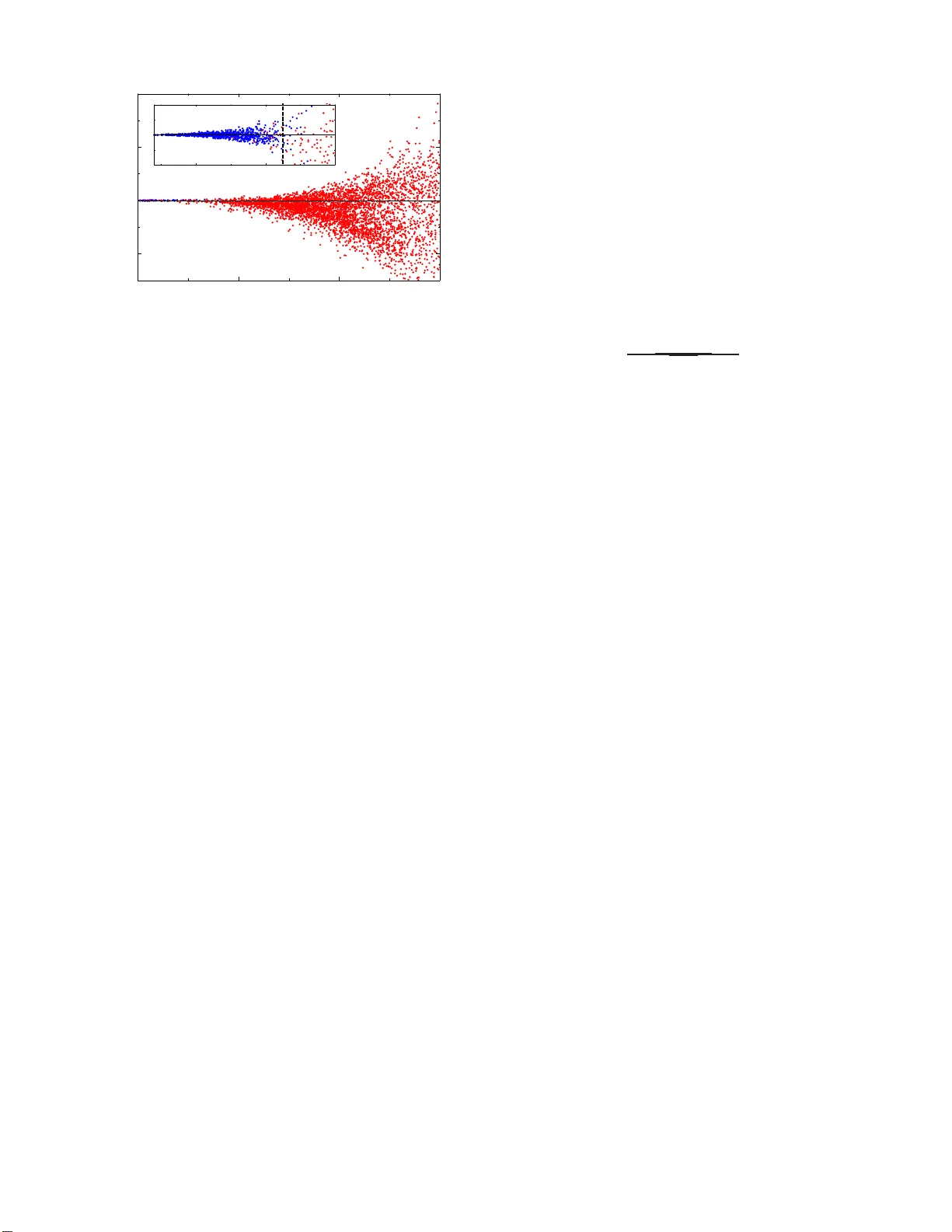

John C. Snyder,¹ Matthias Rupp,²,³ Katja Hansen,² Klaus-Robert Müller,² and Kieron Burke¹

¹Departments of Chemistry and of Physics, University of California, Irvine, CA 92697, USA
²Machine Learning Group, Technical University of Berlin, 10587 Berlin, Germany
³Institute of Pharmaceutical Sciences, ETH Zurich, 8093 Zürich, Switzerland

(Dated: November 27, 2024)

Machine learning is used to approximate density functionals. For the model problem of the kinetic energy of non-interacting fermions in 1d, mean absolute errors below 1 kcal/mol on test densities similar to the training set are reached with fewer than 100 training densities. A predictor identifies if a test density is within the interpolation region. Via principal component analysis, a projected functional derivative finds highly accurate self-consistent densities. Challenges for application of our method to real electronic structure problems are discussed.

PACS numbers: 31.15.E-, 31.15.X-, 02.60.Gf, 89.20.Ff

Each year, more than 10,000 papers report solutions to electronic structure problems using Kohn-Sham (KS) density functional theory (DFT) [1, 2]. All approximate the exchange-correlation (XC) energy as a functional of the electronic spin densities. The quality of the results depends crucially on these density functional approximations. For example, present approximations often fail for strongly correlated systems, rendering the methodology useless for some of the most interesting problems.

Thus, there is a never-ending search for improved XC approximations. The original local density approximation (LDA) of Kohn and Sham [2] is uniquely defined by the properties of the uniform gas, and has been argued to be a universal limit of all systems [3].
But the refinements that have proven useful in chemistry [4] and materials [5] are not uniquely defined, and differ both in their derivations and details. Traditionally, physicists favor a non-empirical approach, deriving approximations from quantum mechanics and avoiding fitting to specific finite systems [6]. Such non-empirical functionals can be considered controlled extrapolations that work well across a broad range of systems and properties, bridging the divide between molecules and solids. Chemists typically use a few [7, 8] or several dozen [9] parameters to improve accuracy on a limited class of molecules. Empirical functionals are limited interpolations that are more accurate for the molecular systems they are fitted to, but often fail for solids. Passionate debates are fueled by this cultural divide.

Machine learning (ML) is a powerful tool for finding patterns in high-dimensional data. ML employs algorithms by which the computer learns from empirical data via induction, and has been very successful in many applications [10–12]. In ML, intuition is used to choose the basic mechanism and representation of the data, but not directly applied to the details of the model. Mean errors can be systematically decreased with increasing number of inputs. In contrast, human-designed empirical approximations employ standard forms derived from general principles, fitting the parameters to training sets. These explore only an infinitesimal fraction of all possible functionals and use relatively few data points.

DFT works for electronic structure because the underlying many-body Hamiltonian is simple, while accurate solution of the Schrödinger equation is very demanding. All electrons Coulomb repel one another, have spin 1/2, and are Coulomb attracted to the nuclei. ML is a natural tool for taking maximum advantage of this simplicity.
Here, we adapt ML to a prototype density functional problem: non-interacting spinless fermions confined to a 1d box, subject to a smooth potential. We define key technical concepts needed to apply ML to DFT problems. The accuracy we achieve in approximating the kinetic energy (KE) of this system is far beyond the capabilities of any present approximations and is even sufficient to produce highly accurate self-consistent densities. Our ML approximation (MLA) achieves chemical accuracy using many more inputs, but requires far less insight into the underlying physics.

FIG. 1. (color online). Comparison of a projected (see within) functional derivative of our MLA with the exact curve. Axes: $x$ (horizontal) and $-P_{m,\ell}(\mathbf{n})\nabla_{\mathbf{n}} T^{\mathrm{ML}}(\mathbf{n})/\Delta x$ (vertical); curves: MLA, Exact.

We illustrate the accuracy of our MLA with Fig. 1, in which the functional was constructed from 100 densities on a dense grid. This success opens up a new approach to functional approximation, entirely distinct from previous approaches: Our MLA contains $\sim 10^5$ empirical numbers and satisfies none of the standard exact conditions.

The prototype DFT problem we consider is $N$ non-interacting spinless fermions confined to a 1d box, $0 \le x \le 1$, with hard walls. For continuous potentials $v(x)$, we solve the Schrödinger equation numerically with the lowest $N$ orbitals occupied, finding the KE and the electronic density $n(x)$, the sum of the squares of the occupied orbitals. Our aim is to construct an MLA for the KE $T[n]$ that bypasses the need to solve the Schrödinger equation, a 1d analog of orbital-free DFT [13]. (In (3d) orbital-free DFT, the local approximation, as used in Thomas-Fermi theory, is typically accurate to within 10%, and the addition of the leading gradient correction reduces the error to about 1% [14]. Even this small an error in the total KE is too large to give accurate chemical properties.)
First, we specify a class of potentials from which we generate densities, which are then discretized on a uniform grid of $G$ points. We use a linear combination of 3 Gaussian dips with different depths, widths, and centers:

$$ v(x) = -\sum_{i=1}^{3} a_i \exp\!\left(-(x-b_i)^2/(2c_i^2)\right). \tag{1} $$

We generate 2000 such potentials, randomly sampling $1 < a < 10$, $0.4 < b < 0.6$, and $0.03 < c < 0.1$. For each $v_j(x)$, we find, for $N$ up to 4 electrons, the KE $T_{j,N}$ and density $\mathbf{n}_{j,N} \in \mathbb{R}^G$ on the grid using Numerov's method [15]. For $G = 500$, the error in $T_{j,N}$ due to discretization is less than $1.5 \times 10^{-7}$. We take 1000 densities as a test set, and choose $M$ others for training. The variation in this dataset for $N = 1$ is illustrated in Fig. 2.

FIG. 2. (color online). The shaded region shows the extent of variation of $n(x)$ within our dataset for $N = 1$. Exact (red) and a self-consistent (black, dashed) density for potential of Fig. 3. Axes: $x$ (horizontal) and $n(x)$ (vertical).

Kernel ridge regression (KRR) is a non-linear version of regression with regularization to prevent overfitting [16]. For KRR, our MLA takes the form

$$ T^{\mathrm{ML}}(\mathbf{n}) = \bar{T} \sum_{j=1}^{M} \alpha_j\, k(\mathbf{n}_j, \mathbf{n}), \tag{2} $$

where $\alpha_j$ are weights to be determined, $\mathbf{n}_j$ are training densities and $k$ is the kernel, which measures similarity between densities. Here $\bar{T}$ is the mean KE of the training set, inserted for convenience. We choose a Gaussian kernel, common in ML:

$$ k(\mathbf{n}, \mathbf{n}') = \exp\!\left(-\|\mathbf{n} - \mathbf{n}'\|^2/(2\sigma^2)\right), \tag{3} $$

where the hyperparameter $\sigma$ is called the length scale. The weights are found by minimizing the cost function

$$ \mathcal{C}(\boldsymbol{\alpha}) = \sum_{j=1}^{M} \Delta T_j^2 + \lambda \|\boldsymbol{\alpha}\|^2, \tag{4} $$

where $\Delta T_j = T^{\mathrm{ML}}_j - T_j$ and $\boldsymbol{\alpha} = (\alpha_1, \ldots, \alpha_M)$. The second term is a regularizer that penalizes large weights to prevent overfitting. The hyperparameter $\lambda$ controls regularization strength. Minimizing $\mathcal{C}(\boldsymbol{\alpha})$ gives

$$ \boldsymbol{\alpha} = (\mathbf{K} + \lambda \mathbf{I})^{-1}\, \mathbf{T}, \tag{5} $$
where $\mathbf{K}$ is the kernel matrix, with elements $K_{ij} = k(\mathbf{n}_i, \mathbf{n}_j)$, and $\mathbf{I}$ is the identity matrix. Then $\sigma$ and $\lambda$ are determined through 10-fold cross-validation: The training set is partitioned into 10 bins of equal size. For each bin, the functional is trained on the remaining samples and $\sigma$ and $\lambda$ are optimized by minimizing the mean absolute error (MAE) on the bin. The partitioning is repeated up to 40 times and the hyperparameters are chosen as the median over all bins.

| $N$ | $M$ | $\lambda \times 10^{14}$ | $\sigma$ | $\overline{\lvert\Delta T\rvert}$ | $\lvert\Delta T\rvert^{\mathrm{std}}$ | $\lvert\Delta T\rvert^{\mathrm{max}}$ |
|---|---|---|---|---|---|---|
| 1 | 40 | 57600 | 238 | 3.3 | 3.0 | 23 |
| 1 | 60 | 10000 | 95 | 1.2 | 1.2 | 10 |
| 1 | 80 | 4489 | 48 | 0.43 | 0.54 | 7.1 |
| 1 | 100 | 12 | 43 | 0.15 [3.0] | 0.24 [5.3] | 3.2 [46] |
| 1 | 150 | 6.3 | 33 | 0.06 | 0.10 | 1.3 |
| 1 | 200 | 3.2 | 28 | 0.03 | 0.05 | 0.65 |
| 2 | 100 | 1.7 | 52 | 0.13 [1.4] | 0.20 [3.0] | 1.8 [37] |
| 3 | 100 | 4.0 | 74 | 0.12 [0.9] | 0.18 [1.5] | 1.8 [14] |
| 4 | 100 | 2.0 | 73 | 0.08 [0.6] | 0.14 [0.8] | 2.3 [6] |
| 1-4† | 400 | 3.2 | 47 | 0.12 | 0.20 | 3.6 |

TABLE I. Parameters and errors (mean absolute, std. dev., and max abs. in kcal/mol) as a function of electron number $N$ and number of training densities $M$. Brackets represent errors on self-consistent densities with $m = 30$ and $\ell = 5$. The $\alpha_j$ are on the order of $10^6$ and both positive and negative [17]. †Training set includes $\mathbf{n}_{j,N}$, for $j = 1, \ldots, 100$, $N = 1, \ldots, 4$.

Table I gives the performance of $T^{\mathrm{ML}}$ (Eq. 2) trained on $M$ $N$-electron densities and evaluated on the corresponding test set. The mean KE of the test set for $N = 1$ is 5.40 Hartree (3390 kcal/mol). To contrast, the LDA in 1d is $T^{\mathrm{loc}}[n] = \pi^2 \int dx\, n^3(x)/6$ and the von Weizsäcker functional is $T^{\mathrm{W}}[n] = \int dx\, n'(x)^2/(8 n(x))$. For $N = 1$, the MAE of $T^{\mathrm{loc}}$ on the test set is 217 kcal/mol and the modified gradient expansion approximation [18], $T^{\mathrm{MGEA}}[n] = T^{\mathrm{loc}}[n] - c\, T^{\mathrm{W}}[n]$, has a MAE of 160 kcal/mol, where $c = 0.0543$ has been chosen to minimize the error (the gradient correction is not as beneficial in 1d as in 3d).
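The fit described by Eqs. (2)-(5) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function names, the one-parameter family of toy densities, the stand-in target functional, and the values of `sigma` and `lam` are all assumptions chosen for the example. The energies are divided by their mean before solving Eq. (5), which is one reading of the prefactor $\bar{T}$ in Eq. (2).

```python
import numpy as np

def gaussian_kernel(A, B, sigma):
    """Eq. (3) evaluated for every pair of rows of A and B."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / (2.0 * sigma**2))

def krr_fit(train_n, targets, sigma, lam):
    """Eq. (5): alpha = (K + lam I)^{-1} T, computed with a linear solve."""
    K = gaussian_kernel(train_n, train_n, sigma)
    return np.linalg.solve(K + lam * np.eye(len(train_n)), targets)

def krr_predict(n, train_n, alpha, sigma, t_mean):
    """Eq. (2): T_ML(n) = t_mean * sum_j alpha_j k(n_j, n)."""
    return t_mean * (gaussian_kernel(np.atleast_2d(n), train_n, sigma) @ alpha)

# Toy setup (hypothetical): densities on G grid points, labelled by one shape parameter s.
G = 50
x = np.linspace(0.0, 1.0, G)
dx = x[1] - x[0]

def toy_density(s):
    n = 2.0 * np.sin(np.pi * x) ** 2 * (1.0 + s * np.cos(2.0 * np.pi * x))
    return n / (n.sum() * dx)                      # normalise to one particle

def toy_functional(n):
    return np.pi**2 / 6.0 * (n**3).sum() * dx      # smooth stand-in for the KE

sigma, lam = 1.0, 1e-10                            # illustrative, not cross-validated
train_n = np.array([toy_density(s) for s in np.linspace(-0.5, 0.5, 15)])
train_t = np.array([toy_functional(n) for n in train_n])
t_mean = train_t.mean()
alpha = krr_fit(train_n, train_t / t_mean, sigma, lam)

# Evaluate on densities inside the range spanned by the training set.
test_n = np.array([toy_density(s) for s in np.linspace(-0.3, 0.3, 7)])
exact = np.array([toy_functional(n) for n in test_n])
pred = krr_predict(test_n, train_n, alpha, sigma, t_mean)
rel_err = np.max(np.abs(pred - exact) / exact)
print("largest relative error on the toy test densities:", rel_err)
```

In practice `sigma` and `lam` would be chosen by the cross-validation procedure described above rather than fixed by hand.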
For $T^{\mathrm{ML}}$, both the mean and maximum absolute errors improve as $N$ or $M$ increases (the system becomes more uniform as $N \to \infty$ [3]). At $M = 80$, we have already achieved "chemical accuracy," i.e., a MAE below 1 kcal/mol. At $M = 200$, no error is above 1 kcal/mol. Simultaneously incorporating different $N$ into the training set has little effect on the overall performance.

With such unheard of accuracy, it is tempting to declare "mission accomplished," but this would be premature. A KE functional that predicts only the energy is useless in practice, since orbital-free DFT uses functional derivatives in self-consistent procedures to find the density within a given approximation, via

$$ \frac{\delta T[n]}{\delta n(x)} = \mu - v(x), \tag{6} $$

where $\mu$ is adjusted to produce the required particle number. The (discretized) functional derivative of $T^{\mathrm{ML}}$ is

$$ \frac{1}{\Delta x} \nabla_{\mathbf{n}} T^{\mathrm{ML}}(\mathbf{n}) = \sum_{j=1}^{M} \alpha'_j\, (\mathbf{n}_j - \mathbf{n})\, k(\mathbf{n}_j, \mathbf{n}), \tag{7} $$

where $\alpha'_j = \alpha_j/(\sigma^2 \Delta x)$. This oscillates wildly relative to the exact curve (Fig. 3), typical behavior that does not improve with increasing $M$. No finite interpolation can accurately reproduce all details of a functional derivative.

We overcome this problem using principal component analysis (PCA). The space of all densities is contained in $\mathbb{R}^G$, but only a few directions in this space are relevant. For a given density $\mathbf{n}$, find the $m$ training densities $(\mathbf{n}_{j_1}, \ldots, \mathbf{n}_{j_m})$ closest to $\mathbf{n}$. Construct the covariance matrix of directions from $\mathbf{n}$ to each training density, $C = X^\top X/m$, where $X = (\mathbf{n}_{j_1} - \mathbf{n}, \ldots, \mathbf{n}_{j_m} - \mathbf{n})^\top$. Diagonalizing $C \in \mathbb{R}^{G \times G}$ gives eigenvalues $\lambda_j$ and eigenvectors $\mathbf{x}_j$, which we list in decreasing order. The $\mathbf{x}_j$ with larger $\lambda_j$ are directions with substantial variation in the dataset. Those with $\lambda_j$ below a cutoff are irrelevant [17].
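The local PCA step can be sketched as follows. This is an illustrative NumPy version under stated assumptions: `local_pca` and `variance_lost` are hypothetical helper names, and the toy training set is constructed so that all variation lies in two directions, which makes the split between relevant and irrelevant eigenvalues easy to see. `variance_lost` computes the percentage of variance outside the leading $\ell$ directions, the quantity tabulated in the Supplementary Information.

```python
import numpy as np

def local_pca(n, train_n, m):
    """Eigen-decomposition of C = X^T X / m from the m training densities nearest to n."""
    dist = np.linalg.norm(train_n - n, axis=1)
    nearest = np.argsort(dist)[:m]
    X = train_n[nearest] - n                 # rows are n_jk - n, shape (m, G)
    C = X.T @ X / m                          # shape (G, G)
    evals, evecs = np.linalg.eigh(C)         # eigh returns eigenvalues in ascending order
    order = np.argsort(evals)[::-1]          # re-sort in decreasing order
    return evals[order], evecs[:, order]

def variance_lost(evals, ell):
    """Percentage of variance discarded when keeping the first ell eigenvalues."""
    return (1.0 - evals[:ell].sum() / evals.sum()) * 100.0

# Toy data (hypothetical): G = 6 grid points, variation confined to two directions.
rng = np.random.default_rng(0)
G, M = 6, 20
basis, _ = np.linalg.qr(rng.normal(size=(G, 2)))   # two orthonormal directions in R^G
n0 = np.full(G, 1.0)
train_n = n0 + rng.normal(size=(M, 2)) @ basis.T

evals, evecs = local_pca(n0, train_n, m=10)
print("eigenvalues:", evals)
print("percent variance lost with ell = 2:", variance_lost(evals, 2))
```

Only the first two eigenvalues are appreciably different from zero here, so a cutoff between the second and third would keep exactly the directions in which the toy data vary.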
In these extraneous dimensions, there is too little variation within the dataset, producing noise in the model functional derivative. By projecting onto the subspace spanned by the relevant dimensions, we eliminate this noise. This projection is given by $P_{m,\ell}(\mathbf{n}) = V^\top V$, where $V = (\mathbf{x}_1, \ldots, \mathbf{x}_\ell)^\top$ and $\ell$ is the number of relevant eigenvectors. In Fig. 1, with $m = 30$ and $\ell = 5$, the projected functional derivatives are in excellent agreement.

The ultimate test for a density functional is to produce a self-consistent density that minimizes the total energy and check its error. This error will be several times larger than that of the functional evaluated on the exact density. For example, $T^{\mathrm{loc}}$ on particles in 1d flat boxes always gives 4 times larger error.

FIG. 3. (color online). Functional derivative of $T^{\mathrm{ML}}$, evaluated on the density of Fig. 2. Axes: $x$ (horizontal) and $-\nabla_{\mathbf{n}} T^{\mathrm{ML}}(\mathbf{n})/\Delta x$ (vertical); curves: MLA, Exact.

To find a minimizing density, perform a gradient descent search restricted to the local PCA subspace: Starting from a guess $\mathbf{n}^{(0)}$, take a small step in the opposite direction of the projected functional derivative of the total energy in each iteration $j$:

$$ \mathbf{n}^{(j+1)} = \mathbf{n}^{(j)} - \epsilon\, P_{m,\ell}(\mathbf{n}^{(j)}) \left( \mathbf{v} + \nabla_{\mathbf{n}} T^{\mathrm{ML}}(\mathbf{n}^{(j)})/\Delta x \right), \tag{8} $$

where $\epsilon$ is a small number and $\mathbf{v}$ is the discretized potential. The search is unstable if $\ell$ is too large, inaccurate if $\ell$ is too small, and relatively insensitive to $m$ [17].

The performance of $T^{\mathrm{ML}}$ in finding self-consistent densities is given in Table I. Errors are an order of magnitude larger than that of $T^{\mathrm{ML}}$ on the exact densities. We do not find a unique density, but instead a set of similar densities depending on the initial guess (e.g. Fig. 2). The density with lowest total energy does not have the smallest error.
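One iteration of the projected search in Eq. (8) can be sketched as below, combining the analytic gradient of Eq. (7) with the projector $P_{m,\ell} = V^\top V$. This is a hedged illustration rather than the authors' implementation: all function names are hypothetical, the training densities, weights, and potential are random toy arrays, and the constant prefactor $\bar{T}$ is set to 1 as in Eq. (7). A central finite difference is included only as a sanity check of the gradient formula.

```python
import numpy as np

def kernel_vector(n, train_n, sigma):
    """k(n_j, n) for every training density n_j."""
    return np.exp(-((train_n - n) ** 2).sum(axis=1) / (2.0 * sigma**2))

def t_ml(n, train_n, alpha, sigma):
    """Eq. (2) with the constant prefactor set to 1 (toy units)."""
    return kernel_vector(n, train_n, sigma) @ alpha

def t_ml_gradient(n, train_n, alpha, sigma, dx):
    """Eq. (7): sum_j alpha_j / (sigma^2 dx) * (n_j - n) * k(n_j, n)."""
    k = kernel_vector(n, train_n, sigma)
    return (alpha * k) @ (train_n - n) / (sigma**2 * dx)

def pca_projector(n, train_n, m, ell):
    """P_{m,ell}(n) = V^T V from the top-ell eigenvectors of the local covariance."""
    nearest = np.argsort(np.linalg.norm(train_n - n, axis=1))[:m]
    X = train_n[nearest] - n
    evals, evecs = np.linalg.eigh(X.T @ X / m)
    V = evecs[:, np.argsort(evals)[::-1][:ell]].T     # shape (ell, G)
    return V.T @ V

def descent_step(n, v, train_n, alpha, sigma, dx, m, ell, eps):
    """One iteration of Eq. (8)."""
    grad = v + t_ml_gradient(n, train_n, alpha, sigma, dx)
    return n - eps * pca_projector(n, train_n, m, ell) @ grad

# Toy arrays (hypothetical values, chosen only to exercise the formulas).
rng = np.random.default_rng(1)
G, M, sigma = 8, 12, 2.0
dx = 1.0 / (G - 1)
train_n = rng.uniform(0.5, 1.5, size=(M, G))
alpha = rng.normal(size=M)
n = rng.uniform(0.5, 1.5, size=G)
v = -rng.uniform(1.0, 10.0, size=G)

# Central finite differences of T_ML, divided by dx to match Eq. (7).
h = 1e-6
fd = np.array([(t_ml(n + h * e, train_n, alpha, sigma)
                - t_ml(n - h * e, train_n, alpha, sigma)) / (2.0 * h)
               for e in np.eye(G)]) / dx
analytic = t_ml_gradient(n, train_n, alpha, sigma, dx)

P = pca_projector(n, train_n, m=6, ell=3)
n_next = descent_step(n, v, train_n, alpha, sigma, dx, m=6, ell=3, eps=1e-3)
print("max gradient mismatch:", np.max(np.abs(fd - analytic)))
```

Because the update is multiplied by the projector, the change `n_next - n` lies entirely in the span of the retained eigenvectors; the noisy directions of the raw gradient never enter the density.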
Although the search does not produce a unique minimum, it produces a range of similar but valid approximate densities, each with a small error. Even with an order of magnitude larger error, we still reach chemical accuracy, now on self-consistent densities. No existing KE approximation comes close to this performance.

What are the limitations of this approach? ML is a balanced interpolation on known data, and should be unreliable for densities far from the training set. To demonstrate this, we generate a new dataset of 5000 densities with $N = 1$ for an expanded parameter range: $0.1 < a < 20$, $0.2 < b < 0.8$ and $0.01 < c < 0.3$. The predictive variance (borrowed from Gaussian process regression [19])

$$ \mathbb{V}[T^{\mathrm{ML}}(\mathbf{n})] = k(\mathbf{n}, \mathbf{n}) - \mathbf{k}(\mathbf{n})^\top (\mathbf{K} + \lambda \mathbf{I})^{-1} \mathbf{k}(\mathbf{n}), \tag{9} $$

where $\mathbf{k}(\mathbf{n}) = (k(\mathbf{n}_1, \mathbf{n}), \ldots, k(\mathbf{n}_M, \mathbf{n}))$, is a measure of the uncertainty in the prediction $T^{\mathrm{ML}}(\mathbf{n})$ due to sparseness of training densities around $\mathbf{n}$. In Fig. 4, we plot the error $\Delta T$ as a function of $\log(\mathbb{V}[T^{\mathrm{ML}}(\mathbf{n})])$, for both the test set and the new dataset, showing a clear correlation. From the inset, we expect our MLA to deliver chemical accuracy for $\log(\mathbb{V}[T^{\mathrm{ML}}(\mathbf{n})]) < -24$.

FIG. 4. (color online). The correlation between MLA error and predictive variance for $N = 1$, $M = 100$. Each point represents a density in the test set (blue) or new dataset (red). The vertical line denotes the transition between interpolation and extrapolation. Axes: $\log(\mathbb{V}[T^{\mathrm{ML}}(\mathbf{n})])$ (horizontal) and $\Delta T$ in kcal/mol (vertical), with an inset.

Does ML allow for human intuition? In fact, the more prior knowledge we insert into the MLA, the higher the accuracy we can achieve. Writing $T = T^{\mathrm{W}} + T_\theta$, where $T_\theta \ge 0$ [13], we repeat our calculations to find an MLA for $T_\theta$. For $N = 1$ we get almost zero error, and a factor of 2-4 reduction of error otherwise.
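The near-zero error for $N = 1$ reflects the fact that $T^{\mathrm{W}}$ is the exact KE of a single particle, so $T_\theta$ vanishes. A small numerical check makes this concrete for the simplest case. The sketch below is an assumption-laden illustration: it uses the ground state of the empty box ($v = 0$), whose density $2\sin^2(\pi x)$ and KE $\pi^2/2$ are known analytically, and the helper names `t_loc` and `t_w` are hypothetical. To avoid dividing by the vanishing density at the walls, $T^{\mathrm{W}}$ is evaluated through the equivalent form $\tfrac{1}{2}\int dx\, (d\sqrt{n}/dx)^2$.

```python
import numpy as np

G = 501
x = np.linspace(0.0, 1.0, G)
dx = x[1] - x[0]
n = 2.0 * np.sin(np.pi * x) ** 2      # N = 1 ground-state density of the empty box

def t_loc(n, dx):
    """1d local approximation: (pi^2 / 6) * integral of n^3."""
    return np.pi**2 / 6.0 * np.sum(n**3) * dx

def t_w(n, dx):
    """von Weizsaecker functional, integral of n'^2 / (8 n), written as
    (1/2) * integral of (d sqrt(n)/dx)^2 and discretised with forward differences."""
    root = np.sqrt(n)
    return 0.5 * np.sum(np.diff(root) ** 2) / dx

t_exact = np.pi**2 / 2.0              # exact KE of the lowest box state (Hartree)
print("T_W   :", t_w(n, dx))
print("T_loc :", t_loc(n, dx))
print("exact :", t_exact)
```

For this density the local approximation evaluates to $5\pi^2/12$, noticeably below the exact value, while $T^{\mathrm{W}}$ reproduces $\pi^2/2$ up to discretization error.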
Thus, intuition about the functional can be built in to improve results.

The primary interest in KS DFT is XC for molecules and solids. We have far less information about this than in the prototype studied here. For small molecules and simple solids, direct solutions of the Schrödinger equation yield highly accurate values of $E_{\mathrm{XC}}$. Imagine a sequence of models, beginning with atoms, diatomics, etc., in which such accurate results are used as training data for an MLA. Key issues are how accurate a functional can be attained with a finite number of data, and what fraction of the density space it is accurate for.

A more immediate target is the non-interacting KE in KS DFT calculations. An accurate approximation would allow finding densities and energies without solving the KS equations, greatly increasing the speed of large calculations [13]. The key differences with our prototype are the three-dimensional nature, the Coulomb singularities, and the variation with nuclear positions. For this problem, finding self-consistent densities is crucial, and hence our focus here. But in the 3d case, every KS calculation ever run, including every iteration in a self-consistent loop, generates training data: a density, KE, KS potential and functional derivative. The space of systems, including both solids and molecules, is vast, but could be approached in small steps.

Two last points: The first is that this type of empiricism is qualitatively distinct from that present in the literature. The choices made are those customary in ML, and require no intuition about the physical nature of the problem. Second, the approximation is expressed in terms of about $10^5$ numbers, and only the projected functional derivative is accurate. We have no simple way of comparing such approximations to those presently popular.
For example, for $N = 1$ in the prototype, the exact functional is $T^{\mathrm{W}}$. How is this related to our MLA, and how does our MLA account for this exact limit?

The authors thank the Institute for Pure and Applied Mathematics at UCLA for hospitality and acknowledge NSF CHE-1112442 (JS, KB), EU PASCAL2 and DFG MU 987/4-2 (MR, KH, KRM) and EU Marie Curie IEF 273039 (MR).

[1] P. Hohenberg and W. Kohn, Phys. Rev. B 136, 864 (1964).
[2] W. Kohn and L. J. Sham, Phys. Rev. A 140, 1133 (1965).
[3] P. Elliott, D. Lee, A. Cangi, and K. Burke, Phys. Rev. Lett. 100, 256406 (2008).
[4] P. J. Stephens, F. J. Devlin, C. F. Chabalowski, and M. J. Frisch, J. Phys. Chem. 98, 11623 (1994).
[5] J. P. Perdew, K. Burke, and M. Ernzerhof, Phys. Rev. Lett. 77, 3865 (1996).
[6] J. P. Perdew and A. Ruzsinszky, Int. J. Quant. Chem. 110, 2801 (2010).
[7] A. D. Becke, Phys. Rev. A 38, 3098 (1988).
[8] C. Lee, W. Yang, and R. G. Parr, Phys. Rev. B 37, 785 (1988).
[9] Y. Zhao and D. Truhlar, Theor. Chem. Accounts 120, 215 (2008).
[10] K.-R. Müller, S. Mika, G. Rätsch, K. Tsuda, and B. Schölkopf, IEEE Trans. Neural Network 12, 181 (2001).
[11] O. Ivanciuc, in Reviews in Computational Chemistry, edited by K. Lipkowitz and T. Cundari (Wiley, Hoboken, 2007), Vol. 23, p. 291.
[12] M. Rupp, A. Tkatchenko, K.-R. Müller, and O. A. von Lilienfeld, Phys. Rev. Lett. (to be published).
[13] V. Karasiev, R. Jones, S. Trickey, and F. Harris, in New Developments in Quantum Chemistry, edited by J. Paz and A. Hernández (Research Signpost, Kerala, in press).
[14] R. M. Dreizler and E. K. U. Gross, Density Functional Theory: An Approach to the Quantum Many-Body Problem (Springer, Berlin, 1990).
[15] See e.g. E. Hairer, P. Nørsett, P. Syvert Paul and G. Wanner, Solving ordinary differential equations I: Nonstiff problems (Springer, New York, 1993).
[16] T. Hastie, R. Tibshirani, and J. Friedman, The Elements of Statistical Learning. Data Mining, Inference, and Prediction, 2nd ed. (Springer, New York, 2009).
[17] See Supplemental Material (appended at the end of this manuscript) for information necessary to construct the MLA functional and more detail on the PCA projections and self-consistent densities.
[18] D. Lee, L. A. Constantin, J. P. Perdew, and K. Burke, J. Chem. Phys. 130, 034107 (2009).
[19] C. Rasmussen and C. Williams, Gaussian Processes for Machine Learning (MIT Press, Cambridge, 2006).

Supplementary Information for "Finding Density Functionals with Machine Learning"

The purpose of this supplementary material is to provide the information necessary to reproduce our machine learning approximation (MLA) to the kinetic energy functional exactly, as well as extra details about the nature of the MLA. Table III gives the first 100 potential parameters $a_i$, $b_i$, and $c_i$ to double precision. Along with the equations in the main paper and details of Numerov's method, one should be able to reproduce the corresponding weights $\alpha_j$ of the functional on line 4 of Table I in the main text.

SELF-CONSISTENT DENSITIES

Table I gives the percentage of variance lost, $\left(1 - \sum_{j=1}^{\ell} \lambda_j / \sum_{j=1}^{G} \lambda_j\right) \times 100\%$, in taking the first $\ell$ eigenvalues in the principal component analysis (PCA) projection. For each projection, there are $G = 500$ eigenvalues, but only a few are necessary to represent the local variation in the densities.
For example, with $N = 1$, $m = 30$ and $\ell = 5$, we retain 99.98% of the variance when projecting onto the PCA subspace. In Table II, we coarsely optimize the parameters $m$ and $\ell$ in the PCA projection.

| $N$ \ $\ell$ | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| 1 | 35 | 3 | 0.8 | 0.07 | 0.02 | 0.004 | 0.002 | 0.0003 |
| 2 | 45 | 15 | 3.7 | 0.36 | 0.10 | 0.019 | 0.006 | 0.0015 |
| 3 | 44 | 18 | 4.9 | 0.78 | 0.16 | 0.043 | 0.012 | 0.0037 |
| 4 | 48 | 24 | 9.5 | 1.9 | 0.40 | 0.087 | 0.023 | 0.0063 |

TABLE I. Percent of variance lost in taking the first $\ell$ PCA eigenvalues with $m = 30$. Averaged over 100 PCA projections $P_{m,\ell}(\mathbf{n})$ with randomly chosen centers $\mathbf{n}$ in the test set.

| $m$ \ $\ell$ | 3 | 4 | 5 | 6 |
|---|---|---|---|---|
| 15 | 6.5 | 1.9 | 0.92 | 1.2 |
| 20 | 6.3 | 1.9 | 0.87 | 0.89 |
| 25 | 5.2 | 1.5 | 0.87 | 0.92 |
| 30 | 4.2 | 1.3 | 0.86 | 0.97 |
| 35 | 4.6 | 1.2 | 0.90 | 1.0 |
| 40 | 3.9 | 1.2 | 0.95 | 1.0 |
| 45 | 3.9 | 1.3 | 0.98 | 0.98 |

TABLE II. Mean absolute errors $\overline{\lvert\Delta T\rvert}$, in kcal/mol, on 100 randomly chosen self-consistent densities in the test set, averaged over $N = 1, 2, 3$ and $4$. This coarse optimization gives $m = 30$, $\ell = 5$. Errors are less sensitive to $m$ than $\ell$. For $\ell \ge 7$, the gradient descent search fails to converge in some cases.

NUMEROV'S METHOD

We use Numerov's method [1] to solve Schrödinger's equation for $N$ non-interacting spinless fermions confined to a 1d box:

$$ \left( -\frac{1}{2} \frac{\partial^2}{\partial x^2} + v(x) \right) \psi(x) = \epsilon\, \psi(x), \tag{1} $$

with boundary conditions $\psi(0) = \psi(1) = 0$. We discretize $\psi(x)$ and $v(x)$ on a uniform grid with spacing $\Delta x = 1/(G-1)$: $\psi_j = \psi(j/(G-1))$, $v_j = v(j/(G-1))$ for $j = 0, \ldots, G-1$. Starting from $\psi_0 = 0$ and $\psi_1 = 1$, we calculate the remaining $\psi_j$ iteratively:

$$ \psi_{j+1} = \frac{(2 - 5\Delta x^2 f_j/6)\, \psi_j - (1 + \Delta x^2 f_{j-1}/12)\, \psi_{j-1}}{1 + \Delta x^2 f_{j+1}/12}, \tag{2} $$

where $f_j = 2(\epsilon - v_j)$. To determine the eigenvalues $\epsilon^{(k)}$ and eigenfunctions $\psi^{(k)}$, we find the first $N$ intervals that contain a root of $\psi_{G-1}(\epsilon)$, scanning from $\epsilon = -3\sum_{i=1}^{3} a_i$ in steps of $\Delta\epsilon = 1$.
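The shooting procedure built on Eq. (2) can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' code: the function names are hypothetical, the scan start is passed in as a parameter, each bracketing interval is refined by a fixed number of bisections, and the density and kinetic energy are assembled from the normalized orbitals as $n_j = \sum_k \psi^{(k)}_j{}^2$ and $T = \sum_k \epsilon_k - \sum_j n_j v_j \Delta x$. The demonstration uses a constant potential, for which the exact answers are known.

```python
import math

def numerov_orbital(eps, v, dx):
    """Integrate Eq. (2) from psi_0 = 0, psi_1 = 1; returns the unnormalised psi_j."""
    f = [2.0 * (eps - vj) for vj in v]
    c = dx * dx / 12.0
    psi = [0.0, 1.0]
    for j in range(1, len(v) - 1):
        num = (2.0 - 10.0 * c * f[j]) * psi[j] - (1.0 + c * f[j - 1]) * psi[j - 1]
        psi.append(num / (1.0 + c * f[j + 1]))
    return psi

def find_eigenvalues(v, dx, n_states, e_start, de=1.0, halvings=60):
    """Scan upward from e_start in steps de and bisect each sign change of psi_{G-1}(eps)."""
    def end_value(e):
        return numerov_orbital(e, v, dx)[-1]

    roots = []
    lo, f_lo = e_start, end_value(e_start)
    while len(roots) < n_states:
        hi = lo + de
        f_hi = end_value(hi)
        if f_lo * f_hi < 0.0:                 # a root lies inside (lo, hi)
            a, b, fa = lo, hi, f_lo
            for _ in range(halvings):         # 60 halvings shrink a unit interval to rounding level
                mid = 0.5 * (a + b)
                fm = end_value(mid)
                if fa * fm <= 0.0:
                    b = mid
                else:
                    a, fa = mid, fm
            roots.append(0.5 * (a + b))
        lo, f_lo = hi, f_hi
    return roots

def density_and_ke(v, n_states, e_start):
    """Density on the grid and kinetic energy from the lowest n_states orbitals."""
    dx = 1.0 / (len(v) - 1)
    eigs = find_eigenvalues(v, dx, n_states, e_start)
    n = [0.0] * len(v)
    for e in eigs:
        psi = numerov_orbital(e, v, dx)
        norm = sum(p * p for p in psi) * dx   # normalise so that sum_j psi_j^2 dx = 1
        n = [nj + p * p / norm for nj, p in zip(n, psi)]
    ke = sum(eigs) - sum(nj * vj for nj, vj in zip(n, v)) * dx
    return eigs, n, ke

# Demonstration (hypothetical input): a constant potential v = -3 on G = 201 points, N = 2.
G = 201
v = [-3.0] * G
eigs, n, ke = density_and_ke(v, n_states=2, e_start=-3.0)
print("eigenvalues:", eigs)
print("kinetic energy:", ke, "expected:", 2.5 * math.pi**2)
```

A constant shift of the potential moves every eigenvalue by the same amount but leaves the kinetic energy unchanged, so the result should match the empty-box value $\pi^2/2 + 2\pi^2$ for two particles.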
For each interval $k$, we perform a binary search for the root, reducing the length of the interval to less than $10^{-14}$. The eigenvalue is taken as the midpoint of the interval (the error in $\epsilon_k$ is less than $5 \times 10^{-15}$). After normalizing the eigenfunctions $\psi^{(k)}$, the density and kinetic energy are given by:

$$ n(x_j) = \sum_{k=1}^{N} \psi^{(k)}(x_j)^2, \qquad T = \sum_{k=1}^{N} \epsilon_k - \sum_{j=1}^{G-1} n(x_j)\, v_j\, \Delta x. \tag{3} $$

[1] See e.g. E. Hairer, P. Nørsett, P. Syvert Paul and G. Wanner, Solving ordinary differential equations I: Nonstiff problems (Springer, New York, 1993).

| $j$ | $\alpha_j$ | $a_1$ | $b_1$ | $c_1$ | $a_2$ | $b_2$ | $c_2$ | $a_3$ | $b_3$ | $c_3$ |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 14.34267159730358 | 1.180270056381577 | 0.07195101071267996 | 0.5299345325943254 | 9.01320520984288 | 0.092675711955144 | 0.5396431787675333 | 7.21030205171728 | 0.0740833092851918 | 0.4120341150720884 |
| 2 | 5.175360849250056 | 6.168993733519782 | 0.082993252991643 | 0.5167227315230085 | 8.30495143619142 | 0.0890145060213322 | 0.4169122081529295 | 8.80487613349391 | 0.0965994509648079 | 0.5711057094413816 |
| 3 | 0.835534193378979 | 9.08090433047071 | 0.07685302774688097 | 0.5622686436063149 | 3.911674577648888 | 0.07551832009012722 | 0.5086649497775319 | 1.809709070880952 | 0.095976684502697 | 0.4024220371493795 |
| 4 | -5.373806223157635 | 8.69723148795899 | 0.06384871663070496 | 0.5859464293596277 | 8.67330877015531 | 0.0876928787020957 | 0.4850650257010839 | 4.189229162595343 | 0.04518368077127609 | 0.5819244871260409 |
| 5 | -0.3190730633815225 | 1.880199805158718 | 0.03425540298495952 | 0.4874044587130534 | 3.541514636747403 | 0.0983660639956121 | 0.4515151404198366 | 9.82668062409708 | 0.03264617089733192 | 0.4352642564533179 |
| 6 | 0.4351315890942081 | 3.522977833467916 | 0.07218055206599771 | 0.4489170887611125 | 3.332712157375289 | 0.07852548530734836 | 0.5852941028010116 | 8.14461268619626 | 0.04848929881736392 | 0.5484789894348707 |
| 7 | -5.783654943336363 | 5.286896436167961 | 0.04973324501652048 | 0.4653609943491444 | 7.135152688153955 | 0.0956349249199585 | 0.4408949440211174 | 2.243825016881491 | 0.05865588304821763 | 0.5687481170088626 |
| 8 | 5.857253335721763 | 1.896112874949486 | 0.03260897886438952 | 0.4501033283280557 | 5.885541925485173 | 0.06240932269593134 | 0.5618611754744713 | 6.486450948532671 | 0.07293633775145438 | 0.5695359084287562 |
| 9 | 0.3627795973240678 | 7.516612508143043 | 0.0993184090181399 | 0.5699793290550055 | 5.262683987610352 | 0.0972169632428748 | 0.4726711603381638 | 8.89032075863951 | 0.05632091198742537 | 0.4056148051043074 |
| 10 | 29.95678925033907 | 8.49309248159469 | 0.0647507853307116 | 0.4481376527388373 | 3.62263291813646 | 0.04018659271978259 | 0.5253173968297499 | 1.155756643175955 | 0.0910615024672208 | 0.5870317055827734 |
| 11 | -8.10086522849575 | 7.105439309279282 | 0.0843184446680493 | 0.4223871323591302 | 4.606936643431428 | 0.06326693058647106 | 0.5381643193286885 | 2.832289239384128 | 0.07970384658584057 | 0.4171275695759011 |
| 12 | -1.631527652578485 | 8.53955034289036 | 0.07284904722467074 | 0.5839058233689045 | 1.340024891088614 | 0.0781592051575039 | 0.5383353695983426 | 7.396341414064901 | 0.05945110164542845 | 0.4924043842188951 |
| 13 | 11.0617514474351 | 7.853417776611835 | 0.07070077317679758 | 0.5080787363762617 | 4.408481706562826 | 0.06298528328388489 | 0.4611686076617371 | 7.90542867326401 | 0.03887933278705098 | 0.5872227397532248 |
| 14 | 4.44089329011033 | 6.39698431139937 | 0.05730660668578378 | 0.5964415398755241 | 5.472334579600375 | 0.06570186368826426 | 0.5473728709183074 | 4.715521015256764 | 0.0803629674069167 | 0.5354179520994888 |
| 15 | 2.731504789238804 | 4.228544205571447 | 0.06071899009977901 | 0.4135235776817701 | 2.550224851908171 | 0.06424771468342688 | 0.5203395167113864 | 4.842862964307187 | 0.06333995603635176 | 0.5244121975227667 |
| 16 | -12.35513145514341 | 2.579686666551495 | 0.0664318905447018 | 0.4888525450630048 | 3.764107410086705 | 0.06576720199559433 | 0.5481686020053234 | 1.399253719979948 | 0.07540490302513119 | 0.4655456988965731 |
| 17 | 11.55095248921101 | 1.107700596345898 | 0.05955381913217193 | 0.5359207521608914 | 2.706093393731662 | 0.06561841006617781 | 0.4269114966922994 | 1.501048590877154 | 0.06352034496843162 | 0.4331821043592771 |
| 18 | 0.1703484854064119 | 8.26435624701031 | 0.04624196264776803 | 0.4236237608297072 | 7.025290029097274 | 0.07980750197266955 | 0.4683927184025036 | 2.822503099972245 | 0.05222522416667061 | 0.4810998521431096 |
| 19 | -0.13017855474333 | 8.74124547878586 | 0.0628793144768381 | 0.4736196423196364 | 8.59166251408243 | 0.084738261964188 | 0.4564662508099403 | 8.22877749776242 | 0.03727774324972055 | 0.4697350798193826 |
| 20 | -1.646528531854399 | 4.545173787725101 | 0.03433189693421491 | 0.4919021595234177 | 1.726935494533148 | 0.03135796595505673 | 0.580800322296872 | 2.502191185545461 | 0.0856788809687475 | 0.5909621331611888 |
| 21 | -2.371179702223466 | 1.015154584908506 | 0.07726595708511765 | 0.4008988537732911 | 6.688534202733123 | 0.0869544063742346 | 0.403293222827825 | 6.297133313147086 | 0.03345369328197418 | 0.5433070470097552 |
| 22 | 13.69383242214402 | 3.283098134816701 | 0.06841617803957927 | 0.4304195496937475 | 5.887062834756559 | 0.05152399439496422 | 0.4321995606997443 | 3.207122477750064 | 0.07371808389404321 | 0.4967284534354185 |
| 23 | -2.216419772035322 | 2.056773486230531 | 0.0825699915945694 | 0.479224783903814 | 6.945590707033352 | 0.07408636456892297 | 0.4957750456547108 | 5.846222671659907 | 0.0836452454472081 | 0.4094720200825416 |
| 24 | -6.00076435563604 | 2.03703352205463 | 0.07605517542793995 | 0.4501291515683585 | 1.45256356478288 | 0.07433692379389613 | 0.4233969076404344 | 8.95059302019488 | 0.05703615620837187 | 0.4679023659842935 |
| 25 | 8.16409108667899 | 6.400475835681034 | 0.03425589949139343 | 0.5544095818848655 | 6.086484474478635 | 0.07282844956128038 | 0.4272596897186396 | 5.36207646433345 | 0.05780634160661002 | 0.5503173198658324 |
| 26 | -22.3897314906413 | 3.751807395744921 | 0.0852624107335768 | 0.4453450848668749 | 7.559337237177321 | 0.05840909867970421 | 0.4990223467101435 | 4.729884445483556 | 0.0929218712306724 | 0.5590503374710945 |
| 27 | 5.595799173773367 | 4.522456026868127 | 0.03620201946841309 | 0.5050210060676815 | 1.885091277540955 | 0.06521780385848891 | 0.4961601035955842 | 9.47421945496329 | 0.0556740133396159 | 0.4834045749740091 |
| 28 | 1.94645582566549 | 2.751346713386582 | 0.0948195528516815 | 0.4901204212506473 | 7.863592227266858 | 0.0951797983951825 | 0.5196620049802691 | 9.51608680035275 | 0.07785377315463609 | 0.5806015592262945 |
| 29 | -1.105559822533069 | 5.725277193011525 | 0.03097303116261406 | 0.5511177785567803 | 7.988513342309234 | 0.0928323179868588 | 0.5146415116367569 | 1.101098863639624 | 0.0882939028519791 | 0.5265108634130272 |
| 30 | -3.886381532577754 | 4.95871172966177 | 0.06823254438777603 | 0.5645951313008806 | 9.08135225317634 | 0.04472856905840844 | 0.4299117124064201 | 8.24973637849809 | 0.08854431361856 | 0.5416193648861009 |
| 31 | -1.341930696378109 | 6.451869235304859 | 0.05482804889270922 | 0.5694170176403242 | 4.416924333519995 | 0.04212208612720639 | 0.5897125000677342 | 8.86521100790565 | 0.0866581794842557 | 0.5311744230227635 |
| 32 | -10.16467121571373 | 6.705508349541454 | 0.0934749698077619 | 0.4420693171728495 | 2.858371131410651 | 0.03722557506068529 | 0.4216580500297413 | 6.670274811535954 | 0.0977332470946321 | 0.538756085129185 |
| 33 | -1.107633246744663 | 7.390220519784453 | 0.07970661674181083 | 0.5081940602224835 | 3.815123656821275 | 0.04965529863397879 | 0.497023655525965 | 9.69945245311718 | 0.07039341131023045 | 0.4990744291774679 |
| 34 | 10.39451871866851 | 1.478170300165134 | 0.03602109509210016 | 0.5799113381048154 | 2.105782960173057 | 0.0861928382367878 | 0.4812528298377607 | 7.010226383015251 | 0.03535327789989237 | 0.4969989664577149 |
| 35 | -0.139925361351959 | 4.045190240200832 | 0.03579955552582782 | 0.5678362348598895 | 9.32386837735074 | 0.0858648976559044 | 0.5698752996855582 | 9.81895903799508 | 0.04704020956533079 | 0.5708692337579542 |
| 36 | 3.656124569605117 | 4.944179742822627 | 0.0931916525333049 | 0.4834640277977335 | 9.3847979419491 | 0.0840810063497973 | 0.4090254720704045 | 3.673880603434117 | 0.07158934023489803 | 0.5598196803643543 |
| 37 | 8.06734679112684 | 2.988178201634472 | 0.07482186947515447 | 0.4950144569660614 | 1.910770315838324 | 0.03877152903045122 | 0.5786659568499292 | 4.285706479098184 | 0.05940487550770145 | 0.4844513368813702 |
| 38 | 0.3011566621835722 | 8.78996207364355 | 0.04833452393773747 | 0.459203937360375 | 9.93467757777873 | 0.0973964303071365 | 0.4280349684453508 | 4.931948094146255 | 0.0969410287755626 | 0.4560660353529726 |
| 39 | -0.2469178958324283 | 6.242957525364009 | 0.0989568314234112 | 0.4205662570527381 | 4.73983230004983 | 0.0450110225161678 | 0.559400017887981 | 9.11978947943194 | 0.06189826031162779 | 0.493100819449519 |
| 40 | -5.704905642148452 | 4.447521595114223 | 0.06770523205457797 | 0.5047965897298675 | 3.987888630073714 | 0.03595136615254331 | 0.5929011044925536 | 5.22121823715344 | 0.04029431905467698 | 0.514129411681418 |
| 41 | 0.1425795779687519 | 6.919606258393273 | 0.0821756368975312 | 0.5976799418614426 | 8.04099037018168 | 0.03129262110881438 | 0.4686530881925831 | 3.421695873368174 | 0.05166215779587051 | 0.4208560803818513 |
| 42 | 0.6843702894502789 | 8.27200587323167 | 0.0547013388431661 | 0.4453254786423237 | 7.433883810628654 | 0.04612202343826998 | 0.5269730370279639 | 8.3641619521824 | 0.07996879390804805 | 0.5036755718396144 |
| 43 | -14.06571757369153 | 6.220712308892191 | 0.04765956545437692 | 0.4898959293071353 | 8.72249431241257 | 0.05059967705235588 | 0.4421274052875277 | 2.416276423317733 | 0.07373660930828208 | 0.5403850652535799 |
| 44 | 8.32346109443768 | 9.29716067662943 | 0.0656810603414827 | 0.4960066859011466 | 6.796263459296078 | 0.04037116107097755 | 0.4389885737869158 | 2.206183147865627 | 0.0804910989081056 | 0.5806221323132373 |
| 45 | -5.252317079780442 | 3.424683176707511 | 0.0867529985575643 | 0.4322548560407479 | 6.859255296971943 | 0.0955962752949951 | 0.517620399931194 | 5.826144942819697 | 0.0910408442631135 | 0.4167014408128501 |
| 46 | 6.18641388898792 | 3.789578783769164 | 0.07617326304382499 | 0.5418490045761073 | 1.363177133649232 | 0.03197249245240319 | 0.5284999992906743 | 4.9879001955338 | 0.04126125322920861 | 0.4895167206754735 |
| 47 | 0.4821906326173532 | 1.195769022526768 | 0.04928749082970199 | 0.5286859472378347 | 8.76714063033632 | 0.0870852412295278 | 0.5989638152903285 | 2.489565114307663 | 0.05568685410478115 | 0.5787056957942209 |
| 48 | 5.707663438868804 | 4.058614230578957 | 0.04743875933294599 | 0.4981149890354436 | 1.015171237842736 | 0.085826684646538 | 0.5470349320774566 | 6.343764732282688 | 0.0878769436185823 | 0.5449452367497044 |
| 49 | -0.1857958679022612 | 5.159763617905121 | 0.0850570422587659 | 0.5311491284465588 | 7.238990148015965 | 0.05867048711393652 | 0.5662410781222187 | 8.70600609334284 | 0.04088387548046445 | 0.5305143392409284 |
| 50 | 2.321594443893878 | 3.319181325761367 | 0.05391080867981007 | 0.5914435434600773 | 9.11776045377491 | 0.03583354001918901 | 0.4669413688494417 | 6.117669336479262 | 0.04553014156422459 | 0.4131324263527175 |
| 51 | 2.451069994722774 | 4.005247740100538 | 0.07620861067494449 | 0.5759225433332955 | 6.743529978394792 | 0.0967347280360008 | 0.4980631985926895 | 5.505849306951131 | 0.0971440363586883 | 0.45173467721102 |
| 52 | -0.961350897030818 | 7.710615861039985 | 0.07131617125307138 | 0.4007362522722102 | 3.372061685311945 | 0.04579335436967417 | 0.4845503268496217 | 8.6021995929235 | 0.0634681851083368 | 0.4207242810824543 |
| 53 | -10.67971490671366 | 4.728468083127391 | 0.06509200806253813 | 0.4959400801351127 | 9.81266481664175 | 0.0951346706530719 | 0.5047212015277842 | 3.180268423645401 | 0.06891913385037023 | 0.5758580789792047 |
| 54 | 1.375998039323297 | 2.740626958875014 | 0.06438323836566841 | 0.5077158168564866 | 1.813952930718781 | 0.0845523320869031 | 0.407247659218711 | 1.054506345314588 | 0.0998274789144316 | 0.5956344420441539 |
| 55 | -4.330863793299111 | 3.209443235555092 | 0.05150806767094947 | 0.5932432195310449 | 4.682408324655706 | 0.03641376226612134 | 0.4491940043173043 | 9.57800470422746 | 0.089704223465467 | 0.4702887735348434 |
| 56 | 6.961655278126058 | 3.013659237176324 | 0.0802684211883349 | 0.5733460791404235 | 7.441047648005995 | 0.0924462539370359 | 0.5216671466381739 | 8.15403131934562 | 0.05611934350568269 | 0.4910483719005932 |
| 57 | -5.30540083281513 | 2.088882156026381 | 0.07464091227469428 | 0.4740072756248339 | 4.228694369663863 | 0.03993178348974601 | 0.4402946238148492 | 4.912298433960689 | 0.06528359492264517 | 0.4923580906843156 |
| 58 | 3.395104400983763 | 7.9733680986292 | 0.0911843583955236 | 0.5314007871406556 | 6.375772536276909 | 0.0933026364108379 | 0.447244215655568 | 7.387561983870292 | 0.0977643384454912 | 0.479824590293356 |
| 59 | 1.330224789015745 | 5.105339790874075 | 0.06331718284342962 | 0.5503804706506275 | 9.5740821641842 | 0.07463500644132846 | 0.5814481952546582 | 5.810342341443878 | 0.03327635127803191 | 0.5550216356360829 |
| 60 | -0.2920604955263079 | 6.773414983805557 | 0.07595819905259191 | 0.5210834626114553 | 4.229974804881476 | 0.03532177545633547 | 0.5725615130215063 | 8.88452823254966 | 0.0932985710685446 | 0.5046388124857028 |
| 61 | -26.41840990525562 | 6.41701161854283 | 0.06246944646172664 | 0.4258923441426071 | 1.379491105318868 | 0.06306367927818108 | 0.4531443030507202 | 2.189456974183496 | 0.098078005933584 | 0.5174384564079299 |
| 62 | 6.630393088825135 | 6.476325542442256 | 0.07599435647188182 | 0.4345528705798566 | 6. |
9693638976940 16 0 . 06669302037176108 0 . 51742789439859 5 3 . 96 1825639220393 0 . 092911532 2244988 0 . 4621043570336795 63 3 . 0657488172 27897 1 . 300093759156537 0 . 0674188507559 9173 0 . 4866345116967513 6 . 9420523315902 17 0 . 07107963920756023 0 . 49306727813115 88 7 . 834870626014938 0 . 064461156038 70624 0 . 5367219218216266 64 − 1 . 74310569703 9855 3 . 145607697855832 0 . 0415292992761 004 0 . 5859414343226601 5 . 94529572443504 9 0 . 07414379406386953 0 . 454862947673868 1 1 . 062923665624632 0 . 0763621408033 8054 0 . 4330291254331895 65 − 1 . 63125530290 4965 3 . 121291975208031 0 . 0313356174175 2311 0 . 524510056949801 8 . 03118879837252 0 . 098737 6029412809 0 . 5128559599511593 7 . 572783963 151993 0 . 0936192481138518 0 . 4523240 987335903 66 − 9 . 50982692642 515 7 . 367027947839741 0 . 073981085154265 52 0 . 47 53257600466925 1 . 5891601473 17211 0 . 07398588942349949 0 . 464410581491 0987 1 . 719981611302808 0 . 0498697606 3479617 0 . 5394709698394433 67 − 4 . 49673642106 8983 8 . 56498650586069 0 . 087095015579911 9 0 . 4807004710871501 8 . 85815411069566 0 . 093669 0521145114 0 . 4120764594786555 2 . 422694718 173737 0 . 05651923264880363 0 . 4832351547748815 68 − 1 . 00297791754 3076 9 . 43121814362608 0 . 036898943831728 01 0 . 48 01850915018365 6 . 7624631103 15146 0 . 0977990134875495 0 . 588037729906 9773 8 . 48288777357423 0 . 05983735955 021298 0 . 4837560035001434 69 − 9 . 23106545170 694 8 . 47929696159459 0 . 0547214122545576 1 0 . 494 9989702352598 4 . 776138672418 544 0 . 03835162624659654 0 . 5785166211495 689 4 . 459069855546714 0 . 06892772167 363432 0 . 5908529311844791 70 14 . 99 926304831282 4 . 7089177116583 32 0 . 0907364284065382 0 . 45500333349599 29 5 . 373320800648651 0 . 037399245362 69347 0 . 44 68132406615605 1 . 83166224607 1428 0 . 05825558914206748 0 . 566434199 642786 71 4 . 1891811045 05013 7 . 583635162152007 0 . 0936829708421 792 0 . 4110679099121856 6 . 0900221521915 3 0 . 05895044789139901 0 . 
545125369204061 4 6 . 85 1478307492325 0 . 047638403 34231468 0 . 5010633160850224 72 8 . 3283718813 8842 3 . 414315967033806 0 . 05054048464274 665 0 . 5043307410524706 3 . 07099656728179 9 0 . 06968276328974509 0 . 5702669702356848 5 . 3607838 54112533 0 . 03888883830669 67 0 . 5784155467 566237 73 − 21 . 84200888491582 1 . 625552430388586 0 . 0454623 6831654488 0 . 5426078576324179 4 . 09117692 3293506 0 . 03836885039652 842 0 . 5048795129 49007 4 . 527748284314512 0 . 0597589410 2927981 0 . 4670598506496871 74 6 . 6047389779 45651 3 . 42187897931262 0 . 08944499506971 87 0 . 4941485628974172 4 . 96749741331263 0 . 06031 151123783582 0 . 4119028637456998 4 . 58192760 7849956 0 . 0871948963692279 0 . 417680 5754532423 75 15 . 27 988423246628 6 . 2295384269574 54 0 . 0821521091215863 0 . 41187484492523 15 2 . 377028497509498 0 . 096311093310 6458 0 . 47 46736556808109 5 . 39854554159 4487 0 . 0822574656780643 0 . 525894723 3190487 76 1 . 3771307638 85458 9 . 15176346333775 0 . 04818682488618 507 0 . 574408949666749 2 . 366656226877287 0 . 05059 199769749539 0 . 4731074949729489 4 . 342903653 70739 0 . 07492904049493838 0 . 403865637 5892337 77 − 0 . 77569593514 84244 8 . 1525112801581 0 . 0812975344211981 0 . 511285375196410 7 4 . 530310672134927 0 . 0665291248613 4806 0 . 594 6245231853162 1 . 56886062625 4933 0 . 04256882025605258 0 . 5491027801 043556 78 − 9 . 50432792804 336 4 . 670517118870347 0 . 043982677995534 92 0 . 45 19472673718893 2 . 1552761874 76066 0 . 0489411436180854 0 . 569475627865 2033 1 . 947130206816878 0 . 0632130904 1201674 0 . 4122312147733568 79 3 . 7541371230 31758 6 . 801389212739803 0 . 0700330934262 3426 0 . 5129214842481318 9 . 9243391014391 3 0 . 0931392820982872 0 . 410401649514640 4 5 . 23 036608102518 0 . 0919529182 014262 0 . 4465294974738288 80 − 4 . 49980465406 7136 4 . 406584351091798 0 . 0970211159011 626 0 . 4772975938521527 2 . 27076443184844 1 0 . 0853562757122116 0 . 466959692098883 9 4 . 42557413334857 0 . 
05178005094737 99 0 . 5645191809 121128 81 − 13 . 9999061688152 8 . 30408622139241 0 . 0903948738093222 0 . 505320958 3744364 2 . 454354187669313 0 . 0857681 690400504 0 . 576877467653029 7 . 16728903797 9035 0 . 0831206320171505 0 . 422133968 0792799 82 − 4 . 87840564976 4898 7 . 975675712689654 0 . 0809649515974 139 0 . 5831990158889571 1 . 69637649019113 5 0 . 06783046119270637 0 . 578856072348660 3 5 . 118512758012828 0 . 0655461700651 2321 0 . 5571586558825978 83 − 8 . 70611662690 158 3 . 078380132262833 0 . 063276267522935 22 0 . 40 17988981679418 2 . 1386292615 88504 0 . 05374733302715019 0 . 543244322989 1937 7 . 596013793576791 0 . 0999924467 044729 0 . 5115245622576321 84 3 . 5786546621 13496 4 . 294915349281119 0 . 0664706641817 281 0 . 5566289165852036 3 . 3875164208975 19 0 . 06654228293431248 0 . 43049354984948 62 8 . 43232828156106 0 . 06121769693920 587 0 . 552707 6848906498 85 0 . 0423259541 153967 9 . 56936665353494 0 . 06825963823641 594 0 . 5532353068476618 7 . 233942984150266 0 . 0773 6032999103362 0 . 4274316541541941 8 . 96022693 099 0 . 03996347733060968 0 . 5091073130786462 86 − 6 . 38744603111 9123 5 . 052123646744015 0 . 0530697345371 5137 0 . 4657297418468141 1 . 26167033800978 4 0 . 05392235260994765 0 . 435557134407466 6 9 . 81 884128475822 0 . 0434164193 614162 0 . 546118275633825 87 3 . 0296937112 82459 1 . 767169254935782 0 . 0386273054889 003 0 . 4174202422881018 9 . 9918988283659 4 0 . 0347107535224807 0 . 531658797486970 9 4 . 79 4411329362948 0 . 04866392 717198935 0 . 5202815575942617 88 − 0 . 79103110586 4576 8 . 38082427065573 0 . 055212790770486 2 0 . 5821800194923659 3 . 864226559651998 0 . 0546 4216708052787 0 . 5051543239785165 4 . 44506409 6761643 0 . 03386907179361 738 0 . 4659081 177469465 89 2 . 9708368173 8158 9 . 22706363472046 0 . 069121403865498 36 0 . 56 30023378185795 2 . 92988019657 0098 0 . 07966659968684009 0 . 499226363393 5188 6 . 920427033384797 0 . 0312340749 1202272 0 . 4077156601519876 90 − 7 . 
11077260491 7624 3 . 106633667560681 0 . 0487399002538 7701 0 . 5697049535893381 8 . 30700777463754 0 . 05249 501630989304 0 . 5669783762910612 1 . 13457393 933062 0 . 07200760153994357 0 . 444672072474744 91 − 7 . 16230508070 3513 5 . 387619660361665 0 . 0458150038587 5453 0 . 5197835066282974 8 . 22551991773337 0 . 08312 26693907787 0 . 4274987667560087 6 . 82894852 9150043 0 . 05943349593138 421 0 . 5115861 497137036 92 1 . 7203684064 82304 6 . 780548897314363 0 . 0870012111933 092 0 . 5984908484842784 6 . 5875712774126 53 0 . 05694105237128053 0 . 52266978253427 88 5 . 99005641944593 0 . 07837921322316 456 0 . 466823 6040752577 93 0 . 1494884045 649762 8 . 09818524171748 0 . 09765952559284 96 0 . 4848535596042637 2 . 15348378575717 6 0 . 04734211110674212 0 . 4077222220507315 7 . 1431107 62448606 0 . 04682210332386 052 0 . 509084 643832387 94 − 0 . 96282109703 4532 7 . 260437643310942 0 . 0508339657527 9076 0 . 54745303715986 8 . 38162990306018 0 . 05791050943688 269 0 . 5794395625 497382 2 . 722302743095399 0 . 08374461 5781603 0 . 5395428417251624 95 0 . 9351220450 36145 2 . 31788301454997 0 . 05976249487823 031 0 . 5809204376238206 8 . 19172407354263 0 . 06806 778195768599 0 . 5479374417815378 9 . 939903362 25375 0 . 04266977230181523 0 . 595990170 5863791 96 0 . 4328277236 540677 4 . 113414259206836 0 . 0798840451334 0022 0 . 5262079910925557 7 . 4369941913248 23 0 . 07641699196144898 0 . 431628208551548 6 9 . 59671807016201 0 . 07198966517052 108 0 . 4563024 970288369 97 0 . 8530435160 35702 3 . 229317247568396 0 . 0466428830896 7379 0 . 4916641780174803 9 . 1953361514258 1 0 . 0944216012526871 0 . 579263841357723 2 6 . 98 0966479991427 0 . 076473121 61060935 0 . 5407763967437385 98 − 3 . 51619187124 8886 9 . 20769534239692 0 . 072145996079658 83 0 . 41 11312070946356 7 . 5687256468 32963 0 . 0806902033322297 0 . 495204295074 3076 8 . 94919747461644 0 . 04263443777 290076 0 . 5735261960865083 99 19 . 67 243760549639 2 . 2706635908550 83 0 . 
0971170179967765 0 . 46130179679872 72 8 . 22480560472966 0 . 05360247725612 82 0 . 4745303011865 644 3 . 970009703387312 0 . 09820323492 57953 0 . 5029128207513967 100 1 . 54 4060514744405 4 . 017102041449 901 0 . 04437090645089548 0 . 5073655615301 741 3 . 724036679477685 0 . 07622772645 899346 0 . 4368882837991222 5 . 350822342112657 0 . 0765 9119397629786 0 . 4056243763991 382 T ABLE I II . All th e necessary informatio n to construct our MLA, trained from 100 densities with N = 1 on a grid of 500 p oin ts, with λ = 12 × 10 14 and σ = 43. F or purp os es of saving space, we do not list these densities. They ma y b e reconstructed from these potentials via Numero v’s metho d.
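The reconstruction mentioned in the caption can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes a hard-wall box on 0 ≤ x ≤ 1 in atomic units, a potential built as a sum of three Gaussian dips, and that each triple in a table row is ordered (depth, width, center), which is inferred here only from the value ranges. The function names `gaussian_wells`, `numerov_shoot`, and `ground_state_density` are hypothetical, and the bisection on the node count is one common way to pair Numerov's recurrence with an eigenvalue search.

```python
import numpy as np


def gaussian_wells(x, wells):
    """Return v(x) = -sum_i depth_i * exp(-(x - center_i)^2 / (2 * width_i^2)).

    ASSUMPTION: `wells` holds (depth, width, center) triples, taken to match
    the order of each triple in a table row (inferred from the value ranges).
    """
    v = np.zeros_like(x)
    for depth, width, center in wells:
        v -= depth * np.exp(-((x - center) ** 2) / (2.0 * width ** 2))
    return v


def numerov_shoot(v, energy, h):
    """Integrate psi'' = -2 (E - v) psi from the left wall with Numerov's recurrence."""
    g = 2.0 * (energy - v)
    w = 1.0 + h * h * g / 12.0
    psi = np.zeros_like(v)
    psi[1] = 1.0  # arbitrary scale; psi[0] = 0 enforces the hard wall
    for i in range(1, len(v) - 1):
        psi[i + 1] = (2.0 * (1.0 - 5.0 * h * h * g[i] / 12.0) * psi[i]
                      - w[i - 1] * psi[i - 1]) / w[i + 1]
    return psi


def ground_state_density(v, length=1.0, n_bisect=60):
    """Ground-state energy and normalized density for N = 1 in a hard-wall box."""
    h = length / (len(v) - 1)
    lo = v.min()  # below the ground state: the shot solution has no node
    hi = v.max() + 0.5 * (np.pi / length) ** 2 + 1.0  # above it: at least one node
    for _ in range(n_bisect):
        mid = 0.5 * (lo + hi)
        psi = numerov_shoot(v, mid, h)
        nodes = np.count_nonzero(psi[1:-1] * psi[2:] < 0.0)
        if nodes == 0:
            lo = mid
        else:
            hi = mid
    energy = 0.5 * (lo + hi)
    psi = numerov_shoot(v, energy, h)
    psi[-1] = 0.0  # enforce the right-hand wall after convergence
    density = psi ** 2
    density /= np.sum(0.5 * (density[1:] + density[:-1])) * h  # trapezoid rule
    return energy, density


# Usage with the three triples of row 100 of the table, on a grid of 500 points.
x = np.linspace(0.0, 1.0, 500)
wells_100 = [
    (4.017102041449901, 0.04437090645089548, 0.5073655615301741),
    (3.724036679477685, 0.07622772645899346, 0.4368882837991222),
    (5.350822342112657, 0.07659119397629786, 0.4056243763991382),
]
energy_100, density_100 = ground_state_density(gaussian_wells(x, wells_100))
```

If the column order assumed above is wrong, only the tuple layout in `gaussian_wells` needs to change; the Numerov integration itself does not depend on how the potential is parameterized.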
