Maximum Joint Entropy and Information-Based Collaboration of Automated Learning Machines

We are working to develop automated intelligent agents, which can act and react as learning machines with minimal human intervention. To accomplish this, an intelligent agent is viewed as a question-asking machine, which is designed by coupling the processes of inference and inquiry to form a model-based learning unit. In order to select maximally-informative queries, the intelligent agent needs to be able to compute the relevance of a question. This is accomplished by employing the inquiry calculus, which is dual to the probability calculus, and extends information theory by explicitly requiring context. Here, we consider the interaction between two question-asking intelligent agents, and note that there is a potential information redundancy with respect to the two questions that the agents may choose to pose. We show that the information redundancy is minimized by maximizing the joint entropy of the questions, which simultaneously maximizes the relevance of each question while minimizing the mutual information between them. Maximum joint entropy is therefore an important principle of information-based collaboration, which enables intelligent agents to efficiently learn together.

💡 Research Summary

The paper presents a principled framework for coordinating multiple autonomous learning agents that operate as “question‑asking machines.” Building on the recently introduced inquiry calculus, which is dual to the probability calculus, the authors model the space of possible questions as a lattice of down‑sets, where each question corresponds to the set of statements that would answer it. Within this lattice, the relevance of a question to the central issue (the question of which of all possible system states holds) is quantified by the Shannon entropy of the probability partition induced by that question. In other words, a question’s relevance is proportional to the uncertainty it resolves about the system.

When two agents independently select questions, the information they obtain can overlap, leading to redundancy. This redundancy is captured by the mutual information between the two questions. The authors prove that minimizing this redundancy while preserving the informativeness of each question is equivalent to maximizing the joint entropy of the pair of questions. Joint entropy, defined over the joint distribution of the two agents’ possible answers, equals the sum of the individual entropies minus their mutual information. Therefore, maximizing joint entropy simultaneously keeps each question highly relevant (high individual entropy) and drives the mutual information toward zero, ensuring that the agents acquire complementary information.
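The relationship described above can be written compactly. The symbols $Q_1$ and $Q_2$ for the two agents' questions, and $a_1$, $a_2$ for their possible answers, are notation assumed here for illustration and may differ from the paper's own:

$$
H(Q_1, Q_2) = -\sum_{a_1, a_2} p(a_1, a_2)\,\log p(a_1, a_2) = H(Q_1) + H(Q_2) - I(Q_1; Q_2)
$$

Because $I(Q_1; Q_2) \ge 0$, the joint entropy is bounded by $H(Q_1, Q_2) \le H(Q_1) + H(Q_2)$, with equality exactly when the two answers are statistically independent. Raising the joint entropy therefore rewards large individual entropies and penalizes overlap between the questions.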

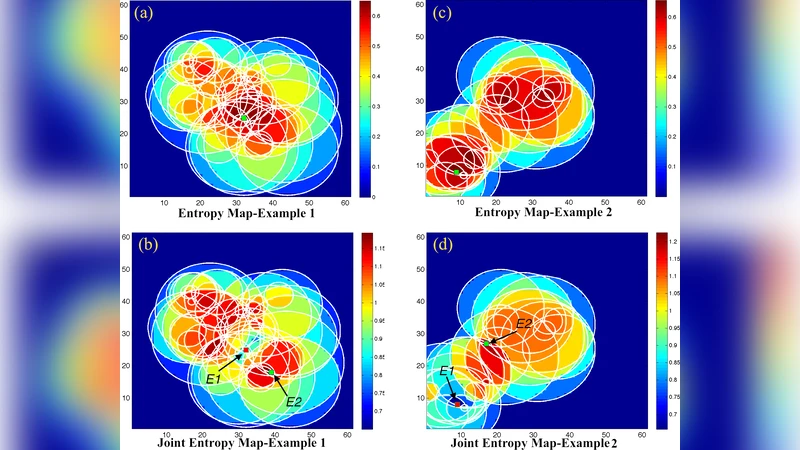

To demonstrate the theory, the authors implement a concrete experimental scenario using two LEGO Mindstorms NXT robots tasked with locating a white circle on a dark background. The circle is parameterized by its center (x₀, y₀) and radius r₀. Each robot can take a light‑intensity measurement at a chosen (x, y) location, yielding a binary outcome (white/black). From a posterior distribution over the circle parameters (obtained via Bayesian inference on previous measurements), the robots generate a set of sampled circles. For any candidate measurement location, they predict the proportion of sampled circles that would produce a white reading, thus constructing a probability distribution over the binary outcome. The entropy of this distribution provides a scalar measure of how informative that single measurement would be.
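The single-measurement entropy described above can be sketched in a few lines. This is a minimal illustration rather than the authors' code: the function names, the inside-the-circle test for a white reading, and the sample values are all assumptions made for the example, and sensor noise is ignored.

```python
import math

def predicts_white(circle, x, y):
    """True if (x, y) lies inside the circle (x0, y0, r0), i.e. a white reading.
    Assumes an idealized, noise-free sensor."""
    x0, y0, r0 = circle
    return (x - x0) ** 2 + (y - y0) ** 2 <= r0 ** 2

def binary_entropy(p):
    """Shannon entropy in bits of a binary outcome with P(white) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def measurement_entropy(samples, x, y):
    """Entropy of the predicted white/black reading at (x, y),
    using the fraction of sampled circles that contain the point."""
    p_white = sum(predicts_white(c, x, y) for c in samples) / len(samples)
    return binary_entropy(p_white)

# Hypothetical posterior samples (x0, y0, r0); illustrative values only.
samples = [(0.0, 0.0, 1.0), (0.5, 0.0, 1.0), (1.0, 0.0, 1.0), (1.5, 0.0, 1.0)]

print(measurement_entropy(samples, -0.25, 0.0))  # half the circles cover this point
print(measurement_entropy(samples, 10.0, 10.0))  # no circle covers this point
```

A location covered by half of the sampled circles gives the maximum of 1 bit, while a location that every sample agrees on gives 0 bits, so measuring there would teach the robot nothing.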

The first robot selects the location E₁ that maximizes this single‑measurement entropy, i.e., the point where the predicted outcome is most uncertain. The second robot then evaluates the joint entropy of the pair (E₁, E₂) for each candidate E₂ location. The joint entropy is computed from the four‑way partition of the sampled circles into subsets a, b, c, d, corresponding to the four possible outcome pairs (white‑white, white‑black, black‑white, black‑black). By scanning the space of E₂ positions while holding E₁ fixed, the authors produce a two‑dimensional slice of the four‑dimensional joint‑entropy surface. The optimal E₂ is the location that maximizes this joint entropy, which empirically corresponds to regions of high individual entropy that are also minimally correlated with the outcome at E₁.
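The second robot's selection step can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: the helper names, the noise-free inside-the-circle test, the sample circles, and the short candidate list (standing in for a full scan over E₂ positions) are all hypothetical.

```python
import math

def inside(circle, point):
    """True if the point lies inside the circle (x0, y0, r0), i.e. reads white."""
    x0, y0, r0 = circle
    x, y = point
    return (x - x0) ** 2 + (y - y0) ** 2 <= r0 ** 2

def entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)

def joint_entropy(samples, e1, e2):
    """Joint entropy of the outcome pair at (e1, e2), from the four-way
    partition of sampled circles: white-white, white-black,
    black-white, black-black."""
    counts = {(True, True): 0, (True, False): 0,
              (False, True): 0, (False, False): 0}
    for c in samples:
        counts[(inside(c, e1), inside(c, e2))] += 1
    n = len(samples)
    return entropy(k / n for k in counts.values())

def best_second_location(samples, e1, candidates):
    """Candidate e2 that maximizes the joint entropy with e1 held fixed."""
    return max(candidates, key=lambda e2: joint_entropy(samples, e1, e2))

# Hypothetical posterior samples (x0, y0, r0); illustrative values only.
samples = [(0.0, 0.0, 1.2), (2.0, 0.0, 1.2), (0.0, 2.0, 1.2), (2.0, 2.0, 1.2)]
e1 = (1.0, 0.0)
candidates = [(1.0, 0.0), (1.0, 2.0), (0.0, 1.0)]

for e2 in candidates:
    print(e2, joint_entropy(samples, e1, e2))
print("best E2:", best_second_location(samples, e1, candidates))
```

In this toy setup all three candidates have a single-measurement entropy of 1 bit, yet only (0.0, 1.0) is uncorrelated with E₁ and reaches the 2-bit maximum. Repeating E₁, or choosing the perfectly anti-correlated point (1.0, 2.0), leaves the joint entropy at 1 bit, so ranking by individual entropy alone could not tell the three apart.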

Simulation results are presented for two regimes: one where the posterior samples of circles are highly correlated (i.e., the circle’s position and size are tightly constrained) and another where the samples are more dispersed. In the highly correlated case, the joint‑entropy map shows that the optimal second measurement tends to lie near the first measurement but in a direction that reduces redundancy. In the less correlated case, the optimal second measurement is farther away, reflecting the greater independence of the two measurements. In both scenarios, the selected E₂ locations align with high‑entropy regions while avoiding areas where the mutual information with E₁ is large, confirming that the joint‑entropy maximization criterion effectively balances relevance and independence.

The authors conclude that maximizing joint entropy provides a robust, information‑theoretic principle for collaborative experimental design among autonomous agents. By framing question selection within the inquiry calculus, they extend classical Bayesian experimental design to multi‑agent settings, offering a systematic method to allocate sensing resources, reduce redundant data acquisition, and accelerate learning. Potential applications include coordinated robotic exploration, distributed sensor networks, and any domain where multiple intelligent systems must jointly decide what data to collect in order to efficiently reduce uncertainty about a shared model.
