A Nearly Linear-Time PTAS for Explicit Fractional Packing and Covering Linear Programs

We give an approximation algorithm for packing and covering linear programs (linear programs with non-negative coefficients). Given a constraint matrix with n non-zeros, r rows, and c columns, the algorithm computes feasible primal and dual solutions…

Authors: Christos Koufogiannakis, Neal E. Young

A Nearly Linear-Time PT AS for Explicit F ractional P ac king and Co vering Linea r Programs Christos Koufogiannakis · Neal E. Y oung Abstract W e giv e an approximat ion algo r ithm fo r fracti onal pa cking and covering linea r programs (linea r progra ms with non-negat ive coefficients). Given a constr a int matri x w ith n no n-zeros, r r ows, and c col umns, the algori thm (w ith high probability) comput es feasible primal a nd dual soluti ons whose cost s are wi thin a factor o f 1 + ε o f opt (t he opt imal cost) in time O (( r + c ) log( n ) /ε 2 + n ). 1 1 In tro duction A p acking problem i s a linear pr ogram of the form ma x { a · x : M x ≤ b, x ∈ P } , where t he en tr ies of the constrai nt mat rix M ar e non-nega tive and P is a conv ex pol ytop e a dmitt ing som e form of optimi z a tion oracle. A c overing probl em is of the form min { a · ˆ x : M ˆ x ≥ b, ˆ x ∈ P } . This pap er fo cuses on explicitly given pa cking and cov eri ng problems, that is, ma x { a · x : M x ≤ b, x ≥ 0 } and mi n { a · ˆ x : M ˆ x ≥ b, ˆ x ≥ 0 } , where the p olyto p e P is just t he p osi tive orthant. Expl icitl y g iven packing and cov ering are imp ort ant sp ecia l cases o f linear prog r amming , i ncluding, for exam ple, f ractio nal set co ver, multicommo di ty flow problems with given paths, tw o-player zero-sum matrix games wit h non-nega tive pay offs, and v aria nts of these problems. The paper gi ves a (1 + ε )-a pproximatio n alg orithm — that i s, an a lgori thm that returns feasi ble pri mal and dual soluti ons w hose costs are wit hin a given fact or 1 + ε of opt . With high pro ba bility , it r uns in time O ( ( r + c ) lo g( n ) /ε 2 + n ), where n – the input size – is t he num b er of no n-zero entries in t he co nstrai nt matri x and r + c is the n umber of rows plus col umns ( i .e., co nstraints plus v aria b l es). F or dense i nstances, r + c can b e a s smal l as O ( √ n ). F or modera tely dense instances – as long as r + c = o ( n/ log n ) – t he 1 /ε 2 factor multiplies a sub-linear term. General l y , the time is l inear in the i nput size n as long as ε ≥ Ω ( p ( r + c ) lo g( n ) /n ). 1.1 Related work The algo rithm is a La grangi an-rela x ation ( a.k.a . price-direct ed decom p ositio n, multiplicat ive weights) a lgo- rithm. Broadly , these alg orith m s work by r epl acing a set of hard constra ints by a sum of smo oth p enalti es, one p er co nstrai nt, and then itera tively augm enting a solut ion while tra ding off the increa se in the ob jective agai n st th e i ncrease in the sum o f p enalt ies. Here th e p ena lties ar e expo nential in the const raint violati on, and, i n eac h iterati on, only the first-o rder (l inear) approxima tion i s used to estimate t he change i n the sum of p enal ties. Such algori thms, which can pr ovide useful alter n a tives to interior-p oi nt a nd Simplex metho ds, have a long history and a large lit erature. Bienst o ck gives an implem en t ation -o riented, op era t ions-resea rch p er- sp ect ive [2]. Arora et al. discuss them from a comput er-science p ersp ect i ve, highl ighting co nnections to other fields such as l earning theo ry [1]. An overview by T odd pla ces them in the co ntext of general li n ea r progra mming [18]. Departmen t of Computer Science and Engineering, Unive r sity of Califor nia, Ri v ersi de. The first author wo uld like to thank the Greek State Sc holarship F oundation (IKY). The second author’s research w as partially supp orted by NSF gran ts 0626912, 0729071, and 1117954. 1 Accepted to Al gorithmica, 2013. The conference version of this pap er was “Beating Simplex for fractional pac king and co vering linear programs” [13]. The running times of algo rithms of this typ e i ncrease as the approximati on para meter ε gets small. F or algo r ithms t hat rely on linea r appr oximation of t he p enalty changes in ea ch iterat ion, t he runni ng times grow at least quadrati cally in 1 /ε ( times a p o lynom i al i n the o ther par ameters) . F or expli citly g i ven packing and cov ering, t he f astest prev i ous such algor ithm that we k now of runs i n t ime O (( r + c )¯ c log( n ) /ε 2 ), w here ¯ c i s the maxi mum number o f columns in which a ny v ariable app ears [2 1]. Tha t alg orithm appl ies t o mixe d packing a nd co vering — a more g eneral problem. Using some of the tec hni ques i n this pa p er , one can improve that alg o rithm to run i n time O ( n log ( n ) /ε 2 ) (a n unpublished r esult), which i s slower than t he algo r ithm here fo r dense pro blems. T echnically , the st arting p oint fo r the work her e is a rema r k able alg orithm by Grig oriadi s a nd Khachiyan for t he following specia l ca s e of packing and cov ering [ 9]. The input is a tw o-player zero-sum matrix g ame with pay offs in [ − 1 , 1]. The ou t put is a pa ir of mi xed st rategi es th a t guarantee a n ex pect ed pay o ff wit hin an additive ε of op t imal . (Not e that achieving addit ive error ε is, how ever, easi er than ac hi eving m ult iplica tive error 1 + ε .) The alg o rithm com putes t he desired out put i n O ( ( r + c ) log ( n ) /ε 2 ) tim e. This i s remark able in that, for d ens e matri ces, it is sub-line ar in the i nput size n = Θ ( r c ). 2 (F o r a machine-learning al gorit hm closely r el ated to Gr igori adis a nd Khachiy an’s result , see [5, 6].) W e also use th e idea of non-unif orm incr ements from al gorit hms b y Garg and K¨ onem a nn [8, 12, 7]. Dep endenc e on ε . Building on work b y N est erov (e. g., [16, 17]) , recent alg orithm s for packing and cov- ering pro blems hav e reduced the dep end ence o n 1 /ε from qu a drati c t o linea r, at the exp ense o f i ncreased depend ence on other parameter s. Ro ughly , t hese alg orit hm s b etter approximat e the change in t he p enalty function in each itera tion, lea ding to fewer iterat ions but more t ime p er i terati on (alt hough no t to the same extent as interior-p oint a lgor i thms). F or exa m ple, Biensto c k and Iyengar give an algori thm for c o ncurrent multicommo di ty flow tha t solves O ∗ ( ε − 1 k 1 . 5 | V | 0 . 5 ) sho r test-pat h probl ems, where k is the number of com- mo diti es and | V | is the n umber o f vertices [3]. C hudak and Eleut erio contin ue t h i s directi o n — for exa mple, they g ive an algori thm f or fr action a l set cover running i n worst-ca se t ime O ∗ ( c 1 . 5 ( r + c ) /ε + c 2 r ) [4]. Comp arison to Si mplex and Interior-Point metho ds. Currently , t he most co mmonl y used alg orithm s f or solving linear prog rams in practice a re Sim plex and interior-p oint metho d s. Regarding Sim plex a lgori thms, commerci al implementati o ns a lgori thms use many carefu l ly tuned heuristi cs (e.g. pre-solvers a nd heuristics for m aintaining sparsi ty a nd n umeri cal sta bility), enabling t hem t o qui ckly solve many practi cal problems with m i llio ns of non-zeros to optim ality . But, as i s well known, their w orst -case r unning times are expo- nential. A l so, for b ot h Sim plex and in ter ior-p oint metho ds, running times can v a ry w idely dep ending on the struct ure of the underly i ng problem. ( A detail ed ana lysis of Sim plex and interior-p oi nt runni ng times is ou t side the scop e of t his pa per .) These issues ma ke rig orous compa r ison b etw een the v ari ous alg orithm s difficult . Still , here is a m eta-arg ument tha t may a llow som e meaningful compar ison. F o cus on “ square” con- straint m atrices, where r = Θ ( c ). Note that at a minimum, a ny Sim plex i mplementatio n m ust iden ti fy a non-triv ial basic feasible solution . Li kewise, interior-p oint algo rithms require ( in each i terati on) a Cho lesky decomp osit ion or other matr ix f a ctori zation . Thus, ess ential l y , b o th m et ho ds req uire impl icitl y (at l east) solving an r × r syst em o f l inear equa tions. Solving such a system is a rel atively w el l -understo o d pro blem, bo th in theory a nd in practice, and (barring special structure) takes Ω ( r 3 ) time, or Ω ( r 2 . 8 ) time using Strassen’s al g orit h m . Thus, on “squ a re” instances, Sim plex and interior-p o int al gori t hms sho uld hav e run- ning ti mes growing a t least with Ω ( r 2 . 8 ) ( a nd probabl y mo re). This rea soning appl ies even if Si mplex or interior-p oint metho ds are term i nated ear l y so as to find appr oximately optim al sol ut ions. In co mpari son, o n “sq uare” ma trices, the a lgori thm in thi s pap er takes ti me O ( n + r lo g( r ) /ε 2 ) w here n = O ( r 2 ) or l ess. If t he met a-argum ent ho l ds, then, for appl icati ons where (1 + ε ) -approximate so lutio ns suffice fo r some fix ed and m o derate ε (say , ε ≈ 1%), for very l arge instan ces (say , r ≥ 1 0 4 ), the a lgori thm here should b e orders of magnit ude faster t han Sim plex o r interior-p o int algo rithms. This co nc l usion i s consistent w ith exp eriments rep orted her e, i n which t he running t imes of Simplex a nd interior-p oint a l gori thms on l arge ra ndom instances exceed Ω ( r 2 . 8 ). Concr etely , w ith ε = 1 %, the al gori t hm here is fast er when r is on the order o f 10 3 , wi th a sup er-linear (in r ) sp eed-up fo r la r ger r . 2 The problem st udied here, pac king and cov ering, can be r educed to Grigori adis and K hachiy an’s problem. This reduction leads to an O (( r + c ) log( n )( U opt ) 2 /ε 2 )-time algorithm to find a (1 + ε )-approximate pac king/co vering solution, where U . = max ij M ij / ( b i a j ). A pre-pro cessing s tep [ 14, § 2.1] can bound U , leading to a running time bound of O (( r + c ) log( n ) mi n( r, c ) 4 /ε 4 ). 2 1.2 T echnical ro admap Broadly , the running ti mes o f itera tive o ptimi zatio n alg orithm s are determined by (1 ) the n umber of iter- atio n s a nd (2 ) the time p er itera tion. V arious algo rithm s trade off these tw o fact o rs in different w ays. The technical appro ach taken here is t o accept a high num b er of iterat ions — ( r + c ) log( n ) /ε 2 , a typical b ound for an al gorit hm o f th i s class (see e.g. [11] f or fur ther discussion) — and to fo cus o n impl ementing each iterat ion as qui ckly as p ossi ble ( ideally in const ant a morti zed time) . Coupling. Grigo riadis a nd Khachiy an’ s sub-li near tim e a lgori thm uses an unusual tec hni q ue of c oupling primal and dual algor i thms that is cri tical to the algori thm h er e. As a st arting po i nt, to explain coupling, consider t he following “s l ow” coupl ing a lgori thm. (Throug hout, assume w ithout l oss o f g enerality by scal i ng that a j = b i = 1 for all i, j . ) T he a lgori thm starts wi th al l-zero pr imal an d dua l solut ions, x and ˆ x , resp ectively . In eac h i t erati o n, i t increases one co o rdinat e x j of t he prima l so lution x by 1, and i ncreases one co o rdinate ˆ x i of t he dual soluti on ˆ x b y 1. The index j of the prima l v ariabl e to increment i s chosen randoml y from a di s t ributi on ˆ p that dep ends on the current du al solut ion. Likewise, t he index i of the dual v ariable to increm ent i s chosen rando m ly fro m a distri bution p that dep ends on the cur rent primal soluti on. The distribut ion ˆ p is concentrated o n the i nd i ces of dua l constr a ints M T ˆ x that are “ m ost vio lated” by ˆ x . Likewise, t he dist ributio n p is concentrated on the i ndices o f pri mal constr aints M x that are “mo st viola ted” by x . Speci fic a lly , p i is prop orti onal to (1 + ε ) M i x , whi le ˆ p j is pro p o rtio nal to ( 1 − ε ) M T j ˆ x . 3 Lemma 1 in the next section proves that this al gori t hm achiev es the desired approximat ion gua rantee. Here, br oadly , i s why coupli ng help s reduce the t ime p er itera tion in compar ison to the sta ndard approach. The standa r d ap pr oach is to i ncrement the pri mal v ariable corresp o nding t o a dual const raint that is “most viola ted” by p — tha t is, to incr ement x j ′ where j ′ (approximat ely) m inimi zes M T j ′ p ( for p defined a s ab ove). This requir es at a minimum mai ntaini ng the vector M T p . Recal l that p i is a f u nc t ion of M i x . Thus, a change in one pr imal v ariabl e x j ′ changes many entries in the vector p , but even mor e entries in M T p . (In the r × c biparti te graph G = ([ r ] , [ c ] , E ) wher e E = { ( i, j ) : M ij 6 = 0 } , the neigh b ors of j ′ change in p , w hile all neighb ors of those neighb ors change in M T p .) Thus, m aintaining M T p is cost ly . In com parison, to implement coupling , it is enough to mai ntain the v ecto rs p a nd ˆ p . T he further pro duct M T p is not needed (nor is M ˆ p ). This i s the basi c reason why coupling helps reduce the tim e per iterat ion. Non-uniform incr ements. Th e next m ain technique, used t o make more pro gress p er it eratio n, i s Garg and K¨ onemann’s non-uniform incr ements [ 8, 1 2, 7]. Inst ead of i ncrementing the pr imal a nd dual v ariables by a uni form am ount each ti me (as describ ed ab ove), the algori thm incr ements the c hosen primal and dual v ariables x j ′ and ˆ x i ′ by an amount δ i ′ j ′ chosen sma ll eno ugh so t hat the l eft-hand side (LHS) o f ea ch constrai nt ( each M i x or M T j ˆ x ) increases by at most 1 (so that t he ana lysis still holds ) , but lar ge enough so that the L HS of at le ast one suc h constrai n t incr eases b y at least 1 / 4 . Thi s is sm a ll enoug h to al l ow the same corr ectness pro of to g o through, but i s larg e enoug h to gua rantee a sma ll num b er of itera tions. The num b er of it eratio ns is b ounded by ( roughly ) the f ollowing argum ent: each i terati on in cr eases the LHS of some const raint by 1 / 4, but, during the course of t he a lgori thm, no LHS ever exceeds N ≈ log ( n ) /ε 2 . (The particul ar N is chosen w i th f oresight so that the rela tive error works out t o 1 + ε .) Th us, the number of iterat ions is O (( r + c ) N ) = O ( ( r + c ) log( n ) /ε 2 ). Using slow ly changing estimates of M x and M T ˆ x . In fact, we w i ll achiev e this b ound not just fo r t he num b er of iter ations , but als o for the total work done ( outside of pre- and p ost-pro cessing). The k ey to this is the t hird mai n technique. Most o f the work done by the al gori thm as describ ed so far would be in maintaining the vectors M x and M T ˆ x and the di stributi ons p and ˆ p (which are functions o f M x and M T ˆ x ). This would requi r e lo ts of t ime in t he worst case, b ecause, even w ith non-unifor m i ncrements, there can st ill be ma ny smal l changes in elements of M x and M T ˆ x . T o work around t his, i nstead of maintaining M x and M T ˆ x exactly , the al gorit hm m a intains more slowly c hang ing estimates for them (vectors y and ˆ y , respecti vely), using rando m sampling. The algorit hm ma intains y ≈ M x as foll ows. When the alg orithm increases a primal v ari able x j ′ during an iterati on, this increas es some elements in t he vector M x (s p eci fically , the elements M i x where M ij > 0 ). F o r each suc h element M i x , i f the element increases by , say , δ ≤ 1, t hen the algo r ithm increases t he corr espo nding y i not by δ , but by 1, but only with pr ob ability δ . This maintains not only E [ y i ] = M i x , but al so, with high pr obabil ity , y i ≈ M i x . F ur ther, the a lgori thm onl y do es work for a y i (e.g. up dati ng p i ) when y i increases (by 1). The a lgori thm mai ntains the est i mate vector ˆ y ≈ M T ˆ x similar ly , and defines t h e sampli ng dis t ributi ons p a nd ˆ p a s f unctions of y a nd ˆ y ins t ead of M x and M T ˆ x . In this way 3 The al gori thm can be interpreted as a f orm of fictitious play of a tw o-play er zero-sum game, where in eac h round eac h play er pla ys from a distribution concent rated around the b est r esponse to the aggregate of the opp onent ’s historical plays. In con trast, in many other fictitious-play algori thms, one or both of the pla yer plays a deterministic pure b est-resp onse to the opp onent ’s hi s torical av erage. 3 e ach unit of work done by the a lgori thm can b e charged to an increase in | M x | + | M T ˆ x | (o r more precisely , an increase in | y | + | ˆ y | , whi ch nev er exceeds ( r + c ) N ) . (Thr oughout the pa p er, | v | denotes t he 1-norm of any vector v .) Section 2 gives t he forma l intuiti on underlyin g co upling by describi ng and form ally a nalyzing the first (simpl er , slower) co upling alg orithm describ ed ab ove . Secti on 3 describ es the full (m ain) alg orithm and its correctness pro of. Section 4 gives remaining implementatio n deta ils a nd b o unds the run tim e. Section 5 presents basic ex per imental results, including a co m pariso n w ith t he GLPK Simp l ex algo rithm . 1.3 Prelimina ries F or the rest of the pap er, a ssume the pri mal and dual probl ems are of the fol lowing rest ricted forms, resp ectively: max {| x | : M x ≤ 1 , x ≥ 0 } , min {| ˆ x | : M T ˆ x ≥ 1 , ˆ x ≥ 0 } . Tha t i s, a ssume a j = b i = 1 for each i, j . This i s witho ut lo ss of gener ality by the transfo rmati on M ′ ij = M ij / ( b i a j ). Reca l l that | v | deno tes the 1-norm o f any v ecto r v . 2 Slow algorithm (coupling) T o illustra te the co upling technique, i n t his sect i on we a nalyze t he fir st (si mpler but slower) alg orithm describ ed in th e roadma p in the intro ductio n, a v ariant of Grig o riadi s and Khachiyan’s algo rithm [9] . W e show that i t ret urns a (1 − 2 ε )-approxima te prima l-dual pair with high pr obabil ity . W e do not analyze the r unning t ime, whic h ca n b e large. In the fol lowing sect ion, w e describ e ho w to mo di fy t his alg orithm (using non-uni form increments a nd the random sampli ng t rick describ ed in the previous roadma p) to ob t ain the full alg o rithm with a go o d ti me b ound. F or just th i s secti on, assum e that each M ij ∈ [0 , 1]. ( Assume a s al wa ys that b i = a j = 1 fo r all i, j ; recall that | v | deno t es the 1-no r m of v .) Here is the alg orithm : slow-alg ( M ∈ [ 0 , 1] r × c , ε ) 1. V ectors x, ˆ x ← 0 ; scal ar N = ⌈ 2 ln( r c ) /ε 2 ⌉ . 2. Rep ea t until max i M i x ≥ N : 3. Let p i . = (1 + ε ) M i x (for al l i ) and ˆ p j . = (1 − ε ) M T j ˆ x (for all j ). 4. Cho ose random indi ces j ′ and i ′ resp ectively from pro babili ty distri butions ˆ p/ | ˆ p | an d p/ | p | . 5. Increase x j ′ and ˆ x i ′ each b y 1. 6. Let ( x ⋆ , ˆ x ⋆ ) . = ( x / m ax i M i x, ˆ x/ min j M T j ˆ x ). 7. Ret urn ( x ⋆ , ˆ x ⋆ ). The scaling of x and ˆ x in l ine 6 ensures feasibi lity of the final pri mal solutio n x ⋆ and the final dua l soluti on ˆ x ⋆ . ( Recall th e assump t ion that b i = a j = 1 for all i, j .) The final prima l soluti on c o st and fina l d ua l soluti on co sts a re, resp ectively | x ⋆ | = | x | / m ax i M i x and | ˆ x ⋆ | = | ˆ x | / min j M T j ˆ x . Since the algor ithm keeps the 1-norms | x | a nd | ˆ x | of the intermediate primal a nd dual solutio ns eq ual, the fina l pri mal and dual cost s will be wit hin a facto r of 1 − 2 ε of ea ch other as lo ng as min j M T j ˆ x ≥ (1 − 2 ε ) max i M i x . If thi s ev ent ha pp ens, then b y weak duality impl i es that ea ch soluti on is a ( 1 − 2 ε ) -approximati on o f it s resp ecti ve o ptimum. T o prov e that the ev ent mi n j M T j ˆ x ≥ (1 − 2 ε ) max i M i x happ ens with high probabil i ty , we s h ow that | p | · | ˆ p | (the pro duct of the 1-norms o f p a nd ˆ p , a s defined in the algor ithm) i s a Lyapuno v functio n — tha t is, t he pro duct is non-increasi ng in exp ect atio n wit h each iterat ion. Thus, i ts exp ected final v alue is at most i ts init i al v alue r c , a nd wi th high probabil i ty , its fina l v alue i s at most, say , ( r c ) 2 . If that happ ens, then b y carefu l insp ecti o n of p and ˆ p , it must b e tha t (1 − ε ) m ax i M i x ≤ min j M T j ˆ x + εN , w h i ch (wit h the termina tion condit ion max i M i x ≥ N ) im plies the desired even t. 4 4 It ma y be instructive to compare this algorithm to the more standard algorithm. In fact there are t w o standa rd algorithms related to this one: a primal al gori thm and a dual al gorithm. In each i teration, the primal al gorithm would c ho ose j ′ to minimize M T j ′ p and increments x j ′ . Separately and simultaneou sl y , the dual algorithm would choose i ′ to maximize ( M ˆ p ) i ′ , then i ncremen ts ˆ x i ′ . (Note that the pri mal algorithm and the dual algorithm are independent, and in fact either can b e run without the other.) T o pr o ve the approximation ratio for the pri mal algorithm, one would b ound the increase in | p | r elativ e to the increase in the primal ob jective | x | . T o prov e the appro ximation r atio for the dual algorithm, 4 Lemma 1 The slow algorithm r eturns a (1 − 2 ε ) -appr oximate primal-dual p air (fe asible prim al and dual solutions x ⋆ and ˆ x ⋆ such that | x ⋆ | ≥ (1 − 2 ε ) | ˆ x ⋆ | ) with pr ob ability at le ast 1 − 1 / ( r c ) . Pr o of In a gi ven iterati on, l et p and ˆ p denot e the vectors at the sta rt of the it eratio n. Let p ′ and ˆ p ′ denote the vectors at the end o f t he iterat ion. L et ∆x denote the vector who se j th entry is the increase in x j during the it eratio n (o r if z is a scalar, ∆z denotes the increase in z ). T hen, using that each ∆M i x = M ij ′ ∈ [0 , 1], | p ′ | = X i p i (1 + ε ) M i ∆x ≤ X i p i (1 + εM i ∆x ) = | p | h 1 + ε p T | p | M ∆x i . Likewise, for t he dual , | ˆ p ′ | ≤ | ˆ p | [1 − ε ( ˆ p/ | ˆ p | ) T M T ∆ ˆ x ]. Multiply ing t hese b ounds o n | p ′ | and | ˆ p ′ | and usi ng that ( 1 + a ) (1 − b ) = 1 + a − b − ab ≤ 1 + a − b for a, b ≥ 0 gives | p ′ || ˆ p ′ | ≤ | p || ˆ p | h 1 + ε p | p | T M ∆x − ε∆ ˆ x T M ˆ p | ˆ p | i . The i nequality ab ov e i s wha t mot i v ates the “coupli ng” of prim al and dual incr ements. The al gorit hm chooses the random increments to x and ˆ x precisely so that E[ ∆x ] = ˆ p/ | ˆ p | a nd E[ ∆ ˆ x ] = p/ | p | . T a king exp ectatio ns of b oth si des of the ineq uality ab ove, a nd plug g ing these equa tions into the two ter m s on t he right-hand side, t h e two terms exactly cancel, giv ing E[ | p ′ || ˆ p ′ | ] ≤ | p || ˆ p | . Thus, the particul ar random choice of i ncrements to x and ˆ x makes the quantity | p | | ˆ p | non-increa sing in exp ect atio n with each iterati on. This and W ald’s equati on (Lemma 9, or equi v al en t ly a sta ndard optio nal s t opping theo rem for s up er- marti ngales) im ply tha t t h e expect atio n of | p || ˆ p | at t ermina t ion is at most i t s ini tial v alue r c . So , by the Marko v b ound, the probabi lity t hat | p || ˆ p | ≥ ( r c ) 2 is at most 1 /r c . Thus, with pr obabil ity at least 1 − 1 /r c , at t erminat ion | p || ˆ p | ≤ ( r c ) 2 . Assume this happ ens. No te that ( r c ) 2 ≤ exp( ε 2 N ), so | p || ˆ p | ≤ ( r c ) 2 impli es (1+ ε ) max i M i x (1 − ε ) min j M T j ˆ x ≤ | p || ˆ p | ≤ ex p( ε 2 N ) . T aking lo gs, a nd using the inequalit ies 1 / ln(1 / ( 1 − ε )) ≤ 1 /ε and ln(1 + ε ) / ln(1 / (1 − ε )) ≥ 1 − ε , gi ves (1 − ε ) max i M i x ≤ mi n j M T j ˆ x + εN . By the termi nation conditio n max i M i x ≥ N , so the a b ove inequality implies (1 − 2 ε ) ma x i M i x ≤ min j M T j ˆ x. This and | x | = | ˆ x | (and weak duality) im ply the approximatio n guarantee for the primal-dual pair ( x ⋆ , ˆ x ⋆ ) returned by the alg orithm . ⊓ ⊔ 3 F ull algorithm This sect i on descri b es the ful l al gorit hm and gives a pro of of it s ap pr oximation guara ntee. In a dditio n t o the coupling idea explai ned in the previous secti on, for sp eed the full al g orit h m uses non-uni f orm increments and estima tes of M x and M T ˆ x as descr ib ed i n t he i ntro ducti on. Next we describ e some more detai ls of t hose techniques. After that we give the al gorit hm in d et ail ( altho ug h some implementatio n detai ls that are not crucial t o t h e appr oxima tion guarantee a re delay ed to the next secti on). Recall t hat WLOG we are assuming a i = b j = 1 fo r all i, j . The only assumpti on on M is M ij ≥ 0 . Non-uniform incr ements. In ea ch iterat ion, instea d of increasing the random ly chosen x j ′ and ˆ x i ′ by 1, the alg orit h m i ncreases t h em b oth by an increment δ i ′ j ′ , chosen just so that t he maxi mum resul ting incr ease in any left -hand side (LHS) of any constrai nt (i.e. m ax i ∆M i x or max j ∆M T j ˆ x ) is i n [1 / 4 , 1]. The alg orit hm also deletes cov eri ng co nstraints once they beco me sati sfied (the set J con ta ins ind i ces of not-yet-satisfied cov ering co nstrai n t s, tha t i s j suc h that M T j ˆ x < N ). W e w ant the anal y sis of the approximation r atio to con tinue to hol d (the a nalog ue of Lemma 1 for the slow algo r ithm) , even wit h the increments adjusted a s a b ov e. That an a lysis requires t hat the exp ect ed change in each x j and each ˆ x i should b e prop orti onal to ˆ p j and p i , resp ectively . Thus, w e a djust the sampli ng dist ributi o n for the rando m pair i ′ , j ′ so that , when we choose i ′ and j ′ from the dist r ibutio n and increment x j ′ and ˆ x i ′ by δ i ′ j ′ as defined a bove, it is the case tha t, for any i and j , E[ ∆x j ] = α ˆ p j / | ˆ p | and E[ ∆ ˆ x i ] = αp i / | p | for an α > 0. T his is do ne by scaling the proba bility of c ho o s i ng each given i ′ , j ′ pair by a factor prop ortio nal to 1 /δ i ′ j ′ . one wou ld b ound the decrease i n | ˆ p | relative to the increase i n the dual ob j ectiv e | ˆ x | . In this view, the coupled algorithm can b e obtained by taking these tw o indep enden t pr i mal and dual algorithms and r andomly coupling their cho i ces of i ′ and j ′ . The analysis of the coupled algorithm uses as a p enalty function | p || ˆ p | , the pr oduct of the resp ectiv e p enalt y functions | p | , | ˆ p | of the tw o underlying algorithms. 5 T o implement the a bove non-uniform increments a nd t he a djusted sampling di stributi on, t he a lgori thm maintains t he following data structures a s a funct ion of the current prim al and dual sol utions x and ˆ x : a set J of indi ces of still-a ct ive (not yet met ) covering constr a ints (co lumns); for each column M T j its m aximum entry u j = max i M ij ; and f or each row M i a clo se upp er b ound ˆ u i on its ma ximum active entry max j ∈ J M ij (sp ecificall y , t he alg orit hm ma intains ˆ u i ∈ [1 , 2] × max j ∈ J M ij ). Then, the algo rithm takes t he i ncrement δ i ′ j ′ to b e 1 / ( ˆ u i ′ + u j ′ ). T his seem i ngly o dd c ho i ce has tw o key prop erties: (1) It sa tisfies δ i ′ j ′ = Θ (1 / max( ˆ u i ′ , u j ′ )), which ensures that w hen x j ′ and ˆ x i ′ are increased b y δ i ′ j ′ , the ma ximum i nc r ease in any LHS ( a ny M i x , o r M T j ˆ x wit h j ∈ J ) is Ω (1 ). (2 ) It a llows the alg orithm to select the r andom pa ir ( i ′ , j ′ ) i n constant time using the f ollowing subroutine, called rando m-pair (the notat ion p × ˆ u deno tes t he vector wit h i th entry p i ˆ u i ): random-pair ( p, ˆ p, p × ˆ u, ˆ p × u ) 1. Wit h proba bility | p × ˆ u || ˆ p | / ( | p × ˆ u || ˆ p | + | p || ˆ p × u | ) choose random i ′ from dis t ributi on p × ˆ u/ | p × ˆ u | , and in d ep endently cho ose j ′ from ˆ p/ | ˆ p | , 2. o r, otherw ise, choose random i ′ from dis t ributi on p/ | p | , and in d ep endently cho ose j ′ from ˆ p × u/ | ˆ p × u | . 3. Ret urn ( i ′ , j ′ ). The k ey prop erty o f random-pair is t hat it ma kes the exp ected c ha nges in x a nd ˆ x correct : any gi ven pair ( i, j ) is chosen with proba b i lity prop o r tiona l to p i ˆ p j /δ ij , which makes the exp ected change in a ny x j and ˆ x i , resp ecti vely , is prop ort ional to ˆ p j and p i . (See Lemm a 2 b elow.) Maintaining estimates ( y and ˆ y ) of M x and M T ˆ x . Instead of mai ntaining t he vectors p a n d ˆ p as di rect functions of the vectors M x a nd M T ˆ x , to sav e work, the algo rithm maintains mo r e slowly changing estimates ( y a nd ˆ y ) of the v ecto rs M x a n d M T ˆ x , and maintains p a n d ˆ p as functio ns of the esti mates, rather tha n as functions o f M x and M T ˆ x . Sp eci fically , the a lgor ithm ma intains y and ˆ y a s fo l lows. When any M i x increas es by som e δ ∈ [0 , 1 ] in an itera tion, the a l gori t hm increa s es the corresp ondi ng estimat e y i by 1 wit h pr obabili ty δ . Likewise, w hen any M T j ˆ x increases by some ˆ δ ∈ [0 , 1 ] in a n it eratio n, the a lgori thm increases the corresp onding estim ate ˆ y j by 1 w ith pr obabil i ty ˆ δ . Then, each p i is maintained as p i = (1 + ε ) y i instead o f (1 + ε ) M i x , and each ˆ p j is maintained as ˆ p j = (1 − ε ) ˆ y j instead of (1 + ε ) M i x . This reduces the frequency of up dates to p and ˆ p (and so reduces the t otal work), yet mai ntains y ≈ M x and ˆ y ≈ M T ˆ x with hig h probabi lity , whi ch is eno ugh to still allow a (s u i tably mo di fied) co up l ing ar g ument to go throug h. Each change to a y i or a ˆ y j increases the changed element b y 1 . Al so, no elem en t of y or ˆ y gets la r ger than N b efore the algo rithm stops ( or th e corresp onding covering constraint is deleted) . Thus, in total the elements of y and ˆ y are changed a t most O ( ( r + c ) N ) = O (( r + c ) l og( n ) /ε 2 ) tim es. W e implement the algo r ithm to do only c onstant work mai ntaining the rema ining vectors for each such change. Thi s allows us to b o und t he total ti m e by O (( r + c ) log( n ) /ε 2 ) (pl us O ( n ) pre- and po st-pro cessing time). As a s t ep t ow ards this go al, in each iteratio n, in order to determine the elements in y a nd ˆ y that change, using just O (1 ) work p er c hanged elemen t, the al gorit hm uses the foll owing tri ck. It cho oses a random β ∈ [0 , 1]. It then i ncrements y i by 1 f or those i such that the increa se M ij ′ δ i ′ j ′ in M i x is at least β . Li kewise, it increments ˆ y j by 1 for j suc h tha t t he increase M i ′ j δ i ′ j ′ in M T j ˆ x is at least β . T o do thi s efficiently , the algo rithm prepro cesses M , so that withi n each r ow M i or co lumn M T j of M , the elements can be accessed in (approximat ely) decr ea sing or d er in constant time p er element accessed. ( T his prepro cessi ng is describ ed i n Section 4.) This metho d of i ncrementing th e elements of y and ˆ y uses cons t ant work per changed element and incremen ts ea ch element with the correct pr o babili ty . (T he rando m increm ents o f different elements are not i ndep endent, but this is ok ay b ecause, in t he end, each estima te y j and ˆ y i will be shown sep erat ely t o b e correct with hi gh probabil ity .) The detail ed algor ithm is sho wn in Fi g. 1, except for the subrouti ne ra ndom-pai r (a b ov e) and som e implementati o n deta ils tha t are left until Secti on 4. Appr oximation guar ante e. Next we st a te and prov e t he approximati on guara ntee for the full al g orit h m in Fi g. 1. W e fir st prove three util ity lem mas. The first utili ty lemm a est ablishes t hat (i n exp ecta tion) x , ˆ x , y , and ˆ y change a s desir ed in each iterati on. 6 solve ( M ∈ R r × c + , ε ) — r et urn a (1 − 6 ε ) - appr oxima te primal-dual p air w/ high pr ob. 1. Initiali ze ve ctors x, ˆ x, y , ˆ y ← 0 , and scalar N = ⌈ 2 ln( rc ) /ε 2 ⌉ . 2. Pr ecompute u j . = max { M ij : i ∈ [ r ] } f or j ∈ [ c ] . (The max. ent ry in column M j .) As x and ˆ x are incremente d, the alg. maintains y and ˆ y so E[ y ] = M x , E[ ˆ y ] = M T ˆ x . It main tains vectors p defined by p i . = (1 + ε ) y i and, as a function of ˆ y : J . = { j ∈ [ c ] : ˆ y j ≤ N } (the active columns) ˆ u i ∈ [1 , 2] × max { M ij : j ∈ J } (appro ximates the max. active en try in r o w i of M ) ˆ p j . = (1 − ε ) ˆ y j if j ∈ J 0 otherwise. It main tains vectors p × ˆ u and ˆ p × u , where a × b is a vect or whose i th entry is a i b i . 3. Rep eat un til max i y i = N or min j ˆ y j = N : 4. Let ( i ′ , j ′ ) ← random-pa ir ( p, ˆ p, p × ˆ u, ˆ p × u ). 5. Increase x j ′ and ˆ x i ′ eac h by the same amoun t δ i ′ j ′ . = 1 / ( ˆ u i ′ + u j ′ ). 6. Up date y , ˆ y , and the other vectors as follows: 7. Choose random β ∈ [0 , 1] uniformly , and 8. for each i ∈ [ r ] with M ij ′ δ i ′ j ′ ≥ β , increase y i b y 1 9. (and multiply p i and ( p × ˆ u ) i b y 1 + ε ); 10. for each j ∈ J with M i ′ j δ i ′ j ′ ≥ β , increase ˆ y j b y 1 11. (and multiply ˆ p j and ( ˆ p × u ) j b y 1 − ε ). 12. F or eac h j lea ving J , update J , ˆ u , and p × ˆ u . 13. Let ( x ⋆ , ˆ x ⋆ ) . = ( x/ max i M i x, ˆ x/ min j M T j ˆ x ). R eturn ( x ⋆ , ˆ x ⋆ ). Fig. 1 The ful l algorithm. [ i ] denotes { 1 , 2 , . . . , i } . Implementa tion details are in Section 4. Lemma 2 In e ach iter ation, 1. The l ar gest change i n any r elevant LHS i s at le ast 1/4: max { max i ∆M i x, max j ∈ J ∆M T j ˆ x } ∈ [1 / 4 , 1] . 2. L et α . = | p || ˆ p | / P ij p i ˆ p j /δ ij . The exp e c te d changes in e ach x j , x j , y i , ˆ y j satisfy E [ ∆x j ] = α ˆ p j / | ˆ p | , E[ ∆y i ] = E[ ∆M i x ] = αM ˆ p i / | ˆ p | , E[ ∆ ˆ x i ] = αp i / | p | , E[ ∆ ˆ y j ] = E[ ∆M T j ˆ x ] = αM T p j / | p | . Pr o of (i) By the choice of ˆ u and u , for the ( i ′ , j ′ ) c ho s en, the largest change i n a relev ant LHS is δ i ′ j ′ max max i M ij ′ , max j ∈ J M i ′ j ∈ [1 / 2 , 1] δ i ′ j ′ max( ˆ u i ′ , u j ′ ) ⊆ [1 / 4 , 1 ] δ i ′ j ′ ( ˆ u i ′ + u j ′ ) = [1 / 4 , 1] . (ii) First, we verify that the probabil ity that ra ndom-pai r ret urns a g iven ( i, j ) is α ( p i / | p | )( ˆ p j / | ˆ p | ) /δ ij . Here is the cal culati on. By i nsp ection o f ra ndom-pai r, t h e prob a bility is pro po rtiona l t o | p × ˆ u | | ˆ p | p i ˆ u i | p × ˆ u | ˆ p j | ˆ p | + | p | | ˆ p × u | p i p ˆ p j u j | ˆ p × u | which by algebr a simplifies to p i ˆ p j ( ˆ u i + u j ) = p i ˆ p j /δ ij . Hence, the pro babili ty must b e α ( p i / | p | )( ˆ p j / | ˆ p | ) /δ ij , b ecause t he choice of α makes the s um o ver al l i and j of the pr obabil i ties equal 1. Next, not e that part (i ) of the lemm a impl i es that i n line 8 ( given the chosen i ′ and j ′ ) the probabi lity that a g iven y i is incremented is M ij ′ δ i ′ j ′ , while i n li n e 1 0 the probabili ty tha t a g iven ˆ y j is inc r emented is M i ′ j δ i ′ j ′ . Now, the rema ining equa liti es i n (ii) fo llow by direct ca lculat i on. F or ex ample: E[ ∆x j ] = X i ( αp i / | p | )( ˆ p j / | ˆ p | ) /δ ij ) δ ij = α ˆ p j / | ˆ p | . ⊓ ⊔ 7 The next lemma shows tha t (wi th high pro babili ty) t he est imat e vectors y and ˆ y suit ably approximat e M x and M T ˆ x , respect ively . The proo f is simply a n a pplicat i on of an appr o priat e A zuma-like inequa lity (tai l ored to dea l w ith t he rando m st opping t i me o f the alg orithm ). Lemma 3 1. F or any i , with pr ob ability at le ast 1 − 1 / ( r c ) 2 , at termination (1 − ε ) M i x ≤ y i + εN . 2. F or any j , with pr ob ability at le ast 1 − 1 / ( r c ) 2 , after the last iter ation with j ∈ J , i t holds that (1 − ε ) ˆ y j ≤ M T j ˆ x + εN . Pr o of (i) By Lemma 2, in each iterati on ea ch M i x and y i increase by at mo st 1 and t he exp ected increases in these tw o quantities are the sam e. So, b y the Azuma ineq u a lity for random stopp i ng t imes (Lemma 10), Pr[(1 − ε ) M i x ≥ y i + εN ] is at most exp( − ε 2 N ) ≤ 1 / ( r c ) 2 . Thi s proves (i). The proo f fo r (ii) is si m ilar , no ting that, while j ∈ J , the quantity M T j ˆ x increa ses by a t most 1 each iterat ion. ⊓ ⊔ Finall y , here is the main ut ility lemma . Recall that t he heart o f the ana lysis of t he slow alg orit h m (Lemma 1) was showing that in exp ecta tion | p || ˆ p | w as non-i ncreasing. This all ow ed us to conc l ude that (with hi gh probability at the end) ma x i M i x w as no t muc h larger tha n min j M T j ˆ x . This was the k ey to proving the approximati on rati o. The next l em ma gi ves the a nalog ous a rgument for t he ful l alg orithm . It shows that the quantity | p || ˆ p | is no n- i ncreasing in exp ect a tion, which, by definition of p and ˆ p , implies that (with high pro babili ty at the end) max i y i is not much larg er than m in j ˆ y j . The pro o f is essential l y the sam e as that of Lemma 1, but with some t echnical complica tions accounting for the delet i on o f covering constraints. Since (wit h high pro ba bility by L emma 3) the estim ates y and ˆ y a pproximate M x and M ˆ x , resp ectively , this im pl ies that (wit h hi gh proba bility at the end) ma x i M i x is not much la rger than mi n j M T j ˆ x . Si nce the algo r ithm maintains | x | = | ˆ x | , thi s is enough to prov e the approximati o n ra t io. Lemma 4 With pr ob ability at le ast 1 − 1 /r c , when the algorithm stops, max i y i ≤ N and min j ˆ y j ≥ ( 1 − 2 ε ) N . Pr o of Let p ′ and ˆ p ′ denote p a nd ˆ p aft er a gi ven iterat ion, whi le p and ˆ p denote the v a lues befor e the iterat ion. W e claim that, given p and ˆ p , E[ | p ′ | | ˆ p ′ | ] ≤ | p | | ˆ p | — w i th each itera tion | p | | ˆ p | is non-increasi ng in exp ectatio n. T o prov e it, no te | p ′ | = P i p i (1 + ε∆y i ) = | p | + εp T ∆y and, simila r ly , | ˆ p ′ | = | ˆ p | − ε ˆ p T ∆ ˆ y (recall ∆y i , ∆ ˆ y j ∈ { 0 , 1 } ). Multi pl ying t hese tw o equa tions a n d dro pp i ng a negat i ve ter m gives | p ′ | | ˆ p ′ | ≤ | p | | ˆ p | + ε | ˆ p | p T ∆y − ε | p | ˆ p T ∆ ˆ y . The clai m fo llows by t aking exp ectatio ns of bot h sides, then, in the right-hand side applying linea rity of exp ectatio n and substitut ing E[ ∆y ] = αM ˆ p/ | ˆ p | a nd E[ ∆ ˆ y ] = αM T p/ | p | f rom Lemm a 2. By W ald’s equati on (Lem ma 9), the claim implies that E[ | p | | ˆ p | ] for p and ˆ p at termi natio n is at most its initial v alue r c . Applyi ng the Markov b ound, wit h pro babili ty a t least 1 − 1 /r c , at termi natio n max i p i max j ˆ p j ≤ | p || ˆ p | ≤ ( r c ) 2 ≤ exp( ε 2 N ). Assume this even t happ ens. The index set J is not empty at termina tion, so the m inimum ˆ y j is achiev ed for j ∈ J . Sub s t itute in the definitio n s of p i and ˆ p j and take log to get max i y i ln(1 + ε ) ≤ min j ˆ y j ln(1 / (1 − ε )) + ε 2 N . Divide by ln(1 / (1 − ε )), appl y 1 / ln(1 / (1 − ε )) ≤ 1 /ε and also ln(1 + ε ) / ln(1 / (1 − ε )) ≥ 1 − ε . This gives (1 − ε ) max i y i ≤ m in j ˆ y j + εN . By t he terminati on co nditio n max i y i ≤ N is guaranteed, and either m ax i y i = N or min j ˆ y j = N . If min j ˆ y j = N , t hen the even t in the lemm a o ccurs. If not , then max i y i = N , which ( with the ineq u a lity in previous paragr a ph) implies ( 1 − ε ) N ≤ min j ˆ y j + εN , ag ain i mplyi ng th e even t in the lemm a. ⊓ ⊔ Finall y , here is t he appr oximation guarantee (Theorem 1) . It fol lows fro m the three lemma s ab ov e by straig htforward a l gebra. Theorem 1 Wi th pr ob ability at le ast 1 − 3 /r c , the algorithm i n Fig. 1 r eturns fe asible primal and dual solutions ( x ⋆ , ˆ x ⋆ ) with | x ⋆ | / | ˆ x ⋆ | ≥ 1 − 6 ε . Pr o of Recal l tha t t he a lgori thm returns ( x ⋆ , ˆ x ⋆ ) . = ( x/ max i M i x, ˆ x/ min j M T j ˆ x ). By the nai ve union b ound, with pro babili ty at least 1 − 3 /r c (fo r all i and j ) the events i n Lemma 3 o ccur, and the even t in L emma 4 o ccurs. Assume al l of these even ts ha ppen. T h en, at termina tion, for all i and j , 8 (1 − ε ) M i x ≤ y i + εN (1 − 2 ε ) N ≤ ˆ y j y i ≤ N and (1 − ε ) ˆ y j ≤ M T j ˆ x + εN . By a lgebra, using ( 1 − a )( 1 − b ) ≥ 1 − a − b and 1 / (1 + ε ) ≥ 1 − ε , it fol l ows for all i a nd j that (1 − 2 ε ) M i x ≤ N and (1 − 4 ε ) N ≤ M T j ˆ x. This i mplies m in j M T j ˆ x/ ma x i M i x ≥ 1 − 6 ε . The scaling at t he end of t he a lgori thm assures that x ⋆ and ˆ x ⋆ are fea sible. Since the sizes | x | and | ˆ x | increase by the sam e amo unt ea ch i terat i on, they are equal. Thus, the ra tio of the primal and dual ob jectives i s | x ⋆ | / | ˆ x ⋆ | = min j M T j ˆ x/ ma x i M i x ≥ 1 − 6 ε . ⊓ ⊔ 4 Implementation details and running time This secti on gives remai ni ng implementati on detail s for the algo rithm and b o unds the r unning time. The remaini ng im plementation detai ls concern the mai ntenance of the ve ct ors ( x, ˆ x, y , ˆ y , p, ˆ p, u, ˆ u, p × ˆ u, ˆ p × u ) so that each up dat e to t hese vectors can b e im plemented in constant t ime a nd random -pair can b e im plemented in constant time. The matrix M s ho uld b e given in any standa rd spa rse representation, so that the non-zero entries can be traversed i n time prop or tiona l to the num b er of non-zer o entries. 4.1 Simpler implementatio n First, here is an implementatio n that t a kes O ( n lo g n + ( r + c ) l og( n ) /ε 2 ) ti me. (After t his we describ e how to m o dify this i mplementatio n t o rem ov e t he log n fact o r from the firs t t erm.) Theorem 2 The algorithm c an b e impl emente d to r eturn a (1 − 6 ε ) -appr oximate primal-dual p air for p acking and c overing in tim e O ( n log n + ( r + c ) l og( n ) /ε 2 ) with pr ob ability at le ast 1 − 4 /r c . Pr o of T o supp o r t rando m-pair, store each of t he four vectors p, ˆ p, p × ˆ u, ˆ p × u i n its own rando m-sampl ing data struct ure [15] (see also [1 0]). This dat a structure mai ntains a vector v ; it supp orts r andom sampl ing from t he distri bution v / | v | and c ha nging any entry o f v in consta nt time. Then rando m-pair runs in co nstant time, a nd each up da te of an entry of p , ˆ p , p × ˆ u , o r ˆ p × u ta kes const ant time. Up dating the estima tes y and ˆ y in each i terati on r equires, given i ′ and j ′ , identifying which j and i are such tha t M i ′ j and M ij ′ are at least β /δ i ′ j ′ (the corr espo nding el em ents y i and ˆ y j get increa sed). T o supp ort this efficiently , a t the st a rt of t he algori thm, prepro cess the matri x M . Buil d, for eac h row a nd column, a do ubly lin ked li st o f the non-zero en tri es. Sort e ach li st in desc ending or der. Cross-reference the lists so that, given a n entry M ij in the i t h row list, the corresp o nding entry M ij in the j th colum n list can be found in constant time. The t otal time for prepro cessing is O ( n log n ). Now implem ent each i terati on as foll ows. Let I t denote the set of indices i for which y i is incremented in l i ne 8 i n it eratio n t . F rom the r andom β ∈ [0 , 1 ] and the sorted l ist for ro w j ′ , co m pute this set I t by trav ersi ng the list for r ow j ′ in o rder of decreasin g M ij ′ , co llectin g elements un ti l an i wi th M ij ′ < β /δ i ′ j ′ is encountered. Then, for each i ∈ I t , up date y i , p i , and the i th entry in p × ˆ u i n constant time. Likewise, let J t denote the set of indices j for which ˆ y j is incremented in li ne 10. Com pute J t from the sort ed li st for col umn i ′ . F or each j ∈ J t , up dat e ˆ p j , a nd the j t h entry i n ˆ p × u . T he to tal tim e for these o p eratio ns during the course of the algor ithm is O ( P t 1 + |I t | + |J t | ). F or ea ch element j that leaves J during the i terati on, up date ˆ p j . Delete all en tries in t he j th column list from al l row lists. F o r each r ow list i who se fir st (la r gest) entry is deleted, up dat e the corresp ondi ng ˆ u i by sett ing ˆ u i to b e the next (now first and m aximum) entry rem aining in t he row lis t ; a lso up da te ( p × ˆ u ) i . The tota l tim e for this during the course of the algori thm is O ( n ), beca use each M ij is deleted at most once. This co mpletes t he i mplementatio n. By insp ecti on, t he to tal time is O ( n log n ) (for pr epro cessing, and del etion of covering const raints) plus O ( P t 1 + |I t | + |J t | ) (for the work done as a result of the increments). The first term O ( n log n ) above i s i n it s final for m . The next three lemm as bound the seco nd term (t he sum). The first lem ma b ounds the sum except fo r the “1”. That is, it b ounds the number of tim es a ny y i or ˆ y j is incremented. (There are r + c elements, a nd ea ch can b e increm en t ed at mo s t N times duri ng the course o f the alg orit h m .) 9 Lemma 5 X t |I t | + |J t | ≤ ( r + c ) N = O (( r + c ) log( n ) /ε 2 ) . Pr o of First , P t |I t | ≤ r N beca use each y i can b e increased at most N tim es b efore ma x i y i ≥ N (causi ng termina tion) . Second, P t |J t | ≤ cN b ecause eac h ˆ y j can b e increased at mo st N t imes b efo re j leaves J and ceases to b e up dated. ⊓ ⊔ The next lemma b o unds t he remaini ng part of the second term, which is O ( P t 1). Given that P t |I t | + |J t | ≤ ( r + c ) N , i t’s eno ugh to b oun d t he n umber of itera tions t where |I t | + |J t | = 0. Call such an itera tion empty . ( The 1’s i n the non-empty iterat ions contribute at most P t |I t | + |J t | ≤ ( r + c ) N t o t h e sum. ) W e first show that each i terati on is non-emp ty with probabil ity a t lea st 1/4. This is so b ecause, for any ( i ′ , j ′ ) pair chosen i n a n i terat i on, for the constra int that determi nes the increm ent δ i ′ j ′ , the expect ed increase in the corresp onding y i or ˆ y j must b e at least 1 /4, and that el ement will b e i ncremented (making the iterati on non-empty) with pro babili ty at least 1 / 4. Lemma 6 Given the state at the start of an iter ation, the pr ob ability that i t is empty is at most 3/4. Pr o of Given t he ( i ′ , j ′ ) chosen in t he iterati on, by (1 ) of Lemma 2, by definit ion o f δ i ′ j ′ , there is either an i such that M ij ′ δ i ′ j ′ ≥ 1 / 4 or a j suc h t hat M i ′ j δ i ′ j ′ ≥ 1 / 4. In the for m er case, i ∈ I t with pro babili ty at least 1 / 4. In the lat ter case, j ∈ J t with probabil ity at least 1 / 4. ⊓ ⊔ This impli es that, wit h hi gh pr obabil ity , the number of empty it er atio n s do es not exceed three tim es t h e num b er of non-empty iterati ons by muc h. (This follows from the Azuma-li ke inequa lity .) W e have already bo unded t he num b er of non-empty it eratio ns, so this im plies a b ound (wi th high pro babili ty) on the num b er of em p ty i terati ons. Lemma 7 With pr ob ability at le ast 1 − 1 /r c , the numb er of empty iter ations is O (( r + c ) N ) . Pr o of Let E t be 1 f or em pty iter a tions and 0 otherw ise. By the previous lemma and the Azuma-like i n- equality tai l ored for r andom st opping times (L emma 10), for any δ, A ≥ 0, Pr h (1 − δ ) P T t =1 E t ≥ 3 P T t =1 (1 − E t ) + A i ≤ exp( − δ A ) . T ak i ng δ = 1 / 2 and A = 2 ln( r c ), it f ollows that wi th pr obabil i ty a t least 1 − 1 /r c , t he num b er of empty iterat ions is b o unded b y a constant tim es the n umber of no n-empty it erati o ns plus 2 l n( r c ) . The num b er of non-empty itera tions is at most ( r + c ) N , hence, with probabil ity at lea st 1 − 1 /r c t he num b er of empty iterat ions is O (( r + c ) N ). ⊓ ⊔ Finall y w e compl ete the pro of of Theo rem 2, stated at t he t op o f the section. As discussed a b ov e, t he t otal time i s O ( n log n ) (for prepro cessing, and deletion of cov ering const raints) plus O ( P t 1 + |I t | + |J t | ) (for t he work do ne as a result o f t he increm ents). By Lemm a 5, P t |I t | + |J t | = O (( r + c ) log( n ) /ε 2 ). By L emma 7, with probabil ity 1 − 1 /r c , the num b er of i terati ons t such that |I t | + |J t | = 0 is O (( r + c ) log( n ) /ε 2 ). T ogether, these imply t hat, w ith proba bility 1 − 1 /r c , and the t otal time is O ( n log n + ( r + c ) log( n ) /ε 2 ). T his a nd T heorem 1 imp l y Theo rem 2. ⊓ ⊔ 4.2 F aster implem entation. T o prove the main result, it rema ins t o describ e how to remov e the log n factor fr om t he n l og n term i n the time bo und i n the previo us section. The idea is that it suffices t o appr oximately sor t the r ow and col umn list s, and t hat this can b e done in linear t ime. Theorem 3 The algorithm c an b e impl emente d to r eturn a (1 − 7 ε ) -appr oximate primal-dual p air for p acking and c overing in tim e O ( n + ( c + r ) lo g( n ) /ε 2 ) with pr ob ability at le ast 1 − 5 /r c . 10 Pr o of Mo dify the algor ithm as f ollows. First, prepro cess M a s describ ed in [14, § 2. 1] so that t h e non-zero en t r ies hav e b o unded range. Sp ecifi- cally , let β = min j max i M ij . Let M ′ ij . = 0 if M ij < β ε/c a nd M ′ ij . = min { β c/ε, M ij } o t herwise. A s shown in [14], any (1 − 6 ε )-appr oximate prim al-dual pai r for the t ransform ed problem will b e a ( 1 − 7 ε )-approximat e primal -dual pair for t he ori ginal probl em. In the prepr o cessing st ep, i ns t ead of so rting the row and co l umn list s, pseudo-sort them — so rt them based o n keys ⌊ l og 2 M ij ⌋ . These keys w ill b e integers in the rang e log 2 ( β ) ± log( c/ε ). U se buck et sort , so that a row o r column wit h k entries can b e pro cessed in O ( k + log( c/ε ) ) time. The tot al time for pseudo-sor ting the rows a nd columns is O ( n + ( r + c ) l og( c/ε )). Then, i n the t th iterat ion, maintain the data st ructures as b efore, except as follows. Comput e t he set I t as follows. T rav erse the pseudo -sorted j th col umn until an index i w i th M ij ′ δ i ′ j ′ < β / 2 is fo und. (N o indices later in the l ist ca n b e in I t .) T ak e all the indices i seen w i th M ij ′ δ i ′ j ′ ≥ β . Comput e the set J t simil a rly . T o tal t ime fo r this i s O ( P t 1 + |I ′ t | + |J ′ t | ), w here I ′ t and J ′ t denote the sets of i ndices actuall y trav ersed (so I t ⊆ I ′ t and J t ⊆ J ′ t ). When an index j l eav es the set J , delete a ll entries in the j t h co l umn li st from all row lists. F o r each row list affected, set ˆ u i to two tim es the first element rema ining in the row l i st. This ensures ˆ u i ∈ [1 , 2] max j ∈ J M ij . These ar e t he onl y detail s that are changed. The total t ime i s no w O ( n + ( r + c ) l o g( c/ε )) for prepro cessing and del etion of co vering const raints, pl us O ( P t 1 + |I ′ t | + |J ′ t | ) to im plement the increments and vector up dates. T o finish, the next lem m a b ounds the latter t erm. The b a sic idea i s that, in each i terati on, each mat rix entry is at most twic e as likely to b e examined as it w as in the pr evious algo rithm. Thus, with high probabi lity , each m atrix element is exam ined at m ost abo ut twice as oft en as it would hav e b een in the previ ous a l gori t hm. Lemma 8 With pr ob ability at le ast 1 − 2 /r c , it happ ens that P t (1 + |I ′ t | + |J ′ t | ) = O (( r + c ) N ) . Pr o of Consi der a given iterat ion. Fix i ′ and j ′ chosen in t he iteratio n. F or each i , note tha t, for the random β ∈ [ 0 , 1], Pr[ i ∈ I ′ t ] ≤ Pr[ β / 2 ≤ M ij ′ δ i ′ j ′ ] ≤ 2 M ij ′ δ i ′ j ′ = 2 Pr[ β ≤ M ij ′ δ i ′ j ′ ] = 2 Pr[ i ∈ I t ] . Fix a n i . Appl ying Azum a-like i nequality for random st opping ti mes ( Lemma 1 0), fo r any δ, A ≥ 0, Pr h (1 − δ ) P t [ i ∈ I ′ t ] ≥ 2 P t [ i ∈ I t ] + A i ≤ exp( − δ A ) . (Ab ov e [ i ∈ S ] denot es 1 if i ∈ S a nd 0 other wise.) T ak i ng δ = 1 / 2 and A = 4 ln( r c ), wit h proba bility at least 1 − ( r c ) 2 , it ha ppens tha t P t [ i ∈ I ′ t ] ≤ 4 P t [ i ∈ I t ] + 8 ln( r c ) . Likewise, for a ny j , with pro babili ty a t l east 1 − 1 / ( r c ) 2 , we hav e that P t [ j ∈ J ′ t ] ≤ 2 P t [ j ∈ J t ] + 8 ln( r c ). Summing the naive union b o und ov er all i and j , with probabil ity at least 1 − 1 /r c , it happ ens tha t the sum P t ( |I ′ t | + |J ′ t | ) is a t m o st 4 P t ( |I t | + |J t | ) + 8( r + c ) ln( r c ). By L emma 5 the latter q uantity is O ( ( r + c ) N ). By L emma 7, the number of empty i terati ons is still O ( ( r + c ) N ) w ith probabil ity at least 1 − 1 /r c . The lemma f o llows b y applyi ng the naive union b o un d . ⊓ ⊔ If the even t in t he lem ma happ ens, t hen the tota l tim e is O ( n + ( r + c ) log( n ) /ε 2 ). Thi s prov es Theo r em 3. ⊓ ⊔ 5 Empirical Results W e perf ormed an exp erim ental ev aluati on of our al gorit hm a nd compar ed it a gainst Sim plex o n rando mly generated 0/1 input ma trices. T hese ex per iments suffer f rom the fo llowing l imita tions: (i) t he i nstances are relati vely sm a ll, (ii ) the i nstances are random a nd thus not repr es entati ve o f practica l applicat ions, (iii) the compari son is to the publicly av ailabl e GLPK (G NU Linear Prog rammi ng Kit) , not th e industry stand a rd CPLEX. With tho se cav ea t s, here are t he findings. 11 The runni ng ti m e of our algo rithm is well-predic t ed by t he ana l ysis, wit h a l eading const ant f actor of ab out 12 ba s i c op era tions i n the big-O t erm i n which ε o ccurs. F or mo derately l arge inputs, the algorit hm can be s u bs t antially faster than Si m plex ( GLPK – Gnu Linear Progra mming Kit – Simplex algorit hm gl psol v ersio n 4.15 with default option s ) . 5 The empiric al running times r ep ort e d her e for Simplex ar e to find a (1 ± ε ) -appr oximate solution. F or i nputs wi t h 250 0 -5000 rows and colum ns, the alg orithm (with ε = 0 . 01 ) is fa ster than Simplex by factor s r a nging from tens t o hundreds. F or larger insta nces, the sp eedup g rows roughl y linearly in r c . F or instances w ith mo derately sm all ε and tho usands ( or mor e) rows and c o lumns, the a lgori thm i s or ders of magni t ude faster t han Simplex. The test in pu t s had r, c ∈ [739 , 500 0], ε ∈ { 0 . 02 , 0 . 01 , 0 . 005 } , and ma trix density d ∈ { 1 / 2 k : k = 1 , 2 , 3 , 4 , 5 , 6 } . F or each ( r, c, d ) tuple there was a rando m 0/1 m atri x wi th r rows and c col umns, where ea ch entry was 1 wit h proba bility d . The a lgori thm here was run on each such inpu t , w ith ea ch ε . The running time was co mpared to tha t t aken by a Si m plex solver to find a (1 − ε )-a pproximate solution. GLPK S i mplex fail ed to finish due to cycling on ab out 10 % of the initia l runs; t hose inputs are excluded from the final data . This l eft 1 67 runs. The complete da ta for the non-excluded runs is given in the ta bles at t he end of the section. 5.1 Empirical ev aluation of this algo rithm The running tim e o f the alg orithm here includes (A) ti m e for prepro cessing and init iali z a tion, (B) tim e f or sampli ng ( line 4, once p er iterati on of t he outer lo o p), and (C ) time for increm ents (lines 8 a nd 10, o nce p er iterat ion o f the inner lo ops). Theo r etical ly the dom inant ter m s are O ( n ) fo r (A) a nd O (( r + c ) lo g( n ) /ε 2 ) fo r (C). F or t he input s tested here, the sig nificant t erms in pra ctice are fo r (B) and (C) , w i th the ro le of ( B) dimini s hi ng fo r larg er inst ances. The t i me (num b er of basic op era tions) is well-predicted b y the expression [12( r + c ) + 480 d − 1 ] ln( r c ) ε 2 (1) where d = 1 / 2 k is the density ( f ractio n o f matrix entries th a t are non-zero , at l east 1 / min( r, c )). The 12 ( r + c ) ln( r c ) /ε 2 term is the tim e spent in (C ) , the inner lo ops; it is t he most sign i ficant ter m in the exp eriments a s r a nd c grow. The less signi ficant t erm 480 d − 1 ln( r c ) /ε 2 is f or (B), and is pr o p ortio nal to the num b er of samples (tha t is, it eratio ns o f the outer lo op) . Not e t h a t this term de cr e a ses as ma trix dens i ty increases. ( F or the implementation we fo cused on reduci ng t he t ime for (C), no t fo r (B). It is probable tha t the constant 480 ab ove can b e reduced with a m o re car eful i mplementatio n. ) The plo t b elow shows t he run time in seconds, di vided by the predi cted t ime (the pr edicted number of basic oper ations ( 1) ti mes the predict ed ti me p er basic op eratio n): 0.8 1 1.2 1.4 1.6 1.8 2 2.2 1 10 100 1000 x = (pr edicted time) y = (time / pre dicted time) ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ The ti me exceeds the predict ed time by up to a fa ctor of two f or l arge inst ances. T o understa nd thi s fur ther, co nsider the next tw o plots. The plot on the left plots the act ual t he num b er of basic op erat ions ( obtain ed by instru m enting the co d e) , divided by t he estimat e (1). The pl ot on the rig ht plots the average time p er op erat ion. 5 Preliminary exp eriments suggest that the more sophisticated CPLEX implemen tation is f aster than GLPK Simpl ex, but, often, only by a factor of fiv e or so. Also, prelimi nary exp eriments on l ar ger instances than ar e considered here suggest that the running time of Sim plex and interior-point methods, including CPLEX implementat ions on random instances grows more rapidly than estimated here. 12 0.85 0.9 0.95 1 1.05 1.1 1.15 1.2 1e+09 1e+10 1e+11 x = (pr edicted #oper ations) y = (#op er ations) / (pr edicted #op er ations) + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + 0.8 1 1.2 1.4 1.6 1.8 2 2.2 2.4 2.6 1e+09 1e+10 1e+11 x = (pr edicted #oper ations) y = (normalized time p er op eration) + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + + The conclusi on seems to b e that the num b er of basi c op erat ions is as predi c t ed, but, unexp ect edly , the time p er b asic op er at ion is larg er (by as muc h a s a facto r of two) for large inputs. W e o bserved this effect on a n umber of di fferent m achines. W e don’t know why . P erha ps ca ching o r memory al lo cati on issues could be the culprit. 5.2 Empirical ev aluation of Simpl ex W e estimate the time for Simplex to find a nea r-opti m al appr oximation to be at l east 5 m in( r , c ) r c basic op erati o ns. This estim ate comes from assumi ng tha t a t lea st Ω (min( r, c )) pivot steps are required (b ecause this many v aria bles will b e no n -zer o in t he final sol u t ion), a nd each pi vot step w ill take Ω ( rc ) tim e. (This ho l ds even fo r sparse m atri ces du e to rapid fil l-in.) The leadi ng consta nt 5 comes from exp erim ental ev aluatio n. This esti mate seem s conser v ative, and indeed GLPK Simplex oft en exceeded it. Here’s a plot of the actual time for Simpl ex to find a (1 − ε ) -approximate solutio n (fo r eac h test input) , divided b y this estima te (5 min( r, c ) r c times the est imat ed t ime p er op era tion) . 0.1 1 10 100 1 10 100 1000 x = (predicted time f or simplex) y = (time / pre dicted time) ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ Simplex genera lly to ok at lea st the est i mated time, and so metim es up to a fact or o f ten l onger. (Not e also that thi s exp erimental data ex cl udes a b out 10 % of the runs, in which GL PK Sim plex fa i led to t erminat e due t o ba sis cycl ing.) 13 5.3 Speed-up of t his al gori t hm versus Simplex. Combining the ab ov e estima tes, a conserv ative estima te of the sp eed-up fa ctor in using the alg orithm here instead of Si m plex ( that i s, the time for Si mplex divided by the tim e for t he a lgor ithm here) is 5 min( r, c ) r c [12( r + c ) + 480 d − 1 ] ln( r c ) /ε 2 . (2) The plo t bel ow plots the act ua l measured sp eed-up divided by the conser v ative est i mate (2), as a function o f the esti m ated runni n g tim e of the algori thm here. 0.1 1 10 100 1 10 100 1000 x = (predicted alg time ) y = (Simplex time / alg time) (predicted Simplex time / pr edicted alg time) ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ ♦ The sp eedup is typically at least as predict ed in (2), and often m ore. T o make thi s more concrete, con si der t he case when r ≈ c and ε = 0 . 01. Then t he estima te simpl ifies to ab out ( r / 310) 2 / ln r . F or r ≥ 9 00 or so, the algo r ithm here sh o uld b e fast er than Simpl ex, a nd for each factor -10 increase in r , the sp eedup should i ncrease by a facto r of almo st 100. 5.4 Implementation issues The prim ary implementatio n issue is implem enting the random sa mpling efficiently and precisely . The data str uctures in [15, 10], hav e tw o practi cal drawbac ks. The con st ant facto rs in the runn i ng times are mo deratel y large, and they i mplici t ly o r explicit ly requi re that the probabili ties b ei ng sampled remain in a po lynomi ally b o unded ra nge ( i n the a lgori thm here, this can b e accomplished by rescalin g the data structure p erio di cally) . Howev er, t he alg orithm here uses these data st ructures in a rest ricted way . Using the underlying ideas, we built a data structure from scrat ch wit h very fast entry-up date time a nd mo derately fast sam ple t ime. W e fo cused more o n reducing the upda te time t han the sam p l ing time, b ecause w e exp ect more update op erat ions t han sampling o per ation s. F ull details are b eyond the sco pe of this paper. An op en-source implementation is at [ 19]. 5.5 Data The foll owing ta b l e ta bulates t he detail s of the exp eri mental results desc r ib ed earli er : “t-a lg” is the t ime for the algor ithm here in seconds; “t-sim ” is the time for Simplex to find a (1 − ε ) - o ptima l soln; “t-sim%” is that ti me div ided by the time for S i mplex to compl ete; “al g/sim ” is t-a lg/t - si m. 14 r c k 100 ε t-alg t-sim t-sim% alg/sim 739 739 2 2.0 1 3 0.31 0.519 739 739 2 1.0 7 6 0.51 1.251 739 739 2 0.5 33 7 0.64 4.387 739 739 5 2.0 3 1 0.51 2.656 739 739 5 1.0 15 1 0.63 8.840 739 739 5 0.5 63 2 0.76 30.733 739 739 4 2.0 2 2 0.51 0.970 739 739 4 1.0 11 3 0.64 3.317 739 739 4 0.5 46 4 0.76 11.634 739 739 3 2.0 2 3 0.43 0.561 739 739 3 1.0 9 5 0.60 1.745 739 739 3 0.5 38 6 0.72 6.197 1480 740 3 2.0 2 9 0.37 0.304 1480 740 3 1.0 13 13 0.53 0.959 1480 740 3 0.5 57 16 0.64 3.478 1480 740 2 2.0 2 24 0.44 0.102 1480 740 2 1.0 11 33 0.60 0.342 1480 740 2 0.5 51 39 0.71 1.313 1480 740 5 2.0 4 4 0.41 0.928 1480 740 5 1.0 18 6 0.56 2.930 1480 740 5 0.5 77 7 0.66 10.447 1480 740 4 2.0 3 6 0.34 0.495 1480 740 4 1.0 15 10 0.49 1.496 1480 740 4 0.5 64 12 0.60 5.239 740 1480 3 2.0 3 14 0.35 0.211 740 1480 3 1.0 14 21 0.51 0.667 740 1480 3 0.5 63 29 0.71 2.139 740 1480 2 2.0 2 13 0.27 0.192 740 1480 2 1.0 11 25 0.51 0.462 740 1480 2 0.5 54 34 0.68 1.597 740 1480 5 2.0 5 7 0.59 0.699 740 1480 5 1.0 22 9 0.72 2.460 740 1480 5 0.5 94 10 0.82 9.054 740 1480 1 2.0 2 23 0.24 0.097 740 1480 1 1.0 9 41 0.44 0.237 740 1480 1 0.5 47 55 0.59 0.848 740 1480 4 2.0 3 12 0.47 0.313 740 1480 4 1.0 17 15 0.61 1.130 740 1480 4 0.5 73 19 0.75 3.803 r c k 100 ε t-alg t-sim t-sim% al g/sim 1110 1110 3 2.0 3 21 0.30 0.142 1110 1110 3 1.0 13 33 0.48 0.399 1110 1110 3 0.5 58 43 0.62 1.354 1110 1110 6 2.0 6 5 0.64 1.327 1110 1110 6 1.0 29 6 0.76 4.763 1110 1110 6 0.5 121 6 0.83 17.903 1110 1110 5 2.0 4 9 0.48 0.480 1110 1110 5 1.0 20 13 0.64 1.575 1110 1110 5 0.5 86 15 0.77 5.439 1110 1110 4 2.0 3 17 0.43 0.203 1110 1110 4 1.0 16 24 0.60 0.649 1110 1110 4 0.5 68 29 0.71 2.325 1111 2222 1 2.0 3 94 0.15 0.036 1111 2222 1 1.0 15 198 0.30 0.077 1111 2222 1 0.5 78 344 0.53 0.227 1111 2222 4 2.0 5 94 0.49 0.057 1111 2222 4 1.0 26 123 0.64 0.212 1111 2222 4 0.5 119 148 0.77 0.803 1111 2222 3 2.0 4 109 0.35 0.042 1111 2222 3 1.0 21 163 0.52 0.134 1111 2222 3 0.5 104 222 0.71 0.467 1111 2222 6 2.0 9 23 0.66 0.426 1111 2222 6 1.0 44 26 0.76 1.664 1111 2222 6 0.5 187 29 0.84 6.346 1111 2222 2 2.0 3 83 0.18 0.047 1111 2222 2 0.5 91 269 0.57 0.339 1111 2222 5 2.0 6 63 0.57 0.110 1111 2222 5 1.0 32 77 0.69 0.415 1111 2222 5 0.5 140 88 0.79 1.594 2222 1111 4 2.0 4 53 0.38 0.092 2222 1111 4 1.0 23 75 0.54 0.311 2222 1111 4 0.5 107 91 0.65 1.185 2222 1111 3 2.0 4 53 0.29 0.080 2222 1111 3 1.0 21 84 0.46 0.253 2222 1111 3 0.5 97 115 0.63 0.848 2222 1111 6 2.0 7 21 0.49 0.373 2222 1111 6 1.0 34 26 0.61 1.297 2222 1111 6 0.5 148 30 0.71 4.816 2222 1111 2 2.0 3 102 0.36 0.037 2222 1111 2 1.0 17 139 0.49 0.127 2222 1111 2 0.5 88 173 0.61 0.513 2222 1111 5 2.0 5 42 0.41 0.141 2222 1111 5 1.0 27 57 0.56 0.472 2222 1111 5 0.5 120 70 0.68 1.696 15 r c k 100 ε t-alg t-sim t-sim% alg/sim 1666 1666 4 2.0 5 117 0.40 0.045 1666 1666 4 1.0 24 163 0.56 0.153 1666 1666 4 0.5 111 201 0.69 0.554 1666 1666 3 2.0 4 112 0.29 0.040 1666 1666 3 1.0 21 185 0.48 0.114 1666 1666 3 0.5 98 245 0.64 0.400 1666 1666 6 2.0 8 42 0.51 0.210 1666 1666 6 1.0 38 55 0.66 0.697 1666 1666 6 0.5 165 63 0.76 2.612 1666 1666 2 2.0 3 109 0.20 0.036 1666 1666 2 1.0 18 221 0.41 0.083 1666 1666 2 0.5 88 313 0.58 0.282 1666 1666 5 2.0 6 82 0.44 0.080 1666 1666 5 1.0 29 109 0.58 0.269 1666 1666 5 0.5 130 133 0.71 0.981 1666 3332 2 2.0 5 354 0.12 0.017 1666 3332 2 1.0 30 857 0.29 0.036 1666 3332 2 0.5 162 1594 0.54 0.102 1666 3332 5 2.0 9 509 0.51 0.020 1666 3332 5 1.0 51 654 0.65 0.078 1666 3332 5 0.5 227 762 0.76 0.299 1666 3332 1 2.0 5 350 0.09 0.015 1666 3332 1 1.0 24 1003 0.25 0.025 1666 3332 1 0.5 135 1881 0.46 0.072 1666 3332 4 2.0 7 578 0.38 0.014 1666 3332 4 1.0 42 899 0.58 0.047 1666 3332 4 0.5 204 1087 0.71 0.188 1666 3332 3 2.0 6 533 0.20 0.013 1666 3332 3 1.0 36 1095 0.41 0.033 1666 3332 3 0.5 180 1741 0.65 0.104 1666 3332 6 2.0 13 255 0.56 0.051 1666 3332 6 1.0 60 319 0.70 0.190 1666 3332 6 0.5 271 361 0.79 0.752 3332 1666 5 2.0 9 275 0.38 0.033 3332 1666 5 1.0 45 392 0.54 0.115 3332 1666 5 0.5 213 482 0.66 0.441 3332 1666 4 2.0 7 274 0.30 0.028 3332 1666 4 1.0 40 414 0.45 0.097 3332 1666 4 0.5 195 556 0.60 0.352 3332 1666 3 2.0 6 316 0.24 0.020 3332 1666 3 1.0 34 544 0.41 0.063 3332 1666 3 0.5 178 703 0.53 0.254 3332 1666 6 2.0 11 154 0.39 0.071 3332 1666 6 1.0 52 218 0.56 0.238 3332 1666 6 0.5 233 273 0.70 0.854 r c k 100 ε t-alg t-sim t-sim% al g/sim 2499 2499 2 2.0 5 530 0.13 0.011 2499 2499 2 1.0 29 1556 0.40 0.019 2499 2499 2 0.5 159 2275 0.58 0.070 2499 2499 5 2.0 9 580 0.42 0.016 2499 2499 5 1.0 46 793 0.58 0.059 2499 2499 5 0.5 217 960 0.70 0.227 2499 2499 4 2.0 8 662 0.31 0.012 2499 2499 4 1.0 42 1064 0.50 0.040 2499 2499 4 0.5 195 1369 0.64 0.143 2499 2499 7 2.0 17 125 0.50 0.139 2499 2499 7 1.0 76 162 0.65 0.475 2499 2499 7 0.5 327 190 0.77 1.715 2499 2499 3 2.0 6 618 0.18 0.011 2499 2499 3 1.0 35 1079 0.32 0.032 2499 2499 3 0.5 174 1774 0.53 0.099 2500 5000 6 2.0 19 2525 0.52 0.008 2500 5000 6 1.0 98 3337 0.69 0.029 2500 5000 6 0.5 458 3828 0.79 0.120 2500 5000 7 2.0 26 1042 0.60 0.026 2500 5000 7 1.0 124 1272 0.73 0.098 2500 5000 7 0.5 556 1427 0.82 0.390 5000 2500 3 2.0 10 2165 0.23 0.005 5000 2500 3 1.0 62 3828 0.40 0.016 5000 2500 3 0.5 338 5586 0.58 0.061 5000 2500 6 2.0 17 1352 0.39 0.013 5000 2500 6 1.0 90 1832 0.53 0.049 5000 2500 6 0.5 418 2297 0.66 0.182 5000 2500 5 2.0 14 1752 0.33 0.008 5000 2500 5 1.0 82 2592 0.49 0.032 5000 2500 5 0.5 397 3330 0.63 0.119 5000 2500 4 2.0 12 1916 0.26 0.006 5000 2500 4 1.0 70 3177 0.44 0.022 5000 2500 4 0.5 367 4197 0.58 0.087 3750 3750 7 2.0 23 1828 0.50 0.013 3750 3750 7 1.0 111 2343 0.64 0.047 3750 3750 7 0.5 506 2712 0.74 0.187 3750 3750 6 2.0 18 3061 0.40 0.006 3750 3750 6 1.0 91 4263 0.55 0.022 3750 3750 6 0.5 432 5279 0.68 0.082 6 F uture di rections Can one ext end the coupl ing technique to m ixe d pa cking and cov eri ng problems? What a b o ut t he sp ecial case of ∃ x ≥ 0 ; Ax ≈ b (i mp orta n t for computer tomo g raphy). Wha t ab out cov eri ng wi th “b ox” const raints (upp er b ounds on indi vidual v ariabl es)? Perhaps most im p ortantly , what abo ut general (not expli citly given) packing and covering, e.g. t o ma ximum multicommo dit y flow (where P is the p ol ytop e whose vertices corresp ond to al l s i → t i paths)? In al l o f these ca ses, correct ness of a natural alg orithm i s easy to est a blish, but the r unning time is pro blemat ic. This seems to b e b ecause the coupling approa ch requires that fast primal and dua l a l gori t hms of a part icular k i nd must b oth exist . Such al gori thms ar e known f or ea c h of the ab ov e-mentioned problems, but the natur al alg orit hm for each dual problems is slow. The algori thm seems a natur a l ca ndidate for solving dynamic probl ems, o r sequences of closely related problems ( e.g. each pro blem comes from the previous one b y a small change in the constra int ma trix) . Adapting the alg orit hm to start with a gi ven prima l /dual pa ir seems strai ghtforward and may be useful in practice. Can one use coupli ng t o i mprov e p ar al lel and di stribute d alg orit h m s for pac ki ng and covering (e.g. [14, 21]), p erhaps reducing the dep endence on ε from 1 /ε 4 to 1 /ε 3 ? (In t h i s case, i nstead of incremen ti ng a r andomly c hosen v ariabl e in each of the primal and dual so lutio ns, one would increment al l pri mal an d du a l v ariables deter minist i cally in each iterati o n: i ncrement the prima l vector x by α ˆ p a nd the dual vector ˆ x by αp for the m axima l α so t hat the correct nes s pro o f go es through. Can one b ound the num b er of iterat ions, assuming the m atrix is appro priatel y prepro cessed? ) 16 Ackno wledgments Thanks to two a nonymous referees for h el pful su g gestio ns. The fi r st author would like t o thank t he Greek State Scholarship F ounda tion (IKY). The second autho r’s research was partia lly suppor ted by NSF grants 0626 9 12, 07290 71, and 11179 54. References 1. Ar ora, S., Hazan, E., Kale, S.: The multiplicativ e weigh ts up date method: A meta-algorithm and applications. Theory of Computing 8 , 121–164 (2012) 2. Biensto ck, D.: Poten tial F unction M etho ds for Approximately Solving Linear Programmi ng Pr oblems: Theory and Practice. Kl u wer Academic Publi shers, Boston, MA (2002) 3. Biensto ck, D., Iyengar, G.: Solving f r actional pac king problems in O (1 /ε ) iterations. In: Pro ceedings of the T hi rty First Annual ACM Symp osium on Theory of Computing, pp. 146–155. Chicago, Illinois (2004) 4. Chudak, F.A. , Eleuterio, V.: Improv ed approximation schemes for linear programming relaxations of comb inatorial optimization problems. In: Pro ceedings of the eleven th IPCO Confer ence, Berli n, German y . Springer (2005) 5. Clar kson, K. L., Hazan, E., W o o druff, D. P . : Sublinear optimi zation for mac hine learning. In: Pro ceedings of the 2010 IEEE 51st Ann ual Symposi um on F o undations of Computer Science, pp. 449–457. IEEE Computer Society (2010) 6. Clar kson, K.L., Hazan, E., W oo druff, D.P .: Sublinear optimization for machine learning. Journal of the ACM 59 (5) (2012) 7. Garg, N., Ko enemann, J.: F a ster and simpl er algori thms f or multicommodity flow and other fractional packing problems. SIAM Journal on Computing 37 (2), 630–6 52 (2007) 8. Garg, N ., K¨ onemann, J.: F aste r and sim pl er algori thms for multicommodity flow and other fr actional packing problems. In: Thirty Ninth Annua l Symp osium on F oundations of Computer Science. IEEE, Miami Beac h, Florida (1998) 9. Gri gori adis, M .D., Khac hiyan, L.G.: A s ubl i near-time randomized approximation algorithm for matrix games. Op era- tions Research Letters 18 (2), 53–58 (1995 ) 10. Hagerup, T . , Mehlhorn, K., Munro, J.I.: Optimal algori thms for generating discrete random v ariables with cha nging distributions. Lecture Notes in Computer Science 700 , 253–264 (1993). Pr oceedings 20th Inte r national Conference on Automata, Languages and Programm i ng 11. Klein, P ., Y oung, N.E.: On the num b er of iterations f or Dantzig-W olfe optimization and packing-co v eri ng approximation algorithms. Lecture Notes i n Computer Science 1610 , 320–327 (1999). U R L citeseer.nj .nec.com/440226.html 12. K¨ onemann, J.: F ast combinatorial algorithms for pac king and co vering problems. Master’s thesis, Univ ersit¨ at des Saarlandes (1998) 13. Koufogiannakis, C., Y oung, N.E.: B eating simplex for fractional packing and co vering linear programs. In the for t y- eigh th IEEE symp osium on F oundations of Computer Science pp. 494–504 (2007). DOI 10.1109/F OCS.2007.62 14. Luby , M., Nisan, N.: A parallel appro ximation algorithm for p ositive linear programmi ng. In: Pro ceedings of the Twe nt y-Fifth Annu al ACM Symposi um on Theory of Computing, pp. 448–457. San Diego, California (1993) 15. Matias, Y., Vitter, J.S., Ni , W.: Dynamic Generation of Discrete Random V ariates. Theory of Computing Systems 36 (4), 329–358 (2003) 16. Nesterov, Y.: Smo oth minimization of non-smo oth functions. Mathematical Pr ogramming 1 03 (1), 127–152 (2005) 17. Nesterov, Y. : U nconstrained con vex m inimization in relative scale. Mathematics of Op erations Research 34 (1), 180–193 (2009) 18. T odd, M.J.: The man y facets of linear programm i ng. Mathematical Pr ogramming 91 (3), 417–436 (2002 ) 19. Y oung, N.: F ast ptas for packing and co vering linear pr ograms. https://code.g oogle.com/p/fastpc/ (2013) 20. Y oung, N . E.: K-medians, f acility lo cation, and the Chernoff-W ald bound. In: Pro ceedings of the Eleven th A nn ual ACM-SIAM Symp osium on Di screte Algorithms, pp. 86–95. San F rancisco, California (2000 ) 21. Y oung, N.E. : Sequen tial and paral l el algori thms f or m i xed packing and cov ering. In: F orty Second Annual Symp osium on F oundat ions of Computer Science, pp. 538–546. IEEE, Las V e gas, NV (2002) 7 App endix: Util ity Lem mas The first is a one-sided v ariant o f W a ld’s equati o n: Lemma 9 [20, lemma 4.1] L et K b e any finite numb er. Le t x 0 , x 1 , . . . , x T b e a se q uenc e of r andom variables, wher e T is a r andom stopping time with finite exp e c tation. If E[ x t − x t − 1 | x t − 1 ] ≤ µ and (in every outc ome) x t − x t − 1 ≤ K for t ≤ T , then E[ x T − x 0 ] ≤ µ E[ T ] . The second is the Azuma-li ke inequal i ty tai lored for random stopping times. Lemma 10 L et X = P T t =1 x t and Y = P T t =1 y t b e sums of non-ne gative r andom variables, wher e T i s a r andom stopping time with finite exp e ctation, and, f or al l t , | x t − y t | ≤ 1 and E x t − y t | P s 0. Consider the sequence π 0 , π 1 , . . . , π T where π t = 0 fo r t > λ E[ T ] a nd ot herwise π t . = Y s ≤ t (1 + ε ) x s (1 − ε ) y s = π t − 1 (1 + ε ) x t (1 − ε ) y t ≤ π t − 1 (1 + εx t − ε y t ) (using (1 + ε ) x (1 − ε ) y ≤ ( 1 + εx − εy ) when | x − y | ≤ 1). F rom E[ x t − y t | π t − 1 ] ≤ 0, it follows that E[ π t | π t − 1 ] ≤ π t − 1 . Note that , fro m t he use of λ , P s ≤ t x s − y s and (t herefore) π t − π t − 1 are b ounded. T hus W al d’s (Lem ma 9), impli es E[ π T ] ≤ π 0 = 1. Applying the Markov bou nd , Pr[ π T ≥ exp( εA )] ≤ exp( − εA ) . So as s um e π T < exp( εA ). T aking lo gs, if T ≤ λ E[ T ], X ln(1 + ε ) − Y l n ( 1 / (1 − ε )) = ln π T < εA. Dividing by ln(1 / (1 − ε )) and appl ying t he i nequalit ies ln(1 + ε ) / ln(1 / (1 − ε ) ) ≥ 1 − ε and ε/ l n(1 / (1 − ε ) ) ≤ 1, g ives (1 − ε ) X < Y + A . Thus, Pr[(1 − ε ) X ≥ Y + A ] ≤ Pr[ T ≥ λ E[ T ]] + Pr[ π T ≥ exp( εA )] ≤ 1 /λ + exp( − εA ) . Since λ ca n b e arbitr arily la r ge, the lemma fo llows. ⊓ ⊔ 18

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

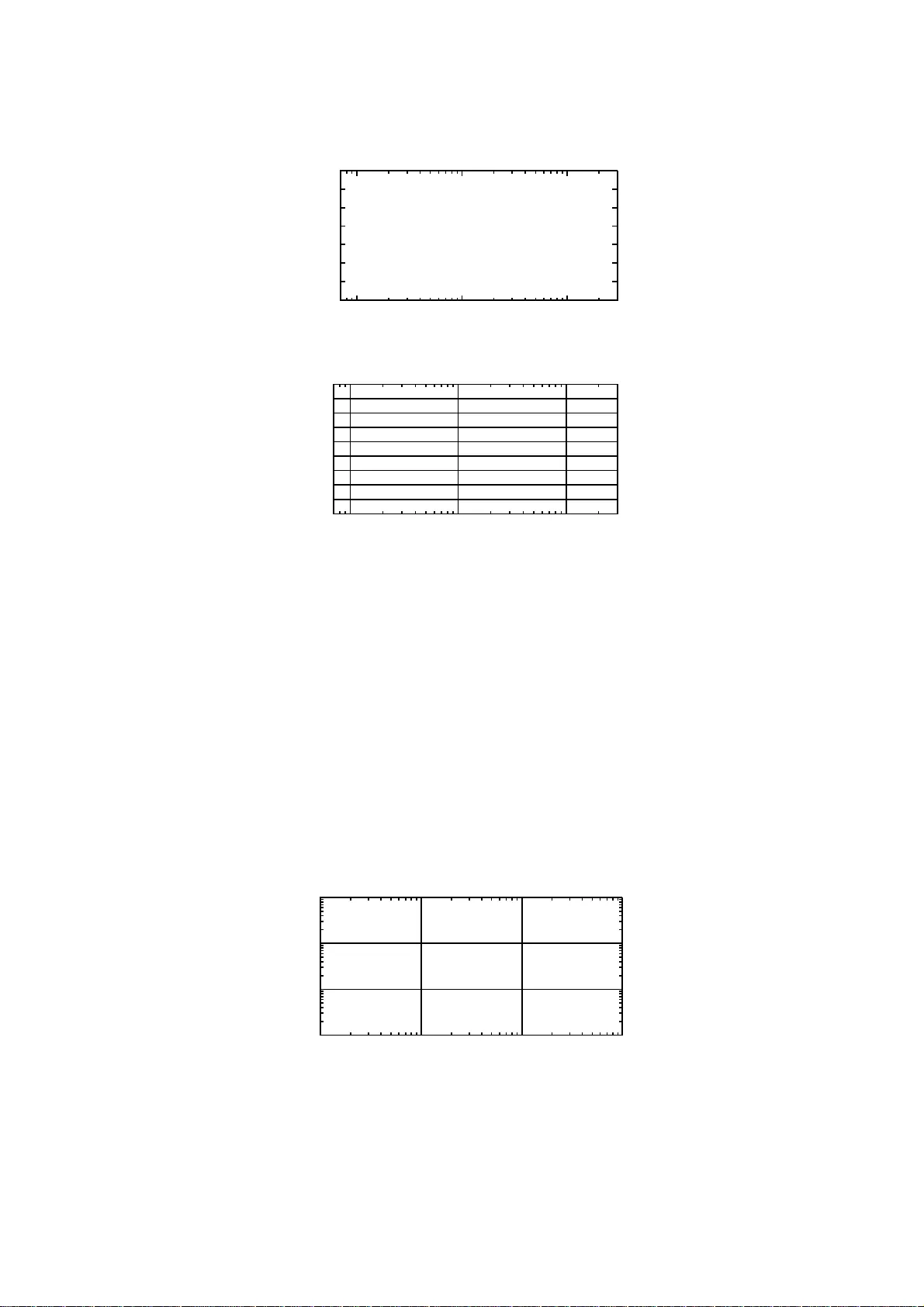

Leave a Comment