Removing spurious interactions in complex networks

Identifying and removing spurious links in complex networks is a meaningful problem for many real applications and is crucial for improving the reliability of network data, which in turn can lead to a better understanding of the highly interconnected nature of various social, biological and communication systems. In this work we study the features of several simple spurious link elimination methods, revealing that they may distort networks’ structural and dynamical properties. Accordingly, we propose a hybrid method that combines a similarity-based index with edge-betweenness centrality. We show that our method can effectively eliminate the spurious interactions while leaving the network connected and preserving the network’s functionalities.

💡 Research Summary

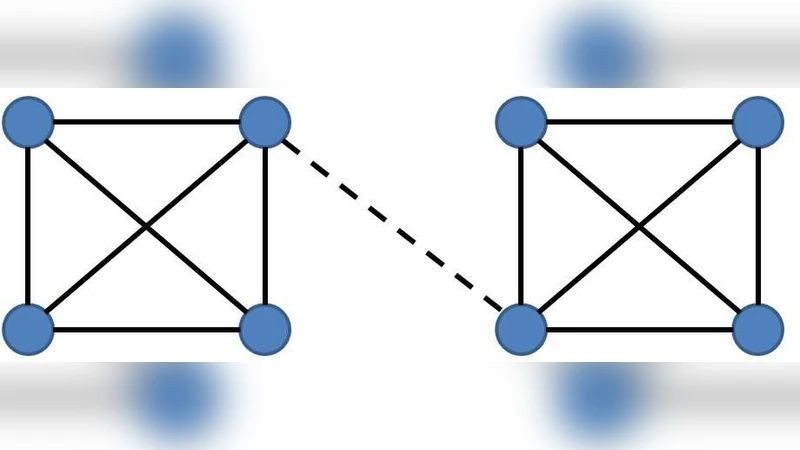

The paper addresses the problem of identifying and removing spurious (false) links from complex networks while preserving the networks’ structural integrity and functional properties. Although much research has focused on link prediction—i.e., finding missing connections—relatively little attention has been paid to the opposite problem of eliminating erroneous links. The authors argue that naïvely deleting suspected spurious edges can be far more damaging than missing a few true edges, because it may fragment the giant component, disrupt communication pathways, and impair dynamical processes such as synchronization, traffic flow, or power distribution.

To investigate this issue, the authors select six well‑studied empirical networks: the C. elegans neural network (CE), an email communication network, a co‑authorship network of scientists (SC), a political blog network (PB), a protein‑protein interaction network (PPI), and the US airline transportation network (USAir). For each network they extract the giant component and treat it as the “ground‑truth” network $A_t$. They then inject a fraction $f$ (ranging from 0 to 0.8) of random edges to create an “observed” network $A_o$ that contains spurious links. The goal of any spurious‑link detection algorithm is to rank all edges by a reliability score $R_{ij}$ and to remove the lowest‑ranked fraction $f'$ (typically set equal to $f$) to obtain a reconstructed network $A_r$.
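The experimental loop described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' code: the function names `add_spurious_edges` and `remove_lowest_ranked` are hypothetical, and the sketch assumes that $f$ is measured relative to the number of true edges, a normalization the summary does not state. To sidestep that ambiguity, the removal step takes an explicit edge count rather than a fraction.

```python
import random


def add_spurious_edges(true_edges, nodes, f, seed=0):
    """Build an observed edge set by adding random non-edges to the true network.

    Assumption (not stated in the summary): f is relative to the number
    of true edges. Returns (observed_edges, spurious_edges) as sets of
    frozensets, so edges are undirected.
    """
    rng = random.Random(seed)
    nodes = list(nodes)
    true_set = {frozenset(e) for e in true_edges}
    n_spurious = int(round(f * len(true_set)))
    max_non_edges = len(nodes) * (len(nodes) - 1) // 2 - len(true_set)
    if n_spurious > max_non_edges:
        raise ValueError("not enough non-edges to inject")
    spurious = set()
    while len(spurious) < n_spurious:
        u, v = rng.sample(nodes, 2)  # two distinct nodes
        edge = frozenset((u, v))
        if edge not in true_set:
            spurious.add(edge)
    return true_set | spurious, spurious


def remove_lowest_ranked(observed_edges, score, n_remove):
    """Drop the n_remove edges with the lowest reliability score.

    `score` maps an edge (a frozenset of two nodes) to a number. Ties are
    broken by node labels, which are assumed to be mutually comparable.
    """
    ranked = sorted(observed_edges, key=lambda e: (score(e), sorted(e)))
    return set(ranked[n_remove:])
```

With a perfect reliability score (one that ranks every spurious edge below every true edge) and `n_remove` equal to the number of injected edges, `remove_lowest_ranked` returns exactly the true edge set; any real index will fall short of that.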

Two families of reliability indices are examined. The first family is similarity‑based, assuming that edges joining similar nodes are more likely to be genuine. Four representative measures are used: Common Neighbors (CN), Resource Allocation (RA), Local Path (LP), and the Katz index. The second family is centrality‑based, assuming that edges that are topologically important should be retained. Two simple centrality scores are considered: Preferential Attachment (PA) and Edge Betweenness (EB). The authors compute the Area Under the ROC Curve (AUC) for each method, which quantifies the probability that a randomly chosen true edge receives a higher score than a randomly chosen spurious one.
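To make the local indices and the AUC concrete, here is a small sketch using their standard textbook definitions: CN counts shared neighbors, RA sums the inverse degrees of shared neighbors, and PA multiplies the endpoint degrees. LP, Katz and EB are omitted because they need path counting or shortest-path computations. All function names are illustrative, and the AUC is computed by exhaustive pairwise comparison with ties counted as one half, which is only one common way to evaluate it.

```python
from itertools import product


def build_adjacency(edges):
    """Map each node to the set of its neighbors (undirected graph)."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def common_neighbors(adj, u, v):
    """CN: number of neighbors shared by u and v."""
    return len(adj[u] & adj[v])


def resource_allocation(adj, u, v):
    """RA: shared neighbors weighted by the inverse of their degree."""
    return sum(1.0 / len(adj[z]) for z in adj[u] & adj[v])


def preferential_attachment(adj, u, v):
    """PA: product of the two endpoint degrees."""
    return len(adj[u]) * len(adj[v])


def auc(true_scores, spurious_scores):
    """Probability that a true edge outscores a spurious one (ties count 0.5)."""
    wins = 0.0
    for t, s in product(true_scores, spurious_scores):
        if t > s:
            wins += 1.0
        elif t == s:
            wins += 0.5
    return wins / (len(true_scores) * len(spurious_scores))
```

As a toy check, take two triangles `{0, 1, 2}` and `{3, 4, 5}` as the true network and add one spurious edge `(2, 3)`. Every true edge has one common neighbor while the spurious edge has none, so CN separates them perfectly. PA does the opposite here, because the spurious edge joins the two highest-degree nodes.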

The similarity‑based methods achieve high AUC values (≈0.8–0.95), indicating good discrimination ability, but when the lowest‑ranked edges are removed they dramatically shrink the giant component and distort other structural metrics such as clustering coefficient and average shortest‑path length. In contrast, EB yields low AUC (<0.5) yet preserves connectivity because it preferentially protects high‑betweenness edges that hold the network together.
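The fragmentation effect is easy to reproduce on a toy graph. The sketch below is a hypothetical helper, not taken from the paper: it measures the size of the largest connected component with a breadth-first search, so that the value can be compared before and after edges are removed.

```python
from collections import deque


def giant_component_size(nodes, edges):
    """Return the number of nodes in the largest connected component."""
    adj = {n: set() for n in nodes}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)

    seen = set()
    best = 0
    for start in adj:
        if start in seen:
            continue
        # Breadth-first search over one component.
        seen.add(start)
        queue = deque([start])
        size = 0
        while queue:
            node = queue.popleft()
            size += 1
            for neighbor in adj[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        best = max(best, size)
    return best
```

Consider two triangles `{0, 1, 2}` and `{3, 4, 5}` joined by a genuine bridge `(2, 3)`. The bridge's endpoints share no neighbors, so a common-neighbor ranking places it at the bottom; deleting it drops the giant component from 6 nodes to 3. The same edge carries every shortest path between the two triangles, which is why a betweenness-based score would tend to protect it.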

To reconcile these opposing strengths, the authors propose a hybrid index that combines a similarity‑based index with edge‑betweenness centrality.
