Information-theoretic bound on the energy cost of stochastic simulation

Physical systems are often simulated using a stochastic computation where different final states result from identical initial states. Here, we derive the minimum energy cost of simulating a complex data set of a general physical system with a stochastic computation. We show that the cost is proportional to the difference between two information-theoretic measures of complexity of the data: the statistical complexity and the predictive information. We derive the difference as the amount of information erased during the computation. Finally, we illustrate the physics of information by implementing the stochastic computation as a Gedankenexperiment of a Szilard-type engine. The results create a new link between thermodynamics, information theory, and complexity.

💡 Research Summary

The paper investigates the fundamental thermodynamic cost of simulating a physical system when the simulation is performed by a stochastic computation, i.e., a process that can produce different outputs from identical inputs. The authors connect this cost to two well‑known information‑theoretic quantities: the statistical complexity Cµ, which measures the amount of memory a minimal predictive model (an ε‑machine) must retain, and the predictive information E, which quantifies how much of the past information is actually transmitted to the future.

First, the authors review the historical link between thermodynamic entropy and Shannon entropy, recalling Maxwell’s demon and Landauer’s principle: erasing one bit of logical information dissipates at least kT ln 2 of heat. They then introduce ε‑machines as the unique minimal unifilar hidden Markov models that capture all regularities of a stochastic process. In this framework Cµ = H(S) is the Shannon entropy of the stationary distribution over the causal states S, while E = lim_{N→∞} I(X_{‑N:‑1}; X_{0:N}) is the mutual information between the semi‑infinite past and the semi‑infinite future.
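The two definitions can be made concrete with a small numerical sketch. The two-state machine below (the standard "Golden Mean" process, which never emits two 0s in a row) is an illustrative choice, not an example taken from the paper, and the helper names are hypothetical. The code computes Cµ as the entropy of the stationary state distribution and estimates E by the finite-N mutual information I(X_{-N:-1}; X_{0:N}) from enumerated word probabilities.

```python
from itertools import product
from math import log2

# Hypothetical example machine (Golden Mean process), for illustration only.
# MACHINE[state][symbol] = (emission probability, next state). It is unifilar:
# each (state, symbol) pair leads to exactly one next state.
MACHINE = {
    "A": {"1": (0.5, "A"), "0": (0.5, "B")},
    "B": {"1": (1.0, "A")},
}


def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)


def stationary(machine, iters=500):
    """Stationary state distribution via damped power iteration."""
    pi = {s: 1.0 / len(machine) for s in machine}
    for _ in range(iters):
        step = {s: 0.0 for s in machine}
        for s, edges in machine.items():
            for prob, nxt in edges.values():
                step[nxt] += pi[s] * prob
        # Averaging with the previous iterate also handles periodic chains.
        pi = {s: 0.5 * (pi[s] + step[s]) for s in machine}
    return pi


def word_probability(machine, pi, word):
    """Probability of emitting `word` when the start state is drawn from `pi`."""
    total = 0.0
    for start, weight in pi.items():
        prob, state = weight, start
        for symbol in word:
            if symbol not in machine[state]:
                prob = 0.0
                break
            step_prob, state = machine[state][symbol]
            prob *= step_prob
        total += prob
    return total


def predictive_information(machine, pi, n):
    """I(past n symbols; future n symbols) in bits, a finite-n estimate of E."""
    alphabet = sorted({sym for edges in machine.values() for sym in edges})
    joint, past, future = {}, {}, {}
    for word in product(alphabet, repeat=2 * n):
        p = word_probability(machine, pi, word)
        if p > 0:
            joint[word] = p
            past[word[:n]] = past.get(word[:n], 0.0) + p
            future[word[n:]] = future.get(word[n:], 0.0) + p
    return entropy(past.values()) + entropy(future.values()) - entropy(joint.values())


pi = stationary(MACHINE)
c_mu = entropy(pi.values())
e_1 = predictive_information(MACHINE, pi, 1)
e_4 = predictive_information(MACHINE, pi, 4)
print(f"C_mu = {c_mu:.4f} bits, E(n=1) = {e_1:.4f} bits, E(n=4) = {e_4:.4f} bits")
```

For this particular machine the output is a first-order Markov chain, so the estimate of E already saturates at n = 1; processes with longer memory would need larger n, and the brute-force enumeration grows exponentially with n. The values come out at roughly 0.918 bits for Cµ and 0.252 bits for E, consistent with Cµ ≥ E.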

A key observation is that for any ε‑machine the inequality Cµ ≥ E holds, with the difference Cµ − E quantifying the information that must be erased during the computation. The authors formalize this by defining the per‑step erasure entropy h_erase = H(S_{i‑1} | X_i, S_i), the uncertainty about the previous state once the emitted symbol and the new state are known. Using the unifilarity of ε‑machines (the next state is uniquely determined by the current state and emitted symbol) they prove two theorems: (1) Cµ = E if and only if h_erase = 0, i.e., the machine is logically reversible; (2) the N → ∞ limit of the erasure entropy of the N‑fold concatenated machine equals Cµ − E.
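As a rough illustration of the per-step quantities, the sketch below evaluates h = H(X_i | S_{i-1}), h_R = H(X_i | S_i) and h_erase = H(S_{i-1} | X_i, S_i) from the joint distribution over (previous state, symbol, next state). Both example machines and all function names are assumptions made for this sketch: a Golden Mean machine, in which two different states can lead to the same (symbol, next state) pair, and a period-two machine, in which every step can be undone.

```python
from math import log2

# Two hypothetical unifilar machines, used only for illustration.
# machine[state][symbol] = (emission probability, next state)
GOLDEN_MEAN = {
    "A": {"1": (0.5, "A"), "0": (0.5, "B")},
    "B": {"1": (1.0, "A")},
}
PERIOD_TWO = {
    "A": {"0": (1.0, "B")},
    "B": {"1": (1.0, "A")},
}


def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)


def stationary(machine, iters=500):
    """Stationary state distribution via damped power iteration."""
    pi = {s: 1.0 / len(machine) for s in machine}
    for _ in range(iters):
        step = {s: 0.0 for s in machine}
        for s, edges in machine.items():
            for prob, nxt in edges.values():
                step[nxt] += pi[s] * prob
        # Damping keeps the iteration convergent for periodic chains.
        pi = {s: 0.5 * (pi[s] + step[s]) for s in machine}
    return pi


def marginal_entropy(joint, keep):
    """Entropy of the joint over (s_prev, x, s_next) restricted to positions `keep`."""
    out = {}
    for key, p in joint.items():
        sub = tuple(key[i] for i in keep)
        out[sub] = out.get(sub, 0.0) + p
    return entropy(out.values())


def step_entropies(machine):
    """Return (h, h_R, h_erase) in bits for one computation step."""
    pi = stationary(machine)
    joint = {}
    for s, edges in machine.items():
        for x, (prob, nxt) in edges.items():
            if pi[s] * prob > 0:
                joint[(s, x, nxt)] = pi[s] * prob
    h = marginal_entropy(joint, (0, 1)) - marginal_entropy(joint, (0,))
    h_r = marginal_entropy(joint, (1, 2)) - marginal_entropy(joint, (2,))
    h_erase = marginal_entropy(joint, (0, 1, 2)) - marginal_entropy(joint, (1, 2))
    return h, h_r, h_erase


for name, machine in [("golden mean", GOLDEN_MEAN), ("period two", PERIOD_TWO)]:
    h, h_r, h_erase = step_entropies(machine)
    print(f"{name}: h = {h:.4f}, h_R = {h_r:.4f}, h_erase = {h_erase:.4f} bits")
```

The period-two machine gives h_erase = 0, the logically reversible case of theorem (1), while the Golden Mean machine erases a nonzero amount per step because the transition into state A on symbol 1 can come from either state.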

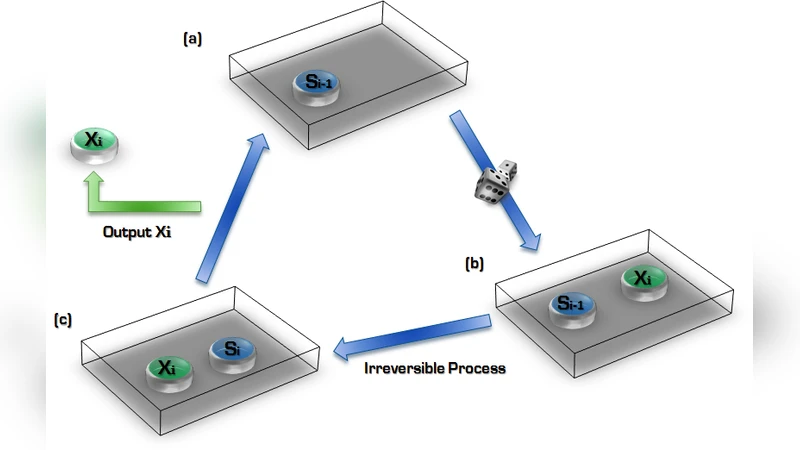

Thermodynamically, each computation cycle consists of three entropy changes: (a) generation of a new output symbol increases internal entropy by h = H(X_i | S_{i‑1}); (b) erasure of the previous state reduces entropy by h_erase; (c) ejection of the symbol reduces entropy by h_R = H(X_i | S_i). The balance h − h_erase − h_R = 0 reproduces the information‑theoretic identity h = h_erase + h_R. In the limit of infinitely long output strings, writing H_erase for the erasure entropy in that limit, the balance yields H_erase + E − Cµ = 0, i.e., H_erase = Cµ − E. By Landauer’s principle, the minimal heat dissipation per cycle is therefore kT ln 2·(Cµ − E).
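The per-step identity follows from expanding the joint entropy of (S_{i-1}, X_i, S_i) with the chain rule in two different orders. The sketch below assumes only what the summary already states: stationarity, so that H(S_i) = H(S_{i-1}) = Cµ, and unifilarity, so that H(S_i | S_{i-1}, X_i) = 0.

```latex
\begin{align}
H(S_{i-1}, X_i, S_i)
  &= H(S_{i-1}) + H(X_i \mid S_{i-1}) + H(S_i \mid S_{i-1}, X_i)
   = C_\mu + h + 0, \\
H(S_{i-1}, X_i, S_i)
  &= H(S_i) + H(X_i \mid S_i) + H(S_{i-1} \mid X_i, S_i)
   = C_\mu + h_R + h_{\mathrm{erase}}, \\
\Longrightarrow \quad h &= h_R + h_{\mathrm{erase}}.
\end{align}
```

Equating the two expansions cancels Cµ, which is why the balance in steps (a) to (c) closes exactly.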

To give a physical illustration, the authors extend Szilard’s single‑particle engine. In their Gedankenexperiment the memory must store at least Cµ bits to generate the desired predictive information E. Resetting the memory at a colder reservoir temperature T_C costs Q_C = kT_C ln 2·Cµ, while extracting work from the hot reservoir at temperature T_H yields Q_H = kT_H ln 2·E. The overall efficiency becomes η = 1 − Q_C/Q_H = 1 − (T_C/T_H)·(Cµ/E). In terms of an “information‑theoretic efficiency” ι = E/Cµ = 1 − (Cµ − E)/Cµ, this reads η = 1 − (T_C/T_H)/ι: the Carnot efficiency 1 − T_C/T_H is recovered when ι = 1 (no erasure), and any erasure (ι < 1) lowers η below it.
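A short numerical sketch of these formulas follows. The temperatures and the values of Cµ and E are hypothetical numbers chosen for illustration, not results from the paper, and the function names are assumptions of this sketch.

```python
from math import log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def heat_for_bits(bits, temperature):
    """Heat k*T*ln(2)*bits in joules associated with `bits` bits at `temperature` kelvin."""
    return K_B * temperature * log(2) * bits


def engine_efficiency(t_cold, t_hot, c_mu, e):
    """eta = 1 - Q_C / Q_H with Q_C = k*T_C*ln2*C_mu and Q_H = k*T_H*ln2*E."""
    q_cold = heat_for_bits(c_mu, t_cold)  # cost of resetting the memory
    q_hot = heat_for_bits(e, t_hot)       # heat drawn from the hot reservoir
    return 1.0 - q_cold / q_hot


# Hypothetical example values (assumptions, not taken from the paper).
T_COLD, T_HOT = 300.0, 600.0  # kelvin
C_MU, E = 1.0, 0.8            # bits

eta = engine_efficiency(T_COLD, T_HOT, C_MU, E)
carnot = 1.0 - T_COLD / T_HOT
iota = E / C_MU
print(f"eta = {eta:.3f}, Carnot = {carnot:.3f}, iota = {iota:.3f}")
print(f"heat per erased bit at {T_COLD:.0f} K: {heat_for_bits(1.0, T_COLD):.3e} J")
```

With these numbers the efficiency falls below the Carnot value because ι < 1. The formula also implies that η turns negative once Cµ/E exceeds T_H/T_C, so a strongly irreversible machine would cost more to reset than it returns.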

The paper concludes that the unavoidable energy cost of any optimal classical stochastic simulator is kT ln 2 times the difference between statistical complexity and predictive information, Cµ − E. This cost is rooted in the logical irreversibility of the underlying ε‑machine. The authors also note that quantum simulators can achieve a lower statistical complexity than any classical ε‑machine for the same process, suggesting that quantum information processing may reduce the thermodynamic cost of stochastic simulation. Overall, the work links thermodynamics, information theory, and complexity, and gives a quantitative lower bound to aim for when designing energy‑efficient simulators of stochastic physical systems.
