Networking - A Statistical Physics Perspective

Efficient networking has a substantial economic and societal impact in a broad range of areas, including transportation systems, wired and wireless communications, and a range of Internet applications. As transportation and communication networks become increasingly complex, the ever-increasing demands for congestion control, higher traffic capacity, quality of service, robustness, and reduced energy consumption require new tools and methods to meet these conflicting requirements. The new methodology should help in gaining a better understanding of the properties of networking systems at the macroscopic level, as well as in developing new principled optimization and management algorithms at the microscopic level. Methods of statistical physics seem best placed to provide new approaches, as they have been developed specifically to deal with nonlinear, large-scale systems. This paper presents an overview of tools and methods that have been developed within the statistical physics community and that can be readily applied to address the emerging problems in networking. These include diffusion processes, methods from disordered systems and polymer physics, and probabilistic inference, which have direct relevance to network routing, file and frequency distribution, the exploration of network structures and vulnerability, and various other practical networking applications.

💡 Research Summary

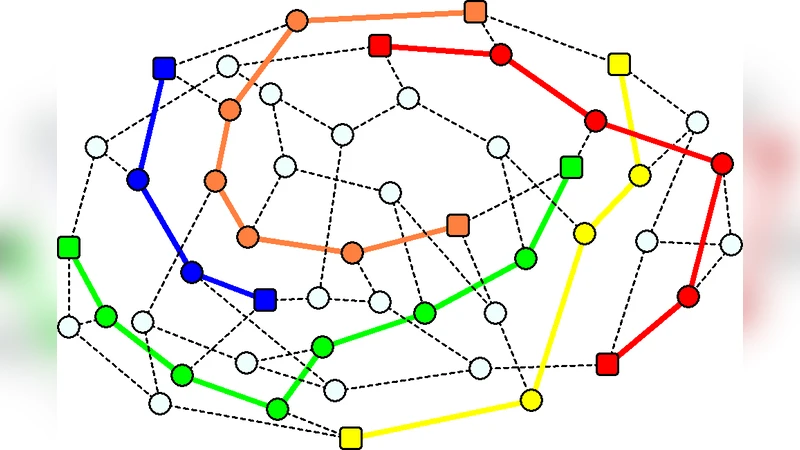

The paper surveys how methods from statistical physics can be brought to bear on modern networking problems, which are increasingly large, nonlinear, and heterogeneous. After a brief motivation, the authors review the three canonical network topologies—Erdős‑Rényi random graphs, scale‑free (Barabási‑Albert) networks, and small‑world (Watts‑Strogatz) graphs—and the standard node‑ and edge‑centric metrics (degree, centrality, clustering, path length, modularity). These models serve as the substrate for the subsequent physical analyses.
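To make two of the metrics listed above concrete, the following is a minimal sketch that builds an Erdős-Rényi graph and measures its mean degree and average clustering coefficient. It is an illustration only, not code from the paper: the function names (`erdos_renyi`, `mean_degree`, `local_clustering`, `average_clustering`) and the parameters `n=400`, `p=0.05` are assumptions chosen for the example.

```python
import random
from itertools import combinations


def erdos_renyi(n, p, seed=0):
    """Return adjacency sets of a G(n, p) graph: each node pair is linked with probability p."""
    rng = random.Random(seed)
    adj = {v: set() for v in range(n)}
    for u, v in combinations(range(n), 2):
        if rng.random() < p:
            adj[u].add(v)
            adj[v].add(u)
    return adj


def mean_degree(adj):
    """Average number of neighbours per node."""
    return sum(len(nb) for nb in adj.values()) / len(adj)


def local_clustering(adj, v):
    """Fraction of pairs of v's neighbours that are themselves linked."""
    nb = adj[v]
    k = len(nb)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(nb, 2) if b in adj[a])
    return 2.0 * links / (k * (k - 1))


def average_clustering(adj):
    """Mean of the local clustering coefficient over all nodes."""
    return sum(local_clustering(adj, v) for v in adj) / len(adj)


# Hypothetical parameters, chosen only for illustration.
graph = erdos_renyi(n=400, p=0.05, seed=1)
print("mean degree:", mean_degree(graph))
print("average clustering:", average_clustering(graph))
```

For a G(n, p) graph, the mean degree should be close to p(n-1) and the average clustering close to p. This is one way to see why purely random graphs do not reproduce the high clustering of small-world networks.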

In the dynamical‑process section the authors treat dynamics on networks as stochastic processes. Random‑walk theory is used to derive PageRank‑type centrality measures, first‑passage times, and coverage dynamics, which in turn inform routing and search strategies. Epidemic‑spreading models capture virus propagation, information diffusion, and the existence of a critical infection threshold that separates a healthy regime from an epidemic one; this provides a quantitative basis for designing immunisation or traffic‑shaping policies. Cascading‑failure theory, borrowed from the study of phase transitions in interdependent systems, explains how a small local failure can trigger a system‑wide collapse, highlighting the need for robust design.
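The link between random walks and PageRank-type centrality can be sketched with a short power iteration. This is the standard textbook formulation, not necessarily the derivation used in the paper. A walker follows a random outgoing link with probability `damping` and otherwise jumps to a uniformly random node, and the stationary visiting probabilities are the ranks. The function name `pagerank`, the damping value 0.85, and the four-node toy graph are assumptions made for illustration.

```python
def pagerank(out_links, damping=0.85, tol=1e-12, max_iter=1000):
    """Stationary distribution of a random walk with uniform teleportation.

    out_links maps each node to the list of nodes it points to.
    """
    nodes = list(out_links)
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(max_iter):
        # Probability mass sitting on nodes without outgoing links is spread uniformly.
        dangling = sum(rank[v] for v in nodes if not out_links[v])
        new = {v: (1.0 - damping) / n + damping * dangling / n for v in nodes}
        for u in nodes:
            for v in out_links[u]:
                new[v] += damping * rank[u] / len(out_links[u])
        # Stop once the L1 change between successive iterates is negligible.
        if sum(abs(new[v] - rank[v]) for v in nodes) < tol:
            return new
        rank = new
    return rank


# Hypothetical toy graph: "c" collects links from every other node.
links = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(links)
for node in sorted(ranks, key=ranks.get, reverse=True):
    print(node, round(ranks[node], 4))
```

In this toy graph node `c` ends up with the highest rank. Node `d` has no incoming links, so it receives only the teleportation share (1 - damping) / n.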

The next part recasts several networking tasks as disordered‑system problems. Frequency allocation is mapped onto graph‑coloring, while the “color‑diversity” problem models distributed file‑segment storage. Resource redistribution and optimal source placement are expressed as energy‑minimisation problems akin to spin‑glass models, allowing the use of simulated‑annealing or other physics‑inspired heuristics. Circuit and loop detection are linked to the minimum‑Steiner‑tree problem, offering a principled way to minimise wiring or link costs.
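As an illustration of the energy-minimisation view, the sketch below treats frequency allocation as graph coloring. The energy is the number of links whose endpoints share a color (an antiferromagnetic Potts-style cost), and simulated annealing with Metropolis moves searches for a zero-energy assignment. Applying annealing to the coloring problem specifically is a choice made for this example. The names `conflicts` and `anneal_coloring`, the geometric cooling schedule, and the 12-node ring are assumptions, not details from the paper.

```python
import math
import random


def conflicts(edges, colors):
    """Potts-style energy: number of edges whose endpoints share a color."""
    return sum(1 for u, v in edges if colors[u] == colors[v])


def anneal_coloring(n, edges, q, steps=20000, t_start=2.0, t_end=0.01, seed=0):
    """Simulated annealing for q-coloring; assumes a simple graph without self-loops.

    Returns the best assignment found and its energy.
    """
    rng = random.Random(seed)
    neighbours = [[] for _ in range(n)]
    for u, v in edges:
        neighbours[u].append(v)
        neighbours[v].append(u)
    colors = [rng.randrange(q) for _ in range(n)]
    energy = conflicts(edges, colors)
    best, best_energy = colors[:], energy
    for step in range(steps):
        if best_energy == 0:
            break
        # Geometric cooling from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (step / (steps - 1))
        node = rng.randrange(n)
        new_color = rng.randrange(q)
        old_color = colors[node]
        if new_color == old_color:
            continue
        # Energy change if `node` switches color: conflicts gained minus conflicts removed.
        delta = sum((colors[m] == new_color) - (colors[m] == old_color)
                    for m in neighbours[node])
        # Metropolis rule: always accept downhill or neutral moves, sometimes uphill ones.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            colors[node] = new_color
            energy += delta
            if energy < best_energy:
                best, best_energy = colors[:], energy
    return best, best_energy


# Hypothetical instance: 12 transmitters on a ring, 3 available frequencies.
ring = [(i, (i + 1) % 12) for i in range(12)]
assignment, energy = anneal_coloring(12, ring, q=3)
print(assignment, energy)
```

Tracking the best assignment seen so far means the returned energy can never exceed that of the random starting point, even if the walk later accepts uphill moves.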

Probabilistic inference techniques receive a dedicated treatment. The paper details belief‑propagation (BP) update rules, free‑energy approximations, and survey‑propagation (SP) for hard constraint‑satisfaction problems such as coloring and allocation. Network tomography and compressed sensing are presented as concrete examples where a small set of end‑to‑end measurements can be used to reconstruct link delays or traffic states, with BP providing a scalable, distributed solution that outperforms traditional linear‑programming approaches.
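A minimal sketch of sum-product belief propagation on a pairwise model may help fix ideas. It uses the generic textbook message update, m_{i→j}(x_j) ∝ Σ_{x_i} ψ_i(x_i) ψ_ij(x_i, x_j) Π_{k∈N(i)\j} m_{k→i}(x_i), which is exact on trees, and checks it against brute-force enumeration. The specific update rules in the paper may differ. All names (`belief_propagation`, `brute_force_marginals`, `soft_coloring`) and the small tree with a soft coloring penalty are assumptions made for illustration.

```python
from itertools import product


def belief_propagation(n, edges, unary, pairwise, iters=20):
    """Sum-product BP with synchronous updates; returns per-node marginals.

    unary[i][x] is the local weight of state x at node i;
    pairwise(x, y) is a symmetric, strictly positive edge weight.
    """
    q = len(unary[0])
    nbrs = {i: [] for i in range(n)}
    for u, v in edges:
        nbrs[u].append(v)
        nbrs[v].append(u)
    # One message per directed edge, initialised uniformly.
    msg = {(i, j): [1.0 / q] * q for i in nbrs for j in nbrs[i]}
    for _ in range(iters):
        new = {}
        for (i, j) in msg:
            out = []
            for xj in range(q):
                total = 0.0
                for xi in range(q):
                    term = unary[i][xi] * pairwise(xi, xj)
                    for k in nbrs[i]:
                        if k != j:
                            term *= msg[(k, i)][xi]
                    total += term
                out.append(total)
            z = sum(out)
            new[(i, j)] = [m / z for m in out]
        msg = new
    marginals = []
    for i in range(n):
        belief = list(unary[i])
        for k in nbrs[i]:
            belief = [belief[x] * msg[(k, i)][x] for x in range(q)]
        z = sum(belief)
        marginals.append([b / z for b in belief])
    return marginals


def brute_force_marginals(n, edges, unary, pairwise):
    """Exact marginals by enumerating all q**n states (small n only)."""
    q = len(unary[0])
    marg = [[0.0] * q for _ in range(n)]
    for state in product(range(q), repeat=n):
        w = 1.0
        for i in range(n):
            w *= unary[i][state[i]]
        for u, v in edges:
            w *= pairwise(state[u], state[v])
        for i in range(n):
            marg[i][state[i]] += w
    return [[v / sum(row) for v in row] for row in marg]


def soft_coloring(x, y):
    """Hypothetical soft constraint: equal colors on a link are penalised, not forbidden."""
    return 0.1 if x == y else 1.0


# Hypothetical 5-node tree with 3 colors; node 0 is biased towards color 0.
tree = [(0, 1), (1, 2), (1, 3), (3, 4)]
weights = [[0.8, 0.1, 0.1]] + [[1.0, 1.0, 1.0] for _ in range(4)]
bp = belief_propagation(5, tree, weights, soft_coloring)
exact = brute_force_marginals(5, tree, weights, soft_coloring)
print(bp[1], exact[1])
```

Each message depends only on information held at a node and its neighbours, which is what makes the scheme distributed. On graphs with loops the same updates give an approximation rather than exact marginals.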

Finally, the authors outline future research directions: the need for experimental validation on real protocols, integration of dynamic topology changes, multi‑service constraints, and energy considerations; and the promise of hybrid frameworks that combine machine‑learning techniques with physics‑based models. Overall, the review convincingly argues that statistical‑physics tools can bridge the gap between macroscopic network behaviour (phase transitions, self‑organisation) and microscopic algorithm design, although concrete implementation results remain limited and constitute an important avenue for further work.
