Massively parallelized replica-exchange simulations of polymers on GPUs

We discuss the advantages of parallelization by multithreading on graphics processing units (GPUs) for parallel tempering Monte Carlo computer simulations of an exemplary bead-spring model for homopolymers. Sampling a large ensemble of conformations is a prerequisite for precisely estimating statistical quantities, such as the peak structure of the specific heat that typically signals a conformational transition. For this reason, the benefit of a strong increase in Monte Carlo performance can hardly be overstated. By employing multithreading and exploiting the large number of cores on the GPUs of modern, standard graphics cards, we find a rapid increase in efficiency when porting parts of the code from the central processing unit (CPU) to the GPU.
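The specific-heat peak is usually estimated from energy fluctuations, C_V = (⟨E²⟩ − ⟨E⟩²)/(k_B T²). The minimal Python sketch below applies that estimator to one list of energy samples per temperature. It assumes reduced units with k_B = 1, and the function name and sample values are hypothetical.

```python
def specific_heat(energies, temperature, k_B=1.0):
    """Fluctuation estimate C_V = (<E^2> - <E>^2) / (k_B T^2) for one temperature.

    `energies` are total-energy samples from the replica at `temperature`.
    The variance is computed about the sample mean, which is numerically
    safer than subtracting <E>^2 from <E^2> directly."""
    n = len(energies)
    if n == 0:
        raise ValueError("need at least one energy sample")
    mean_e = sum(energies) / n
    variance = sum((e - mean_e) ** 2 for e in energies) / n
    return variance / (k_B * temperature ** 2)


# Hypothetical samples keyed by temperature (one replica per temperature).
samples = {0.5: [-10.0, -12.0, -11.0], 1.0: [-5.0, -9.0, -7.0, -7.0]}
cv = {T: specific_heat(E, T) for T, E in samples.items()}
T_peak = max(cv, key=cv.get)   # largest C_V among the sampled temperatures
```

With only a few discrete replica temperatures, this locates the peak only coarsely. The sketch simply picks the largest sampled value.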

💡 Research Summary

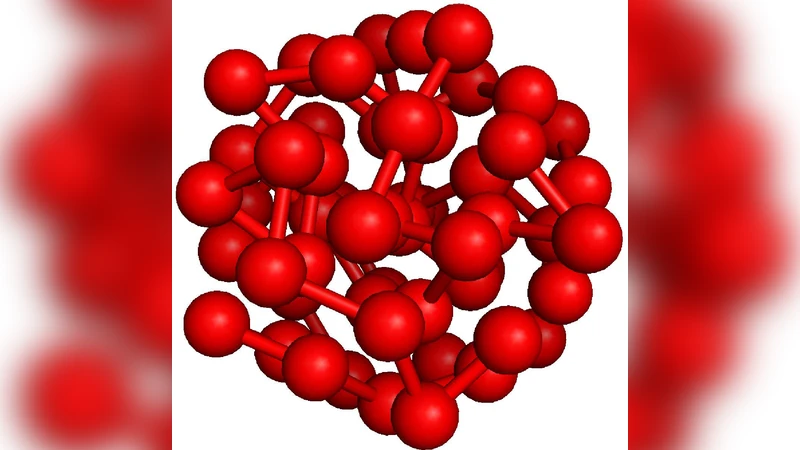

The paper presents a comprehensive study of how to accelerate replica‑exchange (parallel tempering) Monte Carlo simulations of an off‑lattice bead‑spring homopolymer by exploiting the massive parallelism of modern graphics processing units (GPUs) through CUDA programming. The authors begin by motivating the need for large‑scale sampling in polymer physics, where accurate estimation of thermodynamic observables such as the specific‑heat peak requires ensembles of millions of conformations. Conventional CPU‑only implementations quickly become a bottleneck, especially when many replicas at different temperatures must be simulated simultaneously to overcome free‑energy barriers.
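Replicas overcome free-energy barriers by exchanging configurations between neighboring temperatures. In the standard parallel-tempering scheme, a swap between inverse temperatures β_i and β_j is accepted with probability min{1, exp[(β_i − β_j)(E_i − E_j)]}, which preserves detailed balance in the joint ensemble. The Python sketch below implements that criterion, assuming k_B = 1 (so β = 1/T) and an alternating even/odd neighbor-pair schedule. The schedule, function names, and placeholder configurations are assumptions; the summary does not say how the paper organizes its exchanges.

```python
import math
import random


def swap_probability(beta_i, beta_j, e_i, e_j):
    """Acceptance probability for exchanging the configurations held at
    inverse temperatures beta_i and beta_j with energies e_i and e_j."""
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


def attempt_neighbor_swaps(temps, energies, configs, rng, offset=0):
    """Try exchanges between neighboring temperature slots (k, k + 1).

    `temps` is sorted; energies[k] and configs[k] belong to whichever replica
    currently sits at temps[k]. `offset` (0 or 1) selects even or odd pairs and
    would be alternated between exchange rounds. Accepted swaps are applied in
    place; the number of accepted swaps is returned."""
    accepted = 0
    for k in range(offset, len(temps) - 1, 2):
        p = swap_probability(1.0 / temps[k], 1.0 / temps[k + 1],
                             energies[k], energies[k + 1])
        if rng.random() < p:
            energies[k], energies[k + 1] = energies[k + 1], energies[k]
            configs[k], configs[k + 1] = configs[k + 1], configs[k]
            accepted += 1
    return accepted


# Usage: the colder slot (T = 0.5) holds the higher energy, so delta = +5 > 0
# and the swap is always accepted.
rng = random.Random(42)
temps = [0.5, 1.0]
energies = [-5.0, -10.0]
configs = ["conf_A", "conf_B"]   # placeholders for monomer coordinates
n_acc = attempt_neighbor_swaps(temps, energies, configs, rng)
```

Only energies and configurations move between temperature slots; the temperature ladder itself stays fixed.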

Section 2 gives a concise yet thorough overview of GPU architecture. The device consists of multiple streaming multiprocessors (SMs), each containing many cores that execute groups of 32 threads (warps) in lock‑step. The memory hierarchy (global, constant, texture, and shared memory, as well as registers) is explained, emphasizing the high latency of global memory and the low latency of shared memory and registers. The authors describe how CUDA abstracts these details, allowing a kernel to be launched with a grid of thread blocks. Each block can be sized to match the number of monomers in the polymer, and the grid dimension is set to the number of replicas. This mapping ensures that each replica runs independently in its own block while all replicas share the same device.
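The one-block-per-replica, one-thread-per-monomer layout can be illustrated without a GPU. The Python sketch below serially emulates such a launch. `global_index` reproduces the CUDA index formula blockIdx.x * blockDim.x + threadIdx.x. `block_tree_reduce` mimics a shared-memory reduction that combines per-thread partial results (for example, per-monomer energy contributions) into one value per replica. The function names, the replica-major data layout, and the tree reduction itself are illustrative assumptions, not details taken from the paper.

```python
def global_index(block_idx, thread_idx, block_dim):
    """CUDA-style flat index: blockIdx.x * blockDim.x + threadIdx.x."""
    return block_idx * block_dim + thread_idx


def block_tree_reduce(values):
    """Serially emulate a shared-memory tree reduction within one block.

    On a GPU, all threads t below the current half-width would add
    shared[t + half] concurrently, separated by __syncthreads(); here each
    level is an ordinary loop. Handles block sizes that are not powers of 2."""
    shared = list(values)
    if not shared:
        return 0.0
    n = len(shared)
    while n > 1:
        half = (n + 1) // 2
        for t in range(n - half):      # threads that have a partner at t + half
            shared[t] += shared[t + half]
        n = half
    return shared[0]


def launch_emulated(per_thread, data, n_replicas, n_monomers):
    """Emulate a grid of n_replicas blocks, each with n_monomers threads.

    `data` is a flat, replica-major array: monomer m of replica r lives at
    global_index(r, m, n_monomers). Each block sees only its own replica's
    slice and reduces its threads' results independently."""
    totals = []
    for block_idx in range(n_replicas):                      # blockIdx.x -> replica
        start = global_index(block_idx, 0, n_monomers)
        block_data = data[start:start + n_monomers]
        partial = [per_thread(block_data, t)                 # threadIdx.x -> monomer
                   for t in range(n_monomers)]
        totals.append(block_tree_reduce(partial))
    return totals


# Two replicas of a three-"monomer" toy array; each thread contributes its own value.
print(launch_emulated(lambda xs, t: xs[t], [1, 2, 3, 10, 20, 30], 2, 3))  # [6, 60]
```

Because each block reads only its own slice, no synchronization between replicas is needed. Synchronization is required only inside a block, between reduction levels.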

In Section 3 the physical model is defined. The polymer consists of N monomers interacting via a truncated, shifted Lennard‑Jones (LJ) pair potential and bonded by a finitely extensible nonlinear elastic (FENE) potential. The total energy is the sum of all non‑bonded LJ contributions and the N‑1 bonded FENE terms. A simple Metropolis move is employed: a randomly chosen monomer is displaced by a small vector with components uniformly drawn from
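The energy function and single-monomer Metropolis move can be sketched in Python as follows. Several details here are assumptions rather than facts from the summary:

- the parameter values;
- the FENE variant, with an equilibrium bond length `R0_BOND` and maximal deviation `R_FENE`;
- LJ acting only between monomers that are not bonded neighbors;
- reduced units with k_B = 1;
- the maximal displacement `delta`, since the sampling interval is not given in the text above.

Only terms involving the moved monomer are recomputed, so each trial move costs O(N). Moves are accepted with the usual Metropolis probability min(1, exp(−ΔE/T)).

```python
import math
import random

# Hypothetical reduced-unit parameters (not taken from the paper).
EPS = 1.0
R0_BOND, R_FENE, K_FENE = 0.7, 0.3, 40.0
SIGMA = R0_BOND * 2 ** (-1 / 6)   # puts the LJ minimum at R0_BOND
R_CUT = 2.5 * SIGMA


def dist(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def _lj(r):
    s6 = (SIGMA / r) ** 6
    return 4.0 * EPS * (s6 * s6 - s6)


LJ_SHIFT = _lj(R_CUT)


def lj_ts(r):
    """Truncated, shifted LJ: zero beyond R_CUT and continuous at R_CUT."""
    return 0.0 if r >= R_CUT else _lj(r) - LJ_SHIFT


def fene(r):
    """FENE bond, infinite outside (R0_BOND - R_FENE, R0_BOND + R_FENE)."""
    x = (r - R0_BOND) / R_FENE
    if abs(x) >= 1.0:
        return math.inf
    return -0.5 * K_FENE * R_FENE ** 2 * math.log(1.0 - x * x)


def local_energy(pos, i):
    """All terms involving monomer i: FENE to i +/- 1, LJ to |j - i| > 1."""
    e = 0.0
    for j in range(len(pos)):
        if j != i:
            r = dist(pos[i], pos[j])
            e += fene(r) if abs(i - j) == 1 else lj_ts(r)
    return e


def total_energy(pos):
    n = len(pos)
    e = sum(fene(dist(pos[i], pos[i + 1])) for i in range(n - 1))
    e += sum(lj_ts(dist(pos[i], pos[j])) for i in range(n) for j in range(i + 2, n))
    return e


def metropolis_step(pos, temperature, delta, rng):
    """Displace one random monomer by a uniform vector in [-delta, delta]^3."""
    i = rng.randrange(len(pos))
    old = pos[i]
    e_old = local_energy(pos, i)
    pos[i] = tuple(x + rng.uniform(-delta, delta) for x in old)
    d_e = local_energy(pos, i) - e_old
    if d_e <= 0.0 or rng.random() < math.exp(-d_e / temperature):
        return True
    pos[i] = old   # reject: restore the previous position
    return False


rng = random.Random(1)
chain = [(R0_BOND * k, 0.0, 0.0) for k in range(8)]   # straight chain at bond length R0_BOND
accepted = sum(metropolis_step(chain, 1.0, 0.05, rng) for _ in range(1000))
```

A move that would stretch a bond beyond the FENE limit yields ΔE = ∞ and is always rejected, so bond lengths stay within the allowed range.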
