Scientific Visualization in Astronomy: Towards the Petascale Astronomy Era

Astronomy is entering a new era of discovery, coincident with the establishment of new facilities for observation and simulation that will routinely generate petabytes of data. While an increasing reliance on automated data analysis is anticipated, a critical role will remain for visualization-based knowledge discovery. We have investigated scientific visualization applications in astronomy through an examination of the literature published during the last two decades. We identify the two most active fields for progress - visualization of large-N particle data and spectral data cubes - discuss open areas of research, and introduce a mapping between astronomical sources of data and data representations used in general purpose visualization tools. We discuss contributions using high performance computing architectures (e.g., distributed processing and GPUs), collaborative astronomy visualization, the use of workflow systems to store metadata about visualization parameters, and the use of advanced interaction devices. We examine a number of issues that may be limiting the spread of scientific visualization research in astronomy and identify six grand challenges for scientific visualization research in the Petascale Astronomy Era.

💡 Research Summary

The paper provides a comprehensive review of scientific visualization research in astronomy over the past two decades, positioning it within the emerging “Petascale Astronomy Era.” It begins by highlighting the unprecedented data volumes generated by next‑generation observatories such as ALMA, LSST, and the Square Kilometre Array, as well as cosmological N‑body simulations that can contain up to 10^10 particles. While automated data mining and machine‑learning pipelines are expected to dominate data analysis, the authors argue that human visual perception remains indispensable for pattern recognition, hypothesis testing, and result verification.

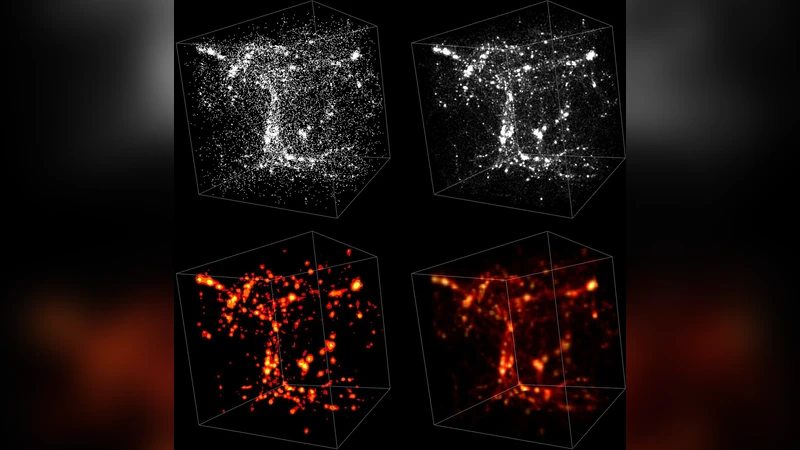

Through an extensive literature survey, the authors identify two primary data domains that have driven visualization advances: (1) large‑N particle simulations and (2) three‑dimensional spectral data cubes. For particle data, four canonical rendering techniques are examined—point plots, splats (texture‑based point sprites), isosurfaces, and volume rendering. Each method’s strengths and limitations are discussed in the context of resolution, memory footprint, and the ability to convey both global structure and fine‑scale features. The paper emphasizes that modern GPU acceleration, combined with MPI‑based distributed memory architectures, enables real‑time or near‑real‑time rendering of billions of particles by partitioning data across nodes and streaming subsets to graphics processors.
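As a rough illustration of the splatting idea mentioned above, the following minimal sketch accumulates particles onto a 2D image by spreading each particle's mass over a small Gaussian footprint. It is not taken from the paper: the function name `splat_particles`, the parameters `sigma_px` and `radius_px`, and the choice of a Gaussian kernel are all assumptions made for illustration, and a production renderer would do this on the GPU rather than in pure Python.

```python
import math


def splat_particles(particles, grid_size, extent, sigma_px=1.0, radius_px=3):
    """Accumulate particles onto a 2D image with one Gaussian splat each.

    particles: iterable of (x, y, mass), with x and y assumed in [0, extent).
    Returns a grid_size x grid_size list of lists indexed as image[row][col],
    where row follows y and col follows x.
    Hypothetical helper for illustration only.
    """
    image = [[0.0] * grid_size for _ in range(grid_size)]
    scale = grid_size / extent  # pixels per data unit
    for x, y, mass in particles:
        cx, cy = x * scale, y * scale  # continuous pixel coordinates
        ix, iy = int(cx), int(cy)      # pixel containing the particle
        # Kernel weights over the part of the footprint inside the image.
        footprint = []
        for j in range(max(0, iy - radius_px), min(grid_size, iy + radius_px + 1)):
            for i in range(max(0, ix - radius_px), min(grid_size, ix + radius_px + 1)):
                d2 = (i + 0.5 - cx) ** 2 + (j + 0.5 - cy) ** 2
                footprint.append((j, i, math.exp(-d2 / (2.0 * sigma_px ** 2))))
        total = sum(w for _, _, w in footprint)
        if total == 0.0:
            continue  # particle lies entirely outside the image
        # Normalise the weights so each particle deposits exactly its mass.
        for j, i, w in footprint:
            image[j][i] += mass * w / total
    return image


if __name__ == "__main__":
    demo = splat_particles([(2.5, 2.5, 1.0), (7.1, 3.3, 2.0)], grid_size=16, extent=10.0)
    print(sum(sum(row) for row in demo))  # total deposited mass
```

Normalising the kernel per particle keeps the total mass in the image equal to the total mass of the particles that fall inside it, which is one reason splats can convey global structure better than single-pixel point plots.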

Spectral data cubes, which embed spatial (RA, Dec) and frequency (or velocity) axes, present a distinct set of challenges. The authors describe adaptive multi‑resolution grids, hierarchical level‑of‑detail schemes, and physically motivated colour‑mapping strategies that allow astronomers to explore weak emission, multi‑channel correlations, and time‑varying phenomena. Volume rendering, often implemented via GPU‑based ray casting or texture‑slicing, is shown to be essential for visualizing diffuse structures that would be invisible in traditional slice‑by‑slice views.
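To make the contrast with slice-by-slice viewing concrete, the sketch below projects a small spectral cube along its frequency axis in two ways: a maximum intensity projection, and a simple front-to-back emission-absorption compositing of the kind used in ray casting. It is an illustrative assumption rather than the method of any tool cited in the paper: the cube layout `cube[channel][y][x]`, the function names, and the linear opacity mapping controlled by `opacity_scale` are all hypothetical.

```python
def max_intensity_projection(cube):
    """Brightest value along the spectral axis for every spatial pixel.

    cube is assumed to be nested lists indexed as cube[channel][y][x].
    """
    n_chan, ny, nx = len(cube), len(cube[0]), len(cube[0][0])
    return [[max(cube[k][j][i] for k in range(n_chan)) for i in range(nx)]
            for j in range(ny)]


def composite_along_axis(cube, opacity_scale=1.0, min_transmittance=1e-4):
    """Front-to-back emission-absorption compositing along the spectral axis.

    Each voxel's opacity is its value times opacity_scale, clamped to [0, 1]
    (an assumed, very simple transfer function). Channel 0 is treated as the
    front of the ray.
    """
    n_chan, ny, nx = len(cube), len(cube[0]), len(cube[0][0])
    image = [[0.0] * nx for _ in range(ny)]
    for j in range(ny):
        for i in range(nx):
            accumulated, transmittance = 0.0, 1.0
            for k in range(n_chan):
                value = cube[k][j][i]
                alpha = min(1.0, max(0.0, value * opacity_scale))
                accumulated += transmittance * alpha * value
                transmittance *= 1.0 - alpha
                if transmittance < min_transmittance:
                    break  # early ray termination: nothing behind is visible
            image[j][i] = accumulated
    return image


if __name__ == "__main__":
    cube = [
        [[0.0, 0.1], [0.2, 0.0]],
        [[0.5, 0.0], [0.0, 0.3]],
        [[0.1, 0.9], [0.0, 0.0]],
    ]
    print(max_intensity_projection(cube))
    print(composite_along_axis(cube))
```

The maximum intensity projection keeps only the brightest channel per pixel, whereas compositing lets faint emission spread over many channels contribute to the final pixel, which is why volume rendering can reveal diffuse structure that single slices hide.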

The review proceeds to discuss high‑performance computing (HPC) contributions, including GPU‑driven pipelines, hybrid CPU‑GPU clusters, and cloud‑based rendering services. It highlights case studies where MPI‑CUDA hybrids compute isosurfaces in parallel, and where WebGL viewers enable remote, collaborative exploration of petabyte‑scale datasets. Collaborative visualization platforms, workflow management systems (e.g., VisTrails, Kepler), and the capture of visualization metadata are presented as mechanisms to ensure reproducibility and to facilitate multi‑user interaction.
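One way to picture the capture of visualization metadata is a small, serializable record of the parameters that produced a view. The sketch below is a hedged illustration only: the class `VisualizationRecord`, its field names, and the use of a SHA-256 fingerprint are assumptions made here, and they do not reflect how VisTrails, Kepler, or any other workflow system actually stores provenance.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class VisualizationRecord:
    """Hypothetical record of the settings needed to reproduce one view."""
    dataset: str                 # identifier of the input data
    technique: str               # e.g. "volume_rendering" or "isosurface"
    camera: dict                 # position, look-at point, field of view
    transfer_function: list      # [value, opacity] control points
    parameters: dict = field(default_factory=dict)  # technique-specific options

    def to_json(self):
        # Sorted keys give a stable serialization for identical settings.
        return json.dumps(asdict(self), sort_keys=True)

    def fingerprint(self):
        # Identical settings always hash to the same short identifier.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


def record_from_json(text):
    """Rebuild a record from its JSON form, e.g. one shared by a collaborator."""
    return VisualizationRecord(**json.loads(text))


if __name__ == "__main__":
    record = VisualizationRecord(
        dataset="example_cube",  # placeholder name, not a real dataset
        technique="volume_rendering",
        camera={"position": [0.0, 0.0, 5.0], "look_at": [0.0, 0.0, 0.0], "fov_deg": 40.0},
        transfer_function=[[0.0, 0.0], [0.5, 0.2], [1.0, 0.9]],
        parameters={"samples_per_ray": 256},
    )
    restored = record_from_json(record.to_json())
    print(restored == record, record.fingerprint()[:12])
```

Storing such a record next to each rendered image would let a collaborator restore the same view later, and the fingerprint offers a cheap check that two sessions used identical settings.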

Advanced interaction devices (virtual‑reality headsets, 3‑D mice, and touch interfaces) are surveyed, illustrating how immersive environments can improve intuition when navigating high‑dimensional data. The authors also note the relative scarcity of streamline visualizations in astronomy, a technique common in fluid dynamics, suggesting a potential area for cross‑disciplinary borrowing.

In the final sections, the paper diagnoses several systemic barriers: heterogeneous data formats, lack of standardized APIs, limited training in visualization techniques, and insufficient integration of visualization into routine data pipelines. To address these, the authors propose six “grand challenges” for the Petascale Astronomy Era: (1) real‑time streaming visualization of petabyte‑scale data, (2) seamless integration of multi‑modal and multi‑resolution datasets, (3) automated, standards‑based visualization pipelines with rich metadata, (4) AI‑augmented visual analytics for feature detection and guided exploration, (5) cloud‑native collaborative visualization services, and (6) sustainable education and community building to cultivate expertise.

The paper concludes that meeting these challenges will be critical for astronomers to extract scientific insight from ever‑growing data volumes, to validate automated analyses, and to communicate results effectively within the broader scientific community and to the public.
