Establishing Applicability of SSDs to LHC Tier-2 Hardware Configuration

Solid State Disk technologies are increasingly replacing high-speed hard disks as the storage technology in high-random-I/O environments. There are several potentially I/O-bound services within the typical LHC Tier-2. In the back-end, with the trend towards many-core architectures continuing, worker nodes running many single-threaded jobs and storage nodes delivering many simultaneous files can both exhibit I/O-limited efficiency. We estimate the effectiveness of affordable SSDs in the context of worker nodes on a large Tier-2 production setup, using both low-level tools and real LHC I/O-intensive data analysis jobs, and compare and contrast them with high-performance spinning-disk-based solutions. We consider the applicability of each solution in the context of its price/performance metrics, with an eye on the pragmatic issues facing Tier-2 provision and upgrades.

💡 Research Summary

The paper investigates whether affordable solid‑state drives (SSDs) can replace conventional hard‑disk drives (HDDs) in the internal storage of LHC Tier‑2 worker nodes, where many single‑threaded analysis jobs run concurrently and generate a high volume of random I/O. Two consumer‑grade MLC SSD models were tested: a low‑end Kingston Value 128 GB and a mid‑range Intel X‑25M G2 160 GB. These were compared against a single 7200 RPM HDD, a RAID‑1 mirror of two such disks, and a RAID‑0 stripe of two disks.
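Part of RAID‑0's advantage with many concurrent jobs is that a stripe lets two spindles serve independent requests at the same time. The sketch below shows the generic block‑to‑disk mapping of a striped array; the two‑disk layout matches the configuration above, but the 128‑sector (64 KiB) chunk size is an assumption, not a figure from the study.

```python
def raid0_location(lba: int, n_disks: int = 2, chunk_sectors: int = 128) -> tuple[int, int]:
    """Map a logical sector of a RAID-0 array to (member disk, sector on that disk).

    Consecutive runs of `chunk_sectors` sectors form chunks, and chunks are
    assigned to the member disks round-robin. The default chunk size is an
    assumed value for illustration only.
    """
    chunk, within_chunk = divmod(lba, chunk_sectors)
    disk = chunk % n_disks            # round-robin over member disks
    stripe_row = chunk // n_disks     # number of full stripes preceding this chunk
    return disk, stripe_row * chunk_sectors + within_chunk


# Two reads that land in different chunks can be served by different spindles.
print(raid0_location(130))  # (1, 2)
print(raid0_location(300))  # (0, 172)
```

A RAID‑1 mirror can also spread reads across both disks, but every write must go to both, which matters for the write‑heavy staging workload discussed below.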

The methodology combined low‑level I/O tracing (blktrace) with the HammerCloud automated job submission framework. Real ATLAS analysis workloads were executed using two data‑access patterns: the FileStager method (which stages files locally on the worker node) and the DQ2_LOCAL method (which reads files directly from the site’s storage). For each configuration the authors measured mean job efficiency (CPU time divided by wall‑clock time, so that time spent waiting on I/O lowers the figure) and overall system throughput (GB s⁻¹).
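The two metrics can be illustrated with a minimal sketch, assuming the usual HEP definition of job efficiency as CPU time over wall‑clock time and a per‑job accounting record. `JobRecord` and its fields are hypothetical and are not HammerCloud's actual data model.

```python
from dataclasses import dataclass


@dataclass
class JobRecord:
    """Hypothetical per-job accounting record (not a HammerCloud data structure)."""
    cpu_seconds: float   # CPU time consumed by the single-threaded job
    wall_seconds: float  # wall-clock time from job start to job end
    bytes_read: int      # bytes read from local disk or site storage


def job_efficiency(job: JobRecord) -> float:
    """CPU time over wall-clock time; values below 1.0 mean the job spent time waiting."""
    return job.cpu_seconds / job.wall_seconds


def mean_efficiency(jobs: list[JobRecord]) -> float:
    """Unweighted mean of the per-job efficiencies."""
    return sum(job_efficiency(j) for j in jobs) / len(jobs)


def node_throughput(jobs: list[JobRecord], window_seconds: float) -> float:
    """Total bytes read by all jobs on the node per second of the measurement window."""
    return sum(j.bytes_read for j in jobs) / window_seconds


jobs = [JobRecord(750.0, 1000.0, 3_000_000_000), JobRecord(900.0, 1000.0, 2_000_000_000)]
print(f"mean efficiency: {mean_efficiency(jobs):.3f}")                 # mean efficiency: 0.825
print(f"throughput: {node_throughput(jobs, 1000.0) / 1e6:.1f} MB/s")  # throughput: 5.0 MB/s
```

Because each job is single‑threaded, an efficiency of 1.0 is the ceiling; on an I/O‑bound node the shortfall from 1.0 approximates the fraction of wall time spent waiting for data.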

Results show that, for an 8‑core node, the single HDD achieved 75 % efficiency and 5.5 GB s⁻¹, while a RAID‑0 array reached 90 % efficiency and 7 GB s⁻¹. The Kingston SSD lagged with 60 % efficiency and 4.5 GB s⁻¹, and the Intel SSD performed slightly better at 80 % efficiency and 6 GB s⁻¹. On a 24‑core node the RAID‑0 configuration again led (86 % efficiency, 21 GB s⁻¹), whereas the Intel SSD delivered only 50 % efficiency and 12 GB s⁻¹. Thus, despite the SSDs’ negligible seek latency, the write‑intensive, highly concurrent analysis workload does not gain enough from them to close the gap with the RAID‑0 array.
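Normalising the quoted throughputs by core count makes the scaling difference explicit: RAID‑0 holds 0.875 per core at both 8 and 24 cores, while the Intel SSD falls from 0.75 to 0.5 per core. The sketch below only rearranges the figures quoted above, in their stated units, and assumes one job per core.

```python
# (configuration, cores) -> (mean job efficiency, node throughput), as quoted above.
RESULTS = {
    ("single HDD", 8): (0.75, 5.5),
    ("RAID-0", 8): (0.90, 7.0),
    ("Kingston SSD", 8): (0.60, 4.5),
    ("Intel SSD", 8): (0.80, 6.0),
    ("RAID-0", 24): (0.86, 21.0),
    ("Intel SSD", 24): (0.50, 12.0),
}


def throughput_per_core(config: str, cores: int) -> float:
    """Node throughput divided by the core count (assumes one running job per core)."""
    _efficiency, throughput = RESULTS[(config, cores)]
    return throughput / cores


for config, cores in RESULTS:
    print(f"{config:>12} on {cores:>2} cores: {throughput_per_core(config, cores):.3f} per core")
```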

Cost analysis further weakens the SSD case. The Kingston SSD costs £155 (≈£1.21 per GB) and the Intel SSD £245 (≈£1.53 per GB), whereas two 500 GB HDDs in RAID‑0 cost only £80 in total (≈£0.08 per GB of striped capacity). When price is normalised by the throughput measured on an 8‑core node, the SSDs are three to ten times more expensive than the RAID‑0 solution. Even when the disk cost is considered as a fraction of the total node cost (≈10 %), the SSDs’ price‑to‑performance ratios remain unfavourable.
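The per‑GB and per‑throughput arithmetic can be reproduced from the quoted prices, capacities and 8‑core throughputs alone. This is a minimal sketch under those inputs: the RAID‑0 entry divides the stated £80 by the full 1000 GB of a two‑disk stripe, and no other costs are included. With these figures the SSDs come out at about 3.0× (Kingston) and 3.6× (Intel) the RAID‑0 cost per unit of throughput.

```python
# Purchase price (GBP) and usable capacity (GB), as quoted above.
DEVICES = {
    "Kingston SSD": (155.0, 128),
    "Intel SSD": (245.0, 160),
    "2 x 500 GB RAID-0": (80.0, 1000),  # stated £80 total over the striped 1000 GB
}
# Throughput measured on an 8-core node, in the units quoted above.
THROUGHPUT_8CORE = {"Kingston SSD": 4.5, "Intel SSD": 6.0, "2 x 500 GB RAID-0": 7.0}
BASELINE = "2 x 500 GB RAID-0"


def price_per_gb(name: str) -> float:
    price, capacity_gb = DEVICES[name]
    return price / capacity_gb


def price_per_throughput(name: str) -> float:
    price, _capacity = DEVICES[name]
    return price / THROUGHPUT_8CORE[name]


def cost_ratio_vs_baseline(name: str) -> float:
    """How many times more a unit of throughput costs than on the RAID-0 baseline."""
    return price_per_throughput(name) / price_per_throughput(BASELINE)


for name in DEVICES:
    print(f"{name:>18}: £{price_per_gb(name):.2f}/GB, "
          f"{cost_ratio_vs_baseline(name):.1f}x the RAID-0 cost per unit throughput")
```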

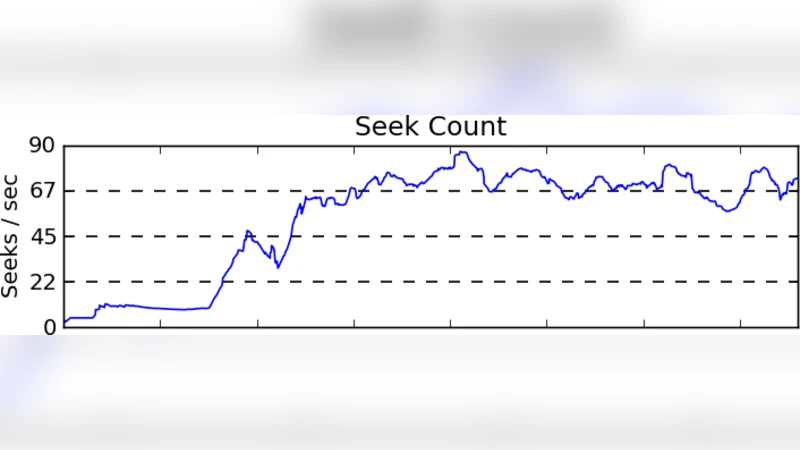

The authors also examined the impact of ROOT file ordering. Re‑ordering AOD files to make accesses more sequential reduces peak seek rates on SSDs, but the overall job efficiency still trails that of RAID‑0 when files are staged locally. Moreover, the benefit of SSDs disappears when the staging step is bypassed (DQ2_LOCAL), indicating that the primary bottleneck is the write‑heavy staging process rather than pure random reads.
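One simple way to turn a block trace into a seek rate is to count requests that do not start where the previous request ended. The sketch assumes the trace has already been parsed into (timestamp, start sector, length) tuples, for instance from blkparse output (the parsing step is not shown), treats everything as a single request stream, and uses 1‑second bins; none of these choices come from the study.

```python
from collections import Counter
from typing import Iterable

# One block request: (timestamp in seconds, start sector, length in sectors).
Request = tuple[float, int, int]


def seek_times(requests: Iterable[Request]) -> list[float]:
    """Timestamps of requests that do not start where the previous request ended."""
    seeks: list[float] = []
    next_sector = None
    for t, sector, length in sorted(requests):  # tuples sort by timestamp first
        if next_sector is not None and sector != next_sector:
            seeks.append(t)
        next_sector = sector + length
    return seeks


def peak_seek_rate(requests: Iterable[Request], bin_seconds: float = 1.0) -> float:
    """Largest number of seeks in any time bin, expressed as seeks per second."""
    per_bin = Counter(int(t // bin_seconds) for t in seek_times(requests))
    return max(per_bin.values()) / bin_seconds if per_bin else 0.0


sequential = [(0.0, 0, 8), (0.1, 8, 8), (0.2, 16, 8), (0.3, 24, 8)]
scattered = [(0.0, 0, 8), (0.1, 1000, 8), (0.2, 16, 8), (0.3, 5000, 8)]
print(peak_seek_rate(sequential), peak_seek_rate(scattered))  # 0.0 3.0
```

Reordering a file so that its baskets are read in on‑disk order moves a trace from the `scattered` shape towards the `sequential` one, which is the effect the re‑ordering experiment targets.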

In discussion, the paper acknowledges that SSDs excel as metadata stores or read‑only caches, especially when paired with distributed file systems such as Lustre, GPFS, or pNFS. However, for the typical Tier‑2 scenario, where budget constraints dominate and analysis jobs both read and write on the same local filesystem, affordable SSDs do not provide a cost‑effective improvement. The authors suggest that future work could explore dedicated SSD cache partitions, more aggressive ROOT file optimisation, or the use of SSDs in a metadata‑only role.

In conclusion, the study finds that, given current SSD pricing and the mixed read/write nature of ATLAS analysis workloads, SSDs are not a viable replacement for HDDs on Tier‑2 worker nodes. RAID‑0 HDD arrays deliver superior throughput and far better price‑performance, making them the preferred storage solution for these sites.
