Learning Program Embeddings to Propagate Feedback on Student Code

Providing feedback, both assessing final work and giving hints to stuck students, is difficult for open-ended assignments in massive online classes which can range from thousands to millions of students. We introduce a neural network method to encode programs as a linear mapping from an embedded precondition space to an embedded postcondition space and propose an algorithm for feedback at scale using these linear maps as features. We apply our algorithm to assessments from the Code.org Hour of Code and Stanford University’s CS1 course, where we propagate human comments on student assignments to orders of magnitude more submissions.

💡 Research Summary

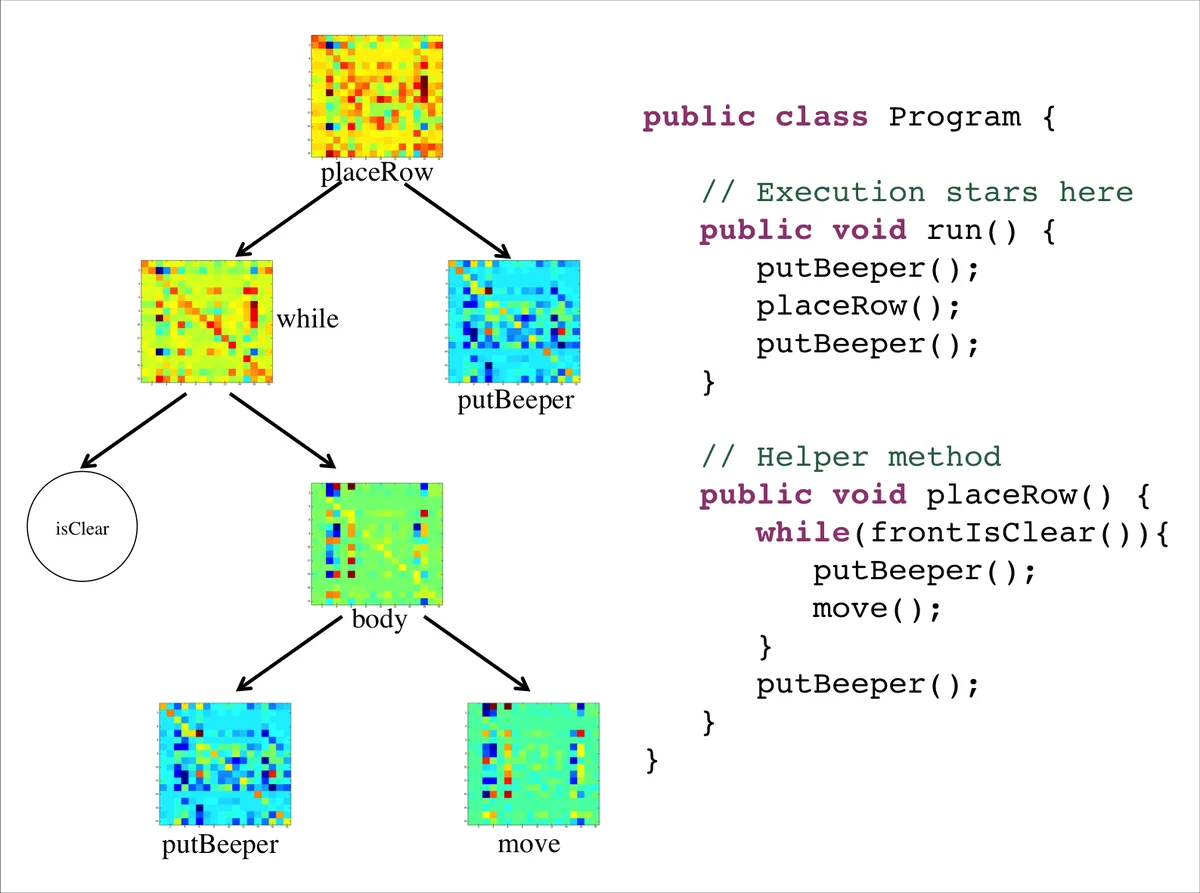

The paper tackles the challenge of providing personalized feedback on open‑ended programming assignments in massive online courses, where thousands to millions of students submit code. The authors propose a novel neural‑network based representation that treats each program as a linear transformation between an embedded pre‑condition space and an embedded post‑condition space. By collecting Hoare triples (P, A, Q) – where P is the state before executing program fragment A and Q is the state after – they obtain a large training set of (pre‑state, program, post‑state) examples.

Both pre‑conditions and post‑conditions are first encoded into a common d‑dimensional vector (e.g., one‑hot encodings of grid‑world positions). An encoder network f(P)=φ(W_enc · P + b_enc) maps these vectors into an m‑dimensional nonlinear feature space; a decoder ψ reconstructs the original state from the feature space. The key assumption is that, after this nonlinear embedding, the effect of any program fragment A can be approximated by a linear map M_A such that f(Q) ≈ M_A · f(P). The matrix M_A, of size m × m, becomes the program’s embedding.

Two modeling choices are explored. The primary “non‑parametric model” (NPM) assigns a distinct matrix M_i to each unique program (or AST subtree) observed in the training data. The overall parameter set includes the encoder/decoder weights and all program matrices. Training minimizes a composite loss: (1) a prediction loss measuring how well M_A · f(P) predicts the embedded post‑condition, (2) an auto‑encoding loss ensuring the encoder/decoder faithfully reconstruct states, and (3) an L2 regularization term. Optimization uses minibatch stochastic gradient descent with Adagrad, and a smart initialization is performed by first training an auto‑encoder on the state space and then solving ridge regression for each program to obtain an initial M_i.

Triples are extracted by instrumenting program execution on a suite of unit tests. Every time a subtree A is executed, the system records the before‑state P and after‑state Q, yielding a massive collection of triples, including those from inner loops and conditional branches. Limiting the number of triples per subtree prevents over‑representation of repetitive structures. Collecting triples at the subtree level is crucial because it captures not only functional behavior but also implementation style, which is essential for educational feedback.

The learned embeddings are then used for feedback propagation. Human graders first annotate a small, carefully selected set of exemplar programs with a subset H of possible feedback labels L (e.g., comments on correctness, style, strategy). Each program’s matrix M_A serves as a feature vector for a set of N binary classifiers (one per label). The classifiers are trained on the annotated examples and then applied to the vast majority of unlabelled submissions, automatically assigning the same feedback categories. This two‑phase active‑learning loop dramatically amplifies the reach of human feedback.

Empirical evaluation is performed on two large datasets. The first is the Code.org “Hour of Code” platform, with over 27 million learners writing simple Karel‑style programs (no user‑defined variables). The second is Stanford’s CS1 (Programming Methodologies) course, featuring thousands of Java submissions with richer language features. In both cases, the authors generate thousands of Hoare triples per assignment, embed the programs, and compare feedback propagation performance against baselines that rely on AST edit distance or hand‑crafted features. Results show that the embedding‑based method achieves substantially higher precision and recall in predicting teacher comments, especially for functional errors, and also captures stylistic patterns via subtree embeddings.

The paper’s contributions are threefold: (1) a method for learning joint embeddings of program states and programs that captures functional and stylistic aspects; (2) a practical algorithm for scaling teacher feedback to massive code repositories using these embeddings; and (3) a demonstration of the approach’s effectiveness on real‑world, large‑scale educational data. By framing programs as linear operators in a learned feature space, the work bridges ideas from functional maps in graphics, kernel embeddings of distributions, and recursive neural networks for language, opening a promising direction for automated reasoning about code at scale. Future work may extend the technique to languages with user‑defined variables, multi‑module projects, and more nuanced pedagogical objectives such as code optimization or algorithmic complexity feedback.

Comments & Academic Discussion

Loading comments...

Leave a Comment