Extrinsic Methods for Coding and Dictionary Learning on Grassmann Manifolds

Sparsity-based representations have recently led to notable results in various visual recognition tasks. In a separate line of research, Riemannian manifolds have been shown useful for dealing with features and models that do not lie in Euclidean spaces. With the aim of building a bridge between the two realms, we address the problem of sparse coding and dictionary learning over the space of linear subspaces, which form Riemannian structures known as Grassmann manifolds. To this end, we propose to embed Grassmann manifolds into the space of symmetric matrices by an isometric mapping. This in turn enables us to extend two sparse coding schemes to Grassmann manifolds. Furthermore, we propose closed-form solutions for learning a Grassmann dictionary, atom by atom. Lastly, to handle non-linearity in data, we extend the proposed Grassmann sparse coding and dictionary learning algorithms through embedding into Hilbert spaces. Experiments on several classification tasks (gender recognition, gesture classification, scene analysis, face recognition, action recognition and dynamic texture classification) show that the proposed approaches achieve considerable improvements in discrimination accuracy, in comparison to state-of-the-art methods such as kernelized Affine Hull Method and graph-embedding Grassmann discriminant analysis.

💡 Research Summary

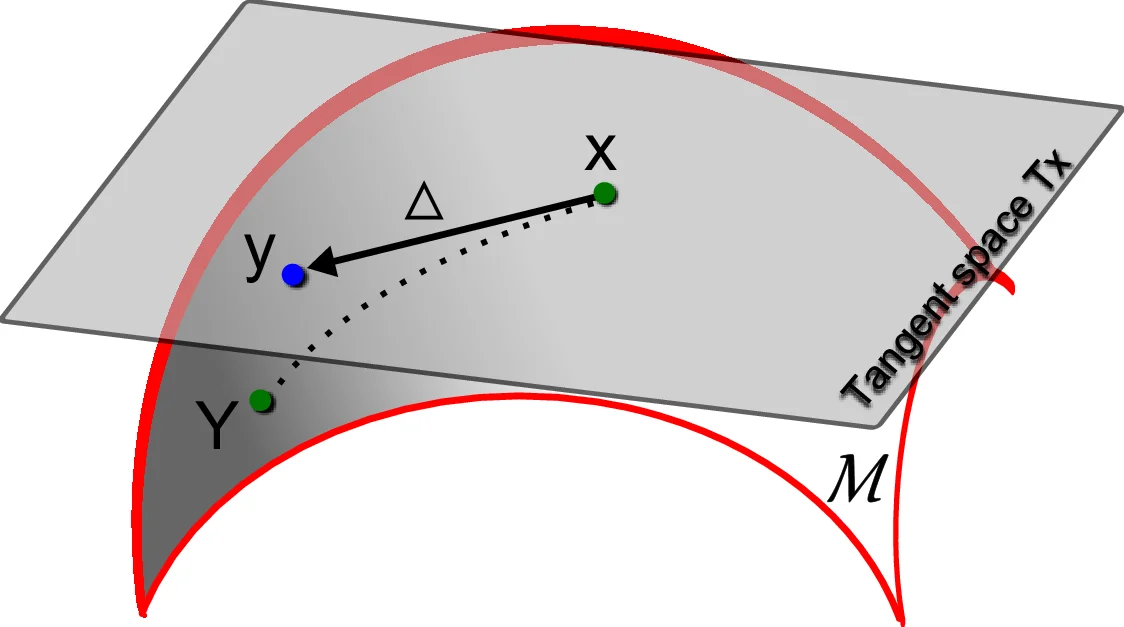

The paper tackles the problem of representing and learning dictionaries for data that lie on Grassmann manifolds, i.e., spaces whose points are linear subspaces of a fixed dimension. Traditional sparse coding and dictionary learning techniques are defined in Euclidean spaces, while recent work on Riemannian manifolds has shown that many visual descriptors (e.g., video clips, image sets) naturally reside on non‑Euclidean structures. Existing intrinsic approaches for Grassmann manifolds rely on the logarithm map to move points to a tangent space. However, the log map has to be evaluated through a matrix decomposition (an SVD) for every pair of points involved, which is computationally expensive and makes both coding and dictionary learning impractical for large‑scale vision tasks.

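To overcome these limitations, the authors propose an extrinsic framework based on an isometric embedding of the Grassmann manifold into the space of symmetric matrices, whose image consists of symmetric positive‑semidefinite (SPSD) projection matrices. The embedding is the projection map Φ: G(p,d) → S⁺(d) defined by Φ(X)=XXᵀ, where X∈ℝ^{d×p} has orthonormal columns; Φ(X) depends only on the subspace spanned by X, not on the chosen basis. The embedding is isometric in the Riemannian sense (up to a constant scale factor), but the straight‑line Frobenius distance it induces is the projection (chordal) distance rather than the geodesic one: ‖Φ(X) − Φ(Y)‖_F = √2‖sin θ‖₂, where θ is the vector of principal angles between the two subspaces, whereas the geodesic distance is ‖θ‖₂. The two are proportional to first order for small angles. Consequently, the manifold geometry is well retained while all computations can be performed with standard linear‑algebra tools in Euclidean space.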
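A small numerical check of how the embedding relates to principal angles. With θ the principal angles (arccos of the singular values of XᵀY), ‖Φ(X) − Φ(Y)‖_F² = 2 Σ_i sin²θ_i. Since sin θ ≤ θ, the chordal distance divided by √2 never exceeds the geodesic distance ‖θ‖₂. The sketch below uses NumPy on random subspaces. The helpers `random_basis` and `principal_angles` are illustrative names, not taken from the paper.

```python
import numpy as np

def random_basis(d, p, rng):
    """Orthonormal d x p basis of a random p-dimensional subspace (QR of a Gaussian matrix)."""
    q, _ = np.linalg.qr(rng.standard_normal((d, p)))
    return q

def principal_angles(X, Y):
    """Principal angles between span(X) and span(Y): arccos of the singular values of X^T Y."""
    s = np.linalg.svd(X.T @ Y, compute_uv=False)
    return np.arccos(np.clip(s, 0.0, 1.0))

rng = np.random.default_rng(0)
d, p = 10, 3
X, Y = random_basis(d, p, rng), random_basis(d, p, rng)
theta = principal_angles(X, Y)

# Phi(X) = X X^T depends only on the subspace: a change of basis X -> X R leaves it unchanged
R = random_basis(p, p, rng)  # random p x p orthogonal matrix
same_point = np.allclose((X @ R) @ (X @ R).T, X @ X.T)

chordal = np.linalg.norm(X @ X.T - Y @ Y.T, "fro")  # extrinsic (Frobenius) distance
geodesic = np.linalg.norm(theta)                     # arc-length distance ||theta||_2
print(chordal**2, 2 * np.sum(np.sin(theta) ** 2))    # identical up to rounding
print(chordal / np.sqrt(2), geodesic)                # ||sin theta||_2 <= ||theta||_2
```

The first printed pair agrees to floating‑point precision. The second pair shows the gap between the sine‑based chordal distance and the angle‑based geodesic distance, which shrinks as the subspaces get closer.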

Within this embedded space the authors develop two sparse coding schemes:

- Grassmann Sparse Coding (GSC) – a direct analogue of the Lasso. For a query subspace X and a dictionary {D_j}, the objective is

  min_y ‖Φ(X) − Σ_j y_j Φ(D_j)‖_F² + λ‖y‖₁.

  The problem is convex in y and can be solved with any off‑the‑shelf ℓ₁ solver (e.g., ADMM, LARS).

- Grassmann Locality‑constrained Linear Coding (GLCC) – replaces the ℓ₁ penalty with a locality term that forces the code to be similar to a pre‑computed weight vector w derived from the k nearest dictionary atoms. The formulation becomes

  min_y ‖Φ(X) − Σ_j y_j Φ(D_j)‖_F² + λ‖y − w‖₂²,

  encouraging smooth, locally adaptive representations while remaining solvable as a simple quadratic program.
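A minimal NumPy sketch of both coders, assuming every subspace is given as a d×p orthonormal basis with the same p. Both objectives are expanded into the Gram quantities K_ij = ⟨Φ(D_i),Φ(D_j)⟩ and k_j = ⟨Φ(D_j),Φ(X)⟩, so no d×d matrix is formed. GSC is solved here by cyclic coordinate descent with soft‑thresholding. This is a swapped‑in ℓ₁ solver, not necessarily the one the authors use. The text does not specify how the locality vector w is built, so the uniform weights on the nearest atoms below are a hypothetical choice. All function names are illustrative.

```python
import numpy as np

def gram(A_list, B_list):
    """Frobenius inner products <Phi(A), Phi(B)> = ||A^T B||_F^2 between embedded subspaces."""
    return np.array([[np.linalg.norm(A.T @ B, "fro") ** 2 for B in B_list] for A in A_list])

def grassmann_sparse_code(X, atoms, lam, n_sweeps=200):
    """GSC by cyclic coordinate descent on y^T K y - 2 k^T y + lam * ||y||_1.

    This equals the GSC objective minus the constant ||Phi(X)||_F^2.
    """
    K = gram(atoms, atoms)      # K[i, j] = <Phi(D_i), Phi(D_j)>
    k = gram(atoms, [X])[:, 0]  # k[j] = <Phi(D_j), Phi(X)>
    y = np.zeros(len(atoms))
    for _ in range(n_sweeps):
        for j in range(len(atoms)):
            # Correlation of the residual with atom j, excluding atom j's own term
            r = k[j] - K[j] @ y + K[j, j] * y[j]
            # Soft-thresholding: exact minimiser over y[j] with the other entries fixed
            y[j] = np.sign(r) * max(abs(r) - lam / 2, 0.0) / K[j, j]
    return y

def locality_code(X, atoms, lam, n_neighbors=3):
    """Closed-form minimiser of ||Phi(X) - sum_j y_j Phi(D_j)||_F^2 + lam * ||y - w||_2^2.

    Hypothetical w: weight 1 / n_neighbors on the nearest atoms, 0 elsewhere.
    """
    K = gram(atoms, atoms)
    k = gram(atoms, [X])[:, 0]
    p = X.shape[1]
    dist2 = 2 * p - 2 * k  # squared chordal distances, since ||Phi(B)||_F^2 = p for each basis
    w = np.zeros(len(atoms))
    w[np.argsort(dist2)[:n_neighbors]] = 1.0 / n_neighbors
    # Setting the gradient to zero gives (K + lam I) y = k + lam w
    y = np.linalg.solve(K + lam * np.eye(len(atoms)), k + lam * w)
    return y, w

rng = np.random.default_rng(1)
d, p, n_atoms = 6, 2, 8
atoms = [np.linalg.qr(rng.standard_normal((d, p)))[0] for _ in range(n_atoms)]
X = atoms[0]  # query that coincides with the first atom
y_gsc = grassmann_sparse_code(X, atoms, lam=0.1)
y_loc, w = locality_code(X, atoms, lam=0.1)
```

With the query equal to D_0, the optimality conditions give y = (1 − λ/(2p)) e_0. For λ = 0.1 and p = 2 this is 0.975 on the first atom and exactly zero elsewhere, because ‖D_jᵀD_0‖_F² < p for any other subspace.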

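Both methods benefit from the fact that the Φ(D_j) are ordinary elements of the vector space of symmetric matrices, so the reconstruction error is a standard Frobenius norm and no manifold‑specific operations are needed. Moreover, every term of that norm reduces to inner products ⟨Φ(A), Φ(B)⟩_F = ‖AᵀB‖_F², so only p×p products of the d×p bases have to be computed.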
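To see why no manifold‑specific operations are needed, expand the squared error:

‖Φ(X) − Σ_j y_jΦ(D_j)‖_F² = ‖Φ(X)‖_F² − 2Σ_j y_j⟨Φ(X),Φ(D_j)⟩ + Σ_{i,j} y_i y_j⟨Φ(D_i),Φ(D_j)⟩

Each inner product satisfies ⟨Φ(A),Φ(B)⟩_F = tr(AAᵀBBᵀ) = ‖AᵀB‖_F². A minimal NumPy check on synthetic random data shows that the explicit d×d computation and the inner‑product form agree.

```python
import numpy as np

rng = np.random.default_rng(2)
d, p, n_atoms = 8, 2, 5

def random_basis():
    """Orthonormal d x p basis of a random subspace."""
    return np.linalg.qr(rng.standard_normal((d, p)))[0]

X = random_basis()
atoms = [random_basis() for _ in range(n_atoms)]
y = rng.standard_normal(n_atoms)  # arbitrary code vector

# Explicit route: form the d x d embedded matrices and take the Frobenius norm
recon = sum(y_j * (D @ D.T) for y_j, D in zip(y, atoms))
explicit = np.linalg.norm(X @ X.T - recon, "fro") ** 2

# Implicit route: only p x p products are needed, since <Phi(A), Phi(B)>_F = ||A^T B||_F^2
K = np.array([[np.linalg.norm(Di.T @ Dj, "fro") ** 2 for Dj in atoms] for Di in atoms])
k = np.array([np.linalg.norm(Dj.T @ X, "fro") ** 2 for Dj in atoms])
implicit = p - 2 * y @ k + y @ K @ y  # ||Phi(X)||_F^2 = p for an orthonormal basis
```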

For dictionary learning, the authors adopt an atom‑wise update strategy. Holding all codes and all atoms except D_r fixed, the sub‑problem reduces to minimizing a quadratic function of Φ(D_r). Because ‖Φ(D_r)‖_F² = p is constant, this is equivalent to maximizing tr(D_rᵀ S D_r), where S = Σ_n y_{n,r}(Φ(X_n) − Σ_{j≠r} y_{n,j} Φ(D_j)) is a symmetric d×d matrix built from the residuals of the training subspaces X_n. The optimum is given in closed form by the p leading eigenvectors of S. These already form an orthonormal basis and therefore directly define the new atom on the Grassmann manifold. The update is repeated for each atom and iterated until convergence. Each atom update costs one eigendecomposition of a d×d matrix, so the number of decompositions per sweep grows only linearly with the number of atoms and the learning algorithm remains computationally efficient.
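A minimal NumPy sketch of one atom update, assuming the reconstruction objective Σ_n ‖Φ(X_n) − Σ_j y_{n,j}Φ(D_j)‖_F² with the codes held fixed (an ℓ₁ term on the codes does not involve the atoms). Because ‖Φ(D_r)‖_F² = p, minimizing over atom D_r is equivalent to maximizing tr(D_rᵀ S D_r), with S = Σ_n y_{n,r}(Φ(X_n) − Σ_{j≠r} y_{n,j}Φ(D_j)). The p leading eigenvectors of S attain that maximum. Function names are hypothetical and the data are random.

```python
import numpy as np

def proj(B):
    """Embedding Phi(B) = B B^T of an orthonormal basis B."""
    return B @ B.T

def objective(samples, atoms, Y):
    """sum_n ||Phi(X_n) - sum_j Y[n, j] Phi(D_j)||_F^2 (an l1 term on Y would not involve atoms)."""
    total = 0.0
    for n, Xn in enumerate(samples):
        recon = sum(Y[n, j] * proj(D) for j, D in enumerate(atoms))
        total += np.linalg.norm(proj(Xn) - recon, "fro") ** 2
    return total

def update_atom(r, samples, atoms, Y):
    """Closed-form update of atom r with the codes Y and all other atoms held fixed."""
    d, p = atoms[r].shape
    S = np.zeros((d, d))
    for n, Xn in enumerate(samples):
        # Residual of sample n without atom r's contribution
        residual = proj(Xn) - sum(Y[n, j] * proj(D) for j, D in enumerate(atoms) if j != r)
        S += Y[n, r] * residual
    # Maximise tr(D^T S D) over orthonormal D: take the p leading eigenvectors of S
    _, V = np.linalg.eigh(S)  # eigenvalues are returned in ascending order
    return V[:, -p:]

rng = np.random.default_rng(3)
d, p, n_samples, n_atoms = 6, 2, 20, 4

def random_basis():
    """Orthonormal d x p basis of a random subspace."""
    return np.linalg.qr(rng.standard_normal((d, p)))[0]

samples = [random_basis() for _ in range(n_samples)]
atoms = [random_basis() for _ in range(n_atoms)]
Y = rng.standard_normal((n_samples, n_atoms))  # codes held fixed during the update

before = objective(samples, atoms, Y)
atoms[0] = update_atom(0, samples, atoms, Y)
after = objective(samples, atoms, Y)
```

The eigenvector solution is the exact minimizer over D_r, so the objective cannot increase after the update, which makes a convenient sanity check. A full learner would alternate sweeps over all atoms with re‑coding of the samples.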

To handle non‑linear relationships among subspaces, the authors extend both coding and learning to a reproducing kernel Hilbert space (RKHS). The basic kernel on the Grassmann manifold is the projection kernel k(X,Y)=‖XᵀY‖_F², which equals the Frobenius inner product of the embedded matrices, ⟨Φ(X), Φ(Y)⟩_F. On its own this kernel reproduces the linear extrinsic formulation, so non‑linearity comes from kernels built on top of the embedding (for instance, a Gaussian of the projection distance). All operations (reconstruction error, locality weights, dictionary updates) are expressed solely in terms of kernel evaluations, allowing the method to operate implicitly in a high‑dimensional feature space without an explicit mapping. These kernelized versions (K‑GSC / K‑GLCC) significantly improve performance on datasets where linear combinations of embedded subspaces are insufficient.
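The projection kernel ‖XᵀY‖_F² is the linear kernel on the embedded matrices. A non‑linear kernel can be derived from it, for example a Gaussian of the chordal distance, exp(−β‖XXᵀ − YYᵀ‖_F²). This kernel is positive definite because it is a Gaussian kernel on points of a Euclidean space. The sketch below is illustrative: the Gaussian choice, β, and all function names are assumptions, not necessarily what the paper uses. It shows that the feature‑space reconstruction error needs only kernel values: k(X,X) − 2yᵀk_X + yᵀKy.

```python
import numpy as np

def projection_kernel(As, Bs):
    """k_p(X, Y) = ||X^T Y||_F^2 = <X X^T, Y Y^T>_F (linear kernel on the embedding)."""
    return np.array([[np.linalg.norm(A.T @ B, "fro") ** 2 for B in Bs] for A in As])

def gaussian_projection_kernel(As, Bs, beta=1.0):
    """exp(-beta * ||X X^T - Y Y^T||_F^2), using ||X X^T - Y Y^T||_F^2 = p_X + p_Y - 2 k_p(X, Y)."""
    kp = projection_kernel(As, Bs)
    pa = np.array([A.shape[1] for A in As])[:, None]
    pb = np.array([B.shape[1] for B in Bs])[None, :]
    return np.exp(-beta * (pa + pb - 2 * kp))

def kernel_recon_error(K_dd, k_dx, k_xx, y):
    """||phi(X) - sum_j y_j phi(D_j)||^2 in the feature space, from kernel values only."""
    return k_xx - 2 * y @ k_dx + y @ K_dd @ y

rng = np.random.default_rng(4)
d, p = 7, 2
points = [np.linalg.qr(rng.standard_normal((d, p)))[0] for _ in range(10)]
X, atoms = points[0], points[1:]
y = rng.standard_normal(len(atoms))

Kp = projection_kernel(points, points)
Kg = gaussian_projection_kernel(points, points, beta=0.5)
err_linear = kernel_recon_error(Kp[1:, 1:], Kp[1:, 0], Kp[0, 0], y)
err_gauss = kernel_recon_error(Kg[1:, 1:], Kg[1:, 0], Kg[0, 0], y)
```

With the projection kernel, `err_linear` coincides with the explicit Frobenius reconstruction error in the embedded space. Swapping in the Gaussian Gram matrices changes only the kernel values, not the coding or update code that consumes them.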

The experimental evaluation spans six diverse tasks: gender recognition from gait, hand‑gesture classification, scene categorization, face recognition from image sets, action recognition, and dynamic texture classification. For each task, the authors compare their methods against state‑of‑the‑art baselines such as the Kernelized Affine Hull Method (AHM), Graph‑embedding Grassmann Discriminant Analysis (GGDA), and recent deep learning approaches. Results consistently show that both GSC and GLCC outperform the baselines, with accuracy gains ranging from 3 to 7 percentage points. The kernelized variants provide additional improvements, especially on highly non‑linear datasets (e.g., dynamic textures). Moreover, the extrinsic approach yields a 5‑fold speedup in dictionary learning time compared to intrinsic log‑map based methods, and reduces memory consumption because only SPSD matrices (or kernel values) need to be stored.

In summary, the paper makes four key contributions:

- An isometric embedding of Grassmann manifolds into SPSD matrices that enables Euclidean‑style sparse coding.

- Two practical coding models (GSC and GLCC) together with a closed‑form atom‑wise dictionary update algorithm.

- Kernel extensions that capture non‑linear structure while preserving computational tractability.

- Comprehensive empirical validation demonstrating superior accuracy and efficiency across a broad spectrum of visual recognition problems.

The proposed extrinsic framework bridges the gap between sparse representation theory and Riemannian geometry, offering a scalable and mathematically sound toolset for any application where data are naturally represented as subspaces. Future work may explore integration with deep neural architectures, online dictionary updates, and extensions to other manifolds such as the space of symmetric positive‑definite matrices.
