Group-based ranking method for online rating systems with spamming attacks

Ranking problem has attracted much attention in real systems. How to design a robust ranking method is especially significant for online rating systems under the threat of spamming attacks. By building reputation systems for users, many well-performed ranking methods have been applied to address this issue. In this Letter, we propose a group-based ranking method that evaluates users’ reputations based on their grouping behaviors. More specifically, users are assigned with high reputation scores if they always fall into large rating groups. Results on three real data sets indicate that the present method is more accurate and robust than correlation-based method in the presence of spamming attacks.

💡 Research Summary

The paper addresses the vulnerability of online rating platforms to spam attacks, where malicious or random users submit biased ratings that can distort the perceived quality of items. Traditional reputation‑based methods (e.g., iterative refinement, correlation‑based ranking) rely on estimating a single “true quality” for each object and then measuring the deviation of each user’s ratings from that estimate. This approach assumes that an object has a unique objective rating, an assumption that often fails in practice because many items legitimately receive a range of subjective scores. Moreover, the quality estimation itself can be corrupted by spammers.

To overcome these limitations, the authors propose a Group‑based Ranking (GR) algorithm that evaluates user reputation directly from the size of the rating groups to which a user belongs, without estimating item quality. The method proceeds as follows:

- Grouping – For each object α and each possible discrete rating ω_s (s = 1…z), all users who gave ω_s to α are placed into a group Γ_sα.

- Group size – The cardinality Λ_sα = |Γ_sα| is computed.

- Normalization – Λ_sα is divided by the total number of ratings received by α (k_α) to obtain a normalized weight Λ*_sα = Λ_sα / k_α, which represents the proportion of users that chose that rating.

- Reward matrix – The original rating matrix A is mapped to a reward matrix A′ where A′_iα = Λ*_sα if user i gave rating ω_s to α, and 0 otherwise.

- Reputation calculation – For each user i, the mean μ_i and standard deviation σ_i of the non‑zero entries of A′_i are calculated, and the reputation is defined as R_i = μ_i / σ_i. This is equivalent to the inverse of the coefficient of variation of the reward vector, rewarding users who consistently belong to large groups and penalizing those whose rewards are small or highly variable.

- Spam detection – Users are sorted by ascending R_i; the top‑L users are flagged as spammers.

The algorithm therefore exploits the well‑documented “herding” behavior of humans: users who align with the majority are placed in large groups and are deemed trustworthy, while outliers are considered suspicious. Importantly, GR does not require any prior estimate of item quality, making it applicable when an item legitimately has multiple reasonable ratings.

Experimental Setup – Three widely used datasets were employed: MovieLens (943 users, 1682 movies), Netflix (1038 users, 1215 movies), and Amazon (662 users, 1500 products). For each dataset, only users with at least 20 ratings and items with at least 20 ratings were retained, yielding sparsities between 2 % and 6 %. Two types of artificial spammers were injected: (i) malicious spammers who always give the extreme rating 1 or 5 with equal probability, and (ii) random spammers who assign a uniformly random rating from 1 to 5. The spammer proportion q = d/m (d = number of injected spammers) and the activity level p = k/n (k = number of items each spammer rates) were varied to test robustness.

Evaluation Metrics – Recall@L (the fraction of true spammers appearing in the top‑L list) and AUC (the probability that a randomly chosen spammer receives a lower reputation than a randomly chosen non‑spam) were used.

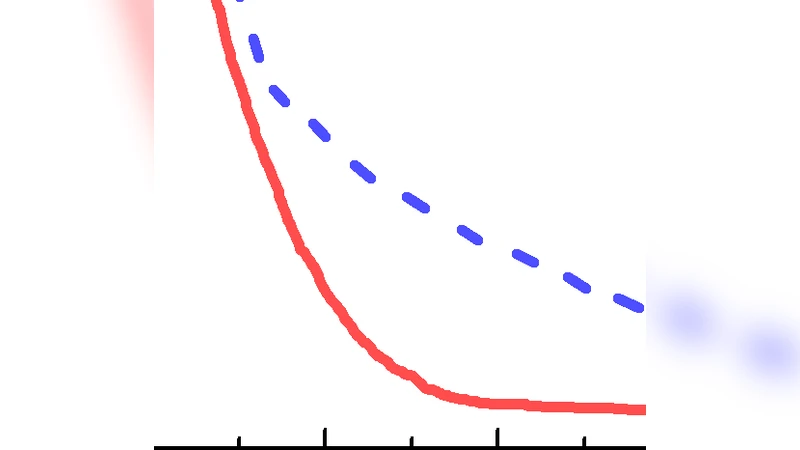

Results – Across all datasets, GR consistently outperformed the Correlation‑based Ranking (CR) method. AUC values for GR were 0.959 (MovieLens), 0.930 (Netflix), and 0.949 (Amazon), compared with 0.914, 0.668, and 0.877 for CR respectively. Recall curves showed that when L exceeds the true number of spammers (d), GR’s recall rises sharply and remains higher than CR’s, especially for random spammers where CR struggles. Pearson correlation between reputation R_i and the true rating error δ_i was more strongly negative for GR (e.g., –0.956 vs –0.949 on MovieLens), indicating that GR’s reputations align better with actual rating accuracy.

Complexity and Scalability – The dominant cost is the grouping step, which is linear in the number of observed ratings |E|. No iterative quality estimation is required, so memory usage and runtime are lower than IR/CR approaches, making GR suitable for large‑scale systems.

Limitations – In extremely sparse settings many groups have size one, reducing discriminative power. Coordinated spamming attacks that force many malicious users into the same rating group could artificially inflate reputations. Extending the method to continuous rating scales or multi‑dimensional feedback would require redesigning the normalization and reward mapping.

Conclusion – The Group‑based Ranking method offers a novel, quality‑agnostic way to assess user trustworthiness by leveraging collective rating behavior. Empirical evidence demonstrates superior robustness to both malicious and random spamming attacks compared with the state‑of‑the‑art correlation‑based approach, suggesting a promising direction for enhancing the reliability of online recommendation and rating platforms.

Comments & Academic Discussion

Loading comments...

Leave a Comment