Adaptive image ray-tracing for astrophysical simulations

A technique is presented for producing synthetic images from numerical simulations whereby the image resolution is adapted around prominent features. In so doing, adaptive image ray-tracing (AIR) improves the efficiency of a calculation by focusing computational effort where it is needed most. The results of test calculations show that a factor of ≳ 4 speed-up, and a commensurate reduction in the number of pixels required in the final image, can be achieved compared to an equivalent calculation with a fixed resolution image.

💡 Research Summary

The paper introduces Adaptive Image Ray‑Tracing (AIR), a novel technique for generating synthetic images from astrophysical simulations that dynamically adjusts image resolution around regions of interest. Traditional ray‑tracing approaches apply a uniform pixel grid across the entire field of view, which leads to wasted computational effort in areas that contain little or no physically relevant structure. AIR addresses this inefficiency by starting with a coarse image and recursively refining pixels that exhibit large variations in a chosen error metric, thereby concentrating computational resources where they are most needed.

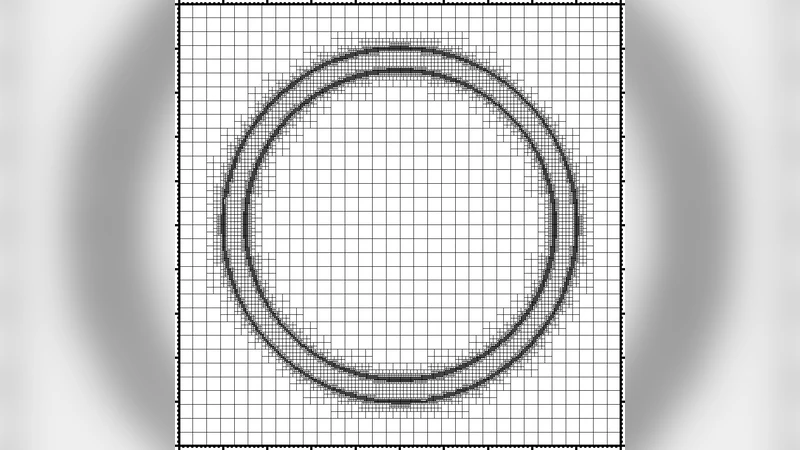

The authors first review the fundamentals of radiative transfer and conventional ray‑tracing, emphasizing that the cost of solving the transfer equation scales linearly with the number of rays (pixels). They then describe the AIR algorithm in detail. An initial low‑resolution image (e.g., 256 × 256) is generated by casting rays through the simulation volume and solving the transfer equation for each pixel. After this first pass, an error estimator is evaluated for every pixel. Two classes of estimators are considered: (i) image‑based estimators that measure the gradient of the resulting intensity or colour (e.g., absolute differences between neighboring pixels), and (ii) physics‑based estimators that use underlying simulation fields such as density, temperature, or velocity to compute a “radiative gradient” across the pixel footprint. If the estimated error exceeds a user‑defined threshold, the pixel is subdivided into four child pixels (a quadtree refinement). The ray‑tracing step is then repeated for each child, and the error estimation process continues recursively until either the error falls below the threshold or a maximum refinement depth is reached. The final image is assembled by merging the leaf nodes, with optional smoothing at resolution boundaries to avoid artefacts.
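The refinement loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: `trace_ray` is a hypothetical stand-in for integrating the transfer equation along one ray, the estimator is the simplest image-based choice (largest absolute difference to a same-level neighbour), and the names `adaptive_image`, `base_n`, `max_depth` and `threshold` are invented for the example.

```python
import math

def trace_ray(x, y):
    """Hypothetical stand-in for solving the transfer equation along one ray.

    Returns the intensity of a sharp-edged bright disc on a dark background,
    with (x, y) in unit image coordinates.
    """
    return 1.0 if math.hypot(x - 0.5, y - 0.5) < 0.25 else 0.0

def adaptive_image(base_n, max_depth, threshold, trace=trace_ray):
    """Quadtree refinement on the image plane.

    Returns (leaves, rays): leaves maps (level, i, j) -> intensity for the
    final pixels, and rays is the total number of rays cast at all levels.
    """
    def centre(level, i, j):
        n = base_n * 2 ** level          # pixels per side at this level
        return (i + 0.5) / n, (j + 0.5) / n

    # First pass: one ray per pixel of the coarse image.
    current = {(i, j): trace(*centre(0, i, j))
               for i in range(base_n) for j in range(base_n)}
    rays = len(current)
    leaves = {}

    for level in range(max_depth + 1):
        children = {}
        for (i, j), value in current.items():
            # Image-based estimator: compare with the 4-neighbours that
            # exist at the same refinement level.
            keys = ((i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1))
            diffs = [abs(value - current[k]) for k in keys if k in current]
            error = max(diffs, default=0.0)
            if error > threshold and level < max_depth:
                # Subdivide into four child pixels and trace each one.
                for di in (0, 1):
                    for dj in (0, 1):
                        ci, cj = 2 * i + di, 2 * j + dj
                        children[(ci, cj)] = trace(*centre(level + 1, ci, cj))
                rays += 4
            else:
                leaves[(level, i, j)] = value
        current = children
    return leaves, rays

leaves, rays = adaptive_image(base_n=16, max_depth=2, threshold=0.5)
print(len(leaves), "leaf pixels from", rays, "rays")
```

Only pixels straddling the edge of the disc are subdivided, so the leaf pixels still tile the whole image while the ray count stays well below that of a uniform 64 × 64 grid. An estimator that compares coarse pixels can miss features smaller than a coarse pixel, which is one reason the choice of initial resolution matters.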

To evaluate performance, the authors apply AIR to two representative test problems. The first is a galaxy‑collision simulation that produces sharp shock fronts, tidal tails, and dense star‑forming clumps. The second is a supernova explosion model featuring a hot, dense core surrounded by a low‑density envelope. For each case, they compare three configurations: (a) a fixed‑resolution ray‑trace at 1024 × 1024 pixels, (b) a fixed‑resolution ray‑trace at 512 × 512 pixels, and (c) AIR starting from 256 × 256 pixels with a maximum refinement level that allows a final resolution equivalent to 1024 × 1024. The results show that AIR achieves an average speed‑up of roughly 4.2× relative to the 1024 × 1024 fixed grid, with the most complex regions (e.g., shock fronts) receiving the full high resolution while the quiescent background remains coarse. The total number of rays cast in the AIR runs is reduced by a factor of about 3.8, translating into lower memory usage and faster I/O. Visual inspection confirms that the adaptive images retain, and in some cases improve, the fidelity of key structures compared with the uniform‑resolution counterparts.
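To put these grid sizes in perspective, the ray counts can be worked out directly. The sketch below is a toy cost model, not something taken from the paper: it assumes that the same fraction of pixels is refined at every level, and the function names `refinement_levels` and `adaptive_ray_count` and the parameter `refined_fraction` are hypothetical.

```python
import math

def refinement_levels(base_n, target_n):
    """Number of 2x subdivisions needed to go from base_n to target_n pixels per side."""
    return int(math.log2(target_n // base_n))

def adaptive_ray_count(base_n, levels, refined_fraction):
    """Total rays cast if a fixed fraction of pixels is refined at each level.

    Toy assumption: every level refines the same fraction of its pixels,
    and each refined pixel spawns four child rays.
    """
    total = 0
    cells = base_n * base_n
    for _ in range(levels + 1):
        total += cells
        cells = int(4 * refined_fraction * cells)
    return total

fixed_rays = 1024 * 1024                     # 1048576 rays on the fixed grid
levels = refinement_levels(256, 1024)        # 2 levels: 256 -> 512 -> 1024
for fraction in (0.0, 0.25, 1.0):
    rays = adaptive_ray_count(256, levels, fraction)
    print(fraction, rays, fixed_rays / rays)
```

Under this model, refining every pixel costs more than the fixed 1024 × 1024 grid (1376256 rays against 1048576) because the intermediate levels are traced as well, so any saving depends on only a modest fraction of the image needing full resolution.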

The paper also discusses practical considerations. The choice of error estimator and threshold critically influences both performance and image quality. A low threshold yields excessive refinement, eroding the computational gains, whereas a high threshold can miss subtle features. The authors suggest an adaptive thresholding scheme that scales with local signal‑to‑noise or with physical quantities such as optical depth. Another limitation is that the current implementation focuses on static snapshots; extending AIR to time‑dependent visualisation (e.g., movies of evolving simulations) would require strategies for temporal coherence, such as reusing refined regions across frames or employing predictive refinement based on previous timesteps. Multi‑band imaging (X‑ray, infrared, etc.) also poses a challenge because each band may have distinct regions of interest; a combined error metric or band‑specific refinement trees could address this.
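One simple way to read the adaptive-threshold idea is as a relative tolerance with an absolute floor, so that bright regions tolerate larger absolute differences while faint regions are not refined on noise-level fluctuations. The sketch below is an assumption about how such a scheme might look, not the scheme the authors propose; `local_threshold`, `needs_refinement`, `rel_tol` and `noise_floor` are hypothetical names and default values.

```python
def local_threshold(intensity, rel_tol=0.1, noise_floor=1e-3):
    """Refinement threshold that scales with the local signal.

    rel_tol is the tolerated fractional change between neighbouring pixels;
    noise_floor (an assumed absolute noise level) stops the threshold from
    collapsing to zero in dark regions.
    """
    return max(rel_tol * abs(intensity), noise_floor)

def needs_refinement(value, neighbours, rel_tol=0.1, noise_floor=1e-3):
    """True if any neighbour differs from the pixel by more than the local threshold."""
    limit = local_threshold(value, rel_tol, noise_floor)
    return any(abs(value - v) > limit for v in neighbours)

print(needs_refinement(1.0, [1.05, 0.98]))   # small relative change
print(needs_refinement(1.0, [1.2]))          # 20 per cent jump
print(needs_refinement(0.0, [0.0005]))       # below the assumed noise floor
```

A physics-based variant could replace `intensity` with a quantity such as optical depth along the ray; the structure of the test would stay the same.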

In conclusion, Adaptive Image Ray‑Tracing offers a compelling solution to the long‑standing problem of inefficient image synthesis in computational astrophysics. By coupling a quadtree‑based adaptive mesh on the image plane with physically motivated error estimators, AIR concentrates ray‑tracing effort on the most informative parts of the simulation, delivering substantial speed‑ups and memory savings without sacrificing scientific accuracy. The method is readily integrable into existing radiative‑transfer pipelines and, with further development for dynamic and multi‑wavelength applications, promises to become a standard tool for high‑fidelity, large‑scale astrophysical visualisation.
