On the tightness of an SDP relaxation of k-means

Recently, Awasthi et al. introduced an SDP relaxation of the $k$-means problem in $\mathbb R^m$. In this work, we consider a random model for the data points in which $k$ balls of unit radius are deterministically distributed throughout $\mathbb R^m$, and then in each ball, $n$ points are drawn according to a common rotationally invariant probability distribution. For any fixed ball configuration and probability distribution, we prove that the SDP relaxation of the $k$-means problem exactly recovers these planted clusters with probability $1-e^{-\Omega(n)}$ provided the distance between any two of the ball centers is $>2+\epsilon$, where $\epsilon$ is an explicit function of the configuration of the ball centers, and can be arbitrarily small when $m$ is large.

💡 Research Summary

The paper investigates the exact recovery properties of a semidefinite programming (SDP) relaxation for the k‑means clustering problem. The authors consider a probabilistic “stochastic ball model”: k unit‑radius balls are placed at fixed centers γ₁,…,γ_k in ℝ^m, and inside each ball n points are drawn independently from a common rotationally invariant distribution D (e.g., uniform on the unit ball). The central question is whether the SDP solution coincides with the true cluster indicator matrix X = Σ_a (1/n_a) 1_{C_a} 1_{C_a}ᵀ, where C_a denotes the set of points drawn in ball a, n_a = |C_a| is its size (n_a = n for every a in this model), and 1_{C_a} is its indicator vector.
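The model and the planted matrix X described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the distribution is taken to be uniform on the unit ball, and the function names, the three example centers, and the value n = 20 are hypothetical choices, not taken from the paper.

```python
import numpy as np

def sample_unit_ball(n, m, rng):
    """Draw n points uniformly from the unit ball in R^m."""
    g = rng.standard_normal((n, m))
    directions = g / np.linalg.norm(g, axis=1, keepdims=True)
    # A radius distributed as U^(1/m) makes the points uniform in volume.
    radii = rng.random(n) ** (1.0 / m)
    return directions * radii[:, None]

def stochastic_ball_model(centers, n, rng):
    """Return (points, labels) with n points drawn in each unit ball."""
    k, m = centers.shape
    points = np.vstack([c + sample_unit_ball(n, m, rng) for c in centers])
    labels = np.repeat(np.arange(k), n)
    return points, labels

def planted_solution(labels):
    """Build X = sum_a (1/n_a) 1_{C_a} 1_{C_a}^T from cluster labels."""
    N = labels.size
    X = np.zeros((N, N))
    for a in np.unique(labels):
        ind = (labels == a).astype(float)
        X += np.outer(ind, ind) / ind.sum()
    return X

rng = np.random.default_rng(0)
# Hypothetical configuration: three centers in R^3 at pairwise distance >= 3.
centers = np.array([[0.0, 0.0, 0.0], [3.0, 0.0, 0.0], [0.0, 3.0, 0.0]])
points, labels = stochastic_ball_model(centers, n=20, rng=rng)
X = planted_solution(labels)
```

By construction X has trace k and rows summing to 1, which are exactly the linear constraints of the relaxation.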

First, the classic k‑means objective is rewritten in matrix form (2) and relaxed to the SDP (3): maximize –Tr(DX) (equivalently, minimize Tr(DX)) subject to Tr(X)=k, X1=1, X⪰0, X≥0. Here D denotes the matrix of squared distances, D_{ij}=‖x_i−x_j‖² (not the sampling distribution), X⪰0 means X is positive semidefinite, and X≥0 means X is entrywise non‑negative. Using cone duality, the dual problem (6) is derived with variables (z, α, β). Crucially, α is uniquely determined by the scalar z, leaving z and the non‑negative symmetric matrix β as the only free parameters.
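To see why Tr(DX) is the right objective, it helps to check numerically that, for a cluster indicator matrix X, Tr(DX) equals twice the usual k‑means cost (the within‑cluster sum of squared distances to the centroids). The sketch below does this on random data; the helper names and the toy sizes are assumptions made for illustration, and no SDP solver is involved.

```python
import numpy as np

def squared_distance_matrix(points):
    """D_ij = ||x_i - x_j||^2, computed from the Gram matrix."""
    sq = np.sum(points**2, axis=1)
    D = sq[:, None] + sq[None, :] - 2.0 * points @ points.T
    return np.maximum(D, 0.0)  # clip tiny negative round-off

def kmeans_cost(points, labels):
    """Sum of squared distances from each point to its cluster centroid."""
    cost = 0.0
    for a in np.unique(labels):
        cluster = points[labels == a]
        cost += np.sum((cluster - cluster.mean(axis=0)) ** 2)
    return cost

def indicator_matrix(labels):
    """X = sum_a (1/n_a) 1_a 1_a^T for the partition given by labels."""
    onehot = (labels[:, None] == np.unique(labels)[None, :]).astype(float)
    return (onehot / onehot.sum(axis=0)) @ onehot.T

rng = np.random.default_rng(1)
points = rng.standard_normal((12, 4))   # toy data, 12 points in R^4
labels = np.repeat(np.arange(3), 4)     # three clusters of four points
D = squared_distance_matrix(points)
X = indicator_matrix(labels)
sdp_objective = np.trace(D @ X)
```

The same X is also feasible for the relaxation: it has trace 3, unit row sums, non‑negative entries, and is positive semidefinite.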

The dual slack matrix Q = A* y – c is decomposed as Q = z(I−E) + M – B, where:

- E depends only on cluster sizes (its rank is 1 or 2, its largest eigenvalue λ≥k, and any non‑zero eigenvectors lie in the span of the all‑ones vectors of each cluster);

- M incorporates the data distance matrix D together with intra‑cluster correction terms;

- B = ½β is to be chosen to satisfy non‑negativity and symmetry.

The authors impose the strong condition Q·1_a = 0 for every cluster a, which forces the subspace Λ = span{1_a} to lie in the nullspace of Q. Together with the entrywise non‑negativity required of B, this yields an upper bound on z:

z ≤ min_{a≠b} (2 n_a n_b)/(n_a + n_b) · min( M(a,b)·1 ),

and suggests choosing z as large as possible to push the negative eigenvalue of (I−E) to zero. For a given z, they construct B explicitly:

u(a,b) = M(a,b)·1 – z (n_a + n_b)/(2 n_a)·1,

ρ(a,b) = u(a,b)·1,

B(a,b) = u(a,b) u(b,a)ᵀ / ρ(b,a).

This construction guarantees B is symmetric, entry‑wise non‑negative, and satisfies the linear constraints Q·1_a = 0.
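The three claimed properties of B follow from the rank‑one form of each block, and they can be checked numerically. The sketch below is illustrative only: M is replaced by a random symmetric matrix with positive entries, the cluster sizes are hypothetical, the diagonal blocks B(a,a) are set to zero as an assumption, and z is simply chosen small enough that every u(a,b) from the formula above is entrywise positive.

```python
import numpy as np

rng = np.random.default_rng(2)
sizes = [3, 4, 5]                                  # hypothetical cluster sizes n_a
offsets = np.concatenate([[0], np.cumsum(sizes)])
N, k = int(offsets[-1]), len(sizes)

# Stand-in for M: any symmetric matrix with positive entries.
A = rng.random((N, N)) + 1.0
M = (A + A.T) / 2.0

def block(mat, a, b):
    """Rows of cluster a, columns of cluster b."""
    return mat[offsets[a]:offsets[a + 1], offsets[b]:offsets[b + 1]]

# Choose z small enough that every u(a,b) below is entrywise positive.
z = 0.5 * min(
    2.0 * sizes[a] / (sizes[a] + sizes[b]) * block(M, a, b).sum(axis=1).min()
    for a in range(k) for b in range(k) if a != b
)

def u(a, b):
    """u(a,b) = M(a,b) 1 - z (n_a + n_b) / (2 n_a) 1."""
    return block(M, a, b).sum(axis=1) - z * (sizes[a] + sizes[b]) / (2.0 * sizes[a])

B = np.zeros((N, N))                               # diagonal blocks left at zero (assumption)
for a in range(k):
    for b in range(k):
        if a != b:
            rho_ba = u(b, a).sum()                 # rho(b,a) = u(b,a) . 1
            B[offsets[a]:offsets[a + 1], offsets[b]:offsets[b + 1]] = (
                np.outer(u(a, b), u(b, a)) / rho_ba
            )
```

Symmetry relies on ρ(a,b) = ρ(b,a), which holds here because M is symmetric and the z‑term sums to z(n_a + n_b)/2 in both cases. The row sums satisfy B(a,b)·1 = u(a,b), which is what the condition Q·1_a = 0 requires of the off‑diagonal blocks.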

The remaining requirement for Q⪰0 reduces to a spectral condition on the orthogonal complement of Λ:

k·‖P_{Λ⊥}(M – B)P_{Λ⊥}‖₂ ≤ 2.

If this holds, Theorem 6 guarantees that X is the optimal SDP solution, i.e., the SDP is tight and recovers the planted clusters exactly.
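The quantity on the left of the spectral condition is the operator norm of a matrix compressed to the orthogonal complement of Λ. A minimal sketch of that computation is given below; the function names are hypothetical, and the input matrix is left generic because the summary does not spell out M in full.

```python
import numpy as np

def projector_onto_lambda_perp(labels):
    """Orthogonal projector onto the complement of span{1_a : a a cluster}."""
    onehot = (labels[:, None] == np.unique(labels)[None, :]).astype(float)
    # Cluster indicators have disjoint supports, so normalizing each column
    # gives an orthonormal basis of Lambda.
    basis = onehot / np.sqrt(onehot.sum(axis=0))
    return np.eye(labels.size) - basis @ basis.T

def restricted_spectral_norm(A, labels):
    """Spectral norm of P A P, with P the projector onto Lambda-perp."""
    P = projector_onto_lambda_perp(labels)
    return np.linalg.norm(P @ A @ P, ord=2)

labels = np.repeat(np.arange(3), 4)   # three clusters of four points
P = projector_onto_lambda_perp(labels)
```

Any matrix that is constant on each pair of clusters is annihilated by P on both sides, so only the fluctuations of M – B within blocks contribute to the condition.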

The deterministic condition above is then analyzed probabilistically under the stochastic ball model. Assuming the rotationally invariant distribution has zero mean and covariance I/m, and that the minimum inter‑center distance Δ satisfies

Δ > 2 + ε,

where ε = (k²/m)·Cond(γ) and Cond(γ) = max_{a≠b}‖γ_a−γ_b‖₂ / min_{a≠b}‖γ_a−γ_b‖₂, the authors show that with probability at least 1 – e^{‑Ω(n)} the spectral condition holds. For a fixed number of clusters k and a fixed value of Cond(γ), ε shrinks like 1/m as the ambient dimension m grows and can therefore be made arbitrarily small; the required separation then approaches the information‑theoretic limit Δ > 2 (the balls just need to be disjoint).
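The two configuration‑dependent quantities in this condition, the minimum separation Δ and the ratio Cond(γ), are straightforward to compute for a given set of centers. The sketch below uses a hypothetical three‑center configuration in the plane, and the function name is an assumption.

```python
import numpy as np
from itertools import combinations

def center_statistics(centers):
    """Return (Delta, Cond): min pairwise distance and max/min distance ratio."""
    dists = [
        np.linalg.norm(centers[a] - centers[b])
        for a, b in combinations(range(len(centers)), 2)
    ]
    delta = min(dists)
    cond = max(dists) / delta
    return delta, cond

# Hypothetical centers forming a 3-4-5 right triangle.
centers = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 4.0]])
delta, cond = center_statistics(centers)
```

For this configuration the pairwise distances are 3, 4 and 5, so Δ = 3 and Cond(γ) = 5/3.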

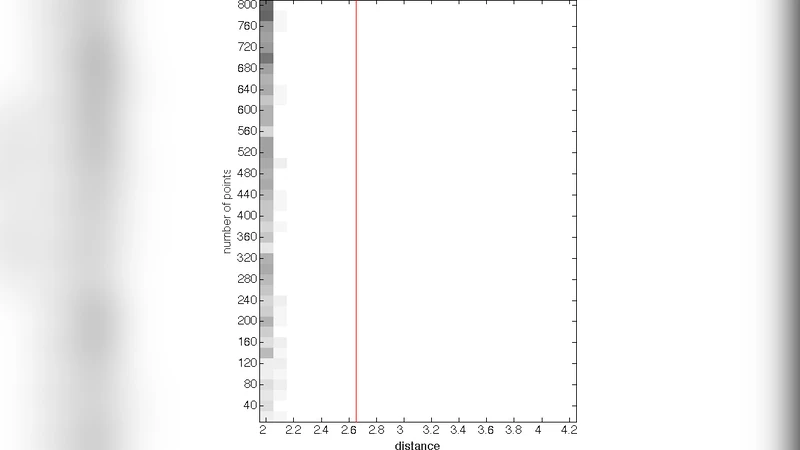

Empirical validation is provided for m = 6 with D uniform on the unit ball. For each Δ, 30 random instances are generated, and the success rate of the proposed dual certificate is plotted (Figure 1). The new certificate succeeds at significantly lower Δ than the earlier certificate from Awasthi et al. (2015), which is consistent with the smaller separation required by the new theoretical guarantee.

In summary, the paper establishes that the SDP relaxation for k‑means is tight under near‑optimal separation conditions: any Δ exceeding 2 by an explicit, configuration‑ and dimension‑dependent ε suffices for exact recovery with probability 1 – e^{‑Ω(n)}. This improves upon the previous best guarantee Δ > 2√2(1 + 1/√m) and shows that, under the stochastic ball model, the SDP recovers the planted clusters at separations approaching Δ = 2 when m is large. The dual‑certificate construction and the deterministic spectral condition may also be adaptable to other clustering formulations and to the analysis of SDP relaxations in broader combinatorial optimization contexts.
