Analysing Astronomy Algorithms for GPUs and Beyond

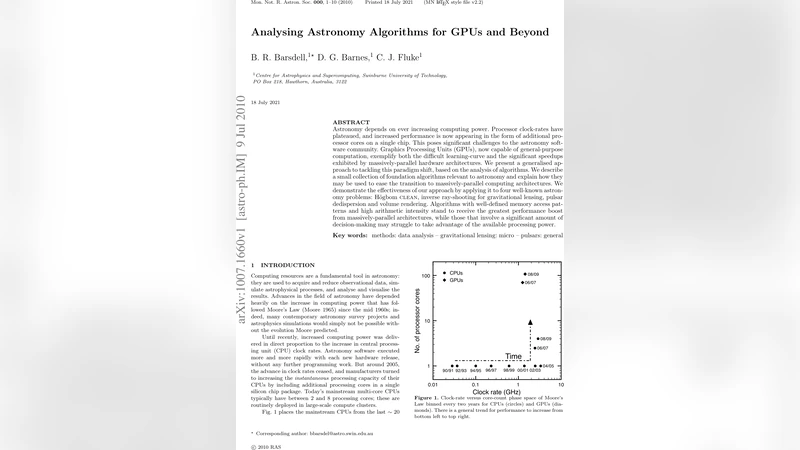

Astronomy depends on ever-increasing computing power. Processor clock rates have plateaued, and increased performance is now appearing in the form of additional processor cores on a single chip. This poses significant challenges to the astronomy software community. Graphics Processing Units (GPUs), now capable of general-purpose computation, exemplify both the difficult learning curve and the significant speedups exhibited by massively-parallel hardware architectures. We present a generalised approach to tackling this paradigm shift, based on the analysis of algorithms. We describe a small collection of foundation algorithms relevant to astronomy and explain how they may be used to ease the transition to massively-parallel computing architectures. We demonstrate the effectiveness of our approach by applying it to four well-known astronomy problems: Hogbom CLEAN, inverse ray-shooting for gravitational lensing, pulsar dedispersion and volume rendering. Algorithms with well-defined memory access patterns and high arithmetic intensity stand to receive the greatest performance boost from massively-parallel architectures, while those that involve a significant amount of decision-making may struggle to take advantage of the available processing power.

💡 Research Summary

The paper addresses the growing computational demands of modern astronomy and argues that the traditional reliance on increasing CPU clock speeds is no longer viable. Now that clock rates have plateaued, performance gains come from adding more cores, both on CPUs and on graphics processing units (GPUs). GPUs, in particular, offer thousands of parallel execution units and high memory bandwidth, making them attractive for data‑intensive, arithmetic‑heavy tasks. However, the transition to GPU‑centric computing is not straightforward for the astronomy software community because many existing codes were written for serial or modestly parallel CPU architectures.

To guide this transition, the authors propose a systematic, algorithm‑centric analysis framework. They identify two key dimensions for evaluating any astronomical algorithm: (1) memory‑access pattern and (2) arithmetic intensity (the ratio of floating‑point operations to memory traffic). Algorithms with regular, contiguous memory accesses and high arithmetic intensity can keep GPU cores busy and fully exploit the device’s bandwidth, whereas those with irregular, pointer‑heavy accesses or heavy decision‑making logic tend to under‑utilize the hardware. Based on these dimensions, the paper classifies algorithms into four categories—high‑compute/low‑memory, low‑compute/high‑memory, decision‑heavy, and mixed—and outlines appropriate porting strategies for each.
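
The arithmetic-intensity dimension can be made concrete with a few lines of Python. The sketch below is not taken from the paper: it uses the roofline-style bound `min(peak, bandwidth × intensity)` as a stand-in performance model, and the device figures and kernel operation counts are hypothetical values chosen only for illustration.

```python
def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """Floating-point operations performed per byte of memory traffic."""
    if bytes_moved <= 0:
        raise ValueError("bytes_moved must be positive")
    return flops / bytes_moved


def attainable_gflops(intensity: float, peak_gflops: float, bandwidth_gbs: float) -> float:
    """Roofline-style upper bound: capped by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gbs * intensity)


# Hypothetical device figures (assumptions, not measurements).
PEAK_GFLOPS = 1000.0
BANDWIDTH_GBS = 100.0

# Element-wise add of two float32 arrays: 1 flop per 12 bytes (2 loads + 1 store).
vector_add = arithmetic_intensity(flops=1, bytes_moved=12)

# Hypothetical compute-heavy kernel: 200 flops for every 8 bytes moved.
heavy_kernel = arithmetic_intensity(flops=200, bytes_moved=8)

vector_add_bound = attainable_gflops(vector_add, PEAK_GFLOPS, BANDWIDTH_GBS)
heavy_kernel_bound = attainable_gflops(heavy_kernel, PEAK_GFLOPS, BANDWIDTH_GBS)

print(f"vector add:   {vector_add:.3f} flop/byte, bound {vector_add_bound:.1f} GFLOP/s")
print(f"heavy kernel: {heavy_kernel:.3f} flop/byte, bound {heavy_kernel_bound:.1f} GFLOP/s")
```

Under these assumed figures the vector add is limited by memory bandwidth, while the compute-heavy kernel reaches the compute ceiling, which is the distinction the two dimensions are meant to capture.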

The authors then apply this framework to four well‑known astronomy problems:

- Hogbom CLEAN – a deconvolution algorithm used in radio interferometry. The core loop involves repeated residual image updates and a global maximum search. While the reduction step (maximum search) maps well to GPU parallel reduction, the frequent conditional branches and non‑uniform memory accesses limit overall speed‑up.
- Inverse ray‑shooting for gravitational lensing – a classic “embarrassingly parallel” task where millions of light rays are traced independently through a lens model. The computation is both memory‑friendly (sequential access to lens parameters) and highly arithmetic‑intensive, leading to measured speed‑ups of an order of magnitude or more on modern GPUs.
- Pulsar dedispersion – the correction of frequency‑dependent delays in time‑frequency data streams. This workflow combines fast Fourier transforms (FFT) with channel‑wise index calculations. FFTs are already well‑optimized on GPUs, but the per‑channel indexing introduces irregular memory patterns that require data re‑ordering or custom kernels to achieve good performance.
- Volume rendering – the interactive visualization of three‑dimensional data cubes. Each voxel’s color and opacity are computed independently, and the traversal of the volume follows a regular pattern, making this problem ideally suited to GPU ray‑casting pipelines. The authors demonstrate near‑real‑time rendering of large astrophysical datasets.
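
To illustrate the structure described for Hogbom CLEAN (a global maximum search followed by a residual update, repeated until a data-dependent stopping condition), here is a minimal serial sketch on a one-dimensional signal. It is an assumption-laden toy, not the authors' implementation: the function name `hogbom_clean_1d`, the loop gain, the threshold and the test signal are all hypothetical, and a real imaging code works on two-dimensional maps.

```python
def hogbom_clean_1d(dirty, psf, gain=0.1, threshold=1e-3, max_iter=1000):
    """Return (components, residual) for a 1-D dirty signal.

    psf must have odd length with its peak at the centre element.
    """
    residual = list(dirty)
    components = [0.0] * len(dirty)
    half = len(psf) // 2
    peak_psf = psf[half]
    for _ in range(max_iter):
        # Global maximum search: on a GPU this step is a parallel reduction.
        peak_idx = max(range(len(residual)), key=lambda i: abs(residual[i]))
        peak_val = residual[peak_idx]
        if abs(peak_val) < threshold:
            break  # data-dependent exit, one source of branching
        flux = gain * peak_val / peak_psf
        components[peak_idx] += flux
        # Residual update: subtract the scaled PSF centred on the peak.
        for k, p in enumerate(psf):
            j = peak_idx + k - half
            if 0 <= j < len(residual):  # bounds check at the signal edges
                residual[j] -= flux * p
    return components, residual


# Hypothetical test signal: one point source of amplitude 2 at index 4,
# already convolved with the PSF below.
psf = [0.5, 1.0, 0.5]
dirty = [0.0, 0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0]

components, residual = hogbom_clean_1d(dirty, psf)
print("recovered flux at index 4:", round(components[4], 3))
print("largest residual:", max(abs(r) for r in residual))
```

The residual update touches a window whose position depends on the result of the search, so each iteration must finish before the next can start. That serial dependence, together with the early exit, is one way to read the summary's remark about limited speed-up.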

For each case, the paper presents performance models, theoretical speed‑up estimates, and empirical results obtained on contemporary GPU hardware. The findings confirm that algorithms with well‑defined, contiguous memory access and high arithmetic intensity reap the greatest benefits, while those dominated by branching or complex control flow see modest gains.

The central recommendation is an “algorithm‑first” migration strategy: begin by profiling existing code to quantify memory access regularity and compute‑to‑memory ratios, then prioritize the porting of high‑potential kernels. The migration process includes restructuring data layouts for coalesced accesses, aligning memory to GPU boundaries, selecting appropriate thread‑block dimensions, and exploiting shared memory and warp‑level primitives. After initial porting, iterative profiling should be used to identify remaining bottlenecks and decide whether further GPU acceleration or a hybrid CPU‑GPU division of labor is optimal.
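
One simple way to rank kernels after profiling is Amdahl's law, which bounds the whole-program speed-up obtained by accelerating a single kernel. The sketch below is an illustration rather than the paper's procedure: the kernel names, runtime fractions and per-kernel GPU speed-ups in `profile` are hypothetical numbers standing in for real profiler output.

```python
def amdahl_speedup(fraction: float, kernel_speedup: float) -> float:
    """Whole-program speed-up when `fraction` of the runtime is accelerated
    by a factor `kernel_speedup` (Amdahl's law)."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must lie in [0, 1]")
    if kernel_speedup <= 0.0:
        raise ValueError("kernel_speedup must be positive")
    return 1.0 / ((1.0 - fraction) + fraction / kernel_speedup)


# Hypothetical profile: (kernel name, fraction of runtime, assumed GPU speed-up).
profile = [
    ("peak_search", 0.15, 5.0),
    ("ray_shooting", 0.80, 50.0),
    ("file_io", 0.05, 1.0),
]

# Port first the kernel whose acceleration helps the whole program most.
ranked = sorted(profile, key=lambda k: amdahl_speedup(k[1], k[2]), reverse=True)

for name, fraction, speedup in ranked:
    overall = amdahl_speedup(fraction, speedup)
    print(f"{name:>12}: overall speed-up {overall:.2f}x")
```

The same function also helps with the hybrid CPU‑GPU decision: once the dominant kernel has been ported, the remaining fractions show whether further porting can still change the total runtime appreciably.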

In conclusion, the paper provides a practical roadmap for astronomers to harness massively‑parallel hardware. By focusing on algorithmic characteristics rather than merely rewriting code, the community can achieve substantial performance improvements with manageable development effort. The authors also call for the development of automated analysis tools that can assess an algorithm’s suitability for GPU execution and suggest concrete refactoring steps, thereby accelerating the broader adoption of parallel architectures in astronomical research.
