How to Combine Independent Data Sets for the Same Quantity

This paper describes a recent mathematical method called conflation for consolidating data from independent experiments that are designed to measure the same quantity, such as Planck's constant or the mass of the top quark. Conflation is easy to calc…

Authors: Theodore P. Hill, Jack Miller

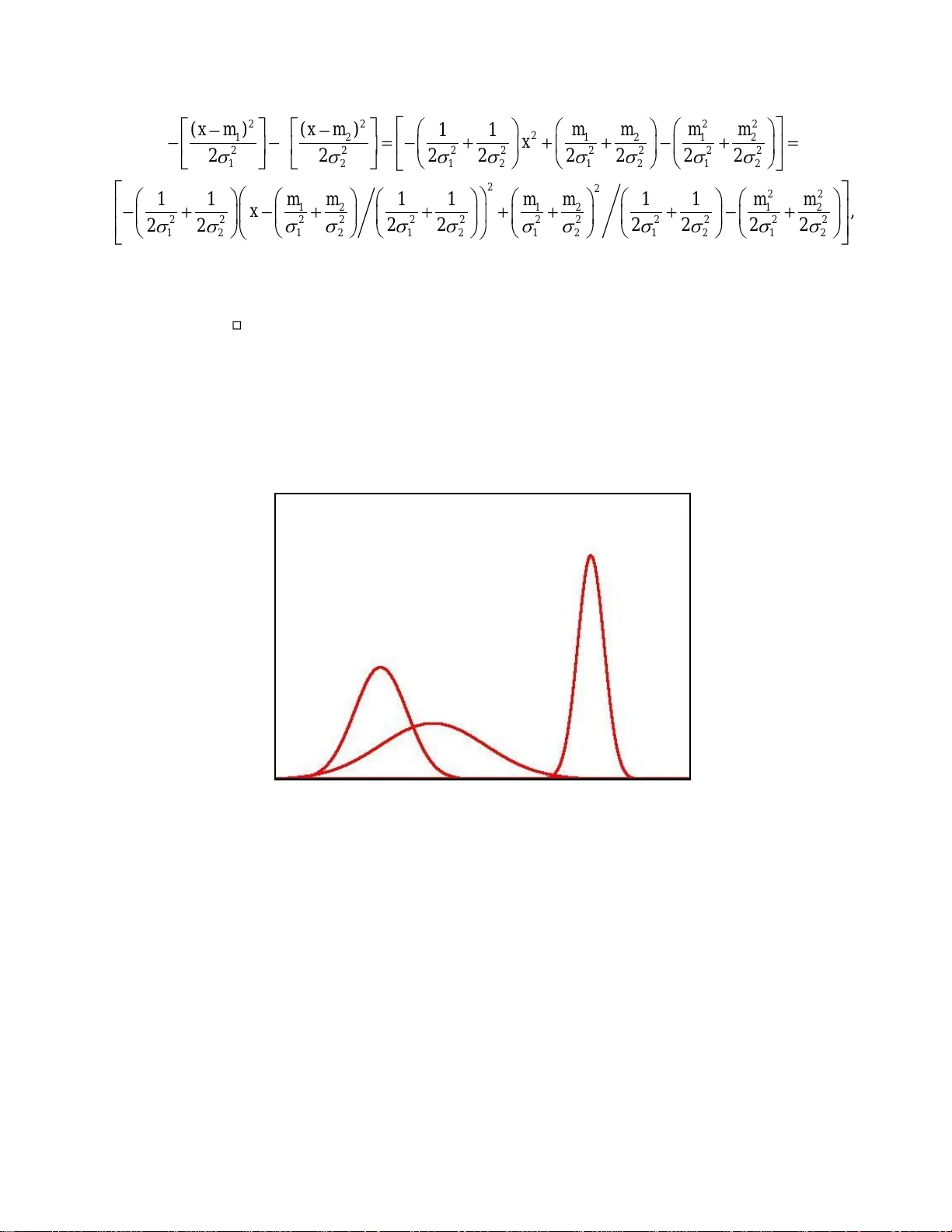

By Theodore P. Hill¹ and Jack Miller²

Abstract

This paper describes a recent mathematical method called conflation for consolidating data from independent experiments that are designed to measure the same quantity, such as Planck's constant or the mass of the top quark. Conflation is easy to calculate and visualize, and minimizes the maximum loss in Shannon information in consolidating several independent distributions into a single distribution. In order to benefit the experimentalist with a much more transparent presentation than the previous mathematical treatise, the main basic properties of conflation are derived in the special case of normal (Gaussian) data. Included are examples of applications to real data from measurements of the fundamental physical constants and from measurements in high energy physics, and the conflation operation is generalized to weighted conflation for situations when the underlying experiments are not uniformly reliable.

¹ School of Mathematics, Georgia Institute of Technology, Atlanta GA 30332.
² Lawrence Berkeley National Laboratory, Berkeley CA 94720.

1. Introduction

When different experiments are designed to measure the same unknown quantity, such as Planck's constant, how can their results be consolidated in an unbiased and optimal way? Given data from experiments that may differ in time, geographical location, methodology and even in underlying theory, is there a good method for combining the results from all the experiments into a single distribution? Note that this is not the standard statistical problem of producing point estimates and confidence intervals, but rather simply to summarize all the experimental data with a single distribution.

The consolidation of data from different sources can be particularly vexing in the determination of the values of the fundamental physical constants. For example, the U.S.
National Institute of Standards and Technology (NIST) recently reported "two major inconsistencies" in some measured values of the molar volume of silicon $V_m(\mathrm{Si})$ and the silicon lattice spacing $d_{220}$, leading to an ad hoc factor of 1.5 increase in the uncertainty in the value of Planck's constant $h$ ([9, p. 54], [10]). (One of those two inconsistencies has subsequently been resolved [8].) But input data distributions that happen to have different means and standard deviations are not necessarily "inconsistent" or "incoherent" [2, p. 2249]. If the various input data are all normal (Gaussian) or exponential, for example, then every interval centered at the unknown positive true value has a positive probability of occurring in every one of the independent experiments.

Ideally, of course, all experimental data, past as well as present, should be incorporated into the scientific record. But in the case of the fundamental physical constants, for instance, this could entail listing scores of past and present experimental datasets, each of which includes results from hundreds of experiments with thousands of data points, for each one of the fundamental constants. Most experimentalists and theoreticians who use Planck's constant, however, need a concise summary of its current value rather than the complete record. Having the mean and estimated standard deviation (e.g. via weighted least squares) does give some information, but without any knowledge of the distribution, Chebyshev's inequality guarantees only that the value lies within two standard deviations of the mean with probability at least 75%, and within four standard deviations with probability at least 93.75%, which still falls short of the standard 95% confidence level.

Is there an objective, natural and optimal method for consolidating several input-data distributions into a single posterior distribution $P$? This article describes a new such method called conflation.
First, it is useful to review some of the shortcomings of standard methods for consolidating data from several different input distributions. For simplicity, consider the case of only two different experiments in which independent laboratories Lab I and Lab II measure the value of the same quantity. Lab I reports its results as a probability distribution $P_1$ (e.g. via an empirical histogram or probability density function), and Lab II reports its findings as $P_2$.

Averaging the Probabilities

One common method of consolidating two probability distributions is to simply average them: for every set of values $A$, set

$$P(A) = \frac{P_1(A) + P_2(A)}{2}.$$

If the distributions both have densities, for example, averaging the probabilities results in a probability distribution with density the average of the two input densities (Figure 1).

This method has several significant disadvantages. First, the mean of the resulting distribution $P$ is always exactly the average of the means of $P_1$ and $P_2$, independent of the relative accuracies or variances of each. (Recall that the variance is the square of the standard deviation.) But if Lab I performed twice as many of the same type of trials as Lab II, the variance of $P_1$ would be half that of $P_2$, and it would be unreasonable to weight the two respective empirical means equally.

A second disadvantage of the method of averaging probabilities is that the variance of $P$ is always at least as large as the minimum of the variances of $P_1$ and $P_2$ (see Figure 1), since

$$V(P) = \frac{V(P_1) + V(P_2)}{2} + \frac{[\mathrm{mean}(P_1) - \mathrm{mean}(P_2)]^2}{4}.$$

If $P_1$ and $P_2$ are nearly identical, however, then their average is nearly identical to both inputs, whereas the standard deviation of a reasonable consolidation $P$ should probably be strictly less than that of both $P_1$ and $P_2$. The method of averaging probabilities completely ignores the fact that two laboratories independently found nearly the same results.
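The variance identity for averaged probabilities can be checked numerically. The following is a minimal sketch, assuming two normal inputs with illustrative (hypothetical) parameters; the helper names `normal_pdf`, `mixture_moments` and `numeric_mixture_moments` are introduced here for illustration and do not come from the paper.

```python
import math

def normal_pdf(x, m, s):
    """Density of a normal distribution with mean m and standard deviation s."""
    return math.exp(-((x - m) ** 2) / (2 * s * s)) / (s * math.sqrt(2 * math.pi))

def mixture_moments(m1, s1, m2, s2):
    """Mean and variance of P = (P1 + P2)/2 from the closed-form identity."""
    mean = (m1 + m2) / 2
    var = (s1 ** 2 + s2 ** 2) / 2 + (m1 - m2) ** 2 / 4
    return mean, var

def numeric_mixture_moments(m1, s1, m2, s2, steps=20_000):
    """Same moments, by midpoint-rule integration of the averaged density."""
    lo = min(m1 - 10 * s1, m2 - 10 * s2)
    hi = max(m1 + 10 * s1, m2 + 10 * s2)
    dx = (hi - lo) / steps
    xs = [lo + (i + 0.5) * dx for i in range(steps)]
    dens = [(normal_pdf(x, m1, s1) + normal_pdf(x, m2, s2)) / 2 for x in xs]
    mean = sum(x * d for x, d in zip(xs, dens)) * dx
    var = sum((x - mean) ** 2 * d for x, d in zip(xs, dens)) * dx
    return mean, var

# Hypothetical inputs: Lab I ~ N(0, 1) and Lab II ~ N(3, 2^2).
print(mixture_moments(0.0, 1.0, 3.0, 2.0))
print(numeric_mixture_moments(0.0, 1.0, 3.0, 2.0))
```

In this sketch the averaged distribution's variance exceeds both input variances, which is the behavior the text criticizes.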
Figure 1 also shows another shortcoming of this method: with normally distributed input data, it generally produces a multimodal distribution, whereas one might desire the consolidated output distribution to be of the same general form as that of the input data, that is, normal, or at least unimodal.

Figure 1. Averaging the Probabilities. (Green curve is the average of the red (input) curves. Note that the variance of the average is larger than the variance of either input.)

Averaging the Data

Another common method of consolidating data, one that does preserve normality, is to average the underlying input data itself. That is, if the result of the experiment from Lab I is a random variable $X_1$ (i.e. has distribution $P_1$) and the result of Lab II is $X_2$ (independent of $X_1$, with distribution $P_2$), take $P$ to be the distribution of $(X_1 + X_2)/2$. As with averaging the distributions, averaging the data also results in a distribution that always has exactly the average of the means of the two input distributions, regardless of the relative accuracies of the two input data-set distributions (see Figure 2). With this method, on the other hand, the variance of $P$ is never larger than the maximum variance of $P_1$ and $P_2$ (since $V(P) = \frac{V(P_1) + V(P_2)}{4}$), whereas some input data distributions that differ significantly should sometimes reflect a higher uncertainty. A more fundamental problem with this method is that in general it requires averaging data that was obtained using very different and even indirect methods, for example as with the watt balance and x-ray/optical interferometer measurements used in part to obtain the 2006 CODATA recommended value for Planck's constant.

Figure 2. Averaging the Data (Green curve is the average of the red data.
Note that the mean of the averaged data is exactly the average of the means of the two input distributions, even though they have different variances.)

The main goals of this paper are three: to describe conflation and derive important basic properties of conflation in the special case of normally distributed data (perhaps the most common of experimental data); to provide concrete examples of conflation using real experimental data; and to introduce a new method for consolidating data when the underlying data sets are not uniformly weighted.

2. Conflation of Data Sets

For consolidating data from different independent sources, [6] introduced a mathematical method called conflation as an alternative to averaging the probabilities or averaging the data. Conflation (designated with the symbol "&" to suggest consolidation of $P_1$ and $P_2$) has none of the disadvantages of the two averaging methods described above, and has many advantages that will be described below.

In the important special case that the input distributions all have densities (e.g. normal or exponential distributions), the conflation $\&(P_1, P_2, \ldots, P_n)$ of $P_1, P_2, \ldots, P_n$ is simply the probability distribution with density the normalized product of the input densities. That is,

(*) If $P_1, \ldots, P_n$ have densities $f_1, \ldots, f_n$, respectively, and the denominator is not $0$ or $\infty$, then $\&(P_1, P_2, \ldots, P_n)$ is continuous with density

$$f(x) = \frac{f_1(x)\, f_2(x) \cdots f_n(x)}{\int_{-\infty}^{\infty} f_1(y)\, f_2(y) \cdots f_n(y)\, dy}.$$

(Especially note that the product in (*) is taken for the densities evaluated at the same point, $x$. Note also that conflation is easy to calculate, and to visualize; see Figure 3.)

Remark. For discrete input distributions, the analogous definition of conflation is the normalized product of the probability mass functions, and for more general situations the definition is more technical [6].
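Definition (*) translates directly into a few lines of numerical code. The sketch below is one possible illustration, not the authors' implementation: it approximates the normalized product of densities on a finite grid with the midpoint rule. The function names, the grid bounds and the two normal inputs are assumptions chosen for illustration.

```python
import math

def normal_pdf(x, m, s):
    """Density of a normal distribution with mean m and standard deviation s."""
    return math.exp(-((x - m) ** 2) / (2 * s * s)) / (s * math.sqrt(2 * math.pi))

def conflate_on_grid(densities, lo, hi, steps=20_000):
    """Approximate the conflation density (*) on [lo, hi] with the midpoint rule.

    Returns (xs, values, dx), where values[i] approximates f(xs[i]).
    """
    dx = (hi - lo) / steps
    xs = [lo + (i + 0.5) * dx for i in range(steps)]
    product = [math.prod(f(x) for f in densities) for x in xs]
    total = sum(product) * dx  # approximates the denominator of (*)
    if not 0 < total < math.inf:
        raise ValueError("the integral of the product must be positive and finite")
    return xs, [p / total for p in product], dx

def grid_mean_sd(xs, values, dx):
    """Mean and standard deviation of a density sampled on a uniform grid."""
    mean = sum(x * v for x, v in zip(xs, values)) * dx
    var = sum((x - mean) ** 2 * v for x, v in zip(xs, values)) * dx
    return mean, math.sqrt(var)

# Hypothetical inputs: a broad N(0, 1) and a narrower N(2, 0.5^2).
f1 = lambda x: normal_pdf(x, 0.0, 1.0)
f2 = lambda x: normal_pdf(x, 2.0, 0.5)
xs, f, dx = conflate_on_grid([f1, f2], -10.0, 10.0)
print(grid_mean_sd(xs, f, dx))
```

The grid must be wide enough to hold essentially all of the product's mass; for inputs given as histograms, the same normalized product can be taken bin by bin.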
For the purposes of this paper, it will be assumed that the input distributions are continuous, and that the integral of their product is not $0$ or $\infty$. This is always the case, for example, when the input distributions are all normal.

As can easily be seen from (*) and elementary conditional probability, the conflation $\&(P_1, P_2, \ldots, P_n)$ of distributions $P_1, P_2, \ldots, P_n$ has a natural heuristic and practical interpretation: gather data from the independent laboratories sequentially and simultaneously, and record the values only at those times when the laboratories (nearly) agree. This observation is readily apparent in the discrete case. If two independent integer-valued random variables $X_1$ and $X_2$ (e.g., binomial or Poisson random variables) have probability mass functions $f_1(k) = \Pr(X_1 = k)$ and $f_2(k) = \Pr(X_2 = k)$, then the probability that $X_1 = j$ given that $X_1 = X_2$ is simply

$$\frac{\Pr(X_1 = X_2 = j)}{\Pr(X_1 = X_2)} = \frac{f_1(j)\, f_2(j)}{\sum_k f_1(k)\, f_2(k)}.$$

The argument in the continuous case follows similarly.

Figure 3. Conflating Distributions (Green curve is the conflation of red curves. Note that the mean of the conflation is closer to the mean of the input distribution with smaller variance, i.e. with greater accuracy.)

At first glance it may seem counterintuitive that the conflation of two relatively broad distributions can be a much narrower one (Figure 3). However, if both measurements are assumed equally valid, then with relatively high probability the true value should lie in the overlap region between the two distributions. Looking at it statistically, if one lab makes 50 measurements and another lab makes 100, then the standard deviations of their resulting distributions will usually be different.
If the labs' methods are also different, with different systematic errors, or their methods rely on different fundamental constants with different uncertainties, then the means will likely be different too. But the bottom line is that the total of 150 valid measurements is substantially greater than either lab's data set, so the standard deviation should indeed be smaller.

3. Properties of Conflation

Conflation has several basic mathematical properties with significant practical advantages, and to describe these properties succinctly, it will be assumed throughout this section that $X_1$ and $X_2$ are independent normal random variables with means $m_1, m_2$ and standard deviations $\sigma_1, \sigma_2$, respectively. That is, for $i = 1, 2$,

(**) $X_i \sim N(m_i, \sigma_i^2)$ has density function

$$f_i(x) = \frac{1}{\sigma_i \sqrt{2\pi}} \exp\left[-\frac{(x - m_i)^2}{2\sigma_i^2}\right] \quad \text{for all } -\infty < x < \infty,$$

and distribution $P_i$ given by $P_i(A) = \int_A f_i(x)\, dx$.

Remark. The generalization of the properties of conflation described below to more than two distributions is routine; the generalization to non-normal distributions is more technical, and can be found in [6].

Some of the basic properties of conflation are as follows.

(1) Conflation is commutative and associative:

$$\&(P_1, P_2) = \&(P_2, P_1) \quad \text{and} \quad \&(\&(P_1, P_2), P_3) = \&(P_1, \&(P_2, P_3)).$$

Proof. Immediate from (*) and the commutativity and associativity of multiplication of real numbers, which implies that $f_1(x) f_2(x) = f_2(x) f_1(x)$ and $(f_1(x) f_2(x)) f_3(x) = f_1(x) (f_2(x) f_3(x))$.

(2) Conflation is iterative:

$$\&(P_1, P_2, P_3) = \&(\&(P_1, P_2), P_3) = \&(\&(\&(P_1), P_2), P_3).$$

Proof. Immediate from (*).

Thus from (2), to include a new data set in the consolidation, simply conflate it with the overall conflation of the previous data sets.

(3) Conflations of normal distributions are normal: If $P_1$ and $P_2$ satisfy (**), then $\&(P_1, P_2)$ is normal with mean and variance

$$m = \frac{\dfrac{m_1}{\sigma_1^2} + \dfrac{m_2}{\sigma_2^2}}{\dfrac{1}{\sigma_1^2} + \dfrac{1}{\sigma_2^2}} = \frac{\sigma_2^2 m_1 + \sigma_1^2 m_2}{\sigma_1^2 + \sigma_2^2} \quad \text{and} \quad \sigma^2 = \frac{\sigma_1^2 \sigma_2^2}{\sigma_1^2 + \sigma_2^2} = \frac{1}{\dfrac{1}{\sigma_1^2} + \dfrac{1}{\sigma_2^2}}.$$
Proof. By (*) and (**), $\&(P_1, P_2)$ is continuous with density proportional to

$$f_1(x) f_2(x) = \frac{1}{2\pi \sigma_1 \sigma_2} \exp\left[-\frac{(x - m_1)^2}{2\sigma_1^2} - \frac{(x - m_2)^2}{2\sigma_2^2}\right].$$

Completing the square of the exponent gives

$$\frac{(x - m_1)^2}{2\sigma_1^2} + \frac{(x - m_2)^2}{2\sigma_2^2} = \frac{1}{2}\left(\frac{1}{\sigma_1^2} + \frac{1}{\sigma_2^2}\right) x^2 - \left(\frac{m_1}{\sigma_1^2} + \frac{m_2}{\sigma_2^2}\right) x + \frac{m_1^2}{2\sigma_1^2} + \frac{m_2^2}{2\sigma_2^2}$$

$$= \frac{1}{2}\left(\frac{1}{\sigma_1^2} + \frac{1}{\sigma_2^2}\right) \left[x - \frac{\dfrac{m_1}{\sigma_1^2} + \dfrac{m_2}{\sigma_2^2}}{\dfrac{1}{\sigma_1^2} + \dfrac{1}{\sigma_2^2}}\right]^2 + \frac{(m_1 - m_2)^2}{2(\sigma_1^2 + \sigma_2^2)},$$

where the last term does not depend on $x$. This is easily seen to be the exponent of the density of a normal distribution with the mean and variance in (3).

By (2) and (3), conflations of any finite number of normal distributions are always normal (see Figure 3, and red curve in Figure 4B). Similarly, many of the other important classical families of distributions, including gamma, beta, uniform, exponential, Pareto, Laplace, Bernoulli, zeta and geometric families, are also preserved under conflation [7, Theorem 7.1].

Figure 4A. Three Input Distributions

Figure 4B. Comparison of Averaging Probabilities, Averaging Data, and Conflating (Green curve is average of the three input distributions in Figure 4A, blue curve is average of the three input datasets, and red curve is the conflation.)

(4) Means and variances of conflations of normal distributions coincide with those of the weighted-least-squares method.

Sketch of proof. Given two independent distributions with means $m_1, m_2$ and standard deviations $\sigma_1, \sigma_2$, respectively, the weighted-least-squares mean $m$ is obtained by minimizing the function

$$f(m) = \frac{(m - m_1)^2}{\sigma_1^2} + \frac{(m - m_2)^2}{\sigma_2^2}$$

with respect to $m$. Setting

$$f'(m) = \frac{2(m - m_1)}{\sigma_1^2} + \frac{2(m - m_2)}{\sigma_2^2} = 0$$

and solving for $m$ yields

$$m = \frac{\sigma_2^2 m_1 + \sigma_1^2 m_2}{\sigma_1^2 + \sigma_2^2},$$

which, by (3), is the mean of the conflation of two normal distributions with means $m_1, m_2$ and standard deviations $\sigma_1, \sigma_2$. The conclusion for the weighted-least-squares variance follows similarly.
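Properties (2), (3) and (4) combine into a short closed-form computation for normal inputs. The sketch below is an illustration under stated assumptions: `conflate_normals` and `wls_gradient` are hypothetical helper names, and the three (mean, standard deviation) pairs are made-up values, not data from the paper. It extends (3) to n inputs through the iterative property (2), then checks that the derivative of the weighted-least-squares objective in (4) vanishes at the conflation mean.

```python
import math

def conflate_normals(inputs):
    """Conflation of independent normals given as (mean, sd) pairs.

    By (3), extended to n inputs via (2): the precisions 1/sd^2 add, and the
    mean is the precision-weighted average of the input means.
    """
    precisions = [1.0 / (s * s) for _, s in inputs]
    total = sum(precisions)
    mean = sum(p * m for p, (m, _) in zip(precisions, inputs)) / total
    return mean, math.sqrt(1.0 / total)

def wls_gradient(m, inputs):
    """Derivative of the weighted-least-squares objective sum((m - m_i)^2 / sd_i^2)."""
    return sum(2.0 * (m - mi) / (si * si) for mi, si in inputs)

# Hypothetical (mean, sd) pairs for three labs.
labs = [(0.0, 1.0), (2.0, 0.5), (1.0, 2.0)]

all_at_once = conflate_normals(labs)
# Property (2): conflate the first two labs, then fold in the third.
step_by_step = conflate_normals([conflate_normals(labs[:2]), labs[2]])

print(all_at_once, step_by_step)
print(wls_gradient(all_at_once[0], labs))  # should be numerically zero
```

Because the result of each conflation is again a (mean, standard deviation) pair, new measurements can be folded in one at a time without revisiting the earlier inputs.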
Shannon Information

Whenever data from several (input) distributions is consolidated into a single (output) distribution, this will typically result in some loss of information, however that is defined. One of the most classical measures of information is the Shannon information. Recall that the Shannon information obtained from observing that a random variable $X$ is in a certain set $A$ is $-\log_2$ of the probability that $X$ is in $A$. That is, the Shannon information is the number of binary bits of information obtained by observing that $X$ is in $A$. For example, if $X$ is a random variable uniformly distributed on the unit interval $[0, 1]$, then observing that $X$ is greater than $\tfrac{1}{2}$ has Shannon information exactly

$$-\log_2 \Pr\left(X > \tfrac{1}{2}\right) = -\log_2\left(\tfrac{1}{2}\right) = 1,$$

so one unit (binary bit) of Shannon information has been obtained, namely, that the first binary digit in the expansion of $X$ is 1.

The Shannon information is also called the surprisal, or self-information: the smaller the value of $\Pr(X \in A)$, the greater the information or surprise. The (combined) Shannon information obtained by observing that independent random variables $X_1$ and $X_2$ are both in $A$ is simply the sum of the information obtained from each of the datasets $X_1$ and $X_2$, that is,

$$S_{P_1, P_2}(A) = S_{P_1}(A) + S_{P_2}(A) = -\log_2 P_1(A) P_2(A).$$

Thus the loss in Shannon information incurred in replacing the pair of distributions $P_1, P_2$ by a single probability distribution $Q$ is $S_{P_1, P_2}(A) - S_Q(A)$ for the event $A$.

(5) Conflation minimizes the loss of Shannon information: If $P_1$ and $P_2$ are independent probability distributions, then the conflation $\&(P_1, P_2)$ of $P_1$ and $P_2$ is the unique probability distribution that minimizes, over all events $A$, the maximum loss of Shannon information in replacing the pair $P_1, P_2$ by a single distribution $Q$.

Sketch of proof.
First observe that for an event $A$, the difference between the combined Shannon information obtained from $P_1$ and $P_2$ and the Shannon information obtained from a single probability $Q$ is

$$S_{P_1, P_2}(A) - S_Q(A) = \log_2 \frac{Q(A)}{P_1(A) P_2(A)}.$$

Since $\log_2(x)$ is strictly increasing, the maximum (loss) thus occurs for an event $A$ where $\dfrac{Q(A)}{P_1(A) P_2(A)}$ is maximized.

Next note that the largest loss of Shannon information occurs for small sets $A$, since for disjoint sets $A$ and $B$,

$$\frac{Q(A \cup B)}{P_1(A \cup B)\, P_2(A \cup B)} \le \frac{Q(A) + Q(B)}{P_1(A) P_2(A) + P_1(B) P_2(B)} \le \max\left\{\frac{Q(A)}{P_1(A) P_2(A)}, \frac{Q(B)}{P_1(B) P_2(B)}\right\},$$

where the inequalities follow from the inequalities $(a + b)(c + d) \ge ac + bd$ and $\dfrac{a + b}{c + d} \le \max\left\{\dfrac{a}{c}, \dfrac{b}{d}\right\}$ for positive numbers $a, b, c, d$. Since $P_1$ and $P_2$ are normal, their densities $f_1(x)$ and $f_2(x)$ are continuous everywhere, so the small set $A$ may in fact be replaced by an arbitrarily small interval, and the problem reduces to finding the probability density function $f$ that makes the maximum, over all real values $x$, of the ratio $\dfrac{f(x)}{f_1(x) f_2(x)}$ as small as possible. But, as is seen in the discrete framework, the minimum over all nonnegative $p_1, \ldots, p_n$ with $p_1 + \cdots + p_n = 1$ of the maximum of $\dfrac{p_1}{q_1}, \ldots, \dfrac{p_n}{q_n}$ occurs when $\dfrac{p_1}{q_1} = \cdots = \dfrac{p_n}{q_n}$ (if they are not equal, reducing the numerator of the largest ratio, and increasing that of the smallest, will make the maximum smaller). Thus the $f$ that makes the maximum of $\dfrac{f(x)}{f_1(x) f_2(x)}$ as small as possible is $f(x) = c\, f_1(x) f_2(x)$, where $c$ is chosen to make $f$ a density function, i.e., to make $f$ integrate to 1. But this is exactly the definition of the conflation $\&(P_1, P_2)$ in (*).

Remark. The proof only uses the facts that normal distributions have densities that are continuous and positive everywhere, and that the integral of the product of every two normal densities is finite and positive.
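The discrete step of the argument can be illustrated with a small numerical experiment. The sketch below is a simplified illustration, not the proof: it assumes two made-up probability mass functions with strictly positive entries and measures the information loss only over single outcomes, where the maximum is attained according to the argument above. The names `conflate_pmf` and `max_information_loss` are hypothetical.

```python
import math

def conflate_pmf(f1, f2):
    """Normalized product of two probability mass functions on the same outcomes."""
    q = [a * b for a, b in zip(f1, f2)]
    total = sum(q)
    if total <= 0:
        raise ValueError("the inputs must share at least one outcome of positive mass")
    return [v / total for v in q]

def max_information_loss(p, f1, f2):
    """Largest loss in bits over single outcomes k: max_k log2(p[k] / (f1[k] * f2[k])).

    Assumes f1[k] * f2[k] > 0 for every k; outcomes with p[k] == 0 are skipped
    because they can never attain the maximum.
    """
    return max(math.log2(pk / (a * b)) for pk, a, b in zip(p, f1, f2) if pk > 0)

# Hypothetical input distributions on three outcomes.
f1 = [0.5, 0.3, 0.2]
f2 = [0.2, 0.3, 0.5]

conflation = conflate_pmf(f1, f2)
other = [0.4, 0.3, 0.3]  # an arbitrary competing consolidation

print(max_information_loss(conflation, f1, f2))
print(max_information_loss(other, f1, f2))
```

For the conflation every ratio `p[k] / (f1[k] * f2[k])` is the same constant, so no mass can be moved between outcomes without raising the largest ratio.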
(6) Conflation is a best linear unbiased estimate (BLUE): If $X_1$ and $X_2$ are independent unbiased estimates of $\theta$ with finite standard deviations $\sigma_1, \sigma_2$, respectively, then $\mathrm{mean}[\&(N_1, N_2)]$ is a best linear unbiased estimate for $\theta$, where $N_1$ and $N_2$ are independent normal probability distributions with (random) means $X_1$ and $X_2$, and standard deviations $\sigma_1$ and $\sigma_2$, respectively.

Sketch of proof. Let $X = pX_1 + (1 - p)X_2$ be the linear estimator of $\theta$ based on $X_1$ and $X_2$ and weight $0 \le p \le 1$. Then the expected value $E(X)$ of $X$ is $E(X) = p\, m_1 + (1 - p)\, m_2$, and since $X_1$ and $X_2$ are independent the variance $V(X)$ of $X$ is $V(X) = p^2 \sigma_1^2 + (1 - p)^2 \sigma_2^2$. To find the $p^*$ that minimizes $V(X)$, setting

$$\frac{dV}{dp} = 2p\,\sigma_1^2 - 2(1 - p)\,\sigma_2^2 = 0$$

yields $p^* = \dfrac{\sigma_2^2}{\sigma_1^2 + \sigma_2^2}$, so

$$X^* = \frac{\sigma_2^2 X_1}{\sigma_1^2 + \sigma_2^2} + \frac{\sigma_1^2 X_2}{\sigma_1^2 + \sigma_2^2}$$

is BLUE for $\theta$. But by (3), $X^*$ is the mean of $\&(N_1, N_2)$.

(7) Conflation yields a maximum likelihood estimator (MLE): If $X_1$ and $X_2$ are independent normal unbiased estimates of $\theta$ with finite standard deviations $\sigma_1, \sigma_2$, respectively, then $\mathrm{mean}[\&(N_1, N_2)]$ is a maximum likelihood estimator (MLE) for $\theta$, where $N_1$ and $N_2$ are independent normal probability distributions with (random) means $X_1$ and $X_2$, and standard deviations $\sigma_1$ and $\sigma_2$, respectively.

Sketch of proof. The classical likelihood function in this case is

$$L = f(X_1; \theta)\, f(X_2; \theta) = \frac{1}{\sigma_1 \sqrt{2\pi}} \exp\left[-\frac{(X_1 - \theta)^2}{2\sigma_1^2}\right] \cdot \frac{1}{\sigma_2 \sqrt{2\pi}} \exp\left[-\frac{(X_2 - \theta)^2}{2\sigma_2^2}\right],$$

so to find the $\theta^*$ that maximizes $L$, take the partial derivative of $\log L$ with respect to $\theta$ and set it equal to zero:

$$\frac{\partial \log L}{\partial \theta} = \frac{X_1 - \theta}{\sigma_1^2} + \frac{X_2 - \theta}{\sigma_2^2} = 0.$$

This implies that the critical point (and maximum likelihood) occurs when

$$\theta^* \left(\frac{1}{\sigma_1^2} + \frac{1}{\sigma_2^2}\right) = \frac{X_1}{\sigma_1^2} + \frac{X_2}{\sigma_2^2}.$$

Thus

$$\theta^* = \frac{\dfrac{X_1}{\sigma_1^2} + \dfrac{X_2}{\sigma_2^2}}{\dfrac{1}{\sigma_1^2} + \dfrac{1}{\sigma_2^2}}.$$

By (3), this implies that the MLE $\theta^*$ is the mean of $\&(N_1, N_2)$.

Remark. Note that the normality of the underlying distributions is used in (7), but it is not required for (5) or (6).
Properties (4), (6), and (7) in the general cases use Aitken's generalization of the Gauss-Markov theorem and related results; e.g. see [1] and [11].

In addition to (6) and (7), conflation is also optimal with respect to several other statistical properties. In classical hypothesis testing, for example, a standard technique to decide from which of $n$ known distributions given data actually came is to maximize the likelihood ratios, that is, the ratios of the probability density or probability mass functions. Analogously, when the objective is how best to consolidate data from those input distributions into a single (output) distribution $P$, one natural criterion is to choose $P$ so as to make the ratios of the likelihood of observing $x$ under $P$ to the likelihood of observing $x$ under all of the (independent) distributions $\{P_i\}$ as close as possible. The conflation of the distributions is the unique probability distribution that makes the variation of these likelihood ratios as small as possible.

The conflation of the distributions is also the unique probability distribution that preserves the proportionality of likelihoods. A criterion similar to likelihood ratios is to require that the output distribution $P$ reflect the relative likelihoods of identical individual outcomes under the $\{P_i\}$. For example, if the likelihood of all the experiments $\{P_i\}$ observing the identical outcome $x$ is twice that of the likelihood of all the experiments observing $y$, then $P(x)$ should also be twice as large as $P(y)$.

Conflation has one more advantage over the methods of averaging probabilities or data. In practice, assumptions are often made about the form of the input distributions, such as an assumption that underlying data is normally distributed [9]. But the true and estimated values for Planck's constant are clearly never negative, so the underlying distribution is certainly not truly normally distributed; more likely it is truncated normal.
Using conflations, the problem of truncation essentially disappears: it is automatically taken into account. If one of the input distributions is summarized as a true normal distribution, and the other excludes negative values, for example, then the conflation will exclude negative values, as is seen in Figure 5.

Figure 5. (Green curve is the conflation of red curves. Note that the conflation has no negative values, since the triangular input had none.)

4. Examples in Measurements of Physical Constants and High-energy Physics

As was described in the Introduction, methods for combining independent data sets are especially pertinent today as progress is made in creating highly precise measurement standards and reference values for basic physical quantities. The first two authors were originally concerned with the value of Avogadro's number [3] and later with a re-definition of the kilogram [7]. This endeavor brought them into contact with the researchers at NIST and their foreign counterparts, and, as suggested in the Introduction, it became apparent that an objective method for combining data sets measured in different laboratories is a pressing need.

While the authors of this paper believe that the kilogram should be defined in terms of a predetermined theoretical value for Avogadro's number [7], the NIST approach is based instead on a more precise value for Planck's constant determined in the laboratory using a watt balance. In fact this approach may result in a defined exact value for Planck's constant in parallel with the speed of light and the second (these two determine the meter exactly as well).

Since conflation is the result produced by an objective analysis of exactly this question, namely how to consolidate data from independent experiments, perhaps conflation can be employed to obtain better consolidations of experimental data for the fundamental physical constants.
The purpose of this section is to illustrate, using concrete real data, how conflation may be used for this problem.

Example 1. {220} Lattice Spacing Measurements

The input data used to obtain the CODATA 2006 recommended values and uncertainties of the fundamental physical constants includes the measurements and inferred values of the absolute {220} lattice spacing of various silicon crystals used in the determination of Planck's constant and the Avogadro constant. The four measurements came from three different laboratories, and had values 192,015.565(13), 192,015.5973(84), 192,015.5732(53) and 192,015.5685(67), respectively [10, Table XXIV], where the parenthetical entry is the uncertainty. The CODATA Task Force viewed the second value as "inconsistent" with the other three (see red curves in Figure 6) and made a consensus adjustment of the uncertainties. Since those values "are the means of tens of individual values, with each value being the average of about ten data points" [10], the central limit theorem suggests that the underlying datasets are approximately normally distributed, as is shown in Figure 6 (red curves).

The conflation of those four input distributions, however, requires no consensus adjustment, and yields a value essentially the same as the final CODATA value, namely, 192,015.5762 [10, Table LIII], but with a much smaller uncertainty. Since uncertainties play an important role in determining the values of the related constants via weighted least squares, this smaller, and theoretically justifiable, uncertainty is a potential improvement to the current accepted values.

Figure 6. (The four red curves are the distributions of the four measurements of the {220} lattice spacing underlying the CODATA 2006 values; the green curve is the conflation of those four distributions, and requires no ad hoc adjustment.)

Example 2.
Top Quark Mass Measurements

The top quark is a spin-1/2 fermion with charge two thirds that of the proton, and its mass is a fundamental parameter of the Standard Model of particle physics. Measurements of the mass of the top quark are done chiefly by two different detector groups at the Fermi National Accelerator Laboratory (FNAL) Tevatron: the CDF collaboration using a multivariate-template method, a b-jet decay-length likelihood method, and a dynamic-likelihood method; and the DØ collaboration using a matrix-element-weighting method and a neutrino-weighting method. The mass of the top quark was then "calculated from eleven independent measurements made by the CDF and DØ collaborations", yielding the eleven measurements 167.4(11.4), 168.4(12.8), 164.5(5.5), 178.1(8.3), 176.1(7.3), 180.1(5.3), 170.9(2.5), 170.3(4.4), 186.0(11.5), 174.0(5.2), and 183.9(15.8) GeV [6, Figure 4].

Again assuming that each of these measurements is approximately normally distributed, the conflation of these eleven independent input distributions is normal with mean and uncertainty (standard deviation) 172.63(1.6), which has a slightly higher mean and a lower uncertainty than the average mass of 171.4(2.1) reported in [5]. (Top quark measurements are being updated regularly, and the reader interested in the latest values should check the most recent FNAL publications; these concrete values were used simply for illustrative purposes.)

5. Weighted Conflation

The conflation $\&(P_1, \ldots, P_n)$ of $n$ independent probability distributions (experimental datasets) $P_1, \ldots, P_n$ described above and in [7] treated all the underlying distributions equally, with no differentiation between relative perceived validities of the experiments. A related statistical concept is that of a uniform prior, that is, a prior assumption that all the experiments are equally likely to be valid.
If, on the other hand, additional assumptions are made about the reliabilities or validities of the various experiments (for instance, that one experiment was supervised by a more experienced researcher, or employed a methodology thought to be better than another), then consolidating the data from the independent experiments should probably be adjusted to account for this perceived non-uniformity.

More concretely, suppose that in addition to the independent experimental distributions $P_1, \ldots, P_n$, nonnegative weights $w_1, \ldots, w_n$ are assigned to each of the distributions to reflect their perceived relative validity. For example, if $P_1$ is considered twice as reliable as $P_2$, then $w_1 = 2w_2$. Without loss of generality, the weights $w_1, \ldots, w_n$ are nonnegative, and at least one is positive. How should this additional information $w_1, \ldots, w_n$ be incorporated into the consolidation of the input data? That is, what probability distribution $Q = \&((P_1, w_1), \ldots, (P_n, w_n))$ should replace the uniform-weight conflation $\&(P_1, \ldots, P_n)$?

For the case where all the underlying datasets are assumed equally valid, it was seen that the conflation $\&(P_1, \ldots, P_n)$ is the unique single probability distribution $Q$ that minimizes the loss of Shannon information between $Q$ and the original distributions $P_1, \ldots, P_n$. Similarly, for weighted distributions $(P_1, w_1), \ldots, (P_n, w_n)$, identifying the probability distribution $Q$ that minimizes the loss of Shannon information between $Q$ and the weighted data distributions leads to a unique distribution $\&((P_1, w_1), \ldots, (P_n, w_n))$ called the weighted conflation.

Given $n$ weighted (independent) distributions $(P_1, w_1), \ldots, (P_n, w_n)$, the weighted Shannon information of the event $A$, $S_{(P_1, w_1), \ldots, (P_n, w_n)}(A)$, is

$$S_{(P_1, w_1), \ldots, (P_n, w_n)}(A) = \sum_{j=1}^{n} \frac{w_j}{w_{\max}} S_{P_j}(A) = -\sum_{j=1}^{n} \frac{w_j}{w_{\max}} \log_2 P_j(A),$$

where, here and throughout, $w_{\max} = \max\{w_1, \ldots, w_n\}$.
Note that $S_{(P_1, w_1), \ldots, (P_n, w_n)}$ is continuous and symmetric in both $P_1, \ldots, P_n$ and $w_1, \ldots, w_n$, and that $S_{(P_1, w_1), \ldots, (P_n, w_n)}(A) = 0$ if all the probabilities of $A$ are 1, for all $P_1, \ldots, P_n$ and $w_1, \ldots, w_n$. That is, no matter what the distributions and weights, no information is attained by observing any event that is certain to occur.

Remarks

(i) Dividing by $w_{\max}$ reflects the assumption that only the relative weights are important, so for instance, if one experiment is considered twice as likely to be valid as another, then the information obtained from that experiment should be exactly twice as much as the information from the other, regardless of the absolute magnitudes of the weights. Thus in this latter case, for example,

$$S_{(P_1, 2), (P_2, 1)}(A) = S_{(P_1, 4), (P_2, 2)}(A) = S_{P_1}(A) + \tfrac{1}{2} S_{P_2}(A).$$

In general, this means simply that for all $P_1, \ldots, P_n$ and $w_1, \ldots, w_n$,

$$S_{(P_1, w_1), \ldots, (P_n, w_n)}(A) = S_{(P_1, w_1/w_{\max}), \ldots, (P_n, w_n/w_{\max})}(A).$$

(ii) If all the weights are equal, the weighted Shannon information coincides with the classical combined Shannon information, i.e.,

$$S_{(P_1, w_1), \ldots, (P_n, w_n)}(A) = \sum_{j=1}^{n} S_{P_j}(A) \quad \text{if } w_1 = \cdots = w_n > 0.$$

(iii) The weighted Shannon information is at least the Shannon information of the single input distribution with the largest weight, and no more than the classical combined Shannon information of $P_1, \ldots, P_n$. That is, labeling the distributions so that $P_1$ has the largest weight,

$$S_{P_1}(A) \le S_{(P_1, w_1), \ldots, (P_n, w_n)}(A) \le S_{P_1, \ldots, P_n}(A),$$

with equality if $w_1 > w_2 = \cdots = w_n = 0$, or $w_1 = \cdots = w_n > 0$, respectively.

Next, the basic definition of conflation (*) is generalized to the definition of weighted conflation, where $\&((P_1, w_1), \ldots, (P_n, w_n))$ designates the weighted conflation of $P_1, \ldots, P_n$ with respect to the weights $w_1, \ldots, w_n$.
(***) If $P_1, \dots, P_n$ have densities $f_1, \dots, f_n$, respectively, and the denominator is not 0 or $\infty$, then $\&((P_1, w_1), \dots, (P_n, w_n))$ is continuous with density

$$f(x) = \frac{f_1^{w_1/w_{\max}}(x) \, f_2^{w_2/w_{\max}}(x) \cdots f_n^{w_n/w_{\max}}(x)}{\int_{-\infty}^{\infty} f_1^{w_1/w_{\max}}(y) \, f_2^{w_2/w_{\max}}(y) \cdots f_n^{w_n/w_{\max}}(y) \, dy}.$$

Remarks

(i) The definition of weighted conflation for discrete distributions is analogous, with the probability density functions and integration replaced by probability mass functions and summation.

(ii) If $P_1, \dots, P_n$ are normal distributions with means $m_1, \dots, m_n$ and variances $\sigma_1^2, \dots, \sigma_n^2$, respectively, then an easy calculation shows that $\&((P_1, w_1), \dots, (P_n, w_n))$ is normal with

$$m = \frac{\dfrac{w_1 m_1}{\sigma_1^2} + \dots + \dfrac{w_n m_n}{\sigma_n^2}}{\dfrac{w_1}{\sigma_1^2} + \dots + \dfrac{w_n}{\sigma_n^2}} \quad \text{and} \quad \sigma^2 = \frac{w_{\max}}{\dfrac{w_1}{\sigma_1^2} + \dots + \dfrac{w_n}{\sigma_n^2}}.$$

Observe that the mean of the weighted conflation is closer to the mean of the distribution with the largest weight than the mean of the unweighted conflation is, and the variance is also closer to the variance of that distribution. Also, an easy calculation shows that the variance of the weighted conflation is always at least as large as the variance of the equally weighted conflation, and is never greater than the variance of the input distribution with the largest weight.

(iii) The weighted conflation depends only on the relative, not the absolute, values of the weights; that is,

$$\&((P_1, w_1), \dots, (P_n, w_n)) = \&((P_1, w_1/w_{\max}), \dots, (P_n, w_n/w_{\max})).$$

(iv) If all the weights are equal, the weighted conflation coincides with the standard conflation, that is,

$$\&((P_1, w_1), \dots, (P_n, w_n)) = \&(P_1, \dots, P_n) \quad \text{if } w_1 = \dots = w_n > 0.$$

(v) Updating a weighted distribution with an additional distribution and weight is straightforward: compute the weighted conflation of the pre-existing weighted conflation distribution and the new distribution, using weights $w_{\max} = \max\{w_1, \dots, w_n\}$ and $w_{n+1}$, respectively.
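The normal case in Remark (ii) and the updating rule in Remark (v) lend themselves to a quick numerical check. The following is a minimal sketch, not the authors' code: the function names, the integration grid, and the example means, variances and weights are all hypothetical. It computes the closed-form mean and variance, compares them with moments obtained by numerically normalizing the weighted product of densities in (***), and then verifies that updating with a third input reproduces the direct three-input result.

```python
import math


def weighted_conflation_normal(means, variances, weights):
    """Closed-form mean and variance of the weighted conflation of normals."""
    if min(weights) < 0 or max(weights) <= 0:
        raise ValueError("weights must be nonnegative with at least one positive")
    w_max = max(weights)
    # Sum of w_j / sigma_j^2 over the inputs.
    precision = sum(w / v for w, v in zip(weights, variances))
    mean = sum(w * m / v for w, m, v in zip(weights, means, variances)) / precision
    variance = w_max / precision
    return mean, variance


def normal_pdf(x, mean, variance):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-(x - mean) ** 2 / (2 * variance)) / math.sqrt(2 * math.pi * variance)


def weighted_conflation_moments_numeric(means, variances, weights,
                                        lo=-10.0, hi=10.0, steps=20001):
    """Mean and variance of the density in (***), via a Riemann sum on a grid."""
    w_max = max(weights)
    dx = (hi - lo) / (steps - 1)
    xs = [lo + i * dx for i in range(steps)]
    # Unnormalized product of f_j(x) ** (w_j / w_max).
    g = [math.prod(normal_pdf(x, m, v) ** (w / w_max)
                   for m, v, w in zip(means, variances, weights))
         for x in xs]
    total = sum(g)  # the common factor dx cancels in the ratios below
    mean = sum(x * gx for x, gx in zip(xs, g)) / total
    variance = sum((x - mean) ** 2 * gx for x, gx in zip(xs, g)) / total
    return mean, variance


# Hypothetical inputs: N(0, 1) with weight 2 and N(3, 4) with weight 1.
means, variances, weights = [0.0, 3.0], [1.0, 4.0], [2.0, 1.0]

closed = weighted_conflation_normal(means, variances, weights)
numeric = weighted_conflation_moments_numeric(means, variances, weights)
unweighted = weighted_conflation_normal(means, variances, [1.0, 1.0])

# Remark (v): update with a third hypothetical input N(1, 2) of weight 3.
direct = weighted_conflation_normal(means + [1.0], variances + [2.0], weights + [3.0])
updated = weighted_conflation_normal([closed[0], 1.0], [closed[1], 2.0],
                                     [max(weights), 3.0])

print(closed, numeric, unweighted, direct, updated)
```

With these inputs the closed form gives mean 1/3 and variance 8/9, compared with mean 0.6 and variance 0.8 for the unweighted conflation, so the weighted result sits closer to the more heavily weighted N(0, 1) input. The grid limits and step count are arbitrary and would need adjusting for inputs with very different scales.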
In symbols, the updating rule of Remark (v), which is the analog of (2) for weighted conflation, is

$$\&((P_1, w_1), \dots, (P_n, w_n), (P_{n+1}, w_{n+1})) = \&\big( (\&((P_1, w_1), \dots, (P_n, w_n)), \, w_{\max}), \, (P_{n+1}, w_{n+1}) \big).$$

(vi) Normalized products of density functions of the forms (*) and (***) have been studied in the context of "log opinion pools" and, more recently, in the setting of Hilbert spaces; see [4] and [7] and the references therein.

(8) Weighted conflation minimizes the loss of weighted Shannon information: If $(P_1, w_1), \dots, (P_n, w_n)$ are weighted independent distributions, then the weighted conflation $\&((P_1, w_1), \dots, (P_n, w_n))$ is the unique probability distribution that minimizes, over all events $A$, the maximum loss of weighted Shannon information in replacing $(P_1, w_1), \dots, (P_n, w_n)$ by a single distribution $Q$.

The proofs of the above conclusions for weighted conflation follow almost exactly from those for uniform conflation, and the details are left for the interested reader.

5. Conclusion

The conflation of independent input-data distributions is a probability distribution that summarizes the data in an optimal and unbiased way. The input data may already be summarized, perhaps as a normal distribution with given mean and variance, or may be the raw data themselves in the form of an empirical histogram or density. The conflation of these input distributions is easy to calculate and visualize, and affords easy computation of sharp confidence intervals. Conflation is also easy to update, is the unique minimizer of loss of Shannon information, is the unique minimal likelihood ratio consolidation, and is the unique proportional consolidation of the input distributions. Conflation of normal distributions is always normal, and conflation preserves truncation of data. Perhaps the method of conflating input data will provide a practical and simple, yet optimal and rigorous, method to address the basic problem of consolidation of data.
Acknowledgement

The authors are grateful to Dr. Peter Mohr for enlightening discussions regarding the 2006 CODATA evaluation process, and to Dr. Ron Fox for many suggestions.

References

[1] Aitchison, J. (1982) The statistical analysis of compositional data, J. Royal Statist. Soc. Series B 44, 139-177.
[2] Davis, R. (2005) Possible new definitions of the kilogram, Phil. Trans. R. Soc. A 363, 2249-2264.
[3] Fox, R. F. and Hill, T. (2007) An exact value for Avogadro's number, American Scientist 95, 104-107.
[4] Genest, C. and Zidek, J. (1986) Combining probability distributions: a critique and an annotated bibliography, Statist. Sci. 1, 114-148.
[5] Heinson, A. (2006) Top quark mass measurements, DØ Note 5226, Fermilab-Conf-06/287-E.
[6] Hill, T. (2008) Conflations of probability distributions, accepted for publication in Trans. Amer. Math. Soc.
[7] Hill, T., Fox, R. F. and Miller, J. A better definition of the kilogram, http://arxiv.org/abs/1005.5139
[8] Mohr, P. (2008) Private communication.
[9] Mohr, P., Taylor, B. and Newell, D. (2007) The fundamental physical constants, Physics Today, 52-55.
[10] Mohr, P., Taylor, B. and Newell, D. (2008) CODATA recommended values of the fundamental physical constants: 2006, Rev. Mod. Phys. 80, 633-730.
[11] Rencher, A. and Schaalje, G. (2008) Linear Models in Statistics, Wiley.
