MCODE: Multivariate Conditional Outlier Detection

Outlier detection aims to identify unusual data instances that deviate from expected patterns. The outlier detection is particularly challenging when outliers are context dependent and when they are defined by unusual combinations of multiple outcome variable values. In this paper, we develop and study a new conditional outlier detection approach for multivariate outcome spaces that works by (1) transforming the conditional detection to the outlier detection problem in a new (unconditional) space and (2) defining outlier scores by analyzing the data in the new space. Our approach relies on the classifier chain decomposition of the multi-dimensional classification problem that lets us transform the output space into a probability vector, one probability for each dimension of the output space. Outlier scores applied to these transformed vectors are then used to detect the outliers. Experiments on multiple multi-dimensional classification problems with the different outlier injection rates show that our methodology is robust and able to successfully identify outliers when outliers are either sparse (manifested in one or very few dimensions) or dense (affecting multiple dimensions).

💡 Research Summary

The paper introduces MCODE (Multivariate Conditional Outlier Detection), a novel framework designed to detect outliers in data sets where each instance is associated with a high‑dimensional binary label vector. Traditional outlier detection methods operate in an unconditional manner, treating all attributes jointly and ignoring the fact that the “normality” of a label often depends on the context provided by the input features. This limitation leads to false positives (e.g., rare but correct image tags) and false negatives (e.g., common tags that are inappropriate for a specific image).

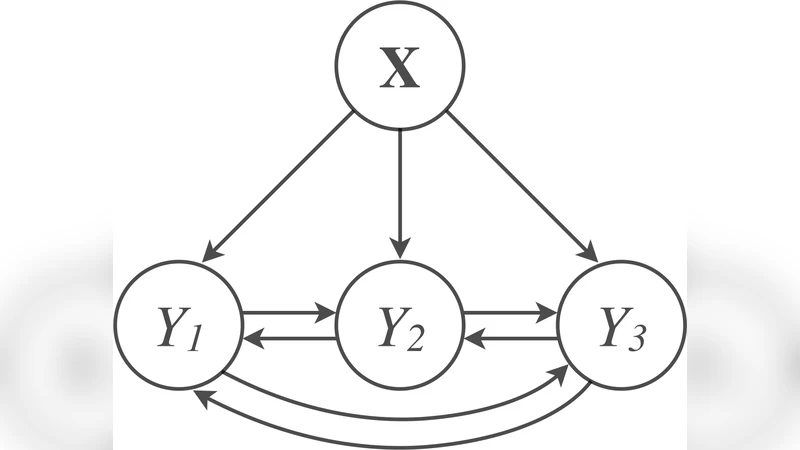

MCODE addresses this problem in two stages. First, it learns a conditional probability model P(Y|X) from a training set assumed to be largely free of outliers. The authors adopt the classifier‑chain decomposition: the joint distribution of the d label variables Y = (Y₁,…,Y_d) is factorized into a product of d univariate conditional distributions P(Y_i | X, Y_{π(i)}), where π(i) denotes a subset of previously ordered labels. Each factor is modeled with a standard binary classifier (logistic regression, SVM, decision tree, etc.), allowing the method to capture both context‑to‑label dependencies (through X) and label‑to‑label dependencies (through Y_{π(i)}). This decomposition reduces the exponential complexity of learning P(Y|X) to a linear number of binary learning problems, making the approach scalable to large d.

In the second stage, the trained chain is applied to each test instance. For a given instance (x, y) the chain produces a probability vector p = (p₁,…,p_d), where p_i = P(Y_i = y_i | x, y_{π(i)}). This vector is a representation of the original output space in an unconditional “probability” space that already incorporates contextual information. Outlier scores are then computed on p rather than on the raw feature space. The authors explore several scoring functions: (a) the sum of log probabilities (∑_i log p_i), which penalizes instances whose overall joint probability is low; (b) dimension‑wise deviation scores that highlight individual dimensions with unusually low probabilities, useful for sparse outliers affecting only a few labels; and (c) density‑based scores (e.g., kernel density estimation) applied to the probability vectors, which capture dense clusters of normal instances and flag points that fall in low‑density regions. By combining these scores, MCODE can handle both sparse outliers (few dimensions anomalous) and dense outliers (many dimensions anomalous).

The experimental evaluation covers several real‑world multi‑label domains: image annotation, document keyword assignment, and medical diagnosis. For each dataset the authors inject synthetic label errors at varying rates (1 %–20 %) to simulate both sparse and dense outlier scenarios. MCODE is compared against a suite of unconditional detectors (LOF, One‑Class SVM, Isolation Forest) and a recent conditional detector based on Gaussian mixture models (Song et al.). Results show that MCODE consistently achieves higher Area Under the ROC Curve (AUC) and lower false‑positive rates. In particular, for sparse outliers the dimension‑wise log‑probability score outperforms LOF by 10–15 % in detection rate, demonstrating the benefit of examining individual label probabilities. For dense outliers, the combined density‑based score yields the best performance, confirming that the probability‑vector representation preserves the joint structure of the label space.

Beyond performance, the paper highlights computational advantages. Training a classifier chain requires fitting d binary models, each of which can be parallelized and scales linearly with the number of labels. In contrast, the GMM‑based conditional method needs iterative Expectation‑Maximization over the full joint space, which becomes prohibitive as d grows. Moreover, because MCODE directly estimates P(Y_i | X, Y_{π(i)}), it can flag low‑probability events at the granularity of a single label—a capability absent in methods that only compute the full joint probability.

The authors acknowledge limitations and future directions. Currently MCODE handles binary labels; extending to multi‑class or continuous outputs would require alternative conditional models (e.g., multinomial logistic regression or regression trees). The classifier‑chain ordering can affect performance; ensemble techniques that average over multiple random orderings or learn a dependency graph could mitigate this sensitivity. Finally, the assumption of an outlier‑free training set may not hold in practice; robust learning strategies that down‑weight suspected outliers during model fitting are a promising avenue.

In summary, MCODE offers a principled, scalable, and empirically validated solution to multivariate conditional outlier detection. By learning a context‑aware probabilistic model via classifier chains, projecting outputs into a probability‑vector space, and applying flexible scoring functions, it successfully identifies both sparse and dense anomalous label patterns that unconditional methods miss. This work bridges the gap between multi‑label learning and anomaly detection, opening new possibilities for reliable data cleaning, quality assurance, and early warning systems in domains where label correctness is context‑dependent.

Comments & Academic Discussion

Loading comments...

Leave a Comment