A Hebbian/Anti-Hebbian Neural Network for Linear Subspace Learning: A Derivation from Multidimensional Scaling of Streaming Data

Neural network models of early sensory processing typically reduce the dimensionality of streaming input data. Such networks learn the principal subspace, in the sense of principal component analysis (PCA), by adjusting synaptic weights according to …

Authors: Cengiz Pehlevan, Tao Hu, Dmitri B. Chklovskii

A Hebbian/Anti-Heb bian Neural Network f or Linear Subspace Lear ning: A Deriv ation fr om Multidimensional Scaling of Str eaming Data Cengiz Pehle v an 1 , 2 , T ao Hu 3 , Dmitri B. Chklovskii 2 1 Janelia Research Campus, Ho ward Hughes Medical Institute, Ashb urn, V A 20147 2 Simons Center for Data Analysis, 160 Fifth A ve, Ne w Y ork, NY 10010 3 T exas A&M Uni versity , MS 3128 T AMUS, College Station, TX 77843 K eywords: Online learning, Linear subspace tracking, Multidimensional scaling, Neural networks Abstract Neural netw ork models of early sensory processing typically reduce the dimension- ality of streaming input data. Such networks learn the principal subspace, in the sense of principal component analysis (PCA), by adjusting synaptic weights according to acti vity-dependent learning rules. When deriv ed from a principled cost function these rules are nonlocal and hence biologically implausible. At the same time, biologically plausible local rules ha ve been postulated rather than deriv ed from a principled cost function. Here, to bridge this gap, we deriv e a biologically plausible network for sub- space learning on streaming data by minimizing a principled cost function. In a depar- ture from previous work, where cost was quantified by the representation, or reconstruc- tion, error , we adopt a multidimensional scaling (MDS) cost function for streaming data. The resulting algorithm relies only on biologically plausible Hebbian and anti-Hebbian local learning rules. In a stochastic setting, synaptic weights con ver ge to a stationary state which projects the input data onto the principal subspace. If the data are generated by a nonstationary distrib ution, the network can track the principal subspace. Thus, our result makes a step to wards an algorithmic theory of neural computation. 1 Intr oduction Early sensory processing reduces the dimensionality of streamed inputs (Hyv ¨ arinen et al., 2009) as e videnced by a high ratio of input to output nerve fiber counts (Shep- herd, 2003). For example, in the human retina, information gathered by ≈ 125 million photoreceptors is con ve yed to the Lateral Geniculate Nucleus through ≈ 1 million gan- glion cells (Hubel, 1995). By learning a lo wer-dimensional subspace and projecting the streamed data onto that subspace the nerv ous system de-noises and compresses the data simplifying further processing. Therefore, a biologically plausible implementation of dimensionality reduction may of fer a model of early sensory processing. For a single neuron, a biologically plausible implementation of dimensionality re- duction in the streaming, or online, setting has been proposed in the seminal work of (Oja, 1982), Figure 1A. At each time point, t , an input vector , x t , is presented to the neuron, and, in response, it computes a scalar output, y t = wx t , were w is a ro w-vector of input synaptic weights. Furthermore, synaptic weights w are updated according to a version of Hebbian learning called Oja’ s rule: w ← w + η y t ( x > t − w y t ) , (1) where η is a learning rate and > designates a transpose. Then, the neuron’ s synap- tic weight vector con ver ges to the principal eigen vector of the co variance matrix of 2 the streamed data (Oja, 1982). Importantly , Oja’ s learning rule is local meaning that synaptic weight updates depend on the activities of only pre- and postsynaptic neurons accessible to each synapse and, therefore, biologically plausible. Oja’ s rule can be deriv ed by an approximate gradient descent of the mean squared representation error (Cichocki and Amari, 2002; Y ang, 1995), a so-called synthesis vie w of principal component analysis (PCA) (Pearson, 1901; Preisendorfer and Mobley, 1988): min w X t x t − w > wx t 2 2 . (2) Computing principal components beyond the first requires more than one output neuron and motiv ated numerous neural networks. Some well-kno wn examples are the Generalized Hebbian Algorithm (GHA) (Sanger, 1989), F ¨ oldiak’ s network (F ¨ oldiak, 1989), the subspace network (Karhunen and Oja, 1982), Rubner’ s network (Rubner and T av an, 1989; Rubner and Schulten, 1990), Leen’ s minimal coupling and full coupling networks (Leen, 1990, 1991) and the APEX network (Kung and Diamantaras, 1990; Kung et al., 1994). W e refer to (Becker and Plumbley, 1996; Diamantaras and Kung, 1996; Diamantaras, 2002) for a detailed re vie w of these and further dev elopments. Ho we ver , none of the previous contributions was able to deriv e a multineuronal single-layer network with local learning rules by minimizing a principled cost function, in a way that Oja’ s rule (1) was derived for a single neuron. The GHA and the sub- space rules rely on nonlocal learning rules: feedforward synaptic updates depend on other neurons’ synaptic weights and acti vities. Leen’ s minimal network is also nonlo- cal: feedforward synaptic updates of a neuron depend on its lateral synaptic weights. While F ¨ oldiak’ s, Rubner’ s and Leen’ s full coupling networks use local Hebbian and 3 anti-Hebbian rules, they were postulated rather than deriv ed from a principled cost function. APEX network, perhaps, comes closest to our criterion: the rule for each neuron can be related separately to a cost function which includes contributions from other neurons. But no cost function describes all the neurons combined. At the same time, numerous dimensionality reduction algorithms have been de- veloped for data analysis needs disregarding the biological plausibility requirement. Perhaps the most common approach is again PCA, which was originally de veloped for batch processing (Pearson, 1901) but later adapted to streaming data (Y ang, 1995; Crammer, 2006; Arora et al., 2012; Goes et al., 2014). For a more detailed collec- tion of references, see e.g. (Balzano, 2012). These algorithms typically minimize the representation error cost function: min F X − F > FX 2 F , (3) where X is a data matrix and F is a wide matrix (for detailed notation, see below). The minimum of (3) is when rows of F are orthonormal and span the m -dimensional principal subspace, and therefore F > F is the projection matrix to the subspace (Y ang, 1995) 1 . A gradient descent minimization of such cost function can be approximately imple- mented by the subspace network (Y ang, 1995), which, as pointed out above, requires nonlocal learning rules. While this algorithm can be implemented in a neural network using local learning rules, it requires a second layer of neurons (Oja, 1992), making it 1 Recall that, in general, the projection matrix to the row space of a matrix P is gi ven by P > PP > − 1 P , provided PP > is full rank (Plumbley, 1995). If the rows of P are orthonormal this reduces to P > P . 4 less appealing. In this paper , we deri ve a single-layer netw ork with local Hebbian and anti-Hebbian learning rules, similar in architecture to F ¨ oldiak’ s (F ¨ oldiak, 1989) (see Figure 1B), from a principled cost function and demonstrate that it recovers a principal subspace from streaming data. The nov elty of our approach is that, rather than starting with the rep- resentation error cost function traditionally used for dimensionality reduction, such as PCA, we use the cost function of classical multidimensional scaling (CMDS), a member of the family of multidimensional scaling (MDS) methods (Cox and Cox, 2000; Mar- dia et al., 1980). Whereas the connection between CMDS and PCA has been pointed out pre viously (W illiams, 2001; Cox and Cox, 2000; Mardia et al., 1980), CMDS is typically performed in the batch setting. Instead, we de veloped a neural network imple- mentation of CMDS for streaming data. The rest of the paper is org anized as follows. In Section 2, by minimizing the CMDS cost function we deriv e two online algorithms implementable by a single-layer network, with synchronous and asynchronous synaptic weight updates. In Section 3, we demonstrate analytically that synaptic weights define a principal subspace whose dimension m is determined by the number of output neurons and that the stability of the solution requires that this subspace corresponds to top m principal components. In Section 4, we show numerically that our algorithm recov ers the principal subspace of a synthetic dataset, and does it faster than the existing algorithms. Finally , in Section 5, we consider the case when data are generated by a nonstationary distribution and present a generalization of our algorithm to principal subspace tracking. 5 x 1 x n . . . y 1 y m anti-Hebbian -M A B W . . . x 2 x 1 x n . . . y w x 2 Hebbian Figure 1: An Oja neuron and our neural network. A . A single Oja neuron computes the principal component, y , of the input data, x , if its synaptic weights follo w Hebbian updates. B . A multineuron network computes the principal subspace of the input if the feedforward connection weight updates follo w a Hebbian and the lateral connection weight updates follo w an anti-Hebbian rule. 2 Deriv ation of online algorithms from the CMDS cost function CMDS represents high-dimensional input data in a lower -dimensional output space while preserving pairwise similarities between samples 2 (Y oung and Householder, 1938; T orgerson, 1952). Let T centered input data samples in R n be represented by column- vectors x t =1 ,...,T concatenated into an n × T matrix X = [ x 1 , . . . , x T ] . The correspond- ing output representations in R m , m ≤ n , are column-vectors, y t =1 ,...,T , concatenated into an m × T dimensional matrix Y = [ y 1 , . . . , y T ] . Similarities between vectors in Euclidean spaces are captured by their inner products. For the input (output) data, such 2 Whereas MDS in general starts with dissimilarities between samples that may not li ve in Euclidean geometry , in CMDS data are assumed to ha ve a Euclidean representa- tion. 6 inner products are assembled into a T × T Gram matrix 3 X > X ( Y > Y ). For a gi ven X , CMDS finds Y by minimizing the so-called “strain” cost function (Carroll and Chang, 1972) : min Y X > X − Y > Y 2 F . (4) For discov ering a lo w-dimensional subspace, the CMDS cost function (4) is a viable alternati ve to the representation error cost function (3) because its solution is related to PCA (W illiams, 2001; Cox and Cox, 2000; Mardia et al., 1980). Specifically , Y is the linear projection of X onto the (principal sub-)space spanned by m principal eigen vec- tors of the sample cov ariance matrix C T = 1 T P T t =1 x t x > t = XX > . The CMDS cost function defines a subspace rather than indi vidual eigen vectors because left orthogonal rotations of an optimal Y stay in the subspace and are also optimal, as is e vident from the symmetry of the cost function. In order to reduce the dimensionality of streaming data, we minimize the CMDS cost function (4) in the stochastic online setting. At time T , a data sample, x T , drawn independently from a zero-mean distrib ution is presented to the algorithm which com- putes a corresponding output, y T prior to the presentation of the ne xt data sample. Whereas in the batch setting, each data sample affects all outputs, in the online setting, past outputs cannot be altered. Thus, at time T the algorithm minimizes the cost de- pending on all inputs and ouputs up to time T with respect to y T while keeping all the 3 When input data are pairwise Euclidean distances, assembled into a matrix Q , the Gram matrix, X > X , can be constructed from Q by HZH , where Z ij = − 1 / 2 Q 2 ij , H = I n − 1 /n 11 > is the centering matrix, and I n is the n dimensional identity matrix (Cox and Cox, 2000; Mardia et al., 1980). 7 pre vious outputs fixed: y T = arg min y T X > X − Y > Y 2 F = arg min y T T X t =1 T X t 0 =1 x > t x t 0 − y > t y t 0 2 , (5) where the last equality follo ws from the definition of the Frobenius norm. By keeping only the terms that depend on current output y T we get: y T = arg min y T " − 4 x > T T − 1 X t =1 x t y > t ! y T + 2 y > T T − 1 X t =1 y t y > t ! y T − 2 k x T k 2 k y T k 2 + k y T k 4 # . (6) In the large- T limit, expression (6) simplifies further because the first two terms gro w linearly with T , and therefore dominate ov er the last two. After dropping the last two terms we arri ve at: y T = arg min y T " − 4 x > T T − 1 X t =1 x t y > t ! y T + 2 y > T T − 1 X t =1 y t y > t ! y T # . (7) W e term the cost in e xpression (7) the “online CMDS cost”. Because the online CMDS cost is a positi ve semi-definite quadratic form in y T for suf ficiently lar ge T , this optimization problem is con vex. While it admits a closed-form analytical solution via matrix in version, we are interested in biologically plausible algorithms. Next, we consider two algorithms that can be mapped onto single-layer neural networks with local learning rules: coordinate descent leading to asynchronous updates and Jacobi iteration leading to synchronous updates. 2.1 A neural network with asynchr onous updates The online CMDS cost function (7) can be minimized by coordinate descent which at e very step finds the optimal v alue of one component of y T while keeping the rest fix ed. 8 The components can be cycled through in an y order until the iteration con ver ges to a fixed point. Such iteration is guaranteed to con ver ge under very mild assumptions: diagonals of T − 1 P t =1 y t y > t has to be positiv e (Luo and Tseng, 1991), meaning that each output coordinate has produced at least one non-zero output before current time step T . This condition is almost always satisfied in practice. The cost to be minimized at each coordinate descent step with respect to i th chan- nel’ s acti vity is: y T ,i = arg min y T ,i " − 4 x > T T − 1 X t =1 x t y > t ! y T + 2 y > T T − 1 X t =1 y t y > t ! y T # . K eeping only those terms that depend on y T ,i yields: y T ,i = arg min y T ,i " − 4 X k x T ,k T − 1 X t =1 x t,k y t,i ! y T ,i +4 X j 6 = i y T ,j T − 1 X t =1 y t,j y t,i ! y T ,i + 2 T − 1 X t =1 y 2 t,i ! y 2 T ,i # . By taking a deri v ativ e with respect to y T ,i and setting it to zero we arrive at the following closed-form solution: y T ,i = P k T − 1 P t =1 y t,i x t,k x T ,k T − 1 P t =1 y 2 t,i − P j 6 = i T − 1 P t =1 y t,i y t,j y T ,j T − 1 P t =1 y 2 t,i . (8) T o implement this algorithm in a neural network we denote normalized input-output and output-output cov ariances, W T ,ik = T − 1 P t =1 y t,i x t,k T − 1 P t =1 y 2 t,i , M T ,i,j 6 = i = T − 1 P t =1 y t,i y t,j T − 1 P t =1 y 2 t,i , M T ,ii = 0 , (9) allo wing us to re write the solution (8) in a form suggestiv e of a linear neural network: y T ,i ← n X j =1 W T ,ij x T ,j − m X j =1 M T ,ij y T ,j , (10) 9 where W T and M T represent the synaptic weights of feedforward and lateral connec- tions respecti vely , Figure 1B. Finally , to formulate a fully online algorithm we rewrite (9) in a recursiv e form. This requires introducing a scalar v ariable D T ,i representing cumulative acti vity of a neuron i up to time T − 1 , D T ,i = T − 1 X t =1 y 2 t,i , (11) Then, at each time point, T , after the output y T is computed by the network, the fol- lo wing updates are performed: D T +1 ,i ← D T ,i + y 2 T ,i W T +1 ,ij ← W T ,ij + y T ,i ( x T ,j − W T ,ij y T ,i ) /D T +1 ,i M T +1 ,i,j 6 = i ← M T ,ij + y T ,i y T ,j − M T ,ij y T ,i /D T +1 ,i . (12) Equations (10) and (12) define a neural network algorithm that minimizes the on- line CMDS cost function (7) for streaming data by alternating between two phases: neural acti vity dynamics and synaptic updates. After a data sample is presented at time T , in the neuronal activity phase, neuron activities are updated one-by-one, i.e. asyn- chronously , (10) until the dynamics con ver ges to a fixed point defined by the following equation: y T = W T x T − M T y T = ⇒ y T = ( I m + M T ) − 1 W T x T , (13) where I m is the m -dimensional identity matrix. In the second phase of the algorithm, synaptic weights are updated, according to a local Hebbian rule (12) for feedforward connections, and according to a local anti- 10 Hebbian rule (due to the ( − ) sign in equation (10)) for lateral connections. Interest- ingly , these updates hav e the same form as the single-neuron Oja’ s rule (1) (Oja, 1982), except that the learning rate is not a free parameter but is determined by the cumulati ve neuronal acti vity 1 /D T +1 ,i 4 . T o the best of our kno wledge such single-neuron rule (Hu et al., 2013) has not been deriv ed in the multineuron case. An alternati ve deriv ation of this algorithm is presented in Appendix A.1 Unlike the representation error cost function (3), the CMDS cost function (4) is formulated only in terms of input and output activity . Y et, the minimization with respect to Y recov ers feedforward and lateral synaptic weights. 2.2 A neural network with synchr onous updates Here, we present an alternati ve way to deriv e a neural network algorithm from the large- T limit of the online CMDS cost function (7) . By taking a deri vati ve with respect to y T and setting it to zero we arri ve at the follo wing linear matrix equation: T − 1 X t =1 y t y > t ! y T = T − 1 X t =1 y t x > t ! x T , (14) W e solve this system of equations using Jacobi iteration (Strang, 2009), by first split- ting the output cov ariance matrix that appears on the left side of (14) into its diagonal 4 The single neuron Oja’ s rule deri ved from the minimization of a least squares opti- mization cost function ends up with the identical learning rate (Diamantaras, 2002; Hu et al., 2013). Motiv ated by this fact, such learning rate has been argued to be optimal for the APEX network (Diamantaras and K ung, 1996; Diamantaras, 2002) and used by others (Y ang, 1995). 11 component D T and the remainder R T : T − 1 X t =1 y t y > t ! = D T + R T , where the i th diagonal element of D T , D T ,i = P T − 1 t =1 y 2 t,i , as defined in (11). Then, (14) is equi v alent to: y T = D − 1 T T − 1 X t =1 y t x > t ! x T − D − 1 T R T y T . Interestingly , the matrices obtained on the right side are algebraically equiv alent to the feedforward and lateral synaptic weight matrices defined in (9): W T = D − 1 T T − 1 X t =1 y t x > t ! and M T = D − 1 T R T . (15) Hence, the Jacobi iteration for solving (14) y T ← W T x T − M T y T . (16) con ver ges to the same fixed point as the coordinate descent, (13). Iteration (16) is naturally implemented by the same single-layer linear neural net- work as for the asynchronous update, Figure 1B. F or each stimulus presentation the network goes through two phases. In the first phase, iteration (16) is repeated until con- ver gence. Unlike the coordinate descent algorithm which updated activity of neurons one after another , here, activities of all neurons are updated synchronously . In the sec- ond phase, synaptic weight matrices are updated according to the same rules as in the asynchronous update algorithm (12). Unlike the asynchronous update (10), for which con ver gence is almost alw ays guar- anteed (Luo and Tseng, 1991), con ver gence of iteration (16) is guaranteed only when 12 the spectral radius of M is less than 1 (Strang, 2009). Whereas we cannot prov e that this condition is alw ays met, in practice, the synchronous algorithm works well. While in the rest of the paper , we consider only the asynchronous updates algorithm, our results hold for the synchronous updates algorithm provided it con ver ges. 3 Stationary synaptic weights define a principal sub- space What is the nature of the lo wer dimensional representation found by our algorithm? In CMDS, outputs y T ,i are the Euclidean coordinates in the principal subspace of the input vector x T (Cox and Cox, 2000; Mardia et al., 1980). While our algorithm uses the same cost function as CMDS, the minimization is performed in the streaming, or online, setting. Therefore, we cannot take for granted that our algorithm will find the principal subspace of the input. In this section, we provide analytical evidence, by a stability analysis in a stochastic setting, that our algorithm extracts the principal subspace of the input data and projects onto that subspace. W e start by previe wing our results and method. Our algorithm performs a linear dimensinality reduction since the transformation between the input and the output is linear . This can be seen from the neural activity fixed point (13), which we re write as y T = F T x T , (17) 13 where F T is a matrix defined in terms of the synaptic weight matrices W T and M T : F T := ( I m + M T ) − 1 W T . (18) Relation (17) sho ws that the linear filter of a neuron, which we term a “neural filter”, is the corresponding ro w of F T . The space that neural filters span, the rowspace of F T , is termed a “filter space”. First, we pro ve that in the stationary state of our algorithm, neural filters are in- deed orthonormal vectors (section 3.2, Theorem 1). Second, we demonstrate that the orthonormal filters form a basis of a space spanned by some m eigen vectors of the co- v ariance of the inputs C (section 3.3, Theorem 2). Third, by analyzing linear perturba- tions around the stationary state, we find that stability requires these m eigen vectors to be the principal eigen vectors and, therefore, the filter space to be the principal subspace (section 3.4, Theorem 3). These results show that e ven though our algorithm was deri ved starting from the CMDS cost function (4), F T con ver ges to the optimal solution of the representation error cost function (3). This correspondence suggests that F > T F T is the algorithm’ s current estimate of the projection matrix to the principal subspace. Further , in (3), columns of F > are interpreted as data features. Then, columns of F > T , or neural filters, are the algorithm’ s estimate of such features. Rigorous stability analyses of PCA neural netw orks (Oja, 1982; Oja and Karhunen, 1985; Sanger, 1989; Oja, 1992; Hornik and K uan, 1992; Plumbley, 1995) typically use the ODE method (Kushner and Clark, 1978): Using a theorem of stochastic approxi- mation theory (Kushner and Clark, 1978), the con vergence properties of the algorithm 14 are determined using a corresponding deterministic dif ferential equation 5 . Unfortunately the ODE method cannot be used for our network. While the method requires learning rates that depend only on time, in our network learning rates ( 1 /D T +1 ,i ) are activity dependent. Therefore we take a different approach. W e directly work with the discrete-time system, assume con ver gence to a “stationary state”, to be defined be- lo w , and study the stability of the stationary state. 3.1 Pr eliminaries W e adopt a stochastic setting where the input to the network at each time point, x t , is an n -dimensional i.i.d. random vector with zero mea n, h x t i = 0 , where brackets denote an av erage over the input distrib ution, and cov ariance C = x t x > t . Our analysis is performed for the “stationary state” of synaptic weight updates, i.e. when averaged ov er the distribution of input values, the updates on W and M av erage to zero. This is the point of conv ergence of our algorithm. For the rest of the section, we drop the time index T to denote stationary state variables. The remaining dynamical variables, learning rates 1 /D T +1 ,i , keep decreasing at each time step due to neural acti vity . W e assume that the algorithm has run for a suffi- ciently long time such that the change in learning rate is small and it can be treated as a constant for a single update. Moreover , we assume that the algorithm con ver ges to a stationary point sufficiently fast such that the following approximation is valid at large 5 Application of stochastic approximation theory to PCA neural networks depends on a set of mathematical assumptions. See (Zufiria, 2002) for a critique of the v alidity of these assumptions and an alternati ve approach to stability analysis. 15 T : 1 D T +1 ,i = 1 P T t =1 y 2 t,i ≈ 1 T h y 2 i i , where y is calculated with stationary state weight matrices. W e collect these assumptions into a definition. Definition 1 (Stationary State) . In the stationary state, h ∆ W ij i = h ∆ M ij i = 0 , and 1 D i = 1 T h y 2 i i , with T lar ge. The stationary state assumption leads us to define various relations between synaptic weight matrices, summarized in the follo wing corollary: Corollary 1. In the stationary state, h y i x j i = y 2 i W ij , (19) and h y i y j i = y 2 i ( M ij + δ ij ) , (20) wher e δ ij is the Kr oneck er-delta. Pr oof. Stationarity assumption when applied to the update rule on W (12) leads imme- diately to (19). Stationarity assumption applied to the update rule on M (12) giv es: h y i y j i = y 2 i M ij , i 6 = j. 16 The last equality does not hold for i = j since diagonal elements of M are zero. T o cov er the case i = j , we add an identity matrix to M , and hence one reco vers (20). Remark. Note that (20) implies h y 2 i i M ij = y 2 j M j i , i.e. that lateral connection weights are not symmetrical. 3.2 Orthonormality of neural filters Here we prov e the orthonormality of neural filters in the stationary state. First, we need the follo wing lemma: Lemma 1. In the stationary state, the following equality holds: I m + M = WF > . (21) Pr oof. By (20), h y 2 i i ( M ik + δ ik ) = h y i y k i . Using y = Fx , we substitute for y k on the right hand side: h y 2 i i ( M ik + δ ik ) = P j F kj h y i x j i . Next, the stationarity condition (19) yields: h y 2 i i ( M ik + δ ik ) = h y 2 i i P j F kj W ij . Canceling h y 2 i i on both sides prov es the Lemma. No w we can prov e our theorem. Theorem 1. In the stationary state, neur al filters ar e orthonormal: FF > = I m . (22) Pr oof. First, we substitute for F (but not for F > ) its definition (18): FF > = ( I m + M ) − 1 WF > . Next, using Lemma 1, we substitute WF > by ( I m + M ) . The right hand side becomes ( I m + M ) − 1 ( I m + M ) = I m . Remark. Theorem 1 implies that rank( F ) = m . 17 3.3 Neural filters and their r elationship to the eigenspace of the co- variance matrix Ho w is the filter space related to the input? W e partially answer this question in Theo- rem 2, using the follo wing lemma: Lemma 2. In the stationary state, F > F and C commute: F > F C = CF > F . (23) Pr oof. See Appendix A.2. No w we can state our second theorem. Theorem 2. At the stationary state state, the filter space is an m -dimensional subspace in R n that is spanned by some m eigen vectors of the covariance matrix. Pr oof. Because F > F and C commute (Lemma 2), they must share the same eigenv ec- tors. Equation (22) of Theorem 1 implies that m eigen values of F > F are unity and the rest are zero. Eigen vectors associated with unit eigen values span the ro wspace of F 6 and are identical to some m eigen vectors of C . Which m eigen vectors of C span the filter space? T o sho w that these are the eigen- vectors corresponding to the lar gest eigen v alues of C , we perform a linear stability analysis around the stationary point and show that an y other combination would be unstable. 6 If this fact is not familiar to the reader , we recommend Strang’ s (Strang, 2009) discussion of Singular V alue Decomposition. 18 3.4 Linear stability r equires neural filters to span a principal sub- space The strate gy here is to perturb F from its equilibrium value and sho w that the perturba- tion is linearly stable only if the row space of F is the space spanned by the eigen vectors corresponding to the m highest eigen values of C . T o pro ve this result, we will need tw o more lemmas. Lemma 3. Let H be an m × n real matrix with orthonormal r ows and G is an ( n − m ) × n r eal matrix with orthonormal r ows, whose r ows ar e chosen to be ortho gonal to the r ows of H . Any n × m r eal matrix Q can be decomposed as: Q = A H + S H + B G , wher e A is an m × m ske w-symmetric matrix, S is an m × m symmetric matrix and B is an m × ( n − m ) matrix. Pr oof. Define B := Q G > , A := 1 2 Q H > − H Q > and S := 1 2 Q H > + H Q > . Then, A H + S H + B G = Q H > H + G > G = Q . Let’ s denote an arbitrary perturbation of F as δ F , where a small parameter is im- plied. W e can use Lemma 3 to decompose δ F as δ F = δ A F + δ S F + δ B G , (24) where the ro ws of G are orthogonal to the rows of F . Skew-symmetric δ A corresponds to rotations of filters within the filter space, i.e. it keeps neural filters orthonormal. Symmetric δ S k eeps the filter space in v ariant but destroys orthonormality . δ B is a perturbation that takes the neural filters outside of the filter space. 19 Next, we calculate ho w δ F ev olves under the learning rule, i.e. h ∆ δ F i . Lemma 4. A perturbation to the stationary state has the following evolution under the learning rule to linear or der in perturbation and linear or der in T − 1 : h ∆ δ F ij i = 1 T X k ( I m + M ) − 1 ik h y 2 k i " X l δ F kl C lj − X lpr δ F kl F rp C lp F rj − X lpr F kl δ F rp C lp F rj # − 1 T δ F ij . (25) Pr oof. Proof is provided in Appendix A.3. No w , we can state our main result in the following theorem: Theorem 3. The stationary state of neur onal filters F is stable, in lar ge- T limit, only if the m dimensional filter space is spanned by the eigen vectors of the covariance matrix corr esponding to the m highest eigen vectors. Pr oof Sketch. Full proof is giv en in Appendix A.4. Here we sketch the proof. T o simplify our analysis, we choose a specific G in Lemma 3 without losing gener - ality . Let v 1 ,...,n be eigen vectors of C and v 1 ,...,n be corresponding eigenv alues, labeled so that the first m eigen vectors span the ro w space of F (or filter space). W e choose ro ws of G to be the remaining eigen vectors, i.e. G 0 := [ v m +1 , . . . , v n ] . By extracting the e volution of components of δ F from (25) using (24), we are ready to state the conditions under which perturbations of F are stable. Mutlipying (25) on the right by G > gi ves the e volution of δ B : ∆ δ B j i = X k P j ik δ B j k where P j ik ≡ 1 T ( I m + M ) − 1 ik h y 2 k i v j + m − δ ik ! . 20 Here we changed our notation to δ B kj = δ B j k to make it e xplicit that for each j we hav e one matrix equation. These equations are stable when all eigen v alues of all P j are negati ve, which requires as sho wn in the Appendix A.4: v 1 , . . . , v m > v m +1 , . . . , v n . This result pro ves that the perturbation is stable only if the filter space is identical to the space spanned by eigen vectors corresponding to the m highest eigen values of C . It remains to analyze the stability of δ A and δ S perturbations. Multiplying (25) on the right by F > gi ves, h ∆ δ A ij i = 0 and h ∆ δ S ij i = − 2 T δ S ij . δ A perturbation, which rotates neural filters, does not decay . This behavior is inherently related to the discussed symmetry of the strain cost function (4) with respect to left rotations of the Y matrix. Rotated y v ectors are obtained from the input by rotated neural filters and hence δ A perturbation does not affect the cost. On the other hand, δ S destroys orthonormality and these perturbations do decay , making the orthonormal solution stable. T o summarize our analysis, if the dynamics con ver ges to a stationary state, neural filters form an orthonormal basis of the principal subspace. 4 Numerical simulations of the asynchr onous network Here, we simulate the performance of the network with asynchronous updates, (10) and (12), on synthetic data. The data were generated by a colored Gaussian process 21 with an arbitrarily chosen “actual” cov ariance matrix. W e choose the number of input channels, n = 64 , and the number of output channels, m = 4 . In the input data, the ratio of the power in first 4 principal components to the po wer in remaining 60 components was 0.54. W and M were initialized randomly , the step size of synaptic updates were initialized to 1 /D 0 ,i = 0 . 1 . Coordinate descent step is cycled ov er neurons until magnitude of change in y T in one cycle is less than 10 − 5 times the magnitude of y T . W e compared the performance of the asynchronous updates network, (10) and (12), with two pre viously proposed networks, APEX (Kung and Diamantaras, 1990; K ung et al., 1994) and F ¨ oldiak’ s (F ¨ oldiak, 1989), on the same dataset, Figure 2. APEX net- work uses the same Hebbian/anti-Hebbian learning rules for synaptic weights, but the architecture is slightly different in that the lateral connection matrix, M , is lower tri- angular . F ¨ oldiak’ s network has the same architecture as ours, Figure 1B, and the same learning rules for feedforward connections. Ho wev er , the learning rule for lateral con- nections is ∆ M ij ∝ y i y j , unlik e (12). For the sake of fairness, we applied the same adapti ve step size procedure for all networks. As in (12), the stepsize for each neuron i at time T was 1 /D T +1 ,i , with D T +1 ,i = D T ,i + y 2 T ,i . In fact, such learning rate has been recommended and argued to be optimal for the APEX network (Diamantaras and Kung, 1996; Diamantaras, 2002), see also footnote 4. T o quantify the performance of these algorithms, we used three different metrics. First is the strain cost function, (4), normalized by T 2 , Figure 2A. Such normalization is chosen because the minimum value of offline strain cost is equal to the power con- tained in the eigenmodes be yond the top m : T 2 P n k = m +1 v k 2 , where { v 1 , . . . , v n } are 22 eigen values of sample cov ariance matrix C T (Cox and Cox, 2000; Mardia et al., 1980). For each of the three networks, as expected, the strain cost rapidly drops to wards its lo wer bound. As our network was deri ved from the minimization of the strain cost function, it is not surprising that the cost drops faster than in the other two. Second metric quantifies the de viation of the learned subspace from the actual prin- cipal subspace. At each T , the de viation is F > T F T − V > V 2 F , where V is a m × n matrix whose rows are the principal eigen vectors, V > V is the projection matrix to the principal subspace, F T is defined the same way for APEX and F ¨ oldiak networks as ours and F > T F T is the learned estimate of the projection matrix to the principal subspace. Such deviation rapidly falls for each network confirming that all three algorithms learn the principal subspace, Figure 2B. Again, our algorithm extracts the principal subspace faster than the other two netw orks. Third metric measures the degree of non-orthonormality among the computed neu- ral filters. At each T : F T F > T − I m 2 F . Non-orthonormality error quickly drops for all networks, confirming that neural filters con ver ge to orthonormal vectors, Figure 2C. Y et again, our network orthonormalizes neural filters much faster than the other two networks. 5 Subspace tracking using a neural network with local lear ning rules W e ha ve demonstrated that our netw ork learns a linear subspace of streaming data gen- erated by a stationary distribution. But what if the data are generated by an e volving 23 normalized strain error (dB) subspace error (dB) non-orthonormality error (dB) T T T A B C 0 2500 5000 -2 -1 0 1 2 3 4 5 0 2500 5000 -15 -10 -5 0 5 10 0 2500 5000 -50 -40 -30 -20 -10 0 10 Földiak APEX Ours Figure 2: Performance of the asynchronous neural network compared with e xisting algorithms. Each algorithm was applied to 40 dif ferent random data sets drawn from the same Gaussian statistics, described in te xt. W eight initializations were random. Solid lines indicate means and shades indicate standard deviations across 40 runs. All errors are in decibells (dB). For formal metric definitions, see text. A. Strain error as a function of data presentations. Dotted line is the best error in batch setting, calculated using eigen values of the actual cov ariance matrix. B. Subspace error as a function of data presentations. C. Non-orthonormality error as a function of data presentations. 24 distribution and we need to track the corresponding linear subspace? Using the al- gorithm (12) would be suboptimal because the learning rate is adjusted to effecti vely “remember” the contribution of all the past data points. A natural way to track an e volving subspace is to “forget” the contribution of older data points (Y ang, 1995). In this Section, we deriv e an algorithm with “forgetting” from a principled cost function where errors in the similarity of old data points are discounted: y T = arg min y T T X t =1 T X t 0 =1 β 2 T − t − t 0 x > t x t 0 − y > t y t 0 2 . (26) where β is a discounting factor 0 ≤ β ≤ 1 with β = 1 corresponding to our original algorithm (5). The effecti ve time scale of “for getting” is: τ := − 1 / ln β . (27) By introducing a T × T -dimensional diagonal matrix β T with diagonal elements β T ,ii = β T − i we can re write (26) in a matrix notation: y T = arg min y T β > T X > X β T − β > T Y > Y β T 2 F . (28) A similar discounting was used in (Y ang, 1995) to deri ve subspace tracking algorithms from the representation error cost function, (3). T o deriv e an online algorithm to solv e (28) we follo w the same steps as before. By keeping only the terms that depend on current output y T we get: y T = arg min y T " − 4 x > T T − 1 X t =1 β 2( T − t ) x t y > t ! y T + 2 y > T T − 1 X t =1 β 2( T − t ) y t y > t ! y T − 2 k x T k 2 k y T k 2 + k y T k 4 . (29) 25 In (29), provided that past input-input and input-output outer products are not for gotten for a sufficiently long time, i.e. τ >> 1 , the first two terms dominate over the last two for large T . After dropping the last two terms we arri ve at: y T = arg min y T " − 4 x > T T − 1 X t =1 β 2( T − t ) x t y > t ! y T + 2 y > T T − 1 X t =1 β 2( T − t ) y t y > t ! y T # . (30) As in the non-discounted case, minimization of the discounted online CMDS cost function by coordinate descent (30) leads to a neural network with asynchronous up- dates, y T ,i ← n X j =1 W β T ,ij x T ,j − m X j =1 M β T ,ij y T ,j , (31) and by a Jacobi iteration - to a neural network with synchronous updates, y T ← W β T x T − M β T y T , (32) with synaptic weight matrices in both cases gi ven by: W β T ,ij = T − 1 P t =1 β 2( T − t ) y t,i x t,j T − 1 P t =1 β 2( T − t ) y 2 t,i , M β T ,i,j 6 = i = T − 1 P t =1 β 2( T − t ) y t,i y t,j T − 1 P t =1 β 2( T − t ) y 2 t,i , M β T ,ii = 0 . (33) Finally , we rewrite (33) in a recursiv e form. As before, we introduce a scalar vari- able D β T ,i representing the discounted cumulati ve activity of a neuron i up to time T − 1 , D β T ,i = T − 1 X t =1 β 2( T − t − 1) y 2 t,i . (34) Then, the recursi ve updates are: D β T +1 ,i ← β 2 D β T ,i + y 2 T ,i W β T +1 ,ij ← W β T ,ij + y T ,i x T ,j − W β T ,ij y T ,i /D β T +1 ,i M β T +1 ,i,j 6 = i ← M β T ,ij + y T ,i y T ,j − M β T ,ij y T ,i /D β T +1 ,i . (35) 26 These updates are local and almost identical to the original updates (12) except the D β T +1 ,i update, where the past cumulati ve acti vity is discounted by β 2 . For suitably chosen β , the learning rate, 1 /D β T +1 ,i , stays suf ficiently large e ven at large- T , allo wing the algorithm to react to changes in data statistics. As before, we hav e a two-phase algorithm for minimizing the discounted online CMDS cost function (30). For each data presentation, first the neural network dynamics is run using either (31) or (32) until the dynamics con verges to a fixed point. In the second step, synaptic weights are updated using (35). In Figure 3, we present the results of a numerical simulation of our subspace track- ing algorithm with asynchronous updates similar to that in Section 4 b ut for non- stationary synthetic data. The data are dra wn from two dif ferent Gaussian distrib u- tions: from T = 1 to T = 2500 - with cov ariance C 1 , and from T = 2501 to T = 5000 - with cov ariance C 2 . W e ran our algorithm with 4 different β factors, β = 0 . 998 , 0 . 995 , 0 . 99 , 0 . 98 ( τ = 499 . 5 , 199 . 5 , 99 . 5 , 49 . 5 ). W e ev aluate the subspace tracking performance of the algorithm using a modifica- tion of the subspace error metric introduced in Section 4. From T = 1 to T = 2500 the error is F > T F T − V > 1 V 1 2 F , where V 1 is a m × n matrix whose ro ws are the principal eigen vectors of C 1 . From T = 2501 to T = 5000 the error is F > T F T − V > 2 V 2 2 F , where V 2 is a m × n matrix whose ro ws are the principal eigen vectors of C 2 . Figure 3A plots this modified subspace error . Initially , the subspace error decreases, reach- ing lower values with higher β . Higher β allo ws for smaller learning rates allowing a fine-tuning of the neural filters and hence lower error . At T = 2501 , a sudden jump is observ ed corresponding to the change in principal subspace. The network rapidly 27 corrects its neural filters to project to the new principal subspace and the error falls to before jump values. It is interesting to note that higher β no w leads to a slower decay due to extended memory in the past. W e also quantify the degree of non-orthonormality of neural filters using the non- orthonormality error defined in Section 4. Initially , the non-orthonormality error de- creases, reaching lo wer v alues with higher β . Again, higher β allows for smaller learn- ing rates allowing a fine-tuning of the neural filters. At T = 2501 , an increase in orthonormality error is observed as the network is adjusting its neural filters. Then, the error falls to before change values, with higher β leading to a slower decay due to extended memory in the past. 6 Discussion In this paper , we made a step to wards a mathematically rigorous model of neuronal di- mensionality reduction satisfying more biological constraints than was pre viously pos- sible. By starting with the CMDS cost function (4), we deriv ed a single-layer neural network of linear units using only local learning rules. Using a local stability analysis, we sho wed that our algorithm finds a set of orthonormal neural filters and projects the input data stream to its principal subspace. W e showed that with a small modification in learning rate updates, the same algorithm performs subspace tracking. Our algorithm finds the principal subspace, but not necessarily the principal com- ponents themselves. This is not a weakness since both the representation error cost (3) and CMDS cost (4) are minimized by projections to principal subspace and finding the 28 Figure 3: Performance of the subspace tracking asynchronous neural network with nonstationary data. The algorithm with different β factors was applied to 40 dif ferent random data sets drawn from the same nonstationary statistics, described in text. W eight initializations were random. Solid lines indicate means and shades indicate standard de viations. All errors are in decibells (dB). For formal metric definitions, see text. A. Subspace error as a function of data presentations. B. Non-orthonormality error as a function of data presentations. 29 principal components is not necessary . Our network is most similar to F ¨ oldiak’ s network (F ¨ oldiak, 1989), which learns feedforward weights by a Hebbian Oja rule and the all-to-all lateral weights by an anti- Hebbian rule. Y et, the functional form of the anti-Hebbian learning rule in F ¨ oldiak’ s network, ∆ M ij ∝ y i y j , is dif ferent from ours (12) resulting in the following interest- ing dif ferences: 1) Because the synaptic weight update rules in F ¨ oldiak’ s network are symmetric, if the weights are initialized symmetric, i.e. M ij = M j i , and learning rates are identical for lateral weights, they will stay symmetric. As mentioned abov e, such symmetry does not exist in our network ((12) and (20)). 2) While in F ¨ oldiak’ s network neural filters need not be orthonormal (F ¨ oldiak, 1989; Leen, 1991), in our network the y will be (Theorem 1). 3) In F ¨ oldiak’ s network output units are decorrelated (F ¨ oldiak, 1989), since in its stationary state h y i y j i = 0 . This need not be true in our network. Y et, correlations among output units do not necessarily mean that information in the output about the input is reduced 7 . Our network is similar to the APEX network (Kung and Diamantaras, 1990) in the 7 As pointed before (Linsker, 1988; Plumble y, 1993, 1995; Kung, 2014), PCA max- imizes mutual information between a Gaussian input, x , and an output, y = Fx , such that ro ws of F have unit norms. When ro ws of F are principal eigen vectors, outputs are principal components and are uncorrelated. Ho we ver , the output can be multiplied by a rotation matrix, Q , and mutual information is unchanged, y 0 = Qy = QFx . y 0 is now a correlated Gaussian and QF still has rows with unit norms. Therefore, one can hav e correlated outputs with maximal mutual information between input and output, as long as ro ws of F span the principal subspace. 30 functional form of both the feedforward and the lateral weights. Ho wev er the network architecture is different because the APEX network has a lower -triangular lateral con- necti vity matrix. Such difference in architecture leads to two interesting differences in the APEX network operation (Diamantaras and Kung, 1996): 1) The outputs con verge to the principal components. 2) Lateral weights decay to zero and neural filters are the feedforward weights. In our network lateral weights do not hav e to decay to zero and neural filters depend on both the feedforward and lateral weights (18). In numerical simulations, we observed that our network is faster than F ¨ oldiak’ s and APEX networks in minimizing the strain error , finding the principal subspace and orthonormalizing neural filters. This result demonstrates the advantage of our principled approach compared to heuristic learning rules. Our choice of coordinate descent to minimize the cost function in the acti vity dy- namics phase allowed us to circumvent problems associated with matrix in version: y ← ( I m + M ) − 1 Wx . Matrix in v ersion causes problems for neural network implemen- tations because it is a non-local operation. In the absence of a cost function, F ¨ oldiak suggested to implement matrix in version by iterating y ← Wx − My until con ver - gence (F ¨ oldiak, 1989). W e deri ved a similar algorithm using Jacobi iteration. Ho we ver , in general, such iterativ e schemes are not guaranteed to con ver ge (Hornik and Kuan, 1992). Our coordinate descent algorithm is almost always guaranteed to con verge be- cause the cost function in the activity dynamics phase (7) meets the criteria in (Luo and Tseng, 1991). Unfortunately , our treatment still suf fers from the problem common to most other biologically plausible neural networks (Hornik and Kuan, 1992): a complete global 31 con ver gence analysis of synaptic weights is not yet av ailable. Our stability analysis is local in the sense that it starts by assuming that the synaptic weight dynamics has reached a stationary state and then prov es that perturbations around the stationary state are stable. W e hav e not made a theoretical statement on whether this state can e ver be reached or how fast such a state can be reached. Global con ver gence results us- ing stochastic approximation theory are av ailable for the single-neuron Oja rule (Oja and Karhunen, 1985), its nonlocal generalizations (Plumble y, 1995) and the APEX rule (Diamantaras and Kung, 1996), howe ver applicability of stochastic approximation the- ory was questioned recently (Zufiria, 2002). Even though a neural network implemen- tation is unknown, W armuth & Kuzmin’ s online PCA algorithm stands out as the only algorithm for which a re gret bound has been pro ved (W armuth and Kuzmin, 2008). An asymptotic dependence of regret on time can also be interpreted as con ver gence speed. This paper also contrib utes to MDS literature by applying CMDS method to stream- ing data. Howe ver , our method has limitations in that to deri ve neural algorithms we used the strain cost (4) of CMDS. Such cost is formulated in terms of similarities, inner products to be exact, between pairs of data v ectors and allo wed us to consider a stream- ing setting where a data vector is re vealed at a time. In the most general formulation of MDS pairwise dissimilarities between data instances are giv en rather than data vectors themselves or similarities between them (Cox and Cox, 2000; Mardia et al., 1980). This generates two immediate problems for a generalization of our approach: 1) A mapping to the strain cost function (4) is only possible if the dissimilarites are Euclidean dis- tances (footnote 3). In general, dissimilarities do not need be Euclidean or e ven metric distances (Cox and Cox, 2000; Mardia et al., 1980) and one cannot start from the strain 32 cost (4) for deri v ation of a neural algorithm. 2) In the streaming version of the general MDS setting, at each step, dissimilarities between the current and all past data instances are rev ealed, unlike our approach where the data vector itself is re vealed. It is a chal- lenging problem for future studies to find neural implementations in such generalized setting. The online CMDS cost functions (7) and (30) should be valuable for subspace learn- ing and tracking applications where biological plausibility is not a necessity . Minimiza- tion of such cost functions could be performed much more ef ficiently in the absence of constraints imposed by biology 8 . It remains to be seen how the algorithms presented in this paper and their generalizations compare to state-of-the-art online subspace tracking algorithms from machine learning literature (Cichocki and Amari, 2002). Finally , we believ e that formulating the cost function in terms of similarities sup- ports the possibility of representation in variant computations in neural netw orks. Acknowledgments W e are grateful to L. Greengard, S. Seung and M. W armuth for helpful discussions. 8 For example, matrix equation (14) could be solved by a conjugate gradient descent method instead of iterativ e methods. Matrices that keep input-input and output-output correlations in (14) can be calculated recursi vely , leading to a truly online method 33 A A ppendix A.1 Alternati ve derivation of an asynchr onous network Here, we solve the system of equations (14) iterativ ely (Strang, 2009). First, we split the output cov ariance matrix that appears on the left-hand side of (14) into its diagonal component D T , a strictly upper triangular matrix U T and a strictly lower triangular matrix L T : T − 1 X t =1 y t y > t = D T + U T + L T . (36) Substituting this into (14) we get: ( D T + ω L T ) y T = ((1 − ω ) D T − ω U T ) y T + ω T − 1 X t =1 y t x > t ! x T , (37) where ω is a parameter . W e solve (14) by iterating y T ← − ( D T + ω L T ) − 1 " ((1 − ω ) D T − ω U T ) y T + ω T − 1 X t =1 y t x > t ! x T # , (38) until con ver gence. If symmetric T − 1 P t =1 y t y > t is positi ve definite, the con ver gence is guar- anteed for 0 < ω < 2 by the Ostro wski-Reich theorem (Reich, 1949; Ostro wski, 1954). When ω = 1 the iteration (38) corresponds to the Gauss-Seidel method and when ω > 1 - to the succesiv e overrelaxation method. The choice of ω for fastest con vergence de- pends on the problem, and we will not e xplore this question here. Howe ver , v alues around 1.9 are generally recommended (Strang, 2009). Because in (37) the matrix multiplying y T on the left is lower triangular and on the right is upper triangular , the iteration (38) can be performed component-by-component 34 (Strang, 2009): y T ,i ← − (1 − ω ) y T ,i + ω P k T − 1 P t =1 y t,i x t,k x T ,k T − 1 P t =1 y 2 t,i − ω P j 6 = i T − 1 P t =1 y t,i y t,j y T ,j T − 1 P t =1 y 2 t,i . (39) Note that y T ,i is replaced with its ne w v alue before moving to the ne xt component. This algorithm can be implemented in a neural network y T ,i ← (1 − ω ) y T ,i + ω n X j =1 W T ,ij x T ,j − ω m X j =1 M T ,ij y T ,j , (40) where W T and M T , as defined in (9), represent the synaptic weights of feedforward and lateral connections respecti vely . The case of ω < 1 can be implemented by a leaky integrator neuron. The ω = 1 case corresponds to our original asynchronous algorithm, except that no w updates are performed in a particular order . For the ω > 1 case, which may con ver ge faster , we do not see a biologically plausible implementation since it requires self-inhibition. Finally , to express the algorithm in a fully online form we re write (9) via recursiv e updates, resulting in (12). 35 A.2 Pr oof of Lemma 2 Pr oof of Lemma 2. In our deriv ation belo w , we use results from equations (18), (19) and (20) of the main text. F > F C ij = X kl F ki F kl h x l x j i = X k F ki h y k x j i (from (18)) = X k F ki y 2 k W kj (from (19)) = X kp F ki y 2 k ( M kp + δ kp ) F pj (from (18)) = X kp F ki y 2 p ( M pk + δ pk ) F pj (from (20)) = X p W pi y 2 p F pj (from (18)) = X p h y p x i i F pj (from (19)) = X pk F pk h x k x i i F pj = X pk h x i x k i F pk F pj = CF > F ij . (from (18)) A.3 Pr oof of Lemma 4 Here we calculate ho w δ F e volv es under the learning rule, i.e. h ∆ δ F i and deri ve equa- tion (25). First, we introduce some new notation to simplify our expressions. W e define lateral synaptic weight matrix M with diagonals set to 1 as ˆ M := I m + M . (41) 36 W e use ˜ to denote perturbed matrices ˜ F := F + δ F , ˜ W := W + δ W , ˜ M := M + δ M , ˆ ˜ M := I + ˜ M = ˆ M + δ M . (42) Note that when the network is run with these perturbed synaptic matrices, for input x , the network dynamics will settle to the fix ed point ˜ y = ˆ ˜ M − 1 ˜ Wx = ˜ Fx , (43) which is dif ferent from the fixed point of the stationary netw ork, y = ˆ M − 1 Wx = Fx . No w we can prov e Lemma 4. Pr oof of Lemma 4. The proof goes in the follo wing steps. 1. Since our update rules are formulated in terms of W and M , it will be helpful to express δ F in terms of δ W and δ M . The definition of F , equation (18), gi ves us the desired relation: ( δ ˆ M ) F + ˆ M ( δ F ) = δ W . (44) 2. Next, we sho w that in the stationary state h ∆ δ F i = ˆ M − 1 ( h ∆ δ W i − h ∆ δ M i F ) + O 1 T 2 . (45) Pr oof. A verage changes due to synaptic updates on both sides of (44) are equal: D ∆ h ( δ ˆ M ) F + ˆ M ( δ F ) iE = h ∆ δ W i . Noting that the unperturbed matrices are sta- tionary , i.e. h ∆ M i = h ∆ F i = h ∆ W i = 0 , one gets h ∆ δ M i F + ˆ M h ∆ δ F i = h ∆ δ W i + O ( T − 2 ) , from which equation (45) follo ws. 37 3. Next step is to calculate h ∆ δ W i and h ∆ δ M i using the learning rule, in terms of matrices W , M , C , F and δ F and plug the result into (45). This manipulation is going to gi ve us the e volution of δ F equation, (25). First, h ∆ δ W i : h ∆ δ W ij i = D ∆ ˜ W ij E = 1 T h y 2 i i h ˜ y i x j i − ˜ y 2 i ˜ W ij = 1 T h y 2 i i X k ˜ F ik h x k x j i − X kl ˜ F ik ˜ F il h x k x l i ˜ W ij ! (from (43)) = 1 T h y 2 i i X k ˜ F ik C kj − X kl ˜ F ik ˜ F il C kl ˜ W ij ! = 1 T h y 2 i i X k F ik C kj − X kl F ik F il C kl W ij + X k δ F ik C kj − 2 X kl δ F ik F il C kl W ij − X kl F ik F il C kl δ W ij ! (from (42)) = 1 T h y 2 i i X k δ F ik C kj − 2 X kl δ F ik F il C kl W ij − X kl F ik F il C kl δ W ij ! . (from (19)) 38 Next we calculate h ∆ δ M i : h ∆ δ M ij i = D ∆ ˜ ˆ M ij E = 1 T h y 2 i i h ˜ y i ˜ y j i − ˜ y 2 i ˜ M ij − 1 D i δ ij ˜ y 2 i (last term sets ∆ ˜ ˆ M ii = 0 ) = 1 T h y 2 i i X kl ˜ F ik ˜ F j l h x k x l i − X kl ˜ F ik ˜ F il h x k x l i ˜ M ij − δ ij X kl ˜ F ik ˜ F il h x k x l i ! (from (43)) = 1 T h y 2 i i X kl ˜ F ik ˜ F j l C kl − X kl ˜ F ik ˜ F il C kl ˜ M ij − δ ij X kl ˜ F ik ˜ F il C kl ! = 1 T h y 2 i i X kl F ik F j l C kl − X kl F ik F il C kl M ij − δ ij X kl F ik F il C kl + X kl δ F ik F j l C kl + X kl F ik δ F j l C kl − 2 X kl δ F ik F il C kl M ij − X kl F ik F il C kl δ M ij − 2 δ ij X kl δ F ik F il C kl ! (from (42)) = 1 T h y 2 i i X kl δ F ik F j l C kl + X kl F ik δ F j l C kl − 2 X kl δ F ik F il C kl M ij − X kl F ik F il C kl δ M ij − 2 δ ij X kl δ F ik F il C kl ! . (from (20)) Plugging these in equation (45), we get h ∆ δ F ij i = X k ˆ M − 1 ik T h y 2 k i " X l δ F kl C lj − 2 X lp δ F kl F kp C lp W kj − X lp F kl F kp C lp δ W kj − X lpr δ F kl F rp C lp F rj − X lpr F kl δ F rp C lp F rj + 2 X lpr δ F kl F kp C lp M kr F rj + X lpr F kl F kp C lp δ M kr F rj +2 X lpr δ kr δ F kl F kp C lp F rj # + O 1 T 2 . M kr and δ M kr terms can be eliminated using the previously deriv ed relations (18) and (44). This leads to a cancellation of some of the terms giv en above, and finally 39 we hav e h ∆ δ F ij i = X k ˆ M − 1 ik T h y 2 k i " X l δ F kl C lj − X lpr δ F kl F rp C lp F rj − X lpr F kl δ F rp C lp F rj − X lpr F kl F kp C lp ˆ M kr δ F rj # + O 1 T 2 . T o proceed further , we note that: y 2 k = F CF > kk , (46) which allo ws us to simplify the last term. Then, we get our final result: h ∆ δ F ij i = 1 T X k ˆ M − 1 ik h y 2 k i " X l δ F kl C lj − X lpr δ F kl F rp C lp F rj − X lpr F kl δ F rp C lp F rj # − 1 T δ F ij + O 1 T 2 . A.4 Pr oof of Theorem 3 For ease of reference, we remind that in general δ F can be written as, δ F = δ A F + δ S F + δ B G . (24) Here, δ A is an m × m ske w symmetric matrix, δ S is an m × m symmetric matrix and δ B is an m × ( n − m ) matrix. G is an ( n − m ) × n matrix with orthonormal rows. These ro ws are chosen to be orthogonal to the ro ws of F . Let v 1 ,...,n be the eigen vectors C and v 1 ,...,n be the corresponding eigen values. W e label them such that F spans the same space as the space spanned by the first m eigen vectors. W e choose rows of G to be the remaining eigen vectors, i.e. G > := [ v m +1 , . . . , v n ] . Then, for future reference, F G > = 0 , GG > = I ( n − m ) , and X k C ik G > kj = X k C ik v j + m k = v j + m G > ij . (47) 40 W e also remind the definition: ˆ M := I m + M . (41) Pr oof of Theor em 3. Below , we discuss the conditions under which perturbations of F are stable. W e work to linear order in T − 1 as stated in Theorem 3. W e treat separately the e volution of δ A , δ S and δ B under a general perturbation δ F . 1. Stability of δ B 1.1 Ev olution of δ B is gi ven by: h ∆ δ B ij i = 1 T X k ˆ M − 1 ik h y 2 k i v j + m − δ ik ! δ B kj . (48) Pr oof. Starting from (24) and using (47): h ∆ δ B ij i = X k h ∆ δ F ik i G > kj = 1 T X k ˆ M − 1 ik h y 2 k i X lp δ F kl C lp G j p − 1 T δ B ij . Here the last line results from equation (47) applied to (25). Let’ s look at the first term again using (47) and then (24), 1 T X k ˆ M − 1 ik h y 2 k i X lp δ F kl C lp G j p = 1 T X k ˆ M − 1 ik h y 2 k i X l δ F kl v j + m G j l = 1 T X k ˆ M − 1 ik h y 2 k i v j + m δ B kj . Combining these gi ve (48). 1.2 When is (48) stable? Next, we sho w that stability requires v 1 , . . . , v m > v m +1 , . . . , v n . 41 For ease of manipulation, we express (48) as a matrix equation for each column of δ B . For con venience we change our notation to δ B kj = δ B j k ∆ δ B j i = X k P j ik δ B j k where P j ik ≡ 1 T O ik v j + m − δ ik , and O ik ≡ ˆ M − 1 ik h y 2 k i . W e ha ve one matrix equation for each j . These equations are stable if all eigen- v alues of all P j are negati ve. { eig ( P ) } < 0 = ⇒ { eig ( O ) } < 1 v j , j = m + 1 , . . . , n. = ⇒ { eig ( O − 1 ) } > v j , j = m + 1 , . . . , n. 1.3 If one could calculate eigen values of O − 1 , the stability condition can be articu- lated. W e start this calculation by noting that X k O ik h y k y j i = X k ˆ M − 1 ik h y k y j i h y 2 k i = X k ˆ M − 1 ik ˆ M kj = δ ij (from (20)) . (49) Therefore, O − 1 = yy > = F CF > . (50) Then, we need to calculate the eigen values of F CF > . They are: eig ( O − 1 ) = v 1 , . . . , v m . Pr oof. W e start with the eigen value equation. F CF > λ = λ λ 42 Multiply both sides by F > : F > F CF > λ = λ F > λ . Next, we use the commutation of F > F and C , (23), and the orthogonality of neural filters, FF > = I m , (22) to simplify the left hand side: F > F CF > λ = CF > FF > λ = C F > λ . This implies that C F > λ = λ F > λ . (51) Note that by orthogonality of neural filters, the follo wing is also true: F > F F > λ = F > λ . (52) All the relations above would hold true if λ = 0 and F > λ = 0 , but this would require F F > λ = λ = 0 , which is a contradiction. Then, (51) and (52) imply that F > λ is a shared eigen vector between C and F > F . F > F and C was sho wn to commute before and the y share a complete set of eigen vectors. Ho we ver , some n − m eigen vectors of C hav e zero eigen values in F > F . W e had labeled shared eigen vectors with unit eigen value in F > F to be v 1 , . . . , v m . The eigen v alue of F > λ with respect to F > F is 1, therefore F > λ is one of v 1 , . . . , v m . This prov es that λ = { v 1 , . . . , v m } and eig ( O − 1 ) = v 1 , . . . , v m . 43 1.4 From (50), it follo ws that for stability v 1 , . . . , v m > v m +1 , . . . , v n 2. Stability of δ A and δ S Next, we check stabilities of δ A and δ S . h ∆ δ A ij i + h ∆ δ S ij i = X k h ∆ δ F ik i F T kj (from definition (24)) = − 1 T X k ˆ M − 1 ik h y 2 k i X lm F kl δ F j m C lm − 1 T ( δ A ij + δ S ij ) = − 1 T X k ˆ M − 1 ik h y 2 k i X l F CF T kl δ A T lj + δ S T lj − ( δ A ij + δ S ij ) . (53) In deriving the last line, we used equations (24) and (47). The k summation was calculated before (49). Plugging this in (53), one gets h ∆ δ A ij i + h ∆ δ S ij i = − 1 T δ A ij + δ A T ij + δ S ij + δ S ij = − 2 T δ S ij = ⇒ h ∆ δ A ij i = 0 (from ske w symmetry of A ) = ⇒ h ∆ δ S ij i = − 2 T δ S ij . δ A perturbation, which rotates neural filters to other orthonormal basis within the principal subspace, does not decay . On the other hand, δ S destroys orthonormality and these perturbations do decay , making the orthonormal solution stable. Collecti vely , the results abov e prov e Theorem 3. 44 A.5 P erturbation of the stationary state due to data presentation Our discussion of the linear stability of the stationary point assumed general perturba- tions. Perturbations that arise from data presentation, δ F = ∆ F , (54) form a restricted class of the most general case, and hav e special consequences. Focus- ing on this case, we show that data presentations do not rotate the basis for extracted subspace in the stationary state. W e calculate perturbations within the extracted subspace. Using (24) and (47) δ A + δ S = δ F F > = ∆ F F > from (54) = ˆ M − 1 ∆ W − ∆ ˆ M F F > expand (18) to first order in ∆ = ˆ M − 1 ∆ W F > − ∆ ˆ M from (22) . (55) Let’ s look at ∆ W F > term more closely: ∆ W F > ij = X k η i y i x k − y 2 i W ik F > kj = η i y i X k F j k x k − y 2 i X k W ik F > kj ! = η i y i y k − y 2 i ˆ M ij = ∆ ˆ M ij . Plugging this back into (55) gi ves, δ A + δ S = 0 , = ⇒ δ A = 0 , & δ S = 0 , (56) 45 Therefore, perturbations that arise from data presentation do not rotate neural filter basis within the extracted subspace. This property should increase the stability of the neural filter basis within the extracted subspace. Refer ences Arora, R., Cotter , A., Li vescu, K., and Srebro, N. (2012). Stochastic optimization for pca and pls. Pr oceedings of the Allerton Confer ence on Communication, Contr ol, and Computing , pages 861–868. IEEE. Balzano, L. K. (2012) Handling missing data in high-dimensional subspace modeling (Doctoral dissertation, UNIVERSITY OF WISCONSIN–MADISON) Becker , S. and Plumble y , M. (1996). Unsupervised neural network learning procedures for feature extraction and classification. Appl Intell , 6(3):185–203. Carroll, J. and Chang, J. (1972). Idioscal (indi vidual dif ferences in orientation scaling): A generalization of indscal allo wing idiosyncratic reference systems as well as an analytic approximation to indscal. In Psychometric meeting , Princeton, NJ . Cichocki, A. and Amari, S.-I. (2002). Adaptive blind signal and image pr ocessing . John W iley Chichester . Cox, T . and Cox, M. (2000). Multidimensional scaling . CRC Press. Crammer , K. (2006). Online tracking of linear subspaces. In Learning Theory , pages 438–452. Springer . 46 Diamantaras, K. (2002). Neural networks and principal component analysis. Diamantaras, K. and Kung, S. (1996). Principal component neural networks: theory and applications . John W iley & Sons, Inc. F ¨ oldiak, P . (1989). Adapti ve network for optimal linear feature extraction. In Interna- tional J oint Confer ence on Neural Networks , pages 401–405. IEEE. Goes, J., Zhang, T ., Arora, R., and Lerman, G. (2014). Rob ust stochastic principal component analysis. Pr oceedings of the Seventeenth International Confer ence on Artificial Intelligence and Statistics (AIST AT) , pages 266–274. Hornik, K. and Kuan, C.-M. (1992). Con ver gence analysis of local feature extraction algorithms. Neural Networks , 5(2):229–240. Hu, T ., T owfic, Z., Pehle van, C., Genkin, A., and Chklovskii, D. (2013). A neuron as a signal processing device. Pr oceedings of the Asilomar Confer ence on Signals, Systems and Computers , pages 362–366. IEEE. Hubel, D. H. (1995). Eye, brain, and vision. Scientific American Library/Scientific American Books. Hyv ¨ arinen, A., Hurri, J., and Hoyer , P . O. (2009). Natural Imag e Statistics: A Pr oba- bilistic Appr oach to Early Computational V ision. , v olume 39. Springer . Karhunen, J. and Oja, E. (1982). Ne w methods for stochastic approximation of trun- cated karhunen-loe ve e xpansions. In Pr oc. 6th Int. Conf. on P attern Recognition , pages 550–553. 47 Kung, S. and Diamantaras, K. (1990). A neural network learning algorithm for adap- ti ve principal component extraction (apex). Pr oceedings of ICASSP , pages 861–864. IEEE. Kung, S.-Y ., Diamantaras, K., and T aur , J.-S. (1994). Adaptiv e principal component extraction (ape x) and applications. IEEE T Signal Pr oces , 42(5):1202–1217. Kung, S.-Y . (2014). Kernel Methods and Machine Learning . Cambridge Uni versity Press. Kushner H.J. and Clark D.S. (1978). Stochastic appr oximation methods for constrained and unconstrained systems . Springer . Leen, T . K. (1990). Dynamics of learning in recurrent feature-discov ery networks. Advances in Neural Information Pr ocessing Systems 3 , pages 70–76 Leen, T . K. (1991). Dynamics of learning in linear feature-discov ery networks. Net- work , 2(1):85–105. Linsker , R. (1988). Self-organization in a perceptual network. IEEE Computer , 21(3):105–117. Luo, Z. Q., and Tseng, P . (1991). On the con ver gence of a matrix splitting algorithm for the symmetric monotone linear complementarity problem. SIAM J Contr ol Optim , 29(5):1037-1060. Mardia, K., K ent, J., and Bibby , J. (1980). Multivariate analysis . Academic press. Oja, E. (1982). Simplified neuron model as a principal component analyzer . J Math Biol , 15(3):267–273. 48 Oja, E. (1992). Principal components, minor components, and linear neural networks. Neural Networks , 5(6):927–935. Oja, E. and Karhunen, J. (1985). On stochastic approximation of the eigen vectors and eigen values of the e xpectation of a random matrix. J Math Anal Appl , 106(1):69–84. Ostro wksi A.M. (1954). On the linear iteration procedures for symmetric matrices. Rend Mat Appl , 14:140–163. Pearson, K. (1901). On lines and planes of closest fit to systems of points in space. Philos Mag , 2:559-572. Plumbley , M. D. (1993). A Hebbian/anti-Hebbian network which optimizes information capacity by orthonormalizing the principal subspace. Pr oceedings of ICANN , pages 86–90. IEEE. Plumbley , M. D. (1995). L yapunov functions for con ver gence of principal component algorithms. Neural Networks , 8(1):11-23. Preisendorfer , R. and Moble y , C. (1988). Principal component analysis in meteor ology and oceanogr aphy , volume 17. Elsevier Science Ltd. Reich E. (1949). On the con vergence of the classical iterati ve procedures for symmetric matrices. Ann Math Statistics , 20:448–451. Rubner , J. and Schulten, K. (1990). Dev elopment of feature detectors by self- org anization. Biol Cybern , 62(3):193–199. Rubner , J. and T av an, P . (1989). A self-or ganizing network for principal-component analysis. EPL , 10(7):693. 49 Sanger , T . (1989). Optimal unsupervised learning in a single-layer linear feedforward neural network. Neur al networks , 2(6):459–473. Shepherd, G. (2003). The synaptic or ganization of the brain . Oxford Uni versity Press. Strang, G. (2009). Intr oduction to linear algebr a . W ellesley-Cambridge Press T orgerson, W . (1952). Multidimensional scaling: I. theory and method. Psychometrika , 17(4):401–419. W armuth, M. and Kuzmin, D. (2008). Randomized online pca algorithms with regret bounds that are logarithmic in the dimension. J Mac h Learn Res , 9(10). W illiams, C. (2001). On a connection between k ernel pca and metric multidimensional scaling. Advances in Neural Information Pr ocessing Systems 13 , pages 675–681. MIT Press. Y ang, B. (1995). Projection approximation subspace tracking. IEEE T Signal Pr oces , 43(1):95–107. Y oung, G. and Householder , A. (1938). Discussion of a set of points in terms of their mutual distances. Psychometrika , 3(1):19–22. Zufiria, P .J. (2002). On the discrete-time dynamics of the basic Hebbian neural network node.. IEEE T r ans Neural Netw , 13(6):1342–1352. 50

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

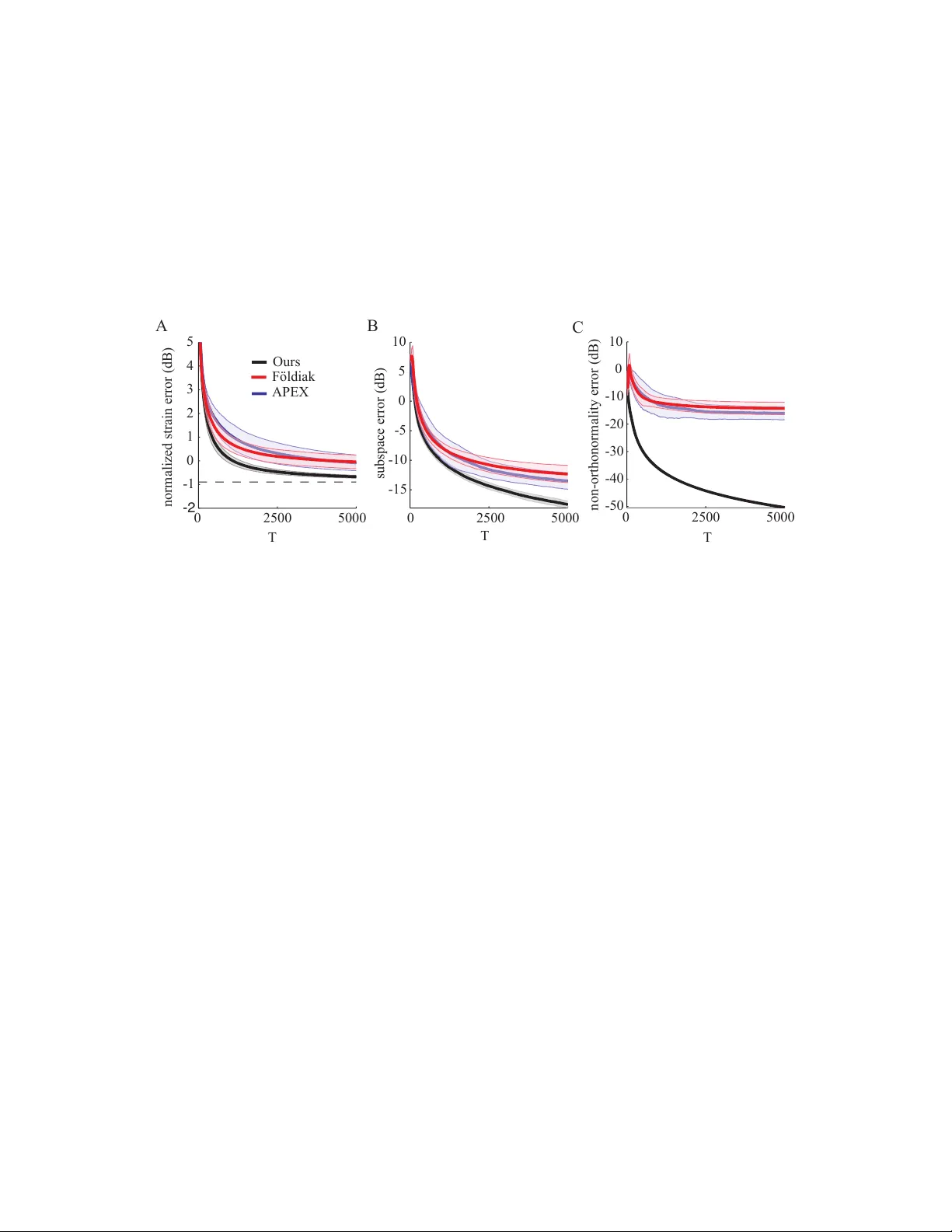

Leave a Comment