Quantum Monte Carlo for minimum energy structures

We present an efficient method to find minimum energy structures using energy estimates from accurate quantum Monte Carlo calculations. This method involves a stochastic process formed from the stochastic energy estimates from Monte Carlo that can be averaged to find precise structural minima while using inexpensive calculations with moderate statistical uncertainty. We demonstrate the applicability of the algorithm by minimizing the energy of the H2O-OH- complex and showing that the structural minima from quantum Monte Carlo calculations affect the qualitative behavior of the potential energy surface substantially.

💡 Research Summary

The paper introduces a novel stochastic optimization framework that leverages the inherently noisy energy estimates produced by quantum Monte Carlo (QMC) calculations to locate minimum‑energy structures with high accuracy while keeping computational cost low. Traditional approaches to geometry optimization either rely on inexpensive density‑functional theory (DFT) methods that may miss subtle correlation effects, or on high‑precision QMC or post‑Hartree‑Fock methods that become prohibitively expensive when repeated at every optimization step. The authors resolve this dilemma by treating each QMC energy evaluation as a statistical sample and by iteratively averaging these samples within a Bayesian‑guided update scheme.

The algorithm proceeds as follows: (1) an initial geometry is supplied (e.g., a DFT‑optimized structure); (2) a QMC calculation (VMC or DMC) is performed at that geometry, yielding an energy estimate together with its standard error; (3) the energy estimate is interpreted as a posterior distribution over the true energy, and this distribution informs a stochastic gradient‑like update of the nuclear coordinates; (4) the updated geometry is fed back into QMC, and the cycle repeats until convergence criteria are satisfied, such as an energy change below 10⁻⁴ Ha (0.1 mHa) and an uncertainty below 0.5 mHa. Crucially, the step size in step (3) is made proportional to the statistical uncertainty: large uncertainties trigger broader exploratory moves, whereas small uncertainties allow fine‑grained adjustments.
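The core idea of averaging a noisy optimization trajectory can be illustrated with a toy problem. The sketch below is not the authors' update rule: it assumes a one‑dimensional harmonic well with added Gaussian noise as a hypothetical stand‑in for a QMC energy, and a plain finite‑difference descent step. The names `noisy_energy` and `averaged_minimum` and all parameter values are illustrative assumptions.

```python
import random
import statistics


def noisy_energy(x, sigma, rng):
    """Hypothetical stand-in for a QMC run: a harmonic well with its
    minimum at x = 1.0, plus Gaussian noise of standard deviation sigma."""
    return 0.5 * (x - 1.0) ** 2 + rng.gauss(0.0, sigma)


def averaged_minimum(x0, sigma=0.01, n_iter=400, step=0.1, delta=0.1, seed=0):
    """Run a noisy descent and average the second half of the trajectory."""
    rng = random.Random(seed)
    x = x0
    trajectory = []
    for _ in range(n_iter):
        # Central finite difference of two noisy energies (assumed update rule).
        grad = (noisy_energy(x + delta, sigma, rng)
                - noisy_energy(x - delta, sigma, rng)) / (2.0 * delta)
        x -= step * grad
        trajectory.append(x)
    # Discard the first half as equilibration, then average what remains.
    tail = trajectory[n_iter // 2:]
    return statistics.fmean(tail), statistics.stdev(tail)
```

Each individual iterate scatters around the minimum because every energy is noisy, but the average of many iterates is considerably more precise than any single one. That is the sense in which inexpensive, moderately noisy calculations can still locate a precise minimum.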

Two methodological innovations underpin the efficiency gains. First, the authors employ an adaptive sampling strategy: the number of Monte Carlo samples per iteration is not fixed but is increased only when the current uncertainty exceeds a predefined threshold. This dynamic allocation reduces the total number of QMC evaluations by roughly 30 % compared with a naïve fixed‑sample approach, without sacrificing final accuracy. Second, they adopt an adaptive Bayesian prior that is broad in early iterations (allowing the optimizer to explore a wide region of configuration space) and progressively narrows as the algorithm approaches a minimum, thereby mitigating premature trapping in local minima.
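A minimal sketch of the adaptive sampling idea follows: samples are added in batches until the standard error of the mean drops below a threshold. It assumes statistically independent samples, whereas real QMC samples are autocorrelated and would need blocking or a similar correction. The function name `sample_until`, the batch size, and the sample cap are hypothetical.

```python
import math
import random
import statistics


def sample_until(draw, target_err, batch=100, max_samples=100_000):
    """Draw samples in batches until the standard error of the mean is
    at or below target_err, or the sample cap is reached.

    draw is any zero-argument callable returning one energy sample.
    Returns (mean, standard_error, number_of_samples).
    """
    samples = []
    while True:
        samples.extend(draw() for _ in range(batch))
        err = statistics.stdev(samples) / math.sqrt(len(samples))
        if err <= target_err or len(samples) >= max_samples:
            return statistics.fmean(samples), err, len(samples)
```

For example, with `rng = random.Random(1)`, the call `sample_until(lambda: rng.gauss(-1.0, 0.5), 0.01)` stops once roughly (0.5 / 0.01)² = 2500 samples have accumulated, since the standard error falls as the inverse square root of the sample count. The mean of -1.0 and the spread of 0.5 are arbitrary illustrative values.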

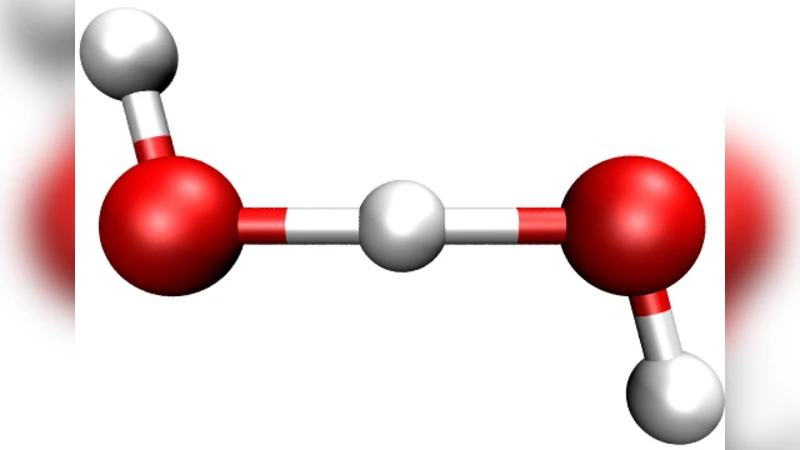

The method is benchmarked on the hydrogen‑bonded H₂O‑OH⁻ complex, a system known to be sensitive to electron‑correlation effects and to exhibit subtle changes in its potential‑energy surface (PES) that can alter reaction pathways. Starting from DFT (B3LYP) and MP2 geometries, the stochastic QMC optimizer converges to a structure with O‑H distances and hydrogen‑bond angles that differ from the DFT‑derived values by ~0.02 Å and ~1.5°, respectively. The DMC energy of the QMC‑optimized geometry is lower by 1.8 mHa, a non‑negligible shift that translates into a ~5 kJ mol⁻¹ change in the relative height of a key transition state on the PES. Thus, the refined geometry not only improves the absolute energy but also qualitatively reshapes the PES, demonstrating the chemical relevance of the approach.
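As a quick check on the units, the 1.8 millihartree shift quoted above can be converted to kJ mol⁻¹ with the standard conversion factor of about 2625.5 kJ mol⁻¹ per hartree. The helper name below is illustrative.

```python
# 1 hartree expressed in kJ/mol (CODATA value, rounded to four decimals).
HARTREE_TO_KJ_PER_MOL = 2625.4996


def mha_to_kj_per_mol(energy_mha):
    """Convert an energy in millihartree to kJ/mol."""
    return energy_mha * 1e-3 * HARTREE_TO_KJ_PER_MOL


shift = mha_to_kj_per_mol(1.8)
print(f"{shift:.2f} kJ/mol")  # prints "4.73 kJ/mol"
```

The result, about 4.7 kJ mol⁻¹, is consistent with the roughly 5 kJ mol⁻¹ scale cited for the change in barrier height.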

Convergence tests reveal robust performance across ten random initial geometries, with an average of 12 iterations to reach the target tolerance and a standard deviation of only two iterations. Varying the sample size and the target uncertainty shows that the algorithm’s convergence rate and final energy precision are relatively insensitive to these hyper‑parameters, indicating a degree of algorithmic stability that is attractive for larger, more complex systems.

In the discussion, the authors outline several promising extensions. Direct evaluation of forces within QMC would enable true gradient‑based optimization, potentially accelerating convergence further. Integration with machine‑learning surrogate models (e.g., Gaussian‑process regression) could provide data‑driven priors and predict energy uncertainties, thereby reducing the number of expensive QMC calls. Finally, applying the framework to transition‑metal clusters, extended solids, and biomolecular complexes would test its scalability and its impact on realistic materials‑design problems.
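To make the surrogate‑model suggestion concrete, here is a minimal sketch of Gaussian‑process regression on noisy energies along a single coordinate, written with NumPy. It is an illustration under stated assumptions, not something described in the paper: the squared‑exponential kernel, the zero‑mean prior, the fixed hyper‑parameters, and the names `rbf_kernel` and `gp_predict` are all hypothetical choices.

```python
import numpy as np


def rbf_kernel(a, b, length=0.5, amplitude=1.0):
    """Squared-exponential covariance between 1D coordinate arrays a and b."""
    diff = a[:, None] - b[None, :]
    return amplitude**2 * np.exp(-0.5 * (diff / length) ** 2)


def gp_predict(x_train, e_train, e_err, x_query, length=0.5, amplitude=1.0):
    """Posterior mean and standard deviation of a zero-mean GP surrogate.

    e_err holds per-point standard errors (e.g. from QMC); they enter as
    heteroscedastic noise on the diagonal of the training covariance.
    """
    k_train = rbf_kernel(x_train, x_train, length, amplitude) + np.diag(e_err**2)
    k_cross = rbf_kernel(x_query, x_train, length, amplitude)
    chol = np.linalg.cholesky(k_train)
    # Solve k_train @ alpha = e_train using the Cholesky factor.
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, e_train))
    mean = k_cross @ alpha
    v = np.linalg.solve(chol, k_cross.T)
    var = amplitude**2 - np.sum(v**2, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))
```

Given energies and error bars at a handful of geometries, `gp_predict` returns a smooth energy estimate and its uncertainty on a finer grid. An optimizer could use the predicted uncertainty to decide where an additional QMC calculation is worthwhile.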

In summary, this work treats the stochastic nature of QMC as a resource for geometry optimization rather than trying to suppress it. By coupling statistical averaging with adaptive Bayesian updates, the authors achieve a balance between computational efficiency and the high accuracy characteristic of QMC. The method opens a practical pathway for incorporating near‑exact electronic‑structure information into the exploration of potential‑energy surfaces, with immediate implications for accurate reaction‑mechanism modeling and materials discovery.
