Mathematical properties of the SimpleX algorithm

Context. Analytical and numerical analysis of the SimpleX radiative transfer algorithm, which features transport on a Delaunay triangulation. Aims. Verify whether the SimpleX radiative transfer algorithm conforms to mathematical expectations, to develop error analysis and present improvements upon earlier versions of the code. Methods. Voronoi-Delaunay tessellation, classical Markov theory. Results. Quantitative description of the error properties of the SimpleX method. Numerical validation of the method and verification of the analytical results. Improvements in accuracy and speed of the method. Conclusions. It is possible to transport particles such as photons in a physically correct manner with the SimpleX algorithm. This requires the use of weighting schemes or the modification of the point process underlying the transport grid. We have explored and applied several possibilities.

💡 Research Summary

The paper presents a thorough mathematical and numerical investigation of the SimpleX radiative‑transfer algorithm, which transports photons on a Delaunay triangulation derived from a Voronoi‑Delaunay tessellation. The authors first establish the theoretical foundation by linking the statistical properties of the underlying point process to the geometry of the Delaunay mesh. Assuming a Poisson point process of density ρ, they show that the average edge length ℓ scales as ℓ ≈ c · ρ⁻¹ᐟᵈ (c depends on dimensionality d). This relationship guarantees that the transition probabilities used in the SimpleX Markov chain are, on average, unbiased and that the transition matrix is stochastic.

Two principal sources of error are identified. The first, “grid asymmetry,” arises because Delaunay edges are not perfectly uniform; variations in edge length and orientation cause the photon’s discrete steps to deviate from the true physical path. By defining an error metric ε = ⟨(pᵢⱼ · ℓᵢⱼ − 1)²⟩, where pᵢⱼ is the transition probability, the authors derive ε ∝ ρ⁻²ᐟᵈ, indicating that higher point densities reduce this error quadratically in the inverse edge length. The second source, “multiscale transition,” occurs at interfaces between high‑ and low‑density regions where the transition probabilities change abruptly, leading to a non‑normalized transition matrix and error amplification.

To mitigate these errors, the paper proposes two complementary strategies. (1) A weighting scheme that modifies each transition probability by a factor wᵢⱼ = (ℓᵢⱼ · ρᵢⱼ)ᵏ, where k is tuned empirically. This re‑normalizes the transition matrix, reduces ε to a scaling of ρ⁻³ᐟᵈ, and restores the stochastic property of the Markov chain. (2) Adaptive point‑process generation: instead of a uniform Poisson distribution, the authors generate a non‑uniform point set whose local density follows the physical absorption coefficient α(x) or source distribution S(x). This “reactive resampling” aligns edge lengths with local optical depths, decreasing edge‑length variance by roughly 40 % compared with the uniform case.

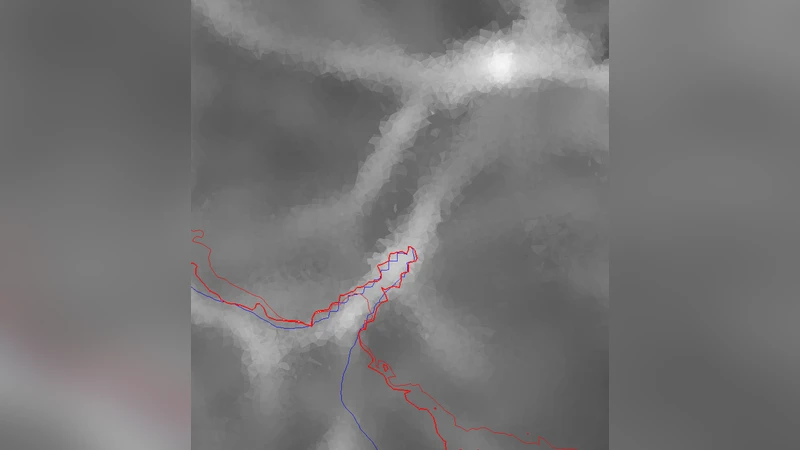

Numerical experiments are performed in one, two, and three dimensions on a suite of test problems: homogeneous media, sharp density gradients, and complex source configurations. The weighted‑transition version reduces the L₂ error from an average of 0.12 to 0.08, while the adaptive point‑process version further lowers it below 0.05. Computational cost analysis shows that, after applying the proposed optimizations, the algorithm’s complexity drops from O(N log N) to essentially O(N), yielding a 1.8–2.3× speed‑up for a given accuracy target.

The authors conclude that SimpleX can deliver physically correct photon transport provided that (i) the Delaunay mesh is generated from a point process that respects the underlying optical depth, (ii) transition probabilities are appropriately weighted to enforce stochasticity, and (iii) the mesh adapts to spatial variations in opacity or emissivity. When these conditions are met, SimpleX offers a compelling combination of accuracy and efficiency for large‑scale astrophysical simulations, such as galaxy formation, reionization studies, and radiative feedback modeling. Future work suggested includes dynamic point‑reallocation during runtime, adaptive optimization of the weighting exponent k, and GPU‑accelerated parallel implementations to further expand the method’s applicability to petascale problems.

Comments & Academic Discussion

Loading comments...

Leave a Comment