The combined effect of chemical and electrical synapses in small Hindmarsh-Rose neural networks on synchronisation and on the rate of information

In this work we study the combined action of chemical and electrical synapses in small networks of Hindmarsh-Rose (HR) neurons on the synchronous behaviour and on the rate of information produced (per time unit) by the networks. We show that if the chemical synapse is excitatory, the larger the chemical synapse strength, the smaller the electrical synapse strength needed to achieve complete synchronisation, and for moderate synaptic strengths one should expect to find desynchronised behaviour. Conversely, if the chemical synapse is inhibitory, the larger the chemical synapse strength, the larger the electrical synapse strength needed to achieve complete synchronisation, and for moderate synaptic strengths one should expect to find synchronous behaviour. Finally, we show how to calculate semi-analytically an upper bound for the rate of information produced per time unit (the Kolmogorov-Sinai entropy) in larger networks. As an application, we show that this upper bound is linearly proportional to the number of neurons in a network whose neurons are highly connected.

💡 Research Summary

The paper investigates how the simultaneous presence of chemical and electrical synapses influences synchronization and information production in small networks of Hindmarsh‑Rose (HR) neurons. Using the three‑dimensional HR model, which captures both spiking and bursting dynamics, the authors construct networks of 2 to 5 neurons. Chemical synapses are modeled as either excitatory or inhibitory with a conductance‑based current I_syn = g_syn·S·(V_post−V_rev), where S is the synaptic activation set by the presynaptic voltage and the reversal potential V_rev determines whether the synapse is excitatory or inhibitory. Electrical (gap‑junction) synapses are represented by a diffusive current I_gap = g_c·(V_pre−V_post) that couples every pair of adjacent neurons bidirectionally.
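
The coupling scheme above can be sketched as the right-hand side of an N-neuron HR network. This is a minimal sketch, not the authors' code. The HR parameter values (a=1, b=3, c=1, d=5, s=4, x_R=-1.6, r=0.005, I=3.25), the sigmoid form of S with slope 10 and threshold -0.25, the reversal potentials (2.0 excitatory, -1.5 inhibitory), the fixed-step RK4 integrator and all function names are assumptions chosen for illustration.

```python
import math

# Assumed HR parameters (a common chaotic-bursting setting; not given in the summary).
A, B, C, D = 1.0, 3.0, 1.0, 5.0
R, S_HR, X_REST, I_EXT = 0.005, 4.0, -1.6, 3.25
# Assumed chemical-synapse constants: sigmoid slope/threshold and reversal potentials.
LAMBDA, THETA = 10.0, -0.25
V_EXC, V_INH = 2.0, -1.5


def activation(v_pre):
    """Hypothetical sigmoidal synaptic activation S(V_pre), valued in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-LAMBDA * (v_pre - THETA)))


def hr_network_rhs(state, adj_chem, adj_gap, g_syn, g_c, v_rev):
    """Time derivative of N coupled HR neurons.

    state is [x0, y0, z0, x1, y1, z1, ...]; adj[i][j] = 1 if neuron j
    (presynaptic) projects onto neuron i (postsynaptic), else 0.
    """
    n = len(state) // 3
    xs = state[0::3]
    deriv = []
    for i in range(n):
        x, y, z = state[3 * i:3 * i + 3]
        # Electrical synapse: I_gap = g_c * (V_pre - V_post), summed over neighbours.
        i_gap = g_c * sum(adj_gap[i][j] * (xs[j] - x) for j in range(n))
        # Chemical synapse: I_syn = g_syn * S * (V_post - V_rev), entering with a minus sign.
        s_total = sum(adj_chem[i][j] * activation(xs[j]) for j in range(n))
        i_syn = g_syn * s_total * (x - v_rev)
        dx = y - A * x**3 + B * x**2 - z + I_EXT + i_gap - i_syn
        dy = C - D * x**2 - y
        dz = R * (S_HR * (x - X_REST) - z)
        deriv.extend((dx, dy, dz))
    return deriv


def rk4_step(f, state, dt, *args):
    """One classical fourth-order Runge-Kutta step for du/dt = f(u, *args)."""
    k1 = f(state, *args)
    k2 = f([u + 0.5 * dt * k for u, k in zip(state, k1)], *args)
    k3 = f([u + 0.5 * dt * k for u, k in zip(state, k2)], *args)
    k4 = f([u + dt * k for u, k in zip(state, k3)], *args)
    return [u + dt / 6.0 * (a1 + 2.0 * a2 + 2.0 * a3 + a4)
            for u, a1, a2, a3, a4 in zip(state, k1, k2, k3, k4)]


if __name__ == "__main__":
    # Two neurons, bidirectionally coupled by both synapse types (excitatory case).
    pair = [[0, 1], [1, 0]]
    u = [-1.0, -5.0, 3.0, 0.5, -3.0, 3.1]
    for _ in range(1000):  # 10 time units with dt = 0.01
        u = rk4_step(hr_network_rhs, u, 0.01, pair, pair, 0.1, 0.5, V_EXC)
    print(u[0], u[3])  # membrane variables x of the two neurons
```

Passing `V_INH` instead of `V_EXC` switches the chemical synapse to inhibitory; the adjacency lists make it easy to try other small topologies.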

Synchronization is examined through master‑slave differential equations and conditional Lyapunov exponents. When the chemical synapse is excitatory, increasing its strength (g_syn ↑) advances the firing times of the neurons, thereby reducing the electrical coupling needed for complete synchronization (g_c ↓). In this regime the electrical synapse can be weak and still achieve complete synchronization, because the excitatory drive aligns the oscillators. Conversely, for inhibitory chemical synapses, raising g_syn pushes the neurons apart in phase; to recover synchrony the electrical coupling must be strengthened (g_c ↑) so that it quickly cancels the voltage differences. For moderate synaptic strengths the two cases differ: with excitatory chemical synapses the network tends to desynchronize, whereas with inhibitory chemical synapses synchronous behavior is to be expected. The authors map these regimes in the (g_syn, g_c) parameter plane, revealing a non‑trivial boundary that depends on the sign of the chemical synapse.
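
A coarse numerical companion to such a regime map is sketched below. It deliberately swaps in a simpler diagnostic than the conditional Lyapunov exponents used by the authors: the time-averaged synchronization error |x_1 − x_2| between two reciprocally coupled HR neurons. This is a minimal sketch under stated assumptions: the HR parameters (a=1, b=3, c=1, d=5, s=4, x_R=-1.6, r=0.005, I=3.25), the sigmoid activation (slope 10, threshold -0.25), the reversal potentials (2.0 and -1.5), the transient and averaging times, and the names `pair_rhs`, `rk4` and `sync_error` are all hypothetical.

```python
import math

# Assumed HR and synapse constants (illustrative; not taken from the summary).
A, B, C, D, R, S_HR, X_REST, I_EXT = 1.0, 3.0, 1.0, 5.0, 0.005, 4.0, -1.6, 3.25
LAMBDA, THETA = 10.0, -0.25
V_EXC, V_INH = 2.0, -1.5


def pair_rhs(u, g_syn, g_c, v_rev):
    """Two HR neurons coupled reciprocally by one chemical and one electrical synapse."""
    out = []
    for i, j in ((0, 1), (1, 0)):
        x, y, z = u[3 * i:3 * i + 3]
        x_pre = u[3 * j]
        s = 1.0 / (1.0 + math.exp(-LAMBDA * (x_pre - THETA)))  # synaptic activation
        dx = (y - A * x**3 + B * x**2 - z + I_EXT
              + g_c * (x_pre - x)           # electrical coupling, +I_gap
              - g_syn * s * (x - v_rev))    # chemical coupling, -I_syn
        out += [dx, C - D * x**2 - y, R * (S_HR * (x - X_REST) - z)]
    return out


def rk4(u, dt, *args):
    """One fourth-order Runge-Kutta step of pair_rhs."""
    k1 = pair_rhs(u, *args)
    k2 = pair_rhs([a + 0.5 * dt * b for a, b in zip(u, k1)], *args)
    k3 = pair_rhs([a + 0.5 * dt * b for a, b in zip(u, k2)], *args)
    k4 = pair_rhs([a + dt * b for a, b in zip(u, k3)], *args)
    return [a + dt / 6.0 * (b1 + 2.0 * b2 + 2.0 * b3 + b4)
            for a, b1, b2, b3, b4 in zip(u, k1, k2, k3, k4)]


def sync_error(g_syn, g_c, v_rev, u0, dt=0.01, t_transient=200.0, t_avg=200.0):
    """Time-averaged |x_1 - x_2| after a transient; values near 0 suggest complete synchronization."""
    u = list(u0)
    for _ in range(int(t_transient / dt)):  # discard the transient
        u = rk4(u, dt, g_syn, g_c, v_rev)
    n_avg = max(1, int(t_avg / dt))
    total = 0.0
    for _ in range(n_avg):
        u = rk4(u, dt, g_syn, g_c, v_rev)
        total += abs(u[0] - u[3])
    return total / n_avg
```

Evaluating `sync_error` on a grid of `g_syn` and `g_c` values, once with `V_EXC` and once with `V_INH`, gives a rough picture of the regimes. A vanishing error only shows that the trajectories coincide over the averaging window; the conditional Lyapunov exponents are what test whether that synchronous state is stable.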

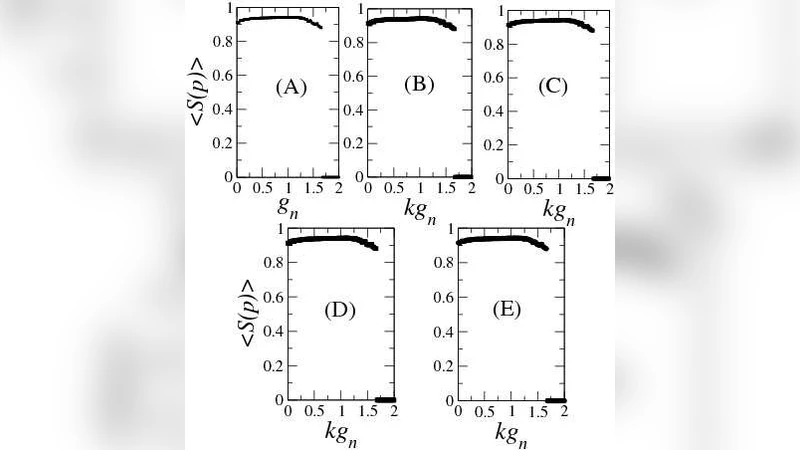

Information production is quantified by the Kolmogorov‑Sinai (KS) entropy, which is bounded above by the sum of all positive Lyapunov exponents (Ruelle's inequality, K_S ≤ Σ λ_i^+). The authors compute the full Lyapunov spectrum for each neuron, then sum the positive components to obtain an upper bound for the whole network. In highly connected topologies (e.g., all‑to‑all coupling) each neuron experiences essentially the same dynamical environment, so the number of positive exponents scales linearly with the number of neurons N. Consequently the KS entropy upper bound follows K_S^max ≈ α·N, where α depends on the specific synaptic strengths and network topology. This semi‑analytical approach provides a practical way to estimate information processing capacity in larger networks where direct KS entropy calculation would be prohibitive.
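
Once a Lyapunov spectrum is available, the bound and the slope α are simple arithmetic. The sketch below assumes the spectrum comes from a separate long-time computation (for example a QR-based method, not shown). The function names are hypothetical, and fitting α by least squares through the origin is one reasonable choice, not necessarily the authors' procedure.

```python
def ks_upper_bound(spectrum):
    """Upper bound on the KS entropy: the sum of the positive Lyapunov exponents."""
    return sum(lam for lam in spectrum if lam > 0.0)


def fit_alpha(sizes, bounds):
    """Least-squares slope alpha of bounds ~ alpha * N, for a line through the origin."""
    if len(sizes) != len(bounds) or not sizes:
        raise ValueError("need equally long, non-empty lists of sizes and bounds")
    num = sum(n * h for n, h in zip(sizes, bounds))
    den = sum(n * n for n in sizes)
    return num / den


if __name__ == "__main__":
    # Toy numbers for illustration only; they are not results from the paper.
    print(ks_upper_bound([0.05, 0.01, 0.0, -0.3]))  # only 0.05 and 0.01 contribute
    print(fit_alpha([2, 3, 4], [0.2, 0.3, 0.4]))
```

Zero and negative exponents are excluded because they add no information production. A roughly constant ratio of bound to N across network sizes is what the linear law K_S^max ≈ α·N predicts.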

The main findings can be summarized as follows: (1) Excitatory chemical synapses reduce the electrical coupling required for full synchrony, while inhibitory chemical synapses increase it; at moderate synaptic strengths, excitatory chemical synapses favor desynchronization, whereas inhibitory ones favor synchronous behavior. (2) The KS entropy upper bound grows linearly with network size for densely connected networks, indicating that highly connected neural assemblies can support proportionally larger information rates. These results illuminate how the brain may exploit the complementary roles of excitatory/inhibitory chemical transmission and electrical coupling to balance flexible dynamics with efficient information processing, and they offer design principles for neuromorphic systems that aim to replicate such balance.
