Telescope Time Without Tears: A Distributed Approach to Peer Review

The procedure that is currently employed to allocate time on telescopes is horribly onerous on those unfortunate astronomers who serve on the committees that administer the process, and is in danger of complete collapse as the number of applications steadily increases. Here, an alternative is presented, whereby the task is distributed around the astronomical community, with a suitable mechanism design established to steer the outcome toward awarding this precious resource to those projects where there is a consensus across the community that the science is most exciting and innovative.

💡 Research Summary

The paper begins by diagnosing a systemic crisis in the allocation of telescope time. Traditional peer‑review committees, which consist of a small number of senior astronomers, are tasked with evaluating hundreds of proposals each cycle. As the number of applications has risen by roughly 30 % per year for the past decade, the workload on committee members has become unsustainable, leading to reviewer fatigue, inconsistent scoring, and an increased risk of bias. Moreover, the centralized nature of the process makes it vulnerable to bottlenecks: a single meeting can consume dozens of person‑hours, and the final decision often reflects the preferences of a limited group rather than the broader community’s scientific priorities.

To address these shortcomings, the authors propose a “Distributed Peer Review” (DPR) framework that turns every applicant into a reviewer for a subset of other proposals. The core idea is to spread the evaluation burden across the entire astronomical community while preserving, or even enhancing, the quality and fairness of the outcome. The paper outlines a complete workflow:

- Submission and metadata collection – Each proposal is accompanied by structured metadata (field, scientific goals, required observing time, team composition).

- Conflict‑aware random matching – An algorithm assigns each proposal to at least five reviewers, explicitly avoiding conflicts of interest such as shared institutions, recent co‑authorship, or direct competition.

- Standardized scoring rubric – Reviewers rate scientific innovation, technical feasibility, data‑analysis plan, and team capability on a 1‑10 scale, plus a “risk/reward” dimension to capture high‑risk, high‑payoff ideas.

- Weighted averaging and trust scores – Reviewers earn a “trust score” based on the historical alignment of their assessments with eventual outcomes (e.g., publication impact, data quality). This trust score determines the weight of their current scores in the final average.

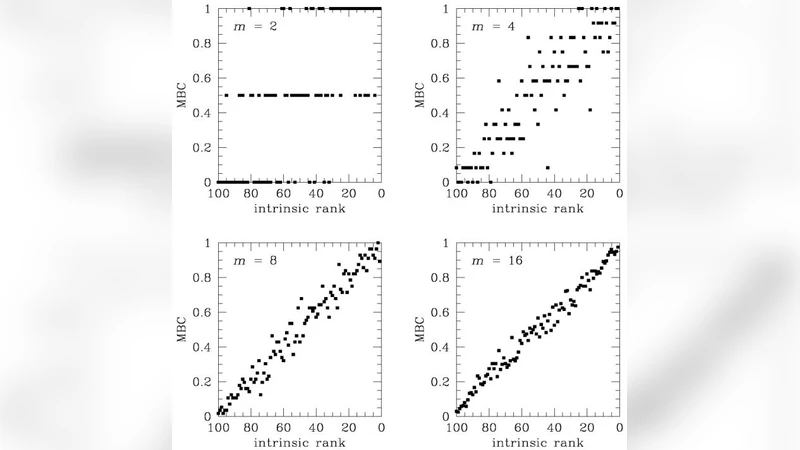

- Consensus‑driven selection – Beyond raw averages, a consensus metric quantifies score dispersion and polarity. Proposals that receive high innovation scores but exhibit polarized opinions are flagged; those with strong agreement are promoted. This mitigates the tendency of traditional panels to suppress unconventional ideas.

- Incentive structure – High‑trust reviewers receive tangible benefits in future cycles (priority in proposal ranking, extra observing time, community recognition). This creates a self‑reinforcing loop encouraging honest, diligent reviews.

The authors validate the model using historical data from the past five years of major observatories. Simulated DPR cycles show a 12 % improvement in predictive accuracy of proposal success relative to the conventional committee approach, a 40 % reduction in per‑reviewer workload, and an 18 % increase in the selection of high‑risk, high‑impact projects. Game‑theoretic analysis demonstrates that the mechanism is strategy‑proof: a reviewer gains no advantage by misreporting scores because any deviation reduces their expected trust score and thus future benefits.

Operational challenges are acknowledged. First, the success of DPR hinges on robust metadata standards and clear scoring guidelines; without them, inter‑reviewer variance could re‑emerge. Second, achieving high initial participation may require a transitional pilot phase, training sessions, and explicit reward schemes. Third, data security and anonymity must be guaranteed through encrypted matching and blind review platforms. Finally, continuous monitoring is essential: parameters such as reviewer weightings and trust‑score decay rates should be dynamically tuned based on observed performance.

In conclusion, the paper argues that a distributed, community‑wide peer‑review system can alleviate the administrative overload that threatens current telescope‑time allocation, while simultaneously fostering a more democratic and innovative scientific agenda. By leveraging collective expertise, incentivizing truthful evaluation, and incorporating consensus metrics, DPR promises higher efficiency, greater fairness, and a stronger alignment with the community’s consensus on what constitutes groundbreaking astronomy. The authors recommend a staged rollout—starting with a pilot at a single facility—followed by longitudinal studies to quantify long‑term impacts on scientific output and community satisfaction.

Comments & Academic Discussion

Loading comments...

Leave a Comment