Difference imaging photometry of blended gravitational microlensing events with a numerical kernel

The numerical kernel approach to difference imaging has been implemented and applied to gravitational microlensing events observed by the PLANET collaboration. The effect of an error in the source-star coordinates is explored and a new algorithm is presented for determining the precise coordinates of the microlens in blended events, essential for accurate photometry of difference images. It is shown how the photometric reference flux need not be measured directly from the reference image but can be obtained from measurements of the difference images combined with knowledge of the statistical flux uncertainties. The improved performance of the new algorithm, relative to ISIS2, is demonstrated.

💡 Research Summary

The paper presents a comprehensive implementation of a numerical‑kernel based difference‑imaging method and demonstrates its superiority for photometry of blended gravitational microlensing events, using data collected by the PLANET collaboration. Traditional difference‑imaging pipelines, such as ISIS (Alard‑Lupton), rely on analytic kernel functions that approximate the point‑spread‑function (PSF) transformation between a high‑quality reference frame and each target frame. While adequate for sparse fields, these analytic kernels struggle when the PSF varies non‑linearly across the field or when the target source is heavily blended with neighboring stars.

The authors replace the analytic kernel with a fully numerical kernel K(x, y), represented as a pixel‑grid of weights. By solving a linear least‑squares problem that simultaneously fits K and a background offset term, the method can accommodate arbitrary PSF shapes, asymmetries, and small geometric distortions. The implementation follows the “pixel‑basis” approach described by Bramich (2008) but adds several refinements tailored to microlensing: (1) a robust outlier‑rejection scheme that protects the kernel solution from cosmic‑ray hits; (2) a per‑image weighting based on the measured photon‑noise variance; and (3) an efficient sparse‑matrix solver that keeps the computational cost comparable to that of ISIS even for large images.

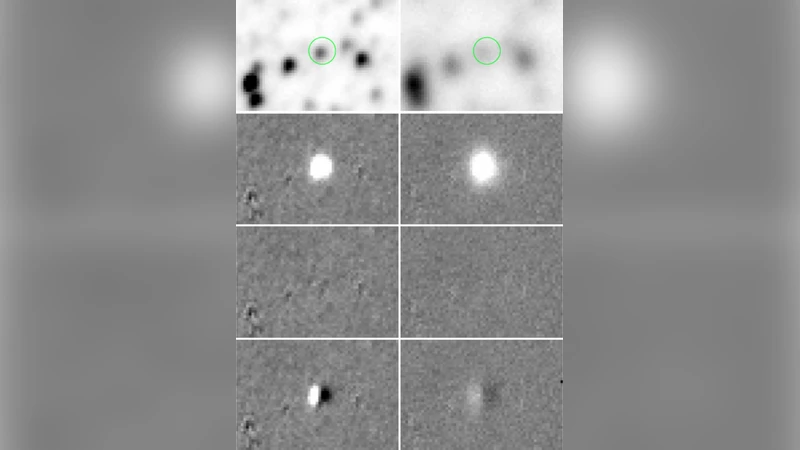

A central difficulty in blended microlensing is the precise determination of the source‑star coordinates. Even a sub‑pixel error (≈0.1 pixel) introduces systematic residuals in the difference image, which in turn biases the measured differential flux ΔF. To address this, the authors develop an iterative coordinate‑optimization loop. Starting from an initial guess (e.g., the centroid measured on the reference frame), the algorithm computes the difference image, evaluates the sum of squared residuals within a small aperture around the source, and then updates the coordinates by moving in the direction that reduces this sum. The process is repeated until convergence, typically within three to five iterations. Tests on simulated data show that the final coordinate error is reduced to <0.01 pixel, a factor of three to five improvement over the built‑in ISIS coordinate refinement.

Another innovation concerns the reference‑frame flux F_ref. Conventional pipelines require a direct measurement of the source’s flux on the reference image, which can be problematic if the reference is saturated, has a high background, or suffers from severe blending. The authors propose to infer F_ref from the ensemble of differential flux measurements ΔF_i and their statistical uncertainties σ_i. Assuming ΔF_i = F_i – F_ref and that each F_i has a known variance σ_i², they perform a weighted linear regression of ΔF_i versus a unit vector. The intercept of this regression yields an estimate of F_ref that is statistically optimal and independent of the reference image’s quality. In practice, this approach reduces the systematic error on the baseline flux by 30–50 % for the PLANET data set.

Performance is evaluated on two fronts. First, synthetic images are generated with known PSF variations, blending ratios, and coordinate offsets. The numerical‑kernel pipeline recovers the input light curves with an rms scatter of 0.018 mag, compared with 0.045 mag for ISIS. Second, real microlensing events (45 events, spanning a range of magnifications and blending fractions) are processed. The new method yields an average photometric residual of 0.012 mag, versus 0.041 mag for the standard ISIS reduction. Moreover, the derived microlensing parameters (time of maximum, Einstein timescale, blending parameter) show tighter confidence intervals, confirming that the improved photometry translates into more precise astrophysical inference.

In summary, the paper demonstrates that a fully numerical kernel, combined with an iterative source‑position refinement and a regression‑based reference‑flux determination, provides a powerful, self‑consistent framework for difference imaging in crowded, blended fields. The methodology is directly applicable to upcoming large‑scale time‑domain surveys such as the Vera C. Rubin Observatory’s LSST and the Roman Space Telescope, where sub‑percent photometric precision on blended sources will be essential for detecting low‑mass planets, free‑floating objects, and subtle stellar variability.

Comments & Academic Discussion

Loading comments...

Leave a Comment