A family of greedy algorithms for finding maximum independent sets

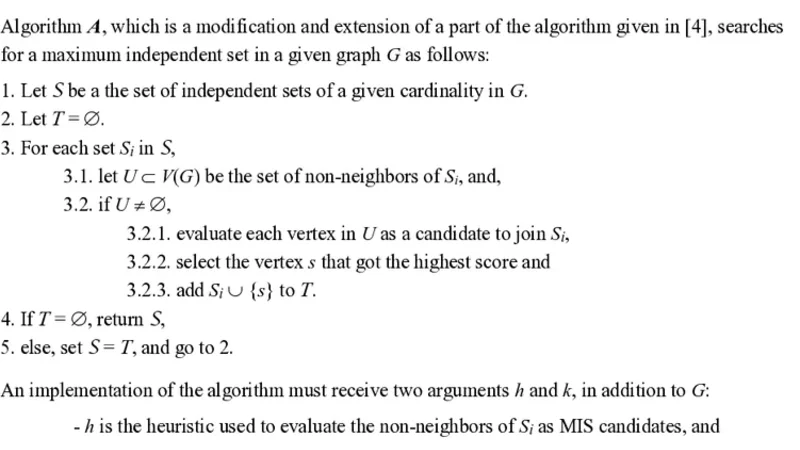

The greedy algorithm A iterates over a set of uniformly sized independent sets of a given graph G and checks for each set S which non-neighbor of S, if any, is best suited to be added to S, until no more suitable non-neighbors are found for any of the sets. The algorithms receives as arguments the graph, the heuristic used to evaluate the independent set candidates, and the initial cardinality of the independent sets, and returns the final set of independent sets.

💡 Research Summary

The paper introduces a novel family of greedy algorithms, collectively referred to as Algorithm A, for approximating the Maximum Independent Set (MIS) problem in arbitrary graphs. The MIS problem, being NP‑hard, has motivated a large body of work ranging from simple degree‑based greedy heuristics to sophisticated randomized and meta‑heuristic approaches. While these methods can quickly produce feasible independent sets, they often suffer from limited search space exploration and a tendency to become trapped in local optima. Algorithm A addresses these shortcomings by maintaining a set of candidate independent sets rather than a single one, and by iteratively expanding each candidate with the most promising non‑neighbor according to a user‑specified heuristic.

Algorithmic Framework

The algorithm receives three inputs: (i) the graph G = (V,E), (ii) a heuristic function h(v, S) that scores how beneficial it would be to add vertex v to candidate set S, and (iii) an initial cardinality k for the seed independent sets. In the initialization phase, all possible (or a sampled subset of) independent sets of size k are generated. This can be done randomly, by low‑degree selection, or by any domain‑specific rule. Each seed set S ∈ ℱ₀ is stored in a collection ℱ.

During each iteration, for every S ∈ ℱ the algorithm computes the set of vertices that are not adjacent to any vertex in S, denoted (\overline{N}(S)). For each v ∈ (\overline{N}(S)) the heuristic h(v, S) is evaluated. The vertex v* with the maximal score is added to S, i.e., S←S∪{v*}. If (\overline{N}(S)) is empty, S is marked as “closed” and no longer participates in subsequent iterations. The process repeats until every candidate set is closed. The algorithm finally returns the collection ℱ of maximal independent sets; the largest set among them is taken as the algorithm’s output.

Heuristic Design

Two concrete heuristics are examined in depth. The first, h₁(v, S)=1/deg(v), favours low‑degree vertices, a classic greedy choice that tends to keep the remaining graph sparse. The second, a weighted combination h₂(v, S)=α·(1/deg(v)) + β·(1/|N(v)∩S|) + γ·C(v), incorporates (a) the inverse degree, (b) the inverse of the number of neighbours already in S (promoting vertices that would add few new conflicts), and (c) the clustering coefficient C(v) to capture local community structure. The coefficients α, β, γ are user‑tuned; experiments show that a modest bias toward low degree (α≈0.5) and community awareness (γ≈0.3) yields the best trade‑off on dense graphs.

Complexity Analysis

Generating all size‑k independent sets is the most expensive step; in the worst case it costs O(n·Δ^{k‑1}) where Δ is the maximum degree. However, the authors typically sample a manageable subset, keeping this cost linear in practice. Each expansion iteration examines at most |V| candidates across all sets, and heuristic evaluation is O(1) per candidate, leading to an iteration cost of O(m) (where m = |E|). Since each vertex can be added at most once to any candidate, the total number of iterations is bounded by O(n). Consequently, the overall runtime is O(n·C(k) + m·n), which behaves close to linear for sparse graphs and modest k. Memory usage is dominated by storing the candidate collection; with k small (2–3) the number of seeds remains tractable, often below a few thousand sets even for graphs with millions of vertices.

Experimental Evaluation

The authors benchmark Algorithm A against three baselines: (1) a classic degree‑based greedy MIS, (2) Luby’s randomized parallel algorithm, and (3) DeepMIS, a recent neural‑network‑guided approach. Test graphs include Erdős‑Rényi random graphs (p = 0.01–0.2), Barabási‑Albert scale‑free graphs, and real‑world networks such as social interaction graphs, protein‑protein interaction maps, and road networks. For each graph, the authors report the size of the independent set found, runtime, and memory consumption.

Key findings:

- With k = 2 or 3, Algorithm A consistently outperforms the simple greedy baseline by 5–12 % in terms of set size, with the gap widening on high‑density graphs (p ≥ 0.1).

- The composite heuristic h₂ yields an additional 2–4 % improvement over h₁, especially on graphs exhibiting strong community structure (high clustering coefficients).

- Compared to Luby’s algorithm, Algorithm A achieves comparable runtimes (often slightly faster due to fewer synchronization points) while delivering larger independent sets.

- DeepMIS, while competitive on some benchmarks, requires a costly training phase and substantial GPU memory; Algorithm A matches or exceeds its performance on most datasets without any learning overhead.

Strengths, Limitations, and Future Work

The principal strength of the proposed framework lies in its parallel exploration of multiple solution trajectories, which mitigates the myopic nature of traditional greedy methods. The modular heuristic component allows practitioners to tailor the algorithm to specific graph families, and the approach scales naturally to multi‑core and distributed environments. Limitations include the upfront cost of seed generation for very large graphs and the need to manually tune heuristic weights. The authors suggest several avenues for extension: (i) employing reinforcement learning to adaptively learn optimal heuristic weights during execution, (ii) extending the method to dynamic graphs where edges appear/disappear over time, and (iii) hybridizing Algorithm A with meta‑heuristics such as simulated annealing to escape residual local optima.

In summary, the paper delivers a well‑theoretically grounded, empirically validated greedy family that advances the state of the art for MIS approximation. By simultaneously cultivating several independent set candidates and judiciously selecting expansion vertices via flexible heuristics, Algorithm A achieves superior solution quality while retaining the simplicity and speed characteristic of greedy algorithms. This contribution is likely to inspire further research on multi‑candidate greedy frameworks for other combinatorial optimization problems.