Scaling Laws of the Throughput Capacity and Latency in Information-Centric Networks

Wireless information-centric networks consider storage as one of the network primitives, and propose to cache data within the network in order to improve latency and reduce bandwidth consumption. We study the throughput capacity and delay in an information-centric network when the data cached in each node has a limited lifetime. The results show that with some fixed request and cache expiration rates, the order of the data access time does not change with network growth, and the maximum throughput order is inversely proportional to the square root and logarithm of the network size $n$ in cases of grid and random networks, respectively. Comparing these values with the corresponding throughput and latency with no cache capability (throughput inversely proportional to the network size, and latency of order $\sqrt{n}$ and $\sqrt{\frac{n}{\log n}}$ in grid and random networks, respectively), we can actually quantify the asymptotic advantage of caching. Moreover, we compare these scaling laws for different content discovery mechanisms and illustrate that not much gain is lost when a simple path search is used.

💡 Research Summary

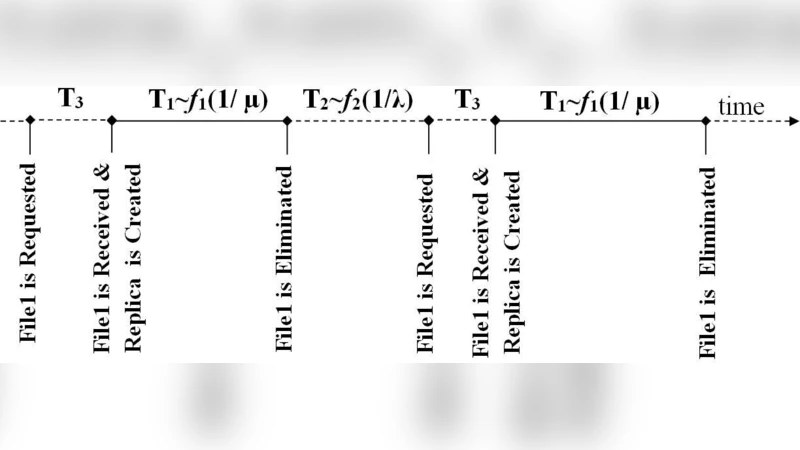

The paper investigates how limited‑lifetime caching influences the fundamental performance limits of wireless information‑centric networks (ICNs). Two canonical topologies are considered: a regular grid of √n × √n nodes and a randomly deployed planar network of n nodes. Each node stores content in a cache that expires after an exponentially distributed lifetime with mean 1/μ, while content requests arrive at each node according to an independent Poisson process with rate λ. The authors adopt the Gupta‑Kumar physical‑layer model to capture interference constraints, which determines how many simultaneous transmissions can succeed as the network grows.

First, the baseline without caching is analyzed. In a grid, the average hop distance from a requester to the original server scales as Θ(√n), leading to an average access latency of Θ(√n). Interference limits the number of concurrent successful links to O(√n), so the total stable throughput is Θ(1/n). In a random deployment, the average distance grows as Θ(√(n/ log n)), giving a latency of the same order and the same Θ(1/n) throughput bound.
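The Θ(√n) hop distance in the grid baseline can be checked numerically. The sketch below assumes the requester and the server are two uniformly chosen nodes of a `side × side` grid and that hops are counted as Manhattan distance; the function name is illustrative and does not come from the paper.

```python
from itertools import product

def mean_manhattan_distance(side: int) -> float:
    """Average hop count between two uniformly chosen nodes of a side x side grid.

    Assumption: both endpoints are drawn uniformly (with replacement) and
    routing follows a shortest Manhattan path.
    """
    # The x and y offsets are identically distributed, so the mean
    # Manhattan distance is twice the mean one-dimensional offset.
    total_offset = sum(abs(a - b) for a, b in product(range(side), repeat=2))
    return 2 * total_offset / side**2

for side in (4, 8, 16, 32):
    n = side * side
    ratio = mean_manhattan_distance(side) / side
    print(f"n = {n:5d}  mean hops / sqrt(n) = {ratio:.4f}")
```

Under these assumptions the ratio of mean hops to √n settles near 2/3, so the average distance grows in proportion to √n.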

Next, the paper introduces caching with a fixed expiration rate. In steady state, the probability that a given cache holds the requested object is p_h = λ/(λ + μ), which is independent of n. Consequently, the hit probability does not deteriorate as the network expands. A request is served by the first node on its route that holds a valid copy, and it travels the full Θ(√n) or Θ(√(n/log n)) hops to the origin only if every cache along the way misses. Because each cache hits with the constant probability p_h, the expected number of hops to the first copy is bounded by a constant on the order of 1/p_h (treating cache states along the route as independent), while the probability of missing at k consecutive caches decays as (1 - p_h)^k. The overall expected latency is therefore O(1) for both topologies.
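One way to see why a constant hit probability keeps latency bounded is to compute the expected number of hops to the first cached copy. This is a minimal sketch, assuming that each node on the route holds the item independently with probability `p_hit` and that the origin sits `path_length` hops away; both the independence assumption and the function name are illustrative rather than taken from the paper.

```python
def expected_hops_to_first_hit(p_hit: float, path_length: int) -> float:
    """Expected hops until the first cache hit, capped at the origin.

    Assumption: caches along the route hit independently with probability
    p_hit, and the node at hop `path_length` (the origin) always has the item.
    """
    miss = 1.0 - p_hit
    # The request travels at least k hops only if the first k-1 caches missed,
    # so E[hops] = sum over k = 1..d of miss**(k-1) = (1 - miss**d) / p_hit.
    return sum(miss ** (k - 1) for k in range(1, path_length + 1))

# The path length grows like sqrt(n), but the expectation stays below 1/p_hit.
for n in (10**2, 10**4, 10**6):
    d = int(n ** 0.5)
    print(f"n = {n:8d}  path = {d:5d}  E[hops] = {expected_hops_to_first_hit(0.25, d):.4f}")
```

However long the route becomes, the expectation never exceeds 1/p_hit, which is the sense in which the access time does not grow with n.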

The impact on throughput is more subtle. Interference still limits the number of simultaneous transmissions to O(√n) (grid) or O(√(n log n)) (random). However, because requests are typically satisfied by a nearby cache, the long-range traffic that would otherwise occupy the network is sharply reduced. The resulting maximum throughput is Θ(1/√n) for the grid and Θ(1/log n) for the random network, that is, inversely proportional to the square root and to the logarithm of n, respectively. Relative to the Θ(1/n) no-cache baseline, this is an improvement by a factor of √n for the grid and n/log n for the random network.
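To get a feel for the size of the gain, the throughput orders stated in the abstract (1/n without caching, 1/√n for the cached grid, 1/log n for the cached random network) can be evaluated for a few network sizes. The sketch below is only an illustration: it sets every hidden constant to 1, which the asymptotic results do not justify, and the function names are hypothetical.

```python
import math

# Assumption: all constant factors hidden by the order notation are set to 1.
def no_cache_order(n: int) -> float:
    return 1.0 / n

def grid_cache_order(n: int) -> float:
    return 1.0 / math.sqrt(n)

def random_cache_order(n: int) -> float:
    return 1.0 / math.log(n)

for n in (10**2, 10**4, 10**6):
    grid_gain = grid_cache_order(n) / no_cache_order(n)      # equals sqrt(n)
    random_gain = random_cache_order(n) / no_cache_order(n)  # equals n / log(n)
    print(f"n = {n:8d}  grid gain ~ {grid_gain:10.1f}  random gain ~ {random_gain:12.1f}")
```

The ratios grow without bound as n increases, which is the asymptotic advantage of caching that the abstract refers to.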

The authors also compare two content‑discovery mechanisms. A “global search” floods the network with a query, incurring an additional O(log n) overhead but only marginally increasing the hit probability because most hits occur in nearby caches. A “path search” simply follows the routing path, checking caches sequentially; it adds virtually no overhead. Analytical results show that the extra O(log n) delay of global search does not translate into a meaningful throughput gain, so the simpler path‑search strategy is practically preferable.

Sensitivity analysis reveals that the scaling benefits rely on the assumption that the request rate λ and the expiration rate μ remain fixed as n grows. If caches expire too quickly (μ ≫ λ), the hit probability collapses and the network reverts to the baseline performance. Conversely, extremely long cache lifetimes improve hit rates but raise storage and consistency costs. The derived scaling orders (1/√n for the grid, 1/log n for the random network) are robust to more realistic wireless effects (fading, non-uniform traffic) because they stem from interference geometry rather than specific channel models; only constant factors would change.
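The dependence of the hit probability on the ratio μ/λ can be made concrete with a small sketch. It assumes a two-state model for a single cache: the item is loaded at rate λ when absent and expires at rate μ when present, which yields the stationary probability λ/(λ + μ) quoted in the summary. The simulation routine, its parameters, and the seed are illustrative choices, not part of the paper.

```python
import random

def hit_probability(lam: float, mu: float) -> float:
    """Stationary probability that the item is cached in the two-state model."""
    return lam / (lam + mu)

def simulated_cached_fraction(lam: float, mu: float,
                              horizon: float = 20000.0, seed: int = 0) -> float:
    """Fraction of time the item is cached, estimated by simulation.

    Assumption: exponential dwell times, rate lam while absent and
    rate mu while present (an on/off continuous-time Markov chain).
    """
    rng = random.Random(seed)
    t, cached, cached_time = 0.0, False, 0.0
    while t < horizon:
        rate = mu if cached else lam
        end = min(t + rng.expovariate(rate), horizon)
        if cached:
            cached_time += end - t
        t = end
        cached = not cached
    return cached_time / horizon

# As mu/lambda grows, the hit probability falls toward zero.
for ratio in (0.1, 1.0, 10.0, 100.0):
    print(f"mu/lambda = {ratio:6.1f}  p_h = {hit_probability(1.0, ratio):.4f}")

print("simulated:", round(simulated_cached_fraction(1.0, 2.0), 3),
      "closed form:", round(hit_probability(1.0, 2.0), 3))
```

In this model a ratio μ/λ of 100 leaves a hit probability below 1%, which illustrates why rapid expiration returns the network to its no-cache behavior.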

In summary, the paper provides an asymptotic analysis showing that even with finite-lifetime caches, ICNs can achieve a throughput whose order exceeds that of a network without caching by a factor of √n in grid networks and n/log n in random networks, while keeping the average latency constant. The results quantify the asymptotic advantage of caching, demonstrate that simple path-based discovery incurs negligible loss, and offer design guidance for large-scale wireless systems such as IoT, vehicular networks, and smart-city deployments.