Iterative Grassmannian Optimization for Robust Image Alignment

Robust high-dimensional data processing has witnessed an exciting development in recent years, as theoretical results have shown that it is possible using convex programming to optimize data fit to a low-rank component plus a sparse outlier component…

Authors: Jun He, Dejiao Zhang, Laura Balzano

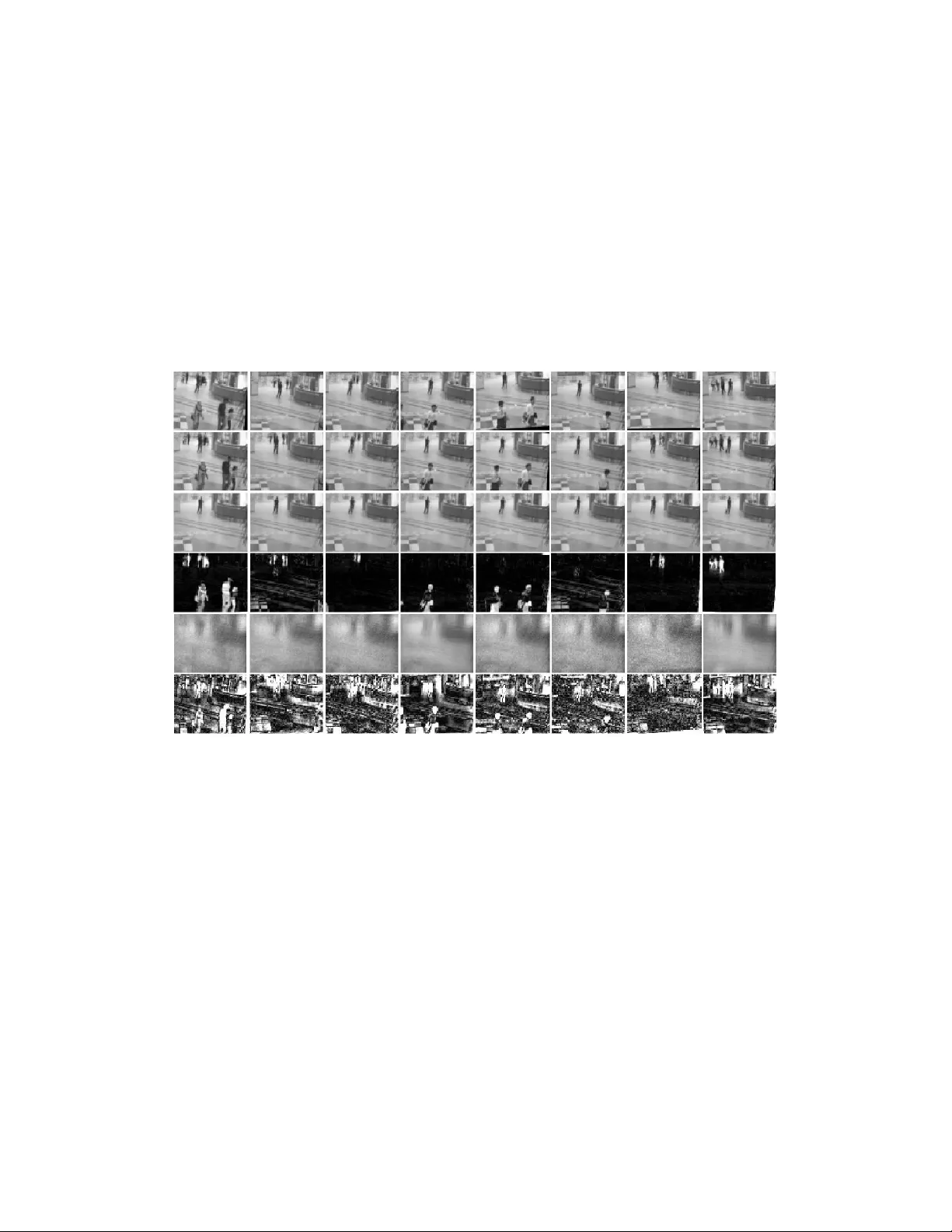

Jun He (a,∗), Dejiao Zhang (a), Laura Balzano (b), Tao Tao (a)

(a) School of Electronic and Information Engineering, Nanjing University of Information Science and Technology, Nanjing, 210044, China
(b) Department of Electrical Engineering and Computer Science, University of Michigan, Ann Arbor, USA

Abstract

Robust high-dimensional data processing has witnessed an exciting development in recent years. Theoretical results have shown that it is possible using convex programming to optimize data fit to a low-rank component plus a sparse outlier component. This problem is also known as Robust PCA, and it has found application in many areas of computer vision. In image and video processing and face recognition, the opportunity to process massive image databases is emerging as people upload photo and video data online in unprecedented volumes. However, data quality and consistency are not controlled in any way, and the massiveness of the data poses a serious computational challenge. In this paper we present t-GRASTA, or "Transformed GRASTA (Grassmannian Robust Adaptive Subspace Tracking Algorithm)". t-GRASTA iteratively performs incremental gradient descent constrained to the Grassmann manifold of subspaces in order to simultaneously estimate three components of a decomposition of a collection of images: a low-rank subspace, a sparse part of occlusions and foreground objects, and a transformation such as rotation or translation of the image. We show that t-GRASTA is 4× faster than state-of-the-art algorithms, has half the memory requirement, and can achieve alignment for face images as well as jittered camera surveillance images.

∗ Corresponding author. Tel. +86 13913873052
Email addresses: jhe@nuist.edu.cn (Jun He), dejiaozhang@gmail.com (Dejiao Zhang), girasole@umich.edu (Laura Balzano)
URL: http://sites.google.com/site/hejunzz/ (Jun He)

Preprint submitted to Image and Vision Computing, November 27, 2024

Keywords: Robust subspace learning, Grassmannian optimization, Image alignment, ADMM (Alternating Direction Method of Multipliers)

1. INTRODUCTION

With the explosion of image and video capture, both for surveillance and personal enjoyment, and the ease of putting these data online, we are seeing photo databases grow at unprecedented rates. For example, in July 2010 Facebook had 100 million photo uploads per day [1], and Instagram had a database of 400 million photos as of the end of 2011, with 60 uploads per second [2]; since then both of these databases have certainly grown immensely. In 2010 there were an estimated minimum of 10,000 surveillance cameras in the city of Chicago, and in 2002 an estimated 500,000 in London [3, 4]. These enormous collections pose both an opportunity and a challenge for image processing and face recognition. The opportunity is that with so much data, it should be possible to assist users in tagging photos, searching the image database, and detecting unusual activity or anomalies. The challenge is that the data are not controlled in any way to ensure quality and consistency across photos, and the massiveness of the data poses a serious computational challenge.

In video surveillance, many recently proposed algorithms model the foreground and background separation problem as one of "Robust PCA": decomposing the scene as the sum of a low-rank matrix of background, which represents the global appearance and illumination of the scene, and a sparse matrix of moving foreground objects [5, 6, 7, 8, 9]. These popular algorithms and models work very well for a stationary camera.
However, in the case of camera jitter, the background is no longer low-rank, and this is problematic for Robust PCA methods [10, 11, 12]. Robustly and efficiently detecting moving objects from an unstable camera is a challenging problem, since we need to accurately estimate both the background and the transformation of each frame. Fig. 1 shows that for a video sequence generated by a simulated unstable camera, GRASTA [13, 6] (Grassmannian Robust Adaptive Subspace Tracking Algorithm) fails to do the separation, but the approach we propose here, t-GRASTA, can successfully separate the background and moving objects despite camera jitter.

Figure 1: Video background and foreground separation by t-GRASTA despite camera jitter. 1st row: misaligned video frames generated by simulating camera jitter; 2nd row: images aligned by t-GRASTA; 3rd row: background recovered by t-GRASTA; 4th row: foreground separated by t-GRASTA; 5th row: background recovered by GRASTA; 6th row: foreground separated by GRASTA.

Further recent work has extended the Robust PCA model to the "Transformed Low-Rank + Sparse" model for face images with occlusions that have come under transformations such as translations and rotations [14, 15, 16, 17]. Without the transformations, this can be posed as a convex optimization problem, and therefore convex programming methods can be used to tackle it. In RASL [15] (Robust Alignment by Sparse and Low-Rank decomposition), the authors posed the problem with transformations as well; though it is no longer convex, it can be linearized in each iteration and proven to reach a local minimum. Though the convex programming methods used in [15] are polynomial in the size of the problem, that complexity can still be too demanding for very large databases of images.
We propose Transformed GRASTA, or t-GRASTA for short, to tackle this optimization with an incremental or online optimization technique. The benefit of this approach is threefold. First, it improves the speed of image alignment in both batch mode and online mode, as we show in Section 3. Second, the memory requirement is small, which makes alignment for very large databases realistic, since t-GRASTA only needs to maintain low-rank subspaces throughout the alignment process. Finally, the proposed online version of t-GRASTA allows for alignment and occlusion removal on images as they are uploaded to the database, which is especially useful in video processing scenarios.

1.1. Robust Image Alignment

The problem of robust image alignment arises regularly in real data, as large illumination variations and gross pixel corruptions or partial occlusions, such as sunglasses or a scarf on a human subject, often occur. The classic batch image alignment approaches, such as congealing [18, 19] or least squares congealing algorithms [20, 21], cannot simultaneously handle such severe conditions, causing the alignment task to fail.

With the breakthrough of convex relaxation theory applied to decomposing matrices into a sum of low-rank and sparse matrices [22, 5], the recently proposed algorithm "Robust Alignment by Sparse and Low-rank decomposition," or RASL [15], poses the robust image alignment problem as a transformed version of Robust PCA. The transformed batch of images can be decomposed as the sum of a low-rank matrix of recovered aligned images and a sparse matrix of errors. RASL seeks the optimal domain transformations while trying to minimize the rank of the matrix of the vectorized and stacked aligned images and while keeping the gross errors sparse.
While the rank minimization and $\ell_0$ minimization can be relaxed to their convex surrogates, namely the nuclear norm $\|\cdot\|_*$ and the $\ell_1$ norm $\|\cdot\|_1$, the relaxed problem (1) is still highly nonlinear due to the complicated domain transformation:

$$\min_{A,E,\tau}\ \|A\|_* + \lambda\|E\|_1 \quad \text{s.t.} \quad D\circ\tau = A + E. \tag{1}$$

Here, $D \in \mathbb{R}^{n\times N}$ represents the data ($n$ pixels for each of $N$ images), $A \in \mathbb{R}^{n\times N}$ is the low-rank component, $E \in \mathbb{R}^{n\times N}$ is the sparse additive component, and $\tau$ are the transformations. RASL proposes to tackle this difficult optimization problem by iteratively locally linearizing the nonlinear image transformation, $D\circ(\tau+\Delta\tau) \approx D\circ\tau + \sum_{i=1}^{N} J_i\,\Delta\tau_i\,\epsilon_i^T$, where $J_i$ is the Jacobian of image $i$ with respect to transformation $i$ and $\{\epsilon_i\}$ is the standard basis of $\mathbb{R}^N$; in each iteration the linearized problem is then convex. The authors have shown that RASL works well for batch aligning linearly correlated images despite large illumination variations and occlusions.

In order to improve the scalability of robust image alignment for massive image datasets, [23] proposes an efficient ALM-based (Augmented Lagrange Multiplier-based) iterative convex optimization algorithm, ORIA (Online Robust Image Alignment), for online alignment of the input images. Though the proposed approach can scale to large image datasets, it requires the subspace of the aligned images as a prior, and for this it uses RASL to train the initial aligned subspace. Once the input images can no longer be well aligned by the current subspace, the authors use a heuristic method to update the basis. In contrast, with t-GRASTA we include the subspace in the cost function and update the subspace using a geodesic gradient step on the Grassmannian, as in [6, 24]. We discuss this in more detail in the next section.

1.2. Online Robust Subspace Learning

Subspace learning has been an important area of signal processing for a few decades.
There are many applications in which one must track signal and noise subspaces, from computer vision to communications and radar, and a survey of the related work can be found in [25, 26]. The GROUSE algorithm, or "Grassmannian Rank-One Update Subspace Estimation," is an online subspace estimation algorithm that can track changing subspaces in the presence of Gaussian noise and missing entries [24]. GROUSE was developed as an online variant of low-rank matrix completion algorithms. It uses incremental gradient methods, which have been receiving extensive attention in the optimization community [27]. However, GROUSE is not robust to gross outliers; the follow-up algorithm GRASTA [13, 6] can estimate a changing low-rank subspace as well as identify and subtract outliers. Still, as we showed in Fig. 1, even GRASTA cannot handle camera jitter. Our algorithm includes the estimation of transformations in order to align frames first before separating foreground and background.

2. ROBUST IMAGE ALIGNMENT VIA ITERATIVE ONLINE SUBSPACE LEARNING

2.1. Model

2.1.1. Batch mode

In order to robustly align the set of linearly correlated images despite sparse outliers, we consider the following matrix factorization model, where the low-rank matrix $U$ has orthonormal columns that span the low-dimensional subspace of the well-aligned images:

$$\min_{U,W,E,\tau}\ \|E\|_1 \quad \text{s.t.} \quad D\circ\tau = UW + E, \quad U \in \mathcal{G}(d,n). \tag{2}$$

We have replaced the variable $A$ with the product of two smaller matrices $UW$ (with $W \in \mathbb{R}^{d\times N}$), and the orthonormal columns of $U \in \mathbb{R}^{n\times d}$ span the low-rank subspace of the images. The set of all subspaces of $\mathbb{R}^n$ of fixed dimension $d$ is called the Grassmannian, which is a compact Riemannian manifold and is denoted by $\mathcal{G}(d,n)$. In this optimization model, $U$ is constrained to the Grassmannian $\mathcal{G}(d,n)$.
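Because model (2) only constrains the span of $U$, an $n\times d$ matrix with orthonormal columns is just one representative of a point on $\mathcal{G}(d,n)$: rotating the basis by any $d\times d$ orthogonal $Q$ (and $W$ by $Q^T$) leaves both the subspace and the product $UW$ unchanged. The numpy sketch below checks this numerically; the sizes, the seed, and the helper names `random_orthonormal` and `projector` are arbitrary choices for illustration, not part of t-GRASTA.

```python
import numpy as np


def random_orthonormal(n, d, rng):
    """Hypothetical helper: an n x d matrix with orthonormal columns via reduced QR."""
    q, _ = np.linalg.qr(rng.standard_normal((n, d)))
    return q


def projector(U):
    """Orthogonal projector U U^T onto span(U); it depends only on the subspace."""
    return U @ U.T


rng = np.random.default_rng(0)
n, d, N = 50, 5, 8                    # illustrative sizes only
U = random_orthonormal(n, d, rng)     # one representative of a point on G(d, n)
Q = random_orthonormal(d, d, rng)     # a random d x d orthogonal matrix
V = U @ Q                             # a different basis for the same subspace
W = rng.standard_normal((d, N))       # coefficients, as in D o tau = U W + E

print(np.allclose(U.T @ U, np.eye(d)))          # True: orthonormal columns
print(np.allclose(projector(U), projector(V)))  # True: same subspace
print(np.allclose(U @ W, V @ (Q.T @ W)))        # True: same low-rank product
```

This is why the updates in Section 2.3 are phrased as steps on the Grassmannian rather than on individual entries of $U$.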
Though problem (2) cannot be solved directly [15] due to the nonlinearity of the image transformation, if the misalignments are not too large we can locally linearize the image transformation, $D\circ(\tau+\Delta\tau) \approx D\circ\tau + \sum_{i=1}^{N} J_i\,\Delta\tau_i\,\epsilon_i^T$, and the iterative model (3) works well as a practical approach:

$$\min_{U^k,W,E,\Delta\tau}\ \|E\|_1 \quad \text{s.t.} \quad D\circ\tau^k + \sum_{i=1}^{N} J_i^k\,\Delta\tau_i\,\epsilon_i^T = U^k W + E, \quad U^k \in \mathcal{G}(d^k,n). \tag{3}$$

At algorithm iteration $k$, $\tau^k \doteq [\tau_1^k \mid \cdots \mid \tau_N^k]$ are the current estimated transformations, $J_i^k$ is the Jacobian of the $i$-th image with respect to the transformation $\tau_i^k$, and $\{\epsilon_i\}$ denotes the standard basis for $\mathbb{R}^N$. Note that at different iterations the subspace may have different dimensions, i.e., $U^k$ is constrained to a different Grassmannian $\mathcal{G}(d^k,n)$.

At each iteration of the iterative model (3), we treat the optimization problem as a subspace learning problem. That is, our goal is to robustly estimate the low-dimensional subspace $U^k$ that best represents the locally transformed images $D\circ\tau^k + \sum_{i=1}^{N} J_i^k\,\Delta\tau_i\,\epsilon_i^T$ despite sparse outliers $E$. In order to solve this subspace learning problem efficiently in both computation and memory, we propose to learn $U^k$ at each iteration $k$ of model (3) via the online robust subspace learning approach [6].

2.1.2. Online mode

In order to perform online video processing tasks, for example video stabilization, it is desirable to design an efficient approach that can handle image misalignment frame by frame. As in the previous discussion regarding batch mode processing, for each video frame $I$ we may model the $\ell_1$ minimization problem as follows:

$$\min_{U,w,e,\tau}\ \|e\|_1 \quad \text{s.t.} \quad I\circ\tau = Uw + e, \quad U \in \mathcal{G}(d,n). \tag{4}$$

Note that with the constraint $I\circ\tau = Uw + e$ in the above minimization problem, we suppose that for each frame the transformed image is well aligned to the low-rank subspace $U$.
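The local linearization behind model (3) is, column by column, a first-order Taylor expansion of each warped image in its transformation parameters. The numpy sketch below checks this on a toy case, assuming a smooth 1-D profile in place of an image and a single translation parameter in place of $\tau$; the central finite-difference Jacobian is one common way to approximate $J_i$ and is not necessarily how the authors compute it.

```python
import numpy as np

# Toy stand-ins (assumptions for illustration): a smooth 1-D profile plays the
# role of an image and a single translation parameter plays the role of tau.
x = np.linspace(-5.0, 5.0, 201)  # "pixel" grid, n = 201


def warp(tau):
    """I o tau: the profile exp(-s^2) sampled at the translated positions x + tau."""
    return np.exp(-(x + tau) ** 2)


def jacobian(tau, h=1e-5):
    """Central finite-difference estimate of d(I o zeta)/d zeta at zeta = tau, shape (n, 1)."""
    return ((warp(tau + h) - warp(tau - h)) / (2.0 * h))[:, None]


tau, dtau = 0.3, np.array([0.05])
exact = warp(tau + dtau[0])                # I o (tau + dtau)
linear = warp(tau) + jacobian(tau) @ dtau  # I o tau + J dtau
err_zeroth = np.linalg.norm(exact - warp(tau))
err_first = np.linalg.norm(exact - linear)
print(err_first < 0.1 * err_zeroth)        # True: the first-order model is far closer
```

The first-order error scales like $\Delta\tau^2$ while the zeroth-order error scales like $\Delta\tau$, which is why the linearized model is only trusted for small misalignments and is re-linearized at every iteration.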
However, due to the nonlinear geometric transform $I\circ\tau$, directly exploiting online subspace learning techniques [24, 6] is not possible. Here we approach this as a manifold learning problem, supposing that the low-dimensional image subspace under nonlinear transformations forms a nonlinear manifold. We propose to learn the manifold approximately using a union of subspaces model $U^\ell$, $\ell = 1,\dots,L$. The basic idea is illustrated in Fig. 2, and the locally linearized model for the nonlinear problem (4) is as follows:

$$\min_{w,e,\Delta\tau}\ \|e\|_1 \quad \text{s.t.} \quad I\circ\tau^\ell + J^\ell\Delta\tau = U^\ell w + e, \quad U^\ell \in \mathcal{G}(d^\ell,n). \tag{5}$$

Intuitively, from Fig. 2, it is reasonable to expect the initial misaligned image sequence to be high rank; then, after iteratively approximating the nonlinear transform with a locally linear approximation, the rank of the new subspaces $U^\ell$, $\ell = 1,\dots,L$, should decrease as the images become more and more aligned. Then for each misaligned image $I$ and the unknown transformation $\tau$, we iteratively update the union of subspaces $U^\ell$, $\ell = 1,\dots,L$, and estimate the transformation $\tau$. Details of the online mode of t-GRASTA are discussed in Section 2.4.2.

Figure 2: The illustration of iteratively approximating the nonlinear image manifold using a union of subspaces. (Diagram: misaligned images $I_1, I_2, I_3, \dots, I_N$, their transformed versions $I_1\circ\tau_1, \dots, I_N\circ\tau_N$, and the successive subspaces $U^1, U^2, \dots, U^L$.)

The use of a union of subspaces $U^\ell$, $\ell = 1,\dots,L$, to approximate the nonlinear manifold is a crucial innovation for this fully online model. Though we use the symbols $U^k$ and $U^\ell$ in both the batch mode and the online mode, they have two different interpretations. For batch mode, $U^k$ is the iteratively learned aligned subspace in each iteration, while for online mode, $U^\ell$, $\ell = 1,\dots,L$, is a collection of subspaces that are used for approximating the nonlinear transform, and they are updated iteratively for each video frame.

2.2. ADMM Solver for the Locally Linearized Problem

Whether operating in batch mode or online mode, the key problem is how to quantify the subspace error robustly for the locally linearized problem. Considering batch mode, at iteration $k$, given the $i$-th image $I_i$, its estimated transformation $\tau_i^k$, the Jacobian $J_i^k$, and the current estimate $U_t^k$, we use the $\ell_1$ norm as follows:

$$\mathcal{F}(S;t,k) = \min_{w,\Delta\tau}\ \big\|U_t^k w - (I_i\circ\tau_i^k + J_i^k\Delta\tau)\big\|_1. \tag{6}$$

With $U_t^k$ known (or estimated, but fixed), this $\ell_1$ minimization problem is a variation of the least absolute deviations problem, which can be solved efficiently by ADMM (Alternating Direction Method of Multipliers) [28]. We rewrite the right-hand side of (6) as an equivalent constrained problem by introducing a sparse outlier vector $e$:

$$\min_{w,e,\Delta\tau}\ \|e\|_1 \quad \text{s.t.} \quad I_i\circ\tau_i^k + J_i^k\Delta\tau = U_t^k w + e. \tag{7}$$

The augmented Lagrangian of problem (7) is

$$\mathcal{L}(U_t^k, w, e, \Delta\tau, \lambda) = \|e\|_1 + \lambda^T h(w,e,\Delta\tau) + \frac{\mu}{2}\|h(w,e,\Delta\tau)\|_2^2, \tag{8}$$

where $h(w,e,\Delta\tau) = U_t^k w + e - I_i\circ\tau_i^k - J_i^k\Delta\tau$, and $\lambda \in \mathbb{R}^n$ is the Lagrange multiplier or dual vector.
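A minimal numpy sketch of the ADMM iteration for problem (7), written to mirror the closed-form updates listed in (9) and Algorithm 2: minimize (8) over $\Delta\tau$, then $w$, then $e$, take a dual step on $\lambda$, and increase $\mu$. The function and variable names are illustrative, `np.linalg.pinv` stands in for $(U^TU)^{-1}U^T$ and $(J^TJ)^{-1}J^T$ (they coincide when $U$ and $J$ have full column rank), and the random inputs only exercise the code; this is not the authors' implementation.

```python
import numpy as np


def soft_threshold(v, thresh):
    """Elementwise soft thresholding S_thresh(v) = sign(v) * max(|v| - thresh, 0)."""
    return np.sign(v) * np.maximum(np.abs(v) - thresh, 0.0)


def admm_l1_fit(U, J, b, rho=2.0, tol=1e-7, max_iter=200):
    """Sketch of ADMM for  min ||e||_1  s.t.  b + J dtau = U w + e  (problem (7)).

    b stands in for the warped, normalized image I_i o tau_i^k (length n),
    U is n x d with orthonormal columns, J is the n x p Jacobian.
    Returns (w, e, dtau, lam, mu), with mu after its final increase.
    """
    n, d = U.shape
    p = J.shape[1]
    w, e, dtau, lam = np.zeros(d), np.zeros(n), np.zeros(p), np.zeros(n)
    mu = 1.0
    P = np.linalg.pinv(U)  # (U^T U)^{-1} U^T when U has full column rank
    F = np.linalg.pinv(J)  # (J^T J)^{-1} J^T when J has full column rank
    for _ in range(max_iter):
        dtau = F @ (U @ w + e - b + lam / mu)
        w = P @ (b + J @ dtau - e - lam / mu)
        e = soft_threshold(b + J @ dtau - U @ w - lam / mu, 1.0 / mu)
        h = U @ w + e - b - J @ dtau  # constraint residual h(w, e, dtau)
        lam = lam + mu * h            # dual update
        mu = rho * mu                 # increasing penalty sequence
        if np.linalg.norm(h) <= tol:
            break
    return w, e, dtau, lam, mu


# Toy inputs that only exercise the code (illustrative sizes, random data).
rng = np.random.default_rng(0)
n, d, p = 100, 5, 3
U, _ = np.linalg.qr(rng.standard_normal((n, d)))
J = rng.standard_normal((n, p))
b = rng.standard_normal(n)
b /= np.linalg.norm(b)
w, e, dtau, lam, mu = admm_l1_fit(U, J, b)
print(np.linalg.norm(U @ w + e - b - J @ dtau) <= 1e-7)  # True: constraint met
print(np.max(np.abs(lam)) <= 1.0 + 1e-6)                  # True: |lambda_j| <= 1
```

Soft thresholding keeps every entry of $\lambda$ in $[-1, 1]$ after each dual step, so the residual satisfies $\|h\|_2 = \|\lambda^{p+1}-\lambda^p\|_2/\mu_p \le 2\sqrt{n}/\mu_p$; with $\rho > 1$ the penalty grows geometrically and the constraint is driven below the tolerance in a number of iterations that grows only logarithmically in $\sqrt{n}/tol$.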
Given the current estimated subspace $U_t^k$, transformation parameter $\tau_i^k$, and the Jacobian matrix $J_i^k$ of the $i$-th image $I_i$, the optimal $(w^*, e^*, \Delta\tau^*, \lambda^*)$ can be computed by the ADMM approach as follows:

$$\begin{aligned}
\Delta\tau^{p+1} &= \big((J_i^k)^T J_i^k\big)^{-1} (J_i^k)^T\Big(U_t^k w^p + e^p - I_i\circ\tau_i^k + \tfrac{1}{\mu_p}\lambda^p\Big)\\
w^{p+1} &= \big((U_t^k)^T U_t^k\big)^{-1} (U_t^k)^T\Big(I_i\circ\tau_i^k + J_i^k\Delta\tau^{p+1} - e^p - \tfrac{1}{\mu_p}\lambda^p\Big)\\
e^{p+1} &= \mathcal{S}_{1/\mu_p}\Big(I_i\circ\tau_i^k + J_i^k\Delta\tau^{p+1} - U_t^k w^{p+1} - \tfrac{1}{\mu_p}\lambda^p\Big)\\
\lambda^{p+1} &= \lambda^p + \mu_p\, h(w^{p+1}, e^{p+1}, \Delta\tau^{p+1})\\
\mu_{p+1} &= \rho\,\mu_p
\end{aligned} \tag{9}$$

where $\mathcal{S}_{1/\mu}$ is the elementwise soft thresholding operator [29], and $\rho > 1$ is the ADMM penalty constant that makes $\{\mu_p\}$ a monotonically increasing positive sequence. The iteration (9) converges to the optimal solution of problem (7) [30]. We summarize this ADMM solver as Algorithm 2 in Section 2.4.

2.3. Subspace Update

Whether identifying the best $U_*^k$ in the batch mode (3) or estimating the union of subspaces $U^\ell$, $\ell = 1,\dots,L$, in the online mode (5), optimizing the orthonormal matrix $U$ along the geodesic of the Grassmannian is our key technique. For clarity of exposition in this section, we drop the superscript $k$ or $\ell$ from $U$, as the core gradient step along the geodesic of the Grassmannian is the same for both batch mode and online mode. We seek a sequence $\{U_t\} \subset \mathcal{G}(d,n)$ such that $U_t \to U_*$ (as $t\to\infty$).

We now face the choice of an effective subspace loss function. Regarding $U$ as the variable, the loss function (6) is not differentiable everywhere. Therefore, we choose instead to use the augmented Lagrangian (8) as the subspace loss function once we have estimated $(w^*, e^*, \Delta\tau^*, \lambda^*)$ by ADMM (9) from the previous $U_t$ [13, 6]. In order to take a gradient step along the geodesic of the Grassmannian, according to [26], we first need to derive the gradient of the real-valued loss function (8), $\mathcal{L}: \mathcal{G}(d,n) \to \mathbb{R}$.
The gradient $\nabla\mathcal{L}$ can be determined from the derivative of $\mathcal{L}$ with respect to the components of $U$:

$$\frac{d\mathcal{L}}{dU} = \big(\lambda^* + \mu\, h(w^*, e^*, \Delta\tau^*)\big)\, w^{*T}. \tag{10}$$

Then the gradient is $\nabla\mathcal{L} = (I - UU^T)\frac{d\mathcal{L}}{dU}$ [26]. From Step 6 of Algorithm 1, we have $\nabla\mathcal{L} = \Gamma w^{*T}$ (see the definition of $\Gamma$ in Algorithm 1). It is easy to verify that $\nabla\mathcal{L}$ is rank one, since $\Gamma$ is an $n\times 1$ vector and $w^*$ is a $d\times 1$ weight vector. The following derivation of the geodesic gradient step is similar to GROUSE [24] and GRASTA [13, 6]; we rewrite the important steps here for completeness.

The sole nonzero singular value is $\sigma = \|\Gamma\|\|w^*\|$, and the corresponding left and right singular vectors are $\frac{\Gamma}{\|\Gamma\|}$ and $\frac{w^*}{\|w^*\|}$, respectively. We can then write the SVD of the gradient explicitly by adding an orthonormal set $x_2,\dots,x_d$ orthogonal to $\Gamma$ as left singular vectors and an orthonormal set $y_2,\dots,y_d$ orthogonal to $w^*$ as right singular vectors:

$$\nabla\mathcal{L} = \begin{bmatrix}\frac{\Gamma}{\|\Gamma\|} & x_2 & \cdots & x_d\end{bmatrix} \operatorname{diag}(\sigma, 0, \dots, 0) \begin{bmatrix}\frac{w^*}{\|w^*\|} & y_2 & \cdots & y_d\end{bmatrix}^T.$$

Finally, following Equation (2.65) in [26], a geodesic gradient step of length $\eta$ in the direction $-\nabla\mathcal{L}$ is given by

$$U(\eta) = U + \Big((\cos(\eta\sigma) - 1)\, U\frac{w^*}{\|w^*\|} - \sin(\eta\sigma)\frac{\Gamma}{\|\Gamma\|}\Big)\frac{w^{*T}}{\|w^*\|}. \tag{11}$$

2.4. Algorithms

2.4.1. Batch Mode

From the discussion of Sections 2.2 and 2.3, given the batch of unaligned images $D$, their estimated transformations $\tau^k$, and their Jacobians $J^k$ at iteration $k$, we can robustly identify the subspace $U_*^k$ by incrementally updating $U_t^k$ along the geodesic of the Grassmannian $\mathcal{G}(d^k,n)$ using (11). When $U_t^k \to U_*^k$ (as $t\to\infty$), the estimate of $\Delta\tau_i$ for each initially aligned image $I_i\circ\tau_i^k$ also approaches its optimal value $\Delta\tau_i^*$. Once the subspace $U^k$ is accurately learned, we update the estimate of the transformation for each image using $\tau_i^{k+1} = \tau_i^k + \Delta\tau_i^*$.
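Each inner iteration of the batch mode (and of the online modes) moves $U$ along the geodesic (11). The sketch below implements (11) in numpy and checks two properties that follow from the derivation: the updated matrix keeps orthonormal columns (because $U^T\Gamma = 0$), and for small $\eta$ the step moves along $-\nabla\mathcal{L} = -\Gamma w^{*T}$. The random `w` and `gamma1` are stand-ins for the ADMM outputs $w^*$ and $\lambda^* + \mu h(\cdot)$, the fixed $\eta$ is arbitrary, and the function name is ours.

```python
import numpy as np


def grassmann_geodesic_step(U, w, Gamma, eta):
    """One rank-one geodesic step (11) in the direction -Gamma w^T.

    Assumes U (n x d) has orthonormal columns and Gamma (length n) is already
    projected onto the orthogonal complement of span(U): Gamma = (I - U U^T) Gamma_1.
    """
    w_norm = np.linalg.norm(w)
    g_norm = np.linalg.norm(Gamma)
    sigma = g_norm * w_norm             # the only nonzero singular value of Gamma w^T
    if sigma == 0.0:
        return U.copy()                 # zero gradient: U is left unchanged
    w_hat, g_hat = w / w_norm, Gamma / g_norm
    direction = (np.cos(eta * sigma) - 1.0) * (U @ w_hat) - np.sin(eta * sigma) * g_hat
    return U + np.outer(direction, w_hat)


rng = np.random.default_rng(1)
n, d = 100, 5                           # illustrative sizes only
U, _ = np.linalg.qr(rng.standard_normal((n, d)))
w = rng.standard_normal(d)              # stands in for w*
gamma1 = rng.standard_normal(n)         # stands in for lambda* + mu h(w*, e*, dtau*)
Gamma = gamma1 - U @ (U.T @ gamma1)     # (I - U U^T) Gamma_1, as in Step 6
U_next = grassmann_geodesic_step(U, w, Gamma, eta=0.1)
print(np.allclose(U_next.T @ U_next, np.eye(d)))           # True: still orthonormal

fd = (grassmann_geodesic_step(U, w, Gamma, 1e-6) - U) / 1e-6
print(np.allclose(fd, -np.outer(Gamma, w), atol=1e-3))     # True: initial velocity is -grad L
```

Because the update is an explicit rank-one correction, each step costs $O(nd)$ operations and no re-orthonormalization is needed.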
Then in the next iteration, the new subspace $U^{k+1}$ can be learned from $D\circ\tau^{k+1}$, and the algorithm iterates until we reach the stopping criterion, e.g., $\frac{\|\Delta\tau\|_2}{\|\tau^k\|_2} < \varepsilon$ for a small tolerance $\varepsilon$, or until we reach the maximum iteration $K$.

We summarize our algorithms as follows. Algorithm 1 is the batch image alignment approach via iterative online robust subspace learning. For Step 7, there are many ways to pick the step size; for example, one may consider the diminishing and constant step sizes adopted in GROUSE [24], or the multi-level adaptive step size used for fast convergence in GRASTA [13]. Algorithm 2 is the ADMM solver for the locally linearized problem (7). In our extensive experiments, with the ADMM penalty parameter $\rho = 2$ and the tolerance $tol = 10^{-7}$, Algorithm 2 always converged in fewer than 20 iterations.

Algorithm 1 Transformed GRASTA - batch mode
Require: An initial $n\times d^0$ orthonormal matrix $U^0$. A sequence of unaligned images $I_i$ and the corresponding initial transformation parameters $\tau_i^0$, $i = 1,\dots,N$. The maximum iteration $K$.
Return: The estimated well-aligned subspace $U_*^k$ for the well-aligned images. The transformation parameters $\tau_i^k$ for each well-aligned image.
1: while not converged and $k < K$ do
2: Update the Jacobian matrix of each image: $J_i^k = \frac{\partial(I_i\circ\zeta)}{\partial\zeta}\big|_{\zeta=\tau_i^k}$ $(i = 1,\dots,N)$
3: Update the warped and normalized images: $I_i\circ\tau_i^k = \frac{\mathrm{vec}(I_i\circ\tau_i^k)}{\|\mathrm{vec}(I_i\circ\tau_i^k)\|_2}$ $(i = 1,\dots,N)$
4: for $j = 1,\dots,N,\dots$ until converged do
5: Estimate the weight vector $w_j^k$, the sparse outliers $e_j^k$, the locally linearized transformation parameters $\Delta\tau_j^k$, and the dual vector $\lambda_j^k$ via the ADMM Algorithm 2 from $I_j\circ\tau_j^k$, $J_j^k$, and the current estimated subspace $U_t^k$: $(w_j^k, e_j^k, \Delta\tau_j^k, \lambda_j^k) = \arg\min_{w,e,\Delta\tau,\lambda} \mathcal{L}(U_t^k, w, e, \Delta\tau, \lambda)$
6: Compute the gradient $\nabla\mathcal{L}$ as follows: $\Gamma_1 = \lambda_j^k + \mu\, h(w_j^k, e_j^k, \Delta\tau_j^k)$, $\Gamma = \big(I - U_t^k (U_t^k)^T\big)\Gamma_1$, $\nabla\mathcal{L} = \Gamma (w_j^k)^T$
7: Compute the step size $\eta_t$.
8: Update the subspace: $U_{t+1}^k = U_t^k + \Big((\cos(\eta_t\sigma) - 1)\, U_t^k\frac{w_j^k}{\|w_j^k\|} - \sin(\eta_t\sigma)\frac{\Gamma}{\|\Gamma\|}\Big)\frac{(w_j^k)^T}{\|w_j^k\|}$, where $\sigma = \|\Gamma\|\|w_j^k\|$.
9: end for
10: Update the transformation parameters: $\tau_i^{k+1} = \tau_i^k + \Delta\tau_i^k$ $(i = 1,\dots,N)$
11: end while

Algorithm 2 ADMM Solver for the Locally Linearized Problem (7)
Require: An $n\times d$ orthonormal matrix $U$, a warped and normalized image $I\circ\tau \in \mathbb{R}^n$, the corresponding Jacobian matrix $J$, and a structure OPTS which holds three parameters for ADMM: the ADMM penalty constant $\rho$, the tolerance $tol$, and the ADMM maximum iteration $K$.
Return: weight vector $w^* \in \mathbb{R}^d$; sparse outliers $e^* \in \mathbb{R}^n$; locally linearized transformation parameters $\Delta\tau^*$; and dual vector $\lambda^* \in \mathbb{R}^n$.
1: Initialize $w$, $e$, $\Delta\tau$, $\lambda$, and $\mu$: $e^1 = 0$, $w^1 = 0$, $\Delta\tau^1 = 0$, $\lambda^1 = 0$, $\mu = 1$
2: Cache $P = (U^TU)^{-1}U^T$ and $F = (J^TJ)^{-1}J^T$
3: for $k = 1 \to K$ do
4: Update $\Delta\tau$: $\Delta\tau^{k+1} = F\big(Uw^k + e^k - I\circ\tau + \frac{1}{\mu}\lambda^k\big)$
5: Update weights: $w^{k+1} = P\big(I\circ\tau + J\Delta\tau^{k+1} - e^k - \frac{1}{\mu}\lambda^k\big)$
6: Update sparse outliers: $e^{k+1} = \mathcal{S}_{1/\mu}\big(I\circ\tau + J\Delta\tau^{k+1} - Uw^{k+1} - \frac{1}{\mu}\lambda^k\big)$
7: Update dual: $\lambda^{k+1} = \lambda^k + \mu\, h(w^{k+1}, e^{k+1}, \Delta\tau^{k+1})$
8: Update $\mu$: $\mu = \rho\mu$
9: if $\|h(w^{k+1}, e^{k+1}, \Delta\tau^{k+1})\|_2 \le tol$ then
10: Converge and break the loop.
11: end if
12: end for
13: $w^* = w^{k+1}$, $e^* = e^{k+1}$, $\Delta\tau^* = \Delta\tau^{k+1}$, $\lambda^* = \lambda^{k+1}$

2.4.2. Online Mode

In Section 2.1.2, we proposed to tackle the difficult nonlinear online subspace learning problem by iteratively learning online a union of subspaces $U^\ell$, $\ell = 1,\dots,L$. For a sequence of video frames $I_i$, $i = 1,\dots,N$, the union of subspaces $U^\ell$ is updated iteratively as illustrated in Fig. 3. Specifically, at the $i$-th frame $I_i$, for the locally approximated subspace $U_i^1$ at the first iteration, given the initial roughly estimated transformation $\tau_i^0$, the ADMM solver (Algorithm 2) gives us the locally estimated $\Delta\tau_i^1$, and the updated subspace $U_{i+1}^1$ is obtained by taking a gradient step along the geodesic of the Grassmannian $\mathcal{G}(d^1,n)$ as discussed in Section 2.3. The transformation for the next iteration is updated by $\tau_i^1 = \tau_i^0 + \Delta\tau_i^1$. Then for the next locally approximated subspace $U_i^2$, we also estimate $\Delta\tau_i^2$ and update the subspace along the geodesic of the Grassmannian $\mathcal{G}(d^2,n)$ to $U_{i+1}^2$. Repeatedly, we update $U_i^\ell$ in the same way to get $U_{i+1}^\ell$ and the new transformation $\tau_i^\ell = \tau_i^{\ell-1} + \Delta\tau_i^\ell$. After completing the update for all $L$ subspaces, the union of subspaces $U_{i+1}^\ell$ $(\ell = 1,\dots,L)$ is used for approximating the nonlinear transform of the next video frame $I_{i+1}$. We summarize the above as Algorithm 3, and we call this approach the fully online mode of t-GRASTA.

Figure 3: The diagram of the fully online mode of t-GRASTA. (Diagram: for frame $i$, the transformation is refined as $\tau_i^0 \to \tau_i^1 \to \cdots \to \tau_i^L$ with $\tau_i^\ell = \tau_i^{\ell-1} + \Delta\tau_i^\ell$, while each subspace $U_i^\ell$ is updated to $U_{i+1}^\ell$ for use on frame $i+1$.)

Algorithm 3 Transformed GRASTA - Fully Online Mode
Require: The initial $L$ orthonormal matrices $U^\ell$ of size $n\times d^\ell$ spanning the corresponding subspaces $S^\ell$, $\ell = 1,\dots,L$. A sequence of unaligned images $I_i$ and the corresponding initial transformation parameters $\tau_i^0$, $i = 1,\dots,N$.
Return: The estimated iteratively approximated subspaces $U_i^\ell$, $\ell = 1,\dots,L$, after processing image $I_i$. The transformation parameters $\tau_i^L$ for each well-aligned image.
1: for unaligned image $I_i$, $i = 1,\dots,N$ do
2: for the iteratively approximated subspace $U^\ell$, $\ell = 1,\dots,L$ do
3: Update the Jacobian matrix of image $I_i$: $J_i^\ell = \frac{\partial(I_i\circ\zeta)}{\partial\zeta}\big|_{\zeta=\tau_i^{\ell-1}}$
4: Update the warped and normalized image: $I_i\circ\tau_i^{\ell-1} = \frac{\mathrm{vec}(I_i\circ\tau_i^{\ell-1})}{\|\mathrm{vec}(I_i\circ\tau_i^{\ell-1})\|_2}$
5: Estimate the weight vector $w_i^\ell$, the sparse outliers $e_i^\ell$, the locally linearized transformation parameters $\Delta\tau_i^\ell$, and the dual vector $\lambda_i^\ell$ via the ADMM Algorithm 2 from $I_i\circ\tau_i^{\ell-1}$, $J_i^\ell$, and the current estimated subspace $U_i^\ell$: $(w_i^\ell, e_i^\ell, \Delta\tau_i^\ell, \lambda_i^\ell) = \arg\min_{w,e,\Delta\tau,\lambda} \mathcal{L}(U_i^\ell, w, e, \Delta\tau, \lambda)$
6: Compute the gradient $\nabla\mathcal{L}$ as follows: $\Gamma_1 = \lambda_i^\ell + \mu\, h(w_i^\ell, e_i^\ell, \Delta\tau_i^\ell)$, $\Gamma = \big(I - U_i^\ell (U_i^\ell)^T\big)\Gamma_1$, $\nabla\mathcal{L} = \Gamma (w_i^\ell)^T$
7: Compute the step size $\eta_i^\ell$.
8: Update the subspace: $U_{i+1}^\ell = U_i^\ell + \Big((\cos(\eta_i^\ell\sigma) - 1)\, U_i^\ell\frac{w_i^\ell}{\|w_i^\ell\|} - \sin(\eta_i^\ell\sigma)\frac{\Gamma}{\|\Gamma\|}\Big)\frac{(w_i^\ell)^T}{\|w_i^\ell\|}$, where $\sigma = \|\Gamma\|\|w_i^\ell\|$.
9: Update the transformation parameters: $\tau_i^\ell = \tau_i^{\ell-1} + \Delta\tau_i^\ell$
10: end for
11: end for

2.4.3. Discussion of Online Image Alignment

If the subspace $U^k$ of the well-aligned images is known as a prior, for example if $U^k$ is trained by Algorithm 1 from a "well selected" dataset of one category, we can simply use $U^k$ to align the rest of the unaligned images of the same category. Here "well selected" means that the training dataset should cover enough of the global appearance of the object, such as different illuminations, which can be represented by the low-dimensional subspace structure. By category, we mean a particular object of interest or a particular background scene in the video surveillance data. For massive image processing tasks, it is easy to collect such good training datasets by simply randomly sampling a small fraction of the whole image set. Once $U^k$ is learned from the training set, we can use a variation of Algorithm 1 to align each unaligned image $I$ without updating the subspace, since we assume that the remaining images also lie in the trained subspace.
We call Algorithm 4 the trained online mode. However, we note that for a very large streaming dataset, such as is typical in real-time video processing, the trained online mode may be less well defined, as the subspace of the streaming video data may change over time. For this scenario, our fully online mode of t-GRASTA can gradually adapt to the changing subspace and then accurately estimate the transformation $\tau$.

2.5. Discussion of Memory Usage

We compare the memory usage of our fully online mode of t-GRASTA to that of RASL. RASL requires storage of $A$, $E$, a Lagrange multiplier matrix $Y$, the data $D$, and $D\circ\tau$, each of which requires storage of size $nN$. To compare fairly to t-GRASTA, which assumes a $d$-dimensional model, we suppose RASL uses a thin singular value decomposition of size $d$, which requires $nd + Nd + d^2$ memory elements. Finally, for the Jacobian per image RASL needs $nNp$, and for $\tau$ RASL needs $Np$, but we assume $p$ is a small constant independent of dimension and ignore it. Therefore RASL's total memory usage is $6nN + nd + Nd + d^2 + N$.

t-GRASTA must also store the Jacobian, $\tau$, the data, and the transformed data, using memory of size $3nN + N$. Otherwise, t-GRASTA needs to store the union of subspaces $U^\ell$, $\ell = 1,\dots,L$, i.e., $L$ matrices of total size $Lnd$ ($L \ll N$), and the vectors $e$, $\lambda$, $\Gamma$, and $w$, for $3n + d$ memory elements. Thus t-GRASTA's total memory is $3nN + Lnd + 3n + d + N$. For a problem size of 100 images, each with $100\times 100$ pixels, and assuming $d = 10$ and $L = 10$, t-GRASTA uses 66.1% of the memory of RASL. For 10000 megapixel images, t-GRASTA uses 50.1% of the memory of RASL. The ratio remains about one half from mid-range to large problem sizes.

Algorithm 4 Trained Online Mode of Image Alignment
Require: A well-trained $n\times d$ orthonormal matrix $U$. An unaligned image $I$ and the corresponding initial transformation parameters $\tau^0$. The maximum iteration $K$.
Return: The transformation parameters $\tau^k$ for the well-aligned image.
1: while not converged and $k < K$ do
2: Update the Jacobian matrix: $J^k = \frac{\partial(I\circ\zeta)}{\partial\zeta}\big|_{\zeta=\tau^k}$
3: Update the warped and normalized image: $I\circ\tau^k = \frac{\mathrm{vec}(I\circ\tau^k)}{\|\mathrm{vec}(I\circ\tau^k)\|_2}$
4: Estimate the weight vector $w^k$, the sparse outliers $e^k$, the locally linearized transformation parameters $\Delta\tau^k$, and the dual vector $\lambda^k$ via the ADMM Algorithm 2 from $I\circ\tau^k$, $J^k$, and the well-trained subspace $U$: $(w^k, e^k, \Delta\tau^k, \lambda^k) = \arg\min_{w,e,\Delta\tau,\lambda} \mathcal{L}(U, w, e, \Delta\tau, \lambda)$
5: Update the transformation parameters: $\tau^{k+1} = \tau^k + \Delta\tau^k$
6: end while

3. PERFORMANCE EVALUATION

In this section, we conduct comprehensive experiments on a variety of alignment tasks to verify the efficiency and superiority of our algorithm. We first demonstrate the ability of the proposed approach to cope with occlusion and illumination variation during the alignment process. After that, we further demonstrate the robustness and generality of our approach by testing it on handwritten digits and on face images taken from the Labeled Faces in the Wild database [31]. Finally, we apply our approach to dealing with video jitter and solving the interesting background and foreground separation problem.

3.1. Occlusion and illumination variation

We first test our approach on the dataset 'dummy' described in [15]. Here, we want to verify the ability of our approach to effectively align the images despite occlusion and illumination variation. The dataset contains 100 images of a dummy head taken under varying illumination and with artificially generated occlusions created by adding a square patch at a random location of the image. Fig. 4 shows 10 misaligned images of the dummy. We align these images by Algorithm 1 (the batch mode of t-GRASTA). The canonical frame is chosen to be $49\times 49$ pixels and the subspace dimension is set to 5.
For the dummy experiment and all remaining experiments, we set $d_k$ of Algorithm 1 to a fixed $d$ in every iteration for simplicity. The last three rows of Fig. 4 show the alignment results: our approach successfully aligns the misaligned images while simultaneously removing the occlusion.

Figure 4: The first row shows the original misaligned images with occlusions and illumination variation; the second row shows the images aligned by t-GRASTA; the third row shows the recovered aligned images without occlusion; and the bottom row shows the occlusion removed by our approach.

3.2. Robustness

To further demonstrate the robustness of our approach, we apply it to more realistic images taken from the Labeled Faces in the Wild (LFW) database [31]. LFW contains more severely misaligned images, since it also includes substantial variations in pose and expression in addition to illumination and occlusion, as can be seen in Fig. 5(c). We chose 16 subjects from LFW, each with 35 images. Each image is aligned to an $80 \times 60$ canonical frame using transformations $\tau$ from the group of affine transformations $G = \mathrm{Aff}(2)$, as in [15]; these include translations, rotations, and scale transformations. For each subject, we set the subspace dimension to 15 and use Algorithm 1 to align the images. We demonstrate the robustness of our approach by comparing the average face of each subject before and after alignment, shown in Fig. 5(a)-(b). The average faces after alignment are much clearer than those before alignment. Fig. 5(c)-(d) provides more detail, showing the unaligned and aligned images of John Ashcroft (marked by red boxes in Fig. 5(a)-(b)).

3.3. Generality

The previous experiments have demonstrated the effectiveness and robustness of t-GRASTA.
Here we show the generality of t-GRASTA by applying it to a different type of image: handwritten digits taken from the MNIST database. For this experiment, we again use Algorithm 1, aligning 100 images of a handwritten "3" to a $29 \times 29$ canonical frame. We use the Euclidean transformation group $G = E(2)$ and set the dimension of the subspace to 5. Fig. 6 shows that t-GRASTA successfully aligns the misaligned digits and learns the low-dimensional subspace, even though the original digits vary significantly. The outliers separated by t-GRASTA are generated by variations in the digits that are not consistent with the global appearance. The outliers in (d) would be even sparser if the subspace representation in (c) captured more of this variation; if desired, we could achieve this tradeoff by increasing the dimension of the subspace.

Figure 5: (a) Average of 16 misaligned subjects randomly selected from the LFW database; (b) average of each subject aligned by t-GRASTA; (c) initial images of John Ashcroft (marked by red boxes in (a) and (b)); (d) images aligned by t-GRASTA.

Figure 6: (a) 100 misaligned digits; (b) digits aligned by t-GRASTA; (c) subspace representation of the corresponding digits; (d) outliers.

3.4. Video Jitter

In this section, we apply t-GRASTA to separation problems made difficult by video jitter. We apply both the fully online mode (Algorithm 3) and the trained online mode (Algorithm 4) to different datasets, and we show the advantages of t-GRASTA in both speed and memory requirements.

3.4.1. Hall

Here we apply t-GRASTA to the task of separating moving objects from the static background in video footage recorded by an unstable camera. We note that in [6], the authors simulate a virtual panning camera to show that GRASTA can quickly track sudden changes in the background subspace caused by a moving camera.
Their low-rank subspace tracking model is well-defined, because the camera is stationary again after panning, and thus the recorded video frames are accurately pixelwise aligned. For an unstable camera, however, the recorded frames are no longer aligned, and the background cannot be well represented by a low-rank subspace unless the jittered frames are first aligned. To show that t-GRASTA can tackle this separation task, we consider a highly jittered video sequence generated by a simulated unstable camera: we randomly translate the original, well-aligned video frames along the x- and y-axes and rotate them in the plane.

In this experiment, we compare t-GRASTA with RASL and GRASTA. We use the first 200 frames of the "Hall" dataset¹, each $144 \times 176$ pixels. We first perturb each frame artificially to simulate camera jitter. The rotation of each frame is random, uniformly distributed within $[-\theta_0/2, \theta_0/2]$, and the x- and y-translations are limited to $[-x_0/2, x_0/2]$ and $[-y_0/2, y_0/2]$. In this example, we set the perturbation size parameters $[x_0, y_0, \theta_0]$ to $[20, 20, 10^\circ]$. Unlike [23], when comparing with RASL we simply run RASL in its original batch mode, without forcing it into an online algorithm framework. The task we give to RASL and t-GRASTA is to align each frame to a $62 \times 75$ canonical frame, again using $G = \mathrm{Aff}(2)$. The dimension of the subspace in t-GRASTA is set to 10. We first randomly select 30 of the 200 frames to train the subspace with Algorithm 1, and then align the rest using the trained online mode. The visual comparison between RASL and t-GRASTA is shown in Fig. 7. Table 1 gives the numerical comparison of RASL and t-GRASTA; we ran each algorithm 10 times to obtain the statistics. From Table 1 and Fig. 7, we can see that the two algorithms achieve very similar results, but t-GRASTA runs much faster than RASL: on a PC with an Intel P9300 2.27 GHz CPU and 2 GB of RAM, the average time for aligning a newly arrived frame is 1.1 seconds, while RASL needs more than 800 seconds to align the whole batch of images, or 4 seconds per frame. Moreover, our approach is also superior to RASL in memory efficiency. These advantages become more dramatic as the size of the image database increases.

¹ Find these along with the other videos at http://perception.i2r.a-star.edu.sg/bk_model/bk_index.html.

Table 1: Statistics of errors at two pixels $P_1$ and $P_2$, selected from the original video frames and traced through the jitter simulation process to the RASL and t-GRASTA output frames. Max error and mean error are the distances from the estimated $P_1$ and $P_2$ to their statistical centers $E(P_1)$ and $E(P_2)$. The std columns give the standard deviation of each of the four coordinate values, $(X_1, Y_1)$ for $P_1$ and $(X_2, Y_2)$ for $P_2$, across all frames.

Method                  Max error   Mean error   X1 std   Y1 std   X2 std   Y2 std
Initial misalignment    11.24       5.07         3.35     3.01     3.34     4.17
RASL                    2.96        1.73         0.56     0.71     0.90     1.54
t-GRASTA                6.62        0.84         0.48     1.11     0.57     0.74

Figure 7: Comparison between t-GRASTA and RASL. (a) Average of the initial misaligned images; (b) average of the images aligned by t-GRASTA; (c) average of the background recovered by t-GRASTA; (d) average of the images aligned by RASL; (e) average of the background recovered by RASL.

To compare with GRASTA, we use the 200 perturbed images to recover the background and separate the moving objects with both algorithms; Fig. 8 illustrates the comparison. For both GRASTA and t-GRASTA, we set the subspace rank to 10 and first train the subspace on 30 randomly selected images. For t-GRASTA, we use the affine transformation group $G = \mathrm{Aff}(2)$. Fig. 8 shows that our approach successfully separates the foreground and the background while simultaneously aligning the perturbed images, whereas GRASTA fails to learn a proper subspace, so its separation of background and foreground is poor. Although GRASTA has been shown to track a dynamic subspace successfully, e.g., for a panning camera, the dynamics of an unstable camera are too fast and unpredictable for the GRASTA subspace tracking model to succeed in this context without pre-alignment of the video frames.

Figure 8: Video background and foreground separation with jittered video. 1st row: 8 misaligned video frames randomly selected from the artificially perturbed images; 2nd row: images aligned by t-GRASTA; 3rd row: background recovered by t-GRASTA; 4th row: foreground separated by t-GRASTA; 5th row: background recovered by GRASTA; 6th row: foreground separated by GRASTA.

3.4.2. Gore

In this example, we show the capability of t-GRASTA for video stabilization on the "Gore" dataset described in [15]. In [15], the original face images are obtained by a face detector, and the jitter is caused by the inherent imprecision of the detector. In contrast, for t-GRASTA we simply crop the face from each image with a constant $68 \times 44$ rectangle that has the same position parameters for all frames. In our case, therefore, the jitter is caused by the mismatch between the motion and pose variation of the target and the fixed cropping rectangle.

Figure 9: The first row shows the original misaligned images; the second row shows the images aligned by t-GRASTA; the third row shows the recovered aligned images without outliers; and the bottom row shows the outliers removed by our approach.

For this experiment, the dimension of the subspace is set to 10, and we again choose the affine transformation group $G = \mathrm{Aff}(2)$.
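All of the video experiments use the affine group $G = \mathrm{Aff}(2)$, and the simulated jitter of Section 3.4.1 is itself an in-plane rotation plus translation, i.e., an element of $E(2) \subset \mathrm{Aff}(2)$. The sketch below is illustrative only and is not the code used for the experiments: it draws one perturbation with the Hall parameters $[x_0, y_0, \theta_0] = [20, 20, 10^\circ]$ and writes it as a $3 \times 3$ homogeneous affine matrix. Two choices are our assumptions: the translations are drawn uniformly (the text only bounds their range), and the rotation is taken about a caller-supplied center such as the frame center. The helper names are hypothetical, and image resampling is omitted.

```python
import numpy as np

def sample_jitter(rng, x0=20.0, y0=20.0, theta0_deg=10.0):
    """Draw (theta, tx, ty): theta uniform in [-theta0/2, theta0/2] (returned in radians);
    tx, ty in [-x0/2, x0/2] and [-y0/2, y0/2] (uniform here by assumption)."""
    theta = np.deg2rad(rng.uniform(-theta0_deg / 2, theta0_deg / 2))
    tx = rng.uniform(-x0 / 2, x0 / 2)
    ty = rng.uniform(-y0 / 2, y0 / 2)
    return theta, tx, ty

def jitter_matrix(theta, tx, ty, center=(0.0, 0.0)):
    """3x3 homogeneous matrix for p' = R(theta) (p - c) + c + t, an element of E(2)."""
    c = np.asarray(center, dtype=float)
    R = np.array([[np.cos(theta), -np.sin(theta)],
                  [np.sin(theta),  np.cos(theta)]])
    T = np.eye(3)
    T[:2, :2] = R
    T[:2, 2] = c - R @ c + np.array([tx, ty])
    return T

def apply_affine(T, pts):
    """Map an (m, 2) array of (x, y) points through a 3x3 affine matrix T."""
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    return (homog @ T.T)[:, :2]

# Example: one jitter matrix per frame for 200 frames of 144 x 176 pixels,
# rotating about the frame center in (x, y) = (column, row) coordinates.
rng = np.random.default_rng(0)
center = (176 / 2, 144 / 2)
frames = [jitter_matrix(*sample_jitter(rng), center=center) for _ in range(200)]
```

Rotating about the frame center keeps the content roughly in place; with the default `center=(0, 0)` the frame would instead swing about its top-left corner.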
We first use Algorithm 1 to train an initial subspace on 20 images randomly selected from the whole set of 140 Gore images, and then use the fully online mode to align the rest of the images. Fig. 9 shows the results. t-GRASTA performed well on this dataset and was faster than RASL: on a PC with an Intel P9300 2.27 GHz CPU and 2 GB of RAM, t-GRASTA aligned these images at 5 frames per second, which is 5 times faster than RASL and 3 times faster than ORIA as described in [23].

Although t-GRASTA was not designed as a face detector, the experimental results suggest that it could be turned into a face detector, or more generally a target tracker, if the pose variation of the target is limited to a certain range (usually $45^\circ$). In this case, we can further improve the efficiency of t-GRASTA by choosing a tight frame for the canonical image.

3.4.3. Sidewalk

In the last experiment, we test t-GRASTA on frames misaligned by real camera jitter. Here we align all 1200 frames of the "Sidewalk" dataset² to $50 \times 78$ canonical frames, again using $G = \mathrm{Aff}(2)$ and subspace dimension 5. We use the first 20 frames to train the initial subspace with the batch mode (Algorithm 1), and then use the fully online mode to align the rest of the frames. Aligning all 1200 frames is a heavy task for RASL: on our PC with an Intel P9300 2.27 GHz CPU and 2 GB of RAM, it was necessary to divide the dataset into four parts of 300 frames each and run RASL separately on each part. RASL needed about 1000 seconds in total, or 1.2 frames per second, while t-GRASTA achieved more than 4 frames per second without partitioning the data. Compared to the trained online mode, the fully online mode can track changes of the subspace over time.
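The fully online mode follows a drifting subspace by taking small steps along the Grassmannian after each frame. As an illustration only, the sketch below implements the least-squares, GROUSE-style rank-one geodesic step of [24]; t-GRASTA's fully online mode instead drives the step with the $\ell_1$/ADMM gradient $\Gamma$, as in GRASTA [6], which we do not reproduce here. The helper name and the default step rule (the angle that rotates the basis just far enough to contain the new vector) are our assumptions. The example shows why such a step can rotate $U$ toward new data while keeping its columns orthonormal.

```python
import numpy as np

def geodesic_rank_one_step(U, v, angle=None):
    """One GROUSE-style geodesic step of an orthonormal basis U (n x d) toward v.

    angle=None uses arctan(||r|| / ||p||), which places v exactly in the new span.
    """
    w = U.T @ v                      # least-squares weights (U has orthonormal columns)
    p = U @ w                        # projection of v onto span(U)
    r = v - p                        # residual, orthogonal to span(U)
    p_norm, r_norm = np.linalg.norm(p), np.linalg.norm(r)
    if p_norm < 1e-12 or r_norm < 1e-12:
        return U.copy()              # v already in span(U), or orthogonal to it: no rank-one move
    t = np.arctan2(r_norm, p_norm) if angle is None else angle
    # Rotate the direction p/||p|| toward r/||r|| by angle t; directions orthogonal to w are untouched.
    direction = (np.cos(t) - 1.0) * p / p_norm + np.sin(t) * r / r_norm
    return U + np.outer(direction, w / np.linalg.norm(w))
```

The default full step makes the new span contain `v` exactly; smaller angles move only part of the way, which is the regime an online tracker would typically use so that a single frame cannot swing the subspace too far.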
Tracking changes of the subspace is an important asset of the fully online mode, especially for large streaming datasets containing considerable variation. We observe that the fully online mode usually needs no more than 20 frames to adapt to changes of the subspace, such as illumination changes or a dynamic background caused by the motion of the subspace. Moreover, if the changes are slow, e.g., natural illumination changes over the day or a slowly moving camera, then t-GRASTA needs no extra frames to track them; it incorporates such information at each iteration during the slowly changing process.

Figure 10: Video background and foreground separation with jittered video. 1st row: 8 original video frames misaligned by video jitter; 2nd row: images aligned by t-GRASTA; 3rd row: background recovered by t-GRASTA; 4th row: foreground separated by t-GRASTA.

² Find it, along with other datasets containing frames misaligned by real video jitter, at http://wordpress-jodoin.dmi.usherb.ca/dataset.

4. CONCLUSIONS AND FUTURE WORK

4.1. Conclusions

In this paper we have presented an iterative Grassmannian optimization approach that simultaneously identifies an optimal set of image domain transformations for image alignment and the low-rank subspace matching the aligned images, such that the vector of each transformed image can be decomposed as the sum of a low-rank part, the recovered aligned image, and a sparse part of errors. This approach can be regarded as an extension of both GRASTA and RASL: we extend GRASTA to transformations, and we extend RASL to the incremental gradient optimization framework. Our approach is faster than RASL and more robust for alignment than GRASTA. It learns the low-rank subspace from misaligned images effectively and computationally efficiently, which is very practical for computer vision applications.

4.2. Future Work

Though this work presents an approach for robust image alignment that is more computationally efficient than the state of the art, a foremost remaining problem is how to scale it to a very large streaming dataset such as is typical in real-time video processing. The fully online t-GRASTA algorithm presented here is a first step towards a truly large-scale real-time algorithm, but several practical implementation questions remain, including online parameter selection and cross-validation of error performance. Another question of interest is the estimation of $d_k$ for the subspace update. Though we fix the rank $d$ in this paper, estimating $d_k$ and switching between Grassmannians is a very interesting future direction.

While preparing the conference version of this work [32], we noticed an interesting alignment approach proposed in [33]. Though our approach and that of [33] are both obtained via optimization over a manifold, they perform alignment in very different scenarios. For example, the approach in [33] focuses on semantically meaningful videos or signals, and it can successfully align videos of the same object taken from different views; t-GRASTA processes a set of misaligned images, or the video of an unstable camera, to robustly identify the low-rank subspace, and then aligns these images according to that subspace. An intriguing future direction would be to merge these two approaches.

A final direction of future work is toward applications that require more aggressive background tracking than is possible with a GRASTA-type algorithm. For example, if a camera follows an object around different parts of a single scene, then even though the background may vary quickly from frame to frame, the camera will get multiple shots of different pieces of the background.
Therefore, it may still be possible to build a model for the entire background scene using low-dimensional modeling. Incorporating camera movement parameters and a dynamical model into GRASTA would be a natural way to solve this problem, merging classical adaptive filtering algorithms with modern manifold optimization.

5. ACKNOWLEDGEMENTS

The work of Jun He is supported by NSFC (61203273) and by the Collegiate Natural Science Fund of Jiangsu Province (11KJB510009). Laura Balzano would like to acknowledge 3M for generously supporting her Ph.D. studies.

References

[1] S. Odio, Making facebook photos better, accessed July 2010 at https://www.facebook.com/blog/blog.php?post=403838582130.
[2] Instagram, Year in review: 2011 in numbers, accessed January 2012 at http://blog.instagram.com/post/15086846976/year-in-review-2011-in-numbers.
[3] D. Babwin, Cameras make Chicago most closely watched U.S. city (April 6, 2010).
[4] M. McCahill, C. Norris, CCTV in London, Working Paper 6, Centre for Criminology and Criminal Justice, University of Hull, United Kingdom (June 2002).
[5] E. J. Candès, X. Li, Y. Ma, J. Wright, Robust principal component analysis?, J. ACM 58 (3) (2011) 11:1–11:37. doi:10.1145/1970392.1970395.
[6] J. He, L. Balzano, A. Szlam, Incremental gradient on the Grassmannian for online foreground and background separation in subsampled video, in: Computer Vision and Pattern Recognition (CVPR), 2012 IEEE Conference on, 2012, pp. 1568–1575. doi:10.1109/CVPR.2012.6247848.
[7] G. Mateos, G. Giannakis, Sparsity control for robust principal component analysis, in: Signals, Systems and Computers (ASILOMAR), 2010 Conference Record of the Forty Fourth Asilomar Conference on, 2010, pp. 1925–1929. doi:10.1109/ACSSC.2010.5757875.
[8] R. Sivalingam, A. D'Souza, M. Bazakos, R. Miezianko, V. Morellas, N. Papanikolopoulos, Dictionary learning for robust background modeling, in: Robotics and Automation (ICRA), 2011 IEEE International Conference on, 2011, pp. 4234–4239. doi:10.1109/ICRA.2011.5979981.
[9] F. De la Torre, M. Black, A framework for robust subspace learning, International Journal of Computer Vision 54 (1-3) (2003) 117–142. doi:10.1023/A:1023709501986.
[10] P. Jodoin, J. Konrad, V. Saligrama, V. Veilleux-Gaboury, Motion detection with an unstable camera, in: Image Processing, 2008. ICIP 2008. 15th IEEE International Conference on, 2008, pp. 229–232. doi:10.1109/ICIP.2008.4711733.
[11] G. Puglisi, S. Battiato, A robust image alignment algorithm for video stabilization purposes, Circuits and Systems for Video Technology, IEEE Transactions on 21 (10) (2011) 1390–1400. doi:10.1109/TCSVT.2011.2162689.
[12] K. Simonson, T. Ma, Robust real-time change detection in high jitter, Sandia Report SAND2009-5546 (2009) 1–41.
[13] J. He, L. Balzano, J. Lui, Online robust subspace tracking from partial information, arXiv preprint
[14] Y. Peng, A. Ganesh, J. Wright, W. Xu, Y. Ma, RASL: Robust alignment by sparse and low-rank decomposition for linearly correlated images, in: Computer Vision and Pattern Recognition (CVPR), 2010 IEEE Conference on, 2010, pp. 763–770. doi:10.1109/CVPR.2010.5540138.
[15] Y. Peng, A. Ganesh, J. Wright, W. Xu, Y. Ma, RASL: Robust alignment by sparse and low-rank decomposition for linearly correlated images, Pattern Analysis and Machine Intelligence, IEEE Transactions on 34 (11) (2012) 2233–2246. doi:10.1109/TPAMI.2011.282.
[16] Z. Zhang, A. Ganesh, X. Liang, Y. Ma, TILT: Transform invariant low-rank textures, International Journal of Computer Vision 99 (1) (2012) 1–24. doi:10.1007/s11263-012-0515-x.
[17] A. Wagner, J. Wright, A. Ganesh, Z. Zhou, H. Mobahi, Y. Ma, Toward a practical face recognition system: Robust alignment and illumination by sparse representation, Pattern Analysis and Machine Intelligence, IEEE Transactions on 34 (2) (2012) 372–386. doi:10.1109/TPAMI.2011.112.
[18] G. Huang, V. Jain, E. Learned-Miller, Unsupervised joint alignment of complex images, in: Computer Vision, 2007. ICCV 2007. IEEE 11th International Conference on, 2007, pp. 1–8. doi:10.1109/ICCV.2007.4408858.
[19] E. Learned-Miller, Data driven image models through continuous joint alignment, Pattern Analysis and Machine Intelligence, IEEE Transactions on 28 (2) (2006) 236–250. doi:10.1109/TPAMI.2006.34.
[20] M. Cox, S. Sridharan, S. Lucey, J. Cohn, Least squares congealing for unsupervised alignment of images, in: Computer Vision and Pattern Recognition, 2008. CVPR 2008. IEEE Conference on, 2008, pp. 1–8. doi:10.1109/CVPR.2008.4587573.
[21] M. Cox, S. Sridharan, S. Lucey, J. Cohn, Least-squares congealing for large numbers of images, in: Computer Vision, 2009 IEEE 12th International Conference on, 2009, pp. 1949–1956. doi:10.1109/ICCV.2009.5459430.
[22] V. Chandrasekaran, S. Sanghavi, P. Parrilo, A. Willsky, Rank-sparsity incoherence for matrix decomposition, SIAM Journal on Optimization 21 (2) (2011) 572–596. doi:10.1137/090761793.
[23] Y. Wu, B. Shen, H. Ling, Online robust image alignment via iterative convex optimization, in: Computer Vision and Pattern Recognition (CVPR), 2012 IEEE Conference on, 2012, pp. 1808–1814. doi:10.1109/CVPR.2012.6247878.
[24] L. Balzano, R. Nowak, B. Recht, Online identification and tracking of subspaces from highly incomplete information, in: Communication, Control, and Computing (Allerton), 2010 48th Annual Allerton Conference on, 2010, pp. 704–711. doi:10.1109/ALLERTON.2010.5706976.
[25] P. Comon, G. Golub, Tracking a few extreme singular values and vectors in signal processing, Proceedings of the IEEE 78 (8) (1990) 1327–1343. doi:10.1109/5.58320.
[26] A. Edelman, T. Arias, S. Smith, The geometry of algorithms with orthogonality constraints, SIAM Journal on Matrix Analysis and Applications 20 (2) (1998) 303–353. doi:10.1137/S0895479895290954.
[27] D. P. Bertsekas, Incremental gradient, subgradient, and proximal methods for convex optimization: A survey, Tech. Rep. LIDS-P-2848, MIT Lab for Information and Decision Systems (August 2010).
[28] S. Boyd, N. Parikh, E. Chu, B. Peleato, J. Eckstein, Distributed optimization and statistical learning via the alternating direction method of multipliers, Found. Trends Mach. Learn. 3 (1) (2011) 1–122. doi:10.1561/2200000016.
[29] S. Boyd, L. Vandenberghe, Convex Optimization, Cambridge University Press, 2004.
[30] D. P. Bertsekas, Nonlinear Programming, Athena Scientific, 2004.
[31] G. B. Huang, M. Mattar, T. Berg, E. Learned-Miller, Labeled Faces in the Wild: A Database for Studying Face Recognition in Unconstrained Environments, in: Proceedings of the European Conference on Computer Vision, Workshop on Faces in 'Real-Life' Images: Detection, Alignment, and Recognition, 2008.
[32] J. He, D. J. Zhang, L. Balzano, T. Tao, Iterative online subspace learning for robust image alignment, in: Face and Gesture Recognition (FG), 2013 IEEE 10th Conference on, 2013.
[33] R. Li, R. Chellappa, Spatiotemporal alignment of visual signals on a special manifold, Pattern Analysis and Machine Intelligence, IEEE Transactions on 35 (3) (2013) 697–715. doi:10.1109/TPAMI.2012.144.
