The ROMES method for statistical modeling of reduced-order-model error

This work presents a technique for statistically modeling errors introduced by reduced-order models. The method employs Gaussian-process regression to construct a mapping from a small number of computationally inexpensive "error indicators" to a distribution over the true error. The variance of this distribution can be interpreted as the (epistemic) uncertainty introduced by the reduced-order model. To model normed errors, the method employs existing rigorous error bounds and residual norms as indicators; numerical experiments show that the method leads to a near-optimal expected effectivity in contrast to typical error bounds. To model errors in general outputs, the method uses dual-weighted residuals (which are amenable to uncertainty control) as indicators. Experiments illustrate that correcting the reduced-order-model output with this surrogate can improve prediction accuracy by an order of magnitude; this contrasts with existing "multifidelity correction" approaches, which often fail for reduced-order models and suffer from the curse of dimensionality. The proposed error surrogates also lead to a notion of "probabilistic rigor", i.e., the surrogate bounds the error with specified probability.

💡 Research Summary

The paper introduces ROMES (Reduced‑Order‑Model Error Surrogates), a framework for statistically modeling the error introduced by reduced‑order models (ROMs). In many‑query scenarios such as Bayesian inference, uncertainty quantification, or real‑time control, high‑fidelity simulations are prohibitively expensive, prompting the use of ROMs that project the high‑dimensional state onto a low‑dimensional subspace. While ROMs provide substantial speed‑ups, they inevitably introduce a model‑reduction error that must be quantified to avoid bias in downstream analyses. Existing approaches rely either on rigorous error bounds, which are often overly conservative (effectivity factors far greater than one), or on multifidelity correction techniques that treat the error as a function of the original input parameters. The latter suffer from the curse of dimensionality and from the highly oscillatory nature of ROM error surfaces, especially when the input space is moderate‑ to high‑dimensional.

ROMES addresses these limitations by exploiting the fact that ROMs naturally generate inexpensive, physics‑based error indicators. Typical indicators include the norm of the residual evaluated on the reduced solution, dual‑weighted residuals (which incorporate output sensitivities), and any available rigorous error bounds. These indicators, denoted ρ(μ)∈ℝ^q, are strongly correlated with the true error δ(μ) (either the state‑space error ‖δu‖ or the output error δs). The authors propose to learn a stochastic mapping ρ→δ using Gaussian‑process regression (GPR). GPR provides both a predictive mean μ̂(ρ) (the expected error) and a predictive variance σ̂²(ρ) (the epistemic uncertainty due to model reduction).
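The indicator‑to‑error mapping described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the data are synthetic stand‑ins, the regression is done on log‑transformed quantities as an assumption, the function names `sq_exp_kernel` and `gp_fit_predict` are hypothetical, and the kernel hyperparameters are fixed by hand (in practice they would typically be fitted, for example by maximizing the marginal likelihood).

```python
import numpy as np

def sq_exp_kernel(a, b, length=1.0, signal_var=1.0):
    """Squared-exponential covariance between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return signal_var * np.exp(-0.5 * (d / length) ** 2)

def gp_fit_predict(x_train, y_train, x_test, noise_var=1e-2):
    """GP regression with a constant mean; returns predictive mean and variance."""
    y_mean = y_train.mean()  # centre the targets so a zero-mean GP is reasonable
    K = sq_exp_kernel(x_train, x_train) + noise_var * np.eye(len(x_train))
    L = np.linalg.cholesky(K)
    # alpha = K^{-1} (y - mean), computed via two triangular solves
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train - y_mean))
    K_s = sq_exp_kernel(x_train, x_test)
    mean = y_mean + K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    # predictive variance of a new noisy observation
    var = sq_exp_kernel(x_test, x_test).diagonal() - np.sum(v**2, axis=0) + noise_var
    return mean, var

rng = np.random.default_rng(0)
# Synthetic training data (NOT from the paper): log-indicator and log-error
log_rho = rng.uniform(-4.0, 0.0, size=40)
log_delta = log_rho - 0.5 + 0.1 * rng.standard_normal(40)

# Online phase: only the cheap indicator is needed at new parameter points
log_rho_new = np.array([-3.0, -2.0, -1.0])
mean, var = gp_fit_predict(log_rho, log_delta, log_rho_new)
```

Here `mean` plays the role of the expected (log) error and `var` the role of the epistemic uncertainty at each new indicator value. Working with a single scalar indicator keeps the regression one‑dimensional regardless of how many input parameters the underlying model has.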

The training phase requires a modest offline dataset consisting of triples (μ_i, ρ_i, δ_i) obtained by evaluating the high‑fidelity model at a limited set of parameter points. Once the GPR surrogate is trained, the online phase is extremely cheap: only the inexpensive indicator ρ(μ) needs to be computed from the ROM, after which the surrogate immediately yields a distribution for the error. The ROM output can then be corrected as s_corr(μ)=s_red(μ)+μ̂(ρ(μ)). Moreover, the predictive variance enables the construction of “probabilistic rigor” bounds: for a chosen confidence level α, one can define Δ_prob(μ)=μ̂(ρ(μ))+z_{α}·σ̂(ρ(μ)), where z_{α} is the standard‑normal quantile at level α. Under the Gaussian‑process model, this bound overestimates the true error with probability α, offering a statistically meaningful alternative to over‑conservative deterministic bounds.
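The correction and the probabilistic bound above amount to simple arithmetic once the surrogate's mean and standard deviation are available. The following sketch uses only the Python standard library; the numerical values and the helper names `corrected_output` and `probabilistic_bound` are hypothetical, and the sign convention assumes the error is defined as the high‑fidelity output minus the ROM output.

```python
from statistics import NormalDist

def corrected_output(s_red, err_mean):
    """ROM output plus the surrogate's expected error."""
    return s_red + err_mean

def probabilistic_bound(err_mean, err_std, alpha=0.95):
    """Upper bound exceeded by a Gaussian error with probability 1 - alpha."""
    z_alpha = NormalDist().inv_cdf(alpha)  # standard-normal quantile at level alpha
    return err_mean + z_alpha * err_std

# Hypothetical values for illustration only
s_red = 1.250                      # ROM output at some parameter point
err_mean, err_std = 0.030, 0.010   # surrogate mean and standard deviation

s_corr = corrected_output(s_red, err_mean)
bound = probabilistic_bound(err_mean, err_std, alpha=0.95)
```

With α = 0.95 the quantile is roughly 1.645, so the bound sits about 1.645 standard deviations above the predicted mean error. Raising α widens the bound; it does not change the corrected output.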

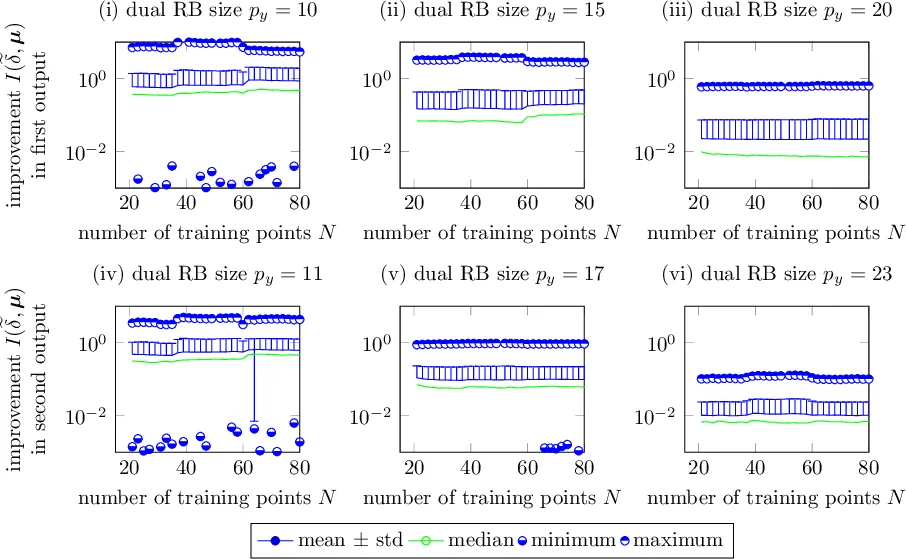

The paper validates ROMES on two benchmark problems. The first involves a parametrized Poisson equation in two dimensions with nine parameters. The authors show that the residual norm and the rigorous error bound are approximately linearly related to the true state error on a log-log scale, and that ROMES achieves an expected effectivity close to one (≈1.2) while dramatically reducing variance compared with traditional bounds. The second experiment focuses on output error correction. Using dual‑weighted residuals as indicators, ROMES outperforms a standard multifidelity correction approach, which fails due to the high‑dimensional, highly oscillatory error surface. After correction, the output error is reduced by roughly an order of magnitude.
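To make the effectivity comparison concrete: effectivity is commonly defined as the ratio of an error estimate to the true error, so a value near one means the estimate is tight and a value far above one means it is conservative. The sketch below computes it for made‑up numbers; none of the values come from the paper, and the helper name `effectivity` is hypothetical.

```python
from statistics import mean

def effectivity(estimates, true_errors):
    """Ratio of estimated to true error at each test point."""
    return [e / t for e, t in zip(estimates, true_errors)]

# Made-up values for illustration only (not results from the paper)
true_err = [1.0e-3, 2.0e-3, 4.0e-3]
rigorous_bound = [5.0e-2, 8.0e-2, 2.0e-1]   # always above the true error, but loose
surrogate_mean = [1.2e-3, 1.8e-3, 4.4e-3]   # may fall slightly below the true error

eff_bound = mean(effectivity(rigorous_bound, true_err))
eff_surrogate = mean(effectivity(surrogate_mean, true_err))
```

In this toy example the rigorous bound never underestimates the error but has a mean effectivity in the tens, while the surrogate's mean effectivity is close to one at the cost of occasionally underestimating. That trade‑off is what a probabilistic bound is meant to control.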

Key contributions of ROMES are:

- Indicator‑Driven Stochastic Modeling – By mapping cheap, physics‑based indicators to error via GPR, ROMES bypasses the need to learn the error directly as a function of the full input space.

- Joint Mean‑Variance Prediction – The surrogate provides both an expected error and a quantified epistemic uncertainty, essential for downstream Bayesian or risk‑aware analyses.

- Probabilistic Rigor – The method yields error bounds with a user‑specified confidence level, bridging the gap between deterministic rigorous bounds and purely statistical estimates.

- Non‑Intrusive Integration – ROMES requires only the ability to compute the chosen indicators from the ROM; it does not alter the ROM formulation or require intrusive modifications to the high‑fidelity solver.

- Scalability – Because the surrogate operates in the low‑dimensional indicator space, it remains tractable even when the original parameter space is moderately high dimensional.

In summary, ROMES offers a practical, statistically sound, and computationally efficient pathway to quantify and correct ROM errors, thereby extending the applicability of reduced‑order modeling to uncertainty‑critical engineering workflows.
