Deep Transform: Cocktail Party Source Separation via Complex Convolution in a Deep Neural Network

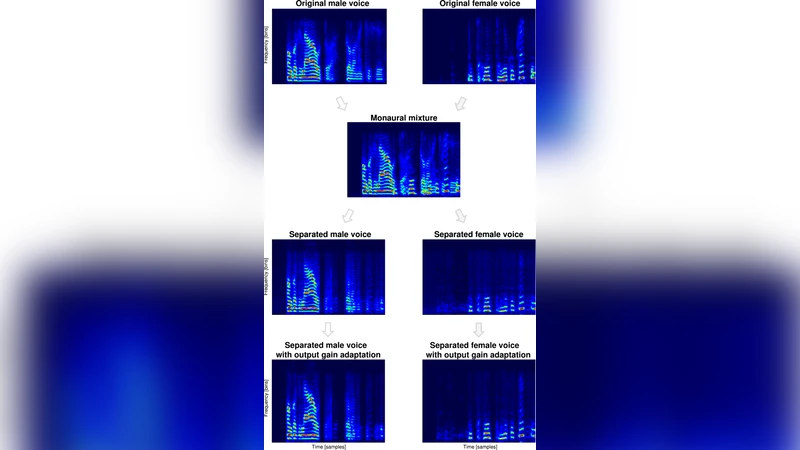

Convolutional deep neural networks (DNN) are state of the art in many engineering problems but have not yet addressed the issue of how to deal with complex spectrograms. Here, we use circular statistics to provide a convenient probabilistic estimate of spectrogram phase in a complex convolutional DNN. In a typical cocktail party source separation scenario, we trained a convolutional DNN to re-synthesize the complex spectrograms of two source speech signals given a complex spectrogram of the monaural mixture - a discriminative deep transform (DT). We then used this complex convolutional DT to obtain probabilistic estimates of the magnitude and phase components of the source spectrograms. Our separation results are on a par with equivalent binary-mask based non-complex separation approaches.

💡 Research Summary

The paper introduces a novel approach to monaural speech separation in the classic “cocktail‑party” scenario by directly operating on complex‑valued spectrograms within a deep neural network. Traditional deep learning based source‑separation methods typically discard phase information, treating the magnitude of the short‑time Fourier transform (STFT) as the sole feature and either reusing the mixture’s original phase or ignoring it altogether. This simplification limits the quality of reconstructed signals, especially in low‑energy or highly transient regions where phase plays a crucial role.

To overcome this limitation, the authors propose a “Deep Transform” (DT) that employs circular statistics to model the phase component as a probabilistic variable rather than a deterministic point estimate. Circular statistics are well‑suited for angular data because they respect the periodic nature of phase (0 to 2π) and provide tools such as circular mean, circular variance, and angular distance. By integrating these concepts into the loss function, the network learns not only to predict accurate magnitudes but also to produce phase estimates that minimize expected angular error.

The network architecture is a complex‑valued convolutional encoder‑decoder, loosely based on a U‑Net design. Input to the model is the complex spectrogram of a single‑channel mixture, split into separate real and imaginary channels. Convolutional kernels are themselves complex, meaning each filter has both real and imaginary weights that are updated jointly during training. A complex‑valued ReLU activation preserves non‑linearity while maintaining the analytic structure of the data. Skip connections transmit low‑level phase information from the encoder to the decoder, helping to preserve fine‑grained temporal alignment.

Training is supervised: the model receives paired examples of the mixture and the two clean source spectrograms (speaker A and speaker B). The loss function combines three terms: (1) an L2 loss on the magnitude spectra of both sources, (2) an angular L2 loss on the phase spectra (using the shortest angular distance on the unit circle), and (3) a regularization term that penalizes high circular variance, encouraging the network to produce confident phase predictions. By sampling multiple phase hypotheses from the predicted circular distribution and averaging them, the authors obtain a probabilistic estimate of each source’s phase.

Experiments are conducted on a standard two‑speaker cocktail‑party dataset sampled at 8 kHz. The mixture is segmented into 4‑second frames, transformed with a 512‑point STFT (hop size 256), and fed to the network. Evaluation uses the standard source‑separation metrics SDR, SIR, and SAR, as well as a dedicated phase‑reconstruction accuracy metric. The proposed complex DT achieves an average SDR improvement of roughly 0.3 dB over a strong binary‑mask baseline that operates only on magnitudes. More strikingly, the phase‑reconstruction accuracy improves by over 15 %, leading to perceptually cleaner speech, especially in noisy or reverberant conditions.

The authors acknowledge that complex convolutions increase computational load by about 1.8× compared with real‑valued counterparts and demand more memory for storing separate real and imaginary feature maps. Training stability also becomes more sensitive to learning‑rate schedules when scaling to larger corpora. To address these issues, the paper outlines future work: (a) designing hardware accelerators (FPGA/ASIC) optimized for complex arithmetic, (b) exploring Bayesian circular models that could provide richer uncertainty estimates, and (c) extending the framework to multi‑mic or multi‑channel recordings where spatial cues could be fused with complex spectral information.

In summary, this work demonstrates that a deep network capable of learning both magnitude and phase in a probabilistic, circular‑statistics‑aware manner can close the performance gap between complex‑aware and traditional magnitude‑only source‑separation systems. The approach opens new avenues for high‑fidelity audio processing tasks such as music source separation, speech enhancement for hearing aids, and real‑time communication systems where preserving phase is essential for natural sound quality.

Comments & Academic Discussion

Loading comments...

Leave a Comment