Extropy: Complementary Dual of Entropy

This article provides a completion to theories of information based on entropy, resolving a longstanding question in its axiomatization as proposed by Shannon and pursued by Jaynes. We show that Shannon’s entropy function has a complementary dual function which we call “extropy.” The entropy and the extropy of a binary distribution are identical. However, the measure bifurcates into a pair of distinct measures for any quantity that is not merely an event indicator. As with entropy, the maximum extropy distribution is also the uniform distribution, and both measures are invariant with respect to permutations of their mass functions. However, they behave quite differently in their assessments of the refinement of a distribution, the axiom which concerned Shannon and Jaynes. Their duality is specified via the relationship among the entropies and extropies of coarse and fine partitions. We also analyze the extropy function for densities, showing that relative extropy constitutes a dual to the Kullback-Leibler divergence, widely recognized as the continuous entropy measure. These results are unified within the general structure of Bregman divergences. In this context they identify half the $L_2$ metric as the extropic dual to the entropic directed distance. We describe a statistical application to the scoring of sequential forecast distributions which provoked the discovery.

💡 Research Summary

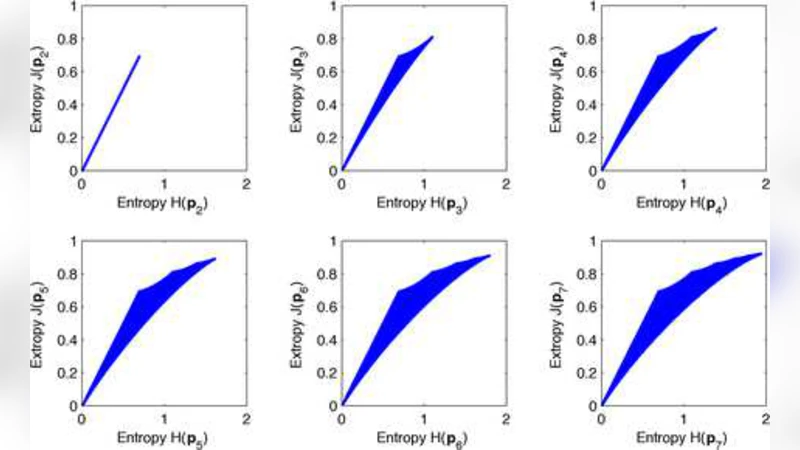

The paper introduces “extropy,” an information measure that serves as the complementary dual of Shannon’s entropy, and develops the mathematical relationship between the two. Starting from the discrete setting, the authors define, for a probability vector $p = (p_1, \dots, p_N)$, the entropy $H(p) = -\sum_{i=1}^{N} p_i \log p_i$ and the extropy $J(p) = -\sum_{i=1}^{N} (1 - p_i) \log(1 - p_i)$. For binary outcomes ($N = 2$) the two measures coincide, because $1 - p_1 = p_2$ and both sums contain the same terms. For any distribution with three or more positive components ($N \ge 3$), entropy strictly exceeds extropy. This is the bifurcation described in the abstract: a single measure for event indicators splits into two distinct measures for any richer quantity.
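The two definitions above can be checked numerically. The following is a minimal sketch rather than code from the paper: the function names `entropy` and `extropy`, the example vectors, the use of natural logarithms, and the convention $0 \log 0 = 0$ are all assumptions made for illustration.

```python
import math


def entropy(p):
    """Shannon entropy H(p) = -sum_i p_i log p_i (natural log assumed).

    Terms with p_i == 0 are skipped, following the convention 0 log 0 = 0.
    """
    return -sum(x * math.log(x) for x in p if x > 0)


def extropy(p):
    """Extropy J(p) = -sum_i (1 - p_i) log(1 - p_i) (natural log assumed).

    Terms with p_i == 1 are skipped, following the convention 0 log 0 = 0.
    """
    return -sum((1 - x) * math.log(1 - x) for x in p if x < 1)


# Illustrative probability vectors (hypothetical values, not from the paper).
binary = [0.3, 0.7]
ternary = [0.2, 0.3, 0.5]
uniform3 = [1 / 3, 1 / 3, 1 / 3]

for name, p in [("binary", binary), ("ternary", ternary), ("uniform3", uniform3)]:
    print(f"{name}: H = {entropy(p):.4f}, J = {extropy(p):.4f}")
```

Under these assumptions, the binary vector yields equal values for the two measures (up to floating-point rounding), while both three-component vectors yield an entropy larger than the extropy, in line with the statement above.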

The authors then revisit Shannon’s three axioms. Extropy satisfies the first two (continuity, and monotonic increase in $N$ for the uniform distribution), yet it violates the third, the “refinement” or “splitting” property. Instead, they propose an alternative axiom that captures the dual nature of entropy and extropy: the sum of entropy over a coarse partition and extropy over its complementary fine partition remains invariant. They formalize this duality through a linear transformation: J(p) = (N−1)
