A Probabilistic Theory of Deep Learning

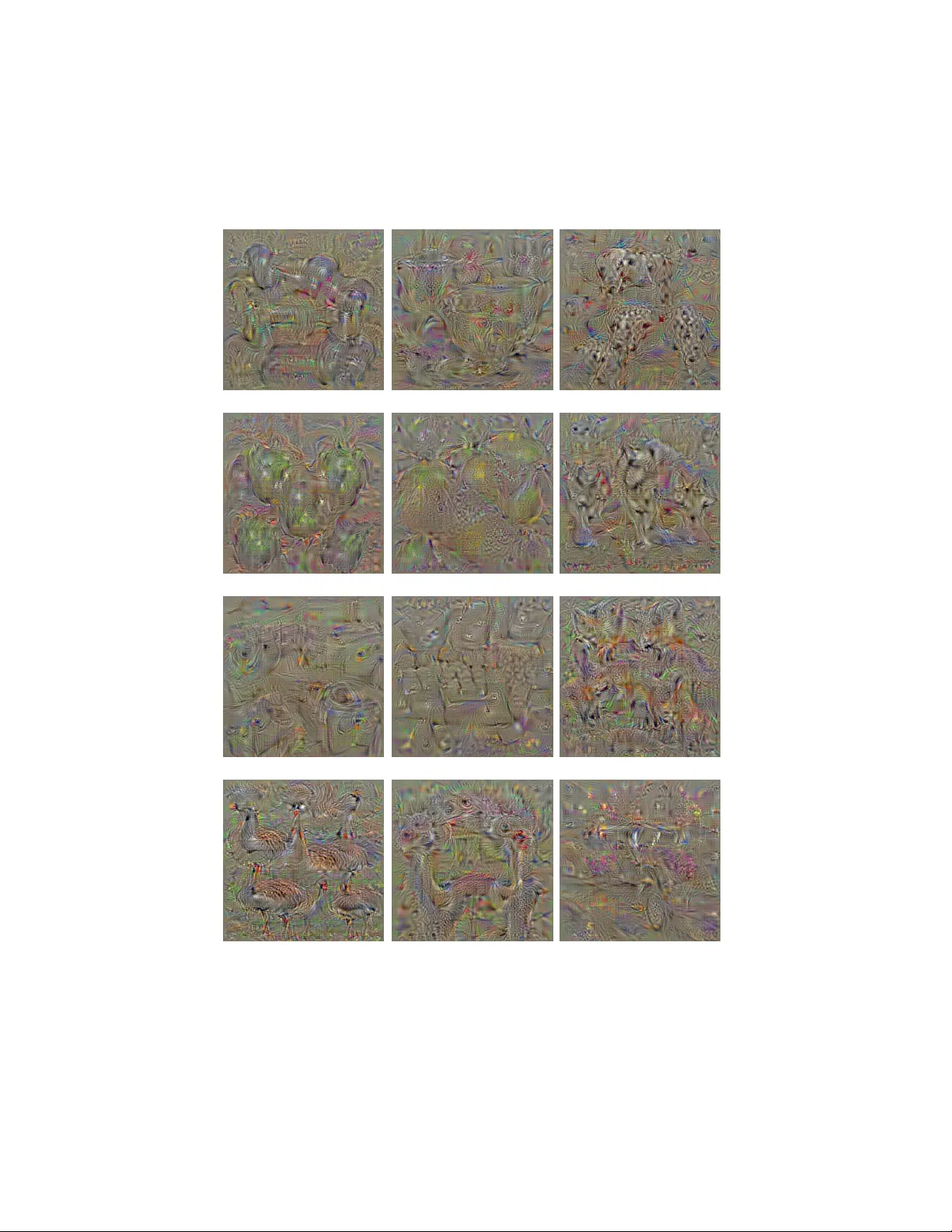

A grand challenge in machine learning is the development of computational algorithms that match or outperform humans in perceptual inference tasks that are complicated by nuisance variation. For instance, visual object recognition involves the unknow…

Authors: Ankit B. Patel, Tan Nguyen, Richard G. Baraniuk