Model selection and hypothesis testing for large-scale network models with overlapping groups

The effort to understand network systems in increasing detail has resulted in a diversity of methods designed to extract their large-scale structure from data. Unfortunately, many of these methods yield diverging descriptions of the same network, making both the comparison and understanding of their results a difficult challenge. A possible solution to this outstanding issue is to shift the focus away from ad hoc methods and move towards more principled approaches based on statistical inference of generative models. As a result, we face instead the more well-defined task of selecting between competing generative processes, which can be done under a unified probabilistic framework. Here, we consider the comparison between a variety of generative models including features such as degree correction, where nodes with arbitrary degrees can belong to the same group, and community overlap, where nodes are allowed to belong to more than one group. Because such model variants possess an increasing number of parameters, they become prone to overfitting. In this work, we present a method of model selection based on the minimum description length criterion and posterior odds ratios that is capable of fully accounting for the increased degrees of freedom of the larger models, and selects the best one according to the statistical evidence available in the data. In applying this method to many empirical unweighted networks from different fields, we observe that community overlap is very often not supported by statistical evidence and is selected as a better model only for a minority of them. On the other hand, we find that degree correction tends to be almost universally favored by the available data, implying that intrinsic node proprieties (as opposed to group properties) are often an essential ingredient of network formation.

💡 Research Summary

The paper tackles a central problem in network science: how to objectively decide which generative model best explains a given empirical network. Rather than relying on ad‑hoc community‑detection algorithms that often produce divergent partitions, the authors adopt a probabilistic framework based on stochastic block models (SBMs) and their extensions. They consider four model families: (1) the classic non‑overlapping SBM, (2) the degree‑corrected SBM (DC‑SBM) that introduces node‑specific propensity parameters, (3) an overlapping SBM where each node may belong to multiple blocks, and (4) an overlapping degree‑corrected SBM that combines both features.

The core of the methodology is the Minimum Description Length (MDL) principle, which equates model selection with data compression. For any model, the total description length Σ consists of two parts: the entropy of the data given the model parameters, S(G|θ), and the entropy of the parameter space itself, L(θ). Σ = L(θ) + S(G|θ) is exactly the negative log of the Bayesian posterior probability (up to a constant), so minimizing Σ is equivalent to maximizing the posterior. This formulation automatically penalizes model complexity, preventing over‑fitting that would otherwise plague maximum‑likelihood approaches.

The authors derive explicit entropy formulas for each of the four models. For the non‑overlapping SBM the entropy is a simple function of the block‑wise edge counts e_{rs} and block sizes n_r. The overlapping models are treated by “unfolding” each multi‑membership node into several virtual nodes, each with a single membership, which allows the same entropy expression to be used. Degree correction adds a term –∑i ln(k_i!) (or –∑{i,r} ln(k_{ir}!) for the overlapping case) reflecting the combinatorial choices of labeled half‑edges. Parallel edges are ignored under the sparse‑graph assumption, which is justified for the large networks studied.

Model selection proceeds in two steps. First, for each model the authors infer the optimal parameters that minimize Σ using a variational Bayesian algorithm combined with an efficient graph‑compression routine. This yields both the data‑fit term S and the parameter‑code term L. Second, they compare the total description lengths across models; the model with the smallest Σ is preferred. To quantify statistical confidence they compute posterior odds ratios (the exponential of Σ differences), which indicate whether a more complex model is truly justified by the data or merely capitalizes on extra degrees of freedom.

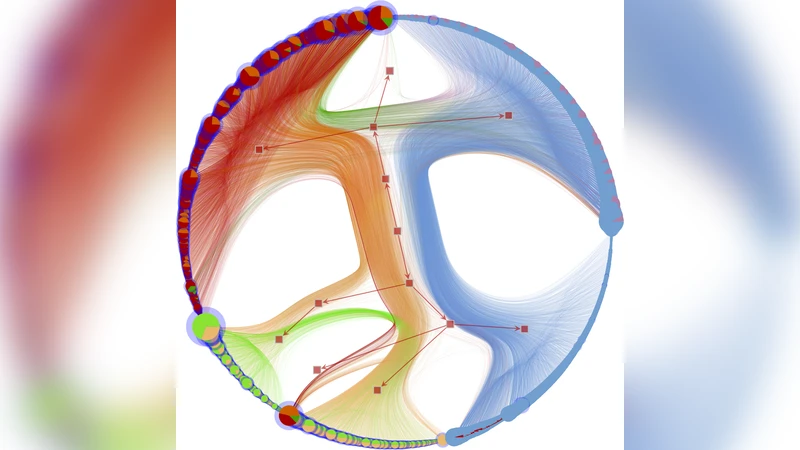

Empirically, the method is applied to over one hundred real‑world unweighted networks spanning social, biological, technological, and infrastructural domains. The results are striking: degree correction is favored in virtually every network, often reducing Σ by a substantial margin and yielding posterior odds ratios far exceeding unity. In contrast, overlapping community structure is supported in only a small minority (≈10 %) of the datasets; for the vast majority the overlapping models either do not improve Σ or do so only marginally, with odds ratios close to one. This suggests that many previously reported overlapping communities—especially those identified by non‑statistical heuristics—may be artifacts of model flexibility rather than genuine structural features.

The paper also discusses identifiability limits. Overlapping models possess a vastly larger parameter space, leading to many distinct parameter configurations that generate the same observed graph. The MDL framework naturally penalizes this ambiguity through the L(θ) term, ensuring that only statistically justified overlaps survive the selection process. The authors further analyze the theoretical conditions under which overlapping structures become distinguishable, showing that strong, dense intersections are required for a clear signal.

Implementation details are provided to demonstrate scalability. The inference algorithm runs in O(E log B) time (E = number of edges, B = number of blocks) and can handle networks with hundreds of thousands of nodes. The authors also outline how to incorporate priors, handle parallel edges via a Poisson variant, and extend the framework to weighted or multilayer graphs (though these extensions are left for future work).

In conclusion, the study presents a rigorous, unified approach to network model selection that balances fit and complexity via the MDL principle. It shows that degree heterogeneity is a pervasive feature of real networks, while overlapping community structure is far less common than often claimed. By providing a statistically sound method to compare competing generative hypotheses, the work offers a valuable tool for researchers seeking to uncover the true large‑scale organization of complex systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment