Rotation-invariant convolutional neural networks for galaxy morphology prediction

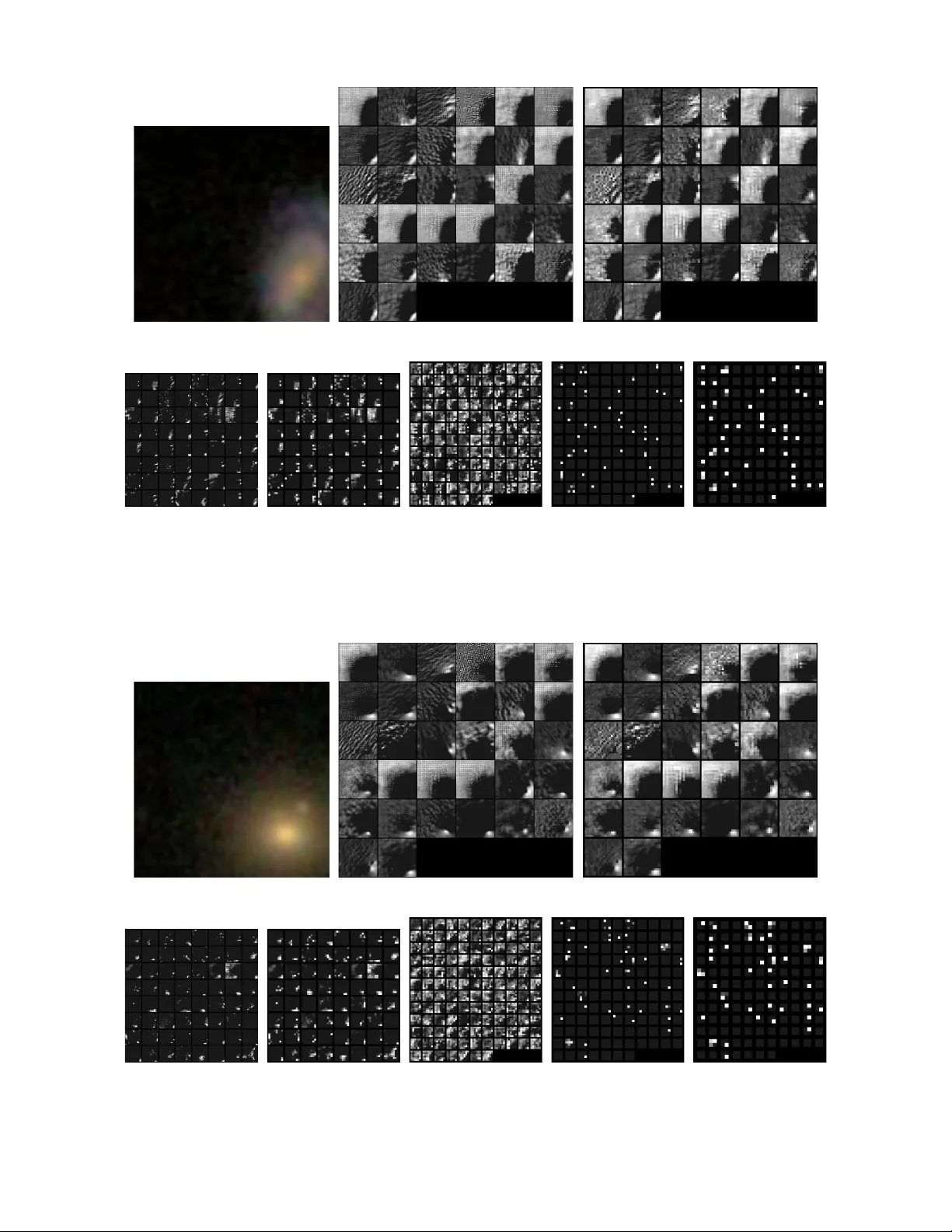

Measuring the morphological parameters of galaxies is a key requirement for studying their formation and evolution. Surveys such as the Sloan Digital Sky Survey (SDSS) have resulted in the availability of very large collections of images, which have …

Authors: Sander Dieleman, Kyle W. Willett