$\ell_p$ Testing and Learning of Discrete Distributions

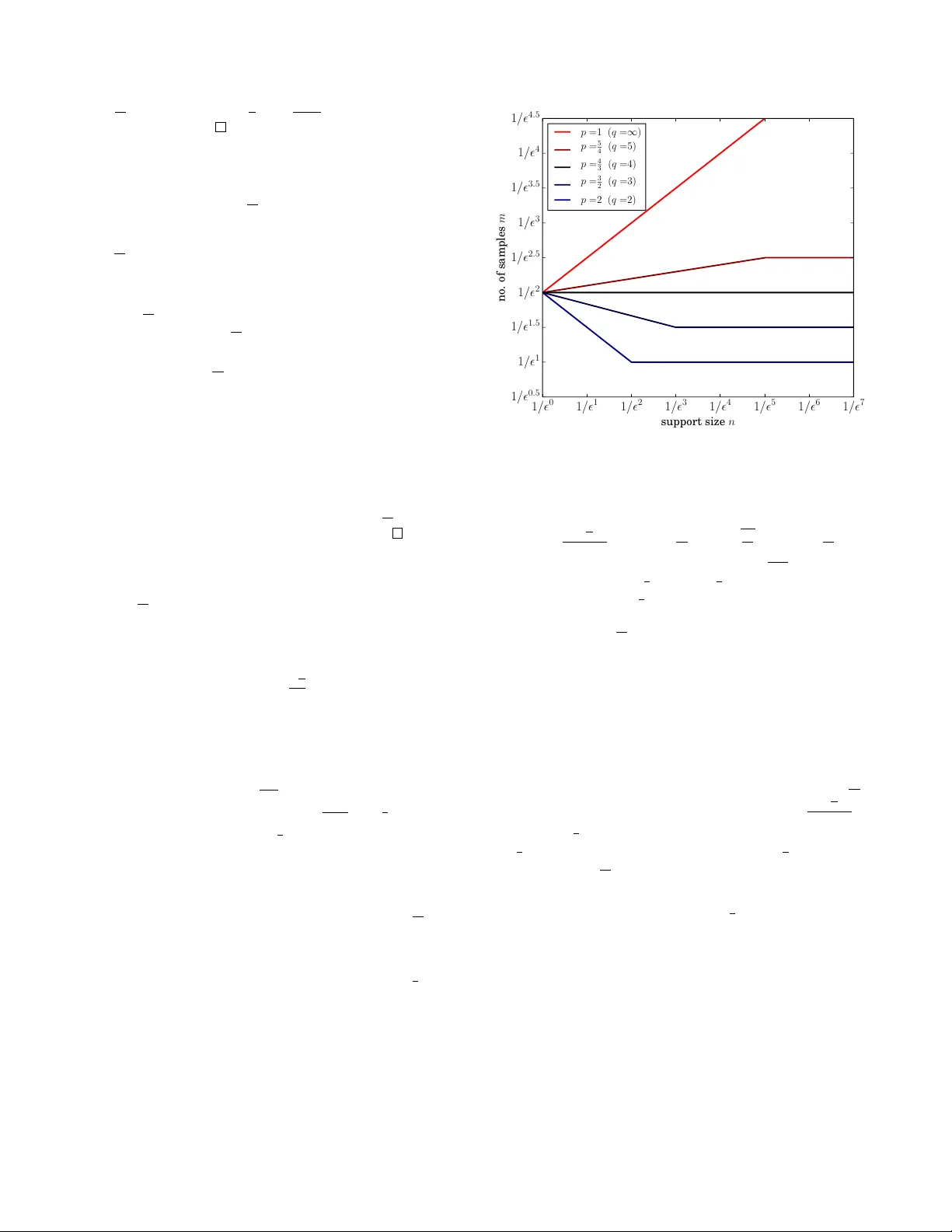

The classic problems of testing uniformity of and learning a discrete distribution, given access to independent samples from it, are examined under general $\ell_p$ metrics. The intuitions and results often contrast with the classic $\ell_1$ case. Fo…
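To make the setting concrete, here is a minimal Python sketch. It is a generic illustration, not the paper's algorithm. It builds the empirical (plug-in) estimate of a distribution on $\{0, \dots, n-1\}$ from independent samples and measures its $\ell_p$ distance to the uniform distribution for several values of $p$. The function names `empirical_distribution` and `lp_distance` are hypothetical.

```python
import random
from collections import Counter


def empirical_distribution(samples, n):
    """Plug-in estimate: fraction of samples equal to each element of {0, ..., n-1}."""
    counts = Counter(samples)
    m = len(samples)
    return [counts[i] / m for i in range(n)]


def lp_distance(p, q, order):
    """ell_p distance between two vectors of equal length.

    For finite order >= 1 this is (sum_i |p_i - q_i|^order)^(1/order);
    order = float("inf") gives max_i |p_i - q_i|.
    """
    diffs = [abs(a - b) for a, b in zip(p, q)]
    if order == float("inf"):
        return max(diffs)
    return sum(d ** order for d in diffs) ** (1.0 / order)


if __name__ == "__main__":
    rng = random.Random(0)          # fixed seed so the run is reproducible
    n = 10                          # support size (illustrative choice)
    samples = [rng.randrange(n) for _ in range(1000)]  # draws from uniform on {0..n-1}
    p_hat = empirical_distribution(samples, n)
    uniform = [1.0 / n] * n
    for order in (1, 2, float("inf")):
        print(order, lp_distance(p_hat, uniform, order))
```

For any vector $x$ and $1 \le q \le p \le \infty$, $\|x\|_p \le \|x\|_q$, so the printed distances can only shrink as the order grows. This is one simple reason why "close" means something quite different under $\ell_2$ or $\ell_\infty$ than under $\ell_1$.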

Authors: Bo Waggoner