Implementation of a Practical Distributed Calculation System with Browsers and JavaScript, and Application to Distributed Deep Learning

Deep learning can achieve outstanding results in various fields. However, it requires such significant computational power that graphics processing units (GPUs) and/or numerous computers are often required for practical application. We have develop…

Authors: Ken Miura, Tatsuya Harada

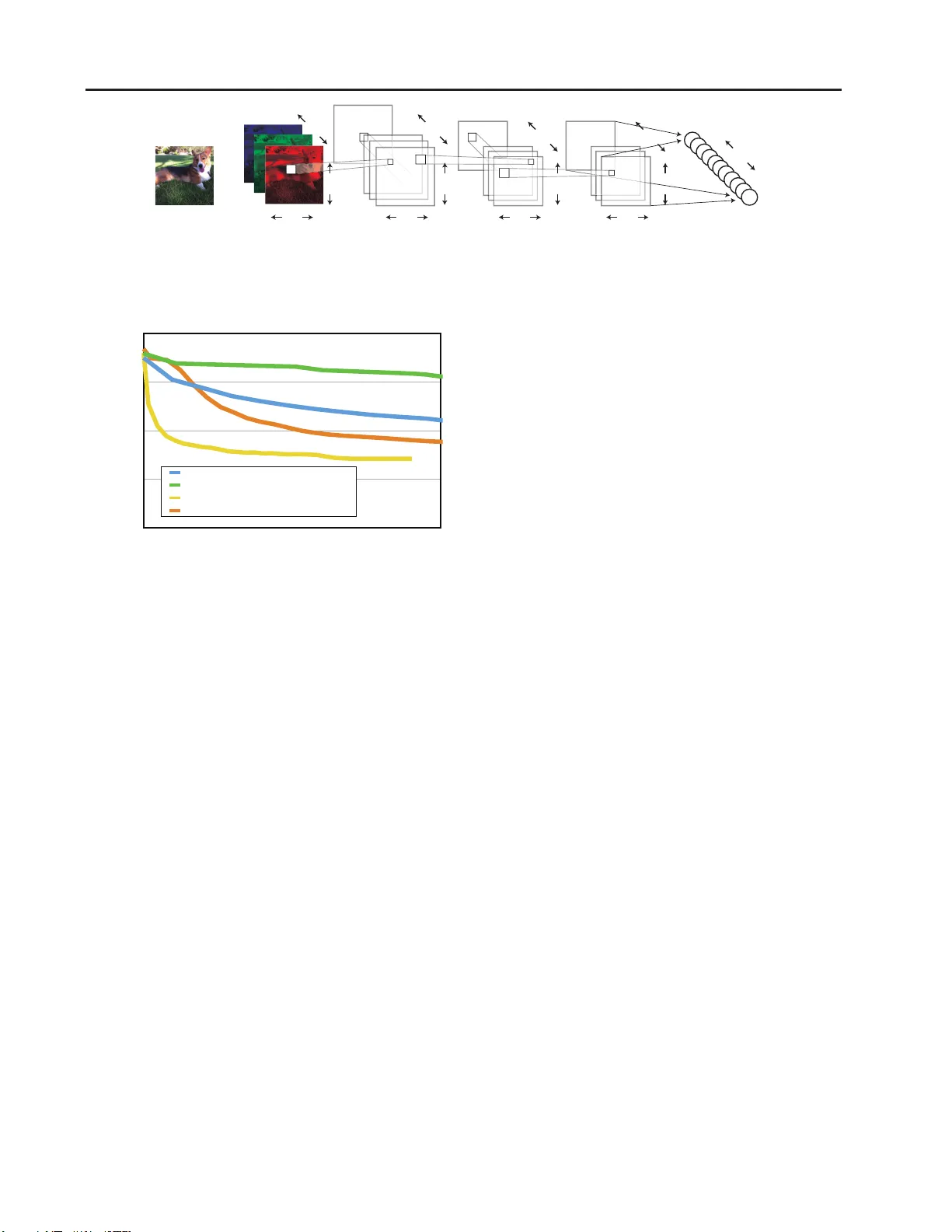

Ken Miura (MIURA@MI.T.U-TOKYO.AC.JP)
Tatsuya Harada (HARADA@MI.T.U-TOKYO.AC.JP)
Machine Intelligence Laboratory, Department of Mechano-Informatics, The University of Tokyo

Abstract

Deep learning can achieve outstanding results in various fields. However, it requires such significant computational power that graphics processing units (GPUs) and/or numerous computers are often required for practical application. We have developed a new distributed calculation framework called "Sashimi" that allows any computer to be used as a distribution node simply by accessing a website. We have also developed a new JavaScript neural network framework called "Sukiyaki" that uses general-purpose GPUs with web browsers. Sukiyaki performs 30 times faster than a conventional JavaScript library for learning deep convolutional neural networks (deep CNNs). The combination of Sashimi and Sukiyaki, as well as new distribution algorithms, demonstrates distributed deep learning of deep CNNs using only web browsers on various devices. The libraries that comprise the proposed methods are available under the MIT license at http://mil-tokyo.github.io/.

1. Introduction

Utilization of big data has recently come to play an increasingly important role in various business fields. Big data is often handled with deep learning algorithms that can achieve outstanding results in various fields. For example, almost all of the teams that participated in the ILSVRC 2014 image recognition competition (Russakovsky et al., 2014) used deep learning algorithms. Such algorithms are also used for speech recognition (Dahl et al., 2012; Hinton et al., 2012) and molecular activity prediction (Kaggle, Inc.).

However, deep learning algorithms have huge computational complexity and often require the use of graphics processing units (GPUs) and numerous computers for practical distributed computation. It is difficult to construct a distributed computation environment, and it frequently requires the preparation of certain operating systems and installation of specific software, e.g., Hadoop (Shvachko et al., 2010). For practical application of deep learning algorithms, a new instant distributed calculation environment is eagerly anticipated.

2. Sashimi: Distributed Calculation Framework

We have developed a new distributed calculation framework called "Sashimi." In a general distributed calculation framework, it is difficult to increase the number of node computers because users must install client software on each node computer. With Sashimi, any computer can become a node computer simply by accessing a certain website via a web browser, without installing any client software. The proposed system can execute any code written in JavaScript in a distributed manner.

2.1. Implementation

Sashimi consists of two servers, the CalculationFramework and Distributor servers (Figure 1). When a user runs a project that includes distributed processing using the CalculationFramework and accesses the Distributor via web browsers, the processes can be distributed and executed in multiple web browsers. By using the CalculationFramework, the results processed by the distributed machines can be used as if they were processed by a local machine, without the user needing to be aware of the difference.

2.1.1. CalculationFramework

CalculationFramework describes calculations that include distributed processing. If a user writes code according to a certain interface, the processes are distributed and computed in multiple web browsers via Distributor.
The results computed in the web browsers are then automatically collected. This allows the results to be used as if they were processed by the local machine. In this subsection, we introduce PrimeListMakerProject, which finds prime numbers from 1 to 10,000, as an example.

MILJS : Brand New JavaScript Libraries for Matrix Calculation and Machine Learning

[Figure 1. Sukiyaki Architecture. Components shown: the CalculationFramework (executes the heavy project), MySQL, the Distributor (HTTPServer and TicketDistributor), and users who access via browsers over HTTP and WebSocket to process small tasks.]

A "project" is a programming unit of CalculationFramework that has an endpoint from which a process starts. In a project, a user can execute distributed processing by creating a task instance. Note that processes that do not require distributed processing are also supported.

A "task" is a process that is distributed and executed in web browsers. If a user writes a task according to a certain interface, the arguments are automatically divided and distributed to web browsers. The processed results are then automatically collected. In PrimeListMakerProject, the task that determines whether an input integer is a prime number is called IsPrimeTask. This task is distributed among web browsers. The task is given arguments generated by the project, and it must return the calculated results using a callback function. Note that the user can use external libraries and datasets. In this example, the task calls the is_prime function in an external library, which determines whether the input integer is a prime number.

After the project generates the task instance with arguments, the framework generates tickets for each divided argument. The framework sends the code and the arguments as tickets, together with the external libraries and datasets, to the Distributor via MySQL.
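
The division of a task's arguments into tickets can be illustrated with a small in-memory sketch. The function names `createTickets` and `collectResults` and all ticket fields are hypothetical, chosen only for illustration; Sashimi itself exchanges tickets through MySQL, and its actual schema is not given in this paper.

```javascript
// Hypothetical sketch: one ticket per divided argument.
// Field names (taskName, index, input, createdAt, output) are assumptions,
// not Sashimi's real ticket schema.
function createTickets(taskName, inputs, now) {
  return inputs.map((input, index) => ({
    taskName: taskName, // which task code a browser should run
    index: index,       // position of this argument in the original list
    input: input,       // the divided argument handed to the task
    createdAt: now,     // creation time of the ticket
    output: null        // filled in when a browser returns a result
  }));
}

// Reassemble results in input order, regardless of the order in which
// browsers finished their tickets.
function collectResults(tickets) {
  return tickets
    .slice()
    .sort((a, b) => a.index - b.index)
    .map((t) => ({ input: t.input, output: t.output }));
}

// Usage: three candidates become three independent tickets.
const exampleTickets = createTickets(
  'IsPrimeTask',
  [{ candidate: 2 }, { candidate: 3 }, { candidate: 4 }],
  Date.now()
);
console.log(exampleTickets.length);
```

Keeping the original index on each ticket is one simple way to let the project read results as if they had been computed locally and in order.
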
The tickets distributed by the Distributor are collected and can be used again by the CalculationFramework via MySQL. Since the project is implemented with server-side JavaScript (Node.js) and the task is implemented with JavaScript, they can be used without considering whether the code is executed by the server or the browsers.

2.1.2. Distributor

The Distributor distributes tasks and tickets, which are sent from the CalculationFramework via MySQL, to browsers. The Distributor also collects the results calculated in the browsers. Note that the Distributor consists of two servers, i.e., HTTPServer and TicketDistributor.

HTTPServer, which is a web server implemented with Node.js, provides static files that include a basic program and exposes APIs that offer datasets to be used in the distributed calculation. If a user wants to make a browser function as a node, the user only needs to access the basic program provided by HTTPServer via the web browser. The basic program consists of a static HTML file and a JavaScript file. The basic program works as follows.

1. A connection with TicketDistributor is established using WebSocket.
2. A ticket request is sent to TicketDistributor.
3. A task request is sent to TicketDistributor if the browser has not downloaded the task described in the ticket.
4. A request for required external datasets and files is sent to HTTPServer.
5. The task with arguments described in the ticket is executed.
6. The calculated result is returned to TicketDistributor.
7. Return to Step 2.

The task and external data are cached in the browser. If a program runs for a long time, memory usage increases due to the cache. Therefore, we have implemented garbage collection on the basis of the least recently used algorithm.

If an error occurs when the task is running, an error report that includes a stack trace is sent to the TicketDistributor. Then, the browser reloads itself.
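
The least recently used eviction mentioned for the browser-side cache can be sketched as follows. This is a minimal illustration, not Sashimi's code: the class name `LruCache` and the entry-count capacity are assumptions, and the real implementation may bound the cache by memory size instead.

```javascript
// Minimal LRU cache sketch (hypothetical; not taken from Sashimi).
class LruCache {
  constructor(capacity) {
    this.capacity = capacity;  // maximum number of cached entries (assumed unit)
    this.entries = new Map();  // Map iterates in insertion order: oldest first
  }

  has(key) {
    return this.entries.has(key);
  }

  get(key) {
    if (!this.entries.has(key)) return undefined;
    const value = this.entries.get(key);
    // Re-insert so this entry becomes the most recently used one.
    this.entries.delete(key);
    this.entries.set(key, value);
    return value;
  }

  set(key, value) {
    if (this.entries.has(key)) this.entries.delete(key);
    this.entries.set(key, value);
    if (this.entries.size > this.capacity) {
      // Evict the least recently used entry, i.e. the first key in the Map.
      const oldestKey = this.entries.keys().next().value;
      this.entries.delete(oldestKey);
    }
  }
}

// Usage: with room for two entries, touching 'taskA' keeps it alive
// and 'datasetB' is evicted when 'taskC' arrives.
const cache = new LruCache(2);
cache.set('taskA', 'task code A');
cache.set('datasetB', 'dataset B');
cache.get('taskA');
cache.set('taskC', 'task code C');
```

A `Map` is used here because it preserves insertion order, which makes "move to most recent" a delete followed by a set.
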
With this request loop and the automatic reload on error, a task described by the tickets generated by the CalculationFramework can be executed continuously without special maintenance once the user accesses the program.

Users can check the progress of a task and tickets via the HTTPServer control console. In this console, users can see the project name, the number of tasks, the number of tickets waiting to be processed, the number of executed tickets, the number of error reports, and the client information for the project. Note that a console that can be used to execute code in web browsers is also provided. With this console, the user can make the browsers reload themselves and redirect to another distributed system.

We use responsive web design (RWD) techniques in the user interface (UI) of the basic program and the control console. RWD techniques adapt the UI to the screen size of any PC, tablet, or smartphone, which makes it easy to use any device for distributed calculation and for checking progress.

The tasks and tickets generated by the CalculationFramework are distributed to browsers via WebSocket by the TicketDistributor. The processed results are also collected by the TicketDistributor. Unlike HTTPServer, the TicketDistributor runs in a single process and communicates with each web browser unitarily and efficiently.

When the TicketDistributor receives a ticket request from the browser, it obtains tickets in ascending order of "virtual created time" from the MySQL server. Virtual created time is determined as follows.
• The virtual created time is the ticket creation time for undistributed tickets.
• The virtual created time is five minutes after ticket distribution if the ticket has been distributed.
• The virtual created time is five minutes after the last ticket distribution if the ticket has been redistributed.

Thus, if the results are not returned within five minutes, the ticket is treated as if it had been re-created. Note that tickets are redistributed in ascending order by distribution time if there are no further tickets to be distributed. Thus, if a web browser is terminated after it receives a ticket, and/or if there are clients with low computational capability, another client can execute the task. Therefore, the throughput can be enhanced. The tickets are redistributed at intervals of at least 10 seconds, which prevents the last ticket from being distributed to many clients and prevents the next calculation from being delayed. We implemented this algorithm using SQL, which can quickly select tickets to be distributed.

2.2. Benchmark

2.2.1. Experiment Condition

Using Sashimi, we demonstrate that a task with high computational cost can be computed in parallel efficiently. Here, we compare the time required to classify the MNIST dataset with a nearest neighbor method by changing the number of clients. In this experiment, 1,000 images from the 10,000 MNIST test images were classified by comparing them with 60,000 training images. We used one to four clients on a desktop computer and tablet PCs described in Table 1. We accessed the Distributor using the Google Chrome web browser on both the desktop and tablet environments.

Table 1. Specifications of Devices for Distributed MNIST Benchmark

| | DELL OPTIPLEX | Nexus 7 |
|---|---|---|
| Model | DELL OPTIPLEX 8010 | Nexus 7 (2013) |
| OS | Windows 7 Professional | Android 4.4.4 |
| CPU | Intel Core i7-3770 3.4GHz | Krait 1.50GHz |
| RAM | 16GB | 2GB |

2.2.2. Results

The results are shown in Table 2. In both environments, the calculation time was reduced with the distributed computation. The effect of the distributed computation was remarkable when the proposed system was used with the tablet PC because the tablet has lower computational power than the desktop computer and the overhead time required for the distribution becomes relatively shorter. We believe that the proposed distributed computing method will become more effective for other feature extraction methods with high computational costs such as SIFT and deep learning.

3. Sukiyaki: Deep Neural Network Framework

We implemented a learning algorithm for deep neural networks (DNNs) with browsers based on Sashimi. Here, we explain the proposed framework and implementation for DNNs. We also discuss the advantages of the proposed method over an existing library in a stand-alone environment. The distributed computation of DNNs is explained in the next section.

We primarily implemented deep convolutional neural networks (deep CNNs) that obtain high classification accuracy in image recognition tasks. ConvNetJS (Karpathy) was implemented as a NN library using JavaScript. However, its computational speed is limited because it runs on only a single thread. Therefore, we developed a deep neural network framework called "Sukiyaki" that utilizes a fast matrix library called Sushi (Miura et al., 2015). The Sushi matrix library is fast because it is implemented on WebCL and can utilize general-purpose GPUs (GPGPUs) efficiently.

3.1. Implementation

The Sukiyaki DNN framework consists of the Sukiyaki object, which handles procedures for learning and testing in the neural network, and layer objects.
In this version, for deep CNNs, we implemented a convolutional layer, a max pooling layer, a fully-connected layer, and an activation layer. Note that we can add other layers if we implement certain methods such as forward, backward, and update. The forward, backward, and update methods in each layer are implemented using the Sushi matrix library; thus, they can be executed in parallel on GPGPUs.

Table 2. Results of Distributed MNIST Benchmark

| Environment | Clients | Elapsed Time (sec.) | Elapsed Time (ratio) |
|---|---|---|---|
| DELL OPTIPLEX | 1 | 107 | 1 |
| | 2 | 62 | 0.58 |
| | 3 | 52 | 0.49 |
| | 4 | 46 | 0.43 |
| Nexus 7 | 1 | 768 | 1 |
| | 2 | 413 | 0.54 |
| | 3 | 293 | 0.38 |
| | 4 | 255 | 0.33 |

We can use AdaGrad (Duchi et al., 2011) as an online parameter learning method, which can learn parameters quickly. The original update rule in AdaGrad is as follows.

$$\theta_{i,t} = \theta_{i,t-1} - \frac{\alpha}{\sqrt{\sum_{u=0}^{t} g_{i,u}^{2}}}\, g_{i,t}$$

where $\alpha$ is a scalar learning rate, $\theta_{i,t}$ is the i-th element of the parameter at time step $t$, and $g_{i,t}$ is the i-th element of the gradient at time step $t$. However, in this update rule, learning usually becomes unstable because the sum of squared gradients is minuscule early in the learning process. Therefore, we have modified the update rule using a constant $\beta$ as follows.

$$\theta_{i,t} = \theta_{i,t-1} - \frac{\alpha}{\sqrt{\beta + \sum_{u=0}^{t} g_{i,u}^{2}}}\, g_{i,t}$$

We designed the Sukiyaki DNN framework to be used with both Node.js and browsers so DNNs can be trained in a distributed manner using the Sashimi distributed calculation framework. For example, a model file wherein the parameters are encoded with base64 is formatted in JSON. Note that although the model file is a platform-independent string format, it can be exchanged among machines without rounding errors.

3.2. Benchmark

3.2.1. Experiment Condition

We compared the learning speed of the Sukiyaki DNN framework with that of ConvNetJS, which is an existing JavaScript NN library. In this experiment, we used the deep CNN model shown in Figure 2. Fifty images per mini-batch were learned from the 50,000 training images in cifar-10 (Krizhevsky, 2009). Note that cifar-10 consists of 24-bit 32 × 32 color images in 10 classes. The model convolves the input images with 5 × 5 kernels in each convolutional layer and generates three feature maps of size 32 × 32 × 16, 16 × 16 × 20, and 8 × 8 × 20. Each convolutional layer is followed by an activation layer and a max pooling layer, and the size of the output is halved. The fourth layer is a fully-connected layer that converts 320 input elements to 10 output elements by estimating class probabilities via the softmax function. We trained the network using Node.js and the Firefox web browser with a MacBook Pro (specs described in Table 3) and compared the learning speeds.

Table 3. Specifications of Device for Neural Network Libraries Benchmark

| Item | Specification |
|---|---|
| Model | MacBook Pro (Retina, 13-inch, Late 2013) |
| OS | Mac OS X Yosemite (10.10.1) |
| CPU | Intel Core i5-4258U 2.4GHz |
| GPU | Intel Iris |
| RAM | 8GB |

Table 4. Numbers of Batches Learned per 1 min.

| Library | Node.js | Firefox |
|---|---|---|
| ConvNetJS | 17.55 | 2.44 |
| Sukiyaki | 545.39 | 31.39 |

3.2.2. Results

The results are shown in Table 4 and Figure 3. As observed, Sukiyaki learned the network faster than ConvNetJS for both Node.js and Firefox. The convergence speed of Sukiyaki was also faster than that of ConvNetJS. Note that Sukiyaki with Node.js learned the network 30 times faster than ConvNetJS.

[Figure 2. Deep CNN for the Benchmark of Stand-alone Deep Learning. Labels: input image, convolutional layer, fully-connected layer.]

[Figure 3. Error Rate. Error rate versus elapsed time (min.) for ConvNetJS (Node.js), ConvNetJS (Firefox), Sukiyaki (Ours) (Node.js), and Sukiyaki (Ours) (Firefox).]

4. Distributed Deep Learning

We ran the Sukiyaki DNN framework on the Sashimi distributed calculation framework and realized distributed learning of deep CNNs.

4.1. Distribution Algorithms

Some previous studies have proposed methods for distributed learning of DNNs and deep CNNs.

Dean et al. (2012) proposed DistBelief, which is an efficient distributed computing method for DNNs. In DistBelief, a network is partitioned into some subnetworks, and different machines are responsible for computation of the different subnetworks. The nodes with edges that cross partition boundaries must share their state information between machines. However, since we consider machines connected via the Internet with slow throughput, it is difficult to share the nodes' state between different machines with the proposed framework. DistBelief focuses on a fully-connected network; thus, it is also difficult to directly apply this approach to convolutional networks that share weights among different nodes.

Meeds et al. (2014) developed MLitB, wherein different training data batches are assigned to different clients. The clients compute gradients and send them to the master, which computes a weighted average of gradients from all clients and updates the network. The new network weights are sent to the clients, and the clients then restart computing gradients on the basis of the new weights. This approach is simple and easy to implement; however, it must communicate all network weights and gradients between the master and the clients. Thus, the communication overhead becomes excessively large with a large network.
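
The master-side step of such a data-parallel scheme can be sketched in a few lines. This is an illustrative sketch only, not MLitB's code: the function names are hypothetical, weighting each client by the number of examples it processed is an assumption, and a plain gradient-descent step stands in for whatever optimizer a real system would use.

```javascript
// Hypothetical sketch of gradient aggregation on the master.
// updates: [{ gradient: number[], count: number }, ...], where count is the
// number of training examples behind that gradient (assumed weighting).
function averageGradients(updates) {
  const totalCount = updates.reduce((sum, u) => sum + u.count, 0);
  if (totalCount === 0) {
    throw new Error('no examples were processed');
  }
  const size = updates[0].gradient.length;
  const averaged = new Array(size).fill(0);
  for (const u of updates) {
    const weight = u.count / totalCount;
    for (let i = 0; i < size; i++) {
      averaged[i] += weight * u.gradient[i];
    }
  }
  return averaged;
}

// Plain gradient-descent step, used here only to complete the example.
function applyUpdate(weights, gradient, learningRate) {
  return weights.map((w, i) => w - learningRate * gradient[i]);
}

// Usage: two clients report gradients for a two-parameter model.
const averagedGradient = averageGradients([
  { gradient: [1, 2], count: 1 },
  { gradient: [3, 6], count: 3 }
]);
const newWeights = applyUpdate([1, 1], averagedGradient, 0.5);
```

Both the gradient sent by each client and the weights sent back are arrays as long as the full parameter vector, which is the source of the communication overhead noted above.
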

Krizhevsky (2014) proposed a method to parallelize the training of deep CNNs using model parallelism and data parallelism efficiently. Generally, deep CNNs consist of many convolutional layers and a few fully-connected layers. Due to weight sharing, convolutional layers incur significant computational cost relative to the small number of parameters. However, fully-connected layers have many more parameters than convolutional layers and less computational complexity. Krizhevsky (2014) developed an efficient method to parallelize the training of deep CNNs by applying data parallelism in the convolutional layers and model parallelism in the fully-connected layers. However, we focus on distributed computation via the Internet; thus, we must reduce communication costs in the proposed framework.

He et al. (2015) implemented another effective method for distributed deep learning. They parallelize the training of convolutional layers using data parallelism on multi-GPUs. Then the GPUs are synchronized and the fully-connected layers are trained on a single GPU. The computational complexity for training fully-connected layers is relatively small, so the model parallelism of fully-connected layers does not necessarily contribute to fast learning. This method of combining parallelized and stand-alone learning works efficiently and is easy to implement. However, with this method some computational resources stay idle while fully-connected layers are learned on a single GPU, so there is still room for improvement.

SINGA (Wang et al.) is a distributed deep learning platform. It supports both model partition and data partition, and we can manage them automatically through a distributed array data structure without much awareness of the array partition.
While SINGA is designed to accelerate deep learning by using MPI, it is unclear whether this approach is appropriate for distributed calculation via the Internet.

In this study, we have developed a new method, which parallelizes the training of convolutional layers using data parallelism and does not apply model parallelism to fully-connected layers. The proposed method trains fully-connected layers on the server while the clients train the convolutional layers. Unlike the method of He et al. (2015), convolutional layers and fully-connected layers are learned concurrently. This method reduces communication cost among machines and utilizes the computational capability of the server while it awaits responses from the clients.

[Figure 5. Learning Speed by Distributed Deep Learning. Learning speed (image/sec.) of the fully-connected layer and the convolutional layer for stand-alone, 1 client, 2 clients, 3 clients, and 4 clients.]

4.2. Benchmark

4.2.1. Experiment Conditions

In this experiment, we parallelized the training of the deep CNN shown in Figure 4 using the Sukiyaki DNN framework and the Sashimi distributed calculation framework. We used the computers shown in Table 5 as the server and clients. The client machine had four GPU cores; thus, we ran one to four Firefox web browsers on the client machine. The browsers accessed the server running Node.js and began training the network in parallel. We compared the training speeds of both convolutional and fully-connected layers by varying the number of clients.

4.2.2. Results

The results are shown in Figure 5. The proposed distributed computation method can train fully-connected layers 1.5 times faster than the stand-alone computation method independently of the number of clients because the server can be devoted to training fully-connected layers. The training speed of the convolutional layers becomes faster in proportion to the number of clients. With four clients to train convolutional layers, the proposed method is two times as fast as the stand-alone method.

5. Conclusion and Future Plans

In this paper, we have proposed a distributed calculation framework using JavaScript to address the increasing need for computational resources required to process big data. We have developed the Sashimi distributed calculation framework, which can allow any web browser to function as a computation node. By applying Sashimi to an image classification problem, the task can be executed in parallel, and its calculation speed becomes faster in proportion to the number of clients. Furthermore, we developed the Sukiyaki DNN framework, which utilizes GPGPUs and can train deep CNNs 30 times faster than a conventional JavaScript DNN library. By building Sukiyaki on Sashimi, we also developed a parallel computing method for deep CNNs that is suitable for distributed computing via the Internet. We have shown that deep CNNs can be trained in parallel using only web browsers.

These libraries are available under the MIT license at http://mil-tokyo.github.io/. In future, we plan to improve the efficiency of the distribution algorithm by considering clients' computational capabilities and supporting another network in Sukiyaki. Note that we welcome suggestions for improvements to our code and documentation. It is our hope that many programmers will further develop Sukiyaki and Sashimi to become a high-performance distributed computing platform that anyone can use easily.

References

Dahl, G. E., Yu, D., Deng, L., and Acero, A. Context-Dependent Pre-trained Deep Neural Networks for Large Vocabulary Speech Recognition. IEEE Transactions on Audio, Speech, and Language Processing, 20(1):30–42, 2012.

Dean, J., Corrado, G., Monga, R., Chen, K., Devin, M., Mao, M., Ranzato, M., Senior, A., Tucker, P., Yang, K., Le, Q. V., and Ng, A. Y. Large Scale Distributed Deep Networks. In Advances in Neural Information Processing Systems, pp. 1223–1231. 2012.

Duchi, J., Hazan, E., and Singer, Y. Adaptive subgradient methods for online learning and stochastic optimization. The Journal of Machine Learning Research, 12:2121–2159, 2011.

He, K., Zhang, X., Ren, S., and Sun, J. Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification. arXiv:1502.01852, 2015.

Hinton, G., Deng, L., Yu, D., Dahl, G. E., Mohamed, A., Jaitly, N., Senior, A., Vanhoucke, V., Nguyen, P., Sainath, T. N., and Kingsbury, B. Deep Neural Networks for Acoustic Modeling in Speech Recognition: The Shared Views of Four Research Groups. IEEE Signal Processing Magazine, 29(6):82–97, 2012.

Kaggle, Inc. Merck Molecular Activity Challenge. https://www.kaggle.com/c/MerckActivity.

Karpathy, A. ConvNetJS. http://cs.stanford.edu/people/karpathy/convnetjs/.

[Figure 4. Deep CNN for the Distributed Deep Learning Benchmark. Labels: input image, convolutional layers, fully-connected layer.]

Table 5. Specifications of Devices for Distributed Deep Learning

| | Server (Mac Pro) | Client (DELL Alienware) |
|---|---|---|
| Model | Mac Pro (Late 2013) | DELL Alienware Area-51 |
| OS | Mac OS X Yosemite (10.10.1) | Windows 8.1 |
| CPU | Intel Xeon E5 3.5GHz 6-core | Intel Core i7-5960X 3.00GHz 8-core |
| GPU | AMD FirePro D500 | NVIDIA GeForce GTX TITAN Z × 2 |
| RAM | 32GB | 32GB |

Krizhevsky, A. Learning Multiple Layers of Features from Tiny Images. Master's thesis, Department of Computer Science, University of Toronto, 2009. URL http://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf.

Krizhevsky, A. One weird trick for parallelizing convolutional neural networks. arXiv:1404.5997, 2014.

Meeds, E., Hendriks, R., Faraby, S. A., Bruntink, M., and Welling, M. MLitB: Machine Learning in the Browser. arXiv:1412.2432, 2014.

Miura, K., Mano, T., Kanehira, A., Tsuchiya, Y., and Harada, T. MILJS : Brand New JavaScript Libraries for Matrix Calculation and Machine Learning. arXiv:1502.06064, 2015.

Russakovsky, O., Deng, J., Su, H., Krause, J., Satheesh, S., Ma, S., Huang, Z., Karpathy, A., Khosla, A., Bernstein, M., Berg, A. C., and Fei-Fei, L. ImageNet Large Scale Visual Recognition Challenge, 2014.

Shvachko, K., Kuang, H., Radia, S., and Chansler, R. The Hadoop distributed file system. In IEEE Symposium on Mass Storage Systems and Technologies, pp. 1–10, 2010.

Wang, W., Chen, G., Dinh, T.T.A., Gao, J., Ooi, B.C., and Tan, K.L. SINGA: A Distributed System for Deep Learning. Technical report. http://www.comp.nus.edu.sg/~dbsystem/singa/.

Appendix. Sashimi Sample Program

Source Code 1. prime_list_maker_project.js

    var ProjectBase = require('../project_base');
    var IsPrimeTask = require('./is_prime_task');

    var PrimeListMakerProject = function() {
      this.name = 'PrimeListMakerProject';
      this.run = function() {
        var task = this.createTask(IsPrimeTask);
        // Build one input object per candidate integer from 1 to 10,000.
        var inputs = [];
        for (var i = 1; i <= 10000; i++) {
          inputs.push({ candidate : i });
        }
        task.calculate(inputs);
        // Handle the collected results.
        task.block(function(results) {
          for (var i = 0; i < results.length; i++) {
            if (results[i].output.is_prime) {
              // Assuming results arrive in input order, results[i] belongs to
              // inputs[i], whose candidate is i + 1 rather than i.
              console.log(inputs[i].candidate + ' is a prime number.');
            }
          }
        });
      };
    };
    PrimeListMakerProject.prototype = new ProjectBase();

Source Code 2. is_prime_task.js

    var TaskBase = require('../task_base');

    var IsPrimeTask = function() {
      // External library files that the task needs in the browser.
      this.static_code_files = ['is_prime'];
      this.run = function(input, output) {
        // Return the result through the output callback.
        if (is_prime(input.candidate)) {
          output({ is_prime : true });
        } else {
          output({ is_prime : false });
        }
      };
    };
    IsPrimeTask.prototype = new TaskBase();

Source Code 3. is_prime.js

    function is_prime(candidate) {
      // 0 and 1 are not prime; without this guard the loop never runs for them
      // and the function would return true.
      if (candidate < 2) {
        return false;
      }
      for (var i = 2; i <= Math.sqrt(candidate); i++) {
        if (candidate % i === 0) {
          return false;
        }
      }
      return true;
    }
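
The logic of the sample program can be checked on a single machine without a running Distributor. The sketch below is framework-free and uses no Sashimi APIs; the names `isPrime` and `listPrimes` are hypothetical, and the result objects only imitate the `{ output: { is_prime } }` shape that the sample project reads.

```javascript
// Framework-free check of the sample's logic (no Sashimi APIs are used).
function isPrime(candidate) {
  if (candidate < 2) return false; // 0 and 1 are not prime
  for (let i = 2; i * i <= candidate; i++) {
    if (candidate % i === 0) return false;
  }
  return true;
}

// Imitate the project: build inputs, evaluate the task for each input,
// then read the results back by index.
function listPrimes(limit) {
  const inputs = [];
  for (let n = 1; n <= limit; n++) {
    inputs.push({ candidate: n });
  }
  const results = inputs.map((input) => ({
    output: { is_prime: isPrime(input.candidate) }
  }));
  const primes = [];
  for (let i = 0; i < results.length; i++) {
    // results[i] corresponds to inputs[i], so report the candidate value
    // rather than the array index.
    if (results[i].output.is_prime) {
      primes.push(inputs[i].candidate);
    }
  }
  return primes;
}

console.log(listPrimes(20).join(', '));
```

Because the inputs start at 1 while array indices start at 0, reading the candidate from the input object avoids an off-by-one error when reporting primes.
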
