Two Algorithms for Orthogonal Nonnegative Matrix Factorization with Application to Clustering

Approximate matrix factorization techniques with both nonnegativity and orthogonality constraints, referred to as orthogonal nonnegative matrix factorization (ONMF), have been recently introduced and shown to work remarkably well for clustering tasks such as document classification. In this paper, we introduce two new methods to solve ONMF. First, we show a mathematical equivalence between ONMF and a weighted variant of spherical k-means, from which we derive our first method, a simple EM-like algorithm. This also allows us to determine when ONMF should be preferred to k-means and spherical k-means. Our second method is based on an augmented Lagrangian approach. Standard ONMF algorithms typically enforce nonnegativity for their iterates while trying to achieve orthogonality in the limit (e.g., using a proper penalization term or a suitably chosen search direction). Our method works the opposite way: orthogonality is strictly imposed at each step while nonnegativity is asymptotically obtained, using a quadratic penalty. Finally, we show that the two proposed approaches compare favorably with standard ONMF algorithms on synthetic, text and image data sets.

💡 Research Summary

The paper addresses Orthogonal Non‑negative Matrix Factorization (ONMF), a variant of NMF that requires both factors to be non‑negative and additionally requires one of them to be orthogonal. ONMF has been shown to produce highly interpretable clusters, especially in document classification. Existing algorithms, however, typically enforce non‑negativity at every iteration and approach orthogonality only asymptotically, often through penalty terms or specially chosen search directions. This can lead to slow convergence and delicate parameter tuning, and the iterates can violate the orthogonality constraint before the algorithm has converged.

The authors make two major contributions. First, they prove a precise mathematical equivalence between ONMF and a weighted version of spherical k‑means. In spherical k‑means, data points are normalized to unit length and clustering is performed using cosine similarity; the weighted variant introduces a per‑cluster scaling factor that matches the norm of each orthogonal basis vector in ONMF. By establishing this equivalence, the authors can reinterpret ONMF as a clustering problem that can be solved by an Expectation‑Maximization (EM)‑like procedure. In the E‑step, each data point is assigned to the cluster whose orthogonal basis yields the largest cosine similarity (effectively a hard assignment). In the M‑step, given the assignments, the orthogonal basis matrix is updated by solving a constrained least‑squares problem that reduces to a QR or SVD decomposition, thereby guaranteeing exact orthogonality at every iteration. This EM‑style algorithm inherits the monotonic decrease property of classic k‑means: the objective function never increases, and convergence is guaranteed to a stationary point that satisfies the KKT conditions of ONMF.
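The EM‑like procedure described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `em_onmf`, the `init_cols` parameter, and the choice to update each centroid with the dominant left singular vector of its cluster's data block are assumptions made for the example. The data matrix `M` is assumed to be nonnegative with one data point per column, and the factorization is written as `M ≈ U @ V` with `V @ V.T = I`.

```python
import numpy as np

def em_onmf(M, k, n_iter=50, init_cols=None, seed=0):
    """Hypothetical EM-like sketch: cluster the columns of a nonnegative M (m x n).

    Returns U (m x k, nonnegative), V (k x n, nonnegative, orthonormal rows
    when no cluster is empty) and the hard cluster labels.
    """
    rng = np.random.default_rng(seed)
    n = M.shape[1]
    if init_cols is None:
        init_cols = rng.choice(n, size=k, replace=False)
    # Unit-norm centroid directions, initialised from k data columns.
    C = M[:, init_cols].astype(float)
    C /= np.maximum(np.linalg.norm(C, axis=0), 1e-12)

    labels = np.full(n, -1)
    for _ in range(n_iter):
        # Assignment step: centroids have unit norm, so for each column the
        # largest inner product is also the largest cosine similarity.
        new_labels = np.argmax(C.T @ M, axis=0)
        if np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # Update step (assumption): dominant left singular vector of each block.
        for j in range(k):
            block = M[:, labels == j]
            if block.shape[1] == 0:
                continue  # keep the previous centroid for an empty cluster
            u, _, _ = np.linalg.svd(block, full_matrices=False)
            C[:, j] = np.abs(u[:, 0])  # fix the sign ambiguity of the SVD

    # Build V: one nonzero per column, rows normalised to unit length.
    V = np.zeros((k, n))
    for j in range(k):
        idx = np.flatnonzero(labels == j)
        if idx.size:
            w = C[:, j] @ M[:, idx]
            norm_w = np.linalg.norm(w)
            if norm_w > 0:
                V[j, idx] = w / norm_w
    U = M @ V.T  # least-squares optimal U when V has orthonormal rows
    return U, V, labels
```

Because each column of `V` has at most one nonzero entry, the rows of `V` have disjoint supports, which is what makes them orthogonal and nonnegative at the same time. Passing explicit `init_cols` makes a run reproducible; with random initialization, several restarts would normally be tried.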

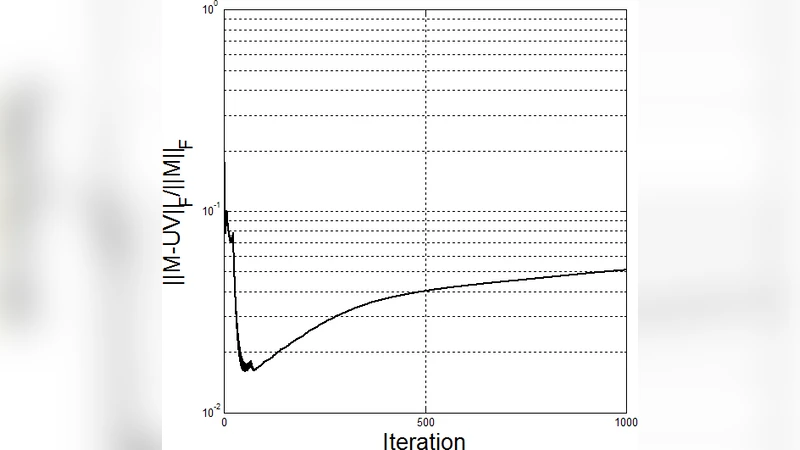

The second contribution is an augmented Lagrangian algorithm that flips the usual enforcement order: orthogonality is imposed strictly at each iteration, while non‑negativity is encouraged through a quadratic penalty that is gradually strengthened.
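For orientation, the ONMF problem is commonly written in the form below. The notation is an assumption made for this illustration and is not taken from the summary: `M` is the m×n data matrix, `U` (m×k) and `V` (k×n) are the factors, and ρ > 0 is a penalty parameter. The second expression sketches what a quadratic penalty on the negative entries of `V` could look like when orthogonality is kept as a hard constraint; the authors' exact augmented Lagrangian may contain further terms, such as multipliers.

```latex
% ONMF in its commonly used form (assumed notation)
\[
  \min_{U \in \mathbb{R}^{m \times k},\; V \in \mathbb{R}^{k \times n}}
  \; \| M - UV \|_F^2
  \quad \text{s.t.} \quad U \ge 0,\; V \ge 0,\; V V^{\top} = I_k .
\]

% Illustrative penalized variant: orthogonality stays a hard constraint,
% negative entries of V are penalized quadratically
\[
  \min_{U \ge 0,\; V V^{\top} = I_k}
  \; \| M - UV \|_F^2 \;+\; \frac{\rho}{2}\, \| \min(V, 0) \|_F^2 .
\]
```

Here `min(V, 0)` is taken entrywise, so the penalty vanishes exactly when `V` is non‑negative, and increasing ρ pushes the iterates toward non‑negativity.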
